Efficient Estimator

In statistics, efficiency is a measure of the quality of an estimator, of an experimental design, or of a hypothesis testing procedure. Essentially, a more efficient estimator needs fewer input data or observations than a less efficient one to achieve the Cramér–Rao bound. An ''efficient estimator'' is characterized by having the smallest possible variance, indicating that there is a small deviation between the estimated value and the "true" value in the L2 norm sense. The relative efficiency of two procedures is the ratio of their efficiencies, although often this concept is used where the comparison is made between a given procedure and a notional "best possible" procedure. The efficiencies and the relative efficiency of two procedures theoretically depend on the sample size available for the given procedure, but it is often possible to use the asymptotic relative efficiency (defined as the limit of the relative efficiencies as the sample size grows) as the principal comparison ...
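The idea of relative efficiency can be illustrated with a small simulation. The sketch below is only an illustration under stated assumptions: the data are standard normal, the two procedures compared are the sample mean and the sample median, and the function name `relative_efficiency` and its parameters are hypothetical choices, not part of the excerpt above.

```python
import random
import statistics


def relative_efficiency(n=25, trials=4000, seed=0):
    """Estimate Var(sample mean) / Var(sample median) for N(0, 1) samples of size n.

    A ratio below 1 means the median is the less efficient of the two estimators
    of the centre of a normal distribution.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    means, medians = [], []
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        means.append(statistics.fmean(sample))
        medians.append(statistics.median(sample))
    # Both estimators are unbiased here, so comparing variances is enough.
    return statistics.variance(means) / statistics.variance(medians)


ratio = relative_efficiency()
print(f"efficiency of the median relative to the mean: {ratio:.2f}")
```

For normal data the asymptotic relative efficiency of the median with respect to the mean is 2/π (about 0.64), so the simulated ratio should land in that neighbourhood; for heavy-tailed data the ordering can reverse.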

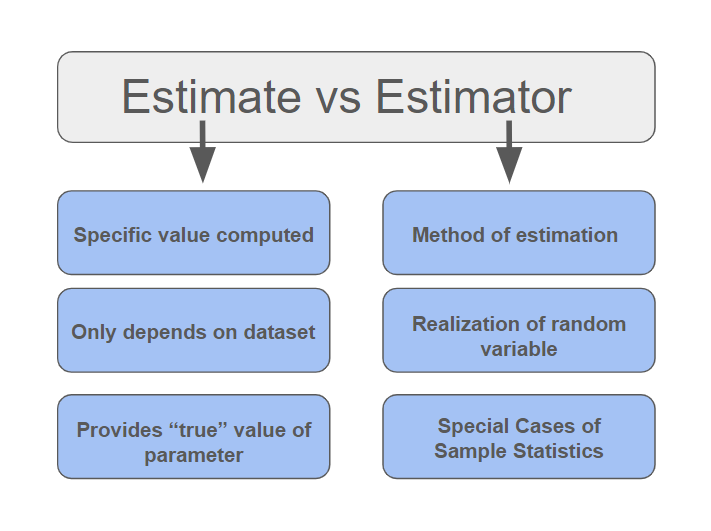

Estimator

In statistics, an estimator is a rule for calculating an estimate of a given quantity based on observed data: thus the rule (the estimator), the quantity of interest (the estimand) and its result (the estimate) are distinguished. For example, the sample mean is a commonly used estimator of the population mean. There are point and interval estimators. Point estimators yield single-valued results. This is in contrast to an interval estimator, where the result would be a range of plausible values. "Single value" does not necessarily mean "single number", but includes vector-valued or function-valued estimators. ''Estimation theory'' is concerned with the properties of estimators; that is, with defining properties that can be used to compare different estimators (different rules for creating estimates) for the same quantity, based on the same data. Such properties can be used to determine the best rules to use under given circumstances. Howeve ...

Minimum-variance Unbiased Estimator

In statistics, a minimum-variance unbiased estimator (MVUE) or uniformly minimum-variance unbiased estimator (UMVUE) is an unbiased estimator that has lower variance than any other unbiased estimator for all possible values of the parameter. For practical statistics problems, it is important to determine the MVUE if one exists, since less-than-optimal procedures would naturally be avoided, other things being equal. This has led to substantial development of statistical theory related to the problem of optimal estimation. While combining the constraint of unbiasedness with the desirability metric of least variance leads to good results in most practical settings (making MVUE a natural starting point for a broad range of analyses), a targeted specification may perform better for a given problem; thus, MVUE is not always the best stopping point. Definition: Consider estimation of g(\theta) based on data X_1, X_2, \ldots, X_n i.i.d. from some member of a family of densities p_\theta, ...

Sample Median

The median of a set of numbers is the value separating the higher half from the lower half of a data sample, a population, or a probability distribution. For a data set, it may be thought of as the "middle" value. The basic feature of the median in describing data compared to the mean (often simply described as the "average") is that it is not skewed by a small proportion of extremely large or small values, and therefore provides a better representation of the center. Median income, for example, may be a better way to describe the center of the income distribution because increases in the largest incomes alone have no effect on the median. For this reason, the median is of central importance in robust statistics. The median is a 2-quantile; it is the value that partitions a set into two equal parts. Finite set of numbers: The median of a finite list of numbers is the "middle" number, when those numbers are listed in order from smallest to greatest. If the data set has an odd numbe ...
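The "middle number" rule for a finite list can be sketched in a few lines. The excerpt is cut off before it states the even-length case, so the usual convention (averaging the two middle values) is assumed here; the function name `list_median` is a hypothetical choice for illustration.

```python
def list_median(values):
    """Return the median of a finite list of numbers.

    Odd length: the single middle element of the sorted list.
    Even length (assumed convention): the mean of the two middle elements.
    """
    ordered = sorted(values)
    n = len(ordered)
    if n == 0:
        raise ValueError("median of an empty list is undefined")
    mid = n // 2
    if n % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


print(list_median([1, 3, 3, 6, 7, 8, 9]))     # odd length
print(list_median([1, 2, 3, 4, 5, 6, 8, 9]))  # even length
print(list_median([1, 2, 3, 4, 1000]))        # an extreme value does not move the median
```

The last call shows the robustness property described above: replacing the largest value with an extreme one changes the mean but leaves the median where it was.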

Standard Error

The standard error (SE) of a statistic (usually an estimator of a parameter, like the average or mean) is the standard deviation of its sampling distribution or an estimate of that standard deviation. In other words, it is the standard deviation of the statistic's values, with one value per sample, each sample being a set of observations drawn from the same population. If the statistic is the sample mean, it is called the standard error of the mean (SEM). The standard error is a key ingredient in producing confidence intervals. The sampling distribution of a mean is generated by repeated sampling from the same population and recording the sample mean per sample. This forms a distribution of different means, and this distribution has its own mean and variance. Mathematically, the variance of the sampling distribution of the mean is equal to the variance of the population divided by the sample size. This is because as the sample size increases, sample means cluster more closely arou ...
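Since the variance of the sampling distribution of the mean equals the population variance divided by the sample size, the standard error of the mean can be estimated from a single sample as s / sqrt(n). The sketch below assumes the population variance is unknown and is replaced by the sample variance (with n − 1 in the denominator); the function name is a hypothetical choice.

```python
import math
import statistics


def standard_error_of_mean(sample):
    """Estimate the standard error of the mean as s / sqrt(n).

    s is the sample standard deviation (n - 1 denominator), used here as a
    stand-in for the unknown population standard deviation.
    """
    n = len(sample)
    if n < 2:
        raise ValueError("at least two observations are needed")
    return statistics.stdev(sample) / math.sqrt(n)


data = [2, 4, 4, 4, 5, 5, 7, 9]
print(f"mean = {statistics.fmean(data):.3f}, SEM = {standard_error_of_mean(data):.3f}")
```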

Consistency (statistics)

In statistics, consistency of procedures, such as computing confidence intervals or conducting hypothesis tests, is a desired property of their behaviour as the number of items in the data set to which they are applied increases indefinitely. In particular, consistency requires that as the dataset size increases, the outcome of the procedure approaches the correct outcome. Use of the term in statistics derives from Sir Ronald Fisher in 1922. Use of the terms ''consistency'' and ''consistent'' in statistics is restricted to cases where essentially the same procedure can be applied to any number of data items. In complicated applications of statistics, there may be several ways in which the number of data items may grow. For example, records for rainfall within an area might increase in three ways: records for additional time periods; records for additional sites with a fixed area; records for extra sites obtained by ex ...

Independent And Identically Distributed

Independent or Independents may refer to: Arts, entertainment, and media Artist groups * Independents (artist group), a group of modernist painters based in Pennsylvania, United States * Independentes (English: Independents), a Portuguese artist group Music Groups, labels, and genres * Independent music, a number of genres associated with independent labels * Independent record label, a record label not associated with a major label * Independent Albums, American albums chart Albums * ''Independent'' (Ai album), 2012 * ''Independent'' (Faze album), 2006 * ''Independent'' (Sacred Reich album), 1993 Songs * "Independent" (song), a 2007 song by Webbie * "Independent", a 2002 song by Ayumi Hamasaki from ''H'' News media organizations * Independent Media Center (also known as Indymedia or IMC), an open publishing network of journalist collectives that report on political and social issues, e.g., in ''The Indypendent'' newspaper of NYC * ITV (TV network) (Independent Television ...

Multinomial Distribution

In probability theory, the multinomial distribution is a generalization of the binomial distribution. For example, it models the probability of counts for each side of a ''k''-sided die rolled ''n'' times. For ''n'' independent trials each of which leads to a success for exactly one of ''k'' categories, with each category having a given fixed success probability, the multinomial distribution gives the probability of any particular combination of numbers of successes for the various categories. When ''k'' is 2 and ''n'' is 1, the multinomial distribution is the Bernoulli distribution. When ''k'' is 2 and ''n'' is bigger than 1, it is the binomial distribution. When ''k'' is bigger than 2 and ''n'' is 1, it is the categorical distribution. The term "multinoulli" is sometimes used for the categorical distribution to emphasize this four-way relationship (so ''n'' determines the suffix, and ''k'' the prefix). The Bernoulli distribution models the outcome of a si ...

Binomial Distribution

In probability theory and statistics, the binomial distribution with parameters ''n'' and ''p'' is the discrete probability distribution of the number of successes in a sequence of ''n'' independent experiments, each asking a yes–no question, and each with its own Boolean-valued outcome: ''success'' (with probability ''p'') or ''failure'' (with probability ''q'' = 1 − ''p''). A single success/failure experiment is also called a Bernoulli trial or Bernoulli experiment, and a sequence of outcomes is called a Bernoulli process; for a single trial, i.e., ''n'' = 1, the binomial distribution is a Bernoulli distribution. The binomial distribution is the basis for the binomial test of statistical significance. The binomial distribution is frequently used to model the number of successes in a sample of size ''n'' drawn with replacement from a population of size ''N''. If the sampling is carried out without replacement, the draws ar ...
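As a concrete illustration, the probability of exactly ''k'' successes in ''n'' independent trials with success probability ''p'' is C(n, k) p^k (1 − p)^(n − k). The excerpt does not state this formula, so the sketch below supplies the standard probability mass function; the function name `binomial_pmf` is a hypothetical choice.

```python
import math


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials.

    Each trial succeeds with probability p and fails with probability 1 - p.
    """
    if not 0 <= k <= n:
        return 0.0
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


# Number of heads in 4 fair coin tosses.
for k in range(5):
    print(k, binomial_pmf(k, 4, 0.5))
```

With ''n'' = 1 the function reduces to the Bernoulli distribution: `binomial_pmf(1, 1, p)` is ''p'' and `binomial_pmf(0, 1, p)` is 1 − ''p''.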

Poisson Distribution

In probability theory and statistics, the Poisson distribution is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time if these events occur with a known constant mean rate and independently of the time since the last event. It can also be used for the number of events in other types of intervals than time, and in dimension greater than 1 (e.g., number of events in a given area or volume). The Poisson distribution is named after French mathematician Siméon Denis Poisson. It plays an important role for discrete-stable distributions. Under a Poisson distribution with the expectation of ''λ'' events in a given interval, the probability of ''k'' events in the same interval is: :\frac{\lambda^k e^{-\lambda}}{k!} . For instance, consider a call center which receives an average of ''λ ='' 3 calls per minute at all times of day. If the calls are independent, receiving one does not change the probability of when the next on ...
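The call-center example can be worked through numerically. The sketch below evaluates the Poisson probability λ^k e^(−λ) / k! of ''k'' events for ''λ'' = 3 calls per minute; the function name `poisson_pmf` is a hypothetical choice for illustration.

```python
import math


def poisson_pmf(k, lam):
    """Probability of exactly k events when the expected number of events is lam."""
    if k < 0:
        return 0.0
    return lam**k * math.exp(-lam) / math.factorial(k)


lam = 3.0  # average calls per minute, as in the example above
for k in range(7):
    print(f"P({k} calls in a minute) = {poisson_pmf(k, lam):.4f}")
```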

Normal Distribution

In probability theory and statistics, a normal distribution or Gaussian distribution is a type of continuous probability distribution for a real-valued random variable. The general form of its probability density function is f(x) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{(x-\mu)^2}{2\sigma^2}}\,. The parameter \mu is the mean or expectation of the distribution (and also its median and mode), while the parameter \sigma^2 is the variance. The standard deviation of the distribution is \sigma (sigma). A random variable with a Gaussian distribution is said to be normally distributed, and is called a normal deviate. Normal distributions are important in statistics and are often used in the natural and social sciences to represent real-valued random variables whose distributions are not known. Their importance is partly due to the central limit theorem. It states that, under some conditions, the average of many samples (observations) of a random variable with finite mean and variance is itself a random variable, whose distribution c ...
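The normal density with mean \mu and variance \sigma^2 can be evaluated directly. The sketch below is a minimal illustration, with the hypothetical function name `normal_pdf` and a crude Riemann sum as a sanity check that the density integrates to about 1.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of the normal distribution with mean mu and standard deviation sigma.

    Computes exp(-(x - mu)^2 / (2 sigma^2)) / sqrt(2 pi sigma^2).
    """
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


# Crude Riemann sum over [-8, 8] as a check that the total mass is close to 1.
step = 0.001
total = sum(normal_pdf(-8.0 + i * step) * step for i in range(16000))
print(f"peak density = {normal_pdf(0.0):.4f}, approximate total mass = {total:.4f}")
```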

James–Stein Estimator

The James–Stein estimator is an estimator of the mean \boldsymbol\theta := (\theta_1, \theta_2, \dots \theta_m) for a multivariate random variable \boldsymbol Y := (Y_1, Y_2, \dots Y_m) . It arose sequentially in two main published papers. The earlier version of the estimator was developed in 1956, when Charles Stein reached a relatively shocking conclusion that while the then-usual estimate of the mean, the sample mean, is admissible when m \leq 2, it is inadmissible when m \geq 3. Stein proposed a possible improvement to the estimator that shrinks the sample means towards a more central mean vector \boldsymbol\nu (which can be chosen a priori or commonly as the "average of averages" of the sample means, given all samples share the same size). This observation is commonly referred to as Stein's example or paradox. In 1961, Willard James and Charles Stein simplified the original process. It can be shown that the James–Stein estimator dominates the "ordinary" least sq ...
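The shrinkage effect can be seen in a small simulation. The excerpt is cut off before any formula appears, so the sketch below rests on several assumptions: one observation per coordinate, independent normal noise with known variance 1, shrinkage toward the origin (that is, \boldsymbol\nu = 0), and the commonly quoted form (1 − (m − 2)σ² / ‖y‖²) y. The function names and the chosen \boldsymbol\theta are hypothetical.

```python
import random


def james_stein(y, sigma2=1.0):
    """Shrink the observation vector y toward the origin (assumed nu = 0).

    Uses the factor 1 - (m - 2) * sigma2 / ||y||^2, which requires m >= 3.
    """
    m = len(y)
    if m < 3:
        raise ValueError("the James-Stein form used here needs m >= 3")
    norm2 = sum(v * v for v in y)
    shrink = 1.0 - (m - 2) * sigma2 / norm2
    return [shrink * v for v in y]


def average_losses(theta, trials=2000, seed=0):
    """Average squared-error loss of the raw observation and of its shrunken version."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    loss_raw = loss_js = 0.0
    for _ in range(trials):
        y = [rng.gauss(t, 1.0) for t in theta]  # Y_i ~ N(theta_i, 1), an assumption
        est = james_stein(y)
        loss_raw += sum((a - t) ** 2 for a, t in zip(y, theta))
        loss_js += sum((a - t) ** 2 for a, t in zip(est, theta))
    return loss_raw / trials, loss_js / trials


raw, shrunk = average_losses([0.5] * 10)
print(f"average loss, raw observation: {raw:.2f}; James-Stein: {shrunk:.2f}")
```

Under these assumptions the shrunken estimate should show a smaller average total squared error than the raw observation, which is the sense in which the ordinary estimate is inadmissible for m \geq 3.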

Admissible Procedure

In statistical decision theory, an admissible decision rule is a rule for making a decision such that there is no other rule that is always "better" than it (or at least sometimes better and never worse), in the precise sense of "better" defined below. This concept is analogous to Pareto efficiency. Definition: Define sets \Theta, \mathcal{X} and \mathcal{A}, where \Theta are the states of nature, \mathcal{X} the possible observations, and \mathcal{A} the actions that may be taken. An observation of x \in \mathcal{X} is distributed as F(x\mid\theta) and therefore provides evidence about the state of nature \theta\in\Theta. A decision rule is a function \delta:\mathcal{X}\rightarrow\mathcal{A}, where upon observing x\in \mathcal{X}, we choose to take action \delta(x)\in \mathcal{A}. Also define a loss function L: \Theta \times \mathcal{A} \rightarrow \mathbb{R}, which specifies the loss we would incur by taking action a \in \mathcal{A} when the true state of nature is \theta \in \Theta. Usually we will take ...