
Admissible Procedure

In statistical decision theory, an admissible decision rule is a rule for making a decision such that there is no other rule that is always "better" than it (or at least sometimes better and never worse), in the precise sense of "better" defined below. This concept is analogous to Pareto efficiency. Definition: Define sets $\Theta$, $\mathcal{X}$ and $\mathcal{A}$, where $\Theta$ are the states of nature, $\mathcal{X}$ the possible observations, and $\mathcal{A}$ the actions that may be taken. An observation $x \in \mathcal{X}$ is distributed as $F(x \mid \theta)$ and therefore provides evidence about the state of nature $\theta \in \Theta$. A decision rule is a function $\delta: \mathcal{X} \rightarrow \mathcal{A}$, where upon observing $x \in \mathcal{X}$, we choose to take action $\delta(x) \in \mathcal{A}$. Also define a loss function $L: \Theta \times \mathcal{A} \rightarrow \mathbb{R}$, which specifies the loss we would incur by taking action $a \in \mathcal{A}$ when the true state of nature is $\theta \in \Theta$. Usually we will take ...
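
The "sometimes better and never worse" comparison above can be made concrete on a toy problem. The sketch below is illustrative only: the excerpt is cut off before it defines how rules are compared, so it assumes the usual risk function (expected loss under $F(x \mid \theta)$), and the two-state likelihood table, the 0-1 loss, and all function names are hypothetical choices rather than anything taken from the source.

```python
from itertools import product

# Toy problem (all values are illustrative assumptions).
THETAS = (0, 1)    # states of nature
XS = (0, 1)        # possible observations
ACTIONS = (0, 1)   # available actions

# LIKELIHOOD[theta][x] = P(X = x | theta)
LIKELIHOOD = {0: {0: 0.8, 1: 0.2}, 1: {0: 0.3, 1: 0.7}}


def loss(theta, action):
    """0-1 loss: no loss when the action matches the true state."""
    return 0.0 if action == theta else 1.0


def risk(rule, theta):
    """Expected loss of a decision rule (a dict x -> action) under theta."""
    return sum(LIKELIHOOD[theta][x] * loss(theta, rule[x]) for x in XS)


def dominates(rule_a, rule_b):
    """True if rule_a is never worse than rule_b and strictly better somewhere."""
    pairs = [(risk(rule_a, t), risk(rule_b, t)) for t in THETAS]
    return all(a <= b for a, b in pairs) and any(a < b for a, b in pairs)


# Every deterministic decision rule delta: X -> A.
RULES = [dict(zip(XS, acts)) for acts in product(ACTIONS, repeat=len(XS))]


def admissible_rules():
    """Rules that no other rule in RULES dominates."""
    return [r for r in RULES if not any(dominates(o, r) for o in RULES)]


if __name__ == "__main__":
    for rule in RULES:
        print(rule, [risk(rule, t) for t in THETAS], rule in admissible_rules())
```

In this toy setup the rule that always contradicts the observation is dominated by the rule that follows it, while the two constant rules remain admissible because each has zero risk in one state.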

Statistical Decision Theory

Decision theory or the theory of rational choice is a branch of probability, economics, and analytic philosophy that uses expected utility and probability to model how individuals would behave rationally under uncertainty. It differs from the cognitive and behavioral sciences in that it is mainly prescriptive and concerned with identifying optimal decisions for a rational agent, rather than describing how people actually make decisions. Despite this, the field is important to the study of real human behavior by social scientists, as it lays the foundations to mathematically model and analyze individuals in fields such as sociology, economics, criminology, cognitive science, moral philosophy and political science. History: The roots of decision theory lie in probability theory, developed by Blaise Pascal and Pierre de Fermat in the 17th century, which was later refined by others like Christiaan Huygens. These developments provided a framework for understanding risk and uncerta ...

Frequency Probability

Frequentist probability or frequentism is an interpretation of probability; it defines an event's probability (the ''long-run probability'') as the limit of its relative frequency in infinitely many trials. Probabilities can be found (in principle) by a repeatable objective process, as in repeated sampling from the same population, and are thus ideally devoid of subjectivity. The continued use of frequentist methods in scientific inference, however, has been called into question. The development of the frequentist account was motivated by the problems and paradoxes of the previously dominant viewpoint, the classical interpretation. In the classical interpretation, probability was defined in terms of the principle of indifference, based on the natural symmetry of a problem, so, for example, the probabilities of dice game ...

Sample Variance

In probability theory and statistics, variance is the expected value of the squared deviation from the mean of a random variable. The standard deviation (SD) is obtained as the square root of the variance. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. It is the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by $\sigma^2$, $s^2$, $\operatorname{Var}(X)$, $V(X)$, or $\mathbb{V}(X)$. An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for example, the variance of a sum of uncorrelated random variables is equal to the sum of their variances. A disadvantage of the variance for practical applications is that, unlike the standard deviation, its units differ from the random variable, which is why the standard devia ...
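
The additivity property mentioned above can be checked exactly on small discrete distributions. The following is a minimal sketch, assuming two independent (hence uncorrelated) variables, a fair die and a fair coin; the distributions and the helper names `variance` and `sum_pmf` are illustrative choices, not part of the source.

```python
from fractions import Fraction
from itertools import product


def variance(pmf):
    """Variance of a discrete distribution given as {value: probability}."""
    mean = sum(p * x for x, p in pmf.items())
    return sum(p * (x - mean) ** 2 for x, p in pmf.items())


def sum_pmf(pmf_a, pmf_b):
    """Distribution of A + B when A and B are independent."""
    out = {}
    for (a, pa), (b, pb) in product(pmf_a.items(), pmf_b.items()):
        out[a + b] = out.get(a + b, Fraction(0)) + pa * pb
    return out


# Exact rational probabilities avoid floating-point round-off.
die = {face: Fraction(1, 6) for face in range(1, 7)}
coin = {0: Fraction(1, 2), 1: Fraction(1, 2)}

if __name__ == "__main__":
    print(variance(die), variance(coin), variance(sum_pmf(die, coin)))
```

Using `Fraction` keeps the comparison exact, so the equality between the variance of the sum and the sum of the variances holds without a tolerance.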

Normal Distribution

In probability theory and statistics, a normal distribution or Gaussian distribution is a type of continuous probability distribution for a real-valued random variable. The general form of its probability density function is $f(x) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{(x-\mu)^2}{2\sigma^2}}$. The parameter $\mu$ is the mean or expectation of the distribution (and also its median and mode), while the parameter $\sigma^2$ is the variance. The standard deviation of the distribution is $\sigma$ (sigma). A random variable with a Gaussian distribution is said to be normally distributed, and is called a normal deviate. Normal distributions are important in statistics and are often used in the natural and social sciences to represent real-valued random variables whose distributions are not known. Their importance is partly due to the central limit theorem. It states that, under some conditions, the average of many samples (observations) of a random variable with finite mean and variance is itself a random variable, whose distribution c ...
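
As a quick illustration of the density with mean $\mu$ and variance $\sigma^2$, the sketch below evaluates it directly and compares the result with the standard library's `statistics.NormalDist`. The function name `normal_pdf` and the sample arguments are assumptions made for this example.

```python
import math
from statistics import NormalDist


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    var = sigma ** 2
    return math.exp(-((x - mu) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


# Reference value from the standard library for the same parameters.
reference = NormalDist(mu=0.5, sigma=2.0).pdf(1.3)

if __name__ == "__main__":
    print(normal_pdf(1.3, mu=0.5, sigma=2.0), reference)
```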

Ordinary Least Squares

In statistics, ordinary least squares (OLS) is a type of linear least squares method for choosing the unknown parameters in a linear regression model (with fixed level-one effects of a linear function of a set of explanatory variables) by the principle of least squares: minimizing the sum of the squares of the differences between the observed dependent variable (values of the variable being observed) in the input dataset and the output of the (linear) function of the independent variable. Some sources consider OLS to be linear regression. Geometrically, this is seen as the sum of the squared distances, parallel to the axis of the dependent variable, between each data point in the set and the corresponding point on the regression ...
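
For a single explanatory variable, the least-squares criterion has a well-known closed-form solution. The sketch below is a minimal illustration under that one-regressor assumption; the function names and the sample data are hypothetical and chosen so that the fit is exact.

```python
def ols_fit(xs, ys):
    """Return (intercept, slope) minimizing the sum of squared residuals."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    if s_xx == 0:
        raise ValueError("x values must not all be equal")
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def sum_squared_residuals(xs, ys, intercept, slope):
    """The quantity that OLS minimizes."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0]
    ys = [1.0, 3.0, 5.0, 7.0]   # generated from y = 1 + 2x, no noise
    b0, b1 = ols_fit(xs, ys)
    print(b0, b1, sum_squared_residuals(xs, ys, b0, b1))
```

With several explanatory variables the same idea is usually expressed in matrix form; that case is deliberately left out of this sketch.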

James–Stein Estimator

The James–Stein estimator is an estimator of the mean $\boldsymbol\theta := (\theta_1, \theta_2, \dots, \theta_m)$ for a multivariate random variable $\boldsymbol Y := (Y_1, Y_2, \dots, Y_m)$. It arose sequentially in two main published papers. The earlier version of the estimator was developed in 1956, when Charles Stein reached the relatively shocking conclusion that while the then-usual estimate of the mean, the sample mean, is admissible when $m \leq 2$, it is inadmissible when $m \geq 3$. Stein proposed a possible improvement to the estimator that shrinks the sample means towards a more central mean vector $\boldsymbol\nu$ (which can be chosen a priori or commonly as the "average of averages" of the sample means, given all samples share the same size). This observation is commonly referred to as Stein's example or paradox. In 1961, Willard James and Charles Stein simplified the original process. It can be shown that the James–Stein estimator dominates the "ordinary" least sq ...
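
The excerpt is truncated before it states the estimator, so the sketch below relies on the commonly cited textbook form as an assumption: for a single observation $\boldsymbol Y \sim N(\boldsymbol\theta, \sigma^2 I)$ with known $\sigma^2$ and shrinkage target $\boldsymbol\nu = 0$, the estimate is $\left(1 - \frac{(m-2)\sigma^2}{\lVert \boldsymbol Y \rVert^2}\right) \boldsymbol Y$. The simulation settings (true mean, number of trials, seed) and function names are hypothetical.

```python
import random


def james_stein(y, sigma2=1.0):
    """Shrink an observation vector y toward the origin (assumes known sigma2)."""
    m = len(y)
    if m < 3:
        raise ValueError("shrinkage only helps for three or more coordinates")
    norm2 = sum(v * v for v in y)
    if norm2 == 0.0:
        return list(y)  # nothing to shrink
    shrink = 1.0 - (m - 2) * sigma2 / norm2
    return [shrink * v for v in y]


def squared_error(estimate, theta):
    """Total squared error between an estimate and the true mean vector."""
    return sum((e - t) ** 2 for e, t in zip(estimate, theta))


def compare_risk(theta, trials=2000, seed=0):
    """Monte Carlo average squared error of the raw observation vs. James-Stein."""
    rng = random.Random(seed)
    raw_total = js_total = 0.0
    for _ in range(trials):
        y = [rng.gauss(t, 1.0) for t in theta]
        raw_total += squared_error(y, theta)
        js_total += squared_error(james_stein(y), theta)
    return raw_total / trials, js_total / trials


if __name__ == "__main__":
    raw, js = compare_risk([0.5] * 10)
    print(f"raw observation: {raw:.3f}  James-Stein: {js:.3f}")
```

The gain is in total squared error across all coordinates; it says nothing about any single coordinate taken on its own.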

Stein's Example

In decision theory and estimation theory, Stein's example (also known as Stein's phenomenon or Stein's paradox) is the observation that when three or more parameters are estimated simultaneously, there exist combined estimators more accurate on average (that is, having lower expected mean squared error) than any method that handles the parameters separately. It is named after Charles Stein of Stanford University, who discovered the phenomenon in 1955. An intuitive explanation is that optimizing for the mean-squared error of a ''combined'' estimator is not the same as optimizing for the errors of separate estimators of the individual parameters. In practical terms, if the combined error is in fact of interest, then a combined estimator should be used, even if the underlying parameters are independent. If one is instead interested in estimating an individual parameter, then using a combined estimator does not help and is in fact worse. Formal statement: The following is the simples ...

Prior Probability

A prior probability distribution of an uncertain quantity, simply called the prior, is its assumed probability distribution before some evidence is taken into account. For example, the prior could be the probability distribution representing the relative proportions of voters who will vote for a particular politician in a future election. The unknown quantity may be a parameter of the model or a latent variable rather than an observable variable. In Bayesian statistics, Bayes' rule prescribes how to update the prior with new information to obtain the posterior probability distribution, which is the conditional distribution of the uncertain quantity given new data. Historically, the choice of priors was often constrained to a conjugate family of a given likelihood function, so that it would result in a tractable posterior of the same family. The widespread availability of Markov chain Monte Carlo methods, however, has made this less of a concern. There are many ways to const ...
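
A standard instance of a conjugate family is the Beta prior for a binomial likelihood, where the posterior is again a Beta distribution. The sketch below assumes that pairing, which the excerpt does not name, and uses integer Beta parameters so the arithmetic stays exact; the polling numbers and function names are hypothetical.

```python
from fractions import Fraction


def update_beta(alpha, beta, successes, trials):
    """Posterior Beta parameters after observing `successes` in `trials`."""
    if not 0 <= successes <= trials:
        raise ValueError("successes must lie between 0 and trials")
    return alpha + successes, beta + (trials - successes)


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution (integer parameters assumed)."""
    return Fraction(alpha, alpha + beta)


if __name__ == "__main__":
    prior = (1, 1)                                   # uniform prior on the vote share
    posterior = update_beta(*prior, successes=7, trials=10)
    print(posterior, beta_mean(*prior), beta_mean(*posterior))
```

Because the posterior stays in the Beta family, the update amounts to adding observed counts to the prior parameters, which is the tractability the conjugate choice buys.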

Decision Theory

Decision theory or the theory of rational choice is a branch of probability, economics, and analytic philosophy that uses expected utility and probability to model how individuals would behave rationally under uncertainty. It differs from the cognitive and behavioral sciences in that it is mainly prescriptive and concerned with identifying optimal decisions for a rational agent, rather than describing how people actually make decisions. Despite this, the field is important to the study of real human behavior by social scientists, as it lays the foundations to mathematically model and analyze individuals in fields such as sociology, economics, criminology, cognitive science, moral philosophy and political science. History: The roots of decision theory lie in probability theory, developed by Blai ...

Frequentist

Frequentist inference is a type of statistical inference based on frequentist probability, which treats “probability” in equivalent terms to “frequency” and draws conclusions from sample data by emphasizing the frequency or proportion of findings in the data. Frequentist inference underlies frequentist statistics, on which the well-established methodologies of statistical hypothesis testing and confidence intervals are founded. History of frequentist statistics: Frequentism is based on the presumption that statistics represent probabilistic frequencies. This view was primarily developed by Ronald Fisher and the team of Jerzy Neyman and Egon Pearson. Ronald Fisher contributed to frequentist statistics by developing the frequentist concept of "significance testing", which is the study of the significance of a measure of a statistic when compared to the hypothesis. Neyman and Pearson extended Fisher's ideas to apply to multiple hypotheses. They posed that the ratio ...

Bayes' Theorem

Bayes' theorem (alternatively Bayes' law or Bayes' rule, after Thomas Bayes) gives a mathematical rule for inverting conditional probabilities, allowing one to find the probability of a cause given its effect. For example, if the risk of developing health problems is known to increase with age, Bayes' theorem allows the risk to someone of a known age to be assessed more accurately by conditioning it relative to their age, rather than assuming that the person is typical of the population as a whole. Based on Bayes' law, both the prevalence of a disease in a given population and the error rate of an infectious disease test must be taken into account to evaluate the meaning of a positive test result and avoid the ''base-rate fallacy''. One of Bayes' theorem's many applications is Bayesian inference, an approach to statistical inference, where it is used to invert the probability of observations given a model configuration (i.e., th ...
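
The disease-testing case above can be worked through numerically. This is a minimal sketch with made-up numbers (1% prevalence, 99% sensitivity, 95% specificity); the function name and all parameter values are assumptions for illustration only.

```python
def posterior_positive(prevalence, sensitivity, specificity):
    """P(disease | positive test) via Bayes' theorem."""
    for name, value in (("prevalence", prevalence),
                        ("sensitivity", sensitivity),
                        ("specificity", specificity)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be a probability")
    true_positive = sensitivity * prevalence
    false_positive = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive / (true_positive + false_positive)


if __name__ == "__main__":
    p = posterior_positive(prevalence=0.01, sensitivity=0.99, specificity=0.95)
    print(f"P(disease | positive) = {p:.3f}")
```

With these hypothetical inputs the posterior is about one in six, far below the test's 99% sensitivity; ignoring the low prevalence is exactly the base-rate fallacy the passage warns against.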

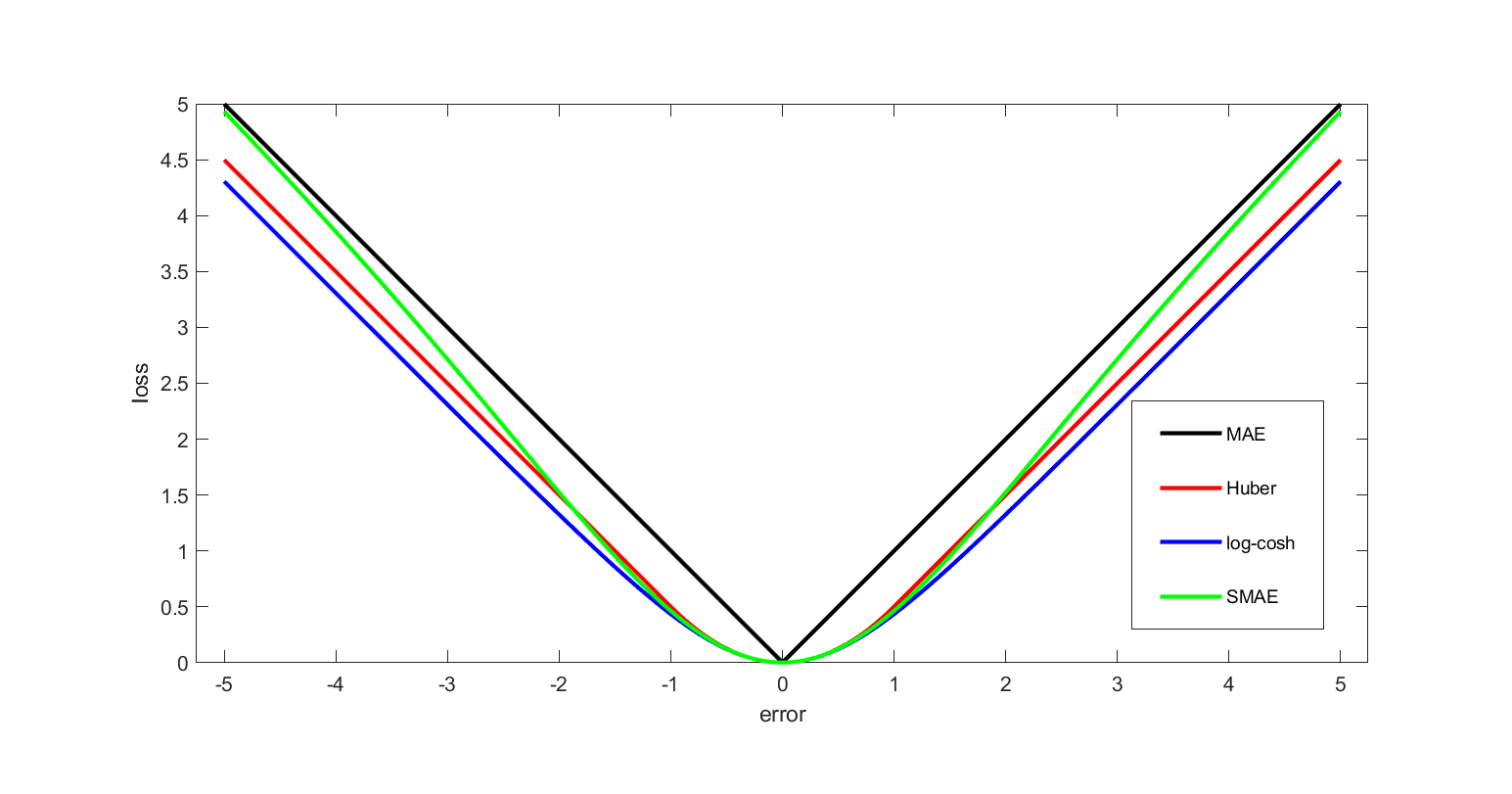

Loss Function

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event. An optimization problem seeks to minimize a loss function. An objective function is either a loss function or its opposite (in specific domains, variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy. In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as Laplace, was reintroduced in statistics by Abraham Wald in the middle of the 20th century. In the context of economi ...
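
To make the idea of a loss as "some function of the difference between estimated and true values" concrete, the sketch below compares two common choices, squared error and absolute error, on a small data set. The data, the choice of these two losses, and the function names are illustrative assumptions; the excerpt itself does not single out any particular loss.

```python
from statistics import mean, median


def squared_loss(estimate, truth):
    """Penalizes large errors heavily."""
    return (estimate - truth) ** 2


def absolute_loss(estimate, truth):
    """Penalizes errors in proportion to their size."""
    return abs(estimate - truth)


def average_loss(loss, estimate, data):
    """Average loss of a single point estimate over observed values."""
    return sum(loss(estimate, x) for x in data) / len(data)


data = [1, 2, 3, 4, 100]   # one outlier to separate the two summaries

if __name__ == "__main__":
    for name, est in (("mean", mean(data)), ("median", median(data))):
        print(name, est,
              average_loss(squared_loss, est, data),
              average_loss(absolute_loss, est, data))
```

On this data the mean gives the lower average squared loss and the median the lower average absolute loss, which shows how the choice of loss function determines which estimate counts as best.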