
Commutation Matrix

In mathematics, especially in linear algebra and matrix theory, the commutation matrix is used for transforming the vectorized form of a matrix into the vectorized form of its transpose. Specifically, the commutation matrix K(''m'',''n'') is the ''nm'' × ''mn'' matrix which, for any ''m'' × ''n'' matrix A, transforms vec(A) into vec(A^T): K(''m'',''n'') vec(A) = vec(A^T). Here vec(A) is the ''mn'' × 1 column vector obtained by stacking the columns of A on top of one another: \operatorname{vec}(\mathbf{A}) = [\mathbf{A}_{1,1}, \ldots, \mathbf{A}_{m,1}, \mathbf{A}_{1,2}, \ldots, \mathbf{A}_{m,2}, \ldots, \mathbf{A}_{1,n}, \ldots, \mathbf{A}_{m,n}]^{\mathrm{T}}, where \mathbf{A} = [\mathbf{A}_{i,j}]. In other words, vec(A) is the vector obtained by vectorizing A in column-major order. Similarly, vec(A^T) is the vector obtained by vectorizing A in row-major order. In the context of quantum information theory, the commutation matrix is sometimes referred to as the swap matrix or swap operator. Properties: The commutation matrix is a special type of permutation matr ...
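The defining identity K(''m'',''n'') vec(A) = vec(A^T) can be checked on a small case. The following is a minimal sketch in plain Python, not a reference implementation: the helper names `vec`, `transpose`, `commutation_matrix` and `matvec` are illustrative choices, matrices are stored as lists of rows, and indices are 0-based.

```python
def vec(matrix):
    """Stack the columns of a list-of-rows matrix into one flat list."""
    rows, cols = len(matrix), len(matrix[0])
    return [matrix[i][j] for j in range(cols) for i in range(rows)]


def transpose(matrix):
    """Return the transpose of a list-of-rows matrix."""
    return [list(column) for column in zip(*matrix)]


def commutation_matrix(m, n):
    """Build K(m, n) as an mn x mn list of lists (0-based indices)."""
    size = m * n
    K = [[0] * size for _ in range(size)]
    for i in range(m):
        for j in range(n):
            # Entry A[i][j] sits at position j*m + i in vec(A)
            # and at position i*n + j in vec(A^T).
            K[i * n + j][j * m + i] = 1
    return K


def matvec(M, v):
    """Multiply a list-of-rows matrix by a flat vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in M]


A = [[1, 2, 3],
     [4, 5, 6]]  # m = 2, n = 3
K = commutation_matrix(2, 3)

print(vec(A))             # [1, 4, 2, 5, 3, 6]
print(matvec(K, vec(A)))  # [1, 2, 3, 4, 5, 6]
print(matvec(K, vec(A)) == vec(transpose(A)))  # True
```

Every row and every column of `K` contains a single 1, which is consistent with the commutation matrix being a permutation matrix.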

Mathematics

Mathematics is an area of knowledge that includes the topics of numbers, formulas and related structures, shapes and the spaces in which they are contained, and quantities and their changes. These topics are represented in modern mathematics with the major subdisciplines of number theory, algebra, geometry, and analysis, respectively. There is no general consensus among mathematicians about a common definition for their academic discipline. Most mathematical activity involves the discovery of properties of abstract objects and the use of pure reason to prove them. These objects consist of either abstractions from nature or, in modern mathematics, entities that are stipulated to have certain properties, called axioms. A ''proof'' consists of a succession of applications of deductive rules to already established results. These results include previously proved theorems, axioms, and, in case of abstraction from nature, some basic properties that are considered true starting points of ...

Linear Algebra

Linear algebra is the branch of mathematics concerning linear equations such as a_1x_1+\cdots +a_nx_n=b, linear maps such as (x_1, \ldots, x_n) \mapsto a_1x_1+\cdots +a_nx_n, and their representations in vector spaces and through matrices. Linear algebra is central to almost all areas of mathematics. For instance, linear algebra is fundamental in modern presentations of geometry, including for defining basic objects such as lines, planes and rotations. Also, functional analysis, a branch of mathematical analysis, may be viewed as the application of linear algebra to spaces of functions. Linear algebra is also used in most sciences and fields of engineering, because it allows modeling many natural phenomena, and computing efficiently with such models. For nonlinear systems, which cannot be modeled with linear algebra, it is often used for dealing with first-order approximations, using the fact that the differential of a multivariate function at a point is the linear ma ...

Matrix (mathematics)

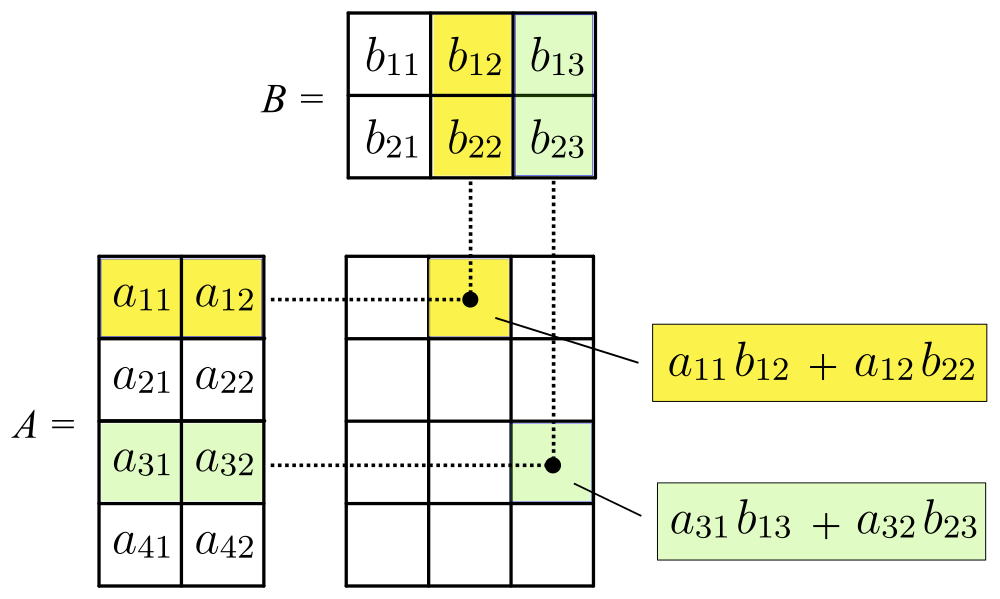

In mathematics, a matrix (plural matrices) is a rectangular array or table of numbers, symbols, or expressions, arranged in rows and columns, which is used to represent a mathematical object or a property of such an object. For example, \begin{bmatrix} 1 & 9 & -13 \\ 20 & 5 & -6 \end{bmatrix} is a matrix with two rows and three columns. This is often referred to as a "two by three matrix", a "2 × 3 matrix", or a matrix of dimension 2 × 3. Without further specifications, matrices represent linear maps, and allow explicit computations in linear algebra. Therefore, the study of matrices is a large part of linear algebra, and most properties and operations of abstract linear algebra can be expressed in terms of matrices. For example, matrix multiplication represents composition of linear maps. Not all matrices are related to linear algebra. This is, in particular, the case in graph theory, of incidence matrices, and adjacency matrices. ''This article focuses on matrices related to linear algebra, and, unle ...

Vectorization (mathematics)

In mathematics, especially in linear algebra and matrix theory, the vectorization of a matrix is a linear transformation which converts the matrix into a column vector. Specifically, the vectorization of an ''m'' × ''n'' matrix ''A'', denoted vec(''A''), is the ''mn'' × 1 column vector obtained by stacking the columns of the matrix ''A'' on top of one another: \operatorname{vec}(A) = [a_{1,1}, \ldots, a_{m,1}, a_{1,2}, \ldots, a_{m,2}, \ldots, a_{1,n}, \ldots, a_{m,n}]^{\mathrm{T}}. Here, a_{i,j} represents A(i,j) and the superscript ^{\mathrm{T}} denotes the transpose. Vectorization expresses, through coordinates, the isomorphism \mathbf{R}^{m \times n} := \mathbf{R}^m \otimes \mathbf{R}^n \cong \mathbf{R}^{mn} between these (i.e., of matrices and vectors) as vector spaces. For example, for the 2×2 matrix A = \begin{bmatrix} a & b \\ c & d \end{bmatrix}, the vectorization is \operatorname{vec}(A) = \begin{bmatrix} a \\ c \\ b \\ d \end{bmatrix}. The connection between the vectorization of ''A'' and the vectorization of its transpose is given by the commutation matrix. Compatibility with Kronecker products: The vector ...
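The 2×2 example can be reproduced with a short sketch, assuming matrices are stored as Python lists of rows. The names `vec` and `unvec` are illustrative; `unvec` is included only to show that stacking the columns loses no information, in line with the isomorphism between matrices and vectors.

```python
def vec(matrix):
    """Stack the columns of a list-of-rows matrix into one flat list."""
    rows, cols = len(matrix), len(matrix[0])
    return [matrix[i][j] for j in range(cols) for i in range(rows)]


def unvec(vector, rows, cols):
    """Inverse of vec: rebuild the rows x cols matrix from stacked columns."""
    # With 0-based indices, entry (i, j) is stored at position j*rows + i.
    return [[vector[j * rows + i] for j in range(cols)] for i in range(rows)]


A = [["a", "b"],
     ["c", "d"]]

print(vec(A))               # ['a', 'c', 'b', 'd']
print(unvec(vec(A), 2, 2))  # [['a', 'b'], ['c', 'd']]
```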

Transpose

In linear algebra, the transpose of a matrix is an operator which flips a matrix over its diagonal; that is, it switches the row and column indices of the matrix A by producing another matrix, often denoted by A^T (among other notations). The transpose of a matrix was introduced in 1858 by the British mathematician Arthur Cayley. In the case of a logical matrix representing a binary relation R, the transpose corresponds to the converse relation R^T. Transpose of a matrix. Definition: The transpose of a matrix A, denoted by A^T (among other notations), may be constructed by any one of the following methods: (1) reflect A over its main diagonal (which runs from top-left to bottom-right) to obtain A^T; (2) write the rows of A as the columns of A^T; (3) write the columns of A as the rows of A^T. Formally, the ''i''-th row, ''j''-th column element of A^T is the ''j''-th row, ''i''-th column element of A: \left[\mathbf{A}^{\mathrm{T}}\right]_{ij} = \left[\mathbf{A}\right]_{ji}. If A is an ''m'' × ''n'' matrix, then A^T is an ''n'' × ''m'' matrix. In the case of square matrices, ...

Column Vector

In linear algebra, a column vector with m elements is an m \times 1 matrix consisting of a single column of m entries, for example, \boldsymbol{x} = \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_m \end{bmatrix}. Similarly, a row vector is a 1 \times n matrix for some n, consisting of a single row of n entries, \boldsymbol{a} = \begin{bmatrix} a_1 & a_2 & \dots & a_n \end{bmatrix}. (Throughout this article, boldface is used for both row and column vectors.) The transpose (indicated by T) of any row vector is a column vector, and the transpose of any column vector is a row vector: \begin{bmatrix} x_1 \; x_2 \; \dots \; x_m \end{bmatrix}^{\mathrm{T}} = \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_m \end{bmatrix} and \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_m \end{bmatrix}^{\mathrm{T}} = \begin{bmatrix} x_1 \; x_2 \; \dots \; x_m \end{bmatrix}. The set of all row vectors with ''n'' entries in a given field (such as the real numbers) forms an ''n''-dimensional vector space; similarly, the set of all column vectors with ''m'' entries forms an ''m''-dimensional vector space. The space of row vectors with ''n'' entries can b ...

Row- And Column-major Order

In computing, row-major order and column-major order are methods for storing multidimensional arrays in linear storage such as random access memory. The difference between the orders lies in which elements of an array are contiguous in memory. In row-major order, the consecutive elements of a row reside next to each other, whereas the same holds true for consecutive elements of a column in column-major order. While the terms allude to the rows and columns of a two-dimensional array, i.e. a matrix, the orders can be generalized to arrays of any dimension by noting that the terms row-major and column-major are equivalent to lexicographic and colexicographic orders, respectively. Data layout is critical for correctly passing arrays between programs written in different programming languages. It is also important for performance when traversing an array because modern CPUs process sequential data more efficiently than nonsequential data. This is primarily due to CPU caching which ex ...
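The two layouts differ only in how a pair of indices is mapped to a position in linear storage. The sketch below uses hypothetical helper names (`row_major_offset`, `col_major_offset`) and 0-based indices to lay out the same 2 × 3 matrix both ways.

```python
def row_major_offset(i, j, n_rows, n_cols):
    """Linear position of entry (i, j) when rows are stored contiguously."""
    return i * n_cols + j


def col_major_offset(i, j, n_rows, n_cols):
    """Linear position of entry (i, j) when columns are stored contiguously."""
    return j * n_rows + i


n_rows, n_cols = 2, 3
matrix = [[11, 12, 13],
          [21, 22, 23]]

row_major = [None] * (n_rows * n_cols)
col_major = [None] * (n_rows * n_cols)
for i in range(n_rows):
    for j in range(n_cols):
        row_major[row_major_offset(i, j, n_rows, n_cols)] = matrix[i][j]
        col_major[col_major_offset(i, j, n_rows, n_cols)] = matrix[i][j]

print(row_major)  # [11, 12, 13, 21, 22, 23]
print(col_major)  # [11, 21, 12, 22, 13, 23]
```

In the row-major list the entries of each row are adjacent, while in the column-major list the entries of each column are adjacent.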

Quantum Information

Quantum information is the information of the state of a quantum system. It is the basic entity of study in quantum information theory, and can be manipulated using quantum information processing techniques. Quantum information refers to both the technical definition in terms of Von Neumann entropy and the general computational term. It is an interdisciplinary field that involves quantum mechanics, computer science, information theory, philosophy and cryptography among other fields. Its study is also relevant to disciplines such as cognitive science, psychology and neuroscience. Its main focus is in extracting information from matter at the microscopic scale. Observation in science is one of the most important ways of acquiring information and measurement is required in order to quantify the observation, making this crucial to the scientific method. In quantum mechanics, due to the uncertainty principle, non-commuting observables cannot be precisely measured simultaneously, as ...

Quantum Logic Gate

In quantum computing and specifically the quantum circuit model of computation, a quantum logic gate (or simply quantum gate) is a basic quantum circuit operating on a small number of qubits. They are the building blocks of quantum circuits, like classical logic gates are for conventional digital circuits. Unlike many classical logic gates, quantum logic gates are reversible. It is possible to perform classical computing using only reversible gates. For example, the reversible Toffoli gate can implement all Boolean functions, often at the cost of having to use ancilla bits. The Toffoli gate has a direct quantum equivalent, showing that quantum circuits can perform all operations performed by classical circuits. Quantum gates are unitary operators, and are described as unitary matrices relative to some basis. Usually we use the ''computational basis'', which unless we compare it with something, just means that for a ''d''-level quantum system (such as a qubit, a quantum register ...

Permutation Matrix

In mathematics, particularly in matrix theory, a permutation matrix is a square binary matrix that has exactly one entry of 1 in each row and each column and 0s elsewhere. Each such matrix, say P, represents a permutation of ''m'' elements and, when used to multiply another matrix, say A, results in permuting the rows (when pre-multiplying, to form PA) or columns (when post-multiplying, to form AP) of the matrix A. Definition: Given a permutation \pi of ''m'' elements, \pi : \lbrace 1, \ldots, m \rbrace \to \lbrace 1, \ldots, m \rbrace, represented in two-line form by \begin{pmatrix} 1 & 2 & \cdots & m \\ \pi(1) & \pi(2) & \cdots & \pi(m) \end{pmatrix}, there are two natural ways to associate the permutation with a permutation matrix; namely, starting with the ''m'' × ''m'' identity matrix, I_m, either permute the columns or permute the rows, according to \pi. Both methods of defining permutation matrices appear in the literature and the properties expressed in one representation can be easily converted to th ...
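As an illustration, the sketch below picks one of the two conventions, permuting the rows of the identity matrix, so that row ''i'' of P is row π(''i'') of the identity. This choice, the 0-based indexing, and the helper names `permutation_matrix` and `matmul` are assumptions made for the example; the other convention yields the transpose of this matrix.

```python
def permutation_matrix(pi):
    """Return P with P[i][pi[i]] = 1 (0-based), i.e. identity rows permuted by pi."""
    m = len(pi)
    return [[1 if pi[i] == k else 0 for k in range(m)] for i in range(m)]


def matmul(X, Y):
    """Multiply two list-of-rows matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)]
            for row in X]


pi = [2, 0, 1]  # 0-based: 0 -> 2, 1 -> 0, 2 -> 1
P = permutation_matrix(pi)

A = [[1, 2],
     [3, 4],
     [5, 6]]
B = [[1, 2, 3],
     [4, 5, 6]]

print(P)             # [[0, 0, 1], [1, 0, 0], [0, 1, 0]]
print(matmul(P, A))  # rows permuted:    [[5, 6], [1, 2], [3, 4]]
print(matmul(B, P))  # columns permuted: [[2, 3, 1], [5, 6, 4]]
```

Pre-multiplying by `P` rearranges the rows of `A`, and post-multiplying rearranges the columns of `B`, matching the description above.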

Orthogonal Matrix

In linear algebra, an orthogonal matrix, or orthonormal matrix, is a real square matrix whose columns and rows are orthonormal vectors. One way to express this is Q^{\mathrm{T}} Q = Q Q^{\mathrm{T}} = I, where Q^{\mathrm{T}} is the transpose of Q and I is the identity matrix. This leads to the equivalent characterization: a matrix Q is orthogonal if its transpose is equal to its inverse: Q^{\mathrm{T}} = Q^{-1}, where Q^{-1} is the inverse of Q. An orthogonal matrix Q is necessarily invertible (with inverse Q^{-1} = Q^{\mathrm{T}}), unitary (Q^{-1} = Q^{*}), where Q^{*} is the Hermitian adjoint (conjugate transpose) of Q, and therefore normal (Q^{*} Q = Q Q^{*}) over the real numbers. The determinant of any orthogonal matrix is either +1 or −1. As a linear transformation, an orthogonal matrix preserves the inner product of vectors, and therefore acts as an isometry of Euclidean space, such as a rotation, reflection or rotoreflection. In other words, it is a unitary transformation. The set of ''n'' × ''n'' orthogonal matrices, under multiplication, forms the group O(''n''), known as the orthogonal gr ...
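The condition Q^T Q = I can be tested numerically. The following is a minimal sketch using only the Python standard library; the helper names (`transpose`, `matmul`, `is_orthogonal`, `det2`) and the tolerance are illustrative assumptions. For a square matrix, Q^T Q = I already implies Q Q^T = I, so only one product is checked.

```python
import math


def transpose(M):
    """Return the transpose of a list-of-rows matrix."""
    return [list(column) for column in zip(*M)]


def matmul(X, Y):
    """Multiply two list-of-rows matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)]
            for row in X]


def is_orthogonal(Q, tol=1e-12):
    """Check Q^T Q = I entry by entry, up to an absolute tolerance."""
    n = len(Q)
    product = matmul(transpose(Q), Q)
    return all(
        math.isclose(product[i][j], 1.0 if i == j else 0.0, abs_tol=tol)
        for i in range(n)
        for j in range(n)
    )


def det2(M):
    """Determinant of a 2 x 2 matrix."""
    return M[0][0] * M[1][1] - M[0][1] * M[1][0]


theta = math.pi / 6
rotation = [[math.cos(theta), -math.sin(theta)],
            [math.sin(theta), math.cos(theta)]]
reflection = [[1, 0],
              [0, -1]]
shear = [[1, 1],
         [0, 1]]

print(is_orthogonal(rotation))    # True
print(is_orthogonal(reflection))  # True
print(is_orthogonal(shear))       # False
print(det2(reflection))           # -1
```

The rotation has determinant close to +1 and the reflection has determinant −1, in agreement with the statement that the determinant of an orthogonal matrix is either +1 or −1.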
