|

Cache Pollution

Cache pollution describes situations where an executing computer program loads data into CPU cache unnecessarily, thus causing other useful data to be evicted from the cache into lower levels of the memory hierarchy, degrading performance. For example, in a multi-core processor, one core may replace the blocks fetched by other cores into shared cache, or prefetched blocks may replace demand-fetched blocks from the cache. Example Consider the following illustration: T = T + 1; for i in 0..sizeof(CACHE) C = C + 1; T = T + C izeof(CACHE)-1 (The assumptions here are that the cache is composed of only one level, it is unlocked, the replacement policy is pseudo-LRU, all data is cacheable, the set associativity of the cache is N (where N > 1), and at most one processor register is available to contain program values). Right before the loop starts, T will be fetched from memory into cache, its value updated. However, as the loop executes, because the number of data elem ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Computer Program

A computer program is a sequence or set of instructions in a programming language for a computer to Execution (computing), execute. It is one component of software, which also includes software documentation, documentation and other intangible components. A ''computer program'' in its human-readable form is called source code. Source code needs another computer program to Execution (computing), execute because computers can only execute their native machine instructions. Therefore, source code may be Translator (computing), translated to machine instructions using a compiler written for the language. (Assembly language programs are translated using an Assembler (computing), assembler.) The resulting file is called an executable. Alternatively, source code may execute within an interpreter (computing), interpreter written for the language. If the executable is requested for execution, then the operating system Loader (computing), loads it into Random-access memory, memory and ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Cache Control Instruction

In computing, a cache control instruction is a hint embedded in the instruction stream of a processor intended to improve the performance of hardware caches, using foreknowledge of the memory access pattern supplied by the programmer or compiler. They may reduce cache pollution, reduce bandwidth requirement, bypass latencies, by providing better control over the working set. Most cache control instructions do not affect the semantics of a program, although some can. Examples Several such instructions, with variants, are supported by several processor instruction set architectures, such as ARM, MIPS, PowerPC, and x86. Prefetch Also termed ''data cache block touch'', the effect is to request loading the cache line associated with a given address. This is performed by the PREFETCH instruction in the x86 instruction set. Some variants bypass higher levels of the cache hierarchy, which is useful in a 'streaming' context for data that is traversed once, rather than held in the w ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Memory Wall

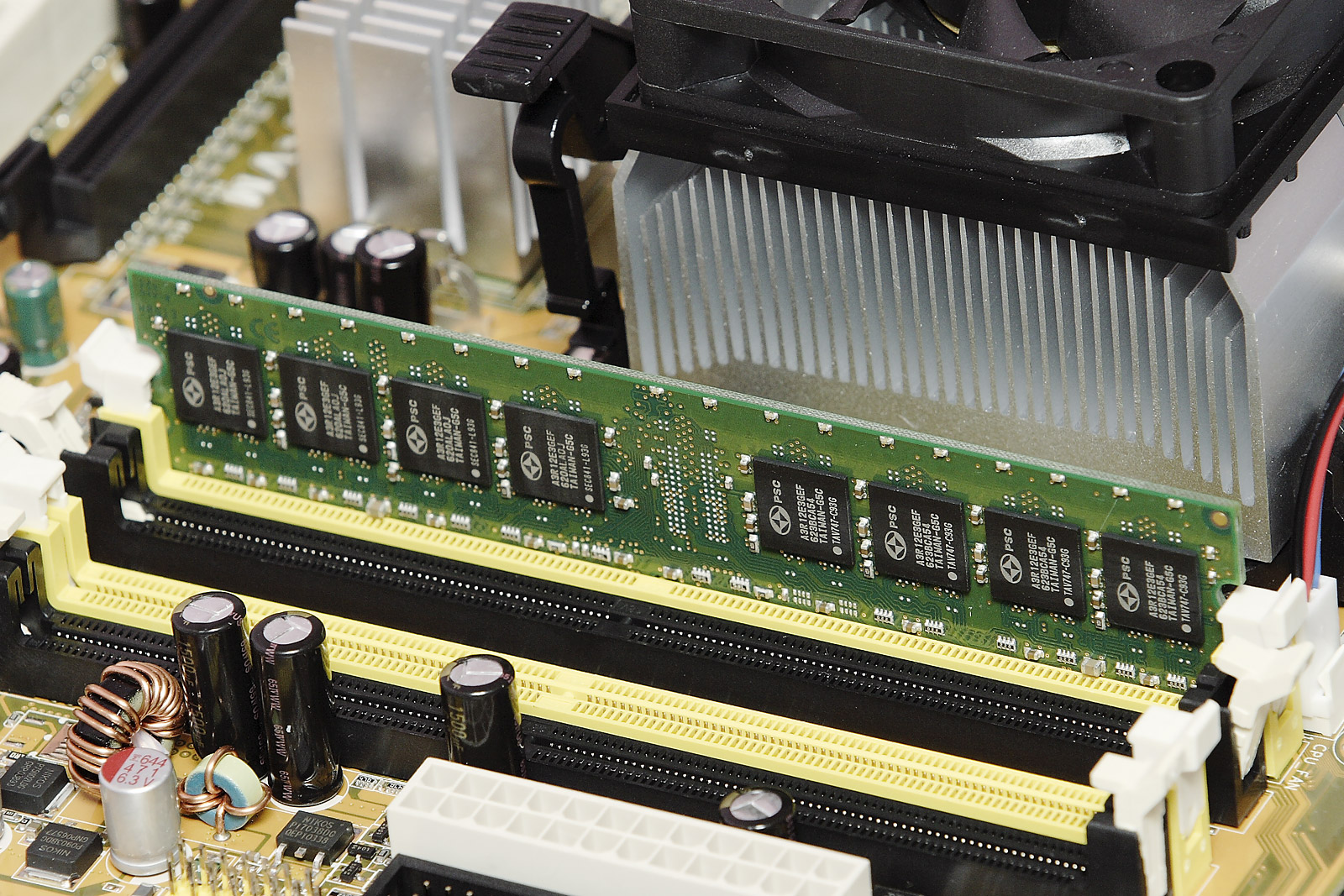

Random-access memory (RAM; ) is a form of electronic computer memory that can be read and changed in any order, typically used to store working data and machine code. A random-access memory device allows data items to be read or written in almost the same amount of time irrespective of the physical location of data inside the memory, in contrast with other direct-access data storage media (such as hard disks and magnetic tape), where the time required to read and write data items varies significantly depending on their physical locations on the recording medium, due to mechanical limitations such as media rotation speeds and arm movement. In today's technology, random-access memory takes the form of integrated circuit (IC) chips with MOS (metal–oxide–semiconductor) memory cells. RAM is normally associated with volatile types of memory where stored information is lost if power is removed. The two main types of volatile random-access semiconductor memory are static r ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Memory Access Pattern

In computing, a memory access pattern or IO access pattern is the pattern with which a system or program reads and writes memory on secondary storage. These patterns differ in the level of locality of reference and drastically affect cache performance, and also have implications for the approach to parallelism and distribution of workload in shared memory systems. Further, cache coherency issues can affect multiprocessor performance, which means that certain memory access patterns place a ceiling on parallelism (which manycore approaches seek to break). Computer memory is usually described as " random access", but traversals by software will still exhibit patterns that can be exploited for efficiency. Various tools exist to help system designers and programmers understand, analyse and improve the memory access pattern, including VTune and Vectorization Advisor, including tools to address GPU memory access patterns. Memory access patterns also have implications for securi ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Cache Eviction

A CPU cache is a hardware cache used by the central processing unit (CPU) of a computer to reduce the average cost (time or energy) to access data (computer science), data from the main memory. A cache is a smaller, faster memory, located closer to a processor core, which stores copies of the data from frequently used main memory locations. Most CPUs have a hierarchy of multiple cache #MULTILEVEL, levels (L1, L2, often L3, and rarely even L4), with different instruction-specific and data-specific caches at level 1. The cache memory is typically implemented with static random-access memory (SRAM), in modern CPUs by far the largest part of them by chip area, but SRAM is not always used for all levels (of I- or D-cache), or even any level, sometimes some latter or all levels are implemented with eDRAM. Other types of caches exist (that are not counted towards the "cache size" of the most important caches mentioned above), such as the translation lookaside buffer (TLB) which is part of ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

PowerPC

PowerPC (with the backronym Performance Optimization With Enhanced RISC – Performance Computing, sometimes abbreviated as PPC) is a reduced instruction set computer (RISC) instruction set architecture (ISA) created by the 1991 Apple Inc., Apple–IBM–Motorola alliance, known as AIM alliance, AIM. PowerPC, as an evolving instruction set, has been named Power ISA since 2006, while the old name lives on as a trademark for some implementations of Power Architecture–based processors. Originally intended for personal computers, the architecture is well known for being used by Apple's desktop and laptop lines from 1994 until 2006, and in several videogame consoles including Microsoft's Xbox 360, Sony's PlayStation 3, and Nintendo's GameCube, Wii, and Wii U. PowerPC was also used for the Curiosity (rover), Curiosity and Perseverance (rover), Perseverance rovers on Mars and a variety of satellites. It has since become a niche architecture for personal computers, particularly with A ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Operating System

An operating system (OS) is system software that manages computer hardware and software resources, and provides common daemon (computing), services for computer programs. Time-sharing operating systems scheduler (computing), schedule tasks for efficient use of the system and may also include accounting software for cost allocation of Scheduling (computing), processor time, mass storage, peripherals, and other resources. For hardware functions such as input and output and memory allocation, the operating system acts as an intermediary between programs and the computer hardware, although the application code is usually executed directly by the hardware and frequently makes system calls to an OS function or is interrupted by it. Operating systems are found on many devices that contain a computerfrom cellular phones and video game consoles to web servers and supercomputers. , Android (operating system), Android is the most popular operating system with a 46% market share, followed ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Main Memory

Computer data storage or digital data storage is a technology consisting of computer components and recording media that are used to retain digital data. It is a core function and fundamental component of computers. The central processing unit (CPU) of a computer is what manipulates data by performing computations. In practice, almost all computers use a storage hierarchy, which puts fast but expensive and small storage options close to the CPU and slower but less expensive and larger options further away. Generally, the fast technologies are referred to as "memory", while slower persistent technologies are referred to as "storage". Even the first computer designs, Charles Babbage's Analytical Engine and Percy Ludgate's Analytical Machine, clearly distinguished between processing and memory (Babbage stored numbers as rotations of gears, while Ludgate stored numbers as displacements of rods in shuttles). This distinction was extended in the Von Neumann architecture, wh ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

CPU Cache

A CPU cache is a hardware cache used by the central processing unit (CPU) of a computer to reduce the average cost (time or energy) to access data from the main memory. A cache is a smaller, faster memory, located closer to a processor core, which stores copies of the data from frequently used main memory locations. Most CPUs have a hierarchy of multiple cache levels (L1, L2, often L3, and rarely even L4), with different instruction-specific and data-specific caches at level 1. The cache memory is typically implemented with static random-access memory (SRAM), in modern CPUs by far the largest part of them by chip area, but SRAM is not always used for all levels (of I- or D-cache), or even any level, sometimes some latter or all levels are implemented with eDRAM. Other types of caches exist (that are not counted towards the "cache size" of the most important caches mentioned above), such as the translation lookaside buffer (TLB) which is part of the memory management unit (M ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Computer Bus

In computer architecture, a bus (historically also called a data highway or databus) is a communication system that transfers data between components inside a computer or between computers. It encompasses both hardware (e.g., wires, optical fiber) and software, including communication protocols. At its core, a bus is a shared physical pathway, typically composed of wires, traces on a circuit board, or busbars, that allows multiple devices to communicate. To prevent conflicts and ensure orderly data exchange, buses rely on a communication protocol to manage which device can transmit data at a given time. Buses are categorized based on their role, such as system buses (also known as internal buses, internal data buses, or memory buses) connecting the CPU and memory. Expansion buses, also called peripheral buses, extend the system to connect additional devices, including peripherals. Examples of widely used buses include PCI Express (PCIe) for high-speed internal connectio ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |