
Active Learning (machine Learning)

Active learning is a special case of machine learning in which a learning algorithm can interactively query a user (or some other information source) to label new data points with the desired outputs. In the statistics literature, it is sometimes also called optimal experimental design. The information source is also called the "teacher" or "oracle". There are situations in which unlabeled data is abundant but manual labeling is expensive. In such a scenario, learning algorithms can actively query the user/teacher for labels. This type of iterative supervised learning is called active learning. Since the learner chooses the examples, the number of examples needed to learn a concept can often be much lower than the number required in normal supervised learning. With this approach, there is a risk that the algorithm is overwhelmed by uninformative examples. Recent developments are dedicated to multi-label active learning, hybrid active learning and active learning in a single-pass (on-line) c ...
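The query loop described above can be sketched for a toy problem. This is a minimal illustration, not a reference implementation: the 1-D threshold classifier, the `oracle` function with its hidden threshold of 0.62, and the helper name `active_learn_threshold` are all assumptions introduced here. The learner repeatedly asks for the label of the pool point it is least sure about (the one nearest the middle of the still-ambiguous interval).

```python
import random

def oracle(x, true_threshold=0.62):
    """Hypothetical labeler: returns 1 if x is at or above a hidden threshold."""
    return 1 if x >= true_threshold else 0

def active_learn_threshold(pool, query, budget):
    """Pool-based uncertainty sampling for a 1-D threshold classifier.

    lo is the largest point known to have label 0 and hi the smallest point
    known to have label 1; only pool points strictly between them are still
    ambiguous, and the most uncertain one is closest to the interval midpoint.
    """
    lo, hi = float("-inf"), float("inf")
    unlabeled = sorted(pool)
    queries = 0
    while queries < budget:
        candidates = [x for x in unlabeled if lo < x < hi]
        if not candidates:
            break  # every pool point is already classified consistently
        if lo == float("-inf") or hi == float("inf"):
            # No finite interval yet: fall back to the median candidate.
            target = candidates[len(candidates) // 2]
        else:
            mid = (lo + hi) / 2
            target = min(candidates, key=lambda x: abs(x - mid))
        label = query(target)  # the only place a label is requested
        queries += 1
        unlabeled.remove(target)
        if label == 1:
            hi = target
        else:
            lo = target
    return lo, hi, queries

rng = random.Random(0)
pool = [rng.random() for _ in range(1000)]
lo, hi, n_queries = active_learn_threshold(pool, oracle, budget=50)
print(f"threshold lies in ({lo:.4f}, {hi:.4f}] after {n_queries} label queries")
```

Labeling the whole pool would cost 1000 queries; here the learner stops as soon as no ambiguous pool point remains, which is the saving the entry refers to.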

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that "learn", that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or infeasible to develop conventional algorithms to perform the needed tasks (Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F., "Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning", IEEE Transactions on Vehicular Technology, 2020). A subset of machine learning is closely related to computational statistics, which focuses on making predicti ...

Optimal Experimental Design

In the design of experiments, optimal designs (or optimum designs) are a class of experimental designs that are optimal with respect to some statistical criterion. The creation of this field of statistics has been credited to Danish statistician Kirstine Smith. In the design of experiments for estimating statistical models, optimal designs allow parameters to be estimated without bias and with minimum variance. A non-optimal design requires a greater number of experimental runs to estimate the parameters with the same precision as an optimal design. In practical terms, optimal experiments can reduce the costs of experimentation. The optimality of a design depends on the statistical model and is assessed with respect to a statistical criterion, which is related to the variance-matrix of the estimator. Specifying an appropriate model and specifying a suitable criterion function both require understanding of statistical theory and practical knowledge with designing exper ...

Incremental Learning

In computer science, incremental learning is a method of machine learning in which input data is continuously used to extend the existing model's knowledge, i.e. to further train the model. It represents a dynamic technique of supervised learning and unsupervised learning that can be applied when training data becomes available gradually over time or its size exceeds system memory limits. Algorithms that can facilitate incremental learning are known as incremental machine learning algorithms. Many traditional machine learning algorithms inherently support incremental learning. Other algorithms can be adapted to facilitate incremental learning. Examples of incremental algorithms include decision trees (IDE4, ID5R and gaenari), decision rules, artificial neural networks (RBF networks, Learn++, Fuzzy ARTMAP, TopoART (Marko Tscherepanow, Marco Kortkamp, and Marc Kammer, A Hierarchical ART Network for the Stable Incremental Learning of Topological Structures and Associations from Noisy Dat ...

Online Machine Learning

In computer science, online machine learning is a method of machine learning in which data becomes available in a sequential order and is used to update the best predictor for future data at each step, as opposed to batch learning techniques, which generate the best predictor by learning on the entire training data set at once. Online learning is a common technique in areas of machine learning where it is computationally infeasible to train over the entire dataset, which calls for out-of-core algorithms. It is also used in situations where the algorithm must dynamically adapt to new patterns in the data, or when the data itself is generated as a function of time, e.g., stock price prediction. Online learning algorithms may be prone to catastrophic interference, a problem that can be addressed by incremental learning approaches. Introduction: In the setting of supervised learning, a function $f : X \to Y$ is to be learned, where $X$ is thought of as a ...
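The "update the predictor at each step" idea can be sketched with a linear model trained by stochastic gradient descent on squared loss. This is one possible online learner, chosen here for illustration; the function name `online_sgd_step`, the learning rate, and the synthetic stream are assumptions, not part of the entry.

```python
import random

def online_sgd_step(weights, x, y, lr=0.05):
    """Update a linear predictor from a single (x, y) example.

    Uses the gradient of the squared loss 0.5 * (w . x - y)**2, so the whole
    data set never has to be held in memory at once.
    """
    prediction = sum(w * xi for w, xi in zip(weights, x))
    error = prediction - y
    return [w - lr * error * xi for w, xi in zip(weights, x)]

# Synthetic stream (an assumption for the demo): y = 2*x1 - 1*x2, no noise.
rng = random.Random(0)
weights = [0.0, 0.0]
for _ in range(2000):
    x = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
    y = 2.0 * x[0] - 1.0 * x[1]
    weights = online_sgd_step(weights, x, y)  # each example is seen once, then discarded

print([round(w, 3) for w in weights])
```

A batch learner would instead solve for the weights using all 2000 examples together; the online version trades that for constant memory and the ability to keep adapting as new data arrives.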

Crowdsourcing

Crowdsourcing involves a large group of dispersed participants contributing or producing goods or services (including ideas, votes, micro-tasks, and finances) for payment or as volunteers. Contemporary crowdsourcing often involves digital platforms to attract and divide work between participants to achieve a cumulative result. Crowdsourcing is not limited to online activity, however, and there are various historical examples of crowdsourcing. The word crowdsourcing is a portmanteau of "crowd" and "outsourcing". In contrast to outsourcing, crowdsourcing usually involves less specific and more public groups of participants. Advantages of using crowdsourcing include lowered costs, improved speed, improved quality, increased flexibility, and/or increased scalability of the work, as well as promoting diversity. Crowdsourcing methods include competitions, virtual labor markets, open online collaboration and data donation. Some forms of crowdsourcing, such as in "idea competiti ...

Amazon Mechanical Turk

Amazon Mechanical Turk (MTurk) is a crowdsourcing website for businesses to hire remotely located "crowdworkers" to perform discrete on-demand tasks that computers are currently unable to do. It is operated under Amazon Web Services, and is owned by Amazon. Employers (known as "requesters") post jobs known as "Human Intelligence Tasks" (HITs), such as identifying specific content in an image or video, writing product descriptions, or answering survey questions. Workers, colloquially known as "Turkers" or "crowdworkers", browse among existing jobs and complete them in exchange for a fee set by the employer. To place jobs, the requesting programs use an open application programming interface (API), or the more limited MTurk Requester site. As of April 2019, Requesters could register from 49 approved countries. History: The service was conceived by Venky Harinarayan in a US patent disclosure in 2001. Amazon coined the term "artificial artificial intelli ...

Generalization Error

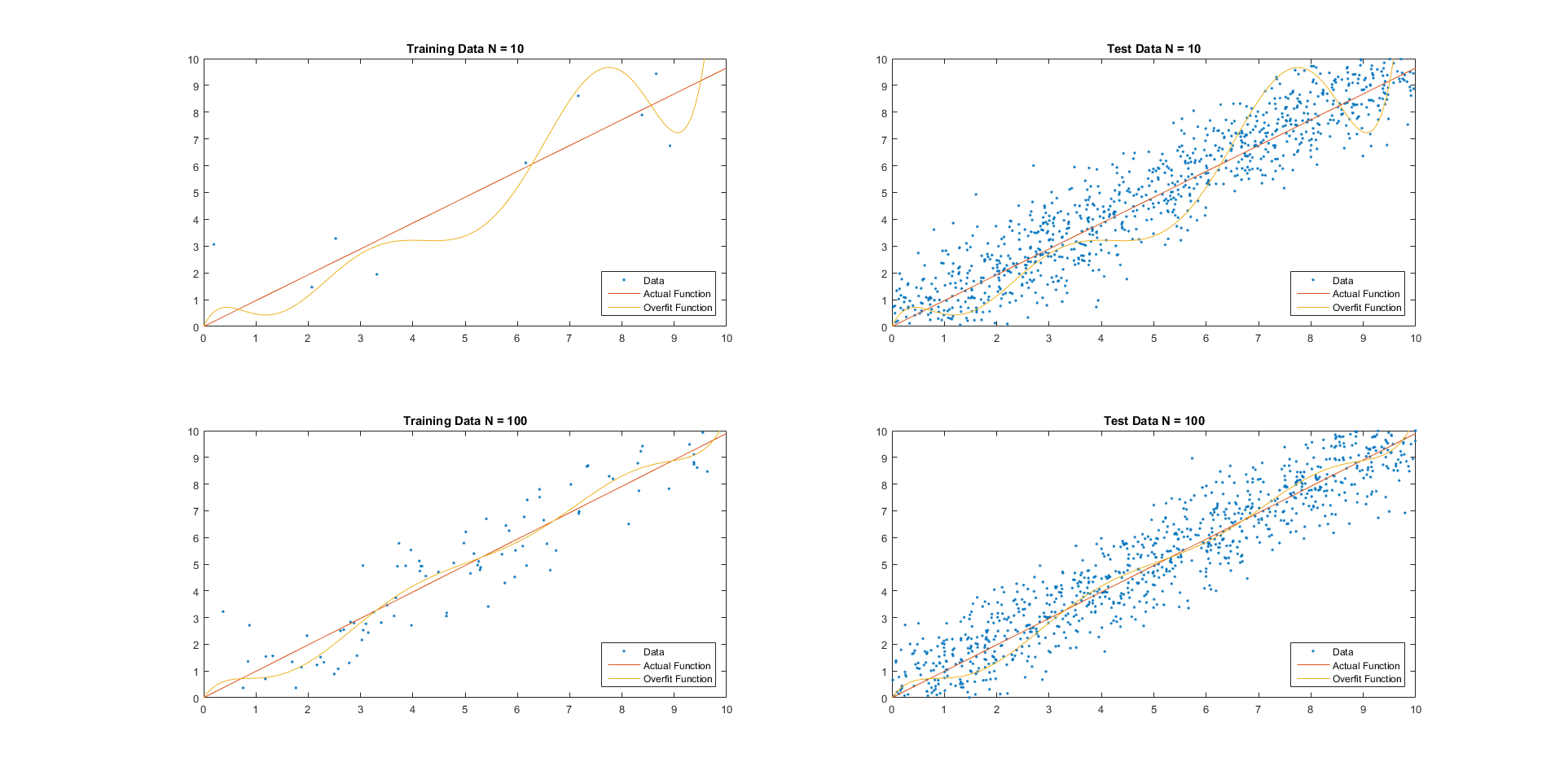

For supervised learning applications in machine learning and statistical learning theory, generalization error (also known as the out-of-sample error or the risk) is a measure of how accurately an algorithm is able to predict outcome values for previously unseen data. Because learning algorithms are evaluated on finite samples, the evaluation of a learning algorithm may be sensitive to sampling error. As a result, measurements of prediction error on the current data may not provide much information about predictive ability on new data. Generalization error can be minimized by avoiding overfitting in the learning algorithm. The performance of a machine learning algorithm is visualized by plots that show values of estimates of the generalization error through the learning process, which are called learning curves. Definition: In a learning problem, the goal is to develop a function $f_n(\vec{x})$ that predicts output values $y$ for each input datum $\vec{x}$. The subscript $n$ indicates tha ...

Feature (machine Learning)

In machine learning and pattern recognition, a feature is an individual measurable property or characteristic of a phenomenon. Choosing informative, discriminating and independent features is a crucial element of effective algorithms in pattern recognition, classification and regression. Features are usually numeric, but structural features such as strings and graphs are used in syntactic pattern recognition. The concept of "feature" is related to that of explanatory variable used in statistical techniques such as linear regression. Classification: A set of numeric features can be conveniently described by a feature vector. One way to achieve binary classification is using a linear predictor function (related to the perceptron) with a feature vector as input. The method consists of calculating the scalar product between the feature vector and a vector of weights, qualifying those observations whose result exceeds a threshold. Algorithms for classification from a feature vector incl ...
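The linear predictor described above (scalar product of feature vector and weights, compared with a threshold) can be written in a few lines. The function name `linear_classify` and the example numbers are illustrative assumptions.

```python
def linear_classify(features, weights, threshold=0.0):
    """Return True when the weighted sum of the features exceeds the threshold."""
    score = sum(f * w for f, w in zip(features, weights))
    return score > threshold

weights = [0.5, -0.25]  # hypothetical learned weights
accepted = linear_classify([2.0, 1.0], weights, threshold=0.5)  # score = 0.75
rejected = linear_classify([0.5, 2.0], weights, threshold=0.5)  # score = -0.25
print(accepted, rejected)
```

How the weights and threshold are obtained (for example by perceptron training) is outside the scope of this sketch.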

Conformal Prediction

Conformal prediction (CP) is a statistical technique for producing prediction sets without assumptions on the predictive algorithm (often a machine learning system), assuming only exchangeability of the data. CP works by computing a nonconformity measure, often called a score function, on previously labeled data, and using these scores to create prediction sets for a new (unlabeled) test data point. A version of CP was first proposed in 1998 by Gammerman, Vovk, and Vapnik, and several variants of conformal prediction have since been developed with different computational complexities, formal guarantees, and practical applications. Conformal prediction requires a user-specified "significance level" for which the algorithm should produce its predictions. This significance level restricts the frequency of errors that the algorithm is allowed to make. For example, a significance level of 0.1 means that the algorithm can make at most 10% erroneous predictions. To meet this requirem ...
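As a rough illustration of how scores on labeled data turn into a prediction set, the sketch below uses the split (inductive) variant for regression with absolute residuals as the nonconformity score. That choice of variant and score, the helper names, and the toy calibration data are assumptions made here; the entry itself does not prescribe them.

```python
import math

def conformal_quantile(scores, alpha):
    """Smallest score q such that at least ceil((n + 1) * (1 - alpha)) scores are <= q."""
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        return float("inf")  # too few calibration points for this significance level
    return sorted(scores)[rank - 1]

def conformal_interval(predict, calibration, x_new, alpha=0.1):
    """Prediction interval for x_new from a fitted model and held-out labeled pairs."""
    scores = [abs(y - predict(x)) for x, y in calibration]  # nonconformity scores
    q = conformal_quantile(scores, alpha)
    center = predict(x_new)
    return center - q, center + q

def predict(x):
    """Stand-in for any already-trained point predictor (hypothetical)."""
    return 2.0 * x

# Toy calibration set whose residuals are roughly 0.1, 0.2, ..., 0.9.
calibration = [(float(i), 2.0 * i + 0.1 * i) for i in range(1, 10)]
low, high = conformal_interval(predict, calibration, x_new=5.0, alpha=0.1)
print(low, high)
```

With 9 calibration points and a significance level of 0.1, the quantile is the largest residual, so the interval is the point prediction plus or minus roughly 0.9. The coverage guarantee relies on the calibration and test points being exchangeable.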

Support-vector Machine

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues (Boser et al., 1992, Guyon et al., 1993, Cortes and Vapnik, 1995, Vapnik et al., 1997), SVMs are one of the most robust prediction methods, being based on statistical learning frameworks or VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist to use SVM in a probabilistic classification setting). SVM maps training examples to points in space so as to maximise the width of the gap between the two categories. New ...

Margin (machine Learning)

In machine learning, the margin of a single data point is defined to be the distance from the data point to a decision boundary. Note that there are many distances and decision boundaries that may be appropriate for certain datasets and goals. A margin classifier is a classifier that explicitly utilizes the margin of each example while learning a classifier. There are theoretical justifications (based on the VC dimension) as to why maximizing the margin (under some suitable constraints) may be beneficial for machine learning and statistical inference algorithms. There are many hyperplanes that might classify the data. One reasonable choice as the best hyperplane is the one that represents the largest separation, or margin, between the two classes. So we choose the hyperplane so that the distance from it to the nearest data point on each side is maximized. If such a hyperplane exists, it is known as the "maximum-margin hyperplane" and the linear classifier it defines is known ...
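For the common case of a linear decision boundary and Euclidean distance (an assumption; the entry notes other distances and boundaries are possible), the margin of a point and of a labeled data set can be computed as follows. The function names and the example hyperplane are illustrative.

```python
import math

def signed_distance(point, weights, bias):
    """Signed Euclidean distance from a point to the hyperplane w . x + b = 0."""
    norm = math.sqrt(sum(w * w for w in weights))
    return (sum(w * p for w, p in zip(weights, point)) + bias) / norm

def geometric_margin(points, labels, weights, bias):
    """Smallest label-adjusted distance over the data set.

    Labels are +1 or -1; a positive result means every point lies on the
    correct side of the hyperplane.
    """
    return min(y * signed_distance(p, weights, bias) for p, y in zip(points, labels))

weights, bias = [3.0, 4.0], -5.0      # hypothetical separating hyperplane
points = [(3.0, 4.0), (0.0, 0.0)]     # distances 4.0 and -1.0 from the hyperplane
labels = [1, -1]
margin = geometric_margin(points, labels, weights, bias)
print(margin)
```

A maximum-margin method searches over `weights` and `bias` for the hyperplane that makes this quantity as large as possible.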