
Zigzag Code

In coding theory, a zigzag code is a type of linear error-correcting code. It is defined by partitioning the input data into segments of fixed size and adding a sequence of check bits to the data, where each check bit is the exclusive or of the bits in a single segment and of the previous check bit in the sequence. The code rate is high: $J/(J+1)$, where $J$ is the number of bits per segment. Its worst-case ability to correct transmission errors is very limited: in the worst case it can only detect a single bit error and cannot correct any errors. However, it works better in the soft-decision model of decoding: its regular structure allows the task of finding a maximum-likelihood decoding or a posteriori probability decodi ...
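The check-bit rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names `zigzag_check_bits` and `zigzag_code_rate` are hypothetical, and the initial "previous check bit" is assumed to be 0, which the text does not specify.

```python
from fractions import Fraction
from typing import List


def zigzag_check_bits(data: List[int], seg_len: int) -> List[int]:
    """Return one check bit per segment of `seg_len` data bits.

    Each check bit is the XOR of the bits in its segment and of the
    previous check bit (assumed to start at 0 for the first segment).
    """
    if seg_len <= 0 or len(data) % seg_len != 0:
        raise ValueError("data length must be a positive multiple of seg_len")
    checks = []
    prev = 0  # assumption: the chain of check bits starts from 0
    for start in range(0, len(data), seg_len):
        parity = prev
        for bit in data[start:start + seg_len]:
            parity ^= bit
        checks.append(parity)
        prev = parity
    return checks


def zigzag_code_rate(seg_len: int) -> Fraction:
    """Data bits divided by total bits: one check bit per segment."""
    return Fraction(seg_len, seg_len + 1)


data = [1, 0, 1, 1, 1, 1, 0, 0, 0]
print(zigzag_check_bits(data, 3))   # three segments of three bits each
print(zigzag_code_rate(3))
```

Because every segment contributes exactly one check bit, longer segments push the rate closer to 1 while spreading each check over more data bits.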

Coding Theory

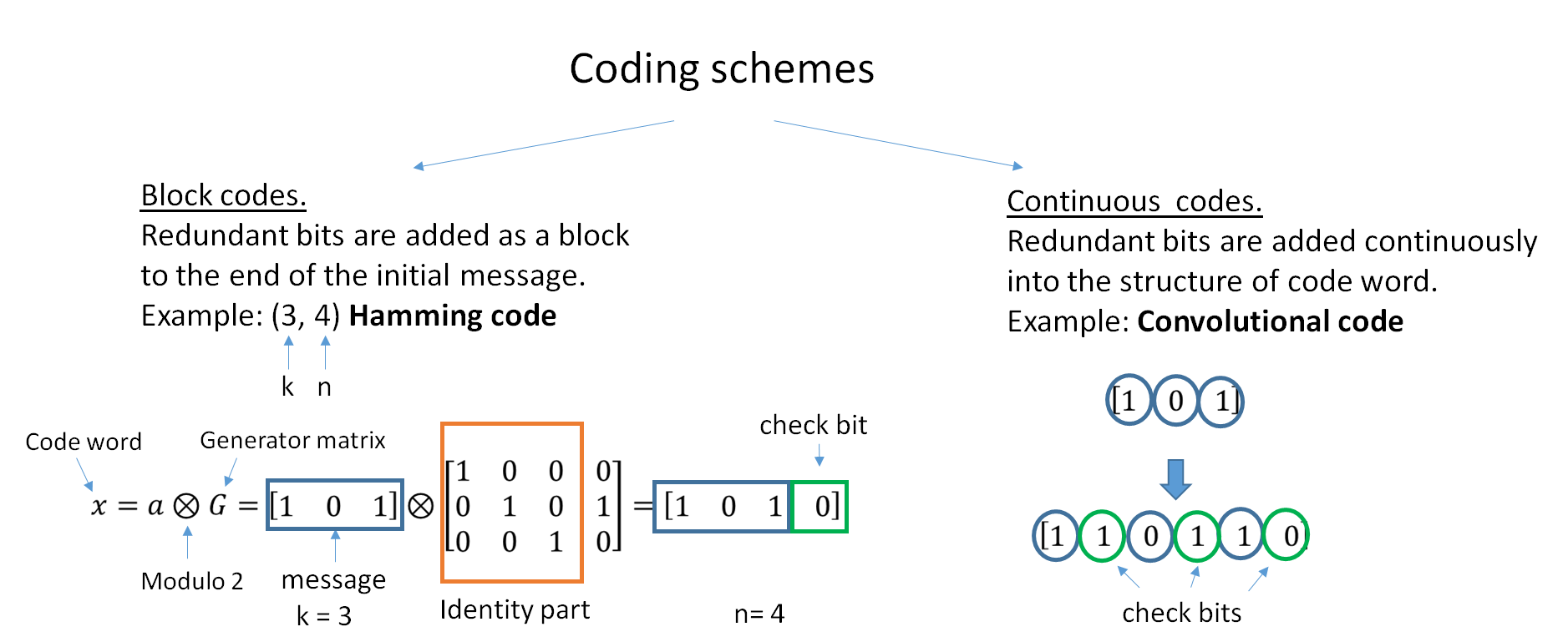

Coding theory is the study of the properties of codes and their respective fitness for specific applications. Codes are used for data compression, cryptography, error detection and correction, data transmission and data storage. Codes are studied by various scientific disciplines, such as information theory, electrical engineering, mathematics, linguistics, and computer science, for the purpose of designing efficient and reliable data transmission methods. This typically involves the removal of redundancy and the correction or detection of errors in the transmitted data. There are four types of coding: (1) data compression (or source coding), (2) error control (or channel coding), (3) cryptographic coding, and (4) line coding. Data compression attempts to remove unwanted redundancy from the data from a source in order to transmit it more efficiently. For example, ZIP data compression makes data files smaller, for purposes such as reducing Internet traffic. Data compressio ...

Linear Code

In coding theory, a linear code is an error-correcting code for which any linear combination of codewords is also a codeword. Linear codes are traditionally partitioned into block codes and convolutional codes, although turbo codes can be seen as a hybrid of these two types. Linear codes allow for more efficient encoding and decoding algorithms than other codes (cf. syndrome decoding). Linear codes are used in forward error correction and are applied in methods for transmitting symbols (e.g., bits) on a communications channel so that, if errors occur in the communication, some errors can be corrected or detected by the recipient of a message block. The codewords in a linear block code are blocks of symbols that are encoded using more symbols than the original value to be sent. A linear code of length $n$ transmits blocks containing $n$ symbols. For example, the [7,4,3] Hamming code is a linear binary code which represents 4-bit messages using 7-bit codewords. Two distinct ...
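The closure property can be checked by brute force for a small code. The sketch below assumes one particular systematic generator matrix `G` for a [7,4,3] Hamming code (other equivalent matrices exist); the helper names are illustrative only.

```python
from itertools import product

# Assumed generator matrix in systematic form [I4 | P]; one of several
# equivalent choices for a [7,4,3] Hamming code.
G = [
    (1, 0, 0, 0, 1, 1, 0),
    (0, 1, 0, 0, 1, 0, 1),
    (0, 0, 1, 0, 0, 1, 1),
    (0, 0, 0, 1, 1, 1, 1),
]


def xor_words(a, b):
    """Bitwise sum over GF(2) of two equal-length words."""
    return tuple(x ^ y for x, y in zip(a, b))


def encode(msg):
    """Encode a 4-bit message as the GF(2) combination of rows of G."""
    word = (0,) * 7
    for bit, row in zip(msg, G):
        if bit:
            word = xor_words(word, row)
    return word


codewords = {encode(m) for m in product((0, 1), repeat=4)}

# Linearity: the sum of any two codewords is again a codeword.
closed = all(xor_words(a, b) in codewords for a in codewords for b in codewords)

# For a linear code, the minimum distance equals the minimum nonzero weight.
min_distance = min(sum(c) for c in codewords if any(c))

print(len(codewords), closed, min_distance)
```

Over the binary field the only scalars are 0 and 1, so checking pairwise sums is enough to confirm closure under all linear combinations.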

Error-correcting Code

In computing, telecommunication, information theory, and coding theory, an error correction code, sometimes error correcting code (ECC), is used for controlling errors in data over unreliable or noisy communication channels. The central idea is that the sender encodes the message with redundant information in the form of an ECC. The redundancy allows the receiver to detect a limited number of errors that may occur anywhere in the message, and often to correct these errors without retransmission. The American mathematician Richard Hamming pioneered this field in the 1940s and invented the first error-correcting code in 1950: the Hamming (7,4) code. ECC contrasts with error detection in that errors that are encountered can be corrected, not simply detected. The advantage is that a system using ECC does not require a reverse channel to request retransmission of data when an error occurs. The downside is that there is a fixed overhead that is added to the message, thereby requiring a ...

IEEE Transactions On Information Theory

*IEEE Transactions on Information Theory* is a monthly peer-reviewed scientific journal published by the IEEE Information Theory Society. It covers information theory and the mathematics of communications. It was established in 1953 as *IRE Transactions on Information Theory*. The editor-in-chief is Muriel Médard (Massachusetts Institute of Technology). As of 2007, the journal allows the posting of preprints on arXiv. According to Jack van Lint, it is the leading research journal in the whole field of coding theory. A 2006 study using the PageRank network analysis algorithm found that, among hundreds of computer science-related journals, *IEEE Transactions on Information Theory* had the highest ranking and was thus deemed the most prestigious. *ACM Computing Surveys*, with the highest impact factor ...

Exclusive Or

Exclusive or, or exclusive disjunction, is a logical operation that is true if and only if its arguments differ (one is true, the other is false). It is symbolized by the prefix operator J and by infix operators such as XOR, EOR, EXOR, ⊕, and ⊻. The negation of XOR is the logical biconditional, which yields true if and only if the two inputs are the same. It gains the name "exclusive or" because the meaning of "or" is ambiguous when both operands are true; the exclusive or operator excludes that case. This is sometimes thought of as "one or the other but not both". This could be written as "A or B, but not A and B". Since it is associative, it may be considered to be an $n$-ary operator which is true if and only if an odd number of arguments are true. That is, a XOR b XOR ... may be treated as XOR(a, b, ...). Truth table: the truth table of A XOR B shows that it outputs true whenever the inputs differ. Equivalences, elimination, and introd ...
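The n-ary reading of XOR can be verified exhaustively for small argument counts. The following Python sketch uses a hypothetical helper `xor_all` that folds the binary operator over its arguments and compares the result with the "odd number of true arguments" rule.

```python
from functools import reduce
from itertools import product
from operator import xor


def xor_all(*args: bool) -> bool:
    """Fold binary XOR over all arguments; XOR of no arguments is False."""
    return reduce(xor, args, False)


# Two-argument truth table: true exactly when the inputs differ.
for a, b in product((False, True), repeat=2):
    print(a, b, xor_all(a, b))

# n-ary check: the fold is true iff an odd number of arguments are true.
odd_rule_holds = all(
    xor_all(*bits) == (sum(bits) % 2 == 1)
    for n in range(1, 6)
    for bits in product((False, True), repeat=n)
)
print(odd_rule_holds)
```

Associativity is what makes the left-to-right fold well defined: any other grouping of the same arguments gives the same result.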

Code Rate

In telecommunication and information theory, the code rate (or information rate; see Huffman, W. Cary, and Pless, Vera, *Fundamentals of Error-Correcting Codes*, Cambridge, 2003) of a forward error correction code is the proportion of the data stream that is useful (non-redundant). That is, if the code rate is $k/n$, then for every $k$ bits of useful information the coder generates a total of $n$ bits of data, of which $n-k$ are redundant. If $R$ is the gross bit rate or data signalling rate (inclusive of redundant error coding), the net bit rate (the useful bit rate exclusive of error correction codes) is $\leq R \cdot k/n$. For example: the code rate of a convolutional code will typically be 1/2, 2/3, 3/4, 5/6, 7/8, etc., corresponding to one redundant bit inserted after every single, second, third, etc., bit. The code rate of the octet-oriented Reed-Solomon block code denoted RS(204,188) is 188/204, meaning that 204 − 188 = 16 redundant octets (or bytes) are added to each block of 188 octets of useful information. A f ...
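The arithmetic for the RS(204,188) example can be worked through with exact fractions. In this sketch the function names and the gross bit rate of 204 units are illustrative assumptions, chosen only so the numbers come out cleanly.

```python
from fractions import Fraction


def rate(k: int, n: int) -> Fraction:
    """Code rate k/n: useful symbols divided by total symbols."""
    return Fraction(k, n)


def redundant_symbols(k: int, n: int) -> int:
    """Number of redundant symbols added per block."""
    return n - k


def net_bit_rate_bound(gross: Fraction, k: int, n: int) -> Fraction:
    """Upper bound R * k/n on the net bit rate for gross bit rate R."""
    return gross * rate(k, n)


rs_rate = rate(188, 204)
print(rs_rate)                                          # reduced fraction
print(redundant_symbols(188, 204))                      # octets per block
print(net_bit_rate_bound(Fraction(204), 188, 204))      # assumed R = 204
```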

Soft-decision Decoder

In information theory, a soft-decision decoder is a kind of decoding method: a class of algorithms used to decode data that has been encoded with an error correcting code. Whereas a hard-decision decoder operates on data that take on a fixed set of possible values (typically 0 or 1 in a binary code), the inputs to a soft-decision decoder may take on a whole range of values in between. This extra information indicates the reliability of each input data point, and is used to form better estimates of the original data. Therefore, a soft-decision decoder will typically perform better in the presence of corrupted data than its hard-decision counterpart. Soft-decision decoders are often used in Viterbi decoders and turbo code dec ...
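A toy comparison makes the difference concrete. The sketch below is an assumption-laden illustration rather than any standard decoder: it uses a 3-fold repetition code, maps bit 0 to +1 and bit 1 to -1, and treats the received values as real numbers. Under equal-variance Gaussian noise, summing the received values corresponds to picking the more likely bit.

```python
from typing import List


def hard_decision_decode(received: List[float]) -> int:
    """Threshold each sample to a bit first, then take a majority vote."""
    bits = [0 if r >= 0 else 1 for r in received]
    return 1 if sum(bits) > len(bits) / 2 else 0


def soft_decision_decode(received: List[float]) -> int:
    """Combine the raw samples, so confident samples outweigh weak ones."""
    return 0 if sum(received) >= 0 else 1


# Two weakly positive samples and one strongly negative sample.
received = [0.2, 0.1, -1.5]
print(hard_decision_decode(received))  # majority of thresholded bits
print(soft_decision_decode(received))  # sign of the combined evidence
```

The two decoders disagree on this input because thresholding discards the reliability information carried by the magnitude of each sample.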

Decoding Methods

In coding theory, decoding is the process of translating received messages into codewords of a given code. There have been many common methods of mapping messages to codewords. These are often used to recover messages sent over a noisy channel, such as a binary symmetric channel. Notation: $C \subset \mathbb{F}_2^n$ is considered a binary code of length $n$; $x, y$ shall be elements of $\mathbb{F}_2^n$; and $d(x,y)$ is the distance between those elements. Ideal observer decoding: given the received message $x \in \mathbb{F}_2^n$, ideal observer decoding generates the codeword $y \in C$ that maximizes $\mathbb{P}(y \text{ sent} \mid x \text{ received})$. For example, a person can choose the codeword $y$ that is most likely to be received as the message $x$ after transmission. Decoding conventions: the most likely codeword is not always unique, since there may be more than one codeword with an equal likelihood of mutating into the received message. In such a case, the sender and receiver(s) mu ...
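The need for a decoding convention shows up as soon as ties are possible. The sketch below does not implement ideal observer decoding directly; it swaps in minimum-distance decoding, which picks the codewords closest to the received word in Hamming distance $d(x,y)$ and agrees with maximum-likelihood decoding on a binary symmetric channel whose crossover probability is below 1/2. The function names and the tiny example codes are illustrative.

```python
from typing import List, Sequence


def hamming_distance(x: Sequence[int], y: Sequence[int]) -> int:
    """Number of positions in which two equal-length words differ."""
    if len(x) != len(y):
        raise ValueError("words must have the same length")
    return sum(a != b for a, b in zip(x, y))


def nearest_codewords(received: Sequence[int],
                      code: List[Sequence[int]]) -> List[Sequence[int]]:
    """Return every codeword at minimum distance from `received`.

    More than one result means a tie that a decoding convention must break.
    """
    best = min(hamming_distance(received, c) for c in code)
    return [c for c in code if hamming_distance(received, c) == best]


repetition3 = [(0, 0, 0), (1, 1, 1)]
print(nearest_codewords((0, 1, 0), repetition3))  # unique nearest codeword

repetition2 = [(0, 0), (1, 1)]
print(nearest_codewords((0, 1), repetition2))     # tie between both codewords
```

Returning all tied candidates, rather than silently picking one, leaves the choice of convention (for example, requesting retransmission or picking a fixed candidate) to the caller.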
