
Vanishing Gradient Problem

In machine learning, the vanishing gradient problem is encountered when training artificial neural networks with gradient-based learning methods and backpropagation. In such methods, during each training iteration, each of the neural network's weights receives an update proportional to the partial derivative of the error function with respect to the current weight. The problem is that in some cases the gradient will be vanishingly small, effectively preventing the weight from changing its value. In the worst case, this may completely stop the neural network from further training. As one example of the cause, traditional activation functions such as the hyperbolic tangent function have gradients in the range $(0, 1]$, and backpropagation computes gradients by the chain rule. This has the effect of multiplying $n$ of these small numbers to compute gradients of the early layers in an $n$-layer network, meaning that the gradient (error signal) decreases exponentially with $n$ while the early ...
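The exponential decay described above can be illustrated with a minimal sketch. It assumes a single path through the network with every weight set to 1 and the same pre-activation value at every layer; the helper names `tanh_grad` and `chain_rule_factor` and the value 1.5 are hypothetical choices for illustration, not part of the excerpt.

```python
import math
from typing import Sequence


def tanh_grad(z: float) -> float:
    """Derivative of tanh at z: 1 - tanh(z)^2, which lies in (0, 1]."""
    return 1.0 - math.tanh(z) ** 2


def chain_rule_factor(pre_activations: Sequence[float]) -> float:
    """Product of the per-layer tanh derivatives along one path.

    Assumption: all weights on the path equal 1, so only the activation
    derivatives contribute to the chain-rule product.
    """
    product = 1.0
    for z in pre_activations:
        product *= tanh_grad(z)
    return product


if __name__ == "__main__":
    # Hypothetical pre-activation of 1.5 at every layer.
    for depth in (1, 5, 10, 20):
        print(depth, chain_rule_factor([1.5] * depth))
```

Each extra layer multiplies the factor by another number no larger than 1, so the printed values shrink rapidly as the depth grows.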

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks (Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F., "Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning", IEEE Transactions on Vehicular Technology, 2020). A subset of machine learning is closely related to computational statistics, which focuses on making predicti ...

Operator Norm

In mathematics, the operator norm measures the "size" of certain linear operators by assigning each a real number called its operator norm. Formally, it is a norm defined on the space of bounded linear operators between two given normed vector spaces. Introduction and definition: given two normed vector spaces $V$ and $W$ (over the same base field, either the real numbers $\mathbb{R}$ or the complex numbers $\mathbb{C}$), a linear map $A : V \to W$ is continuous if and only if there exists a real number $c$ such that $\|Av\| \leq c \|v\|$ for all $v \in V$. The norm on the left is the one in $W$ and the norm on the right is the one in $V$. Intuitively, the continuous operator $A$ never increases the length of any vector by more than a factor of $c$. Thus the image of a bounded set under a continuous operator is also bounded. Because of this property, the continuous linear operators are also known as bounded operators. In order to "measure the size" of $A$, one can take the infimum of the numbers $c$ such that the above i ...
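A small finite-dimensional sketch may make the bounding constant concrete. It assumes both spaces are $\mathbb{R}^2$ equipped with the max norm, in which case the smallest valid constant for a matrix is its largest absolute row sum; the matrix `A` and the helper names are hypothetical and chosen only for illustration.

```python
from typing import List, Sequence


def max_norm(v: Sequence[float]) -> float:
    """Max norm of a vector: the largest absolute entry."""
    return max(abs(x) for x in v)


def apply(matrix: Sequence[Sequence[float]], v: Sequence[float]) -> List[float]:
    """Matrix-vector product A v."""
    return [sum(a * x for a, x in zip(row, v)) for row in matrix]


def operator_norm_max(matrix: Sequence[Sequence[float]]) -> float:
    """Operator norm induced by the max norm on both spaces.

    For this particular choice of norms it equals the largest absolute row sum.
    """
    return max(sum(abs(a) for a in row) for row in matrix)


# Hypothetical example matrix.
A = [[1.0, -2.0], [3.0, 0.5]]
c = operator_norm_max(A)  # 3.5, the largest absolute row sum

# Check the inequality ||A v|| <= c ||v|| on a few sample vectors.
for v in ([1.0, 1.0], [1.0, -1.0], [0.25, -4.0]):
    assert max_norm(apply(A, v)) <= c * max_norm(v)
```

The vector `[1.0, 1.0]` attains the bound here, which shows that no smaller constant would work for this matrix under the max norm.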

Generative Model

In statistical classification, two main approaches are called the generative approach and the discriminative approach. These compute classifiers by different approaches, differing in the degree of statistical modelling. Terminology is inconsistent, but three major types can be distinguished:

1. A generative model is a statistical model of the joint probability distribution $P(X, Y)$ on a given observable variable $X$ and target variable $Y$ ("Generative classifiers learn a model of the joint probability, $p(x, y)$, of the inputs $x$ and the label $y$, and make their predictions by using Bayes rules to calculate $p(y \mid x)$, and then picking the most likely label $y$.");
2. A discriminative model is a model of the conditional probability $P(Y \mid X = x)$ of the target $Y$, given an observation $x$; and
3. Classifiers computed without using a probability model are also referred to loosely as "discriminative".

The distinction between these last two classes is not cons ...
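The generative route from a joint distribution to a prediction can be sketched as follows. The joint table `JOINT`, its feature values ("short", "long") and labels ("spam", "ham") are invented purely for illustration; only the procedure (condition the joint on the observation, then pick the most likely label) follows the description above.

```python
from typing import Dict, Tuple

# Hypothetical joint distribution P(X, Y) over a feature X and a label Y.
JOINT: Dict[Tuple[str, str], float] = {
    ("short", "spam"): 0.30,
    ("short", "ham"): 0.10,
    ("long", "spam"): 0.15,
    ("long", "ham"): 0.45,
}


def posterior(x: str) -> Dict[str, float]:
    """P(Y | X = x), obtained from the joint as P(x, y) / P(x)."""
    evidence = sum(p for (xi, _), p in JOINT.items() if xi == x)
    return {y: p / evidence for (xi, y), p in JOINT.items() if xi == x}


def predict(x: str) -> str:
    """Pick the most likely label under the posterior."""
    post = posterior(x)
    return max(post, key=post.get)


if __name__ == "__main__":
    for x in ("short", "long"):
        print(x, posterior(x), predict(x))
```

A discriminative model, by contrast, would represent only the conditional table returned by `posterior` and would never store the joint.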

Log Likelihood

The likelihood function (often simply called the likelihood) represents the probability of random variable realizations conditional on particular values of the statistical parameters. Thus, when evaluated on a given sample, the likelihood function indicates which parameter values are more *likely* than others, in the sense that they would have made the observed data more probable. Consequently, the likelihood is often written as $\mathcal{L}(\theta \mid X)$ instead of $P(X \mid \theta)$, to emphasize that it is to be understood as a function of the parameters $\theta$ instead of the random variable $X$. In maximum likelihood estimation, the arg max of the likelihood function serves as a point estimate for $\theta$, while local curvature (approximated by the likelihood's Hessian matrix) indicates the estimate's precision. Meanwhile in Bayesian statistics, parameter estimates are derived from the converse o ...
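As a minimal sketch of the arg max idea, the following evaluates a log-likelihood over a grid of parameter values. It assumes independent Bernoulli observations (a modelling choice made here for illustration, not taken from the excerpt); the data and the grid resolution are hypothetical.

```python
import math
from typing import Sequence


def log_likelihood(theta: float, data: Sequence[int]) -> float:
    """Log-likelihood of independent Bernoulli(theta) observations (0 or 1)."""
    return sum(math.log(theta) if x == 1 else math.log(1.0 - theta) for x in data)


# Hypothetical sample: six successes out of eight trials.
data = [1, 0, 1, 1, 0, 1, 1, 1]

# Evaluate the log-likelihood on a grid of candidate parameters in (0, 1)
# and take the arg max as the point estimate.
grid = [i / 100 for i in range(1, 100)]
theta_hat = max(grid, key=lambda t: log_likelihood(t, data))

if __name__ == "__main__":
    print(theta_hat)
```

For this model the maximizer coincides with the sample mean (6/8), so the grid search is only a transparent stand-in for the closed-form estimate.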

Lower Bound

In mathematics, particularly in order theory, an upper bound or majorant of a subset $S$ of some preordered set $(K, \leq)$ is an element of $K$ that is greater than or equal to every element of $S$. Dually, a lower bound or minorant of $S$ is defined to be an element of $K$ that is less than or equal to every element of $S$. A set with an upper (respectively, lower) bound is said to be bounded from above or majorized (respectively bounded from below or minorized) by that bound. The terms bounded above (bounded below) are also used in the mathematical literature for sets that have upper (respectively lower) bounds. Examples For example, is a lower bound for the set (as a subset of the integers or of the real numbers, etc.), and so is . On the other hand, is not a lower bound for since it is not smaller than every element in . The set has as both an upper bound and a lower bound; all other numbers are either an upper bound or a lower bound for that . Every subset of the natural numbers has a lowe ...

Restricted Boltzmann Machine

A restricted Boltzmann machine (RBM) is a generative stochastic artificial neural network that can learn a probability distribution over its set of inputs. RBMs were initially invented under the name Harmonium by Paul Smolensky in 1986, and rose to prominence after Geoffrey Hinton and collaborators invented fast learning algorithms for them in the mid-2000s. RBMs have found applications in dimensionality reduction, classification, collaborative filtering, feature learning, topic modelling (Ruslan Salakhutdinov and Geoffrey Hinton (2010), "Replicated softmax: an undirected topic model", *Neural Information Processing Systems* 23), and even many-body quantum mechanics. They can be trained in either supervised or unsupervised ways, depending on the task. As their name implies, RBMs are a variant of Boltzmann machines, with the restriction that their neurons must form a bipartite graph: a pair of nodes from each of the two groups of units (commonly referred to as the "visible" and "hid ...

Latent Variable

In statistics, latent variables (from Latin: present participle of *lateo*, “lie hidden”) are variables that can only be inferred indirectly through a mathematical model from other observable variables that can be directly observed or measured. Such *latent variable models* are used in many disciplines, including political science, demography, engineering, medicine, ecology, physics, machine learning/artificial intelligence, bioinformatics, chemometrics, natural language processing, management and the social sciences. Latent variables may correspond to aspects of physical reality. These could in principle be measured, but may not be for practical reasons. In this situation, the term *hidden variables* is commonly used (reflecting the fact that the variables are meaningful, but not observable). Other latent variables correspond to abstract concepts, like categories, behavioral or mental states, or data structures. The terms *hypothetical variables* or *hypothetical ...

Deep Belief Network

In machine learning, a deep belief network (DBN) is a generative graphical model, or alternatively a class of deep neural network, composed of multiple layers of latent variables ("hidden units"), with connections between the layers but not between units within each layer. When trained on a set of examples without supervision, a DBN can learn to probabilistically reconstruct its inputs. The layers then act as feature detectors. After this learning step, a DBN can be further trained with supervision to perform classification. DBNs can be viewed as a composition of simple, unsupervised networks such as restricted Boltzmann machines (RBMs) or autoencoders, where each sub-network's hidden layer serves as the visible layer for the next. An RBM is an undirected, generative energy-based model with a "visible" input layer and a hidden layer and connections between but not within layers. This composition leads to a fast, layer-by-layer unsupervised training procedure, where contrastiv ...

Feature Detection (Nervous System)

Feature detection is a process by which the nervous system sorts or filters complex natural stimuli in order to extract behaviorally relevant cues that have a high probability of being associated with important objects or organisms in their environment, as opposed to irrelevant background or noise. *Feature detectors* are individual neurons, or groups of neurons, in the brain which code for perceptually significant stimuli. Early in the sensory pathway feature detectors tend to have simple properties; later they become more and more complex as the features to which they respond become more and more specific. For example, simple cells in the visual cortex of the domestic cat (*Felis catus*) respond to edges, a feature which is more likely to occur in objects and organisms in the environment. By contrast, the background of a natural visual environment tends to be noisy, emphasizing high spatial frequencies but lacking in extended edges. Responding selectively to an extended ...

Unsupervised Learning

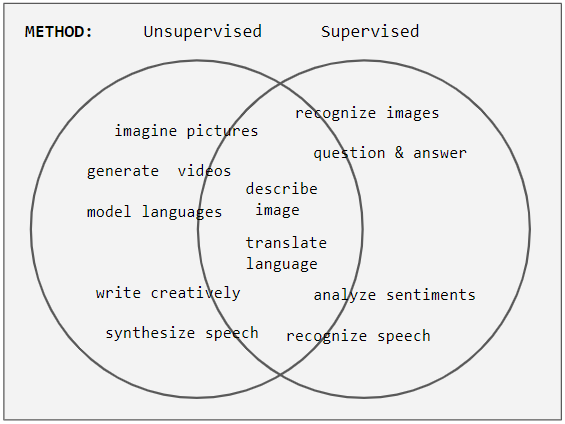

Unsupervised learning is a type of algorithm that learns patterns from untagged data. The hope is that through mimicry, which is an important mode of learning in people, the machine is forced to build a concise representation of its world and then generate imaginative content from it. In contrast to supervised learning, where data is tagged by an expert (e.g. tagged as a "ball" or "fish"), unsupervised methods exhibit self-organization that captures patterns as probability densities or a combination of neural feature preferences encoded in the machine's weights and activations. The other levels in the supervision spectrum are reinforcement learning, where the machine is given only a numerical performance score as guidance, and semi-supervised learning, where a small portion of the data is tagged. Neural networks: tasks vs. methods. Neural network tasks are often categorized as discriminative (recognition) or generative (imagination). Often but not always, discriminative tas ...

Jürgen Schmidhuber

Jürgen Schmidhuber (born 17 January 1963) is a German computer scientist most noted for his work in the field of artificial intelligence, deep learning and artificial neural networks. He is a co-director of the Dalle Molle Institute for Artificial Intelligence Research in Lugano, in Ticino in southern Switzerland. According to Google Scholar, from 2016 to 2021 he received more than 100,000 scientific citations. He has been referred to as "father of modern AI," "father of AI," "dad of mature AI," "Papa" of famous AI products, "Godfather," and "father of deep learning." (Schmidhuber himself, however, has called Alexey Grigorevich Ivakhnenko the "father of deep learning.") Schmidhuber completed his undergraduate (1987) and PhD (1991) studies at the Technical University of Munich in Munich, Germany. His PhD advisors were Wilfried Brauer and Klaus Schulten. He taught there from 2004 until 2009 when he became a professor of artificial intelligence at the Università della Sviz ...

Batch Normalization

Batch normalization (also known as batch norm) is a method used to make training of artificial neural networks faster and more stable through normalization of the layers' inputs by re-centering and re-scaling. It was proposed by Sergey Ioffe and Christian Szegedy in 2015. While the effect of batch normalization is evident, the reasons behind its effectiveness remain under discussion. It was believed that it can mitigate the problem of *internal covariate shift*, where parameter initialization and changes in the distribution of the inputs of each layer affect the learning rate of the network. Recently, some scholars have argued that batch normalization does not reduce internal covariate shift, but rather smooths the objective function, which in turn improves the performance. However, at initialization, batch normalization in fact induces severe gradient explosion in deep networks, which is only alleviated by skip connections in residual networks. Others maintain that batch nor ...
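The re-centering and re-scaling step can be sketched for a single feature over one mini-batch. This is a simplified illustration only: the function name `batch_norm`, the default `eps`, and the scalar `gamma` and `beta` parameters are assumptions not stated in the excerpt, and running statistics for inference are deliberately left out.

```python
import math
from typing import List, Sequence


def batch_norm(
    batch: Sequence[float],
    gamma: float = 1.0,  # assumed scale parameter (learned in practice)
    beta: float = 0.0,   # assumed shift parameter (learned in practice)
    eps: float = 1e-5,   # assumed small constant for numerical stability
) -> List[float]:
    """Normalize one feature across a mini-batch, then scale and shift."""
    mean = sum(batch) / len(batch)                          # re-centering statistic
    var = sum((x - mean) ** 2 for x in batch) / len(batch)  # re-scaling statistic
    return [gamma * (x - mean) / math.sqrt(var + eps) + beta for x in batch]


if __name__ == "__main__":
    # Hypothetical activations of one unit over a mini-batch of four examples.
    print(batch_norm([2.0, 4.0, 6.0, 8.0]))
```

With the default `gamma` and `beta`, the output has approximately zero mean and unit variance regardless of the scale of the incoming activations.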