
Universal Approximation Theorem

In the mathematical theory of artificial neural networks, universal approximation theorems are results that establish the density of an algorithmically generated class of functions within a given function space of interest. Typically, these results concern the approximation capabilities of the feedforward architecture on the space of continuous functions between two Euclidean spaces, and the approximation is with respect to the compact convergence topology. However, there are also a variety of results between non-Euclidean spaces and other commonly used architectures and, more generally, algorithmically generated sets of functions, such as the convolutional neural network (CNN) architecture, radial basis functions, or neural networks with specific properties. Most universal approximation theorems fall into two classes. The first quantifies the approximation capabilities of neural networks with an arbitrary number of artificial neurons (the "arbitrary width" case) and the ...
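
As a rough illustration of the arbitrary-width case, the sketch below builds a one-hidden-layer ReLU network that linearly interpolates a target function at evenly spaced knots, so that adding hidden units shrinks the worst-case error on a compact interval. The function names, the target `x * x`, and the interval `[0, 1]` are all assumptions chosen for the example; this is one simple construction, not the proof technique of any particular theorem.

```python
def relu(x):
    """Rectifier: max(0, x)."""
    return max(0.0, x)


def build_relu_interpolant(target, a, b, width):
    """Return g(x) = target(a) + sum_i c_i * relu(x - knot_i) with `width` hidden units.

    g is piecewise linear and agrees with `target` at the evenly spaced knots.
    """
    step = (b - a) / width
    knots = [a + i * step for i in range(width)]
    # Slope of the interpolant on each interval [knot_i, knot_i + step].
    slopes = [(target(k + step) - target(k)) / step for k in knots]
    # Each hidden unit contributes the change in slope at its knot.
    coeffs = [slopes[0]] + [slopes[i] - slopes[i - 1] for i in range(1, width)]
    bias = target(a)

    def network(x):
        return bias + sum(c * relu(x - k) for c, k in zip(coeffs, knots))

    return network


def max_error(target, network, a, b, samples=1000):
    """Largest absolute error on an evenly spaced sample of [a, b]."""
    points = [a + (b - a) * i / samples for i in range(samples + 1)]
    return max(abs(target(x) - network(x)) for x in points)


def square(x):
    return x * x


# Compare a narrow and a wide network on the compact interval [0, 1].
error_narrow = max_error(square, build_relu_interpolant(square, 0.0, 1.0, 4), 0.0, 1.0)
error_wide = max_error(square, build_relu_interpolant(square, 0.0, 1.0, 64), 0.0, 1.0)
```

Here the error is measured in the uniform norm on a compact set, which matches the compact convergence topology mentioned above; the wider network should give the smaller error.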

Mathematics

Mathematics is an area of knowledge that includes the topics of numbers, formulas and related structures, shapes and the spaces in which they are contained, and quantities and their changes. These topics are represented in modern mathematics with the major subdisciplines of number theory, algebra, geometry, and analysis, respectively. There is no general consensus among mathematicians about a common definition for their academic discipline. Most mathematical activity involves the discovery of properties of abstract objects and the use of pure reason to prove them. These objects consist of either abstractions from nature or, in modern mathematics, entities that are stipulated to have certain properties, called axioms. A proof consists of a succession of applications of deductive rules to already established results. These results include previously proved theorems, axioms, and, in the case of abstraction from nature, some basic properties that are considered true starting points of ...

ReLU

In the context of artificial neural networks, the rectifier or ReLU (rectified linear unit) activation function is an activation function defined as the positive part of its argument: f(x) = x^+ = \max(0, x), where x is the input to a neuron. This is also known as a ramp function and is analogous to half-wave rectification in electrical engineering. This activation function first appeared in the context of visual feature extraction in hierarchical neural networks in the late 1960s. It was later argued that it has strong biological motivations and mathematical justifications. In 2011 it was found to enable better training of deeper networks than the activation functions widely used before then, e.g., the logistic sigmoid (which is inspired by probability theory; see logistic regression) and its more practical counterpart, the hyperbolic tangent. The rectifier is the most popular activation function for deep neural networks. Rectified linear unit ...
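
The definition f(x) = \max(0, x) translates almost directly into code. The following is a minimal sketch in plain Python; the function name `relu` and the sample inputs are chosen for illustration only.

```python
def relu(x: float) -> float:
    """Rectifier: the positive part of x, i.e. max(0, x)."""
    return max(0.0, x)


# Negative inputs are clipped to zero; non-negative inputs pass through unchanged.
outputs = [relu(v) for v in (-2.0, -0.5, 0.0, 1.5)]
```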

Derivative

In mathematics, the derivative of a function of a real variable measures the sensitivity to change of the function value (output value) with respect to a change in its argument (input value). Derivatives are a fundamental tool of calculus. For example, the derivative of the position of a moving object with respect to time is the object's velocity: this measures how quickly the position of the object changes when time advances. The derivative of a function of a single variable at a chosen input value, when it exists, is the slope of the tangent line to the graph of the function at that point. The tangent line is the best linear approximation of the function near that input value. For this reason, the derivative is often described as the "instantaneous rate of change", the ratio of the instantaneous change in the dependent variable to that of the independent variable. Derivatives can be generalized to functions of several real variables. In this generalization, the derivativ ...
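
The position-to-velocity example can be checked numerically. The sketch below approximates a derivative with a central difference; the step size `h` and the free-fall position function 4.9 t^2 are assumptions made for the example and do not come from the text.

```python
def central_difference(f, x, h=1e-5):
    """Approximate f'(x) by the slope of a short secant line centred at x."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def position(t):
    """Hypothetical position in metres of an object in free fall from rest."""
    return 4.9 * t * t


# The exact derivative is 9.8 * t, so the velocity at t = 2 s should be close to 19.6 m/s.
velocity_at_2s = central_difference(position, 2.0)
```

The secant slope stands in for the tangent slope described above; shrinking `h` improves the approximation until floating-point rounding starts to dominate.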

Differentiable Function

In mathematics, a differentiable function of one real variable is a function whose derivative exists at each point in its domain. In other words, the graph of a differentiable function has a non-vertical tangent line at each interior point in its domain. A differentiable function is smooth (the function is locally well approximated as a linear function at each interior point) and does not contain any break, angle, or cusp. If x_0 is an interior point in the domain of a function f, then f is said to be differentiable at x_0 if the derivative f'(x_0) exists. In other words, the graph of f has a non-vertical tangent line at the point (x_0, f(x_0)). f is said to be differentiable on U if it is differentiable at every point of U. f is said to be continuously differentiable if its derivative is also a continuous function over the domain of the function f. Generally speaking, f is said to be of class C^k if its first k derivatives f'(x), f''(x), \ldots, f^{(k)}(x) exist and are continuous over the domain of the func ...

Continuous Function

In mathematics, a continuous function is a function such that a continuous variation (that is, a change without jump) of the argument induces a continuous variation of the value of the function. This means that there are no abrupt changes in value, known as discontinuities. More precisely, a function is continuous if arbitrarily small changes in its value can be assured by restricting to sufficiently small changes of its argument. A discontinuous function is a function that is not continuous. Up until the 19th century, mathematicians largely relied on intuitive notions of continuity, and considered only continuous functions. The epsilon–delta definition of a limit was introduced to formalize the definition of continuity. Continuity is one of the core concepts of calculus and mathematical analysis, where arguments and values of functions are real and complex numbers. The concept has been generalized to functions between metric spaces and between topological spaces. The latter are the mo ...
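
For a real-valued function, the "arbitrarily small changes" wording corresponds to the standard epsilon–delta condition. The notation below (a function f with domain D and a point c in D) is assumed for illustration and does not appear in the summary above.

```latex
f \text{ is continuous at } c \in D
\iff
\forall \varepsilon > 0 \;\; \exists \delta > 0 \;\; \forall x \in D :
\quad |x - c| < \delta \implies |f(x) - f(c)| < \varepsilon .
```

Here \varepsilon bounds the change in the value and \delta bounds the change in the argument; f is continuous if the condition holds at every c in D.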

Compact Set

In mathematics, specifically general topology, compactness is a property that seeks to generalize the notion of a closed and bounded subset of Euclidean space by making precise the idea of a space having no "punctures" or "missing endpoints", i.e. that the space not exclude any limiting values of points. For example, the open interval (0,1) would not be compact because it excludes the limiting values of 0 and 1, whereas the closed interval [0,1] would be compact. Similarly, the space of rational numbers \mathbb{Q} is not compact, because it has infinitely many "punctures" corresponding to the irrational numbers, and the space of real numbers \mathbb{R} is not compact either, because it excludes the two limiting values +\infty and -\infty. However, the extended real number line would be compact, since it contains both infinities. There are many ways to make this heuristic notion precise. These ways usually agree in a metric space, but may not be equivalent in other topologic ...

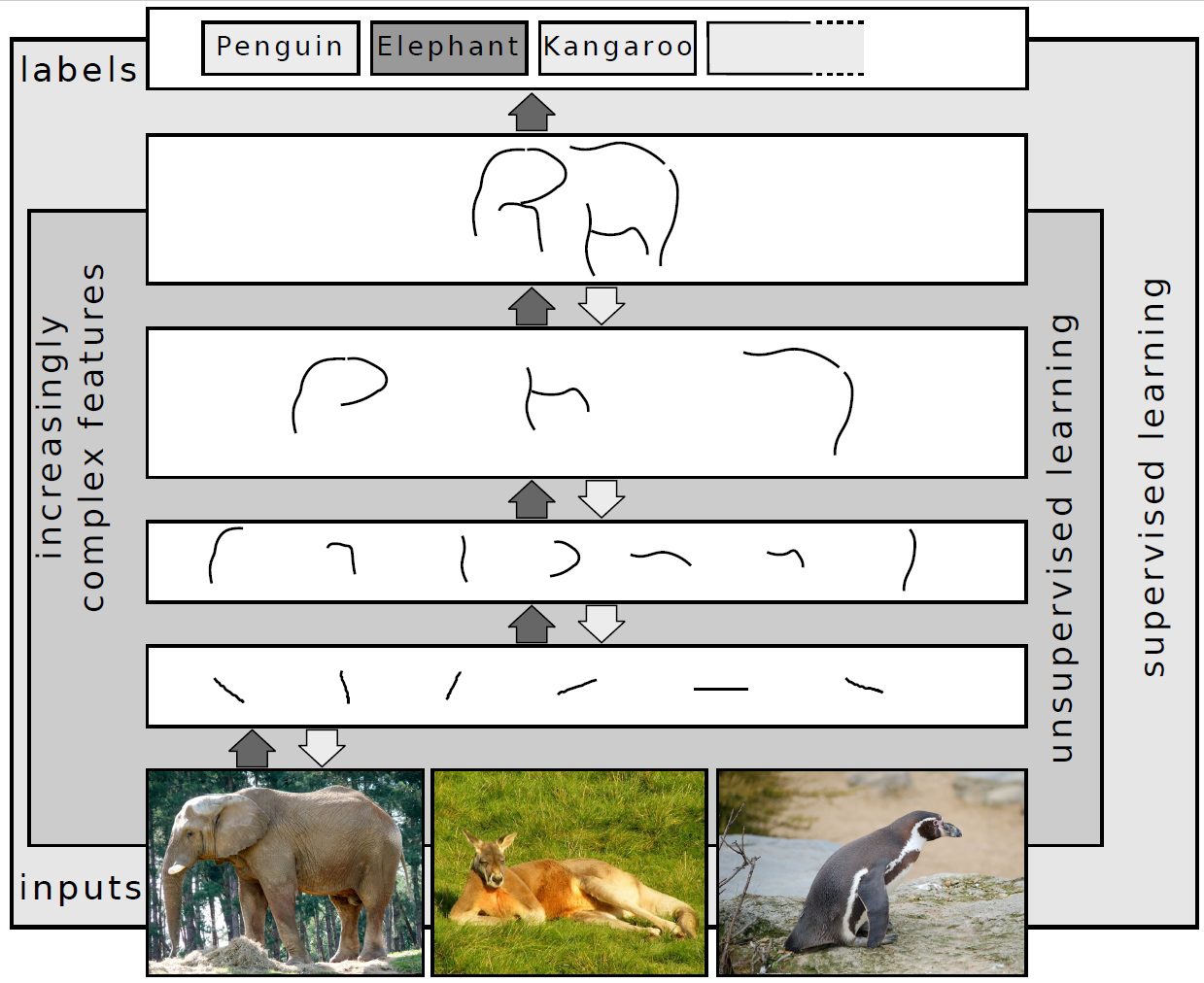

Deep Learning

Deep learning (also known as deep structured learning) is part of a broader family of machine learning methods based on artificial neural networks with representation learning. Learning can be supervised, semi-supervised or unsupervised. Deep-learning architectures such as deep neural networks, deep belief networks, deep reinforcement learning, recurrent neural networks, convolutional neural networks and Transformers have been applied to fields including computer vision, speech recognition, natural language processing, machine translation, bioinformatics, drug design, medical image analysis, climate science, material inspection and board game programs, where they have produced results comparable to and in some cases surpassing human expert performance. Artificial neural networks (ANNs) were inspired by information processing and distributed communication nodes in biological systems. ANNs have various differences from biological brains. Specifically, artificial ...

Affine Transformation

In Euclidean geometry, an affine transformation or affinity (from the Latin, affinis, "connected with") is a geometric transformation that preserves lines and parallelism, but not necessarily Euclidean distances and angles. More generally, an affine transformation is an automorphism of an affine space (Euclidean spaces are specific affine spaces), that is, a function which maps an affine space onto itself while preserving both the dimension of any affine subspaces (meaning that it sends points to points, lines to lines, planes to planes, and so on) and the ratios of the lengths of parallel line segments. Consequently, sets of parallel affine subspaces remain parallel after an affine transformation. An affine transformation does not necessarily preserve angles between lines or distances between points, though it does preserve ratios of distances between points lying on a straight line. If X is the point set of an affine space, then every affine transformation on X can be repre ...
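
The preservation of ratios along a line can be seen in a small numerical check. The sketch below assumes the usual coordinate form of an affine map of the plane, p -> A p + b with A invertible; the specific matrix `A`, offset `b`, and points are hypothetical values picked for the example.

```python
def apply_affine(matrix, offset, point):
    """Map a 2D point p to A p + b."""
    (a11, a12), (a21, a22) = matrix
    x, y = point
    return (a11 * x + a12 * y + offset[0], a21 * x + a22 * y + offset[1])


A = ((2.0, 1.0), (0.0, 3.0))  # invertible linear part (determinant 6)
b = (5.0, -1.0)               # translation part

p, q = (0.0, 0.0), (4.0, 2.0)
midpoint = ((p[0] + q[0]) / 2, (p[1] + q[1]) / 2)

image_p = apply_affine(A, b, p)
image_q = apply_affine(A, b, q)
image_mid = apply_affine(A, b, midpoint)

# The image of the midpoint is the midpoint of the images, even though
# the distance between p and q is not preserved.
midpoint_of_images = ((image_p[0] + image_q[0]) / 2, (image_p[1] + image_q[1]) / 2)
```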

Rectifier (Neural Networks)

In the context of artificial neural networks, the rectifier or ReLU (rectified linear unit) activation function is an activation function defined as the positive part of its argument: f(x) = x^+ = \max(0, x), where x is the input to a neuron. This is also known as a ramp function and is analogous to half-wave rectification in electrical engineering. This activation function first appeared in the context of visual feature extraction in hierarchical neural networks in the late 1960s. It was later argued that it has strong biological motivations and mathematical justifications. In 2011 it was found to enable better training of deeper networks than the activation functions widely used before then, e.g., the logistic sigmoid (which is inspired by probability theory; see logistic regression) and its more practical counterpart, the hyperbolic tangent. The rectifier is the most popular activation function for deep neural networks. Rectified linear uni ...

Fully Connected Network

Network topology is the arrangement of the elements (links, nodes, etc.) of a communication network. Network topology can be used to define or describe the arrangement of various types of telecommunication networks, including command and control radio networks, industrial fieldbusses and computer networks. Network topology is the topological structure of a network and may be depicted physically or logically. It is an application of graph theory wherein communicating devices are modeled as nodes and the connections between the devices are modeled as links or lines between the nodes. Physical topology is the placement of the various components of a network (e.g., device location and cable installation), while logical topology illustrates how data flows within a network. Distances between nodes, physical interconnections, transmission rates, or signal types may differ between two different networks, yet their logical topologies may be identical. A network’s physical topology ...

Bochner Integral

In mathematics, the Bochner integral, named for Salomon Bochner, extends the definition of the Lebesgue integral to functions that take values in a Banach space, as the limit of integrals of simple functions. Definition: Let (X, \Sigma, \mu) be a measure space, and B be a Banach space. The Bochner integral of a function f : X \to B is defined in much the same way as the Lebesgue integral. First, define a simple function to be any finite sum of the form s(x) = \sum_{i=1}^n \chi_{E_i}(x) b_i where the E_i are disjoint members of the \sigma-algebra \Sigma, the b_i are distinct elements of B, and \chi_E is the characteristic function of E. If \mu\left(E_i\right) is finite whenever b_i \neq 0, then the simple function is integrable, and the integral is then defined by \int_X \left[\sum_{i=1}^n \chi_{E_i}(x) b_i\right] \, d\mu = \sum_{i=1}^n \mu(E_i) b_i exactly as it is for the ordinary Lebesgue integral. A measurable function f : X \to B is Bochner integrable if there exists a sequence of integrable simple functions s ...
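
The integral of a simple function is just a weighted sum of vectors, which is easy to compute directly. The sketch below takes B to be the plane with real coordinates (a finite-dimensional Banach space) and represents each term by the pair (\mu(E_i), b_i); the helper name and the example sets are assumptions for illustration.

```python
def integrate_simple_function(terms):
    """Return sum_i mu(E_i) * b_i for terms given as (measure_of_E_i, b_i).

    Each b_i is a tuple of floats, i.e. a vector in R^n.
    """
    dimension = len(terms[0][1])
    total = [0.0] * dimension
    for measure, value in terms:
        for j in range(dimension):
            total[j] += measure * value[j]
    return tuple(total)


# s = chi_[0,1) * (1, 0) + chi_[1,3) * (0, 2), with Lebesgue measure on the line,
# so mu([0,1)) = 1 and mu([1,3)) = 2.
terms = [(1.0, (1.0, 0.0)), (2.0, (0.0, 2.0))]
integral = integrate_simple_function(terms)
```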

1710

In the Swedish calendar it was a common year starting on Saturday, one day ahead of the Julian and ten days behind the Gregorian calendar.

Events, January–March:

* January 1 – In Prussia, Cölln is merged with Alt-Berlin by Frederick I to form Berlin.
* January 4 – Robert Balfour, 5th Lord Balfour of Burleigh, two days before he is due to be executed for murder, escapes from the Edinburgh Tolbooth by exchanging clothes with his sister.
* February 17 – Mauritius, a Dutch colony since 1638, is abandoned by the Dutch.
* February 28 (Swedish calendar) – Battle of Helsingborg: Fourteen thousand Danish invaders, under Jørgen Rantzau, are decisively defeated by an equally large Swedish army, under Magnus Stenbock.
* March 1 – The Sacheverell riots start in London with an attack on an elegant Presbyterian meeting-house in Lincoln's Inn Fields, followed by riots through the West End of London.
* March 6 – The ancient Roman Pillar ...