
Test–retest Reliability

Repeatability or test–retest reliability is the closeness of the agreement between the results of successive measurements of the same measure, when carried out under the same conditions of measurement. In other words, the measurements are taken by a single person or instrument on the same item, under the same conditions, and in a short period of time. A less-than-perfect test–retest reliability causes test–retest variability. Such variability can be caused by, for example, intra-individual variability and inter-observer variability. A measurement may be said to be repeatable when this variation is smaller than a pre-determined acceptance criterion. Test–retest variability is used in practice, for example, in medical monitoring of conditions. In these situations, there is often a predetermined "critical difference", and for differences in monitored values that are smaller than this critical difference, the possibility of variability as a sole cause of the differenc ...
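As a minimal sketch of how test–retest variability can be quantified, the Python snippet below computes a within-subject standard deviation from (test, retest) pairs and derives a repeatability coefficient from it. The function names, the example values and the 1.96 multiplier (a roughly 95% convention that assumes normally distributed measurement error) are illustrative assumptions, not something the text above prescribes.

```python
import math


def within_subject_sd(pairs):
    """Within-subject SD from (test, retest) pairs: sqrt(sum(d^2) / (2n))."""
    if not pairs:
        raise ValueError("need at least one (test, retest) pair")
    sum_sq_diff = sum((first - second) ** 2 for first, second in pairs)
    return math.sqrt(sum_sq_diff / (2 * len(pairs)))


def repeatability_coefficient(pairs, z=1.96):
    """Difference expected to be exceeded by only ~5% of repeat pairs.

    Assumption: measurement errors are roughly normal, so the SD of a
    difference between two measurements is sqrt(2) times the within-subject SD.
    """
    return z * math.sqrt(2) * within_subject_sd(pairs)


def exceeds_critical_difference(previous, current, critical_difference):
    """True if the change is larger than the chosen critical difference."""
    return abs(current - previous) > critical_difference


# Hypothetical duplicate measurements of the same items.
pairs = [(10.0, 12.0), (20.0, 18.0)]
rc = repeatability_coefficient(pairs)
print(f"within-subject SD: {within_subject_sd(pairs):.3f}")
print(f"repeatability coefficient: {rc:.3f}")
print("change 5.0 -> 9.5 exceeds it:", exceeds_critical_difference(5.0, 9.5, rc))
```

A change smaller than the coefficient is compatible with measurement variability alone; a larger change suggests that something other than variability may be involved.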

Measurements

Measurement is the quantification of attributes of an object or event, which can be used to compare with other objects or events. In other words, measurement is a process of determining how large or small a physical quantity is as compared to a basic reference quantity of the same kind. The scope and application of measurement are dependent on the context and discipline. In natural sciences and engineering, measurements do not apply to nominal properties of objects or events, which is consistent with the guidelines of the International vocabulary of metrology published by the International Bureau of Weights and Measures. However, in other fields such as statistics as well as the social and behavioural sciences, measurements can have multiple levels, which would include nominal, ordinal, interval and ratio scales. Measurement is a cornerstone of trade, science, technology and quantitative research in many disciplines. Historically, many measurement systems existed fo ...

Accuracy And Precision

Accuracy and precision are two measures of observational error. Accuracy is how close a given set of measurements (observations or readings) are to their true value, while precision is how close the measurements are to each other. In other words, precision is a description of random errors, a measure of statistical variability. Accuracy has two definitions:

1. More commonly, it is a description of only systematic errors, a measure of statistical bias of a given measure of central tendency; low accuracy causes a difference between a result and a true value; ISO calls this trueness.
2. Alternatively, ISO defines accuracy as describing a combination of both types of observational error (random and systematic), so high accuracy requires both high precision and high trueness.

In the first, more common definition of "accuracy" above, the concept is independent of "precision", so a particular set of data can be said to be accurate, precise, both ...
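The distinction can be illustrated with a short Python sketch that estimates trueness as the bias of the mean and precision as the sample standard deviation of repeated readings. The function name and the readings are hypothetical, and using the mean and the sample SD is one common choice rather than the only one.

```python
import statistics


def trueness_and_precision(readings, true_value):
    """Return (bias, spread) for repeated readings of a known reference.

    bias   : mean of the readings minus the true value (systematic error)
    spread : sample standard deviation of the readings (random error)
    """
    bias = statistics.mean(readings) - true_value
    spread = statistics.stdev(readings)
    return bias, spread


# Hypothetical readings of a 10.0-unit reference standard.
centred_but_scattered = [9.0, 10.0, 11.0]   # no bias, noticeable spread
tight_but_offset = [12.0, 12.0, 12.0]       # biased, no spread

print(trueness_and_precision(centred_but_scattered, 10.0))
print(trueness_and_precision(tight_but_offset, 10.0))
```

Under the first definition, the first data set is accurate but not very precise, while the second is precise but not accurate.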

Statistical Reliability

In statistics, a consistent estimator or asymptotically consistent estimator is an estimator (a rule for computing estimates of a parameter θ₀) having the property that as the number of data points used increases indefinitely, the resulting sequence of estimates converges in probability to θ₀. This means that the distributions of the estimates become more and more concentrated near the true value of the parameter being estimated, so that the probability of the estimator being arbitrarily close to θ₀ converges to one. In practice one constructs an estimator as a function of an available sample of size n, and then imagines being able to keep collecting data and expanding the sample ad infinitum. In this way one would obtain a sequence of estimates indexed by n, and consistency is a property of what occurs as the sample size "grows to infinity". If the sequence of estimates can be mathematically shown to converge in probability to the true value ...
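A small simulation can make the idea concrete. The sketch below, written under illustrative assumptions (uniform data on [0, 1), the sample mean as the estimator, an arbitrary seed and arbitrary sample sizes), reports the absolute error of the estimate for growing n. A single run cannot prove consistency; it only illustrates the tendency of the error to shrink.

```python
import random

TRUE_MEAN = 0.5  # expected value of a uniform variable on [0, 1)


def sample_mean_errors(sizes=(10, 1_000, 100_000), seed=0):
    """Absolute error of the sample mean for each sample size in `sizes`."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    errors = {}
    for n in sizes:
        estimate = sum(rng.random() for _ in range(n)) / n
        errors[n] = abs(estimate - TRUE_MEAN)
    return errors


for n, err in sample_mean_errors().items():
    print(f"n = {n:>7}: |estimate - true mean| = {err:.5f}")
```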

Reproducibility

Reproducibility, also known as replicability and repeatability, is a major principle underpinning the scientific method. For the findings of a study to be reproducible means that results obtained by an experiment or an observational study or in a statistical analysis of a data set should be achieved again with a high degree of reliability when the study is replicated. There are different kinds of replication but typically replication studies involve different researchers using the same methodology. Only after one or several such successful replications should a result be recognized as scientific knowledge. With a narrower scope, reproducibility has been introduced in computational sciences: any results should be documented by making all data and code available in such a way that the computations can be executed again with identical results. In recent decades, there has been a rising concern that many published scientific results fail the test of reproducibility, evoking a re ...

Reliability (statistics)

In statistics and psychometrics, reliability is the overall consistency of a measure. A measure is said to have a high reliability if it produces similar results under consistent conditions: "It is the characteristic of a set of test scores that relates to the amount of random error from the measurement process that might be embedded in the scores. Scores that are highly reliable are precise, reproducible, and consistent from one testing occasion to another. That is, if the testing process were repeated with a group of test takers, essentially the same results would be obtained. Various kinds of reliability coefficients, with values ranging between 0.00 (much error) and 1.00 (no error), are usually used to indicate the amount of error in the scores." For example, measurements of people's height and weight are often extremely reliable. The Marketing Accountability Standards Board (MASB) endorses this definition as part of its ongoing Common Language: Marketing Activities and Metrics ...
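One simple coefficient of this kind is the correlation between scores from two testing occasions. The Python sketch below computes a Pearson correlation by hand; the function name and the scores are hypothetical, and other coefficients (for example intraclass correlations) are often preferred in practice. Note that a Pearson correlation can also be negative, unlike the 0.00 to 1.00 range quoted above.

```python
import math


def pearson_r(first, second):
    """Pearson correlation between two equally long score lists."""
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two lists of equal length with at least 2 scores")
    mean_first = sum(first) / len(first)
    mean_second = sum(second) / len(second)
    cov = sum((a - mean_first) * (b - mean_second) for a, b in zip(first, second))
    var_first = sum((a - mean_first) ** 2 for a in first)
    var_second = sum((b - mean_second) ** 2 for b in second)
    if var_first == 0 or var_second == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / math.sqrt(var_first * var_second)


# Hypothetical scores of four test takers on two occasions.
occasion_1 = [1.0, 2.0, 3.0, 4.0]
occasion_2 = [2.0, 4.0, 6.0, 8.0]
print(f"test-retest correlation: {pearson_r(occasion_1, occasion_2):.2f}")
```

The correlation only captures whether test takers keep their relative ordering and spacing; in the example every score doubled, yet the coefficient is still at its maximum.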

Monitoring (medicine)

In medicine, monitoring is the observation of a disease, condition or one or several medical parameters over time. It can be performed by continuously measuring certain parameters by using a medical monitor (for example, by continuously measuring vital signs by a bedside monitor), and/or by repeatedly performing medical tests (such as blood glucose monitoring with a glucose meter in people with diabetes mellitus). Transmitting data from a monitor to a distant monitoring station is known as telemetry or biotelemetry.

Classification by target parameter

Monitoring can be classified by the target of interest, including:

* Cardiac monitoring, which generally refers to continuous electrocardiography with assessment of the patient's condition relative to their cardiac rhythm. A small monitor worn by an ambulatory patient for this purpose is known as a Holter monitor. Cardiac monitoring can also involve cardiac output monitoring via an invasive Swan-Ganz catheter.
* Hemodyna ...

Carryover Effect

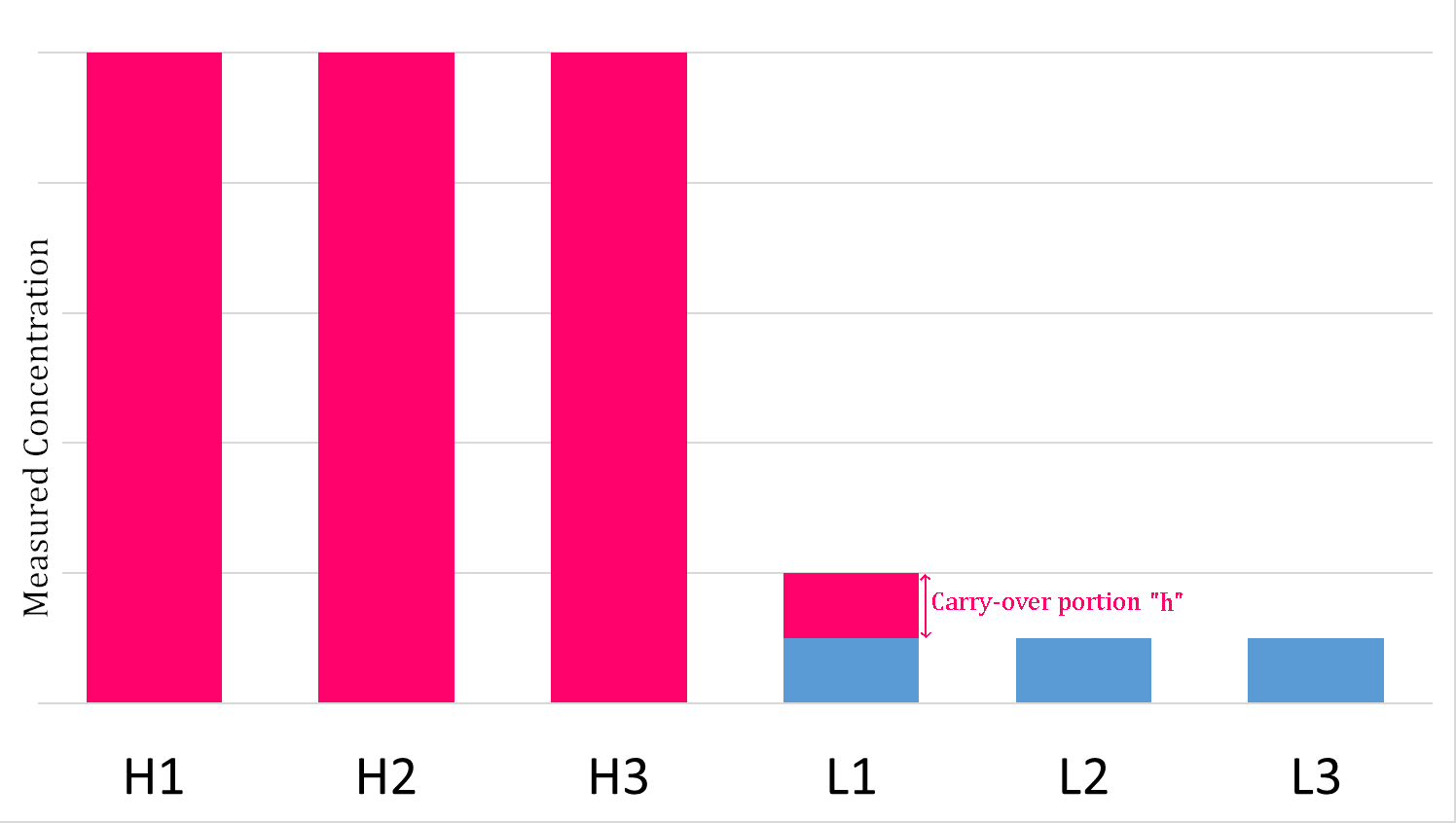

The carryover effect is a term used in clinical chemistry to describe the transfer of unwanted material from one container or mixture to another. It describes the influence of one sample upon the following one. It may be from a specimen, or a reagent, or even the washing medium. The significance of carryover is that even a small amount can lead to erroneous results.

Carryover effect in clinical laboratory

Carryover experiments are widely used for clinical chemistry and immunochemistry analyzers to evaluate and validate carryover effects. The pipetting and washing systems in an automated analyzer are designed to continuously cycle between the aspiration of patient specimens and cleaning. An obvious concern is a potential for carryover of analyte from one patient specimen into one or more following patient specimens, which can falsely increase or decrease the measured analyte concentration. Specimen carryover is typically addressed by judicious choice of probe material, probe de ...

Standard Deviation

In statistics, the standard deviation is a measure of the amount of variation or dispersion of a set of values. A low standard deviation indicates that the values tend to be close to the mean (also called the expected value) of the set, while a high standard deviation indicates that the values are spread out over a wider range. Standard deviation may be abbreviated SD, and is most commonly represented in mathematical texts and equations by the lowercase Greek letter σ (sigma), for the population standard deviation, or the Latin letter s, for the sample standard deviation. The standard deviation of a random variable, sample, statistical population, data set, or probability distribution is the square root of its variance. It is algebraically simpler, though in practice less robust, than the average absolute deviation. A useful property of the standard deviation is that, unlike the variance, it is expressed in the same unit as the data. The standard deviation o ...
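The two variants named above can be written out in a few lines of Python. This is a minimal sketch with hypothetical function names and data; the only difference between the two is the divisor (n for the population form σ, n − 1 for the sample form s), and Python's standard library offers the same calculations as `statistics.pstdev` and `statistics.stdev`.

```python
import math


def population_sd(values):
    """Population standard deviation (sigma): divide by n."""
    n = len(values)
    if n == 0:
        raise ValueError("need at least one value")
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    return math.sqrt(variance)  # SD is the square root of the variance


def sample_sd(values):
    """Sample standard deviation (s): divide by n - 1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two values")
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    return math.sqrt(variance)


data = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical values with mean 5
print(f"population SD: {population_sd(data):.3f}")
print(f"sample SD:     {sample_sd(data):.3f}")
```

Both results are in the same unit as the data, whereas the intermediate variance is in squared units.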