|

Source Coding Theorem

In information theory, Shannon's source coding theorem (or noiseless coding theorem) establishes the statistical limits to possible data compression for data whose source is an independent identically-distributed random variable, and the operational meaning of the Shannon entropy. Named after Claude Shannon, the source coding theorem shows that, in the limit, as the length of a stream of independent and identically-distributed random variable (i.i.d.) data tends to infinity, it is impossible to compress such data such that the code rate (average number of bits per symbol) is less than the Shannon entropy of the source, without it being virtually certain that information will be lost. However it is possible to get the code rate arbitrarily close to the Shannon entropy, with negligible probability of loss. The source coding theorem for symbol codes places an upper and a lower bound on the minimal possible expected length of codewords as a function of the entropy of the input w ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Information Theory

Information theory is the mathematical study of the quantification (science), quantification, Data storage, storage, and telecommunications, communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, Neuroscience, neurobiology, physics, and electrical engineering. A key measure in information theory is information entropy, entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a Fair coin, fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a dice, die (which has six equally likely outcomes). Some other important measu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Differential Entropy

Differential entropy (also referred to as continuous entropy) is a concept in information theory that began as an attempt by Claude Shannon to extend the idea of (Shannon) entropy (a measure of average surprisal) of a random variable, to continuous probability distributions. Unfortunately, Shannon did not derive this formula, and rather just assumed it was the correct continuous analogue of discrete entropy, but it is not. The actual continuous version of discrete entropy is the limiting density of discrete points (LDDP). Differential entropy (described here) is commonly encountered in the literature, but it is a limiting case of the LDDP, and one that loses its fundamental association with discrete entropy. In terms of measure theory, the differential entropy of a probability measure is the negative relative entropy from that measure to the Lebesgue measure, where the latter is treated as if it were a probability measure, despite being unnormalized. Definition Let X be a rand ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

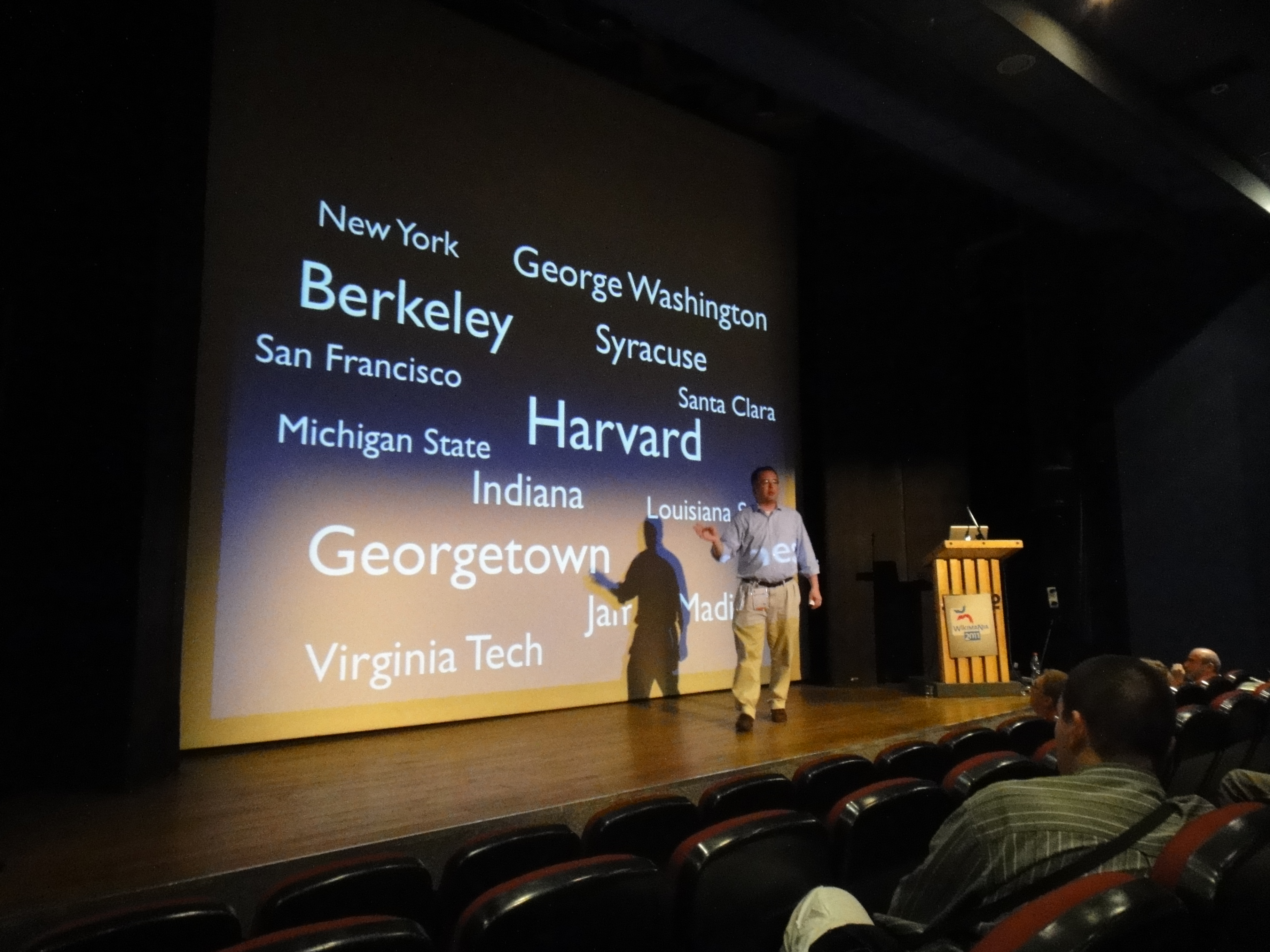

Presentation Layer Protocols

A presentation conveys information from a speaker to an audience. Presentations are typically demonstrations, introduction, lecture, or speech meant to inform, persuade, inspire, motivate, build goodwill, or present a new idea/product. Presentations usually require preparation, organization, Event management, event planning, writing, use of visual aids, dealing with stress, and answering questions. "The key elements of a presentation consists of presenter, audience, message, reaction and method to deliver speech for organizational success in an effective manner." Presentations are widely used in tertiary work settings such as accountants giving a detailed report of a company's financials or an entrepreneur pitching their venture idea to investors. The term can also be used for a formal or ritualized introduction or offering, as with the presentation of a debutante. Presentations in certain formats are also known as keynote address. Interactive presentations, in which the audience ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Data Compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding is done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, for error detection and correction or line coding, the means for mapping data onto a sig ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Coding Theory

Coding theory is the study of the properties of codes and their respective fitness for specific applications. Codes are used for data compression, cryptography, error detection and correction, data transmission and computer data storage, data storage. Codes are studied by various scientific disciplines—such as information theory, electrical engineering, mathematics, linguistics, and computer science—for the purpose of designing efficient and reliable data transmission methods. This typically involves the removal of redundancy and the correction or detection of errors in the transmitted data. There are four types of coding: # Data compression (or ''source coding'') # Error detection and correction, Error control (or ''channel coding'') # Cryptography, Cryptographic coding # Line code, Line coding Data compression attempts to remove unwanted redundancy from the data from a source in order to transmit it more efficiently. For example, DEFLATE data compression makes files small ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Bell System Technical Journal

The ''Bell Labs Technical Journal'' was the in-house scientific journal for scientists of Bell Labs, published yearly by the IEEE society. The journal was originally established as ''The Bell System Technical Journal'' (BSTJ) in New York by the American Telephone and Telegraph Company (AT&T) in 1922. It was published under this name until 1983, when the breakup of the Bell System placed various parts of the companies in the system into independent corporate entities. The journal was devoted to the scientific fields and engineering disciplines practiced in the Bell System for improvements in the wide field of electrical communication. After the restructuring of Bell Labs in 1984, the journal was renamed to ''AT&T Bell Laboratories Technical Journal''. In 1985, it was published as the ''AT&T Technical Journal'' until 1996, when it was renamed to ''Bell Labs Technical Journal''. The journal was discontinued in 2020. The last managing editor was Charles Bahr. History The Bell System ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Noisy-channel Coding Theorem

In information theory, the noisy-channel coding theorem (sometimes Shannon's theorem or Shannon's limit), establishes that for any given degree of noise contamination of a communication channel, it is possible (in theory) to communicate discrete data (digital information) nearly error-free up to a computable maximum rate through the channel. This result was presented by Claude Shannon in 1948 and was based in part on earlier work and ideas of Harry Nyquist and Ralph Hartley. The Shannon limit or Shannon capacity of a communication channel refers to the maximum rate of error-free data that can theoretically be transferred over the channel if the link is subject to random data transmission errors, for a particular noise level. It was first described by Shannon (1948), and shortly after published in a book by Shannon and Warren Weaver entitled '' The Mathematical Theory of Communication'' (1949). This founded the modern discipline of information theory. Overview Stated by C ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Error Exponent

In information theory, the error exponent of a channel code or source code over the block length of the code is the rate at which the error probability decays exponentially with the block length of the code. Formally, it is defined as the limiting ratio of the negative logarithm of the error probability to the block length of the code for large block lengths. For example, if the probability of error P_ of a decoder drops as e^, where n is the block length, the error exponent is \alpha. In this example, \frac approaches \alpha for large n. Many of the information-theoretic theorems are of asymptotic nature, for example, the channel coding theorem states that for any rate less than the channel capacity, the probability of the error of the channel code can be made to go to zero as the block length goes to infinity. In practical situations, there are limitations to the delay of the communication and the block length must be finite. Therefore, it is important to study how the prob ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Channel Coding

In computing, telecommunication, information theory, and coding theory, forward error correction (FEC) or channel coding is a technique used for error control, controlling errors in data transmission over unreliable or noisy communication channels. The central idea is that the sender encodes the message in a Redundancy (information theory), redundant way, most often by using an error correction code, or error correcting code (ECC). The redundancy allows the receiver not only to error detection, detect errors that may occur anywhere in the message, but often to correct a limited number of errors. Therefore a reverse channel to request re-transmission may not be needed. The cost is a fixed, higher forward channel bandwidth. The American mathematician Richard Hamming pioneered this field in the 1940s and invented the first error-correcting code in 1950: the Hamming (7,4) code. FEC can be applied in situations where re-transmissions are costly or impossible, such as one-way communic ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Gibbs' Inequality

200px, Josiah Willard Gibbs In information theory, Gibbs' inequality is a statement about the information entropy of a discrete probability distribution. Several other bounds on the entropy of probability distributions are derived from Gibbs' inequality, including Fano's inequality. It was first presented by J. Willard Gibbs in the 19th century. Gibbs' inequality Suppose that P = \ and Q = \ are discrete probability distributions. Then : - \sum_^n p_i \log p_i \leq - \sum_^n p_i \log q_i with equality if and only if p_i = q_i for i = 1, \dots n. Put in words, the information entropy of a distribution P is less than or equal to its cross entropy with any other distribution Q. The difference between the two quantities is the Kullback–Leibler divergence or relative entropy, so the inequality can also be written: : D_(P\, Q) \equiv \sum_^n p_i \log \frac \geq 0. Note that the use of base-2 logarithms is optional, and allows one to refer to the quantity on each side o ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Asymptotic Equipartition Property

In information theory, the asymptotic equipartition property (AEP) is a general property of the output samples of a stochastic source. It is fundamental to the concept of typical set used in theories of data compression. Roughly speaking, the theorem states that although there are many series of results that may be produced by a random process, the one actually produced is most probably from a loosely defined set of outcomes that all have approximately the same chance of being the one actually realized. (This is a consequence of the law of large numbers and ergodic theory.) Although there are individual outcomes which have a higher probability than any outcome in this set, the vast number of outcomes in the set almost guarantees that the outcome will come from the set. One way of intuitively understanding the property is through Cramér's large deviation theorem, which states that the probability of a large deviation from mean decays exponentially with the number of samples. Suc ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |