Paired Difference Test

In statistics, a paired difference test is a type of location test used when comparing two sets of measurements to assess whether their population means differ. A paired difference test uses additional information about the sample that is not present in an ordinary unpaired testing situation, either to increase the statistical power or to reduce the effects of confounders. Specific methods for carrying out paired difference tests are, for normally distributed differences, the t-test (where the population standard deviation of the difference is not known) and the paired Z-test (where the population standard deviation of the difference is known), and, for differences that may not be normally distributed, the Wilcoxon signed-rank test. The most familiar example of a paired difference test occurs when subjects are measured before and after a treatment. Such a "repeated measures" test compares these measurements within subjects, rather than across sub ...
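
As a rough illustration of the t-test case described above, the sketch below computes the paired t statistic from the within-subject differences. The function name `paired_t_statistic` and the before/after numbers are hypothetical and chosen only for the example; a real analysis would also look up the p-value from the t distribution with the returned degrees of freedom.

```python
import math
import statistics


def paired_t_statistic(before, after):
    """Return (t, degrees_of_freedom) for the differences after - before."""
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    diffs = [a - b for b, a in zip(before, after)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two pairs")
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (divides by n - 1)
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    # The test works on the differences only, so between-subject
    # variation does not enter the standard error.
    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1


# Hypothetical measurements on the same four subjects.
before = [10, 12, 9, 11]
after = [12, 15, 10, 14]
t_stat, dof = paired_t_statistic(before, after)
print(f"t = {t_stat:.3f} with {dof} degrees of freedom")
```

Because only the differences are used, a subject who is consistently high (or low) on both measurements contributes no extra noise, which is the source of the power gain mentioned above.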

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling as ...

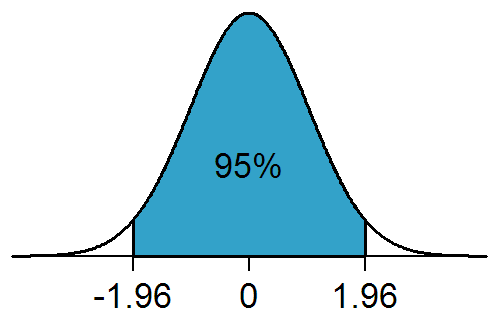

Two-tailed Test

In statistical significance testing, a one-tailed test and a two-tailed test are alternative ways of computing the statistical significance of a parameter inferred from a data set, in terms of a test statistic. A two-tailed test is appropriate if the estimated value may be greater or less than a certain range of values, for example, whether a test taker may score above or below a specific range of scores. This method is used for null hypothesis testing, and if the estimated value falls in the critical regions, the alternative hypothesis is accepted over the null hypothesis. A one-tailed test is appropriate if the estimated value may depart from the reference value in only one direction, left or right, but not both. An example can be whether a machine produces more than one-percent defective products. In this situation, if the estimated value falls in one of the one-sided critical regions, depending on the direction of interest (greater than or less than), the alternative hypothesis is a ...
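
To make the distinction concrete, here is a minimal sketch that turns a single test statistic into one-tailed and two-tailed p-values. It assumes the statistic follows a standard normal distribution under the null hypothesis (a z-test); that assumption, the helper names, and the value 1.96 are illustrative and not taken from the text above.

```python
import math


def normal_cdf(z):
    """Standard normal CDF, written with the complementary error function."""
    return 0.5 * math.erfc(-z / math.sqrt(2.0))


def tail_p_values(z):
    """Return the lower-tail, upper-tail and two-tailed p-values for z."""
    lower = normal_cdf(z)        # P(Z <= z): evidence for "less than"
    upper = normal_cdf(-z)       # P(Z >= z): evidence for "greater than"
    two_sided = min(1.0, 2.0 * min(lower, upper))  # departure in either direction
    return {"lower": lower, "upper": upper, "two_sided": two_sided}


p = tail_p_values(1.96)
print(p)
```

For a symmetric null distribution the two-tailed p-value is twice the smaller one-tailed value, so the same statistic can be significant in a one-tailed test and not in a two-tailed test at the same level.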

Sign Test

The sign test is a statistical method to test for consistent differences between pairs of observations, such as the weight of subjects before and after treatment. Given pairs of observations (such as weight pre- and post-treatment) for each subject, the sign test determines if one member of the pair (such as pre-treatment) tends to be greater than (or less than) the other member of the pair (such as post-treatment). The paired observations may be designated ''x'' and ''y''. For comparisons of paired observations (''x'', ''y''), the sign test is most useful if comparisons can only be expressed as ''x'' > ''y'', ''x'' = ''y'', or ''x'' < ''y''. ... Then let ''W'' be the number of pairs for which ''y''i – ''x''i > 0. Assuming that H0 is true, ''W'' follows a binomial distribution ''W'' ~ b(''m'', 0.5). Assumptions: Let ''Z''i = ''Y''i – ''X''i for ''i'' = 1, ... , ''n''. (1) The differences ''Z''i are assumed to be independent. (2) Each ''Z''i comes from the same continuous population. (3) The values ''X''i ...
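
The binomial statement above can be sketched in a few lines. This example assumes that W counts the pairs with y − x > 0, that tied pairs are dropped to leave m pairs, and that a two-sided exact p-value is wanted; the function name and the data are hypothetical.

```python
import math


def sign_test(x, y):
    """Return (w, m, p) for a two-sided exact sign test on paired data."""
    if len(x) != len(y):
        raise ValueError("paired samples must have the same length")
    diffs = [yi - xi for xi, yi in zip(x, y)]
    nonzero = [d for d in diffs if d != 0]   # ties carry no sign information
    m = len(nonzero)
    if m == 0:
        raise ValueError("every pair is tied; the test is undefined")
    w = sum(1 for d in nonzero if d > 0)     # pairs where y exceeds x

    def binom_cdf(k):
        # P(W <= k) when W ~ b(m, 0.5)
        return sum(math.comb(m, i) for i in range(k + 1)) / 2 ** m

    lower = binom_cdf(w)                 # P(W <= w)
    upper = 1.0 - binom_cdf(w - 1)       # P(W >= w)
    p = min(1.0, 2.0 * min(lower, upper))
    return w, m, p


# Hypothetical paired observations; the third pair is tied and is dropped.
x = [5, 6, 7, 8, 9, 10]
y = [6, 8, 7, 9, 11, 9]
w, m, p_value = sign_test(x, y)
print(w, m, p_value)
```

Only the sign of each difference is used, so the test applies even when the magnitudes of the differences are not meaningful.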

Pairwise Comparison

Pairwise comparison generally is any process of comparing entities in pairs to judge which entity of each pair is preferred, or has a greater amount of some quantitative property, or whether or not the two entities are identical. The method of pairwise comparison is used in the scientific study of preferences, attitudes, voting systems, social choice, public choice, requirements engineering and multiagent AI systems. In psychology literature, it is often referred to as paired comparison. Prominent psychometrician L. L. Thurstone first introduced a scientific approach to using pairwise comparisons for measurement in 1927, which he referred to as the law of comparative judgment. Thurstone linked this approach to psychophysical theory developed by Ernst Heinrich Weber and Gustav Fechner. Thurstone demonstrated that the method can be used to order items along a dimension such as preference or importance using an interval-type scale. Mathematician Ernst Zermelo (1929) first described ...

Paired Data

Scientific experiments often require comparing two (or more) sets of data. In some cases, the data sets are paired, in that there is an obvious and meaningful one-to-one correspondence between the data in the first set and the data in the second set. For example, paired data can arise from measuring a single set of individuals at different points in time. A clinical trial might record the blood pressure in a set of ''n'' patients before and after giving them a medicine. In this case the "before" and "after" data sets are paired, in that each patient has a "before" measurement and an "after" measurement, which are probably related. In contrast, another clinical trial might measure ''n'' patients before treatment and a different set of ''m'' patients after treatment; in that case, the "before" and "after" data are unpaired. Statistical tests used to compare sets of data have been designed for data sets that are either paired or unpaired, and it is important to use the correct te ...

Statistical Significance

In statistical hypothesis testing, a result has statistical significance when it is very unlikely to have occurred given the null hypothesis (simply by chance alone). More precisely, a study's defined significance level, denoted by $\alpha$, is the probability of the study rejecting the null hypothesis, given that the null hypothesis is true; and the ''p''-value of a result, $p$, is the probability of obtaining a result at least as extreme, given that the null hypothesis is true. The result is statistically significant, by the standards of the study, when $p \le \alpha$. The significance level for a study is chosen before data collection, and is typically set to 5% or much lower, depending on the field of study. In any experiment or observation that involves drawing a sample from a population, there is always the possibility that an observed effect would have occurred due to sampling error alone. But if the ''p''-value of an observed effect is less than (or equal to) the significanc ...

Null Hypothesis

In scientific research, the null hypothesis (often denoted ''H''0) is the claim that no difference or relationship exists between two sets of data or variables being analyzed. The null hypothesis is that any experimentally observed difference is due to chance alone, and an underlying causative relationship does not exist, hence the term "null". In addition to the null hypothesis, an alternative hypothesis is also developed, which claims that a relationship does exist between two variables. Basic definitions: The ''null hypothesis'' and the ''alternative hypothesis'' are types of conjectures used in statistical tests, which are formal methods of reaching conclusions or making decisions on the basis of data. The hypotheses are conjectures about a statistical model of the population, which are based on a sample of the population. The tests are core elements of statistical inference, heavily used in the interpretation of scientific experimental data, to separate scientific claims fr ...

Matching (statistics)

Matching is a statistical technique used to evaluate the effect of a treatment by comparing the treated and the non-treated units in an observational study or quasi-experiment (i.e. when the treatment is not randomly assigned). The goal of matching is to reduce bias in the estimated treatment effect in an observational-data study by finding, for every treated unit, one (or more) non-treated unit(s) with similar observable characteristics against which the covariates are balanced out. By matching treated units to similar non-treated units, matching enables a comparison of outcomes among treated and non-treated units to estimate the effect of the treatment while reducing bias due to confounding. Propensity score matching, an early matching technique, was developed as part of the Rubin causal model, but has been shown to increase model dependence, bias, inefficiency, and power and is no longer recommended compared to other matching methods. Matching has been promoted by Donald Rubi ...

Observational Study

In fields such as epidemiology, social sciences, psychology and statistics, an observational study draws inferences from a sample to a population where the independent variable is not under the control of the researcher because of ethical concerns or logistical constraints. One common observational study is about the possible effect of a treatment on subjects, where the assignment of subjects into a treated group versus a control group is outside the control of the investigator. This is in contrast with experiments, such as randomized controlled trials, where each subject is randomly assigned to a treated group or a control group. Observational studies, lacking an assignment mechanism, naturally present difficulties for inferential analysis. Motivation: The independent variable may be beyond the control of the investigator for a variety of reasons: * A ran ...

Type I And Type II Errors

In statistical hypothesis testing, a type I error is the mistaken rejection of an actually true null hypothesis (also known as a "false positive" finding or conclusion; example: "an innocent person is convicted"), while a type II error is the failure to reject a null hypothesis that is actually false (also known as a "false negative" finding or conclusion; example: "a guilty person is not convicted"). Much of statistical theory revolves around the minimization of one or both of these errors, though the complete elimination of either is a statistical impossibility if the outcome is not determined by a known, observable causal process. By selecting a low threshold (cut-off) value and modifying the alpha (α) level, the quality of the hypothesis test can be increased. The knowledge of type I errors and type II errors is widely used in medical science, biometrics and computer science. Intuitively, type I errors can be thought of as errors of ''commission'', i.e. the researcher unluck ...
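
The trade-off between the two error types can be shown with a small calculation. The sketch below assumes a specific setting that is not in the text above: a one-sided z-test of a mean with known standard deviation, null mean 0, and a single alternative mean `mu_alt`, rejecting when the sample mean exceeds a cutoff. All names and numbers are illustrative.

```python
import math


def normal_cdf(z):
    """Standard normal CDF."""
    return 0.5 * math.erfc(-z / math.sqrt(2.0))


def error_rates(cutoff, mu_alt, sigma, n):
    """Return (alpha, beta) for the rule 'reject H0 if sample mean > cutoff'.

    alpha: probability of rejecting when the true mean is 0 (type I error).
    beta:  probability of not rejecting when the true mean is mu_alt (type II error).
    """
    se = sigma / math.sqrt(n)                  # standard error of the sample mean
    alpha = 1.0 - normal_cdf(cutoff / se)
    beta = normal_cdf((cutoff - mu_alt) / se)
    return alpha, beta


# Same hypothetical study, two different cutoffs.
alpha_a, beta_a = error_rates(cutoff=0.329, mu_alt=0.5, sigma=1.0, n=25)
alpha_b, beta_b = error_rates(cutoff=0.466, mu_alt=0.5, sigma=1.0, n=25)
print(f"cutoff 0.329: alpha={alpha_a:.3f}, beta={beta_a:.3f}")
print(f"cutoff 0.466: alpha={alpha_b:.3f}, beta={beta_b:.3f}")
```

With the sample size fixed, moving the cutoff to lower the type I error rate raises the type II error rate, which is why neither can be driven to zero by the choice of threshold alone.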

Random Effect

In statistics, a random effects model, also called a variance components model, is a statistical model where the model parameters are random variables. It is a kind of hierarchical linear model, which assumes that the data being analysed are drawn from a hierarchy of different populations whose differences relate to that hierarchy. A random effects model is a special case of a mixed model. Contrast this with the biostatistics definitions: biostatisticians use "fixed" and "random" effects to refer to the population-average and subject-specific effects, respectively (where the latter are generally assumed to be unknown, latent variables). Qualitative description: Random effect models assist in controlling for unobserved heterogeneity when the heterogeneity is constant over time and not correlated with independent variables. This constant can be removed from longitudinal data through differencing, since taking a first difference will remove any time invariant components of ...
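
The differencing remark can be checked with a toy series. This sketch assumes a hypothetical noise-free model y_t = effect + slope * x_t for one subject, where `effect` is the time-invariant component; the model, names, and numbers are illustrative only.

```python
def first_differences(series):
    """Return the differences between consecutive observations."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def simulate_subject(effect, slope, x_values):
    """Noise-free outcomes for one subject with a constant subject effect."""
    return [effect + slope * x for x in x_values]


x_values = [1.0, 2.0, 4.0]
y_small_effect = simulate_subject(effect=7.0, slope=2.0, x_values=x_values)
y_large_effect = simulate_subject(effect=100.0, slope=2.0, x_values=x_values)

# The subject effect cancels: both differenced series depend only on slope and x.
dy_small = first_differences(y_small_effect)
dy_large = first_differences(y_large_effect)
print(dy_small, dy_large)
```

Both differenced series are identical even though the subject effects differ, because the constant term appears in every observation and drops out of each difference.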

Cumulative Distribution Function

In probability theory and statistics, the cumulative distribution function (CDF) of a real-valued random variable $X$, or just distribution function of $X$, evaluated at $x$, is the probability that $X$ will take a value less than or equal to $x$. Every probability distribution supported on the real numbers, discrete or "mixed" as well as continuous, is uniquely identified by a ''right-continuous'', ''monotonic increasing'' cumulative distribution function $F : \mathbb{R} \rightarrow [0,1]$ satisfying $\lim_{x \to -\infty} F(x) = 0$ and $\lim_{x \to \infty} F(x) = 1$. In the case of a scalar continuous distribution, it gives the area under the probability density function from minus infinity to $x$. Cumulative distribution functions are also used to specify the distribution of multivariate random variables. Definition: The cumulative distribution function of a real-valued random variable $X$ is the function given by $F_X(x) = \operatorname{P}(X \le x)$, where the right-hand side represents the probability that the random variable $X$ takes on a value less tha ...
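
As a small worked instance of the definition, the sketch below evaluates the CDF of a fair six-sided die, a discrete distribution chosen here purely for illustration. Exact fractions are used so that the step values can be compared without rounding.

```python
import math
from fractions import Fraction


def die_cdf(x):
    """P(X <= x) for a fair six-sided die with faces 1..6."""
    faces_at_or_below = min(6, max(0, math.floor(x)))
    return Fraction(faces_at_or_below, 6)


for point in (0.5, 1, 3, 3.9, 6, 7):
    print(point, die_cdf(point))
```

The function is 0 below the smallest face, 1 from the largest face onward, never decreases, and jumps exactly at each face value, so the value at a jump point already includes that face.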