
Partial Leverage

In regression analysis, partial leverage (PL) is a measure of the contribution of the individual independent variables to the total leverage of each observation. That is, if ''h''<sub>''i''</sub> is the ''i''th element of the diagonal of the hat matrix, PL is a measure of how ''h''<sub>''i''</sub> changes as a variable is added to the regression model. It is computed as:

: \left(\mathrm{PL}_j\right)_i = \frac{\left(X_{j\bullet[j]}\right)_i^2}{\sum_{k=1}^{n} \left(X_{j\bullet[j]}\right)_k^2}

where
:''j'' = index of independent variable
:''i'' = index of observation
:''X''<sub>''j''·[''j'']</sub> = residuals from regressing ''X''<sub>''j''</sub> against the remaining independent variables

Note that the partial leverage is the leverage of the ''i''th point in the partial regression plot for the ''j''th variable. Data points with large partial leverage for an independent variable can exert undue influence on the selection of that variable in automatic regression model building procedures.

See also
* Leverage
* Partial residual plot
* Partial regression plot
* Variance inflation factor for a multi-lin ...
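As a minimal sketch of the partial leverage computation, assuming NumPy, simulated data, and a design matrix stored without its intercept column (the names `partial_leverage` and `leverage` are illustrative and do not come from the source), the residuals of the auxiliary regression can be squared and normalized as follows:

```python
import numpy as np

def partial_leverage(X, j):
    """Partial leverage of every observation for column j of X.

    X is an (n, p) array of independent variables WITHOUT an intercept
    column; an intercept is added here (an assumption of this sketch).
    """
    n = X.shape[0]
    others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
    # Residuals from regressing X_j against the remaining independent variables.
    coef, *_ = np.linalg.lstsq(others, X[:, j], rcond=None)
    resid = X[:, j] - others @ coef
    return resid**2 / np.sum(resid**2)

def leverage(Z):
    """Diagonal of the hat matrix of a full-column-rank design matrix Z."""
    Q, _ = np.linalg.qr(Z)          # reduced QR: hat matrix = Q Q^T
    return np.sum(Q**2, axis=1)

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 3))        # simulated data, for illustration only
j = 1
pl = partial_leverage(X, j)

ones = np.ones(X.shape[0])
h_full = leverage(np.column_stack([ones, X]))
h_reduced = leverage(np.column_stack([ones, np.delete(X, j, axis=1)]))
```

In this sketch `h_full - h_reduced` agrees with `pl` up to rounding, which is one way to read the statement that PL measures how the leverage changes as a variable is added to the model.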

Regression Analysis

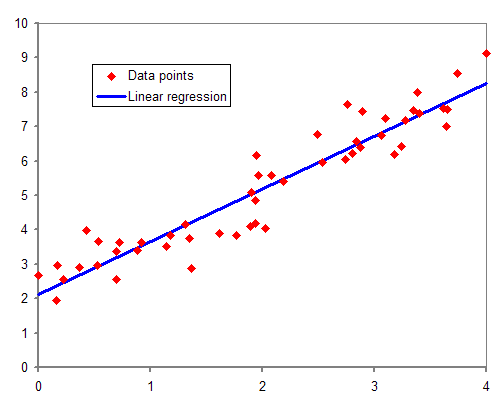

In statistical modeling, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable (often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variables (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares computes the unique line (or hyperplane) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression), this allows the researcher to estimate the conditional expectation (or population average value) of the dependent variable when the independent variables take on a given ...
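The ordinary least squares criterion can be illustrated with a short sketch, assuming NumPy and simulated data (the true intercept 2.0, slope 0.5, and the helper `sse_for` are made up for this example):

```python
import numpy as np

rng = np.random.default_rng(1)
n = 50
x = rng.uniform(0, 10, size=n)
y = 2.0 + 0.5 * x + rng.normal(scale=0.3, size=n)   # simulated response

# Design matrix with an intercept column and one predictor.
A = np.column_stack([np.ones(n), x])

# Least squares estimate of (intercept, slope).
beta, *_ = np.linalg.lstsq(A, y, rcond=None)
fitted = A @ beta

def sse_for(b):
    """Sum of squared differences between the data and the line with coefficients b."""
    return np.sum((y - A @ b) ** 2)

sse = sse_for(beta)
```

Any other line, for example `sse_for(beta + np.array([0.01, 0.0]))`, gives a sum of squares at least as large as `sse`.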

Independent Variable

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand that they depend, by some law or rule (e.g., by a mathematical function), on the values of other variables. Independent variables, in turn, are not seen as depending on any other variable in the scope of the experiment in question. In this sense, some common independent variables are time, space, density, mass, fluid flow rate, and previous values of some observed value of interest (e.g. human population size) to predict future values (the dependent variable). Of the two, it is always the dependent variable whose variation is being studied, by altering inputs, also known as regressors in a statistical context. In an experiment, any variable that can be attributed a value without attributing a value to any other variable is called an ind ...

Leverage (statistics)

In statistics and in particular in regression analysis, leverage is a measure of how far away the independent variable values of an observation are from those of the other observations. ''High-leverage points'', if any, are outliers with respect to the independent variables. That is, high-leverage points have no neighboring points in \mathbb{R}^{p} space, where ''p'' is the number of independent variables in a regression model. This makes the fitted model likely to pass close to a high-leverage observation. Hence high-leverage points have the potential to cause large changes in the parameter estimates when they are deleted, i.e., to be influential points. Although an influential point will typically have high leverage, a high-leverage point is not necessarily an influential point. The leverage is typically defined as the diagonal elements of the hat matrix.

Definition and interpretations

Consider the linear regression model y_i = \boldsymbol{x}_i^{\top}\boldsymbol{\beta} + \varepsilon_i, i = 1,\, 2,\ldots,\, n. That is ...

Hat Matrix

In statistics, the projection matrix (\mathbf{P}), sometimes also called the influence matrix or hat matrix (\mathbf{H}), maps the vector of response values (dependent variable values) to the vector of fitted values (or predicted values). It describes the influence each response value has on each fitted value. The diagonal elements of the projection matrix are the leverages, which describe the influence each response value has on the fitted value for that same observation.

Definition

If the vector of response values is denoted by \mathbf{y} and the vector of fitted values by \mathbf{\hat{y}},

: \mathbf{\hat{y}} = \mathbf{P} \mathbf{y}.

As \mathbf{\hat{y}} is usually pronounced "y-hat", the projection matrix \mathbf{P} is also named ''hat matrix'' as it "puts a hat on \mathbf{y}". The element in the ''i''th row and ''j''th column of \mathbf{P} is equal to the covariance between the ''j''th response value and the ''i''th fitted value, divided by the variance of the former:

: p_{ij} = \frac{\operatorname{Cov}\left[\hat{y}_i, y_j\right]}{\operatorname{Var}\left[y_j\right]}

Application for residuals

The formula for th ...
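The mapping from responses to fitted values can be sketched numerically. The example below assumes NumPy, simulated data, and the ordinary least squares case, where the projection matrix is X (X^T X)^{-1} X^T; that closed form is a standard result but is not stated in the truncated text above.

```python
import numpy as np

rng = np.random.default_rng(2)
n, p = 20, 2
# Design matrix: intercept column plus p simulated predictors.
X = np.column_stack([np.ones(n), rng.normal(size=(n, p))])
y = rng.normal(size=n)

# P = X (X'X)^{-1} X'; solve() avoids forming the explicit inverse.
P = X @ np.linalg.solve(X.T @ X, X.T)

y_hat = P @ y                 # "puts a hat on y"
leverages = np.diag(P)        # influence of y_i on its own fitted value
residuals = y - y_hat         # equivalently (I - P) y
```

Under these assumptions `P` is symmetric and idempotent, and the leverages sum to the number of columns of `X` (here `p + 1`).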

Errors And Residuals In Statistics

In statistics and optimization, errors and residuals are two closely related and easily confused measures of the deviation of an observed value of an element of a statistical sample from its "true value" (not necessarily observable). The error of an observation is the deviation of the observed value from the true value of a quantity of interest (for example, a population mean). The residual is the difference between the observed value and the ''estimated'' value of the quantity of interest (for example, a sample mean). The distinction is most important in regression analysis, where the concepts are sometimes called the regression errors and regression residuals and where they lead to the concept of studentized residuals. In econometrics, "errors" are also called disturbances.

Introduction

Suppose there is a series of observations from a univariate distribution and we want to estimate the mean of that distribution (the so-called location model). In this case, the errors are th ...
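The distinction can be made concrete with a small simulation of the location model. This sketch assumes NumPy and a known population mean `mu`, which is only available here because the data are simulated:

```python
import numpy as np

rng = np.random.default_rng(3)
mu = 5.0                                   # true (population) mean, known only in simulation
sample = rng.normal(loc=mu, scale=2.0, size=10)

errors = sample - mu                       # deviations from the true value
residuals = sample - sample.mean()         # deviations from the estimated value
```

The residuals sum to zero by construction, whereas the errors generally do not; the two differ by the constant `sample.mean() - mu`.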

Partial Regression Plot

In applied statistics, a partial regression plot attempts to show the effect of adding another variable to a model that already has one or more independent variables. Partial regression plots are also referred to as added variable plots, adjusted variable plots, and individual coefficient plots. When performing a linear regression with a single independent variable, a scatter plot of the response variable against the independent variable provides a good indication of the nature of the relationship. If there is more than one independent variable, things become more complicated. Although it can still be useful to generate scatter plots of the response variable against each of the independent variables, this does not take into account the effect of the other independent variables in the model.

Calculation

Partial regression plots are formed by:
# Computing the residuals of regressing the response variable against the independent variables but omitting ''X''<sub>''i''</sub>
# Computing the residuals fr ...

Partial Residual Plot

In applied statistics, a partial residual plot is a graphical technique that attempts to show the relationship between a given independent variable and the response variable given that other independent variables are also in the model.

Background

When performing a linear regression with a single independent variable, a scatter plot of the response variable against the independent variable provides a good indication of the nature of the relationship. If there is more than one independent variable, things become more complicated. Although it can still be useful to generate scatter plots of the response variable against each of the independent variables, this does not take into account the effect of the other independent variables in the model.

Definition

Partial residual plots are formed as

: \text{Residuals} + \hat{\beta}_i X_i \text{ versus } X_i,

where
: Residuals = residuals from the full model,
: \hat{\beta}_i = regression coefficient from the ''i''-th independent variable in the full model,
: ''X''<sub>''i''</sub> = the ''i ...
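The quantities plotted in a partial residual plot can be computed as in the following sketch, which assumes NumPy, simulated data with made-up coefficients, and a full model that includes an intercept. Plotting is left out so that the example has no dependencies beyond NumPy.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 40
X = rng.normal(size=(n, 2))
# Simulated response; the coefficients 1.0, 2.0 and -1.0 are illustrative.
y = 1.0 + 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.5, size=n)

# Fit the full model (intercept plus all independent variables).
A = np.column_stack([np.ones(n), X])
beta, *_ = np.linalg.lstsq(A, y, rcond=None)
residuals = y - A @ beta

i = 0                                       # independent variable of interest
# Vertical axis of the plot: Residuals + beta_i * X_i (beta[0] is the intercept).
partial_residuals = residuals + beta[i + 1] * X[:, i]

# A straight line fitted through (X_i, partial residuals).
slope, intercept = np.polyfit(X[:, i], partial_residuals, deg=1)
```

Because the full-model residuals are orthogonal to the intercept and to `X[:, i]`, the fitted line has slope equal to the full-model coefficient `beta[i + 1]` and intercept zero, up to rounding.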

Variance Inflation Factor

In statistics, the variance inflation factor (VIF) is the ratio (quotient) of the variance of a parameter estimate in a model that includes multiple other terms (parameters) to the variance of that estimate in a model constructed using only that one term. It quantifies the severity of multicollinearity in an ordinary least squares regression analysis. It provides an index that measures how much the variance (the square of the estimate's standard deviation) of an estimated regression coefficient is increased because of collinearity. Cuthbert Daniel claims to have invented the concept behind the variance inflation factor, but did not come up with the name.

Definition

Consider the following linear model with ''k'' independent variables:

: ''Y'' = ''β''<sub>0</sub> + ''β''<sub>1</sub> ''X''<sub>1</sub> + ''β''<sub>2</sub> ''X''<sub>2</sub> + ... + ''β''<sub>''k''</sub> ''X''<sub>''k''</sub> + ''ε''.

The standard error of the estimate of ''β''<sub>''j''</sub> is the square root of the ''j'' + 1 diagonal element of ''s''<sup>2</sup>(''X ...
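As a sketch of how the index is commonly computed, the example below uses the standard formula VIF_j = 1 / (1 - R_j^2), where R_j^2 is the coefficient of determination from regressing X_j on the other independent variables. That formula is not shown in the truncated text above; the function name `vif` and the simulated data are likewise assumptions of this example.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each column of X (intercept excluded from X)."""
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        # Auxiliary regression of X_j on an intercept and the other columns.
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, X[:, j], rcond=None)
        resid = X[:, j] - others @ coef
        centered = X[:, j] - X[:, j].mean()
        r2 = 1.0 - (resid @ resid) / (centered @ centered)
        out[j] = 1.0 / (1.0 - r2)
    return out

rng = np.random.default_rng(5)
x1 = rng.normal(size=200)
x2 = x1 + rng.normal(scale=0.5, size=200)   # deliberately correlated with x1
x3 = rng.normal(size=200)                   # unrelated to x1 and x2
X = np.column_stack([x1, x2, x3])
vifs = vif(X)
```

In this setup the correlated columns `x1` and `x2` receive noticeably larger factors than `x3`, whose factor stays close to 1.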

Dataplot

Dataplot is a public domain software system for scientific visualization and statistical analysis. It was developed and is being maintained at the National Institute of Standards and Technology. Dataplot's source code is available and in the public domain.

National Institute Of Standards And Technology

The National Institute of Standards and Technology (NIST) is an agency of the United States Department of Commerce whose mission is to promote American innovation and industrial competitiveness. NIST's activities are organized into physical science laboratory programs that include nanoscale science and technology, engineering, information technology, neutron research, material measurement, and physical measurement. From 1901 to 1988, the agency was named the National Bureau of Standards.

History

Background

The Articles of Confederation, ratified by the colonies in 1781, provided: The United States in Congress assembled shall also have the sole and exclusive right and power of regulating the alloy and value of coin struck by their own authority, or by that of the respective states, fixing the standards of weights and measures throughout the United States. Article 1, section 8, of the Constitution of the United States, ratified in 1789, granted these powers to the new Congr ...