
Neyman–Pearson Lemma

In statistics, the Neyman–Pearson lemma was introduced by Jerzy Neyman and Egon Pearson in a 1933 paper. It is part of the Neyman–Pearson theory of statistical testing, which introduced concepts such as errors of the second kind, the power function, and inductive behavior. The earlier Fisherian theory of significance testing postulated only one hypothesis. By introducing a competing hypothesis, the Neyman–Pearsonian flavor of statistical testing allows investigating the two ...

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling as ...

Consumer Theory

The theory of consumer choice is the branch of microeconomics that relates preferences to consumption expenditures and to consumer demand curves. It analyzes how consumers maximize the desirability of their consumption, as measured by their preferences, by maximizing utility subject to a consumer budget constraint. Factors influencing consumers' evaluation of the utility of goods include income level, cultural factors, product information and physio-psychological factors. Consumption is logically separated from production because two different economic agents are involved. In the first case, consumption is by the primary individual, whose tastes or preferences determine the amount of pleasure derived from the goods and services consumed; in the second case, a producer might make something that he would not consume himself. Therefore, different motivations and abilities are involved. The models that make up consumer theory ar ...

Wilks' Theorem

In statistics, Wilks' theorem offers an asymptotic distribution of the log-likelihood ratio statistic, which can be used to produce confidence intervals for maximum-likelihood estimates or as a test statistic for performing the likelihood-ratio test. Statistical tests (such as hypothesis testing) generally require knowledge of the probability distribution of the test statistic. This is often a problem for likelihood ratios, where the probability distribution can be very difficult to determine. A convenient result by Samuel S. Wilks says that as the sample size approaches $\infty$, the distribution of the test statistic $-2 \log(\Lambda)$ asymptotically approaches the chi-squared ($\chi^2$) distribution under the null hypothesis $H_0$. Here, $\Lambda$ denotes the likelihood ratio, and the $\chi^2$ distribution has degrees of freedom equal to the difference in dimensionality of $\Theta$ and $\Theta_0$, where $\Theta$ is the full parameter space and $\Theta_0$ is the subset of the parameter space asso ...
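As a rough illustration of the statistic described above, the following sketch computes $-2 \log(\Lambda)$ for a Bernoulli model and converts it to an approximate p-value. The setting is an assumption chosen for simplicity: the null hypothesis fixes the success probability at `p0`, the full parameter space leaves it free, so the difference in dimensionality is 1. The function names are hypothetical, and the p-value relies on the identity that a $\chi^2$ variable with one degree of freedom is the square of a standard normal.

```python
import math


def bernoulli_log_likelihood(p, successes, n):
    """Log-likelihood of observing `successes` ones in `n` Bernoulli(p) trials."""
    failures = n - successes
    ll = 0.0
    # Skip terms with a zero count so that log(0) is never evaluated
    # when the fitted probability sits on the boundary.
    if successes > 0:
        ll += successes * math.log(p)
    if failures > 0:
        ll += failures * math.log(1.0 - p)
    return ll


def wilks_statistic(successes, n, p0):
    """Return -2 log(Lambda) for H0: p = p0 against an unrestricted p.

    Assumes 0 < p0 < 1 (illustrative restriction).
    """
    p_hat = successes / n  # maximum-likelihood estimate over the full space
    ll_null = bernoulli_log_likelihood(p0, successes, n)
    ll_full = bernoulli_log_likelihood(p_hat, successes, n)
    return -2.0 * (ll_null - ll_full)


def chi2_sf_1df(x):
    """Survival function of the chi-squared distribution with 1 degree of freedom."""
    # If Z is standard normal, P(Z^2 > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(x / 2.0))


if __name__ == "__main__":
    stat = wilks_statistic(successes=60, n=100, p0=0.5)
    print(f"-2 log(Lambda) = {stat:.3f}")
    print(f"approximate p-value = {chi2_sf_1df(stat):.4f}")
```

The p-value is only asymptotically valid; for small samples the chi-squared approximation can be poor.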

Lemma (mathematics)

In mathematics, informal logic and argument mapping, a lemma (plural lemmas or lemmata) is a generally minor, proven proposition which is used as a stepping stone to a larger result. For that reason, it is also known as a "helping theorem" or an "auxiliary theorem". In many cases, a lemma derives its importance from the theorem it aims to prove; however, a lemma can also turn out to be more important than originally thought. The word "lemma" derives from the Ancient Greek λῆμμα ("anything which is received", such as a gift, profit, or a bribe). Comparison with theorem: There is no formal distinction between a lemma and a theorem, only one of intention (see Theorem terminology). However, a lemma can be considered a minor result whose sole purpose is to help prove a more substantial theorem, a step in the direction of proof. Well-known lemmas: A good stepping stone can lead to many others. Some powerful results in mathematics are known as lemmas, first named for their originally min ...

F-test

An ''F''-test is any statistical test in which the test statistic has an ''F''-distribution under the null hypothesis. It is most often used when comparing statistical models that have been fitted to a data set, in order to identify the model that best fits the population from which the data were sampled. Exact "''F''-tests" mainly arise when the models have been fitted to the data using least squares. The name was coined by George W. Snedecor, in honour of Ronald Fisher. Fisher initially developed the statistic as the variance ratio in the 1920s.

Common examples of the use of ''F''-tests include the study of the following cases:

* The hypothesis that the means of a given set of normally distributed populations, all having the same standard deviation, are equal. This is perhaps the best-known ''F''-test, and plays an important role in the analysis of variance (ANOVA).
* The hypothesis that a proposed regression model fits the data well. See Lack-of-fit sum of ...
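The first case above, equality of group means, can be sketched as a one-way ANOVA ''F'' statistic: the ratio of the between-group mean square to the within-group mean square. This is a minimal illustration, not a full test. The function name and sample data are hypothetical, and no p-value is computed because the ''F''-distribution is not available in the Python standard library.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric samples.

    Assumes at least two groups, each non-empty, with more observations
    in total than there are groups.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Between-group sum of squares: spread of the group means around the grand mean.
    ss_between = sum(
        len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups
    )
    # Within-group sum of squares: spread of observations around their own group mean.
    ss_within = sum(
        sum((x - sum(g) / len(g)) ** 2 for x in g) for g in groups
    )

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


if __name__ == "__main__":
    samples = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
    f_stat, df_b, df_w = one_way_anova_f(samples)
    print(f"F = {f_stat:.3f} with ({df_b}, {df_w}) degrees of freedom")
```

Under the null hypothesis and the stated normality and equal-variance assumptions, the statistic would be compared against an ''F''-distribution with the returned degrees of freedom.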

Error Exponents In Hypothesis Testing

In statistical hypothesis testing, the error exponent of a hypothesis testing procedure is the rate at which the probabilities of Type I and Type II errors decay exponentially with the size of the sample used in the test. For example, if the probability of error $P_{\mathrm{error}}$ of a test decays as $e^{-n\beta}$, where $n$ is the sample size, the error exponent is $\beta$. Formally, the error exponent of a test is defined as the limiting value of the ratio of the negative logarithm of the error probability to the sample size for large sample sizes: $\lim_{n \to \infty} \frac{-\ln P_{\mathrm{error}}}{n}$. Error exponents for different hypothesis tests are computed using Sanov's theorem and other results from large deviations theory. Error exponents in binary hypothesis testing: Consider a binary hypothesis testing problem in which observations are modeled as independent and identically distributed random variables under each hypothesis. Let $Y_1, Y_2, \ldots, Y_n$ denote the observations. Let $f_0$ denote the probability density function of each observation $Y_i$ u ...
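To make the limiting ratio concrete, here is a small numerical sketch under assumptions that are not part of the text above: the observations are taken to be $N(0, 1)$ under one hypothesis and $N(1, 1)$ under the other, and the test rejects the first hypothesis when the sample mean exceeds $1/2$. In that illustrative setting the Type I error probability is a Gaussian tail, and the ratio of its negative logarithm to $n$ approaches $1/8$. The function names are hypothetical.

```python
import math


def gaussian_tail(x):
    """P(Z > x) for a standard normal Z."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def type_one_error(n):
    """Type I error of the illustrative threshold test (assumed setup).

    Under N(0, 1) the sample mean of n observations is N(0, 1/n), so
    P(mean > 1/2) = P(Z > sqrt(n) / 2).
    """
    return gaussian_tail(math.sqrt(n) / 2.0)


def empirical_exponent(n):
    """Ratio -ln(P_error) / n, whose large-n limit is the error exponent."""
    return -math.log(type_one_error(n)) / n


if __name__ == "__main__":
    for n in (50, 200, 800, 2000):
        print(n, round(empirical_exponent(n), 4))
```

The sample sizes are kept moderate so that the tail probability stays representable in double precision; for much larger `n` the computation would need to work with log-probabilities directly.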

Large Hadron Collider

The Large Hadron Collider (LHC) is the world's largest and highest-energy particle collider. It was built by the European Organization for Nuclear Research (CERN) between 1998 and 2008 in collaboration with over 10,000 scientists and hundreds of universities and laboratories, as well as more than 100 countries. It lies in a tunnel 27 kilometres in circumference and as deep as 175 metres beneath the France–Switzerland border near Geneva. The first collisions were achieved in 2010 at an energy of 3.5 teraelectronvolts (TeV) per beam, about four times the previous world record. After upgrades it reached 6.5 TeV per beam (13 TeV total collision energy). At the end of 2018, it was shut down for three years for further upgrades. The collider has four crossing points where the accelerated particles collide. Seven detectors, each designed to detect different phenomena, are positioned around the crossing points. The LHC primarily collides proton beams, but it can also accelerate beams of heavy ion ...

Standard Model

The Standard Model of particle physics is the theory describing three of the four known fundamental forces (the electromagnetic, weak and strong interactions, excluding gravity) in the universe and classifying all known elementary particles. It was developed in stages throughout the latter half of the 20th century, through the work of many scientists worldwide, with the current formulation being finalized in the mid-1970s upon experimental confirmation of the existence of quarks. Since then, proof of the top quark (1995), the tau neutrino (2000), and the Higgs boson (2012) has added further credence to the Standard Model. In addition, the Standard Model has predicted various properties of weak neutral currents and the W and Z bosons with great accuracy. Although the Standard Model is believed to be theoretically self-consistent and has demonstrated huge successes in providing experimental predictions, it leaves some physics beyond the standard m ...

False Positives And False Negatives

A false positive is an error in binary classification in which a test result incorrectly indicates the presence of a condition (such as a disease when the disease is not present), while a false negative is the opposite error, where the test result incorrectly indicates the absence of a condition when it is actually present. These are the two kinds of errors in a binary test, in contrast to the two kinds of correct result (a true positive and a true negative). They are also known in medicine as a false positive (or false negative) diagnosis, and in statistical classification as a false positive (or false negative) error. In statistical hypothesis testing the analogous concepts are known as type I and type II errors, where a positive result corresponds to rejecting the null hypothesis, and a negative result corresponds to not rejecting the null hypothesis. The terms are often used interchangeably, but there are differences in detail and interpretation due to the differences between medical testing and statist ...
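The four outcomes described above can be tallied with a short sketch. The function name, the dictionary keys, and the sample data are hypothetical; `actual` marks whether the condition is truly present and `predicted` marks what the test reported.

```python
def confusion_counts(actual, predicted):
    """Count true/false positives and negatives for paired boolean labels."""
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    for condition_present, test_positive in zip(actual, predicted):
        if test_positive and condition_present:
            counts["TP"] += 1  # correct: condition present and detected
        elif test_positive and not condition_present:
            counts["FP"] += 1  # error: test indicates a condition that is absent
        elif not test_positive and condition_present:
            counts["FN"] += 1  # error: test misses a condition that is present
        else:
            counts["TN"] += 1  # correct: condition absent and not reported
    return counts


if __name__ == "__main__":
    actual = [True, True, False, False, True]
    predicted = [True, False, True, False, True]
    print(confusion_counts(actual, predicted))
```

In the hypothesis-testing reading given above, the `FP` count corresponds to type I errors and the `FN` count to type II errors.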

Signal Processing

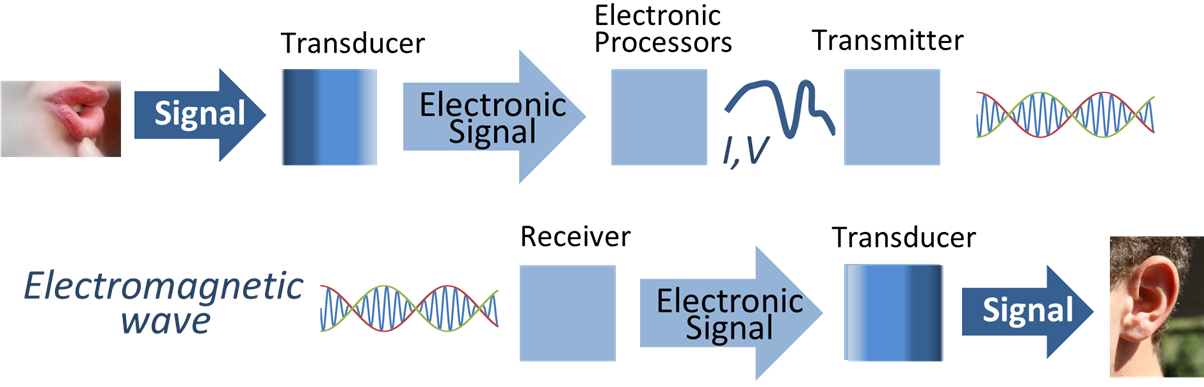

Signal processing is an electrical engineering subfield that focuses on analyzing, modifying and synthesizing ''signals'', such as sound, images, and scientific measurements. Signal processing techniques are used to optimize transmissions, improve digital storage efficiency, correct distorted signals, improve subjective video quality, and detect or pinpoint components of interest in a measured signal. History: According to Alan V. Oppenheim and Ronald W. Schafer, the principles of signal processing can be found in the classical numerical analysis techniques of the 17th century. They further state that the digital refinement of these techniques can be found in the digital control systems of the 1940s and 1950s. In 1948, Claude Shannon wrote the influential paper "A Mathematical Theory of Communication", which was published in the Bell System Technical Journal. The paper laid the groundwork for later development of information c ...

Data Transmission

Data transmission and data reception or, more broadly, data communication or digital communications is the transfer and reception of data in the form of a digital bitstream or a digitized analog signal transmitted over a point-to-point or point-to-multipoint communication channel. Examples of such channels are copper wires, optical fibers, wireless communication using radio spectrum, storage media and computer buses. The data are represented as an electromagnetic signal, such as an electrical voltage, radiowave, microwave, or infrared signal. Analog transmission is a method of conveying voice, data, image, signal or video information using a continuous signal which varies in amplitude, phase, or some other property in proportion to that of a variable. The messages are either represented by a sequence of pulses by means of a line code (''baseband transmission''), or by a limited set of continuously varying waveforms (''passband transmission''), using a digital modulat ...