Neural Turing Machine

A neural Turing machine (NTM) is a recurrent neural network model of a Turing machine. The approach was published by Alex Graves et al. in 2014. NTMs combine the fuzzy pattern matching capabilities of neural networks with the algorithmic power of programmable computers. An NTM has a neural network controller coupled to external memory resources, which it interacts with through attentional mechanisms. The memory interactions are differentiable end-to-end, making it possible to optimize them using gradient descent. An NTM with a long short-term memory (LSTM) network controller can infer simple algorithms such as copying, sorting, and associative recall from examples alone. The authors of the original NTM paper did not publish their source code. The first stable open-source implementation was published in 2018 at the 27th International Conference on Artificial Neural Networks, receiving a best-paper award. Other open source implementations of NTMs exist but as of 2018 they are n ...
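The attentional memory access described above can be illustrated with a minimal sketch of content-based addressing in the style of the original NTM paper. The controller emits a key vector and a key strength `beta`; each memory row is weighted by a softmax over cosine similarities to the key, and reads and writes are weighted blends over all rows, which keeps them differentiable. This sketch assumes plain Python lists for vectors, omits the paper's location-based steps (interpolation, shifting, sharpening), and the function names are hypothetical.

```python
import math

def cosine_similarity(u, v):
    """Cosine similarity of two equal-length vectors; a small epsilon avoids division by zero."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-8)

def content_weights(memory, key, beta):
    """Attention over memory rows: softmax of beta * cosine(key, row)."""
    scores = [beta * cosine_similarity(key, row) for row in memory]
    top = max(scores)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def read(memory, weights):
    """Blended read: the weighted sum of all memory rows."""
    width = len(memory[0])
    return [sum(w * row[j] for w, row in zip(weights, memory)) for j in range(width)]

def write(memory, weights, erase, add):
    """Erase-then-add write, applied to every row in proportion to its weight."""
    return [
        [m * (1 - w * e) + w * a for m, e, a in zip(row, erase, add)]
        for row, w in zip(memory, weights)
    ]

memory = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
weights = content_weights(memory, key=[0.0, 1.0, 0.0], beta=10.0)
print(read(memory, weights))  # close to [0, 1, 0]: attention concentrates on row 1
```

Because every row receives some nonzero weight, gradients reach the whole memory, which is what lets this kind of access be trained with gradient descent.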

Recurrent Neural Network

Recurrent neural networks (RNNs) are a class of artificial neural networks designed for processing sequential data, such as text, speech, and time series, where the order of elements is important. Unlike feedforward neural networks, which process inputs independently, RNNs utilize recurrent connections, where the output of a neuron at one time step is fed back as input to the network at the next time step. This enables RNNs to capture temporal dependencies and patterns within sequences. The fundamental building block of RNNs is the recurrent unit, which maintains a hidden state, a form of memory that is updated at each time step based on the current input and the previous hidden state. This feedback mechanism allows the network to learn from past inputs and incorporate that knowledge into its current processing. RNNs have been successfully applied to tasks such as unsegmented, connected handwriting recognition, speech recognition, natural language processing, and neural ...
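The hidden-state update described above can be sketched as a single-layer Elman-style recurrence, h_t = tanh(W_x x_t + W_h h_{t-1} + b). This is one common choice of recurrent unit, assumed here for illustration; the weights are plain nested lists and the names `rnn_step` and `rnn_forward` are hypothetical.

```python
import math

def rnn_step(x, h_prev, W_x, W_h, b):
    """One recurrent step: h_t = tanh(W_x x_t + W_h h_{t-1} + b), computed row by row."""
    return [
        math.tanh(
            sum(w * xi for w, xi in zip(W_x[k], x))
            + sum(w * hj for w, hj in zip(W_h[k], h_prev))
            + b[k]
        )
        for k in range(len(b))
    ]

def rnn_forward(xs, h0, W_x, W_h, b):
    """Feed a sequence through the recurrent unit, collecting the hidden state after each step."""
    states, h = [], h0
    for x in xs:
        h = rnn_step(x, h, W_x, W_h, b)
        states.append(h)
    return states

# One input feature, one hidden unit; an early input keeps echoing through later states.
W_x, W_h, b = [[1.0]], [[0.5]], [0.0]
print(rnn_forward([[1.0], [0.0], [0.0]], [0.0], W_x, W_h, b))
```

Feeding the same inputs in a different order produces a different final state, which is the order sensitivity the paragraph describes.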

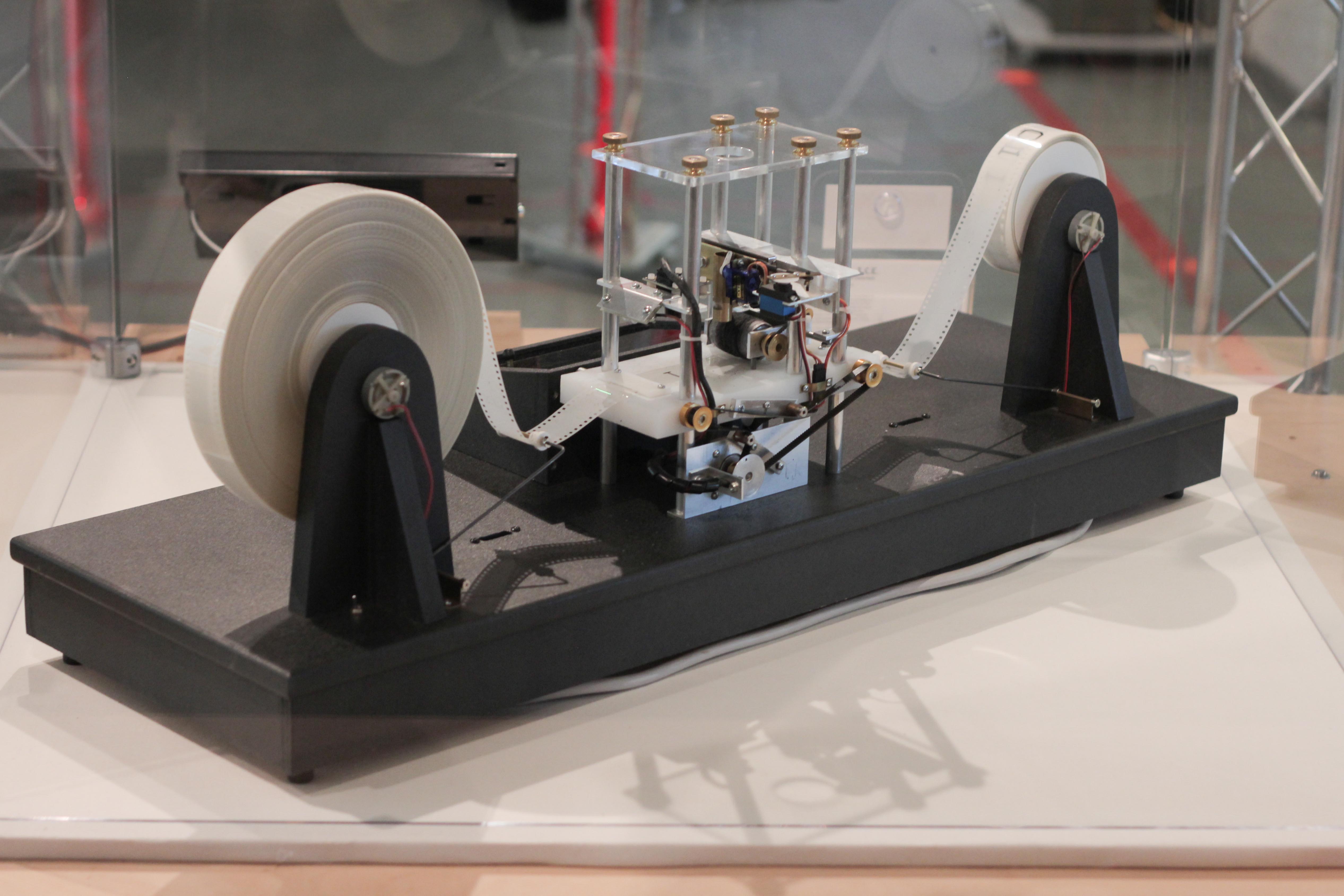

Turing Machine

A Turing machine is a mathematical model of computation describing an abstract machine that manipulates symbols on a strip of tape according to a table of rules. Despite the model's simplicity, it is capable of implementing any computer algorithm. The machine operates on an infinite memory tape divided into discrete cells, each of which can hold a single symbol drawn from a finite set of symbols called the alphabet of the machine. It has a "head" that, at any point in the machine's operation, is positioned over one of these cells, and a "state" selected from a finite set of states. At each step of its operation, the head reads the symbol in its cell. Then, based on the symbol and the machine's own present state, the machine writes a symbol into the same cell, and moves the head one step to the left or the right, or halts the computation. The choice of which replacement symbol to write, which direction to move the head, and whet ...
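The read-write-move cycle above translates directly into a small simulator. This sketch assumes a dictionary-based tape, so it is unbounded in both directions, and a rule table that maps (state, symbol) to (symbol to write, head move, next state). The example machine `INVERT`, which flips every bit of a binary string and halts at the first blank, is a hypothetical illustration.

```python
def run_turing_machine(rules, tape, state="start", blank="_", halt="halt", max_steps=10_000):
    """Simulate a one-tape Turing machine and return the non-blank part of the final tape.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state), where move is
    -1 (left), +1 (right), or 0 on the transition that enters the halting state.
    """
    cells = dict(enumerate(tape))  # sparse tape: unvisited cells read as blank
    head, steps = 0, 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("no halt within max_steps")
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += move
        steps += 1
    if not cells:
        return ""
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)

# Hypothetical example machine: invert each bit while moving right, halt on the first blank.
INVERT = {
    ("start", "0"): ("1", +1, "start"),
    ("start", "1"): ("0", +1, "start"),
    ("start", "_"): ("_", 0, "halt"),
}
print(run_turing_machine(INVERT, "1011"))  # prints 0100
```

The `max_steps` guard is a practical addition: a real Turing machine may run forever, and whether it halts cannot be decided in general.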

Alex Graves (computer scientist)

Alex Graves is a computer scientist.

Graves earned his Bachelor of Science degree in Theoretical Physics from the University of Edinburgh and a PhD in artificial intelligence from the Technical University of Munich, supervised by Jürgen Schmidhuber at the Dalle Molle Institute for Artificial Intelligence Research.

After his PhD, Graves was a postdoc working with Schmidhuber at the Technical University of Munich and with Geoffrey Hinton at the University of Toronto. At the Dalle Molle Institute for Artificial Intelligence Research, Graves trained long short-term memory (LSTM) neural networks using a novel method called connectionist temporal classification (CTC) (Alex Graves, Santiago Fernandez, Faustino Gomez, and Jürgen Schmidhuber (2006). "Connectionist temporal classification: Labelling unsegmented sequence data with recurrent neural nets". Proceedings of ICML’06, pp. 369–376). This method outperformed traditional speech recognition models in certain appl ...

Pattern Matching

In computer science, pattern matching is the act of checking a given sequence of tokens for the presence of the constituents of some pattern. In contrast to pattern recognition, the match usually must be exact: "either it will or will not be a match." The patterns generally have the form of either sequences or tree structures. Uses of pattern matching include outputting the locations (if any) of a pattern within a token sequence, outputting some component of the matched pattern, and substituting the matching pattern with some other token sequence (i.e., search and replace). Sequence patterns (e.g., a text string) are often described using regular expressions and matched using techniques such as backtracking. Tree patterns are used in some programming languages as a general tool to process data based on its structure; e.g., C#, F#, Haskell, Java, ML, Python, Ruby, Rust, Scala, Swift and the symbolic mathematics language Mathematica have special syntax for expressing ...
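Two of the uses listed above, locating a pattern and search-and-replace, can be sketched as a naive exact matcher over token lists. This brute-force scan is an illustrative assumption, not how regular-expression engines or production string-search algorithms work, and the function names are hypothetical.

```python
def find_all(tokens, pattern):
    """Start indices of every exact, token-by-token occurrence of pattern (overlaps included)."""
    m = len(pattern)
    return [i for i in range(len(tokens) - m + 1) if tokens[i:i + m] == pattern]

def replace_all(tokens, pattern, replacement):
    """Scan left to right, substituting each non-overlapping occurrence of pattern."""
    if not pattern:
        raise ValueError("pattern must be non-empty")
    out, i, m = [], 0, len(pattern)
    while i < len(tokens):
        if tokens[i:i + m] == pattern:
            out.extend(replacement)
            i += m
        else:
            out.append(tokens[i])
            i += 1
    return out

words = "the cat sat on the cat".split()
print(find_all(words, ["the", "cat"]))  # prints [0, 4]
print(replace_all(words, ["the", "cat"], ["a", "dog"]))
```

The match is all-or-nothing at each position, mirroring the "either it will or will not be a match" distinction from pattern recognition.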

Neural Network

A neural network is a group of interconnected units called neurons that send signals to one another. Neurons can be either biological cells or signal pathways. While individual neurons are simple, many of them together in a network can perform complex tasks. There are two main types of neural networks:

* In neuroscience, a biological neural network is a physical structure found in brains and complex nervous systems: a population of nerve cells connected by synapses.
* In machine learning, an artificial neural network is a mathematical model used to approximate nonlinear functions. Artificial neural networks are used to solve artificial intelligence problems.

In the context of biology, a neural network is a population of biological neurons chemically connected to each other by synapses. A given neuron can be connected to hundreds of thousands of synapses. Each neuron sends and receives electrochemical signals called action potentials to its conne ...
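To make "approximate nonlinear functions" concrete for the artificial case, the sketch below wires two ReLU neurons and a linear output neuron into a network that computes XOR, a function that a single linear threshold unit cannot compute. The weights are picked by hand rather than learned, and the ReLU activation and all names are illustrative assumptions.

```python
def relu(z):
    """Rectified linear activation: passes positive values, zeroes out the rest."""
    return max(0.0, z)

def neuron(inputs, weights, bias, activation=relu):
    """A single artificial neuron: activation(weighted sum of inputs + bias)."""
    return activation(sum(w * x for w, x in zip(weights, inputs)) + bias)

def xor_network(x1, x2):
    """Two hidden ReLU neurons feeding a linear output neuron, with hand-picked weights."""
    h1 = neuron([x1, x2], [1.0, 1.0], 0.0)   # counts how many inputs are on
    h2 = neuron([x1, x2], [1.0, 1.0], -1.0)  # fires only when both inputs are on
    return neuron([h1, h2], [1.0, -2.0], 0.0, activation=lambda z: z)

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, xor_network(a, b))
```

The hidden layer's nonlinearity is what bends the input space so that a final linear combination can separate the cases.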

Algorithm

In mathematics and computer science, an algorithm is a finite sequence of mathematically rigorous instructions, typically used to solve a class of specific problems or to perform a computation. Algorithms are used as specifications for performing calculations and data processing. More advanced algorithms can use conditionals to divert the code execution through various routes (referred to as automated decision-making) and deduce valid inferences (referred to as automated reasoning). In contrast, a heuristic is an approach to solving problems without well-defined correct or optimal results (David A. Grossman, Ophir Frieder, Information Retrieval: Algorithms and Heuristics, 2nd edition, 2004). For example, although social media recommender systems are commonly called "algorithms", they actually rely on heuristics as there is no truly "correct" recommendation. As an e ...

Programmable Computer

A stored-program computer is a computer that stores program instructions in electronically, electromagnetically, or optically accessible memory. This contrasts with systems that stored the program instructions with plugboards or similar mechanisms. The definition is often extended with the requirement that the treatment of programs and data in memory be interchangeable or uniform.

In principle, stored-program computers have been designed with various architectural characteristics. A computer with a von Neumann architecture stores program data and instruction data in the same memory, while a computer with a Harvard architecture has separate memories for storing program and data. However, the term "stored-program computer" is sometimes used as a synonym for the von Neumann architecture. Jack Copeland considers that it is "historically inappropriate, to refer to electronic stored-program digital computers as 'von Neumann machines'". Hennessy and Patterson wrote that ...
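A toy accumulator machine makes the von Neumann arrangement concrete: instructions and data live in one Python list, and the program counter simply walks through it. The instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) and its two-cell encoding are hypothetical, invented only for this illustration.

```python
# Hypothetical opcodes for a toy accumulator machine.
HALT, LOAD, ADD, STORE = 0, 1, 2, 3

def run(memory, pc=0, max_steps=1000):
    """Execute instructions stored in the same list as the data they operate on.

    Each instruction occupies two cells: an opcode followed by an operand address.
    """
    acc = 0
    for _ in range(max_steps):
        op, arg = memory[pc], memory[pc + 1]
        pc += 2
        if op == LOAD:
            acc = memory[arg]
        elif op == ADD:
            acc += memory[arg]
        elif op == STORE:
            memory[arg] = acc
        elif op == HALT:
            return memory
        else:
            raise ValueError(f"unknown opcode {op} at address {pc - 2}")
    raise RuntimeError("step limit reached without HALT")

# Cells 0-7 hold the program, cells 8-10 hold data: memory[10] = memory[8] + memory[9].
program = [LOAD, 8, ADD, 9, STORE, 10, HALT, 0, 2, 3, 0]
print(run(program)[10])  # prints 5
```

Because instructions are just numbers in the same list, a `STORE` aimed at addresses 0 to 7 would rewrite the program itself; a Harvard-style design would keep two separate lists and rule that out.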

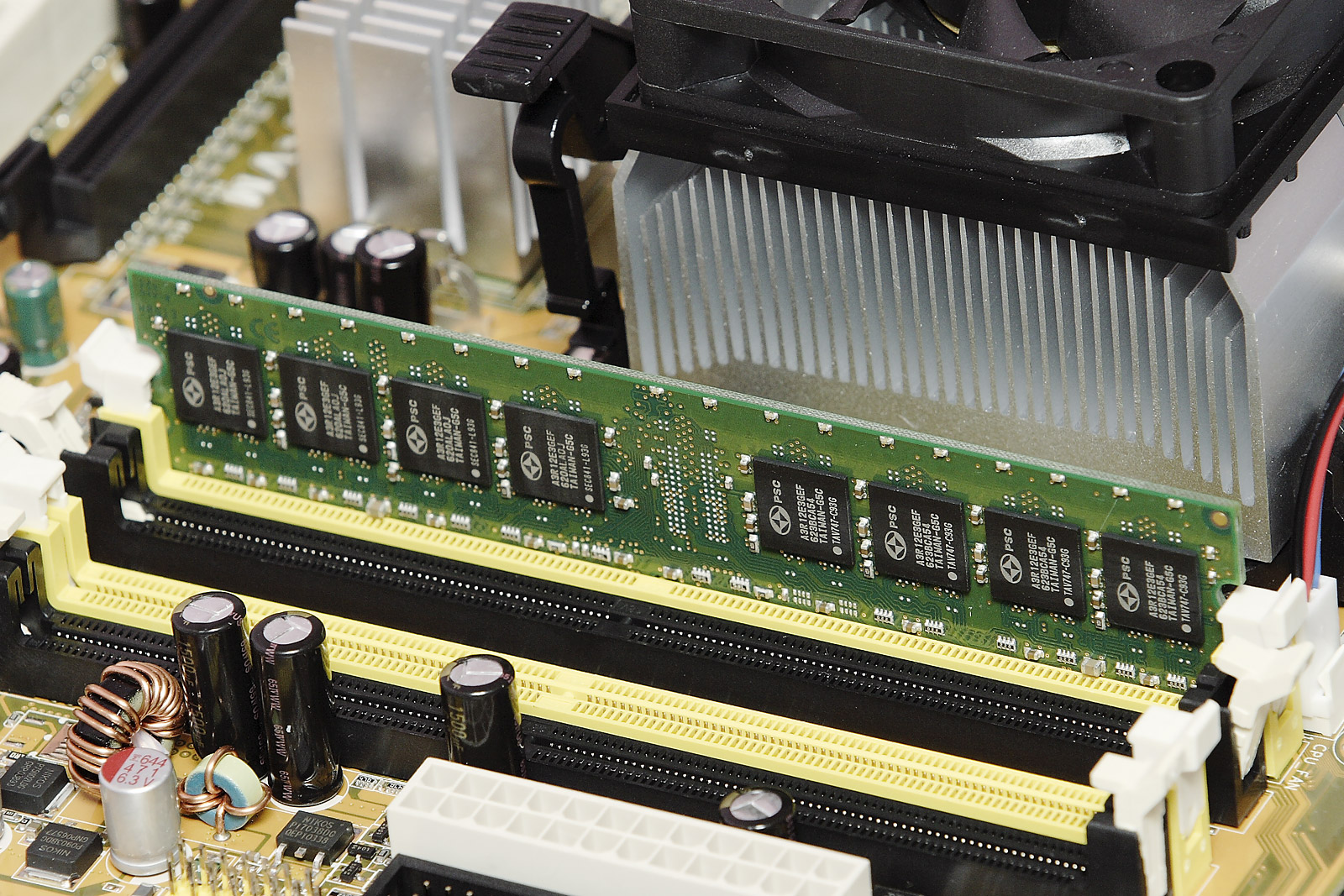

Auxiliary Memory

Computer data storage or digital data storage is a technology consisting of computer components and recording media that are used to retain digital data. It is a core function and fundamental component of computers. The central processing unit (CPU) of a computer is what manipulates data by performing computations. In practice, almost all computers use a storage hierarchy, which puts fast but expensive and small storage options close to the CPU and slower but less expensive and larger options further away. Generally, the fast technologies are referred to as "memory", while slower persistent technologies are referred to as "storage". Even the first computer designs, Charles Babbage's Analytical Engine and Percy Ludgate's Analytical Machine, clearly distinguished between processing and memory (Babbage stored numbers as rotations of gears, while Ludgate stored numbers as displacements of rods in shuttles). This distinction was extended in the Von Neumann architecture, wh ...

Gradient Descent

Gradient descent is a method for unconstrained mathematical optimization. It is a first-order iterative algorithm for minimizing a differentiable multivariate function. The idea is to take repeated steps in the opposite direction of the gradient (or approximate gradient) of the function at the current point, because this is the direction of steepest descent. Conversely, stepping in the direction of the gradient will lead to a trajectory that maximizes that function; the procedure is then known as gradient ascent. It is particularly useful in machine learning for minimizing the cost or loss function. Gradient descent should not be confused with local search algorithms, although both are iterative methods for optimization. Gradient descent is generally attributed to Augustin-Louis Cauchy, who first suggested it in 1847. Jacques Hadamard independently proposed a similar method in 1907. Its convergence properties for non-linear optimization problems were first studied by Has ...
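In symbols, each step is x_{k+1} = x_k - η ∇f(x_k) for a step size η. The sketch below applies that rule to the hypothetical objective f(x, y) = (x - 3)^2 + 2(y + 1)^2, whose minimum is at (3, -1); the fixed step size of 0.1 and the step count are assumptions chosen so this example converges.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Take `steps` fixed-size steps against the gradient: x <- x - learning_rate * grad(x)."""
    x = list(x0)
    for _ in range(steps):
        g = grad(x)
        x = [xi - learning_rate * gi for xi, gi in zip(x, g)]
    return x

def grad_f(p):
    """Gradient of the example objective f(x, y) = (x - 3)**2 + 2 * (y + 1)**2."""
    x, y = p
    return [2 * (x - 3), 4 * (y + 1)]

print(gradient_descent(grad_f, [0.0, 0.0]))  # approaches [3, -1]
```

Adding the gradient instead of subtracting it would give gradient ascent. For this particular f, a step size of 0.5 or more would stop the y-coordinate from converging, since each update then multiplies its error by 1 - 4η, whose magnitude is no longer below 1.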

Long Short-term Memory

Long short-term memory (LSTM) is a type of recurrent neural network (RNN) aimed at mitigating the vanishing gradient problem commonly encountered by traditional RNNs. Its relative insensitivity to gap length is its advantage over other RNNs, hidden Markov models, and other sequence learning methods. It aims to provide a short-term memory for RNNs that can last thousands of timesteps (thus "long short-term memory"). The name draws an analogy with long-term memory and short-term memory and their relationship, studied by cognitive psychologists since the early 20th century. An LSTM unit is typically composed of a cell and three gates: an input gate, an output gate, and a forget gate. The cell remembers values over arbitrary time intervals, and the gates regulate the flow of information into and out of the cell. Forget gates decide what information to discard from the previous state, by mapping the previous state and the current input to a value between 0 and 1. A (rounded) ...
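The gate arithmetic described above can be sketched for a single time step, following the widely used formulation with forget, input and output gates plus a tanh candidate: c_t = f_t * c_{t-1} + i_t * g_t and h_t = o_t * tanh(c_t). The parameter layout (one `(W, b)` pair per gate acting on the concatenation [h_{t-1}; x_t]) and all names are assumptions; peephole connections and other variants are omitted.

```python
import math

def sigmoid(z):
    """Logistic function, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, b, v):
    """Compute W @ v + b for a list-of-rows matrix W."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]

def lstm_step(x, h_prev, c_prev, params):
    """One step of an LSTM cell; params maps "f", "i", "o", "g" to (W, b) pairs."""
    z = h_prev + x  # list concatenation [h_{t-1}; x_t]
    f = [sigmoid(v) for v in affine(*params["f"], z)]    # forget gate: how much old cell to keep
    i = [sigmoid(v) for v in affine(*params["i"], z)]    # input gate: how much new content to admit
    o = [sigmoid(v) for v in affine(*params["o"], z)]    # output gate: how much cell to expose
    g = [math.tanh(v) for v in affine(*params["g"], z)]  # candidate cell values
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c

# Forget gate biased open, input gate biased shut: the cell state is nearly carried over.
zeros = [[0.0, 0.0]]  # one hidden unit, one input feature
params = {"f": (zeros, [10.0]), "i": (zeros, [-10.0]), "o": (zeros, [0.0]), "g": (zeros, [0.0])}
h, c = lstm_step([1.0], [0.0], [1.0], params)
print(c)  # close to [1.0]
```

In this toy setting, holding the forget gate near 1 and the input gate near 0 lets the cell keep a value across many steps, which is the behavior the "long" in the name refers to.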

Source Code

In computing, source code, or simply code or source, is a plain text computer program written in a programming language. A programmer writes the human-readable source code to control the behavior of a computer. Since a computer, at base, only understands machine code, source code must be translated before a computer can execute it. The translation process can be implemented in three ways. Source code can be converted into machine code by a compiler or an assembler. The resulting executable is machine code ready for the computer. Alternatively, source code can be executed without conversion via an interpreter. An interpreter loads the source code into memory. It simultaneously translates and executes each statement. A method that combines compilation and interpretation is to first produce bytecode. Bytecode is an intermediate representation of source code tha ...
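CPython is one concrete instance of the bytecode approach: its built-in `compile()` translates source text into a code object containing bytecode, the standard `dis` module can list those instructions, and `exec()` runs them on the interpreter's virtual machine. The snippet is CPython-specific, and the exact opcode names it reports differ between Python versions.

```python
import dis

source = "result = sum(n * n for n in range(5))"

# Translate the source text into a code object that holds CPython bytecode.
code = compile(source, "<example>", "exec")

# Inspect the intermediate bytecode instructions (names vary across CPython versions).
opnames = [instr.opname for instr in dis.get_instructions(code)]
print(opnames)

# Execute the bytecode on the interpreter's virtual machine.
namespace = {}
exec(code, namespace)
print(namespace["result"])  # prints 30
```

This corresponds to the third option in the paragraph: neither native machine code nor line-by-line interpretation of the raw text, but a compiled intermediate form that is then interpreted.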

Stochastic Gradient Descent

Stochastic gradient descent (often abbreviated SGD) is an iterative method for optimizing an objective function with suitable smoothness properties (e.g. differentiable or subdifferentiable). It can be regarded as a stochastic approximation of gradient descent optimization, since it replaces the actual gradient (calculated from the entire data set) by an estimate thereof (calculated from a randomly selected subset of the data). Especially in high-dimensional optimization problems this reduces the very high computational burden, achieving faster iterations in exchange for a lower convergence rate. The basic idea behind stochastic approximation can be traced back to the Robbins–Monro algorithm of the 1950s. Today, stochastic gradient descent has become an important optimization method in machine learning.

Both statistical estimation and ma ...
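A minimal sketch of minibatch SGD, assuming a least-squares linear fit y ≈ w·x + b as the objective: each update uses the gradient computed on a small shuffled batch as an estimate of the full-data gradient. The learning rate, batch size, epoch count, seed and noise-free synthetic data are illustrative assumptions, and `sgd_linear_regression` is a hypothetical name.

```python
import random

def sgd_linear_regression(data, lr=0.05, epochs=200, batch_size=4, seed=0):
    """Fit y ~ w*x + b by minibatch stochastic gradient descent on mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    data = list(data)  # copy so shuffling does not reorder the caller's list
    for _ in range(epochs):
        rng.shuffle(data)  # a fresh random split into batches every epoch
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # Gradient of the batch-mean squared error: an estimate of the full-data gradient.
            gw = sum(2 * (w * x + b - y) * x for x, y in batch) / len(batch)
            gb = sum(2 * (w * x + b - y) for x, y in batch) / len(batch)
            w -= lr * gw
            b -= lr * gb
    return w, b

# Synthetic, noise-free points on the line y = 2x + 1.
points = [(i / 10, 2 * (i / 10) + 1) for i in range(-10, 11)]
print(sgd_linear_regression(points))  # approaches (2.0, 1.0)
```

Each update touches only a handful of points, which is the cheaper-but-noisier trade-off described above; with noisy data the iterates would keep fluctuating around the optimum unless the learning rate is decreased over time.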