|

Logarithmic Data Transformation

In statistics, data transformation is the application of a deterministic mathematical function to each point in a data set—that is, each data point ''zi'' is replaced with the transformed value ''yi'' = ''f''(''zi''), where ''f'' is a function. Transforms are usually applied so that the data appear to more closely meet the assumptions of a statistical inference procedure that is to be applied, or to improve the interpretability or appearance of graphs. Nearly always, the function that is used to transform the data is invertible, and generally is continuous. The transformation is usually applied to a collection of comparable measurements. For example, if we are working with data on peoples' incomes in some currency unit, it would be common to transform each person's income value by the logarithm function. Motivation Guidance for how data should be transformed, or whether a transformation should be applied at all, should come from the particular statistical analysis to be pe ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Population Vs Area

Population typically refers to the number of people in a single area, whether it be a city or town, region, country, continent, or the world. Governments typically quantify the size of the resident population within their jurisdiction using a census, a process of collecting, analysing, compiling, and publishing data regarding a population. Perspectives of various disciplines Social sciences In sociology and population geography, population refers to a group of human beings with some predefined criterion in common, such as location, race, ethnicity, nationality, or religion. Demography is a social science which entails the statistical study of populations. Ecology In ecology, a population is a group of organisms of the same species who inhabit the same particular geographical area and are capable of interbreeding. The area of a sexual population is the area where inter-breeding is possible between any pair within the area and more probable than cross-breeding with in ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistical Population

In statistics, a population is a set of similar items or events which is of interest for some question or experiment. A statistical population can be a group of existing objects (e.g. the set of all stars within the Milky Way galaxy) or a hypothetical and potentially infinite group of objects conceived as a generalization from experience (e.g. the set of all possible hands in a game of poker). A common aim of statistical analysis is to produce information about some chosen population. In statistical inference, a subset of the population (a statistical '' sample'') is chosen to represent the population in a statistical analysis. Moreover, the statistical sample must be unbiased and accurately model the population (every unit of the population has an equal chance of selection). The ratio of the size of this statistical sample to the size of the population is called a '' sampling fraction''. It is then possible to estimate the ''population parameters'' using the appropriate ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Variance

In probability theory and statistics, variance is the expectation of the squared deviation of a random variable from its population mean or sample mean. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. Variance has a central role in statistics, where some ideas that use it include descriptive statistics, statistical inference, hypothesis testing, goodness of fit, and Monte Carlo sampling. Variance is an important tool in the sciences, where statistical analysis of data is common. The variance is the square of the standard deviation, the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname(X), V(X), or \mathbb(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviatio ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

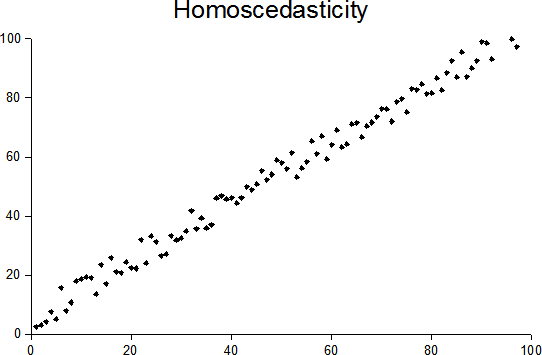

Homoscedasticity

In statistics, a sequence (or a vector) of random variables is homoscedastic () if all its random variables have the same finite variance. This is also known as homogeneity of variance. The complementary notion is called heteroscedasticity. The spellings ''homoskedasticity'' and ''heteroskedasticity'' are also frequently used. Assuming a variable is homoscedastic when in reality it is heteroscedastic () results in unbiased but inefficient point estimates and in biased estimates of standard errors, and may result in overestimating the goodness of fit as measured by the Pearson coefficient. The existence of heteroscedasticity is a major concern in regression analysis and the analysis of variance, as it invalidates statistical tests of significance that assume that the modelling errors all have the same variance. While the ordinary least squares estimator is still unbiased in the presence of heteroscedasticity, it is inefficient and generalized least squares should be used i ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Polynomial Regression

In statistics, polynomial regression is a form of regression analysis in which the relationship between the independent variable ''x'' and the dependent variable ''y'' is modelled as an ''n''th degree polynomial in ''x''. Polynomial regression fits a nonlinear relationship between the value of ''x'' and the corresponding conditional mean of ''y'', denoted E(''y'' , ''x''). Although ''polynomial regression'' fits a nonlinear model to the data, as a statistical estimation problem it is linear, in the sense that the regression function E(''y'' , ''x'') is linear in the unknown parameters that are estimated from the data. For this reason, polynomial regression is considered to be a special case of multiple linear regression. The explanatory (independent) variables resulting from the polynomial expansion of the "baseline" variables are known as higher-degree terms. Such variables are also used in classification settings. History Polynomial regression models are usu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Dependent And Independent Variables

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand that they depend, by some law or rule (e.g., by a mathematical function), on the values of other variables. Independent variables, in turn, are not seen as depending on any other variable in the scope of the experiment in question. In this sense, some common independent variables are time, space, density, mass, fluid flow rate, and previous values of some observed value of interest (e.g. human population size) to predict future values (the dependent variable). Of the two, it is always the dependent variable whose variation is being studied, by altering inputs, also known as regressors in a statistical context. In an experiment, any variable that can be attributed a value without attributing a value to any other variable is called ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Expected Value

In probability theory, the expected value (also called expectation, expectancy, mathematical expectation, mean, average, or first moment) is a generalization of the weighted average. Informally, the expected value is the arithmetic mean of a large number of independently selected outcomes of a random variable. The expected value of a random variable with a finite number of outcomes is a weighted average of all possible outcomes. In the case of a continuum of possible outcomes, the expectation is defined by integration. In the axiomatic foundation for probability provided by measure theory, the expectation is given by Lebesgue integration. The expected value of a random variable is often denoted by , , or , with also often stylized as or \mathbb. History The idea of the expected value originated in the middle of the 17th century from the study of the so-called problem of points, which seeks to divide the stakes ''in a fair way'' between two players, who have to e ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Linear

Linearity is the property of a mathematical relationship ('' function'') that can be graphically represented as a straight line. Linearity is closely related to '' proportionality''. Examples in physics include rectilinear motion, the linear relationship of voltage and current in an electrical conductor ( Ohm's law), and the relationship of mass and weight. By contrast, more complicated relationships are ''nonlinear''. Generalized for functions in more than one dimension, linearity means the property of a function of being compatible with addition and scaling, also known as the superposition principle. The word linear comes from Latin ''linearis'', "pertaining to or resembling a line". In mathematics In mathematics, a linear map or linear function ''f''(''x'') is a function that satisfies the two properties: * Additivity: . * Homogeneity of degree 1: for all α. These properties are known as the superposition principle. In this definition, ''x'' is not necessarily ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Linear Regression

In statistics, linear regression is a linear approach for modelling the relationship between a scalar response and one or more explanatory variables (also known as dependent and independent variables). The case of one explanatory variable is called '' simple linear regression''; for more than one, the process is called multiple linear regression. This term is distinct from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable. In linear regression, the relationships are modeled using linear predictor functions whose unknown model parameters are estimated from the data. Such models are called linear models. Most commonly, the conditional mean of the response given the values of the explanatory variables (or predictors) is assumed to be an affine function of those values; less commonly, the conditional median or some other quantile is used. Like all forms of regression analysis, linear regressio ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Multiplicative Inverse

In mathematics, a multiplicative inverse or reciprocal for a number ''x'', denoted by 1/''x'' or ''x''−1, is a number which when multiplied by ''x'' yields the multiplicative identity, 1. The multiplicative inverse of a fraction ''a''/''b'' is ''b''/''a''. For the multiplicative inverse of a real number, divide 1 by the number. For example, the reciprocal of 5 is one fifth (1/5 or 0.2), and the reciprocal of 0.25 is 1 divided by 0.25, or 4. The reciprocal function, the function ''f''(''x'') that maps ''x'' to 1/''x'', is one of the simplest examples of a function which is its own inverse (an involution). Multiplying by a number is the same as dividing by its reciprocal and vice versa. For example, multiplication by 4/5 (or 0.8) will give the same result as division by 5/4 (or 1.25). Therefore, multiplication by a number followed by multiplication by its reciprocal yields the original number (since the product of the number and its reciprocal is 1). The term ''reciprocal' ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Probability Distribution

In probability theory and statistics, a probability distribution is the mathematical function that gives the probabilities of occurrence of different possible outcomes for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space). For instance, if is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of would take the value 0.5 (1 in 2 or 1/2) for , and 0.5 for (assuming that the coin is fair). Examples of random phenomena include the weather conditions at some future date, the height of a randomly selected person, the fraction of male students in a school, the results of a survey to be conducted, etc. Introduction A probability distribution is a mathematical description of the probabilities of events, subsets of the sample space. The sample space, often denoted by \Omega, is the set of all possible outcomes of a ra ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |