
LZ1 (algorithm)

LZ77 and LZ78 are two lossless data compression algorithms published in papers by Abraham Lempel and Jacob Ziv in 1977 and 1978, respectively. They are also known as LZ1 and LZ2. These two algorithms form the basis for many variations, including LZW, LZSS, LZMA and others. Besides their academic influence, they formed the basis of several ubiquitous compression schemes, including GIF and the DEFLATE algorithm used in PNG and ZIP. Both are, in theory, dictionary coders. LZ77 maintains a sliding window during compression. This was later shown to be equivalent to the explicit dictionary constructed by LZ78; however, the two are only equivalent when the entire data is intended to be decompressed. Since LZ77 encodes and decodes from a sliding window over previously seen characters, decompression must always start at the beginning of the input. Conceptually, LZ78 decompression could allow random access to the input if the entire dictionary were known in adva ...
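The sliding-window idea can be illustrated with a minimal sketch. It assumes the textbook (offset, length, next character) triple format and a naive linear search of the window; the function names and the `window` parameter are illustrative and not taken from the original papers or from any production codec.

```python
def lz77_compress(data: str, window: int = 32):
    """Encode data as (offset, length, next_char) triples (illustrative format)."""
    i = 0
    tokens = []
    while i < len(data):
        best_off, best_len = 0, 0
        # Only positions inside the sliding window are candidate match starts.
        for j in range(max(0, i - window), i):
            length = 0
            # Keep one character in reserve to serve as the literal of the triple.
            while (i + length < len(data) - 1
                   and data[j + length] == data[i + length]):
                length += 1
            if length > best_len:
                best_off, best_len = i - j, length
        tokens.append((best_off, best_len, data[i + best_len]))
        i += best_len + 1
    return tokens


def lz77_decompress(tokens):
    """Rebuild the text by copying from the already-decoded output."""
    buf = []
    for off, length, ch in tokens:
        start = len(buf) - off
        # Copy one character at a time so overlapping matches work.
        for k in range(length):
            buf.append(buf[start + k])
        buf.append(ch)
    return "".join(buf)


tokens = lz77_compress("aaaaaaa")
restored = lz77_decompress(tokens)
```

Because each triple refers back into output that has already been decoded, the decoder in this sketch has to process the tokens from the first one onward, which matches the point above about decompression starting at the beginning of the input.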

Lossless Data Compression

Lossless compression is a class of data compression that allows the original data to be perfectly reconstructed from the compressed data with no loss of information. Lossless compression is possible because most real-world data exhibits statistical redundancy. By contrast, lossy compression permits reconstruction only of an approximation of the original data, though usually with greatly improved compression rates (and therefore reduced media sizes). By operation of the pigeonhole principle, no lossless compression algorithm can efficiently compress all possible data. For this reason, many different algorithms exist that are designed either with a specific type of input data in mind or with specific assumptions about what kinds of redundancy the uncompressed data are likely to contain. Therefore, compression ratios tend to be stronger on human- and machine-readable documents and code in comparison to entropic binary data (random bytes). Lossless data compression is used in ...
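The pigeonhole argument can be made concrete with a small counting sketch. The helper names are hypothetical; the `zlib` call at the end only illustrates that highly redundant input does shrink and still round-trips exactly.

```python
import zlib


def count_bitstrings(length: int) -> int:
    """Number of distinct bit strings of exactly this length."""
    return 2 ** length


def count_shorter_bitstrings(length: int) -> int:
    """Number of distinct bit strings strictly shorter than this length."""
    return sum(2 ** k for k in range(length))


n = 8
inputs = count_bitstrings(n)            # possible n-bit inputs
outputs = count_shorter_bitstrings(n)   # possible strictly shorter outputs

# Redundant data, by contrast, compresses well and is restored exactly.
repetitive = b"abc" * 1000
packed = zlib.compress(repetitive)
```

There is always one fewer shorter string than there are inputs of length n, so any scheme that shortens some inputs must leave at least one other input the same size or longer.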

Peter Shor

Peter Williston Shor (born August 14, 1959) is an American professor of applied mathematics at MIT. He is known for his work on quantum computation, in particular for devising Shor's algorithm, a quantum algorithm for factoring exponentially faster than the best currently known algorithm running on a classical computer. Early life and education: Shor was born in New York City to Joan Bopp Shor and S. W. Williston Shor, of Jewish descent. He grew up in Washington, D.C. and Mill Valley, California. While attending Tamalpais High School, he placed third in the 1977 USA Mathematical Olympiad. After graduation that year, he won a silver medal at the International Math Olympiad in Yugoslavia (the U.S. team achieved the most points per country that year). He received his B.S. in Mathematics in 1981 for undergraduate work at Caltech, and was a Putnam Fellow in 1978. He earned his PhD in Applied Mathematics from MIT in 1985. His doctoral advisor was F. Thomson Leighton, and his ...

Faculty Of Electrical Engineering And Computing, University Of Zagreb

The Faculty of Electrical Engineering and Computing (Croatian: Fakultet elektrotehnike i računarstva, abbreviated FER) is a faculty of the University of Zagreb. It is the largest technical faculty and the leading educational as well as research-and-development institution in the fields of electrical engineering and computing in Croatia. FER owns four buildings situated in the Zagreb neighbourhood of Martinovka, Trnje. The total area of the site is . , the Faculty employs more than 160 professors and 210 teaching and research assistants. In the academic year 2010/2011, the total number of students was about 3,800 at the undergraduate and graduate level, and about 450 in the PhD program. Since the academic year 2004/2005, when the implementation of the Bologna process started at the University of Zagreb, the faculty has had two baccalaureus programmes (each lasting 3 years): Electrical engineering and information technology, and Computing. After receiving a bachelor's degree, students can ta ...

Lempel–Ziv–Stac

Lempel–Ziv–Stac (LZS, or Stac compression or Stacker compression) is a lossless data compression algorithm that uses a combination of the LZ77 sliding-window compression algorithm and fixed Huffman coding. It was originally developed by Stac Electronics for tape compression, and subsequently adapted for hard disk compression and sold as the Stacker disk compression software. It was later specified as a compression algorithm for various network protocols. LZS is specified in the Cisco IOS stack. Standards: LZS compression is standardized as an INCITS (previously ANSI) standard. LZS compression is specified for various Internet protocols: PPP LZS-DCP Compression Protocol (LZS-DCP); PPP Stac LZS Compression Protocol; IP Payload Compression Using LZS; Transport Layer Security (TLS) Protocol Compression Using Lempel-Ziv-Stac (LZS). Algorithm: LZS compression and decompression uses an LZ77 type algorithm. It uses the last 2 KB of un ...

Trie

In computer science, a trie, also called digital tree or prefix tree, is a type of k-ary search tree, a tree data structure used for locating specific keys from within a set. These keys are most often strings, with links between nodes defined not by the entire key, but by individual characters. In order to access a key (to recover its value, change it, or remove it), the trie is traversed depth-first, following the links between nodes, which represent each character in the key. Unlike a binary search tree, nodes in the trie do not store their associated key. Instead, a node's position in the trie defines the key with which it is associated. This distributes the value of each key across the data structure, and means that not every node necessarily has an associated value. All the children of a node have a common prefix of the string associated with that parent node, and the root is associated with the empty string. This task of storing data accessible by its prefix ca ...
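A minimal sketch of the structure described above, assuming string keys and dictionary-based child links. The class and method names are illustrative rather than taken from any particular library.

```python
class Trie:
    """Each node stores child links by character, not the key itself."""

    def __init__(self):
        self.children = {}      # character -> child Trie node
        self.has_value = False  # not every node carries a value
        self.value = None

    def insert(self, key, value):
        node = self
        for ch in key:
            node = node.children.setdefault(ch, Trie())
        node.has_value = True
        node.value = value

    def _find(self, key):
        """Follow one link per character; return None if the path is missing."""
        node = self
        for ch in key:
            node = node.children.get(ch)
            if node is None:
                return None
        return node

    def get(self, key, default=None):
        node = self._find(key)
        if node is not None and node.has_value:
            return node.value
        return default

    def keys_with_prefix(self, prefix):
        """Collect every stored key below the node reached by the prefix."""
        node = self._find(prefix)
        if node is None:
            return []
        found, stack = [], [(node, prefix)]
        while stack:
            cur, key = stack.pop()
            if cur.has_value:
                found.append(key)
            for ch, child in cur.children.items():
                stack.append((child, key + ch))
        return sorted(found)


t = Trie()
t.insert("tea", 1)
t.insert("ten", 2)
t.insert("to", 3)
```

In this sketch the node reached by "te" exists but holds no value, which shows how a node's position, rather than a stored key, determines what it represents.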

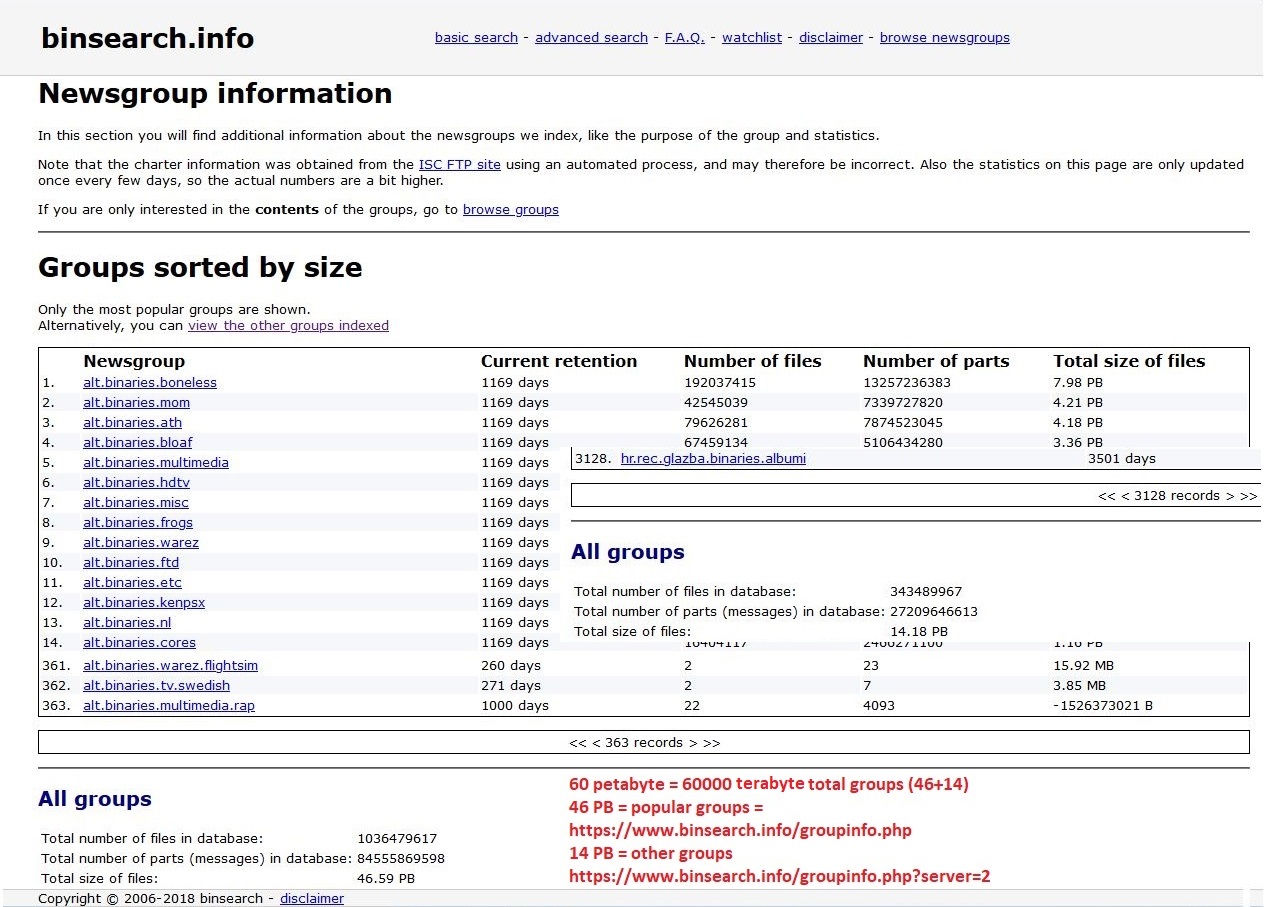

Usenet Newsgroup

A Usenet newsgroup is a repository, usually within the Usenet system, for messages posted from users in different locations using the Internet. Newsgroups are discussion groups and are not devoted to publishing news. They are technically distinct from, but functionally similar to, discussion forums on the World Wide Web. Newsreader software is used to read the content of newsgroups. Before the adoption of the World Wide Web, Usenet newsgroups were among the most popular Internet services, and have retained their noncommercial nature in contrast to the increasingly ad-laden web. In recent years, this form of open discussion on the Internet has lost considerable ground to individually operated browser-accessible forums and big media social networks such as Facebook and Twitter. Communication is facilitated by the Network News Transfer Protocol (NNTP), which allows connection to Usenet servers and data transfer over the internet. Similar to another early (yet still used) pro ...

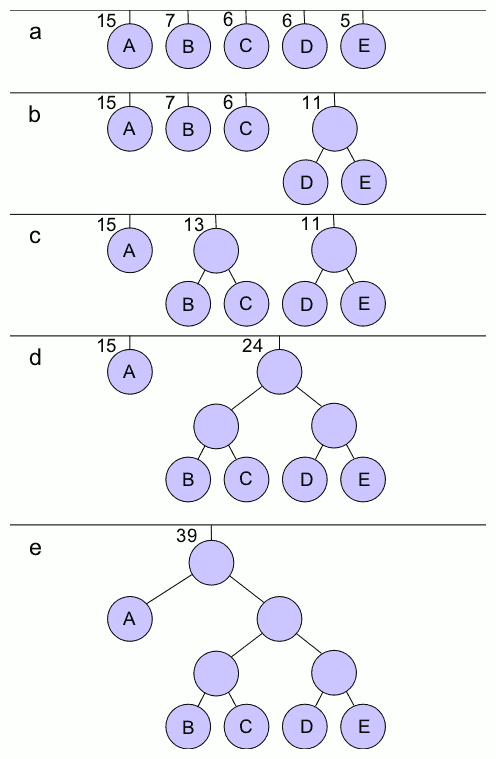

Huffman Coding

In computer science and information theory, a Huffman code is a particular type of optimal prefix code that is commonly used for lossless data compression. The process of finding or using such a code proceeds by means of Huffman coding, an algorithm developed by David A. Huffman while he was a Sc.D. student at MIT, and published in the 1952 paper "A Method for the Construction of Minimum-Redundancy Codes". The output from Huffman's algorithm can be viewed as a variable-length code table for encoding a source symbol (such as a character in a file). The algorithm derives this table from the estimated probability or frequency of occurrence (weight) for each possible value of the source symbol. As in other entropy encoding methods, more common symbols are generally represented using fewer bits than less common symbols. Huffman's method can be efficiently implemented, finding a code in time linear in the number of input weights if these weights are sorted. However, althou ...
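The construction of the code table from symbol weights can be sketched as follows. This version uses a binary heap, so it runs in O(n log n) rather than the linear-time variant for pre-sorted weights mentioned above; the function names are illustrative.

```python
import heapq
from collections import Counter


def huffman_codes(text):
    """Return {symbol: bitstring} derived from symbol frequencies in text."""
    freq = Counter(text)
    if not freq:
        return {}
    if len(freq) == 1:
        # A lone symbol still needs a one-bit code.
        return {next(iter(freq)): "0"}
    # Heap entries are (weight, tiebreak, node); the unique tiebreak keeps
    # the heap from ever comparing two nodes directly.
    heap = [(w, i, sym) for i, (sym, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    tiebreak = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (w1 + w2, tiebreak, (left, right)))
        tiebreak += 1

    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):   # internal node: (left, right)
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:                         # leaf: a source symbol
            codes[node] = prefix

    walk(heap[0][2], "")
    return codes


def encode(text, codes):
    return "".join(codes[ch] for ch in text)


def decode(bits, codes):
    """Decode greedily; this works because no code is a prefix of another."""
    inverse = {code: sym for sym, code in codes.items()}
    out, cur = [], ""
    for b in bits:
        cur += b
        if cur in inverse:
            out.append(inverse[cur])
            cur = ""
    return "".join(out)


codes = huffman_codes("abracadabra")
bits = encode("abracadabra", codes)
```

For "abracadabra" the most frequent symbol, "a", ends up with the shortest code, in line with the remark that more common symbols generally use fewer bits. The exact bit patterns depend on how ties are broken, but the total encoded length does not.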

Endianness

In computing, endianness, also known as byte sex, is the order or sequence of bytes of a word of digital data in computer memory. Endianness is primarily expressed as big-endian (BE) or little-endian (LE). A big-endian system stores the most significant byte of a word at the smallest memory address and the least significant byte at the largest. A little-endian system, in contrast, stores the least significant byte at the smallest address. Bi-endianness is a feature supported by numerous computer architectures that feature switchable endianness in data fetches and stores or for instruction fetches. Other orderings are generically called middle-endian or mixed-endian. Endianness may also be used to describe the order in which the bits are transmitted over a communication channel, e.g., big-endian in a communications channel transmits the most significant bits first. Bit-endianness is seldom used in other contexts. Etymology: Danny Cohen introduced the terms big-endian ...
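The two byte orders can be compared directly with a short sketch. The 32-bit value is an arbitrary example; `int.to_bytes`, `int.from_bytes` and `struct.pack` are standard Python facilities.

```python
import struct

value = 0x0A0B0C0D  # arbitrary 32-bit example word

# Big-endian: most significant byte first (lowest address).
big = value.to_bytes(4, byteorder="big")
# Little-endian: least significant byte first (lowest address).
little = value.to_bytes(4, byteorder="little")

# struct uses ">" for big-endian and "<" for little-endian layouts.
big_struct = struct.pack(">I", value)
little_struct = struct.pack("<I", value)

# Reading big-endian bytes with the wrong byte order reverses the word.
misread = int.from_bytes(big, byteorder="little")
```

Interpreting the same four bytes with the opposite convention yields a different integer, which is why file formats and network protocols have to fix a byte order explicitly.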

Electronic Arts

Electronic Arts Inc. (EA) is an American video game company headquartered in Redwood City, California. Founded in May 1982 by Apple employee Trip Hawkins, the company was a pioneer of the early home computer game industry and promoted the designers and programmers responsible for its games as "software artists." EA published numerous games and some productivity software for personal computers, all of which were developed by external individuals or groups until 1987's Skate or Die!. The company shifted toward internal game studios, often through acquisitions, such as Distinctive Software becoming EA Canada in 1991. Currently, EA develops and publishes games of established franchises, including Battlefield, Need for Speed, The Sims, Medal of Honor, Command & Conquer, Dead Space, Mass Effect, Dragon Age, Army of Two, Apex Legends, and Star Wars, as well as the EA Sports titles FIFA, Madden NFL, NBA Liv ...
