
Joint Entropy

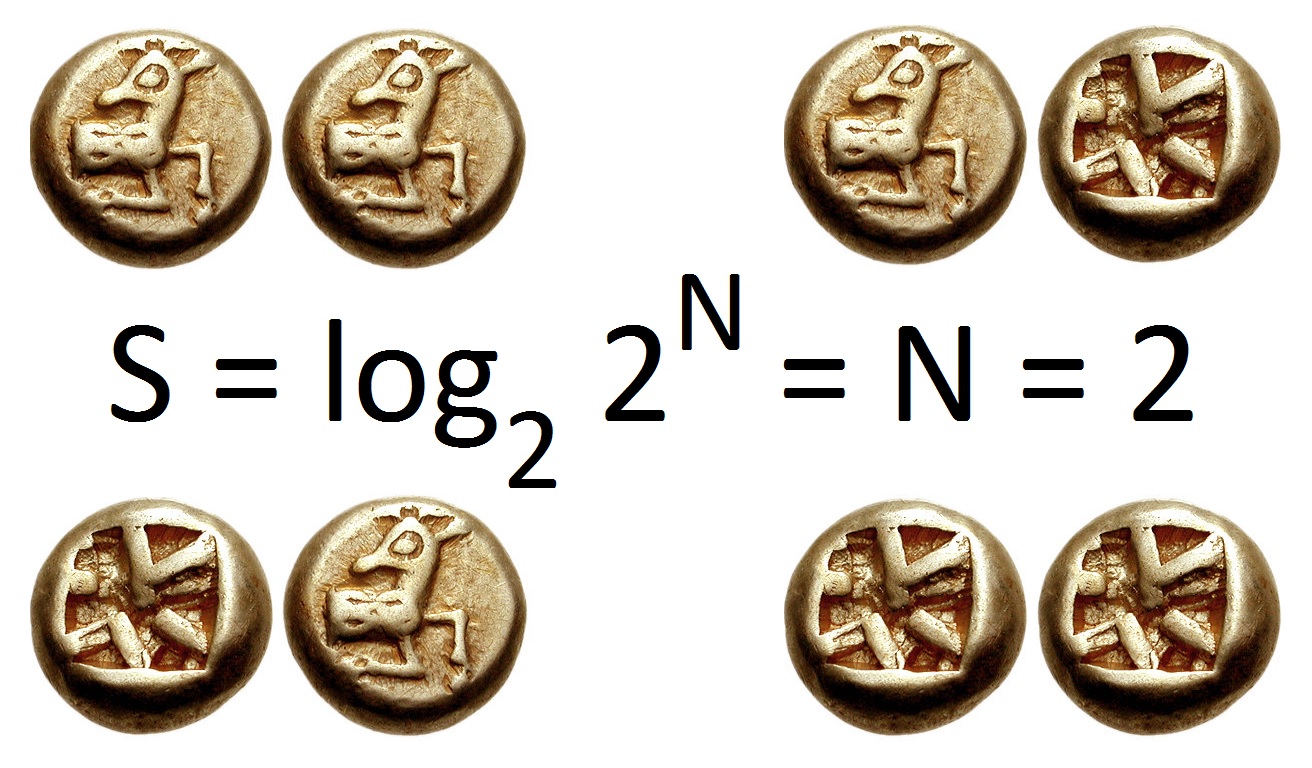

In information theory, joint entropy is a measure of the uncertainty associated with a set of variables.

Definition. The joint Shannon entropy (in bits) of two discrete random variables $X$ and $Y$ with images $\mathcal{X}$ and $\mathcal{Y}$ is defined as

$$\mathrm{H}(X,Y) = -\sum_{x\in\mathcal{X}} \sum_{y\in\mathcal{Y}} P(x,y) \log_2 P(x,y),$$

where $x$ and $y$ are particular values of $X$ and $Y$, respectively, $P(x,y)$ is the joint probability of these values occurring together, and $P(x,y) \log_2 P(x,y)$ is defined to be 0 if $P(x,y)=0$. For more than two random variables $X_1, \ldots, X_n$ this expands to

$$\mathrm{H}(X_1,\ldots,X_n) = -\sum_{x_1} \cdots \sum_{x_n} P(x_1,\ldots,x_n) \log_2 P(x_1,\ldots,x_n),$$

where $x_1,\ldots,x_n$ are particular values of $X_1,\ldots,X_n$, respectively, $P(x_1,\ldots,x_n)$ is the probability of these values occurring together, and $P(x_1,\ldots,x_n) \log_2 P(x_1,\ldots,x_n)$ is defined to be 0 if $P(x_1,\ldots,x_n)=0$.

Properties. Nonnegativity: the joint entropy of a set of random variables is a nonnegative number.

$$\mathrm{H}(X,Y) \geq 0, \qquad \mathrm{H}(X_1,\ldots,X_n) \geq 0$$

Greater than individual entropies: the joint entropy of a set of variables is greater than or eq ...
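As a rough illustration of the two-variable definition above, the following Python sketch computes the joint entropy in bits from a joint probability table. The function name `joint_entropy`, the dictionary representation, and the two-coin example are assumptions made for this illustration only; they do not come from the source text.

```python
import math

def joint_entropy(joint):
    """Joint Shannon entropy in bits of a joint pmf given as {(x, y): P(x, y)}."""
    # Terms with P(x, y) = 0 are defined to contribute 0, so they are skipped.
    return -sum(p * math.log2(p) for p in joint.values() if p > 0)

# Hypothetical example: two independent fair coins, four equally likely pairs.
two_fair_coins = {(x, y): 0.25 for x in "HT" for y in "HT"}
h_xy = joint_entropy(two_fair_coins)
print(h_xy)
```

Skipping zero-probability entries is how the sketch implements the convention that $P(x,y) \log_2 P(x,y)$ is 0 when $P(x,y)=0$.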

Information Theory

Information theory is the scientific study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a die (with six equally likely outcomes). Some other important measures in information theory are mutual informat ...

Entropy (information Theory)

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0,1]$:

$$\mathrm{H}(X) := -\sum_{x\in\mathcal{X}} p(x) \log p(x) = \mathbb{E}[-\log p(X)],$$

where $\Sigma$ denotes the sum over the variable's possible values. The choice of base for $\log$, the logarithm, varies for different applications. Base 2 gives the unit of bits (or "shannons"), while base $e$ gives "natural units" (nats), and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy. Shannon's theory defi ...
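To make the role of the logarithm base concrete, here is a minimal Python sketch of the entropy sum for a finite distribution. The function name `entropy`, the list representation of the distribution, and the coin and die examples are assumptions chosen for illustration.

```python
import math

def entropy(pmf, base=2.0):
    """Entropy of a finite pmf (a list of probabilities) in the given log base."""
    # Outcomes with probability 0 contribute nothing to the sum.
    return -sum(p * math.log(p, base) for p in pmf if p > 0)

fair_coin = [0.5, 0.5]
fair_die = [1 / 6] * 6

coin_bits = entropy(fair_coin)            # base 2: bits (shannons)
die_bits = entropy(fair_die)              # base 2: bits (shannons)
coin_nats = entropy(fair_coin, math.e)    # base e: nats
die_hartleys = entropy(fair_die, 10.0)    # base 10: hartleys
print(coin_bits, die_bits, coin_nats, die_hartleys)
```

Under these assumptions the fair die has higher entropy than the fair coin in every base, since changing the base only rescales the result by a constant factor.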

Random Variables

A random variable (also called random quantity, aleatory variable, or stochastic variable) is a mathematical formalization of a quantity or object which depends on random events. It is a mapping or a function from possible outcomes (e.g., the possible upper sides of a flipped coin such as heads $H$ and tails $T$) in a sample space (e.g., the set $\{H,T\}$) to a measurable space, often the real numbers (e.g., $\{-1,1\}$, in which 1 corresponds to $H$ and $-1$ corresponds to $T$). Informally, randomness typically represents some fundamental element of chance, such as in the roll of a die; it may also represent uncertainty, such as measurement error. However, the interpretation of probability is philosophically complicated, and even in specific cases is not always straightforward. The purely mathematical analysis of random variables is independent of such interpretational difficulties, and can be based upon a rigorous axiomatic setup. In the formal mathematical language of measure theory, a random vari ...

Shannon Entropy

Shannon may refer to:

People
* Shannon (given name)
* Shannon (surname)
* Shannon (American singer), stage name of singer Shannon Brenda Greene (born 1958)
* Shannon (South Korean singer), British-South Korean singer and actress Shannon Arrum Williams (born 1998)
* Shannon, intermittent stage name of English singer-songwriter Marty Wilde (born 1939)
* Claude Shannon (1916-2001), American mathematician, electrical engineer, and cryptographer known as a "father of information theory"

Places

Australia
* Shannon, Tasmania, a locality
* Hundred of Shannon, a cadastral unit in South Australia
* Shannon, a former name for the area named Calomba, South Australia since 1916
* Shannon River (Western Australia)

Canada
* Shannon, New Brunswick, a community
* Shannon, Quebec, a city
* Shannon Bay, former name of Darrell Bay, British Columbia
* Shannon Falls, a waterfall in British Columbia

Ireland
* River Shannon, the longest river in Ireland
** Shannon Cave, a subterranean section o ...

Random Variable

A random variable (also called random quantity, aleatory variable, or stochastic variable) is a mathematical formalization of a quantity or object which depends on random events. It is a mapping or a function from possible outcomes (e.g., the possible upper sides of a flipped coin such as heads $H$ and tails $T$) in a sample space (e.g., the set $\{H,T\}$) to a measurable space, often the real numbers (e.g., $\{-1,1\}$, in which 1 corresponds to $H$ and $-1$ corresponds to $T$). Informally, randomness typically represents some fundamental element of chance, such as in the roll of a die; it may also represent uncertainty, such as measurement error. However, the interpretation of probability is philosophically complicated, and even in specific cases is not always straightforward. The purely mathematical analysis of random variables is independent of such interpretational difficulties, and can be based upon a rigorous axiomatic setup. In the formal mathematical language of measure theory, a random var ...

Joint Probability

Given two random variables that are defined on the same probability space, the joint probability distribution is the corresponding probability distribution on all possible pairs of outputs. The joint distribution can just as well be considered for any given number of random variables. The joint distribution encodes the marginal distributions, i.e. the distributions of each of the individual random variables. It also encodes the conditional probability distributions, which deal with how the outputs of one random variable are distributed when given information on the outputs of the other random variable(s). In the formal mathematical setup of measure theory, the joint distribution is given by the pushforward measure, by the map obtained by pairing together the given random variables, of the sample space's probability measure. In the case of real-valued random variables, the joint distribution, as a particular multivariate distribution, may be expressed by a multivariate cumu ...

Subadditivity

In mathematics, subadditivity is a property of a function that states, roughly, that evaluating the function for the sum of two elements of the domain always returns something less than or equal to the sum of the function's values at each element. There are numerous examples of subadditive functions in various areas of mathematics, particularly norms and square roots. Additive maps are special cases of subadditive functions.

Definitions. A subadditive function is a function $f \colon A \to B$, having a domain $A$ and an ordered codomain $B$ that are both closed under addition, with the following property:

$$\forall x, y \in A, \quad f(x+y) \leq f(x) + f(y).$$

An example is the square root function, having the non-negative real numbers as domain and codomain, since for all $x, y \geq 0$ we have

$$\sqrt{x+y} \leq \sqrt{x} + \sqrt{y}.$$

A sequence $\{a_n\}$, $n \geq 1$, is called subadditive if it satisfies the inequality $a_{n+m} \leq a_n + a_m$ for all $m$ and $n$. This is a special case of subadditive function, if a ...
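The defining inequality can be spot-checked numerically. The sketch below is a finite sanity check rather than a proof: it tests $f(x+y) \leq f(x)+f(y)$ on a handful of sample points. The helper name `is_subadditive_on`, the sample values, and the tolerance are assumptions for illustration.

```python
import itertools
import math

def is_subadditive_on(f, points, tol=1e-12):
    """Check f(x + y) <= f(x) + f(y) for every pair drawn from `points`."""
    # The small tolerance absorbs floating-point rounding in the comparison.
    return all(
        f(x + y) <= f(x) + f(y) + tol
        for x, y in itertools.product(points, repeat=2)
    )

samples = [0.0, 0.5, 1.0, 2.0, 9.0, 100.0]

sqrt_ok = is_subadditive_on(math.sqrt, samples)          # square root passes
square_ok = is_subadditive_on(lambda x: x * x, samples)  # squaring fails, e.g. x = y = 1
print(sqrt_ok, square_ok)
```

Squaring is included only as a contrast: $(1+1)^2 = 4$ exceeds $1^2 + 1^2 = 2$, so it violates the inequality on these samples.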

Statistically Independent

Independence is a fundamental notion in probability theory, as in statistics and the theory of stochastic processes. Two events are independent, statistically independent, or stochastically independent if, informally speaking, the occurrence of one does not affect the probability of occurrence of the other or, equivalently, does not affect the odds. Similarly, two random variables are independent if the realization of one does not affect the probability distribution of the other. When dealing with collections of more than two events, two notions of independence need to be distinguished. The events are called pairwise independent if any two events in the collection are independent of each other, while mutual independence (or collective independence) of events means, informally speaking, that each event is independent of any combination of other events in the collection. A similar notion exists for collections of random variables. Mutual independence implies pairwise independence, ...

Conditional Entropy

In information theory, the conditional entropy quantifies the amount of information needed to describe the outcome of a random variable $Y$ given that the value of another random variable $X$ is known. Here, information is measured in shannons, nats, or hartleys. The *entropy of $Y$ conditioned on $X$* is written as $\mathrm{H}(Y|X)$.

Definition. The conditional entropy of $Y$ given $X$ is defined as

$$\mathrm{H}(Y|X) = -\sum_{x\in\mathcal{X},\, y\in\mathcal{Y}} p(x,y) \log \frac{p(x,y)}{p(x)},$$

where $\mathcal{X}$ and $\mathcal{Y}$ denote the support sets of $X$ and $Y$. Note: here, the convention is that the expression $0 \log 0$ should be treated as being equal to zero. This is because $\lim_{\theta\to 0^+} \theta \log \theta = 0$. Intuitively, notice that by definition of expected value and of conditional proba ...
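The conditional entropy of $Y$ given $X$ can be sketched in Python from a joint probability table, using the standard form $-\sum p(x,y) \log \frac{p(x,y)}{p(x)}$ with base-2 logarithms (shannons). The function name `conditional_entropy`, the dictionary layout, and the two example distributions are assumptions made for this illustration.

```python
import math
from collections import defaultdict

def conditional_entropy(joint):
    """H(Y|X) in bits for a joint pmf given as {(x, y): p(x, y)}."""
    # Marginal p(x), obtained by summing the joint pmf over y.
    p_x = defaultdict(float)
    for (x, _y), p in joint.items():
        p_x[x] += p
    # Zero-probability pairs are skipped, following the 0 log 0 = 0 convention.
    return -sum(
        p * math.log2(p / p_x[x]) for (x, _y), p in joint.items() if p > 0
    )

# Hypothetical examples: Y is an exact copy of X, and Y is independent of X.
copy = {(0, 0): 0.5, (1, 1): 0.5}
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}

h_copy = conditional_entropy(copy)
h_indep = conditional_entropy(independent)
print(h_copy, h_indep)
```

In the copy case, knowing $X$ leaves nothing uncertain about $Y$; in the independent case, knowing $X$ does not reduce the one bit of uncertainty in a fair binary $Y$.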

Mutual Information

In probability theory and information theory, the mutual information (MI) of two random variables is a measure of the mutual dependence between the two variables. More specifically, it quantifies the "amount of information" (in units such as shannons (bits), nats or hartleys) obtained about one random variable by observing the other random variable. The concept of mutual information is intimately linked to that of entropy of a random variable, a fundamental notion in information theory that quantifies the expected "amount of information" held in a random variable. Not limited to real-valued random variables and linear dependence like the correlation coefficient, MI is more general and determines how different the joint distribution of the pair $(X,Y)$ is from the product of the marginal distributions of $X$ and $Y$. MI is the expected value of the pointwise mutual information (PMI). The quantity was defined and analyzed by Claude Shannon in his landmark paper "A Mathemati ...
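As an illustration of comparing the joint distribution with the product of the marginals, the following Python sketch computes mutual information in bits using the standard formula $\sum p(x,y) \log_2 \frac{p(x,y)}{p(x)\,p(y)}$, which is the expected value of the pointwise mutual information. The function name `mutual_information` and the example distributions are assumptions for this sketch.

```python
import math
from collections import defaultdict

def mutual_information(joint):
    """Mutual information in bits for a joint pmf given as {(x, y): p(x, y)}."""
    # Marginals p(x) and p(y), obtained by summing the joint pmf.
    p_x, p_y = defaultdict(float), defaultdict(float)
    for (x, y), p in joint.items():
        p_x[x] += p
        p_y[y] += p
    # Each term is p(x, y) times the pointwise mutual information of (x, y).
    return sum(
        p * math.log2(p / (p_x[x] * p_y[y]))
        for (x, y), p in joint.items()
        if p > 0
    )

# Hypothetical examples: independent fair bits, and Y an exact copy of X.
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}
copy = {(0, 0): 0.5, (1, 1): 0.5}

mi_indep = mutual_information(independent)
mi_copy = mutual_information(copy)
print(mi_indep, mi_copy)
```

When the joint distribution equals the product of the marginals, every log term is zero, so the result is 0; for the copy case, observing one variable reveals the full bit carried by the other.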

Quantum Information Theory

Quantum information is the information of the state of a quantum system. It is the basic entity of study in quantum information theory, and can be manipulated using quantum information processing techniques. Quantum information refers to both the technical definition in terms of Von Neumann entropy and the general computational term. It is an interdisciplinary field that involves quantum mechanics, computer science, information theory, philosophy and cryptography among other fields. Its study is also relevant to disciplines such as cognitive science, psychology and neuroscience. Its main focus is in extracting information from matter at the microscopic scale. Observation in science is one of the most important ways of acquiring information and measurement is required in order to quantify the observation, making this crucial to the scientific method. In quantum mechanics, due to the uncertainty principle, non-commuting observables cannot be precisely mea ...
