
Indicator Variable

In regression analysis, a dummy variable (also known as an indicator variable or just a dummy) is one that takes the value 0 or 1 to indicate the absence or presence of some categorical effect that may be expected to shift the outcome. For example, if we were studying the relationship between gender and income, we could use a dummy variable to represent the gender of each individual in the study. The variable would take on a value of 1 for males and 0 for females. Dummy variables are also commonly used in regression analysis to represent categorical variables that have more than two levels, such as education level or occupation. In this case, multiple dummy variables would be created to represent each level of the variable, and only one dummy variable would take on a value of 1 for each observation. Dummy variables are useful because they allow us to include categorical variables in our analysis, which would otherwise be difficult to include due to their non-numeric nature. They can also h ...
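
As a rough illustration of the multi-level case described above, the following Python sketch builds 0/1 dummy columns from a list of category labels. The function name `dummy_encode` and its `drop_first` option are hypothetical names chosen for this example. Dropping one reference level is an assumption added here (a common practice when the regression also contains an intercept); with `drop_first=False` every level gets its own column, as in the description above.

```python
def dummy_encode(values, drop_first=True):
    """Return (column_names, rows) of 0/1 dummy columns for a categorical list.

    Hypothetical helper for illustration; not taken from any library.
    """
    levels = sorted(set(values))
    # Assumption: the first level (in sorted order) acts as the reference category.
    kept = levels[1:] if drop_first else levels
    rows = [[1 if value == level else 0 for level in kept] for value in values]
    return kept, rows


education = ["primary", "secondary", "tertiary", "secondary"]
columns, dummies = dummy_encode(education)
full_columns, full_dummies = dummy_encode(education, drop_first=False)

for label, row in zip(education, dummies):
    print(label, dict(zip(columns, row)))
```

With the reference level dropped, an observation in the reference category has all dummies equal to 0, so each row contains at most one 1.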

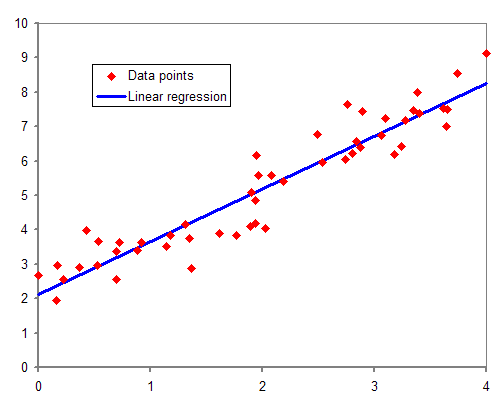

Regression Analysis

In statistical modeling, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable (often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variables (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares computes the unique line (or hyperplane) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression), this allows the researcher to estimate the conditional expectation (or population average value) of the dependent variable when the independent variables take on a given ...
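
The ordinary least squares criterion mentioned above can be sketched for the simplest case of one predictor. This is a minimal illustration using the standard closed-form solution for a fitted line; the function name `ols_line` and the sample data are made up for the example.

```python
def ols_line(x, y):
    """Fit y = intercept + slope * x by ordinary least squares (one predictor)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sums of squares and cross-products around the means.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Made-up data lying exactly on the line y = 1 + 2x.
x = [0.0, 1.0, 2.0, 3.0]
y = [1.0, 3.0, 5.0, 7.0]
intercept, slope = ols_line(x, y)
print(intercept, slope)
```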

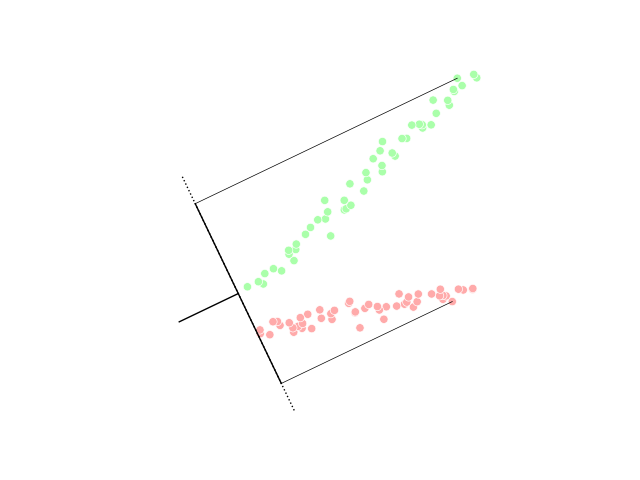

Linear Discriminant Analysis

Linear discriminant analysis (LDA), normal discriminant analysis (NDA), or discriminant function analysis is a generalization of Fisher's linear discriminant, a method used in statistics and other fields, to find a linear combination of features that characterizes or separates two or more classes of objects or events. The resulting combination may be used as a linear classifier, or, more commonly, for dimensionality reduction before later classification. LDA is closely related to analysis of variance (ANOVA) and regression analysis, which also attempt to express one dependent variable as a linear combination of other features or measurements. However, ANOVA uses categorical independent variables and a continuous dependent variable, whereas discriminant analysis has continuous independent variables and a categorical dependent variable (i.e., the class label). Logistic regression and probit regression are more similar to LDA than ANOVA is, as they also explain a categorical var ...

Indicator Function

In mathematics, an indicator function or a characteristic function of a subset of a set is a function that maps elements of the subset to one, and all other elements to zero. That is, if $A$ is a subset of some set $X$, one has $\mathbf{1}_A(x)=1$ if $x\in A$, and $\mathbf{1}_A(x)=0$ otherwise, where $\mathbf{1}_A$ is a common notation for the indicator function. Other common notations are $I_A$ and $\chi_A$. The indicator function of $A$ is the Iverson bracket of the property of belonging to $A$; that is, $\mathbf{1}_A(x) = [x \in A]$. For example, the Dirichlet function is the indicator function of the rational numbers as a subset of the real numbers. Definition: The indicator function of a subset $A$ of a set $X$ is a function $\mathbf{1}_A \colon X \to \{0,1\}$ defined as $\mathbf{1}_A(x) := \begin{cases} 1 & \text{if } x \in A, \\ 0 & \text{if } x \notin A. \end{cases}$ The Iverson bracket provides the equivalent notation $[x \in A]$, to be used instead of $\mathbf{1}_A(x)$. The function $\mathbf{1}_A$ is sometimes denoted by other symbols, such as $I_A$ or $\chi_A$. Nota ...
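
The definition above translates almost directly into code. The sketch below is only an illustration for finite sets; the helper name `indicator` and the example set are assumptions made for this example.

```python
def indicator(subset):
    """Return the indicator function of `subset`: 1 for members, 0 otherwise."""
    members = frozenset(subset)
    return lambda x: 1 if x in members else 0


# Example: A = {2, 3, 5} viewed as a subset of X = {1, ..., 6}.
one_A = indicator({2, 3, 5})
values = [one_A(x) for x in range(1, 7)]
print(values)
```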

Statistical Hypothesis Testing

A statistical hypothesis test is a method of statistical inference used to decide whether the data at hand sufficiently support a particular hypothesis. Hypothesis testing allows us to make probabilistic statements about population parameters. History Early use While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth. Modern origins and early controversy Modern significance testing is largely the product of Karl Pearson (p-value, Pearson's chi-squared test), William Sealy Gosset (Student's t-distribution), and Ronald Fisher ("null hypothesis", analysis of variance, "significance test"), while hypothesis testing was developed by Jerzy Neyman and Egon Pearson (son of Karl). Ronald Fisher began his life in statistics as a Bayesian (Zabell 1992), but Fisher soon grew disenchanted with t ...

Chow Test

The Chow test, proposed by econometrician Gregory Chow in 1960, is a test of whether the true coefficients in two linear regressions on different data sets are equal. In econometrics, it is most commonly used in time series analysis to test for the presence of a structural break at a period which can be assumed to be known a priori (for instance, a major historical event such as a war). In program evaluation, the Chow test is often used to determine whether the independent variables have different impacts on different subgroups of the population. Illustrations First Chow Test Suppose that we model our data as $y_t = a + b x_{1t} + c x_{2t} + \varepsilon$. If we split our data into two groups, then we have $y_t = a_1 + b_1 x_{1t} + c_1 x_{2t} + \varepsilon$ and $y_t = a_2 + b_2 x_{1t} + c_2 x_{2t} + \varepsilon$. The null hypothesis of the Chow test asserts that $a_1=a_2$, $b_1=b_2$, and $c_1=c_2$, and there is the assumption that the model errors $\varepsilon$ are independent and identically distributed ...
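
To make the idea concrete, here is a minimal sketch of the usual Chow F statistic, which compares the residual sum of squares (RSS) of one pooled regression with the combined RSS of separate regressions on the two groups. For brevity it assumes a single regressor plus an intercept (so k = 2 parameters) rather than the two-regressor model above; the function names and the data are made up for illustration.

```python
def rss_simple(x, y):
    """Residual sum of squares of a least-squares line fitted to (x, y)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    return sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))


def chow_f(x1, y1, x2, y2, k=2):
    """Chow statistic: ((RSS_pooled - RSS_split) / k) / (RSS_split / (n1 + n2 - 2k))."""
    rss_pooled = rss_simple(x1 + x2, y1 + y2)
    rss_split = rss_simple(x1, y1) + rss_simple(x2, y2)
    dof = len(x1) + len(x2) - 2 * k
    return ((rss_pooled - rss_split) / k) / (rss_split / dof)


# Made-up data: the first group rises with slope of about 2.
x1, y1 = [1, 2, 3, 4, 5], [2.1, 3.9, 6.2, 7.8, 10.1]
x2 = [6, 7, 8, 9, 10]
y2_break = [5.9, 5.1, 4.2, 2.8, 2.1]      # relationship changes in the second group
y2_same = [12.0, 13.9, 16.1, 18.0, 19.9]  # same relationship continues

f_break = chow_f(x1, y1, x2, y2_break)
f_same = chow_f(x1, y1, x2, y2_same)
print(f_break, f_same)
```

Under the null hypothesis and the error assumptions stated above, this statistic is compared against an F distribution with k and n1 + n2 - 2k degrees of freedom; a large value suggests the coefficients differ between the groups.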

Binary Regression

In statistics, specifically regression analysis, a binary regression estimates a relationship between one or more explanatory variables and a single output binary variable. Generally the probability of the two alternatives is modeled, instead of simply outputting a single value, as in linear regression. Binary regression is usually analyzed as a special case of binomial regression, with a single outcome (n = 1), and one of the two alternatives considered as "success" and coded as 1: the value is the count of successes in 1 trial, either 0 or 1. The most common binary regression models are the logit model (logistic regression) and the probit model (probit regression). Applications Binary regression is principally applied either for prediction (binary classification), or for estimating the association between the explanatory variables and the output. In economics, binary regressions are used to model binary choice. Interpretations Binary regression models can be interpreted as late ...
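
As a small sketch of how a logit model turns explanatory variables into a probability of "success", the snippet below applies the logistic function to a linear predictor with one explanatory variable. The coefficient values are arbitrary assumptions chosen for illustration, not estimates from any data, and the function name is hypothetical.

```python
import math


def success_probability(x, intercept, slope):
    """Logit model: P(y = 1 | x) = 1 / (1 + exp(-(intercept + slope * x)))."""
    linear_predictor = intercept + slope * x
    return 1.0 / (1.0 + math.exp(-linear_predictor))


# Arbitrary illustrative coefficients (assumed, not fitted).
probs = [success_probability(x, intercept=-2.0, slope=1.0) for x in (0.0, 2.0, 4.0)]
print(probs)
```

The output is always strictly between 0 and 1, which is why the probability, rather than the 0/1 outcome itself, is what the model describes.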

Multicollinearity

In statistics, multicollinearity (also collinearity) is a phenomenon in which one predictor variable in a multiple regression model can be linearly predicted from the others with a substantial degree of accuracy. In this situation, the coefficient estimates of the multiple regression may change erratically in response to small changes in the model or the data. Multicollinearity does not reduce the predictive power or reliability of the model as a whole, at least within the sample data set; it only affects calculations regarding individual predictors. That is, a multivariate regression model with collinear predictors can indicate how well the entire bundle of predictors predicts the outcome variable, but it may not give valid results about any individual predictor, or about which predictors are redundant with respect to others. Note that in statements of the assumptions underlying regression analyses such as ordinary least squares, the phrase "no multicollinearity" usually refer ...
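
One common diagnostic for this situation, not named in the excerpt above, is the variance inflation factor (VIF). The sketch below covers only the two-predictor case, where the VIF of either predictor reduces to 1 / (1 - r²), with r the sample correlation between the two predictors. The function names and data are assumptions for illustration.

```python
import math


def pearson_r(a, b):
    """Sample correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    sab = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    saa = sum((ai - mean_a) ** 2 for ai in a)
    sbb = sum((bi - mean_b) ** 2 for bi in b)
    return sab / math.sqrt(saa * sbb)


def vif_two_predictors(x1, x2):
    """VIF when the model has exactly two predictors: 1 / (1 - r^2)."""
    r = pearson_r(x1, x2)
    return 1.0 / (1.0 - r * r)


x1 = [1, 2, 3, 4, 5]
x2_near_copy = [1.1, 1.9, 3.2, 3.9, 5.1]  # almost a linear function of x1
x2_unrelated = [1, -2, 2, -2, 1]          # uncorrelated with x1 in this sample

vif_high = vif_two_predictors(x1, x2_near_copy)
vif_low = vif_two_predictors(x1, x2_unrelated)
print(vif_high, vif_low)
```

A VIF near 1 indicates little linear overlap between the predictors, while a large value signals that one predictor is nearly a linear function of the other.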

Constant Term

In mathematics, a constant term is a term in an algebraic expression that does not contain any variables and therefore is constant. For example, in the quadratic polynomial $x^2 + 2x + 3$, the 3 is a constant term. After like terms are combined, an algebraic expression will have at most one constant term. Thus, it is common to speak of the quadratic polynomial $ax^2+bx+c$, where $x$ is the variable, as having a constant term of $c$. If the constant term is 0, then it will conventionally be omitted when the quadratic is written out. Any polynomial written in standard form has a unique constant term, which can be considered a coefficient of $x^0$. In particular, the constant term will always be the lowest degree term of the polynomial. This also applies to multivariate polynomials. For example, the polynomial $x^2+2xy+y^2-2x+2y-4$ has a constant term of −4, which can be considered to be the coefficient of $x^0y^0$, where the variables are eliminated by being exponentiated to ...

Cross-sectional Data

Cross-sectional data, or a cross section of a study population, in statistics and econometrics, is a type of data collected by observing many subjects (such as individuals, firms, countries, or regions) at a single point or period of time. The analysis might also have no regard to differences in time. Analysis of cross-sectional data usually consists of comparing the differences among selected subjects. For example, if we want to measure current obesity levels in a population, we could draw a sample of 1,000 people randomly from that population (also known as a cross section of that population), measure their weight and height, and calculate what percentage of that sample is categorized as obese. This cross-sectional sample provides us with a snapshot of that population at that one point in time. Note that we do not know based on one cross-sectional sample if obesity is increasing or decreasing; we can only describe the current proportion. Cross-sectional data differs from time ...

Coefficient Of Determination

In statistics, the coefficient of determination, denoted $R^2$ or $r^2$ and pronounced "R squared", is the proportion of the variation in the dependent variable that is predictable from the independent variable(s). It is a statistic used in the context of statistical models whose main purpose is either the prediction of future outcomes or the testing of hypotheses, on the basis of other related information. It provides a measure of how well observed outcomes are replicated by the model, based on the proportion of total variation of outcomes explained by the model. There are several definitions of $R^2$ that are only sometimes equivalent. One class of such cases includes that of simple linear regression where $r^2$ is used instead of $R^2$. When only an intercept is included, then $r^2$ is simply the square of the sample correlation coefficient (i.e., $r$) between the observed outcomes and the observed predictor values. If additional regressors are included, $R^2$ ...
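
One widely used definition, assumed here because the excerpt notes that several exist, is $R^2 = 1 - SS_{\text{res}} / SS_{\text{tot}}$, where $SS_{\text{res}}$ is the sum of squared residuals and $SS_{\text{tot}}$ is the total sum of squares around the mean of the observed outcomes. The sketch below implements that definition; the function name and data are made up for illustration.

```python
def r_squared(observed, predicted):
    """R^2 = 1 - SS_res / SS_tot for observed outcomes and model predictions."""
    mean_obs = sum(observed) / len(observed)
    ss_tot = sum((y - mean_obs) ** 2 for y in observed)
    ss_res = sum((y - p) ** 2 for y, p in zip(observed, predicted))
    return 1.0 - ss_res / ss_tot


observed = [1.0, 2.0, 3.0, 4.0]
perfect = r_squared(observed, observed)      # predictions reproduce the data
mean_only = r_squared(observed, [2.5] * 4)   # always predict the sample mean
print(perfect, mean_only)
```

A model that reproduces the data exactly scores 1, while a model that always predicts the sample mean scores 0 under this definition.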

Fixed Effects Estimator

In statistics, a fixed effects model is a statistical model in which the model parameters are fixed or non-random quantities. This is in contrast to random effects models and mixed models in which all or some of the model parameters are random variables. In many applications including econometrics and biostatistics a fixed effects model refers to a regression model in which the group means are fixed (non-random) as opposed to a random effects model in which the group means are a random sample from a population. Generally, data can be grouped according to several observed factors. The group means could be modeled as fixed or random effects for each grouping. In a fixed effects model each group mean is a group-specific fixed quantity. In panel data where longitudinal observations exist for the same subject, fixed effects represent the subject-specific means. In panel data analysis the term fixed effects estimator (also known as the within estimator) is used to refer to an estimator ...
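
A minimal sketch of the within estimator for a single regressor: subtract each subject's own mean from its x and y values, then fit a slope through the demeaned data. This is an illustrative simplification (one regressor, made-up data, hypothetical function name), not a full panel-data implementation.

```python
from collections import defaultdict


def within_slope(groups, x, y):
    """Slope from regressing group-demeaned y on group-demeaned x."""
    totals = defaultdict(lambda: [0.0, 0.0, 0])  # per group: sum of x, sum of y, count
    for g, xi, yi in zip(groups, x, y):
        totals[g][0] += xi
        totals[g][1] += yi
        totals[g][2] += 1
    numerator = 0.0
    denominator = 0.0
    for g, xi, yi in zip(groups, x, y):
        sum_x, sum_y, count = totals[g]
        dx = xi - sum_x / count  # deviation from the subject-specific mean of x
        dy = yi - sum_y / count  # deviation from the subject-specific mean of y
        numerator += dx * dy
        denominator += dx * dx
    return numerator / denominator


# Made-up panel: subject A follows y = 10 + 2x, subject B follows y = 0 + 2x.
groups = ["A", "A", "A", "B", "B", "B"]
x = [1, 2, 3, 4, 5, 6]
y = [12, 14, 16, 8, 10, 12]
slope = within_slope(groups, x, y)
print(slope)
```

Because demeaning removes each subject's own level, the common slope is recovered even though the two subjects have very different intercepts.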