
Generalized Multivariate Log-gamma Distribution

In probability theory and statistics, the generalized multivariate log-gamma (G-MVLG) distribution is a multivariate distribution introduced by Demirhan and Hamurkaroglu in 2011. The G-MVLG is a flexible distribution: its skewness and kurtosis are well controlled by its parameters, which makes it possible to control the dispersion of the distribution. Because of this property, the distribution is effectively used as a joint prior distribution in Bayesian analysis, especially when the likelihood is not from the location-scale family of distributions, such as the normal distribution. Joint probability density function: If \boldsymbol{Y} \sim \mathrm{G}\text{-}\mathrm{MVLG}(\delta,\nu,\boldsymbol{\lambda},\boldsymbol{\mu}), the joint probability density function (pdf) of \boldsymbol{Y}=(Y_1,\dots,Y_k) is given as the following:
: f(y_1,\dots,y_k)= \delta^{\nu}\sum_{n=0}^{\infty} \frac{(1-\delta)^{n}\prod_{i=1}^{k}\mu_i\lambda_i^{-\nu-n}}{[\Gamma(\nu+n)]^{k-1}\,\Gamma(\nu)\,n!} \exp\bigg\{(\nu+n)\sum_{i=1}^{k}\mu_i y_i-\sum_{i=1}^{k}\frac{1}{\lambda_i}\exp\{\mu_i y_i\}\bigg\},
where \boldsymbol{y}\in \mathbb{R}^{k}, \nu>0, \lambda_{j}>0, \mu_{j}>0 for j=1,\dots,k, \delta=\det(\boldsymbol{\Omega})^{\frac{1}{k-1}}, and
: \boldsymbol{\Omega}=\left( \begi ...
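To make the series form of the joint density concrete, here is a minimal Python sketch that evaluates a truncated version of the sum in log-space. It assumes the series representation attributed to Demirhan and Hamurkaroglu (2011), restated in the docstring; the function name `gmvlg_pdf` and the truncation parameter `terms` are hypothetical names chosen for illustration, and `delta` is passed in directly rather than computed from a correlation matrix.

```python
import math


def gmvlg_pdf(y, delta, nu, lam, mu, terms=200):
    """Truncated-series evaluation of the G-MVLG joint density (sketch).

    Assumed form (not taken from any library):
      f(y) = delta^nu * sum_{n>=0} (1-delta)^n * prod_i mu_i * lam_i^(-nu-n)
             / (Gamma(nu+n)^(k-1) * Gamma(nu) * n!)
             * exp((nu+n) * sum_i mu_i*y_i - sum_i exp(mu_i*y_i) / lam_i)
    with 0 < delta <= 1, nu > 0, lam_i > 0, mu_i > 0.
    """
    k = len(y)
    s_lin = sum(m * v for m, v in zip(mu, y))
    s_exp = sum(math.exp(m * v) / l for m, v, l in zip(mu, y, lam))
    log_mu = sum(math.log(m) for m in mu)
    log_lam = sum(math.log(l) for l in lam)

    total = 0.0
    for n in range(terms):
        if n > 0 and delta >= 1.0:
            break  # with delta = 1 only the n = 0 term is non-zero
        log_weight = n * math.log1p(-delta) if n > 0 else 0.0
        log_term = (
            log_weight
            + log_mu
            - (nu + n) * log_lam
            - (k - 1) * math.lgamma(nu + n)
            - math.lgamma(nu)
            - math.lgamma(n + 1)
            + (nu + n) * s_lin
            - s_exp
        )
        total += math.exp(log_term)
    return delta ** nu * total


# Example: a bivariate density value with moderate dependence (delta = 0.5).
value = gmvlg_pdf((0.0, 0.0), delta=0.5, nu=2.0, lam=(1.0, 1.0), mu=(1.0, 1.0))
```

Working in log-space keeps the gamma-function factors from overflowing for larger `n`. When `delta` equals 1 the sum collapses to its first term, which is a product of independent univariate log-gamma densities.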

Probability Theory

Probability theory is the branch of mathematics concerned with probability. Although there are several different probability interpretations, probability theory treats the concept in a rigorous mathematical manner by expressing it through a set of axioms. Typically these axioms formalise probability in terms of a probability space, which assigns a measure taking values between 0 and 1, termed the probability measure, to a set of outcomes called the sample space. Any specified subset of the sample space is called an event. Central subjects in probability theory include discrete and continuous random variables, probability distributions, and stochastic processes (which provide mathematical abstractions of non-deterministic or uncertain processes or measured quantities that may either be single occurrences or evolve over time in a random fashion). Although it is not possible to perfectly p ...

Correlation

In statistics, correlation or dependence is any statistical relationship, whether causal or not, between two random variables or bivariate data. Although in the broadest sense "correlation" may indicate any type of association, in statistics it usually refers to the degree to which a pair of variables are ''linearly'' related. Familiar examples of dependent phenomena include the correlation between the height of parents and their offspring, and the correlation between the price of a good and the quantity the consumers are willing to purchase, as depicted in the so-called demand curve. Correlations are useful because they can indicate a predictive relationship that can be exploited in practice. For example, an electrical utility may produce less power on a mild day based on the correlation between electricity demand and weather. In this example, there is a causal relationship, because extreme weather causes people to use more electricity for heating or cooling. H ...
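The idea that correlation measures ''linear'' association can be illustrated with the Pearson correlation coefficient. The sketch below is a plain-Python illustration, not part of the excerpt; the function name `pearson_r` and the sample data are assumptions made up for the example.

```python
import math


def pearson_r(xs, ys):
    """Sample Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant data")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data: an exactly linear relationship y = 2x + 1.
xs = [-2, -1, 0, 1, 2]
linear = [2 * x + 1 for x in xs]
r_linear = pearson_r(xs, linear)

# Hypothetical data: y = x^2 depends on x completely, but not linearly.
squares = [x * x for x in xs]
r_squares = pearson_r(xs, squares)
```

The second data set is a reminder that a coefficient near zero rules out a linear relationship only; the variables can still be strongly dependent.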

Type I Extreme Value Distribution

In probability theory and statistics, the Gumbel distribution (also known as the type-I generalized extreme value distribution) is used to model the distribution of the maximum (or the minimum) of a number of samples of various distributions. This distribution might be used to represent the distribution of the maximum level of a river in a particular year if there was a list of maximum values for the past ten years. It is useful in predicting the chance that an extreme earthquake, flood or other natural disaster will occur. The potential applicability of the Gumbel distribution to represent the distribution of maxima relates to extreme value theory, which indicates that it is likely to be useful if the distribution of the underlyin ...

Gumbel Distribution

In probability theory and statistics, the Gumbel distribution (also known as the type-I generalized extreme value distribution) is used to model the distribution of the maximum (or the minimum) of a number of samples of various distributions. This distribution might be used to represent the distribution of the maximum level of a river in a particular year if there was a list of maximum values for the past ten years. It is useful in predicting the chance that an extreme earthquake, flood or other natural disaster will occur. The potential applicability of the Gumbel distribution to represent the distribution of maxima relates to extreme value theory, which indicates that it is likely to be useful if the distribution of the underlying sample data is of the normal or exponential type. ''This article uses the Gumbel distribution to model the distribution of the maximum value''. ''To model the minimum value, use the negative of the original values.'' The Gumbel distribution is a pa ...
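The link between maxima and the Gumbel distribution can be checked numerically for exponential data. The sketch below compares the exact distribution of the maximum of n independent unit-rate exponential samples, shifted by ln n, with the standard Gumbel CDF exp(-exp(-x)). The function names are hypothetical, and the choice of exponential samples is an assumption made for the example.

```python
import math


def gumbel_cdf(x, mu=0.0, beta=1.0):
    """CDF of the Gumbel (maximum) distribution with location mu and scale beta."""
    return math.exp(-math.exp(-(x - mu) / beta))


def shifted_max_exponential_cdf(x, n):
    """P(max(X_1..X_n) - ln(n) <= x) for independent Exp(1) samples.

    Since P(X_i <= t) = 1 - exp(-t), the maximum satisfies
    P(max <= x + ln n) = (1 - exp(-x) / n) ** n.
    """
    base = 1.0 - math.exp(-x) / n
    return max(base, 0.0) ** n


# The gap to the Gumbel CDF shrinks as the sample size grows.
gaps = [
    abs(shifted_max_exponential_cdf(1.0, n) - gumbel_cdf(1.0))
    for n in (10, 1_000, 1_000_000)
]
```

Following the note in the text, the same functions can be applied to minima by negating the data first.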

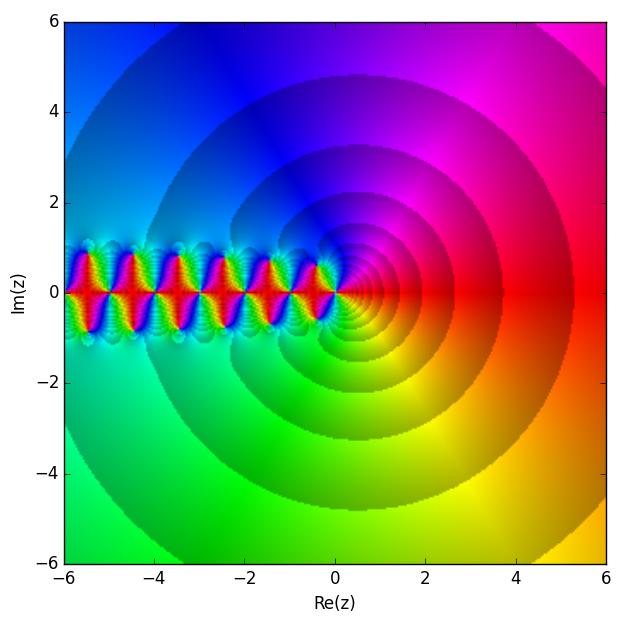

Trigamma Function

In mathematics, the trigamma function, denoted \psi_1(z) or \psi^{(1)}(z), is the second of the polygamma functions, and is defined by
: \psi_1(z) = \frac{d^2}{dz^2} \ln\Gamma(z).
It follows from this definition that
: \psi_1(z) = \frac{d}{dz} \psi(z)
where \psi(z) is the digamma function. It may also be defined as the sum of the series
: \psi_1(z) = \sum_{n=0}^{\infty}\frac{1}{(z+n)^2},
making it a special case of the Hurwitz zeta function
: \psi_1(z) = \zeta(2,z).
Note that the last two formulas are valid when 1 - z is not a natural number. Calculation: A double integral representation, as an alternative to the ones given above, may be derived from the series representation:
: \psi_1(z) = \int_0^1\!\!\int_x^1\frac{x^{z-1}}{y(1-x)}\,dy\,dx
using the formula for the sum of a geometric series. Integration over y yields:
: \psi_1(z) = -\int_0^1\frac{x^{z-1}\ln x}{1-x}\,dx
An asymptotic expansion as a Laurent series is
: \psi_1(z) = \frac{1}{z} + \frac{1}{2z^2} + \sum_{k=1}^{\infty}\frac{B_{2k}}{z^{2k+1}} = \sum_{k=0}^{\infty}\frac{B_k}{z^{k+1}}
if we have chosen B_1 = \tfrac{1}{2}, i.e. the Bernoulli numbers of the second kind. Recurrence and reflection ...
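One way to see how the series and the asymptotic expansion fit together is to combine them. The sketch below sums the first terms 1/(z+n)^2 of the series until the argument is large, then applies a few terms of the asymptotic expansion (with B_2 = 1/6, B_4 = -1/30, B_6 = 1/42). It assumes a real argument z > 0; the function name `trigamma` and the `shift` threshold are illustrative choices.

```python
import math


def trigamma(z, shift=10.0):
    """Approximate the trigamma function for real z > 0."""
    if z <= 0:
        raise ValueError("this sketch only handles z > 0")
    total = 0.0
    # psi_1(z) = 1/z^2 + psi_1(z + 1): peel off leading series terms.
    while z < shift:
        total += 1.0 / (z * z)
        z += 1.0
    # Asymptotic tail: 1/z + 1/(2 z^2) + 1/(6 z^3) - 1/(30 z^5) + 1/(42 z^7)
    inv = 1.0 / z
    inv2 = inv * inv
    total += inv + 0.5 * inv2 + inv * inv2 * (1.0 / 6.0 - inv2 * (1.0 / 30.0 - inv2 / 42.0))
    return total


# Known special value: psi_1(1) = zeta(2) = pi^2 / 6.
error_at_one = abs(trigamma(1.0) - math.pi ** 2 / 6.0)
```

Shifting to a larger argument before applying the expansion matters because the expansion is only accurate for large z.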

Digamma Function

In mathematics, the digamma function is defined as the logarithmic derivative of the gamma function:
: \psi(x)=\frac{d}{dx}\ln\big(\Gamma(x)\big)=\frac{\Gamma'(x)}{\Gamma(x)}\sim\ln{x}-\frac{1}{2x}.
It is the first of the polygamma functions. It is strictly increasing and strictly concave on (0,\infty). The digamma function is often denoted as \psi_0(x), \psi^{(0)}(x) or Ϝ (the uppercase form of the archaic Greek consonant digamma meaning double-gamma). Relation to harmonic numbers: The gamma function obeys the equation
: \Gamma(z+1)=z\Gamma(z).
Taking the derivative with respect to z gives:
: \Gamma'(z+1)=z\Gamma'(z)+\Gamma(z)
Dividing by \Gamma(z+1) or the equivalent z\Gamma(z) gives:
: \frac{\Gamma'(z+1)}{\Gamma(z+1)}=\frac{\Gamma'(z)}{\Gamma(z)}+\frac{1}{z}
or:
: \psi(z+1)=\psi(z)+\frac{1}{z}
Since the harmonic numbers are defined for positive integers n as
: H_n=\sum_{k=1}^n \frac 1 k,
the digamma function is related to them by
: \psi(n)=H_{n-1}-\gamma,
where H_0=0, and \gamma is the Euler–Mascheroni constant. For half-integer arguments the digamma function takes the values : \psi \left(n+\tfrac1 ...
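The recurrence \psi(z+1)=\psi(z)+1/z and the harmonic-number relation can be checked numerically. The following sketch shifts the argument upward with the recurrence and then uses the leading terms of the standard asymptotic expansion ln x - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6). It assumes a real argument x > 0; the names `digamma`, `harmonic` and `shift` are illustrative.

```python
import math

EULER_GAMMA = 0.5772156649015329  # Euler–Mascheroni constant


def digamma(x, shift=10.0):
    """Approximate the digamma function for real x > 0."""
    if x <= 0:
        raise ValueError("this sketch only handles x > 0")
    total = 0.0
    # psi(x) = psi(x + 1) - 1/x: move the argument into the asymptotic regime.
    while x < shift:
        total -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    total += math.log(x) - 0.5 / x - inv2 * (1.0 / 12.0 - inv2 * (1.0 / 120.0 - inv2 / 252.0))
    return total


def harmonic(n):
    """n-th harmonic number H_n, with H_0 = 0."""
    return sum(1.0 / k for k in range(1, n + 1))


# psi(n) should agree with H_{n-1} - gamma for positive integers n.
differences = [abs(digamma(n) - (harmonic(n - 1) - EULER_GAMMA)) for n in range(1, 8)]
```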

Moment Generating Function

In probability theory and statistics, the moment-generating function of a real-valued random variable is an alternative specification of its probability distribution. Thus, it provides the basis of an alternative route to analytical results compared with working directly with probability density functions or cumulative distribution functions. There are particularly simple results for the moment-generating functions of distributions defined by the weighted sums of random variables. However, not all random variables have moment-generating functions. As its name implies, the moment-generating function can be used to compute a distribution’s moments: the ''n''th moment about 0 is the ''n''th derivative of the moment-generating function, evaluated at 0. In addition to real-valued distributions (univariate distributions), moment-generating functions can be defined for vector- or matrix-valued random variables, and can even be extended to more general cases. The moment-generating fun ...
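As a small numerical illustration of moments as derivatives at 0, the sketch below uses the moment-generating function of a fair six-sided die, M(t) = (1/6) \sum_{k=1}^{6} e^{kt}, and approximates its first two derivatives at 0 with central finite differences. The die example, the function names and the step size `h` are assumptions chosen for the illustration.

```python
import math


def die_mgf(t):
    """Moment-generating function E[exp(t X)] of a fair six-sided die."""
    return sum(math.exp(k * t) for k in range(1, 7)) / 6.0


def first_derivative_at_zero(f, h=1e-3):
    """Central-difference estimate of f'(0)."""
    return (f(h) - f(-h)) / (2.0 * h)


def second_derivative_at_zero(f, h=1e-3):
    """Central-difference estimate of f''(0)."""
    return (f(h) - 2.0 * f(0.0) + f(-h)) / (h * h)


# First and second moments about 0, estimated from the MGF.
mean_estimate = first_derivative_at_zero(die_mgf)
second_moment_estimate = second_derivative_at_zero(die_mgf)

# Direct computation for comparison: E[X] = 3.5 and E[X^2] = 91/6.
mean_exact = sum(range(1, 7)) / 6.0
second_moment_exact = sum(k * k for k in range(1, 7)) / 6.0
```

Finite differences are used here only to keep the example dependency-free; a symbolic or automatic differentiation tool would give the derivatives exactly.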

Absolute Value

In mathematics, the absolute value or modulus of a real number x, denoted |x|, is the non-negative value of x without regard to its sign. Namely, |x| = x if x is a positive number, |x| = -x if x is negative (in which case negating x makes -x positive), and |0| = 0. For example, the absolute value of 3 is 3, and the absolute value of −3 is also 3. The absolute value of a number may be thought of as its distance from zero. Generalisations of the absolute value for real numbers occur in a wide variety of mathematical settings. For example, an absolute value is also defined for the complex numbers, the quaternions, ordered rings, fields and vector spaces. The absolute value is closely related to the notions of magnitude, distance, and norm in various mathematical and physical contexts. Terminology and notation: In 1806, Jean-Robert Argand introduced the term ''module'', meaning ''unit of measure'' in French, specifically for the ''complex'' absolute value, Oxford English Dictionary, Draft Revision, Ju ...

Determinant

In mathematics, the determinant is a scalar value that is a function of the entries of a square matrix. It characterizes some properties of the matrix and the linear map represented by the matrix. In particular, the determinant is nonzero if and only if the matrix is invertible and the linear map represented by the matrix is an isomorphism. The determinant of a product of matrices is the product of their determinants (the preceding property is a corollary of this one). The determinant of a matrix A is denoted \det(A), \det A, or |A|. The determinant of a 2 × 2 matrix is
: \begin{vmatrix} a & b\\c & d \end{vmatrix}=ad-bc,
and the determinant of a 3 × 3 matrix is
: \begin{vmatrix} a & b & c \\ d & e & f \\ g & h & i \end{vmatrix}= aei + bfg + cdh - ceg - bdi - afh.
The determinant of an n × n matrix can be defined in several equivalent ways. Leibniz formula expresses the determinant as a sum of signed products of matrix entries such that each summand is the product of n different entries, and the number of these summands is n!, the factorial o ...
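The Leibniz formula can be written out directly as a sum over permutations. The sketch below is a plain-Python illustration for small matrices given as lists of rows; the function names are hypothetical, and the approach is shown for clarity only, since the n! summands make it impractical beyond small n.

```python
from itertools import permutations


def permutation_sign(perm):
    """Return +1 for an even permutation and -1 for an odd one."""
    inversions = sum(
        1
        for i in range(len(perm))
        for j in range(i + 1, len(perm))
        if perm[i] > perm[j]
    )
    return -1 if inversions % 2 else 1


def det_leibniz(matrix):
    """Determinant as a signed sum of products, one entry per row and column."""
    n = len(matrix)
    if any(len(row) != n for row in matrix):
        raise ValueError("matrix must be square")
    total = 0
    for perm in permutations(range(n)):
        product = permutation_sign(perm)
        for row, col in enumerate(perm):
            product *= matrix[row][col]
        total += product
    return total


def matmul(a, b):
    """Product of two square matrices of the same size."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


A = [[1, 2], [3, 4]]
B = [[0, 5], [6, 7]]
det_of_product = det_leibniz(matmul(A, B))
product_of_dets = det_leibniz(A) * det_leibniz(B)
```

For a 2 × 2 matrix the two permutations give exactly ad - bc, and for a 3 × 3 matrix the six permutations give the six terms of the expanded formula.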

Probability Density Function

In probability theory, a probability density function (PDF), or density of a continuous random variable, is a function whose value at any given sample (or point) in the sample space (the set of possible values taken by the random variable) can be interpreted as providing a ''relative likelihood'' that the value of the random variable would be close to that sample. Probability density is the probability per unit length; in other words, while the ''absolute likelihood'' for a continuous random variable to take on any particular value is 0 (since there is an infinite set of possible values to begin with), the value of the PDF at two different samples can be used to infer, in any particular draw of the random variable, how much more likely it is that the random variable would be close to one sample compared to the other sample. In a more precise sense, the PDF is used to specify the probability of the random variable falling ''within a particular range of values'', as opposed ...
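The statement that a PDF specifies the probability of falling within a range can be made concrete by integrating the density over that range. The sketch below integrates the standard normal density with composite Simpson's rule and compares the result with the closed form based on the error function. The choice of the normal distribution, the function names and the step count are assumptions made for the example.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of the normal distribution with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def probability_in_range(pdf, a, b, steps=1000):
    """Integrate pdf over [a, b] with composite Simpson's rule (steps must be even)."""
    if steps % 2:
        raise ValueError("steps must be even")
    h = (b - a) / steps
    total = pdf(a) + pdf(b)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(a + i * h)
    return total * h / 3.0


# Probability that a standard normal variable lies within one standard deviation.
p_numeric = probability_in_range(normal_pdf, -1.0, 1.0)
p_closed_form = math.erf(1.0 / math.sqrt(2.0))

# The density itself is not a probability: the ratio of two density values
# only says how much more likely the variable is to land near one point than another.
relative_likelihood = normal_pdf(0.0) / normal_pdf(2.0)
```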

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample to the population as a whole. An ex ...