
Dirichlet-multinomial Distribution

In probability theory and statistics, the Dirichlet-multinomial distribution is a family of discrete multivariate probability distributions on a finite support of non-negative integers. It is also called the Dirichlet compound multinomial distribution (DCM) or multivariate Pólya distribution (after George Pólya). It is a compound probability distribution, where a probability vector p is drawn from a Dirichlet distribution with parameter vector \boldsymbol{\alpha}, and an observation is then drawn from a multinomial distribution with probability vector p and number of trials n. The Dirichlet parameter vector captures the prior belief about the situation and can be seen as a pseudocount: observations of each outcome that occur before the actual data is collected. The compounding corresponds to a Pólya urn scheme. It is frequently encountered in Bayesian statistics, machine learning, empirical Bayes methods and classical statistics as an overdispersed multinomial distribution. It reduces to ...
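The two-stage compounding described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `sample_dirichlet_multinomial` is hypothetical, the Dirichlet draw is built by normalising independent Gamma variates (a standard construction, not something the entry specifies), and the parameter values are arbitrary.

```python
import random


def sample_dirichlet_multinomial(alpha, n, rng):
    """Draw one count vector: p ~ Dirichlet(alpha), then counts ~ Multinomial(n, p).

    `alpha` is a list of positive pseudocounts, `n` the number of trials.
    (Hypothetical helper for illustration only.)
    """
    # Step 1: Dirichlet draw via normalised Gamma(alpha_k, 1) variates.
    gammas = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(gammas)
    p = [g / total for g in gammas]

    # Step 2: multinomial draw as n independent categorical trials under p.
    counts = [0] * len(alpha)
    for k in rng.choices(range(len(alpha)), weights=p, k=n):
        counts[k] += 1
    return counts


rng = random.Random(0)  # fixed seed so repeated runs give the same draw
counts = sample_dirichlet_multinomial([1.0, 2.0, 3.0], n=10, rng=rng)
print(counts, sum(counts))
```

Because p is redrawn for every observation, repeated calls show more spread across categories than a multinomial with a fixed p would, which is the overdispersion the entry mentions.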

Integer

An integer is the number zero (0), a positive natural number (1, 2, 3, etc.) or a negative integer with a minus sign (−1, −2, −3, etc.). The negative numbers are the additive inverses of the corresponding positive numbers. In the language of mathematics, the set of integers is often denoted by the boldface \mathbf{Z} or blackboard bold \mathbb{Z}. The set of natural numbers \mathbb{N} is a subset of \mathbb{Z}, which in turn is a subset of the set of all rational numbers \mathbb{Q}, itself a subset of the real numbers \mathbb{R}. Like the natural numbers, \mathbb{Z} is countably infinite. An integer may be regarded as a real number that can be written without a fractional component. For example, 21, 4, 0, and −2048 are integers, while 9.75 is not. The integers form the smallest group and the smallest ring containing the natural numbers. In algebraic number theory, the integers are sometimes qualified as rational integers to distinguish them from the more general algebraic integers ...

Beta Distribution

In probability theory and statistics, the beta distribution is a family of continuous probability distributions defined on the interval [0, 1] in terms of two positive parameters, denoted by alpha (α) and beta (β), that appear as exponents of the random variable and control the shape of the distribution. The beta distribution has been applied to model the behavior of random variables limited to intervals of finite length in a wide variety of disciplines. The beta distribution is a suitable model for the random behavior of percentages and proportions. In Bayesian inference, the beta distribution is the conjugate prior probability distribution for the Bernoulli, binomial, negative binomial and geometric distributions. The formulation of the beta distribution discussed here is also known as the beta distribution of the first kind, whereas "beta distribution of the second kind" is an alternative name for the beta prime distribution. The generalization to mult ...
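Conjugacy with the Bernoulli and binomial likelihoods means the posterior is again a beta distribution whose parameters are the prior parameters plus the observed success and failure counts. A minimal sketch, assuming a Beta(α, β) prior and hypothetical helper names (`beta_binomial_update`, `beta_mean`) chosen only for this example:

```python
def beta_binomial_update(alpha, beta, successes, failures):
    """Posterior Beta parameters after observing Bernoulli/binomial data.

    With a Beta(alpha, beta) prior, the posterior is
    Beta(alpha + successes, beta + failures).
    """
    return alpha + successes, beta + failures


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution: alpha / (alpha + beta)."""
    return alpha / (alpha + beta)


# Uniform prior Beta(1, 1), then 7 successes and 3 failures are observed.
posterior = beta_binomial_update(1, 1, successes=7, failures=3)
print(posterior, beta_mean(*posterior))
```

The update is pure addition, which is why the beta parameters are often read as counts of prior "virtual" successes and failures.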

Variance

In probability theory and statistics, variance is the expectation of the squared deviation of a random variable from its population mean or sample mean. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. Variance has a central role in statistics, where some ideas that use it include descriptive statistics, statistical inference, hypothesis testing, goodness of fit, and Monte Carlo sampling. Variance is an important tool in the sciences, where statistical analysis of data is common. The variance is the square of the standard deviation, the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for e ...
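The definition as a mean squared deviation translates directly into code. The sketch below assumes the population form (dividing by the number of values rather than by one less); the function name and the data are made up for illustration.

```python
import math


def population_variance(values):
    """Mean of squared deviations from the mean (population variance)."""
    mean = sum(values) / len(values)
    return sum((x - mean) ** 2 for x in values) / len(values)


data = [2, 4, 4, 4, 5, 5, 7, 9]
variance = population_variance(data)
std_dev = math.sqrt(variance)  # the variance is the square of the standard deviation
print(variance, std_dev)
```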

Covariance Matrix

In probability theory and statistics, a covariance matrix (also known as auto-covariance matrix, dispersion matrix, variance matrix, or variance–covariance matrix) is a square matrix giving the covariance between each pair of elements of a given random vector. Any covariance matrix is symmetric and positive semi-definite and its main diagonal contains variances (i.e., the covariance of each element with itself). Intuitively, the covariance matrix generalizes the notion of variance to multiple dimensions. As an example, the variation in a collection of random points in two-dimensional space cannot be characterized fully by a single number, nor would the variances in the x and y directions contain all of the necessary information; a 2 \times 2 matrix would be necessary to fully characterize the two-dimensional variation. The covariance matrix of a random vector \mathbf{X} is typically denoted by \operatorname{K}_{\mathbf{X}\mathbf{X}} or \Sigma. Definition: Throughout this article, boldfaced unsubsc ...
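For a concrete view of the two-dimensional example, the following sketch computes the 2 × 2 matrix for a small set of points. It assumes the population normalisation (dividing by the number of points); `covariance_matrix` is a hypothetical helper and the points are arbitrary.

```python
def covariance_matrix(points):
    """Population covariance matrix of a list of equal-length tuples."""
    n = len(points)
    dim = len(points[0])
    means = [sum(p[j] for p in points) / n for j in range(dim)]
    # Entry (i, j) is the mean product of deviations in coordinates i and j.
    return [
        [
            sum((p[i] - means[i]) * (p[j] - means[j]) for p in points) / n
            for j in range(dim)
        ]
        for i in range(dim)
    ]


points = [(1, 2), (2, 4), (3, 6)]
cov = covariance_matrix(points)
for row in cov:
    print(row)
```

The diagonal entries are the variances of x and y, and the off-diagonal entries are equal, reflecting the symmetry noted above.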

Expected Value

In probability theory, the expected value (also called expectation, expectancy, mathematical expectation, mean, average, or first moment) is a generalization of the weighted average. Informally, the expected value is the arithmetic mean of a large number of independently selected outcomes of a random variable. The expected value of a random variable with a finite number of outcomes is a weighted average of all possible outcomes. In the case of a continuum of possible outcomes, the expectation is defined by integration. In the axiomatic foundation for probability provided by measure theory, the expectation is given by Lebesgue integration. The expected value of a random variable X is often denoted by E(X), E[X], or EX, with E also often stylized as \mathbb{E}. History: The idea of the expected value originated in the middle of the 17th century from the study of the so-called problem of points, which seeks to divide the stakes in a fair way between two players, who have to end th ...
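For a finite set of outcomes, the weighted average can be computed exactly. The sketch below uses a fair six-sided die as an assumed example; `expected_value` is a hypothetical helper name.

```python
from fractions import Fraction


def expected_value(distribution):
    """Weighted average of outcomes; `distribution` maps outcome -> probability."""
    return sum(outcome * prob for outcome, prob in distribution.items())


# Fair six-sided die: each face has probability 1/6.
die = {face: Fraction(1, 6) for face in range(1, 7)}
print(expected_value(die))
```

Using `Fraction` keeps the arithmetic exact, so the result is not affected by floating-point rounding.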

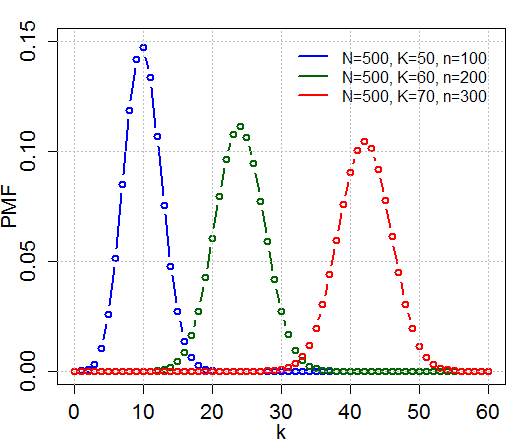

Multivariate Hypergeometric Distribution

In probability theory and statistics, the hypergeometric distribution is a discrete probability distribution that describes the probability of k successes (random draws for which the object drawn has a specified feature) in n draws, without replacement, from a finite population of size N that contains exactly K objects with that feature, wherein each draw is either a success or a failure. In contrast, the binomial distribution describes the probability of k successes in n draws with replacement. Definitions: Probability mass function. The following conditions characterize the hypergeometric distribution: * The result of each draw (the elements of the population being sampled) can be classified into one of two mutually exclusive categories (e.g. Pass/Fail or Employed/Unemployed). * The probability of a success changes on each draw, as each draw decreases the population (sampling without replacement from a finite population). A random variable X follows the hyperg ...
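The excerpt is cut off before the probability mass function appears, so the sketch below relies on the standard formula P(X = k) = C(K, k) C(N − K, n − k) / C(N, n), with N, K, n and k as defined above. The function name and the card-deck numbers are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb


def hypergeom_pmf(k, N, K, n):
    """P(X = k): k successes in n draws without replacement.

    N is the population size and K the number of success objects in it.
    """
    # Outcomes that are impossible get probability zero.
    if k < 0 or k > K or k > n or n - k > N - K:
        return Fraction(0)
    return Fraction(comb(K, k) * comb(N - K, n - k), comb(N, n))


# Assumed example: number of aces (K = 4) in a 5-card hand from a 52-card deck.
pmf = [hypergeom_pmf(k, N=52, K=4, n=5) for k in range(6)]
print([float(p) for p in pmf])
```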

Polya Urn Model

Polyadenylation is the addition of a poly(A) tail to an RNA transcript, typically a messenger RNA (mRNA). The poly(A) tail consists of multiple adenosine monophosphates; in other words, it is a stretch of RNA that has only adenine bases. In eukaryotes, polyadenylation is part of the process that produces mature mRNA for translation. In many bacteria, the poly(A) tail promotes degradation of the mRNA. It, therefore, forms part of the larger process of gene expression. The process of polyadenylation begins as the transcription of a gene terminates. The 3′-most segment of the newly made pre-mRNA is first cleaved off by a set of proteins; these proteins then synthesize the poly(A) tail at the RNA's 3′ end. In some genes these proteins add a poly(A) tail at one of several possible sites. Therefore, polyadenylation can produce more than one transcript from a single gene (alternative polyadenylation), similar to alternative splicing. The poly(A) tail is important for the nuclear ...

Urn Model

In probability and statistics, an urn problem is an idealized mental exercise in which some objects of real interest (such as atoms, people, cars, etc.) are represented as colored balls in an urn or other container. One pretends to remove one or more balls from the urn; the goal is to determine the probability of drawing one color or another, or some other properties. A number of important variations are described below. An urn model is either a set of probabilities that describe events within an urn problem, or it is a probability distribution, or a family of such distributions, of random variables associated with urn problems (Dodge, Yadolah (2003), Oxford Dictionary of Statistical Terms, OUP). History: In Ars Conjectandi (1713), Jacob Bernoulli considered the problem of determining, given a number of pebbles drawn from an urn, the proportions of different colored pebbles within the urn. This problem was known as the "inverse probability" problem, and was a topic of res ...

Burstiness

In statistics, burstiness is the intermittent increase and decrease in activity or frequency of an event (Lambiotte, R. (2013), "Burstiness and Spreading on Temporal Networks", University of Namur). One measure of burstiness is the Fano factor, the ratio between the variance and the mean of counts. Burstiness is observable in natural phenomena, such as natural disasters, or other phenomena, such as network/data/email network traffic or vehicular traffic. Burstiness is, in part, due to changes in the probability distribution of inter-event times. Distributions of bursty processes or events are characterised by heavy, or fat, tails. Burstiness of inter-contact time between nodes in a time-varying network can decidedly slow spreading processes over the network. This is of great interest for studying the spread of information and disease. Burstiness Score: One relatively simple measure of burstiness is the burstiness score. The burstiness score of a subset t of time period T relativ ...
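The Fano factor is the variance of event counts divided by their mean, and it is simple to compute for counts collected in equal-length time windows. The sketch below assumes the population variance and uses made-up count series; `fano_factor` is a hypothetical name.

```python
def fano_factor(counts):
    """Variance-to-mean ratio of event counts per time window."""
    mean = sum(counts) / len(counts)
    variance = sum((c - mean) ** 2 for c in counts) / len(counts)
    return variance / mean


steady = [3, 3, 3, 3, 3, 3]   # same activity in every window
bursty = [0, 0, 9, 0, 0, 9]   # same mean, activity concentrated in bursts
print(fano_factor(steady), fano_factor(bursty))
```

Both series have the same mean count, but only the second has a large variance-to-mean ratio, which is the signature of bursty activity.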

Sparse Matrix

In numerical analysis and scientific computing, a sparse matrix or sparse array is a matrix in which most of the elements are zero. There is no strict definition regarding the proportion of zero-value elements for a matrix to qualify as sparse but a common criterion is that the number of non-zero elements is roughly equal to the number of rows or columns. By contrast, if most of the elements are non-zero, the matrix is considered dense. The number of zero-valued elements divided by the total number of elements (e.g., m × n for an m × n matrix) is sometimes referred to as the sparsity of the matrix. Conceptually, sparsity corresponds to systems with few pairwise interactions. For example, consider a line of balls connected by springs from one to the next: this is a sparse system as only adjacent balls are coupled. By contrast, if the same line of balls were to have springs connecting each ball to all other balls, the system would correspond to a dense matrix. The ...
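The spring-chain example can be made concrete by building the coupling matrix and measuring its sparsity as defined above. This is a sketch under assumptions: the entries 2 and −1 are a conventional choice for a chain of identical springs rather than something the entry specifies, and both function names are hypothetical.

```python
def spring_chain_matrix(n):
    """Coupling matrix for n balls where only adjacent balls are connected."""
    matrix = [[0] * n for _ in range(n)]
    for i in range(n):
        matrix[i][i] = 2              # each ball interacts with itself
        if i + 1 < n:
            matrix[i][i + 1] = -1     # coupling to the next ball
            matrix[i + 1][i] = -1     # and the symmetric entry
    return matrix


def sparsity(matrix):
    """Number of zero-valued elements divided by the total number of elements."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for value in row if value == 0)
    return zeros / total


print(sparsity(spring_chain_matrix(5)))
print(sparsity(spring_chain_matrix(100)))
```

The number of non-zero entries grows linearly with the chain length while the matrix size grows quadratically, so longer chains give sparsity values closer to 1.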

Beta Function

In mathematics, the beta function, also called the Euler integral of the first kind, is a special function that is closely related to the gamma function and to binomial coefficients. It is defined by the integral : \mathrm{B}(z_1,z_2) = \int_0^1 t^{z_1-1}(1-t)^{z_2-1}\,dt for complex number inputs z_1, z_2 such that \Re(z_1), \Re(z_2)>0. The beta function was studied by Leonhard Euler and Adrien-Marie Legendre and was given its name by Jacques Binet; its symbol is a Greek capital beta. Properties: The beta function is symmetric, meaning that \mathrm{B}(z_1,z_2) = \mathrm{B}(z_2,z_1) for all inputs z_1 and z_2 (Davis (1972) 6.2.2 p.258). A key property of the beta function is its close relationship to the gamma function: : \mathrm{B}(z_1,z_2)=\frac{\Gamma(z_1)\,\Gamma(z_2)}{\Gamma(z_1+z_2)}. A proof is given below. The beta function is also closely related to binomial coefficients. When m (or n, by symmetry) is a positive integer, it follows from the definition of the gamma function that (Davis (1972) 6.2.1 p.258) : \mathrm{B}(m,n) = \dfrac{(m-1)!\,(n-1)!}{(m+n-1)!} = ...
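The relationship to the gamma function, B(z1, z2) = Γ(z1) Γ(z2) / Γ(z1 + z2), gives a simple way to evaluate the beta function for positive real arguments. The sketch below is restricted to that real case and uses a hypothetical function name; for positive integers it can be checked against the factorial form (m − 1)! (n − 1)! / (m + n − 1)!.

```python
import math


def beta_function(z1, z2):
    """Beta function for positive real arguments via the gamma function."""
    return math.gamma(z1) * math.gamma(z2) / math.gamma(z1 + z2)


# Integer check: (2 - 1)! * (3 - 1)! / (2 + 3 - 1)! = 2 / 24
factorial_form = math.factorial(1) * math.factorial(2) / math.factorial(4)
print(beta_function(2, 3), factorial_form)

# Symmetry check with non-integer arguments.
print(beta_function(0.5, 2.5), beta_function(2.5, 0.5))
```

For large arguments `math.gamma` overflows, so a more robust version would work with `math.lgamma` and exponentiate the difference of logarithms.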

Random Vector

In probability and statistics, a multivariate random variable or random vector is a list of mathematical variables each of whose value is unknown, either because the value has not yet occurred or because there is imperfect knowledge of its value. The individual variables in a random vector are grouped together because they are all part of a single mathematical system; often they represent different properties of an individual statistical unit. For example, while a given person has a specific age, height and weight, the representation of these features of an unspecified person from within a group would be a random vector. Normally each element of a random vector is a real number. Random vectors are often used as the underlying implementation of various types of aggregate random variables, e.g. a random matrix, random tree, random sequence, stochastic process, etc. More formally, a multivariate random variable is a column vector \mathbf{X} = (X_1,\dots,X_n)^\mathsf{T} (or its ...