|

Dependency Network (graphical Model)

Dependency networks (DNs) are graphical models, similar to Markov networks, wherein each vertex (node) corresponds to a random variable and each edge captures dependencies among variables. Unlike Bayesian networks, DNs may contain cycles. Each node is associated to a conditional probability table, which determines the realization of the random variable given its parents. Markov blanket In a Bayesian network, the Markov blanket of a node is the set of parents and children of that node, together with the children's parents. The values of the parents and children of a node evidently give information about that node. However, its children's parents also have to be included in the Markov blanket, because they can be used to explain away the node in question. In a Markov random field, the Markov blanket for a node is simply its adjacent (or neighboring) nodes. In a dependency network, the Markov blanket for a node is simply the set of its parents. Dependency network versus Baye ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Graphical Model

A graphical model or probabilistic graphical model (PGM) or structured probabilistic model is a probabilistic model for which a graph expresses the conditional dependence structure between random variables. Graphical models are commonly used in probability theory, statistics—particularly Bayesian statistics—and machine learning. Types of graphical models Generally, probabilistic graphical models use a graph-based representation as the foundation for encoding a distribution over a multi-dimensional space and a graph that is a compact or factorized representation of a set of independences that hold in the specific distribution. Two branches of graphical representations of distributions are commonly used, namely, Bayesian networks and Markov random fields. Both families encompass the properties of factorization and independences, but they differ in the set of independences they can encode and the factorization of the distribution that they induce. Undirected Graphical Model ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Markov Network

In the domain of physics and probability, a Markov random field (MRF), Markov network or undirected graphical model is a set of random variables having a Markov property described by an undirected graph. In other words, a random field is said to be a Markov random field if it satisfies Markov properties. The concept originates from the Sherrington–Kirkpatrick model. A Markov network or MRF is similar to a Bayesian network in its representation of dependencies; the differences being that Bayesian networks are directed and acyclic, whereas Markov networks are undirected and may be cyclic. Thus, a Markov network can represent certain dependencies that a Bayesian network cannot (such as cyclic dependencies ); on the other hand, it can't represent certain dependencies that a Bayesian network can (such as induced dependencies ). The underlying graph of a Markov random field may be finite or infinite. When the joint probability density of the random variables is strictly positive, i ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Bayesian Network

A Bayesian network (also known as a Bayes network, Bayes net, belief network, or decision network) is a probabilistic graphical model that represents a set of variables and their conditional dependencies via a directed acyclic graph (DAG). While it is one of several forms of causal notation, causal networks are special cases of Bayesian networks. Bayesian networks are ideal for taking an event that occurred and predicting the likelihood that any one of several possible known causes was the contributing factor. For example, a Bayesian network could represent the probabilistic relationships between diseases and symptoms. Given symptoms, the network can be used to compute the probabilities of the presence of various diseases. Efficient algorithms can perform inference and learning in Bayesian networks. Bayesian networks that model sequences of variables (''e.g.'' speech signals or protein sequences) are called dynamic Bayesian networks. Generalizations of Bayesian networks tha ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Markov Blanket

In statistics and machine learning, a Markov blanket of a random variable is a minimal set of variables that renders the variable conditionally independent of all other variables in the system. This concept is central in probabilistic graphical models and feature selection. If a Markov blanket is minimal—meaning that no variable in it can be removed without losing this conditional independence—it is called a Markov boundary. Identifying a Markov blanket or boundary allows for efficient inference and helps isolate relevant variables for prediction or causal reasoning. The terms of Markov blanket and Markov boundary were coined by Judea Pearl in 1988. A Markov blanket may be derived from the structure of a probabilistic graphical model such as a Bayesian network or Markov random field. Markov blanket A Markov blanket of a random variable Y in a random variable set \mathcal=\ is any subset \mathcal_1 of \mathcal, conditioned on which other variables are independent with Y: ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Markov Random Field

In the domain of physics and probability, a Markov random field (MRF), Markov network or undirected graphical model is a set of random variables having a Markov property described by an undirected graph In discrete mathematics, particularly in graph theory, a graph is a structure consisting of a set of objects where some pairs of the objects are in some sense "related". The objects are represented by abstractions called '' vertices'' (also call .... In other words, a random field is said to be a Andrey Markov, Markov random field if it satisfies Markov properties. The concept originates from the Spin glass#Sherrington–Kirkpatrick model, Sherrington–Kirkpatrick model. A Markov network or MRF is similar to a Bayesian network in its representation of dependencies; the differences being that Bayesian networks are directed acyclic graph, directed and acyclic, whereas Markov networks are undirected and may be cyclic. Thus, a Markov network can represent certain dependencies th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Joint Probability Distribution

A joint or articulation (or articular surface) is the connection made between bones, ossicles, or other hard structures in the body which link an animal's skeletal system into a functional whole.Saladin, Ken. Anatomy & Physiology. 7th ed. McGraw-Hill Connect. Webp.274/ref> They are constructed to allow for different degrees and types of movement. Some joints, such as the knee, elbow, and shoulder, are self-lubricating, almost frictionless, and are able to withstand compression and maintain heavy loads while still executing smooth and precise movements. Other joints such as suture (joint), sutures between the bones of the skull permit very little movement (only during birth) in order to protect the brain and the sense organs. The connection between a tooth and the jawbone is also called a joint, and is described as a fibrous joint known as a gomphosis. Joints are classified both structurally and functionally. Joints play a vital role in the human body, contributing to movement, sta ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Gibbs Sampling

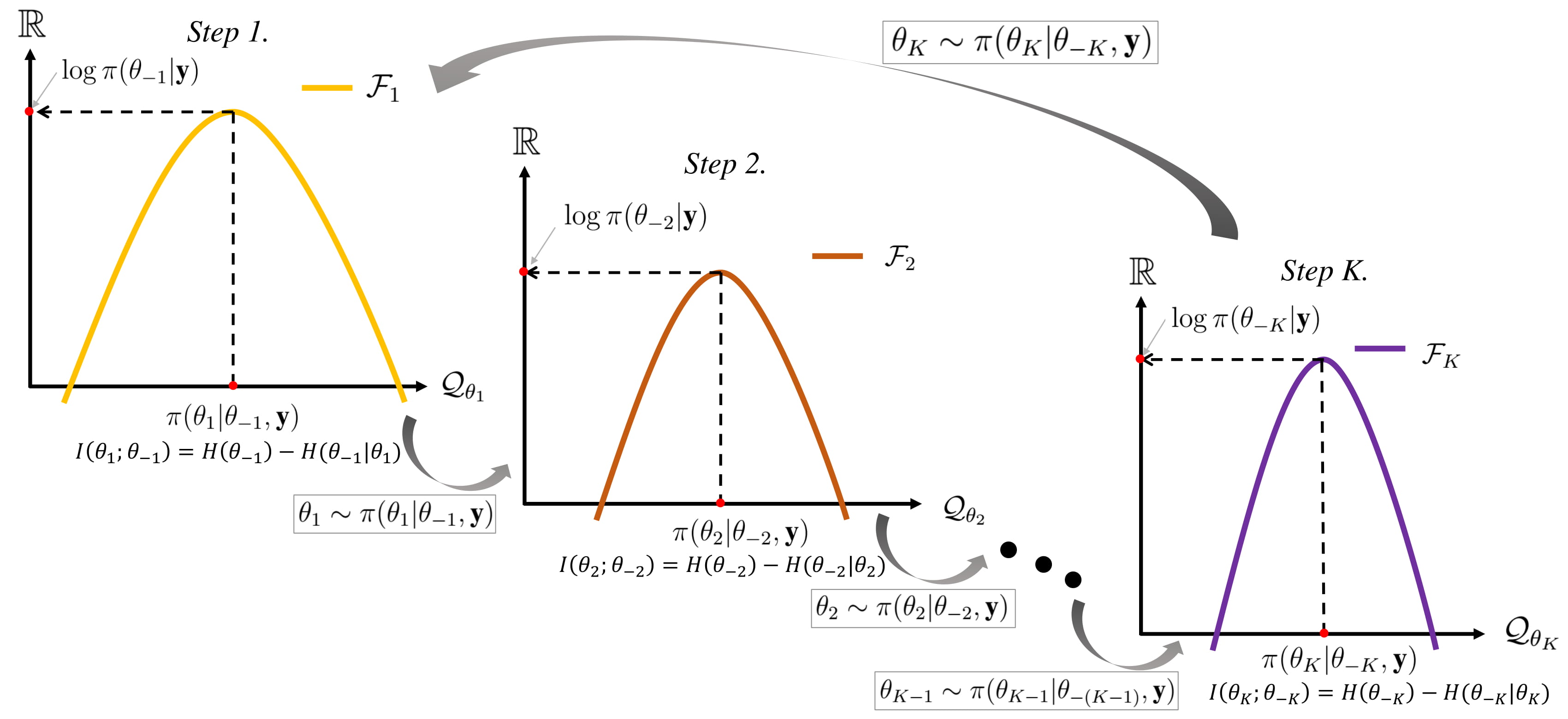

In statistics, Gibbs sampling or a Gibbs sampler is a Markov chain Monte Carlo (MCMC) algorithm for sampling from a specified multivariate distribution, multivariate probability distribution when direct sampling from the joint distribution is difficult, but sampling from the Conditional probability distribution, conditional distribution is more practical. This sequence can be used to approximate the joint distribution (e.g., to generate a histogram of the distribution); to approximate the marginal distribution of one of the variables, or some subset of the variables (for example, the unknown parameters or latent variables); or to compute an integral (such as the expected value of one of the variables). Typically, some of the variables correspond to observations whose values are known, and hence do not need to be sampled. Gibbs sampling is commonly used as a means of statistical inference, especially Bayesian inference. It is a randomized algorithm (i.e. an algorithm that makes ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Collaborative Filtering

Collaborative filtering (CF) is, besides content-based filtering, one of two major techniques used by recommender systems.Francesco Ricci and Lior Rokach and Bracha ShapiraIntroduction to Recommender Systems Handbook, Recommender Systems Handbook, Springer, 2011, pp. 1–35 Collaborative filtering has two senses, a narrow one and a more general one. In the newer, narrower sense, collaborative filtering is a method of making automatic predictions (filtering) about a user's interests by utilizing preferences or taste information collected from many users (collaborating). This approach assumes that if persons ''A'' and ''B'' share similar opinions on one issue, they are more likely to agree on other issues compared to a random pairing of ''A'' with another person. For instance, a collaborative filtering system for television programming could predict which shows a user might enjoy based on a limited list of the user's tastes (likes or dislikes). These predictions are specific to t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Relational Dependency Network

Relational dependency networks (RDNs) are graphical models which extend dependency networks to account for relational data. Relational data is data organized into one or more tables, which are cross-related through standard fields. A relational database is a canonical example of a system that serves to maintain relational data. A relational dependency network can be used to characterize the knowledge contained in a database. Introduction Relational Dependency Networks (or RDNs) aims to get the joint probability distribution over the variables of a dataset represented in the relational domain. They are based on Dependency Networks (or DNs) and extend them to the relational setting. RDNs have efficient learning methods where an RDN can learn the parameters independently, with the conditional probability distributions estimated separately. Since there may be some inconsistencies due to the independent learning method, RDNs use Gibbs sampling to recover joint distribution, like DN ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Graphical Models

A graphical model or probabilistic graphical model (PGM) or structured probabilistic model is a probabilistic model for which a graph expresses the conditional dependence structure between random variables. Graphical models are commonly used in probability theory, statistics—particularly Bayesian statistics—and machine learning. Types of graphical models Generally, probabilistic graphical models use a graph-based representation as the foundation for encoding a distribution over a multi-dimensional space and a graph that is a compact or factorized representation of a set of independences that hold in the specific distribution. Two branches of graphical representations of distributions are commonly used, namely, Bayesian networks and Markov random fields. Both families encompass the properties of factorization and independences, but they differ in the set of independences they can encode and the factorization of the distribution that they induce. Undirected Graphical Mod ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |