Dependent Dirichlet Process

In the mathematical theory of probability, the dependent Dirichlet process (DDP) provides a non-parametric prior over evolving mixture models. One construction of the DDP is built on a Poisson point process. The concept is named after Peter Gustav Lejeune Dirichlet. In many applications we want to model a collection of distributions, such as those used to represent temporal and spatial stochastic processes. The Dirichlet process assumes that observations are exchangeable, so the data points have no inherent ordering that influences their labeling. This assumption is invalid for modelling temporal and spatial processes, in which the order of data points plays a critical role in creating meaningful clusters. Dependent Dirichlet ...

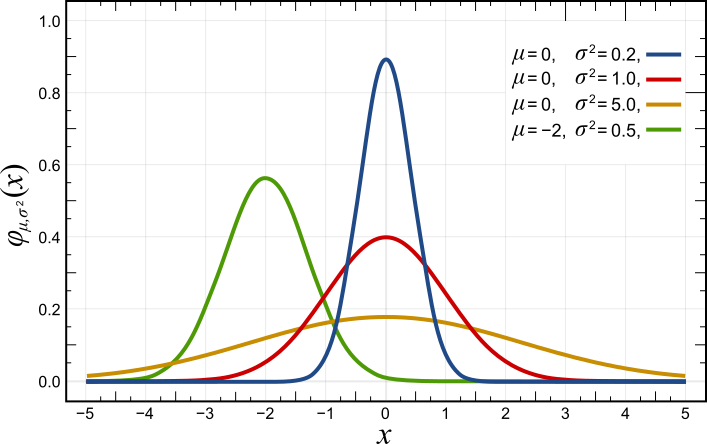

Probability

Probability is a branch of mathematics and statistics concerning events and numerical descriptions of how likely they are to occur. The probability of an event is a number between 0 and 1; the larger the probability, the more likely an event is to occur (Alan Stuart and Keith Ord, Kendall's Advanced Theory of Statistics, Volume 1: Distribution Theory, 6th ed., 2009; William Feller, An Introduction to Probability Theory and Its Applications, vol. 1, 3rd ed., Wiley, 1968). This number is often expressed as a percentage, ranging from 0% to 100%. A simple example is the tossing of a fair (unbiased) coin. Since the coin is fair, the two outcomes ("heads" and "tails") are equally probable, and since no other outcomes are possible, each of "heads" and "tails" has probability 1/2 (which could also be written as 0.5 or 50%). These concepts have been given an axiomatic mathematical formaliza ...

Prior Probability Distribution

A prior probability distribution of an uncertain quantity, simply called the prior, is its assumed probability distribution before some evidence is taken into account. For example, the prior could be the probability distribution representing the relative proportions of voters who will vote for a particular politician in a future election. The unknown quantity may be a parameter of the model or a latent variable rather than an observable variable. In Bayesian statistics, Bayes' rule prescribes how to update the prior with new information to obtain the posterior probability distribution, which is the conditional distribution of the uncertain quantity given new data. Historically, the choice of priors was often constrained to a conjugate family of a given likelihood function, so that it would result in a tractable posterior of the same family. The widespread availability of Markov chain Monte Carlo methods, however, has made this less of a concern. There are many ways to constru ...
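
The conjugate update described above can be sketched for the voter example. This is a minimal illustration, assuming a Beta prior on the unknown proportion and a binomial likelihood; the class name `BetaPrior`, the prior parameters, and the poll counts are all hypothetical and not taken from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaPrior:
    """Beta(alpha, beta) belief about an unknown proportion in [0, 1]."""

    alpha: float
    beta: float

    def mean(self) -> float:
        # Mean of a Beta(alpha, beta) distribution.
        return self.alpha / (self.alpha + self.beta)

    def update(self, successes: int, failures: int) -> "BetaPrior":
        # Conjugacy: Beta prior + binomial likelihood gives a Beta posterior,
        # obtained by adding the observed counts to the prior parameters.
        return BetaPrior(self.alpha + successes, self.beta + failures)


# Hypothetical prior: mild belief that support is near 50%.
prior = BetaPrior(alpha=2.0, beta=2.0)
# Hypothetical poll: 7 of 10 sampled voters support the politician.
posterior = prior.update(successes=7, failures=3)

print(prior.mean(), posterior.mean())
```

Because the posterior stays in the Beta family, no sampling is needed here; with a non-conjugate prior one would typically fall back on a method such as Markov chain Monte Carlo.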

Mixture Model

In statistics, a mixture model is a probabilistic model for representing the presence of subpopulations within an overall population, without requiring that an observed data set should identify the sub-population to which an individual observation belongs. Formally a mixture model corresponds to the mixture distribution that represents the probability distribution of observations in the overall population. However, while problems associated with "mixture distributions" relate to deriving the properties of the overall population from those of the sub-populations, "mixture models" are used to make statistical inferences about the properties of the sub-populations given only observations on the pooled population, without sub-population identity information. Mixture models are used for clustering, under the name model-based clustering, and also for density estimation. Mixture models should not be confused with models for compositional data, i.e., data whose components are constra ...
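
To make the "pooled population without identity information" idea concrete, the sketch below draws observations from a two-component Gaussian mixture and evaluates the mixture density. The Gaussian components, the constants `WEIGHTS`, `MEANS`, `STDS`, and the function names are assumptions chosen for illustration only.

```python
import math
import random

# Hypothetical two-component Gaussian mixture (all values are illustrative).
WEIGHTS = [0.3, 0.7]
MEANS = [-2.0, 3.0]
STDS = [1.0, 1.5]


def sample_mixture(n, weights, means, stds, rng):
    """Draw n observations; the sub-population label k is latent and discarded."""
    observations = []
    for _ in range(n):
        # Pick a sub-population according to the mixing weights.
        k = rng.choices(range(len(weights)), weights=weights)[0]
        # Draw the observation from that sub-population's distribution.
        observations.append(rng.gauss(means[k], stds[k]))
    return observations


def mixture_density(x, weights, means, stds):
    """Density of the pooled population: a weighted sum of component densities."""
    total = 0.0
    for w, mu, sigma in zip(weights, means, stds):
        z = (x - mu) / sigma
        total += w * math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))
    return total


data = sample_mixture(1000, WEIGHTS, MEANS, STDS, random.Random(0))
print(len(data), mixture_density(0.0, WEIGHTS, MEANS, STDS))
```

Inference with a mixture model runs in the opposite direction: given only `data`, estimate the weights and component parameters.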

Poisson Point Process

In probability theory, statistics and related fields, a Poisson point process (also known as: Poisson random measure, Poisson random point field and Poisson point field) is a type of mathematical object that consists of points randomly located on a mathematical space with the essential feature that the points occur independently of one another. The process's name derives from the fact that the number of points in any given finite region follows a Poisson distribution. The process and the distribution are named after French mathematician Siméon Denis Poisson. The process itself was discovered independently and repeatedly in several settings, including experiments on radioactive decay, telephone call arrivals and actuarial science. This point process is used as a mathematical model for seemingly random processes in numerous disciplines including astronomy [G. J. Babu and E. D. Feigelson, "Spatial point processes in astronomy", Journal of Statistical Planning and Inference, 50( ...
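
The two defining properties above (a Poisson-distributed count in any finite region, and independently located points) suggest a simple way to simulate the homogeneous case on a rectangle. The following is a sketch under that assumption; the function names and the `intensity` parameter are hypothetical, and the Poisson count is generated from exponential inter-arrival times because the Python standard library has no direct Poisson sampler.

```python
import random


def poisson_count(mean, rng):
    """Sample a Poisson(mean) count by counting unit-rate exponential
    arrivals that fall before time `mean`."""
    count = 0
    elapsed = rng.expovariate(1.0)
    while elapsed < mean:
        count += 1
        elapsed += rng.expovariate(1.0)
    return count


def sample_ppp(intensity, width, height, rng):
    """Homogeneous Poisson point process on [0, width] x [0, height]."""
    # The number of points in the region is Poisson with mean intensity * area.
    n = poisson_count(intensity * width * height, rng)
    # Given the count, the points are independent and uniform over the region.
    return [(rng.uniform(0.0, width), rng.uniform(0.0, height)) for _ in range(n)]


points = sample_ppp(intensity=2.0, width=3.0, height=1.0, rng=random.Random(42))
print(len(points))
```

An inhomogeneous process, where the intensity varies over the space, needs a different scheme such as thinning; this sketch does not cover it.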

Peter Gustav Lejeune Dirichlet

Johann Peter Gustav Lejeune Dirichlet (13 February 1805 – 5 May 1859) was a German mathematician. In number theory, he proved special cases of Fermat's last theorem and created analytic number theory. In analysis, he advanced the theory of Fourier series and was one of the first to give the modern formal definition of a function. In mathematical physics, he studied potential theory, boundary-value problems, heat diffusion, and hydrodynamics. Although his surname is Lejeune Dirichlet, he is commonly referred to by his mononym Dirichlet, in particular for results named after him.

Biography, early life (1805–1822): Gustav Lejeune Dirichlet was born on 13 February 1805 in Düren, a town on the left bank of the Rhine which at the time was part of the First French Empire, reverting to Prussia after the Congress of Vienna in 1815. His father, Johann Arnold Lejeune Dirichlet, was a postmaster, merchant, and city councilor. His paternal grandfather had come to Düren from ...

Stochastic Process

In probability theory and related fields, a stochastic or random process is a mathematical object usually defined as a family of random variables in a probability space, where the index of the family often has the interpretation of time. Stochastic processes are widely used as mathematical models of systems and phenomena that appear to vary in a random manner. Examples include the growth of a bacterial population, an electrical current fluctuating due to thermal noise, or the movement of a gas molecule. Stochastic processes have applications in many disciplines such as biology, chemistry, ecology, neuroscience, physics, image processing, signal processing, stochastic control, control theory, information theory, computer scien ...

Dirichlet Process

In probability theory, Dirichlet processes (after the distribution associated with Peter Gustav Lejeune Dirichlet) are a family of stochastic processes whose realizations are probability distributions. In other words, a Dirichlet process is a probability distribution whose range is itself a set of probability distributions. It is often used in Bayesian inference to describe the prior knowledge about the distribution of random variables: how likely it is that the random variables are distributed according to one or another particular distribution. As an example, a bag of 100 real-world dice is a random probability mass function (random pmf): to sample this random pmf you put your hand in the bag and draw out a die, that is, you draw a pmf. A bag of dice manufactured using a crude process 100 years ago will likely have probabilities that deviate wildly from the uniform pmf, whereas a bag of state-of-the-art dice used by Las Vegas casinos may have barely perceptible imperfe ...
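
One way to see that a single draw from a Dirichlet process is itself a probability distribution is the stick-breaking construction, a standard representation that the text above does not describe. The sketch below is a truncated version, assuming a concentration parameter `alpha`, a standard normal base distribution, and a truncation level of 50 atoms; all of these names and values are illustrative choices.

```python
import random


def stick_breaking(alpha, base_sampler, truncation, rng):
    """Truncated stick-breaking draw from DP(alpha, base).

    Returns (atoms, weights): a discrete random distribution that places
    weights[k] of its mass on atoms[k].
    """
    atoms, weights = [], []
    remaining = 1.0  # length of the stick not yet broken off
    for _ in range(truncation - 1):
        fraction = rng.betavariate(1.0, alpha)  # Beta(1, alpha) break point
        atoms.append(base_sampler(rng))         # atom location from the base
        weights.append(remaining * fraction)
        remaining *= 1.0 - fraction
    # Truncation step: the last atom takes the leftover mass so that the
    # weights sum to one.
    atoms.append(base_sampler(rng))
    weights.append(remaining)
    return atoms, weights


rng = random.Random(7)
atoms, weights = stick_breaking(
    alpha=2.0,
    base_sampler=lambda r: r.gauss(0.0, 1.0),  # hypothetical base distribution
    truncation=50,
    rng=rng,
)
print(len(atoms), sum(weights))
```

In the dice analogy, each call to `stick_breaking` pulls one die out of the bag; a smaller `alpha` tends to concentrate the mass on fewer atoms.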

Exchangeable Random Variables

In statistics, an exchangeable sequence of random variables (also sometimes interchangeable) is a sequence $X_1, X_2, X_3, \ldots$ (which may be finitely or infinitely long) whose joint probability distribution does not change when the positions in the sequence in which finitely many of them appear are altered. In other words, the joint distribution is invariant to finite permutation. Thus, for example, the sequences $X_1, X_2, X_3, X_4, X_5, X_6 \quad \text{and} \quad X_3, X_6, X_1, X_5, X_2, X_4$ both have the same joint probability distribution. It is closely related to the use of independent and identically distributed random variables in statistical models. Exchangeable sequences of random variables arise in cases of simple random sampling.

Definition: Formally, an exchangeable sequence of random variables is a finite or infinite sequence $X_1, X_2, X_3, \ldots$ of random variables such that for any finite permutation σ of the indices 1, 2 ...
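
Permutation invariance can be checked exactly on a small example. The sketch below uses a Pólya urn, which is an illustration chosen here and not something the text mentions: draw a ball, then return it together with one more ball of the same colour. The draws are dependent, yet every reordering of a colour sequence has the same probability. The function name and the starting urn contents are assumptions.

```python
from fractions import Fraction
from itertools import permutations


def polya_sequence_probability(colors, red=1, blue=1):
    """Exact probability of a colour sequence from a Polya urn that starts
    with `red` red balls and `blue` blue balls."""
    prob = Fraction(1)
    for color in colors:
        total = red + blue
        if color == "R":
            prob *= Fraction(red, total)
            red += 1   # return the ball plus one more red ball
        else:
            prob *= Fraction(blue, total)
            blue += 1  # return the ball plus one more blue ball
    return prob


# Every ordering of two reds and one blue has the same probability.
probs = {polya_sequence_probability(order) for order in permutations("RRB")}
print(probs)

# The draws are nevertheless dependent: P(second red | first red) differs
# from the unconditional P(first red) = 1/2.
print(polya_sequence_probability("RR") / polya_sequence_probability("R"))
```

Each ordering has probability 1/12, while the conditional probability of a second red after a first red is 2/3, so the sequence is exchangeable without being independent.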

Cluster Analysis

Cluster analysis, or clustering, is a data analysis technique that groups a set of objects in such a way that objects in the same group (called a cluster) are more similar (in some specific sense defined by the analyst) to each other than to those in other groups (clusters). It is a main task of exploratory data analysis, and a common technique for statistical data analysis, used in many fields, including pattern recognition, image analysis, information retrieval, bioinformatics, data compression, computer graphics and machine learning. Cluster analysis refers to a family of algorithms and tasks rather than one specific algorithm. It can be achieved by various algorithms that differ significantly in their understanding of what constitutes a cluster and how to efficiently find them. Popular notions of clusters include groups with small distances between cluster members, dense areas of the data space, intervals or pa ...

Nonparametric Statistics

Nonparametric statistics is a type of statistical analysis that makes minimal assumptions about the underlying distribution of the data being studied. Often these models are infinite-dimensional, rather than finite dimensional, as in parametric statistics. Nonparametric statistics can be used for descriptive statistics or statistical inference. Nonparametric tests are often used when the assumptions of parametric tests are evidently violated.

Definitions: The term "nonparametric statistics" has been defined imprecisely in the following two ways, among others. The first meaning of nonparametric involves techniques that do not rely on data belonging to any particular parametric family of probability distributions. These include, among others:

* Methods which are distribution-free, which do not rely on assumptions that the data are drawn from a given parametric family of probability distributions.
* Statistics defined to be a function on a sample, without dependency on ...

Bayesian Statistics

Bayesian statistics is a theory in the field of statistics based on the Bayesian interpretation of probability, where probability expresses a degree of belief in an event. The degree of belief may be based on prior knowledge about the event, such as the results of previous experiments, or on personal beliefs about the event. This differs from a number of other interpretations of probability, such as the frequentist interpretation, which views probability as the limit of the relative frequency of an event after many trials. More concretely, analysis in Bayesian methods codifies prior knowledge in the form of a prior distribution. Bayesian statistical methods use Bayes' theorem to compute and update probabilities after obtaining new data. Bayes' theorem describes the conditional probability of an event based on data as well as prior information or beliefs about the event or conditions related to the event. For example, in Bayesian inference, Bayes' theorem can ...