|

Count–min Sketch

In computing, the count–min sketch (CM sketch) is a probabilistic data structure that serves as a frequency table of events in a stream of data. It uses hash functions to map events to frequencies, but unlike a hash table uses only sub-linear space, at the expense of overcounting some events due to collisions. The count–min sketch was invented in 2003 by Graham Cormode and S. Muthu Muthukrishnan and described by them in a 2005 paper. Count–min sketch is an alternative to count sketch and AMS sketch and can be considered an implementation of a counting Bloom filter (Fan et al., 1998) or multistage-filter. However, they are used differently and therefore sized differently: a count–min sketch typically has a sublinear number of cells, related to the desired approximation quality of the sketch, while a counting Bloom filter is more typically sized to match the number of elements in the set. Data structure The goal of the basic version of the count–min sketch is to cons ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Computing

Computing is any goal-oriented activity requiring, benefiting from, or creating computer, computing machinery. It includes the study and experimentation of algorithmic processes, and the development of both computer hardware, hardware and software. Computing has scientific, engineering, mathematical, technological, and social aspects. Major computing disciplines include computer engineering, computer science, cybersecurity, data science, information systems, information technology, and software engineering. The term ''computing'' is also synonymous with counting and calculation, calculating. In earlier times, it was used in reference to the action performed by Mechanical computer, mechanical computing machines, and before that, to Computer (occupation), human computers. History The history of computing is longer than the history of computing hardware and includes the history of methods intended for pen and paper (or for chalk and slate) with or without the aid of tables. ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Pairwise Independence

In probability theory, a pairwise independent collection of random variables is a set of random variables any two of which are statistical independence, independent. Any collection of Mutual independence, mutually independent random variables is pairwise independent, but some pairwise independent collections are not mutually independent. Pairwise independent random variables with finite variance are uncorrelated. A pair of random variables ''X'' and ''Y'' are independent if and only if the random vector (''X'', ''Y'') with joint distribution, joint cumulative distribution function (CDF) F_(x,y) satisfies :F_(x,y) = F_X(x) F_Y(y), or equivalently, their joint density f_(x,y) satisfies :f_(x,y) = f_X(x) f_Y(y). That is, the joint distribution is equal to the product of the marginal distributions. Unless it is not clear in context, in practice the modifier "mutual" is usually dropped so that independence means mutual independence. A statement such as " ''X'', ''Y'', ''Z'' are in ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Hashing

Hash, hashes, hash mark, or hashing may refer to: Substances * Hash (food), a coarse mixture of ingredients, often based on minced meat * Hash (stew), a pork and onion-based gravy found in South Carolina * Hash, a nickname for hashish, a cannabis product Hash mark * Hash mark (sports), a marking on hockey rinks and gridiron football fields * Hatch marks, hash marks or tick marks, a form of mathematical notation * Number sign (#), also known as the hash, hash mark, or (in American English) pound sign * Service stripe, a military and paramilitary decoration * Tally mark, a counting notation * Checkmate symbol in chess Computing * Hash function, an encoding of data into a small, fixed size; used in hash tables and cryptography ** Hash table, a data structure using hash functions ** Cryptographic hash function, a hash function used to authenticate message integrity * URI fragment, in computer hypertext, a string of characters that refers to a subordinate resource * Geohash, a spat ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

MinHash

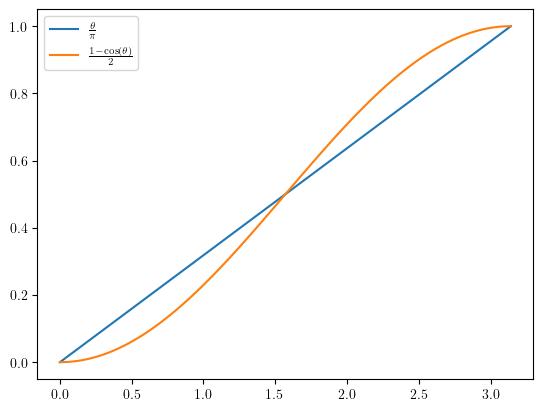

In computer science and data mining, MinHash (or the min-wise independent permutations locality sensitive hashing scheme) is a technique for quickly estimating how similar two sets are. The scheme was published by Andrei Broder in a 1997 conference, and initially used in the AltaVista search engine to detect duplicate web pages and eliminate them from search results.. It has also been applied in large-scale clustering problems, such as clustering documents by the similarity of their sets of words.. Jaccard similarity and minimum hash values The Jaccard similarity coefficient is a commonly used indicator of the similarity between two sets. Let be a set and and be subsets of , then the Jaccard index is defined to be the ratio of the number of elements of their intersection and the number of elements of their union: : J(A,B) = . This value is 0 when the two sets are disjoint, 1 when they are equal, and strictly between 0 and 1 otherwise. Two sets are more similar (i.e. have ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Locality-sensitive Hashing

In computer science, locality-sensitive hashing (LSH) is a fuzzy hashing technique that hashes similar input items into the same "buckets" with high probability. (The number of buckets is much smaller than the universe of possible input items.) Since similar items end up in the same buckets, this technique can be used for data clustering and nearest neighbor search. It differs from conventional hashing techniques in that hash collisions are maximized, not minimized. Alternatively, the technique can be seen as a way to reduce the dimensionality of high-dimensional data; high-dimensional input items can be reduced to low-dimensional versions while preserving relative distances between items. Hashing-based approximate nearest-neighbor search algorithms generally use one of two main categories of hashing methods: either data-independent methods, such as locality-sensitive hashing (LSH); or data-dependent methods, such as locality-preserving hashing (LPH). Locality-preserving hashin ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Feature Hashing

In machine learning, feature hashing, also known as the hashing trick (by analogy to the kernel trick), is a fast and space-efficient way of vectorizing features, i.e. turning arbitrary features into indices in a vector or matrix. It works by applying a hash function to the features and using their hash values as indices directly (after a modulo operation), rather than looking the indices up in an associative array. In addition to its use for encoding non-numeric values, feature hashing can also be used for dimensionality reduction. This trick is often attributed to Weinberger et al. (2009), but there exists a much earlier description of this method published by John Moody in 1989. Motivation Motivating example In a typical document classification task, the input to the machine learning algorithm (both during learning and classification) is free text. From this, a bag of words (BOW) representation is constructed: the individual tokens are extracted and counted, and each distin ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Maximum Likelihood Estimation

In statistics, maximum likelihood estimation (MLE) is a method of estimation theory, estimating the Statistical parameter, parameters of an assumed probability distribution, given some observed data. This is achieved by Mathematical optimization, maximizing a likelihood function so that, under the assumed statistical model, the Realization (probability), observed data is most probable. The point estimate, point in the parameter space that maximizes the likelihood function is called the maximum likelihood estimate. The logic of maximum likelihood is both intuitive and flexible, and as such the method has become a dominant means of statistical inference. If the likelihood function is Differentiable function, differentiable, the derivative test for finding maxima can be applied. In some cases, the first-order conditions of the likelihood function can be solved analytically; for instance, the ordinary least squares estimator for a linear regression model maximizes the likelihood when ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Bias Of An Estimator

In statistics, the bias of an estimator (or bias function) is the difference between this estimator's expected value and the true value of the parameter being estimated. An estimator or decision rule with zero bias is called ''unbiased''. In statistics, "bias" is an property of an estimator. Bias is a distinct concept from consistency: consistent estimators converge in probability to the true value of the parameter, but may be biased or unbiased (see bias versus consistency for more). All else being equal, an unbiased estimator is preferable to a biased estimator, although in practice, biased estimators (with generally small bias) are frequently used. When a biased estimator is used, bounds of the bias are calculated. A biased estimator may be used for various reasons: because an unbiased estimator does not exist without further assumptions about a population; because an estimator is difficult to compute (as in unbiased estimation of standard deviation); because a biased esti ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Euler's Number

The number is a mathematical constant approximately equal to 2.71828 that is the base of the natural logarithm and exponential function. It is sometimes called Euler's number, after the Swiss mathematician Leonhard Euler, though this can invite confusion with Euler numbers, or with Euler's constant, a different constant typically denoted \gamma. Alternatively, can be called Napier's constant after John Napier. The Swiss mathematician Jacob Bernoulli discovered the constant while studying compound interest. The number is of great importance in mathematics, alongside 0, 1, , and . All five appear in one formulation of Euler's identity e^+1=0 and play important and recurring roles across mathematics. Like the constant , is irrational, meaning that it cannot be represented as a ratio of integers, and moreover it is transcendental, meaning that it is not a root of any non-zero polynomial with rational coefficients. To 30 decimal places, the value of is: Definitions T ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Inner Product

In mathematics, an inner product space (or, rarely, a Hausdorff pre-Hilbert space) is a real vector space or a complex vector space with an operation called an inner product. The inner product of two vectors in the space is a scalar, often denoted with angle brackets such as in \langle a, b \rangle. Inner products allow formal definitions of intuitive geometric notions, such as lengths, angles, and orthogonality (zero inner product) of vectors. Inner product spaces generalize Euclidean vector spaces, in which the inner product is the dot product or ''scalar product'' of Cartesian coordinates. Inner product spaces of infinite dimension are widely used in functional analysis. Inner product spaces over the field of complex numbers are sometimes referred to as unitary spaces. The first usage of the concept of a vector space with an inner product is due to Giuseppe Peano, in 1898. An inner product naturally induces an associated norm, (denoted , x, and , y, in the pictu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Randomized Algorithm

A randomized algorithm is an algorithm that employs a degree of randomness as part of its logic or procedure. The algorithm typically uses uniformly random bits as an auxiliary input to guide its behavior, in the hope of achieving good performance in the "average case" over all possible choices of random determined by the random bits; thus either the running time, or the output (or both) are random variables. There is a distinction between algorithms that use the random input so that they always terminate with the correct answer, but where the expected running time is finite (Las Vegas algorithms, for example Quicksort), and algorithms which have a chance of producing an incorrect result ( Monte Carlo algorithms, for example the Monte Carlo algorithm for the MFAS problem) or fail to produce a result either by signaling a failure or failing to terminate. In some cases, probabilistic algorithms are the only practical means of solving a problem. In common practice, randomized alg ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Counting Bloom Filter

A counting Bloom filter is a probabilistic data structure that is used to test whether the number of occurrences of a given element in a sequence exceeds a given threshold. As a generalized form of the Bloom filter, false positive matches are possible, but false negatives are not – in other words, a query returns either "possibly bigger or equal than the threshold" or "definitely smaller than the threshold". Algorithm description Most of the parameters are defined same with Bloom filter, such as ''m, k. m'' is the number of counters in counting Bloom filter, which is expansion of ''m'' bits in Bloom filter. An ''empty counting Bloom filter'' is a ''m'' counters, all set to 0. Similar to Bloom filter, there must also be ''k'' different hash functions defined, each of which maps or hashes some set element to one of the ''m'' counter array positions, generating a uniform random distribution. It is also similar that ''k'' is a constant, much smaller than ''m'', which is proportional ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |