|

Contrastive Hebbian Learning

Contrastive Hebbian learning is a biologically plausible form of Hebbian learning. It is based on the contrastive divergence algorithm, which has been used to train a variety of energy-based latent variable models. In 2003, contrastive Hebbian learning was shown to be equivalent in power to the backpropagation algorithms commonly used in machine learning Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine .... References See also * Oja's rule * Generalized Hebbian algorithm Hebbian theory Artificial neural networks {{computing-stub ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hebbian Learning

Hebbian theory is a neuroscientific theory claiming that an increase in synaptic efficacy arises from a presynaptic cell's repeated and persistent stimulation of a postsynaptic cell. It is an attempt to explain synaptic plasticity, the adaptation of brain neurons during the learning process. It was introduced by Donald Hebb in his 1949 book '' The Organization of Behavior.'' The theory is also called Hebb's rule, Hebb's postulate, and cell assembly theory. Hebb states it as follows: Let us assume that the persistence or repetition of a reverberatory activity (or "trace") tends to induce lasting cellular changes that add to its stability. ... When an axon of cell ''A'' is near enough to excite a cell ''B'' and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that ''A''’s efficiency, as one of the cells firing ''B'', is increased. The theory is often summarized as "Cells that fire together wire tog ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Backpropagation

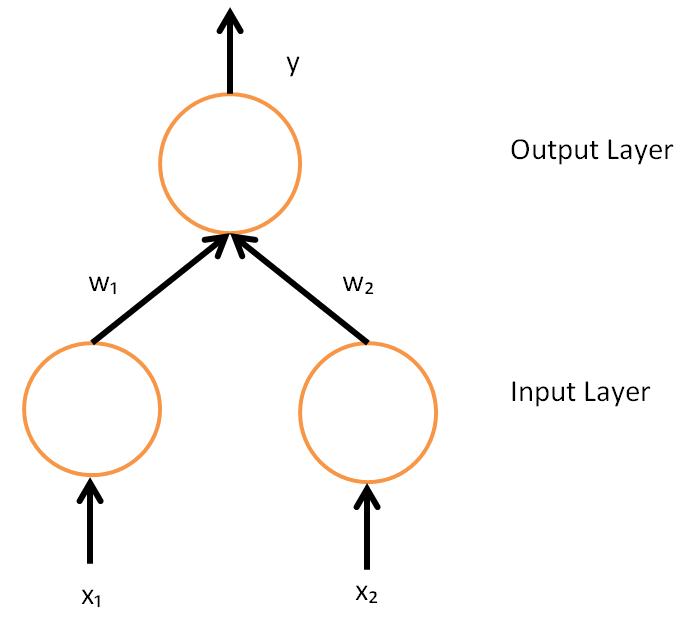

In machine learning, backpropagation (backprop, BP) is a widely used algorithm for training feedforward artificial neural networks. Generalizations of backpropagation exist for other artificial neural networks (ANNs), and for functions generally. These classes of algorithms are all referred to generically as "backpropagation". In fitting a neural network, backpropagation computes the gradient of the loss function with respect to the weights of the network for a single input–output example, and does so efficiently, unlike a naive direct computation of the gradient with respect to each weight individually. This efficiency makes it feasible to use gradient methods for training multilayer networks, updating weights to minimize loss; gradient descent, or variants such as stochastic gradient descent, are commonly used. The backpropagation algorithm works by computing the gradient of the loss function with respect to each weight by the chain rule, computing the gradient one laye ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks.Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F.,Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning IEEE Transactions on Vehicular Technology, 2020. A subset of machine learning is closely related to computational statistics, which focuses on making pred ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hebbian Theory

Hebbian theory is a neuroscientific theory claiming that an increase in synaptic efficacy arises from a presynaptic cell's repeated and persistent stimulation of a postsynaptic cell. It is an attempt to explain synaptic plasticity, the adaptation of brain neurons during the learning process. It was introduced by Donald Hebb in his 1949 book '' The Organization of Behavior.'' The theory is also called Hebb's rule, Hebb's postulate, and cell assembly theory. Hebb states it as follows: Let us assume that the persistence or repetition of a reverberatory activity (or "trace") tends to induce lasting cellular changes that add to its stability. ... When an axon of cell ''A'' is near enough to excite a cell ''B'' and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that ''A''’s efficiency, as one of the cells firing ''B'', is increased. The theory is often summarized as "Cells that fire together wire t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |