Bartlett's Test

In statistics, Bartlett's test, named after Maurice Stevenson Bartlett, is used to test homoscedasticity, that is, whether multiple samples come from populations with equal variances. Some statistical tests, such as the analysis of variance, assume that variances are equal across groups or samples, an assumption that can be checked with Bartlett's test. In a Bartlett test, we construct the null and alternative hypotheses. Several test procedures have been devised for this purpose; the one due to M. S. Bartlett is presented here. It is based on a statistic whose sampling distribution is approximately a chi-squared distribution with (''k'' − 1) degrees of freedom, where ''k'' is the number of random samples, which may vary in size and are each drawn from independent normal distributions. Bartlett's test is sensitive to departures from normality. That is, if the samples come from non-normal distributions, then Bartlett's test may simpl ...
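
The statistic described above can be sketched in plain Python. This is a minimal illustration that assumes the standard textbook form of Bartlett's statistic (a pooled-variance log ratio divided by Bartlett's correction factor); the function names `sample_variance` and `bartlett_statistic` are hypothetical, and the final comparison against a chi-squared distribution with ''k'' − 1 degrees of freedom is left out.

```python
import math


def sample_variance(xs):
    """Unbiased sample variance (divides by n - 1)."""
    n = len(xs)
    mean = sum(xs) / n
    return sum((x - mean) ** 2 for x in xs) / (n - 1)


def bartlett_statistic(groups):
    """Return (statistic, degrees_of_freedom) for k samples.

    Assumes every group has at least two observations and a
    non-zero sample variance (otherwise the logarithm is undefined).
    """
    k = len(groups)
    sizes = [len(g) for g in groups]
    n_total = sum(sizes)
    variances = [sample_variance(g) for g in groups]

    # Pooled variance: within-group variances weighted by their df.
    pooled = sum((n - 1) * v for n, v in zip(sizes, variances)) / (n_total - k)

    numerator = (n_total - k) * math.log(pooled) - sum(
        (n - 1) * math.log(v) for n, v in zip(sizes, variances)
    )
    # Bartlett's correction factor.
    correction = 1 + (
        sum(1 / (n - 1) for n in sizes) - 1 / (n_total - k)
    ) / (3 * (k - 1))

    return numerator / correction, k - 1


if __name__ == "__main__":
    stat, df = bartlett_statistic([[1.0, 2.0, 3.0], [0.0, 5.0, 10.0]])
    print(f"statistic={stat:.4f}, df={df}")
```

When all sample variances are equal the numerator vanishes and the statistic is zero; larger values indicate stronger evidence against equal variances.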

Statistics

Statistics (from German, "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments. When census data (comprising every member of the target population) cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample ...

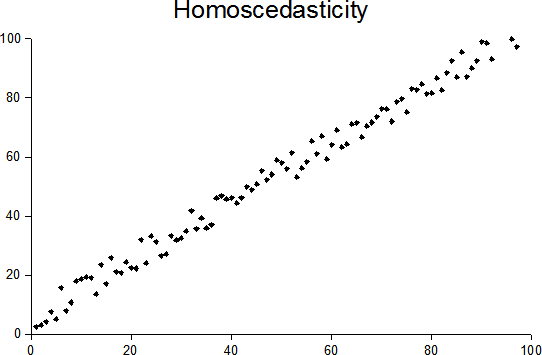

Homoscedasticity

In statistics, a sequence of random variables is homoscedastic if all its random variables have the same finite variance; this is also known as homogeneity of variance. The complementary notion is called heteroscedasticity, also known as heterogeneity of variance. The spellings ''homoskedasticity'' and ''heteroskedasticity'' are also frequently used. “Skedasticity” comes from the Ancient Greek word “skedánnymi”, meaning “to scatter”. Assuming a variable is homoscedastic when in reality it is heteroscedastic results in unbiased but inefficient point estimates and in biased estimates of standard errors, and may result in overestimating the goodness of fit as measured by the Pearson coefficient. The existence of heteroscedasticity is a major concern in regression analysis and the analysis of variance, as it invalidates statistical tests ...

Variance

In probability theory and statistics, variance is the expected value of the squared deviation from the mean of a random variable. The standard deviation (SD) is obtained as the square root of the variance. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. It is the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for example, the variance of a sum of uncorrelated random variables is equal to the sum of their variances. A disadvantage of the variance for practical applications is that, unlike the standard deviation, its units differ from the random variable, which is why the standard devi ...
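
The definition above can be made concrete with a short sketch. It treats a list of numbers as the equally likely outcomes of a random variable, computes the variance as the mean squared deviation from the mean, and takes the square root to obtain the standard deviation. The function names and the sample data are illustrative assumptions.

```python
import math


def population_variance(values):
    """Mean of the squared deviations from the mean (divides by n)."""
    mean = sum(values) / len(values)
    return sum((x - mean) ** 2 for x in values) / len(values)


def standard_deviation(values):
    """Square root of the population variance."""
    return math.sqrt(population_variance(values))


if __name__ == "__main__":
    data = [2, 4, 4, 4, 5, 5, 7, 9]
    print(population_variance(data), standard_deviation(data))
```

Note that the variance is in squared units of the data, while the standard deviation is in the original units.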

George W

George Walker Bush (born July 6, 1946) is an American politician and businessman who was the 43rd president of the United States from 2001 to 2009. A member of the Bush family and the Republican Party, he is the eldest son of the 41st president, George H. W. Bush, and was the 46th governor of Texas from 1995 to 2000. Bush flew warplanes in the Texas Air National Guard in his twenties. After graduating from Harvard Business School in 1975, he worked in the oil industry. He later co-owned the Major League Baseball team Texas Rangers before being elected governor of Texas in 1994. As governor, Bush successfully sponsored legislation for tort reform, increased education funding, set higher standards for schools, and reformed the criminal justice system. He also helped make Texas the leading producer of wind-generated electricity in t ...

William Gemmell Cochran

William Gemmell Cochran (15 July 1909 – 29 March 1980) was a prominent statistician. He was born in Scotland but spent most of his life in the United States. Cochran studied mathematics at the University of Glasgow and the University of Cambridge. He worked at Rothamsted Experimental Station from 1934 to 1939, when he moved to the United States. There he helped establish several departments of statistics. His longest spell in any one university was at Harvard, which he joined in 1957 and from which he retired in 1976.

Writings: Cochran wrote many articles and books. His books became standard texts:
* ''Experimental Designs'' (with Gertrude Mary Cox), 1950
* ''Statistical Methods Applied to Experiments in Agriculture and Biology'' by George W. Snedecor (Cochran contributed from the fifth (1956) edition)
* ''Planning and Analysis of Observational Studies'' (edited by Lincoln E. Moses and Frederick Mosteller ...

Analysis Of Variance

Analysis of variance (ANOVA) is a family of statistical methods used to compare the means of two or more groups by analyzing variance. Specifically, ANOVA compares the amount of variation ''between'' the group means to the amount of variation ''within'' each group. If the between-group variation is substantially larger than the within-group variation, it suggests that the group means are likely different. This comparison is done using an F-test. The underlying principle of ANOVA is based on the law of total variance, which states that the total variance in a dataset can be broken down into components attributable to different sources. In the case of ANOVA, these sources are the variation between groups and the variation within groups. ANOVA was developed by the statistician Ronald Fisher. In its simplest form, it provides a statistical test of whether two or more population means are equal, and therefore generalizes the ''t''- ...
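
The between-group versus within-group comparison described above can be sketched as a one-way ANOVA ''F'' statistic. This is a minimal illustration in plain Python; the function name `one_way_anova_f` is hypothetical, and the step of converting ''F'' to a ''p''-value is omitted.

```python
def one_way_anova_f(groups):
    """F = (between-group mean square) / (within-group mean square).

    Assumes at least two groups and a non-zero within-group
    sum of squares.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of the observations around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    return ms_between / ms_within


if __name__ == "__main__":
    print(one_way_anova_f([[1, 2, 3], [2, 3, 4], [3, 4, 5]]))
```

A large ''F'' means the group means differ by more than the within-group scatter would suggest.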

Levene's Test

In statistics, Levene's test is an inferential statistic used to assess the equality of variances for a variable calculated for two or more groups. This test is used because some common statistical procedures assume that variances of the populations from which different samples are drawn are equal. Levene's test assesses this assumption. It tests the null hypothesis that the population variances are equal (called ''homogeneity of variance'' or ''homoscedasticity''). If the resulting ''p''-value of Levene's test is less than some significance level (typically 0.05), the obtained differences in sample variances are unlikely to have occurred based on random sampling from a population with equal variances. Thus, the null hypothesis of equal variances is rejected and it is concluded that there is a difference between the variances in the population. Levene's test has been used in the past before a comparison of means to inform the decision on whether to use a pooled t-test or the ...

Brown–Forsythe Test

The Brown–Forsythe test is a statistical test for the equality of group variances based on performing an Analysis of Variance (ANOVA) on a transformation of the response variable. When a one-way ANOVA is performed, samples are assumed to have been drawn from distributions with equal variance. If this assumption is not valid, the resulting ''F''-test is invalid. The Brown–Forsythe test statistic is the ''F'' statistic resulting from an ordinary one-way analysis of variance on the absolute deviations of the groups or treatments data from their individual medians.

Transformation: The transformed response variable is constructed to measure the spread in each group. Let

: z_{ij} = \left\vert y_{ij} - \tilde{y}_j \right\vert

where \tilde{y}_j is the median of group ''j''. The Brown–Forsythe test statistic is the model ''F'' statistic from a one-way ANOVA on z_{ij}:

: F = \frac{(N-p)}{(p-1)} \frac{\sum_{j=1}^{p} n_j (\tilde{z}_{\cdot j} - \tilde{z}_{\cdot\cdot})^2}{\sum_{j=1}^{p} \sum_{i=1}^{n_j} (z_{ij} - \tilde{z}_{\cdot j})^2}

where ''p'' is the number of groups, n_j is the number of observations in group ''j'', and ''N'' is the ...
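
The transformation and the resulting ''F'' statistic can be sketched as follows. This is a minimal illustration in plain Python: each observation is replaced by its absolute deviation from its group median, and a one-way ANOVA ''F'' statistic is then computed on the transformed values. The function name `brown_forsythe_f` is hypothetical, and no ''p''-value is computed.

```python
import statistics


def brown_forsythe_f(groups):
    """One-way ANOVA F statistic on |y_ij - median_j|.

    Assumes at least two groups and a non-zero within-group
    sum of squares for the transformed values.
    """
    # Transform: absolute deviation from the group's own median.
    z_groups = [
        [abs(y - statistics.median(g)) for y in g] for g in groups
    ]

    p = len(z_groups)
    n_total = sum(len(z) for z in z_groups)
    z_means = [sum(z) / len(z) for z in z_groups]
    z_grand = sum(sum(z) for z in z_groups) / n_total

    between = sum(
        len(z) * (m - z_grand) ** 2 for z, m in zip(z_groups, z_means)
    )
    within = sum(
        (v - m) ** 2 for z, m in zip(z_groups, z_means) for v in z
    )
    return (n_total - p) / (p - 1) * between / within


if __name__ == "__main__":
    print(brown_forsythe_f([[1, 2, 3], [0, 5, 10]]))
```

Using the median rather than the mean as the centre is what distinguishes this statistic from the mean-centred variant.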

Sample Variance

In probability theory and statistics, variance is the expected value of the squared deviation from the mean of a random variable. The standard deviation (SD) is obtained as the square root of the variance. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. It is the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for example, the variance of a sum of uncorrelated random variables is equal to the sum of their variances. A disadvantage of the variance for practical applications is that, unlike the standard deviation, its units differ from the random variable, which is why the standard devia ...

Likelihood Ratio Test

In statistics, the likelihood-ratio test is a hypothesis test that involves comparing the goodness of fit of two competing statistical models, typically one found by maximization over the entire parameter space and another found after imposing some constraint, based on the ratio of their likelihoods. If the more constrained model (i.e., the null hypothesis) is supported by the observed data, the two likelihoods should not differ by more than sampling error. Thus the likelihood-ratio test tests whether this ratio is significantly different from one, or equivalently whether its natural logarithm is significantly different from zero. The likelihood-ratio test, also known as Wilks test, is the oldest of the three classical approaches to hypothesis testing, together with the Lagrange multiplier test and the Wald test. In fact, the latter two can be conceptualized as approximations to the likelihood-ratio test, and are asymptotically equivalent. In the case of comparing two model ...
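
As a small worked case of comparing a constrained model with an unconstrained one, the sketch below tests a fixed success probability for binomial data. The constrained model fixes the probability at `p_null`; the unconstrained model uses the maximum-likelihood estimate (the observed proportion). The statistic is −2 times the difference in log-likelihoods, so it is zero when the two models fit equally well. The binomial setting and all function names are assumptions chosen for illustration, and the comparison against a reference distribution is omitted.

```python
import math


def binomial_log_likelihood(p, successes, trials):
    """Log-likelihood up to the binomial coefficient, which cancels
    in the ratio. Terms with a zero count are skipped so that
    0 * log(0) is treated as 0."""
    failures = trials - successes
    ll = 0.0
    if successes:
        ll += successes * math.log(p)
    if failures:
        ll += failures * math.log(1 - p)
    return ll


def likelihood_ratio_statistic(successes, trials, p_null):
    """-2 * (log-likelihood under the null - maximized log-likelihood).

    Assumes 0 < p_null < 1.
    """
    p_hat = successes / trials  # unconstrained maximum-likelihood estimate
    ll_null = binomial_log_likelihood(p_null, successes, trials)
    ll_alt = binomial_log_likelihood(p_hat, successes, trials)
    return -2.0 * (ll_null - ll_alt)


if __name__ == "__main__":
    print(likelihood_ratio_statistic(8, 10, 0.5))
```

Because the unconstrained model can never fit worse than the constrained one, the statistic is always non-negative.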

Box's M Test

Box's ''M'' test is a multivariate statistical test used to check the equality of multiple variance-covariance matrices. The test is commonly used to test the assumption of homogeneity of variances and covariances in MANOVA and linear discriminant analysis. It is named after George E. P. Box, who first discussed the test in 1949. The test uses a chi-squared approximation. Box's ''M'' test is susceptible to errors if the data does not meet model assumptions or if the sample size is too large or small. Box's ''M'' test is especially prone to error if the data does not meet the assumption of multivariate normality. See also: Bartlett's test, Levene's test.

Kaiser–Meyer–Olkin Test

The Kaiser–Meyer–Olkin (KMO) test is a statistical measure to determine how suited data is for factor analysis. The test measures sampling adequacy for each variable in the model and the complete model. The statistic is a measure of the proportion of variance among variables that might be common variance. The higher the proportion, the higher the KMO-value, the more suited the data is to factor analysis.

History: Henry Kaiser introduced a Measure of Sampling Adequacy (MSA) of factor analytic data matrices in 1970. Kaiser and Rice then modified it in 1974.

Measure of sampling adequacy: The measure of sampling adequacy is calculated for each indicator as

: MSA_j = \frac{\sum_{k \neq j} r_{jk}^2}{\sum_{k \neq j} r_{jk}^2 + \sum_{k \neq j} p_{jk}^2}

and indicates to what extent an indicator is suitable for a factor analysis.

Kaiser–Meyer–Olkin criterion: The Kaiser–Meyer–Olkin criterion is calculated and returns values between 0 and 1.

: KMO = \frac{\sum_{j \neq k} r_{jk}^2}{\sum_{j \neq k} r_{jk}^2 + \sum_{j \neq k} p_{jk}^2}

Here r_{jk} is the correlation between the variable in question and another, and p_{jk} is the par ...
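
The two measures can be sketched in plain Python. This illustration assumes the commonly cited form of the criterion: the sum of squared off-diagonal correlations divided by that sum plus the sum of squared off-diagonal partial correlations. Both matrices are taken as precomputed inputs (computing partial correlations from data is not shown), and the function names are hypothetical.

```python
def _off_diagonal_squares(matrix, row):
    """Sum of squared entries in one row, excluding the diagonal."""
    return sum(v ** 2 for col, v in enumerate(matrix[row]) if col != row)


def msa(correlations, partials, j):
    """Measure of sampling adequacy for variable j."""
    r2 = _off_diagonal_squares(correlations, j)
    p2 = _off_diagonal_squares(partials, j)
    return r2 / (r2 + p2)


def kmo(correlations, partials):
    """Overall Kaiser-Meyer-Olkin criterion over all variable pairs."""
    n = len(correlations)
    r2 = sum(_off_diagonal_squares(correlations, j) for j in range(n))
    p2 = sum(_off_diagonal_squares(partials, j) for j in range(n))
    return r2 / (r2 + p2)


if __name__ == "__main__":
    # Illustrative matrices only, not derived from real data.
    r = [[1.0, 0.5, 0.5], [0.5, 1.0, 0.5], [0.5, 0.5, 1.0]]
    p = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    print(kmo(r, p), msa(r, p, 0))
```

When the partial correlations are small relative to the ordinary correlations, the value approaches 1, which is the case the text describes as well suited to factor analysis.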