Information retrieval (IR) in

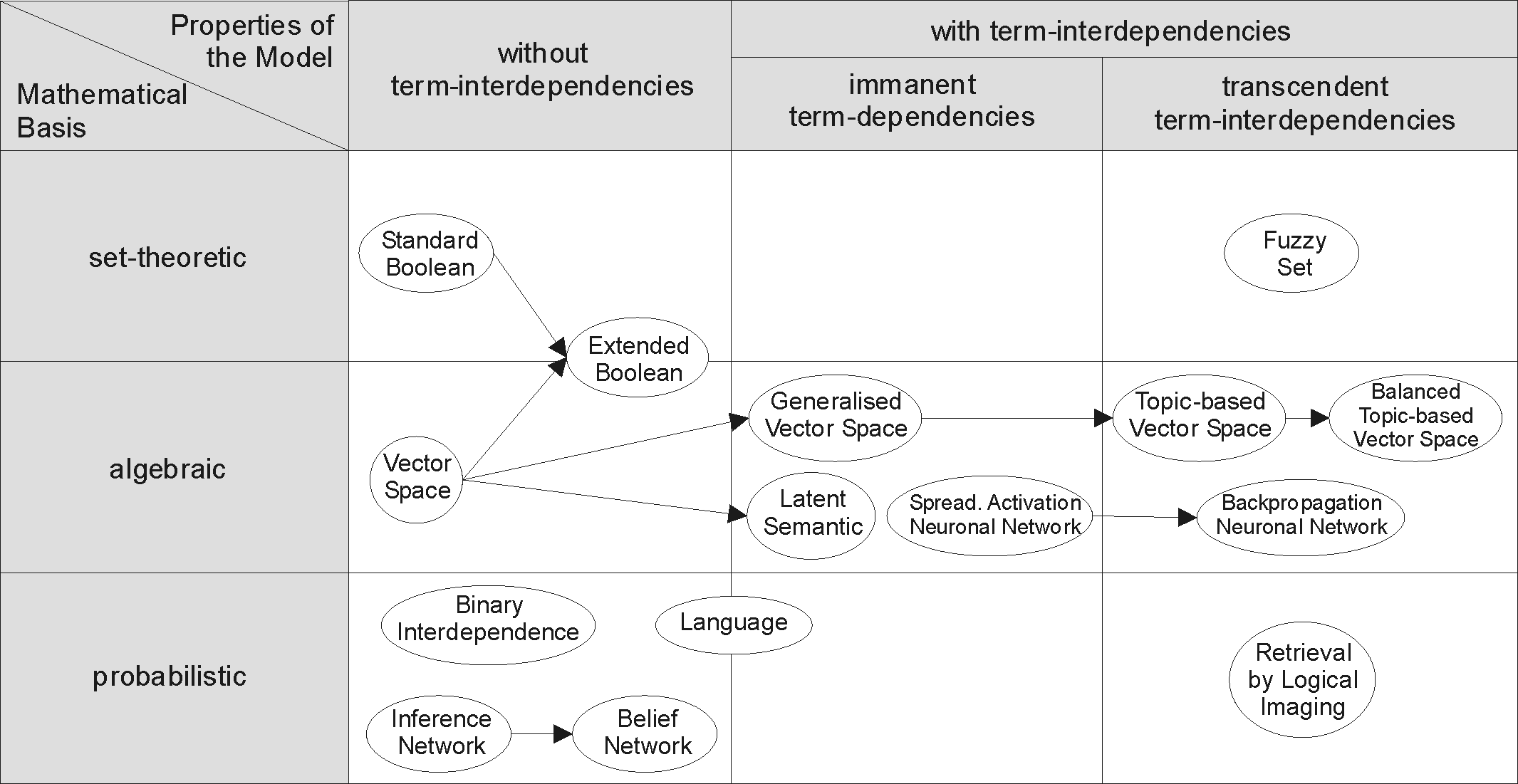

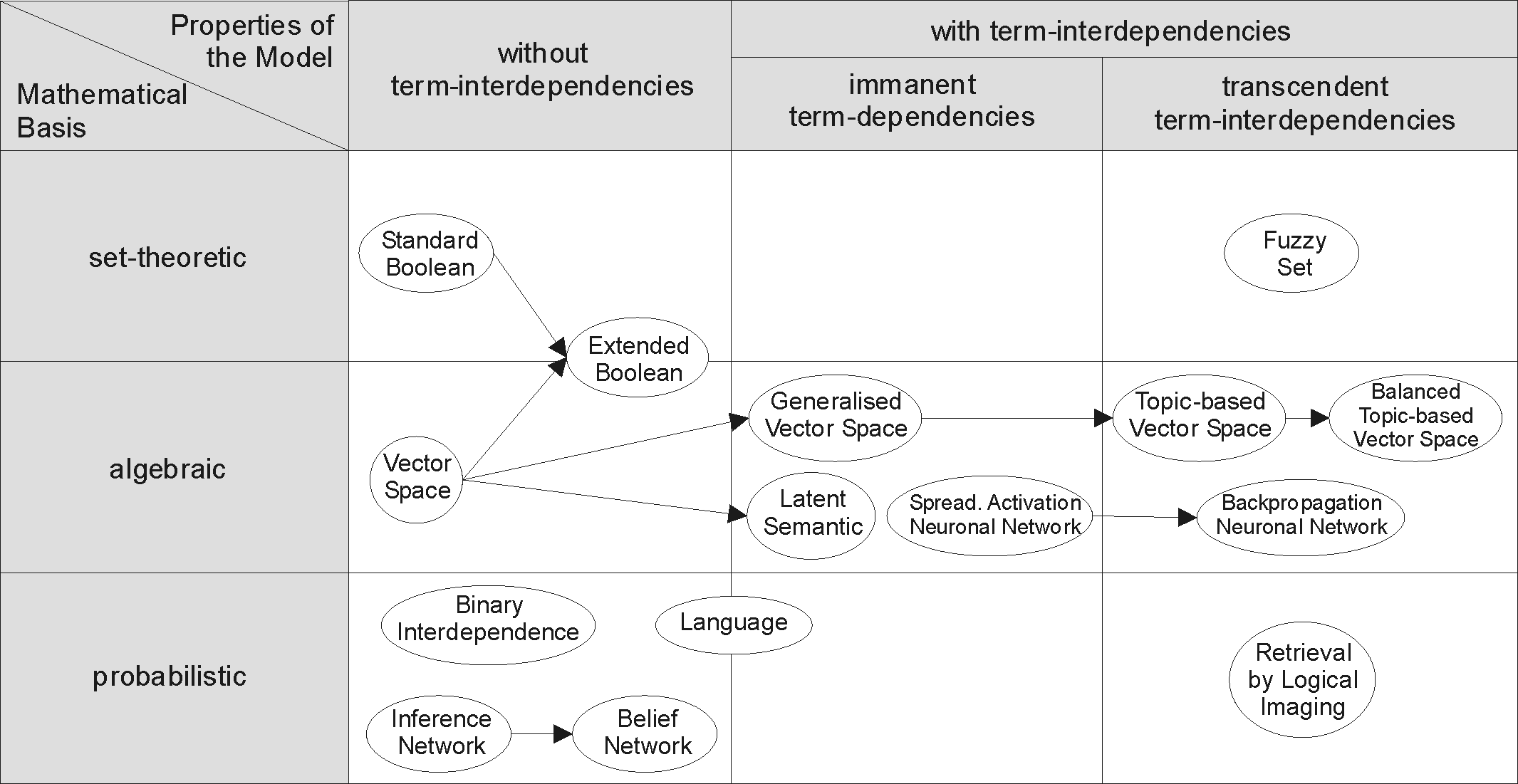

In order to effectively retrieve relevant documents by IR strategies, the documents are typically transformed into a suitable representation. Each retrieval strategy incorporates a specific model for its document representation purposes. The picture on the right illustrates the relationship of some common models. In the picture, the models are categorized according to two dimensions: the mathematical basis and the properties of the model.

In order to effectively retrieve relevant documents by IR strategies, the documents are typically transformed into a suitable representation. Each retrieval strategy incorporates a specific model for its document representation purposes. The picture on the right illustrates the relationship of some common models. In the picture, the models are categorized according to two dimensions: the mathematical basis and the properties of the model.

A nonlinear mapping for data structure analysis

" (IEEE Transactions on Computers) was the first proposal for visualization interface to an IR system. * 1970s *: early 1970s: *::* First online systems—NLM's AIM-TWX, MEDLINE; Lockheed's Dialog; SDC's ORBIT. *::* Theodor Nelson promoting concept of hypertext, published ''Computer Lib/Dream Machines''. *: 1971: Nicholas Jardine and Cornelis J. van Rijsbergen published "The use of hierarchic clustering in information retrieval", which articulated the "cluster hypothesis". *: 1975: Three highly influential publications by Salton fully articulated his vector processing framework and term discrimination model: *::* ''A Theory of Indexing'' (Society for Industrial and Applied Mathematics) *::* ''A Theory of Term Importance in Automatic Text Analysis'' ( JASIS v. 26) *::* ''A Vector Space Model for Automatic Indexing'' ( CACM 18:11) *: 1978: The First ACM SIGIR conference. *: 1979: C. J. van Rijsbergen published ''Information Retrieval'' (Butterworths). Heavy emphasis on probabilistic models. *: 1979: Tamas Doszkocs implemented the CITE natural language user interface for MEDLINE at the National Library of Medicine. The CITE system supported free form query input, ranked output and relevance feedback. * 1980s *: 1980: First international ACM SIGIR conference, joint with British Computer Society IR group in Cambridge. *: 1982: Nicholas J. Belkin, Robert N. Oddy, and Helen M. Brooks proposed the ASK (Anomalous State of Knowledge) viewpoint for information retrieval. This was an important concept, though their automated analysis tool proved ultimately disappointing. *: 1983: Salton (and Michael J. McGill) published ''Introduction to Modern Information Retrieval'' (McGraw-Hill), with heavy emphasis on vector models. *: 1985: David Blair and Bill Maron publish: An Evaluation of Retrieval Effectiveness for a Full-Text Document-Retrieval System *: mid-1980s: Efforts to develop end-user versions of commercial IR systems. 
*:: 1985–1993: Key papers on and experimental systems for visualization interfaces. *:: Work by Donald B. Crouch, Robert R. Korfhage, Matthew Chalmers, Anselm Spoerri and others. *: 1989: First

Modern Information Retrieval: The Concepts and Technology behind Search (second edition)

. Addison-Wesley, UK, 2011. * Stefan Büttcher, Charles L. A. Clarke, and Gordon V. Cormack

Information Retrieval: Implementing and Evaluating Search Engines

. MIT Press, Cambridge, Massachusetts, 2010. * * Christopher D. Manning, Prabhakar Raghavan, and Hinrich Schütze

Introduction to Information Retrieval

Cambridge University Press, 2008. * Yeo, ShinJoung. (2023) ''Behind the Search Box: Google and the Global Internet Industry'' (U of Illinois Press, 2023)

online

ACM SIGIR: Information Retrieval Special Interest GroupBCS IRSG: British Computer Society – Information Retrieval Specialist GroupText Retrieval Conference (TREC)Forum for Information Retrieval Evaluation (FIRE)

(online book) by C. J. van Rijsbergen

Information Retrieval Wiki

Information Retrieval Facility

TREC report on information retrieval evaluation techniquesHow eBay measures search relevanceInformation retrieval performance evaluation tool @ Athena Research Centre

{{DEFAULTSORT:Information Retrieval Natural language processing

computing

Computing is any goal-oriented activity requiring, benefiting from, or creating computer, computing machinery. It includes the study and experimentation of algorithmic processes, and the development of both computer hardware, hardware and softw ...

and information science is the task of identifying and retrieving information system

An information system (IS) is a formal, sociotechnical, organizational system designed to collect, process, Information Processing and Management, store, and information distribution, distribute information. From a sociotechnical perspective, info ...

resources that are relevant to an information need. The information need can be specified in the form of a search query. In the case of document retrieval, queries can be based on full-text or other content-based indexing. Information retrieval is the science

Science is a systematic discipline that builds and organises knowledge in the form of testable hypotheses and predictions about the universe. Modern science is typically divided into twoor threemajor branches: the natural sciences, which stu ...

of searching for information in a document, searching for documents themselves, and also searching for the metadata

Metadata (or metainformation) is "data that provides information about other data", but not the content of the data itself, such as the text of a message or the image itself. There are many distinct types of metadata, including:

* Descriptive ...

that describes data, and for database

In computing, a database is an organized collection of data or a type of data store based on the use of a database management system (DBMS), the software that interacts with end users, applications, and the database itself to capture and a ...

s of texts, images or sounds.

Automated information retrieval systems are used to reduce what has been called information overload. An IR system is a software system that provides access to books, journals and other documents; it also stores and manages those documents. Web search engines are the most visible IR applications.

Overview

An information retrieval process begins when a user enters a query into the system. Queries are formal statements of information needs, for example search strings in web search engines. In information retrieval, a query does not uniquely identify a single object in the collection. Instead, several objects may match the query, perhaps with different degrees of relevance. An object is an entity that is represented by information in a content collection or database. User queries are matched against the database information. However, as opposed to classical SQL queries of a database, in information retrieval the results returned may or may not match the query, so results are typically ranked. This ranking of results is a key difference of information retrieval searching compared to database searching.

Depending on the application, the data objects may be, for example, text documents, images, audio, mind maps or videos. Often the documents themselves are not kept or stored directly in the IR system, but are instead represented in the system by document surrogates or metadata.

Most IR systems compute a numeric score on how well each object in the database matches the query, and rank the objects according to this value. The top ranking objects are then shown to the user. The process may then be iterated if the user wishes to refine the query.
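The score-then-rank loop described above can be sketched in a few lines. This is a minimal illustration, not how any particular system works: the scoring function is a toy TF-IDF sum, and the names `tokenize`, `rank`, and the sample documents are assumptions made for the example.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase and split on whitespace (a deliberately naive tokenizer)."""
    return text.lower().split()


def rank(query, documents, top_k=3):
    """Score every document against the query and return the top_k as (doc_id, score)."""
    doc_tokens = [tokenize(d) for d in documents]
    n_docs = len(documents)

    # Document frequency: in how many documents each term occurs.
    df = Counter()
    for tokens in doc_tokens:
        df.update(set(tokens))

    query_terms = tokenize(query)
    scored = []
    for doc_id, tokens in enumerate(doc_tokens):
        tf = Counter(tokens)
        # Toy TF-IDF: term frequency weighted by a smoothed inverse document frequency.
        score = sum(
            tf[t] * math.log(1 + n_docs / df[t]) for t in query_terms if t in tf
        )
        scored.append((doc_id, score))

    # Highest score first; ties broken by document id for determinism.
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return scored[:top_k]


docs = [
    "the cat sat on the mat",
    "dogs and cats living together",
    "information retrieval ranks documents",
    "ranking documents by relevance to a query",
]
results = rank("ranking documents", docs, top_k=2)
for doc_id, score in results:
    print(doc_id, round(score, 3), docs[doc_id])
```

A user refining the query would simply call `rank` again with the new query string, which corresponds to the iteration step mentioned above.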

History

The idea of using computers to search for relevant pieces of information was popularized in the article ''As We May Think'' by Vannevar Bush in 1945. It would appear that Bush was inspired by patents for a 'statistical machine', filed by Emanuel Goldberg in the 1920s and 1930s, that searched for documents stored on film. The first description of a computer searching for information was given by Holmstrom in 1948, in an early mention of the Univac computer. Automated information retrieval systems were introduced in the 1950s: one even featured in the 1957 romantic comedy ''Desk Set''. In the 1960s, the first large information retrieval research group was formed by Gerard Salton at Cornell. By the 1970s several different retrieval techniques had been shown to perform well on small text corpora such as the Cranfield collection (several thousand documents). Large-scale retrieval systems, such as the Lockheed Dialog system, came into use early in the 1970s.

In 1992, the US Department of Defense, along with the National Institute of Standards and Technology (NIST), cosponsored the Text Retrieval Conference (TREC) as part of the TIPSTER text program. The aim was to support the information retrieval community by supplying the infrastructure needed to evaluate text retrieval methodologies on a very large text collection. This catalyzed research on methods that scale to huge corpora. The introduction of web search engines has boosted the need for very large scale retrieval systems even further.

By the late 1990s, the rise of the World Wide Web fundamentally transformed information retrieval. While early search engines such as AltaVista (1995) and Yahoo! (1994) offered keyword-based retrieval, they were limited in scale and ranking refinement. The breakthrough came in 1998 with the founding of Google, which introduced the PageRank algorithm, using the web’s hyperlink structure to assess page importance and improve relevance ranking.

During the 2000s, web search systems evolved rapidly with the integration of machine learning techniques. These systems began to incorporate user behavior data (e.g., click-through logs), query reformulation, and content-based signals to improve search accuracy and personalization. In 2009, Microsoft launched Bing, introducing features that would later incorporate semantic web technologies through the development of its Satori knowledge base. Academic analyses have highlighted Bing’s semantic capabilities, including structured data use and entity recognition, as part of a broader industry shift toward improving search relevance and understanding user intent through natural language processing.

A major leap occurred in 2018, when Google deployed BERT (Bidirectional Encoder Representations from Transformers) to better understand the contextual meaning of queries and documents. This marked one of the first times deep neural language models were used at scale in real-world retrieval systems. BERT’s bidirectional training enabled a more refined comprehension of word relationships in context, improving the handling of natural language queries. Because of its success, transformer-based models gained traction in academic research and commercial search applications.

Simultaneously, the research community began exploring neural ranking models that outperformed traditional lexical-based methods. Long-standing benchmarks such as the Text REtrieval Conference (TREC), initiated in 1992, and more recent evaluation frameworks such as MS MARCO (Microsoft MAchine Reading COmprehension) (2019) became central to training and evaluating retrieval systems across multiple tasks and domains. MS MARCO has also been adopted in the TREC Deep Learning Tracks, where it serves as a core dataset for evaluating advances in neural ranking models within a standardized benchmarking environment.

As deep learning became integral to information retrieval systems, researchers began to categorize neural approaches into three broad classes: sparse, dense, and hybrid models. Sparse models, including traditional term-based methods and learned variants like SPLADE, rely on interpretable representations and inverted indexes to enable efficient exact term matching with added semantic signals. Dense models, such as dual-encoder architectures like ColBERT, use continuous vector embeddings to support semantic similarity beyond keyword overlap. Hybrid models aim to combine the advantages of both, balancing the lexical (token-level) precision of sparse methods with the semantic depth of dense models. This categorization reflects the trade-offs among scalability, relevance, and efficiency in retrieval systems.
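One simple way to combine a sparse and a dense ranker, as hybrid models do, is to fuse their ranked lists. The sketch below uses reciprocal rank fusion, a technique chosen here for illustration rather than one named in the text above; the function name, the constant `k=60`, and the document identifiers are assumptions.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into one list.

    Each document receives 1 / (k + rank) from every list it appears in
    (rank starts at 1); documents are returned by descending fused score.
    """
    fused = defaultdict(float)
    for ranking in rankings:
        for position, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + position)
    # Ties are broken by document id so the output is deterministic.
    return sorted(fused, key=lambda doc_id: (-fused[doc_id], doc_id))


# Hypothetical outputs of a lexical (sparse) ranker and an embedding (dense) ranker.
sparse_ranking = ["d1", "d2", "d3"]
dense_ranking = ["d3", "d1", "d4"]

fused_ranking = reciprocal_rank_fusion([sparse_ranking, dense_ranking])
print(fused_ranking)
```

Rank-based fusion avoids having to calibrate the two rankers' raw scores against each other, which is one reason it is a common starting point; weighted score fusion is an alternative when scores are comparable.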

As IR systems increasingly rely on deep learning, concerns around bias, fairness, and explainability have also come to the fore. Research now focuses not just on relevance and efficiency, but also on transparency, accountability, and user trust in retrieval algorithms.

Applications

Areas where information retrieval techniques are employed include (the entries are in alphabetical order within each category):

General applications

* Digital libraries

* Information filtering

** Recommender systems

* Media search

** Blog search

** Image retrieval

** 3D retrieval

** Music retrieval

** News search

** Speech retrieval

** Video retrieval

* Search engines

** Site search

** Desktop search

** Enterprise search

** Federated search

** Mobile search

** Social search

** Web search

Domain-specific applications

* Expert search finding

* Genomic information retrieval

* Geographic information retrieval

* Information retrieval for chemical structures

* Information retrieval in software engineering

* Legal information retrieval

* Vertical search

Other retrieval methods

Methods/Techniques in which information retrieval techniques are employed include:

* Adversarial information retrieval

* Automatic summarization

** Multi-document summarization

* Compound term processing

* Cross-lingual retrieval

* Document classification

* Spam filtering

* Question answering

Model types

In order to effectively retrieve relevant documents by IR strategies, the documents are typically transformed into a suitable representation. Each retrieval strategy incorporates a specific model for its document representation purposes. The picture on the right illustrates the relationship of some common models. In the picture, the models are categorized according to two dimensions: the mathematical basis and the properties of the model.


First dimension: mathematical basis

* ''Set-theoretic'' models represent documents as sets of words or phrases. Similarities are usually derived from set-theoretic operations on those sets. Common models are:

** Standard Boolean model

** Extended Boolean model

** Fuzzy retrieval

* ''Algebraic models'' represent documents and queries usually as vectors, matrices, or tuples. The similarity of the query vector and document vector is represented as a scalar value.

** Vector space model

** Generalized vector space model

** (Enhanced) Topic-based Vector Space Model

** Extended Boolean model

** Latent semantic indexing, a.k.a. latent semantic analysis

* ''Probabilistic models'' treat the process of document retrieval as a probabilistic inference. Similarities are computed as probabilities that a document is relevant for a given query. Probabilistic theorems like Bayes' theorem are often used in these models.

** Binary Independence Model

** Probabilistic relevance model, on which the Okapi (BM25) relevance function is based

** Uncertain inference

** Language models

** Divergence-from-randomness model

** Latent Dirichlet allocation

* ''Feature-based retrieval models'' view documents as vectors of values of ''feature functions'' (or just ''features'') and seek the best way to combine these features into a single relevance score, typically by learning-to-rank methods. Feature functions are arbitrary functions of document and query, and as such can easily incorporate almost any other retrieval model as just another feature.
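As an illustration of one entry in the list above, the Okapi BM25 relevance function can be sketched as follows. This is a minimal version under stated assumptions: whitespace tokenization, a non-empty collection, the commonly used defaults `k1=1.5` and `b=0.75`, and a smoothed IDF variant that stays non-negative. The function name and sample documents are hypothetical.

```python
import math
from collections import Counter


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Return one BM25 score per document for the given query.

    Assumes `documents` is a non-empty list of strings.
    """
    docs = [d.lower().split() for d in documents]
    n_docs = len(docs)
    avg_len = sum(len(d) for d in docs) / n_docs

    # Document frequency of each term across the collection.
    df = Counter()
    for d in docs:
        df.update(set(d))

    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in query.lower().split():
            if tf[term] == 0:
                continue  # terms absent from the document contribute nothing
            # Smoothed inverse document frequency (always positive).
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation with document-length normalization.
            denom = tf[term] + k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


collection = [
    "okapi bm25 ranking function",
    "vector space model",
    "bm25 bm25 probabilistic ranking",
]
scores = bm25_scores("bm25 ranking", collection)
print(scores)
```

In a feature-based model, a score like this would typically be just one feature among many that a learned ranker combines.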

Second dimension: properties of the model

* ''Models without term-interdependencies'' treat different terms/words as independent. This fact is usually represented in vector space models by the orthogonality assumption of term vectors or in probabilistic models by an independence assumption for term variables.

* ''Models with immanent term interdependencies'' allow a representation of interdependencies between terms. However, the degree of the interdependency between two terms is defined by the model itself. It is usually directly or indirectly derived (e.g. by dimensional reduction) from the co-occurrence of those terms in the whole set of documents.

* ''Models with transcendent term interdependencies'' allow a representation of interdependencies between terms, but they do not specify how the interdependency between two terms is defined. They rely on an external source for the degree of interdependency between two terms (for example, a human or sophisticated algorithms).

Third dimension: representational approach-based classification

In addition to the theoretical distinctions, modern information retrieval models are also categorized by how queries and documents are represented and compared, using a practical classification that distinguishes between sparse, dense and hybrid models.

* ''Sparse'' models utilize interpretable, term-based representations and typically rely on inverted index structures. Classical methods such as TF-IDF and BM25 fall under this category, along with more recent learned sparse models that integrate neural architectures while retaining sparsity.

* ''Dense'' models represent queries and documents as continuous vectors using deep learning models, typically transformer-based encoders. These models enable semantic similarity matching beyond exact term overlap and are used in tasks involving semantic search and question answering.

* ''Hybrid'' models aim to combine the strengths of both approaches, integrating lexical (token-level) and semantic signals through score fusion, late interaction, or multi-stage ranking pipelines.

This classification has become increasingly common in both academic and real-world applications and is widely used in evaluation benchmarks for information retrieval models.

Performance and correctness measures
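Precision and recall are the traditional measures for this kind of evaluation. The sketch below is a minimal illustration, assuming binary relevance judgments are available as a set of document identifiers; the function names and the sample identifiers are hypothetical.

```python
def precision_recall(retrieved, relevant):
    """Set-based precision and recall for one query.

    precision = |retrieved ∩ relevant| / |retrieved|
    recall    = |retrieved ∩ relevant| / |relevant|
    """
    retrieved_set = set(retrieved)
    relevant_set = set(relevant)
    hits = len(retrieved_set & relevant_set)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    return precision, recall


def precision_at_k(ranked, relevant, k):
    """Fraction of the top-k ranked documents that are relevant."""
    if k <= 0:
        return 0.0
    relevant_set = set(relevant)
    return sum(1 for doc_id in ranked[:k] if doc_id in relevant_set) / k


# Hypothetical system output (best first) and ground-truth judgments.
ranked_results = ["d3", "d1", "d7", "d2"]
relevant_docs = {"d1", "d2", "d5"}

precision, recall = precision_recall(ranked_results, relevant_docs)
p_at_2 = precision_at_k(ranked_results, relevant_docs, k=2)
print(precision, recall, p_at_2)
```

Here two of the four retrieved documents are relevant and two of the three relevant documents were found, so the two measures capture different kinds of error: returning non-relevant items versus missing relevant ones.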

The evaluation of an information retrieval system is the process of assessing how well a system meets the information needs of its users. In general, measurement considers a collection of documents to be searched and a search query. Traditional evaluation metrics, designed for Boolean retrieval or top-k retrieval, include precision and recall. All measures assume a ground truth notion of relevance: every document is known to be either relevant or non-relevant to a particular query. In practice, queries may be ill-posed and there may be different shades of relevance.

Libraries for searching and indexing

* Lemur

* Lucene

** Solr

** Elasticsearch

* Manatee

* Manticore search

* Sphinx

* Terrier Search Engine

* Xapian

Timeline

* Before the 1900s

*: 1801: Joseph Marie Jacquard invents the Jacquard loom, the first machine to use punched cards to control a sequence of operations.

*: 1880s: Herman Hollerith invents an electro-mechanical data tabulator using punch cards as a machine readable medium.

*: 1890: Hollerith cards, keypunches and tabulators are used to process the 1890 US census data.

* 1920s–1930s

*: Emanuel Goldberg submits patents for his "Statistical Machine", a document search engine that used photoelectric cells and pattern recognition to search the metadata on rolls of microfilmed documents.

* 1940s–1950s

*: late 1940s: The US military confronted problems of indexing and retrieval of wartime scientific research documents captured from Germans.

*:: 1945: Vannevar Bush's ''As We May Think'' appeared in ''Atlantic Monthly''.

*:: 1947: Hans Peter Luhn (research engineer at IBM since 1941) began work on a mechanized punch card-based system for searching chemical compounds.

*: 1950s: Growing concern in the US for a "science gap" with the USSR motivated and encouraged funding, and provided a backdrop, for mechanized literature searching systems (Allen Kent ''et al.'') and the invention of the citation index by Eugene Garfield.

*: 1950: The term "information retrieval" was coined by Calvin Mooers.

*: 1951: Philip Bagley conducted the earliest experiment in computerized document retrieval in a master's thesis at MIT.

*: 1955: Allen Kent joined Case Western Reserve University, and eventually became associate director of the Center for Documentation and Communications Research. That same year, Kent and colleagues published a paper in American Documentation describing the precision and recall measures as well as detailing a proposed "framework" for evaluating an IR system which included statistical sampling methods for determining the number of relevant documents not retrieved.

*: 1958: The International Conference on Scientific Information in Washington, DC, included consideration of IR systems as a solution to problems identified. See: ''Proceedings of the International Conference on Scientific Information, 1958'' (National Academy of Sciences, Washington, DC, 1959).

*: 1959: Hans Peter Luhn published "Auto-encoding of documents for information retrieval".

* 1960s:

*: early 1960s: Gerard Salton began work on IR at Harvard, later moved to Cornell.

*: 1960: Melvin Earl Maron and John Lary Kuhns published "On relevance, probabilistic indexing, and information retrieval" in the Journal of the ACM 7(3):216–244, July 1960.

*: 1962:

*:* Cyril W. Cleverdon published early findings of the Cranfield studies, developing a model for IR system evaluation. See: Cyril W. Cleverdon, "Report on the Testing and Analysis of an Investigation into the Comparative Efficiency of Indexing Systems". College of Aeronautics, Cranfield, England, 1962.

*:* Kent published ''Information Analysis and Retrieval''.

*: 1963:

*:* Weinberg report "Science, Government and Information" gave a full articulation of the idea of a "crisis of scientific information". The report was named after Dr. Alvin Weinberg.

*:* Joseph Becker and Robert M. Hayes published text on information retrieval. Becker, Joseph; Hayes, Robert Mayo. ''Information storage and retrieval: tools, elements, theories''. New York, Wiley (1963).

*: 1964:

*:* Karen Spärck Jones finished her thesis at Cambridge, ''Synonymy and Semantic Classification'', and continued work on computational linguistics as it applies to IR.

*:* The National Bureau of Standards sponsored a symposium titled "Statistical Association Methods for Mechanized Documentation". It featured several highly significant papers, including what is believed to be G. Salton's first published reference to the SMART system.

*: mid-1960s:

*::* National Library of Medicine developed MEDLARS (Medical Literature Analysis and Retrieval System), the first major machine-readable database and batch-retrieval system.

*::* Project Intrex at MIT.

*:: 1965: J. C. R. Licklider published ''Libraries of the Future''.

*:: 1966: Don Swanson was involved in studies at University of Chicago on Requirements for Future Catalogs.

*: late 1960s: F. Wilfrid Lancaster completed evaluation studies of the MEDLARS system and published the first edition of his text on information retrieval.

*:: 1968:

*:* Gerard Salton published ''Automatic Information Organization and Retrieval''.

*:* John W. Sammon, Jr.'s RADC Tech report "Some Mathematics of Information Storage and Retrieval..." outlined the vector model.

*:: 1969: Sammon's "A nonlinear mapping for data structure analysis" (IEEE Transactions on Computers) was the first proposal for a visualization interface to an IR system.

* 1970s

*: early 1970s:

*::* First online systems: NLM's AIM-TWX, MEDLINE; Lockheed's Dialog; SDC's ORBIT.

*::* Theodor Nelson, promoting the concept of hypertext, published ''Computer Lib/Dream Machines''.

*: 1971: Nicholas Jardine and Cornelis J. van Rijsbergen published "The use of hierarchic clustering in information retrieval", which articulated the "cluster hypothesis".

*: 1975: Three highly influential publications by Salton fully articulated his vector processing framework and term discrimination model:

*::* ''A Theory of Indexing'' (Society for Industrial and Applied Mathematics)

*::* ''A Theory of Term Importance in Automatic Text Analysis'' (JASIS v. 26)

*::* ''A Vector Space Model for Automatic Indexing'' (CACM 18:11)

*: 1978: The first ACM SIGIR conference.

*: 1979: C. J. van Rijsbergen published ''Information Retrieval'' (Butterworths), with heavy emphasis on probabilistic models.

*: 1979: Tamas Doszkocs implemented the CITE natural language user interface for MEDLINE at the National Library of Medicine. The CITE system supported free form query input, ranked output and relevance feedback.

* 1980s

*: 1980: First international ACM SIGIR conference, joint with British Computer Society IR group in Cambridge.

*: 1982: Nicholas J. Belkin, Robert N. Oddy, and Helen M. Brooks proposed the ASK (Anomalous State of Knowledge) viewpoint for information retrieval. This was an important concept, though their automated analysis tool proved ultimately disappointing.

*: 1983: Salton (and Michael J. McGill) published ''Introduction to Modern Information Retrieval'' (McGraw-Hill), with heavy emphasis on vector models.

*: 1985: David Blair and Bill Maron published "An Evaluation of Retrieval Effectiveness for a Full-Text Document-Retrieval System".

*: mid-1980s: Efforts to develop end-user versions of commercial IR systems.

*:: 1985–1993: Key papers on and experimental systems for visualization interfaces.

*:: Work by Donald B. Crouch, Robert R. Korfhage, Matthew Chalmers, Anselm Spoerri and others.

*: 1989: First World Wide Web proposals by Tim Berners-Lee at CERN.

* 1990s

*: 1992: First TREC conference.

*: 1997: Publication of Korfhage's ''Information Storage and Retrieval'' with emphasis on visualization and multi-reference point systems.

*: 1998: Google is founded by Larry Page and Sergey Brin. It introduces the PageRank algorithm, which evaluates the importance of web pages based on hyperlink structure.

*: 1999: Publication of Ricardo Baeza-Yates and Berthier Ribeiro-Neto's ''Modern Information Retrieval'' by Addison Wesley, the first book that attempts to cover all IR.

* 2000s

*: 2001: Wikipedia launches as a free, collaborative online encyclopedia. It quickly becomes a major resource for information retrieval, particularly for natural language processing and semantic search benchmarks.

*: 2009: Microsoft launches Bing, introducing features such as related searches, semantic suggestions, and later incorporating deep learning techniques into its ranking algorithms.

* 2010s

*: 2013: Google’s Hummingbird algorithm goes live, marking a shift from keyword matching toward understanding query intent and semantic context in search queries.

*: 2018: Google AI researchers release BERT (Bidirectional Encoder Representations from Transformers), enabling deep bidirectional understanding of language and improving document ranking and query understanding in IR.

*: 2019: Microsoft introduces MS MARCO (Microsoft MAchine Reading COmprehension), a large-scale dataset designed for training and evaluating machine reading and passage ranking models.

* 2020s

*: 2020: The ColBERT (Contextualized Late Interaction over BERT) model, designed for efficient passage retrieval using contextualized embeddings, was introduced at SIGIR 2020.

*: 2021: SPLADE is introduced at SIGIR 2021. It is a sparse neural retrieval model that balances lexical and semantic features using masked language modeling and sparsity regularization.

*: 2022: The BEIR benchmark is released to evaluate zero-shot IR across 18 datasets covering diverse tasks. It standardizes comparisons between dense, sparse, and hybrid IR models.

Major conferences

* SIGIR: Special Interest Group on Information Retrieval

* ECIR: European Conference on Information Retrieval

* CIKM: Conference on Information and Knowledge Management

* WWW: International World Wide Web Conference

Awards in the field

* Tony Kent Strix award

* Gerard Salton Award

* Karen Spärck Jones Award

Further reading

* Ricardo Baeza-Yates and Berthier Ribeiro-Neto. ''Modern Information Retrieval: The Concepts and Technology behind Search'' (second edition). Addison-Wesley, UK, 2011.

* Stefan Büttcher, Charles L. A. Clarke, and Gordon V. Cormack. ''Information Retrieval: Implementing and Evaluating Search Engines''. MIT Press, Cambridge, Massachusetts, 2010.

* Christopher D. Manning, Prabhakar Raghavan, and Hinrich Schütze. ''Introduction to Information Retrieval''. Cambridge University Press, 2008.

* ShinJoung Yeo. ''Behind the Search Box: Google and the Global Internet Industry''. University of Illinois Press, 2023.

External links

ACM SIGIR: Information Retrieval Special Interest Group

(online book) by C. J. van Rijsbergen

Information Retrieval Wiki

Information Retrieval Facility

TREC report on information retrieval evaluation techniques
