// Matrix multiplication

for (i = 0; i < row_length_A; i++)

// Array addition

for (i = 0; i < n; i++)

We can exploit data parallelism in the preceding code to execute it faster because the arithmetic is loop independent. Parallelization of the matrix multiplication code is achieved by using OpenMP.

// Matrix multiplication in parallel

#pragma omp parallel for schedule(dynamic,1) collapse(2)

for (i = 0; i < row_length_A; i++)

It can be observed from the example that more and more processors will be required as the matrix sizes keep increasing. Keeping the execution time low is the priority, but as the matrix size increases we face other constraints, such as the complexity of such a system and its associated costs. Therefore, even with a constrained number of processors in the system, we can still apply the same principle and divide the data into bigger chunks to calculate the product of two matrices.
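One way to picture "bigger chunks" is to give each of a fixed number of workers a contiguous block of rows of the result. The following is a minimal sketch under stated assumptions, not the article's own listing: the names `matmul_row_blocks` and `num_workers` are hypothetical, the matrices are assumed to be square n × n and stored row-major in flat arrays, and the pragma is ignored (the chunks then run one after another) if the compiler is not invoked with OpenMP support.

```c
/* Multiply two n x n matrices (row-major flat arrays, an assumed layout)
 * using at most num_workers chunks of rows.
 * Worker w handles rows [w*n/num_workers, (w+1)*n/num_workers). */
void matmul_row_blocks(int n, int num_workers,
                       const double *A, const double *B, double *C)
{
    if (num_workers < 1)
        num_workers = 1;  /* fall back to a single chunk */

    #pragma omp parallel for num_threads(num_workers)
    for (int w = 0; w < num_workers; w++) {
        int row_begin = (int)((long long)w * n / num_workers);
        int row_end = (int)((long long)(w + 1) * n / num_workers);
        /* Each worker computes a whole block of rows of C. */
        for (int i = row_begin; i < row_end; i++) {
            for (int k = 0; k < n; k++) {
                double sum = 0.0;
                for (int j = 0; j < n; j++)
                    sum += A[i * n + j] * B[j * n + k];
                C[i * n + k] = sum;
            }
        }
    }
}
```

With fewer workers each chunk simply covers more rows, so the same code serves a two-processor machine and a larger one. If there are more workers than rows, some chunks are empty and do no work.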

For addition of arrays in a data parallel implementation, let's assume a more modest system with two central processing units (CPUs), A and B.

Data parallelism is parallelization across multiple processors in parallel computing environments. It focuses on distributing the data across different nodes, which operate on the data in parallel. It can be applied to regular data structures such as arrays and matrices by working on each element in parallel. It contrasts with task parallelism, another form of parallelism.

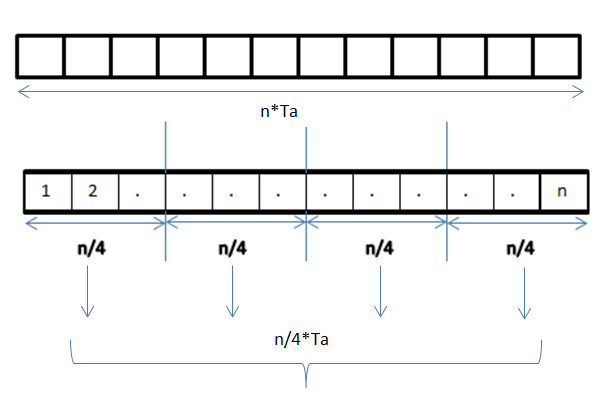

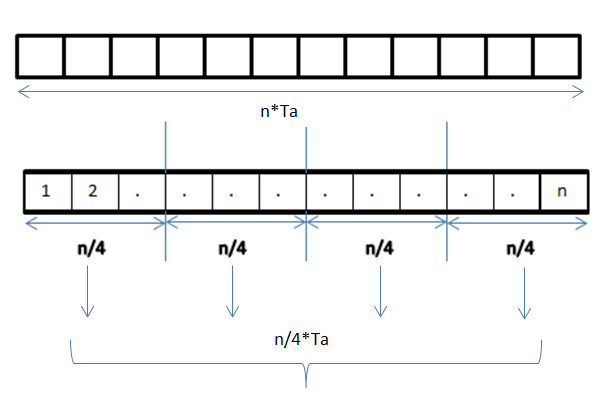

A data parallel job on an array of n elements can be divided equally among all the processors. Let us assume we want to sum all the elements of the given array and that a single addition operation takes Ta time units. In the case of sequential execution, the time taken by the process will be n×Ta time units, as it sums up all the elements of the array. On the other hand, if we execute this job as a data parallel job on 4 processors, the time taken would reduce to (n/4)×Ta + merging overhead time units. Parallel execution therefore results in a speedup of close to 4 over sequential execution, reaching 4 only when the merging overhead is negligible. The locality of data references plays an important part in evaluating the performance of a data parallel programming model. Locality of data depends on the memory accesses performed by the program as well as the size of the cache.
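The timing argument can be made concrete with a small sketch. It assumes a hypothetical function `chunked_sum` that splits the array into `p` contiguous chunks, sums each chunk independently (the part that costs roughly (n/p)×Ta), and then merges the partial results (the merging overhead). The limit of 64 chunks is an arbitrary choice for this sketch, and the pragma is ignored if OpenMP is not enabled.

```c
#include <stddef.h>

#define MAX_CHUNKS 64  /* arbitrary upper bound chosen for this sketch */

/* Sum n elements using p chunks (1 <= p <= MAX_CHUNKS).
 * Returns 0 if p is outside that range. */
long chunked_sum(const int *data, size_t n, int p)
{
    long partial[MAX_CHUNKS] = {0};
    if (p < 1 || p > MAX_CHUNKS)
        return 0;

    /* Data-parallel phase: every chunk is independent of the others. */
    #pragma omp parallel for
    for (int c = 0; c < p; c++) {
        size_t begin = n * (size_t)c / (size_t)p;
        size_t end = n * (size_t)(c + 1) / (size_t)p;
        long s = 0;
        for (size_t i = begin; i < end; i++)
            s += data[i];
        partial[c] = s;  /* each chunk writes its own slot */
    }

    /* Merge phase: combine the p partial sums sequentially. */
    long total = 0;
    for (int c = 0; c < p; c++)
        total += partial[c];
    return total;
}
```

With `p = 4` the ideal speedup is n×Ta divided by ((n/4)×Ta + merging overhead), which approaches 4 only as the overhead becomes small relative to n.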

History

Exploitation of the concept of data parallelism started in the 1960s with the development of the Solomon machine. The Solomon machine, also called a vector processor, was developed to expedite the performance of mathematical operations by working on a large data array (operating on multiple data in consecutive time steps). Concurrency of data operations was also exploited by operating on multiple data at the same time using a single instruction. These processors were called 'array processors'. In the 1980s, the term "data parallelism" was introduced to describe this programming style, which was widely used to program Connection Machines in data parallel languages like C*. Today, data parallelism is best exemplified in graphics processing units (GPUs), which use both techniques: operating on multiple data in space and in time using a single instruction.

Most data parallel hardware supports only a fixed number of parallel levels, often only one. This means that within a parallel operation it is not possible to launch more parallel operations recursively, so programmers cannot make use of nested hardware parallelism. The programming language NESL was an early effort at implementing a nested data-parallel programming model on flat parallel machines, and in particular introduced the flattening transformation that transforms nested data parallelism to flat data parallelism. This work was continued by other languages such as Data Parallel Haskell and Futhark, although arbitrary nested data parallelism is not widely available in current data-parallel programming languages.

Description

In a multiprocessor system executing a single set of instructions (SIMD), data parallelism is achieved when each processor performs the same task on different distributed data. In some situations, a single execution thread controls operations on all the data. In others, different threads control the operation, but they execute the same code.

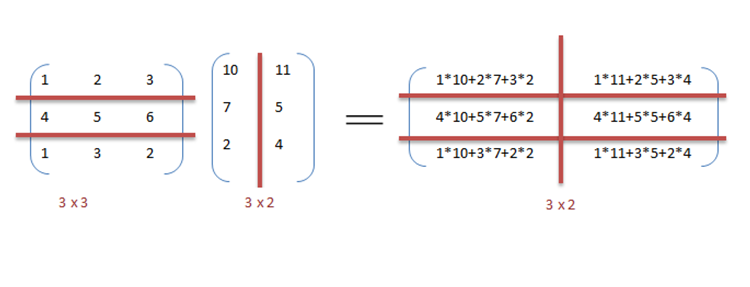

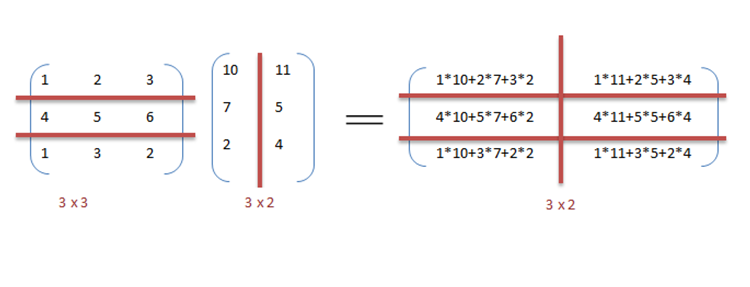

For instance, consider matrix multiplication and addition in a sequential manner as discussed in the example.

Example

The sequential pseudo-code for multiplication of two matrices and addition of two arrays stores the result in the matrix C. The pseudo-code for multiplication calculates the dot product of each row of matrix A with each column of matrix B and stores the result in the output matrix C.

If these programs were executed sequentially, the time taken to calculate the result would be of the order of O(n^3) for multiplication and O(n) for addition (assuming row lengths and column lengths of both matrices are n).
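As a minimal sketch of the sequential versions described above, the following C code multiplies two n × n matrices and adds two arrays of length n. The function names `matmul_seq` and `array_add_seq`, the row-major flat-array layout, and the use of `double` are assumptions made for illustration.

```c
#include <stddef.h>

/* Sequential matrix multiplication: C = A * B for n x n matrices stored
 * row-major in flat arrays (an assumed layout). Three nested loops of
 * n iterations each give O(n^3) time. */
void matmul_seq(size_t n, const double *A, const double *B, double *C)
{
    for (size_t i = 0; i < n; i++) {
        for (size_t k = 0; k < n; k++) {
            double sum = 0.0;
            /* Dot product of row i of A with column k of B. */
            for (size_t j = 0; j < n; j++)
                sum += A[i * n + j] * B[j * n + k];
            C[i * n + k] = sum;
        }
    }
}

/* Sequential array addition: one loop over n elements, O(n) time. */
void array_add_seq(size_t n, const double *a, const double *b, double *c)
{
    for (size_t i = 0; i < n; i++)
        c[i] = a[i] + b[i];
}
```

In both functions no iteration of the outer loop reads a value written by another iteration, which is the property that makes them candidates for data parallel execution.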

An OpenMP directive, "omp parallel for", instructs the compiler to execute the code in the for loop in parallel. For multiplication, we can divide matrices A and B into blocks along rows and columns respectively. This allows us to calculate every element of matrix C independently, thereby making the task parallel. For example, multiplying an m × n matrix A by an n × k matrix B can be finished in O(n) instead of O(m*n*k) when executed in parallel using m*k processors.
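The observation that every element of C can be computed independently can be sketched in C with OpenMP as follows. This is an illustrative sketch rather than the article's own listing: the function name `matmul_parallel` and the flat row-major layout are assumptions, and the pragma is simply ignored (the loops then run sequentially) if the compiler is not invoked with OpenMP support.

```c
/* C (m x k) = A (m x n) * B (n x k), row-major flat arrays (assumed layout).
 * collapse(2) merges the two outer loops into one iteration space of
 * m*k independent iterations, one per element of C. */
void matmul_parallel(int m, int n, int k,
                     const double *A, const double *B, double *C)
{
    #pragma omp parallel for collapse(2)
    for (int i = 0; i < m; i++) {
        for (int c = 0; c < k; c++) {
            double sum = 0.0;  /* local to each iteration */
            for (int j = 0; j < n; j++)
                sum += A[i * n + j] * B[j * k + c];
            C[i * k + c] = sum;  /* each iteration writes a distinct element */
        }
    }
}
```

Given m*k processors, each iteration could in principle run on its own processor and perform only the inner loop of n steps. With fewer processors the OpenMP runtime distributes the m*k iterations among the available threads.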

In a system with two central processing units (CPUs), A and B, CPU A could add all elements from the top half of the arrays, while CPU B could add all elements from the bottom half of the arrays. Since the two processors work in parallel, the job of performing array addition would take one half the time of performing the same operation in serial using one CPU alone.
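The two-CPU split can be sketched in C using OpenMP sections, one per half of the arrays. The names `add_range` and `array_add_two_way` are hypothetical, and the midpoint `n / 2` is just one possible way to divide the work. Without OpenMP support the two halves run one after the other and give the same result.

```c
#include <stddef.h>

/* Add elements [begin, end) of a and b, storing the result in c. */
static void add_range(const double *a, const double *b, double *c,
                      size_t begin, size_t end)
{
    for (size_t i = begin; i < end; i++)
        c[i] = a[i] + b[i];
}

/* Split the addition into two halves that can run on two CPUs. */
void array_add_two_way(const double *a, const double *b, double *c, size_t n)
{
    size_t mid = n / 2;

    #pragma omp parallel sections num_threads(2)
    {
        #pragma omp section
        add_range(a, b, c, 0, mid);   /* "CPU A": top half */

        #pragma omp section
        add_range(a, b, c, mid, n);   /* "CPU B": bottom half */
    }
}
```

The two halves touch disjoint index ranges, so no synchronization is needed beyond the implicit barrier at the end of the sections region.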

The program expressed in pseudocode below, which applies some arbitrary operation, foo, on every element in the array d, illustrates data parallelism. Some input data (e.g. when d.length evaluates to 1 and round rounds towards zero; this is just an example, and there are no requirements on what type of rounding is used) will lead to lower_limit being greater than upper_limit. It is assumed that the loop will exit immediately (i.e. zero iterations will occur) when this happens.

    if CPU = "a" then
        lower_limit := 1
        upper_limit := round(d.length / 2)
    else if CPU = "b" then
        lower_limit := round(d.length / 2) + 1
        upper_limit := d.length

    for i from lower_limit to upper_limit by 1 do
        foo(d[i])

In an SPMD system executed on a 2-processor system, both CPUs will execute the code.
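A C rendering of the pseudocode may make the SPMD idea easier to follow. It is only a sketch under several assumptions: CPUs "a" and "b" become a hypothetical `cpu_id` of 0 or 1, indexing is 0-based with an exclusive upper bound, integer division (which truncates) stands in for round, and `foo` is given an arbitrary body (doubling the element) so that the example has an observable effect.

```c
#include <stddef.h>

/* Hypothetical element-wise operation standing in for foo. */
static void foo(int *x)
{
    *x *= 2;
}

/* The same code is executed by every CPU; cpu_id (0 or 1) only selects
 * which half of the array this CPU works on. */
void spmd_apply(int *d, size_t length, int cpu_id)
{
    size_t half = length / 2;
    size_t lower = (cpu_id == 0) ? 0 : half;
    size_t upper = (cpu_id == 0) ? half : length;  /* exclusive bound */

    for (size_t i = lower; i < upper; i++)
        foo(&d[i]);
}
```

Calling `spmd_apply(d, n, 0)` on one CPU and `spmd_apply(d, n, 1)` on the other processes every element exactly once. When `length` is 1, the first call performs zero iterations, which corresponds to the empty-range case noted for the pseudocode.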

Data parallelism emphasizes the distributed (parallel) nature of the data, as opposed to the processing (task parallelism). Most real programs fall somewhere on a continuum between task parallelism and data parallelism.

Steps to parallelization

The process of parallelizing a sequential program can be broken down into four discrete steps.

Data parallelism vs. task parallelism

Data parallelism vs. model parallelism

Mixed data and task parallelism

Data and task parallelism can be implemented simultaneously by combining them in the same application. This is called mixed data and task parallelism. Mixed parallelism requires sophisticated scheduling algorithms and software support. It is the best kind of parallelism when communication is slow and the number of processors is large.

Mixed data and task parallelism has many applications. It is particularly used in the following:

1. Global climate modeling. Large data parallel computations are performed by creating grids of data representing Earth's atmosphere and oceans, and task parallelism is employed for simulating the function and model of the physical processes.
2. Timing-based circuit simulation. The data is divided among different sub-circuits and parallelism is achieved with orchestration from the tasks.

Data parallel programming environments

A variety of data parallel programming environments are available today, the most widely used of which are:

1. Message Passing Interface: a cross-platform message passing programming interface for parallel computers. It defines the semantics of library functions to allow users to write portable message passing programs in C, C++ and Fortran.
2. OpenMP: an application programming interface (API) which supports shared memory programming models on multiple platforms of multiprocessor systems. Since version 4.5, OpenMP is also able to target devices other than typical CPUs. It can program FPGAs, DSPs, GPUs and more. Unlike OpenACC, it is not confined to GPUs.
3. CUDA and OpenACC: parallel computing API platforms designed to allow a software engineer to utilize GPUs' computational units for general purpose processing.
4. Threading Building Blocks and RaftLib: both are open source programming environments that enable mixed data/task parallelism in C/C++ environments across heterogeneous resources.

Applications

Data parallelism finds its applications in a variety of fields ranging from physics, chemistry, biology and material sciences to signal processing. The sciences employ data parallelism for simulating models such as molecular dynamics, sequence analysis of genome data and other physical phenomena. Driving forces in signal processing for data parallelism are video encoding, image and graphics processing, and wireless communications, to name a few.

Data-intensive computing

See also

* Active message
* Instruction level parallelism
* Parallel programming model
* Prefix sum
* Scalable parallelism
* Segmented scan
* Thread level parallelism

Notes

References

* Hillis, W. Daniel and Steele, Guy L., Data Parallel Algorithms, Communications of the ACM, December 1986.
* Blelloch, Guy E., Vector Models for Data-Parallel Computing, MIT Press, 1990.
