communication-avoiding algorithm on:

[Wikipedia]

[Google]

[Amazon]

Communication-avoiding algorithms minimize movement of data within a

Energy consumption increases by orders of magnitude as we go higher in the memory hierarchy. United States president Barack Obama cited communication-avoiding algorithms in the FY 2012 Department of Energy budget request to Congress:

Energy consumption increases by orders of magnitude as we go higher in the memory hierarchy. United States president Barack Obama cited communication-avoiding algorithms in the FY 2012 Department of Energy budget request to Congress:

for i = 1 to n

for j = 1 to n

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

Arithmetic cost (time-complexity): ''n''2(2''n'' − 1) for sufficiently large ''n'' or O(''n''3).

Rewriting this algorithm with communication cost labelled at each step

for i = 1 to n

- n² reads

for j = 1 to n

- n² reads

- n³ reads

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

- n² writes

Fast memory may be defined as the local processor memory (

for i = 1 to n

for j = 1 to n

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

Arithmetic cost (time-complexity): ''n''2(2''n'' − 1) for sufficiently large ''n'' or O(''n''3).

Rewriting this algorithm with communication cost labelled at each step

for i = 1 to n

- n² reads

for j = 1 to n

- n² reads

- n³ reads

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

- n² writes

Fast memory may be defined as the local processor memory (

for i = 1 to n/b

for j = 1 to n/b

- b² × (n/b)² = n² reads

for k = 1 to n/b

- b² × (n/b)³ = n³/b reads

- b² × (n/b)³ = n³/b reads

C(i,j) = C(i,j) + A(i,k) * B(k,j) -

- b² × (n/b)² = n² writes

Communication cost: 2''n''3/''b'' + 2''n''2 reads/writes << 2''n''3 arithmetic cost

Making ''b'' as large possible:

: 3''b''2 ≤ ''M''

we achieve the following communication lower bound:

: 31/2''n''3/''M''1/2 + 2''n''2 or Ω (no. of FLOPs / ''M''1/2)

for i = 1 to n/b

for j = 1 to n/b

- b² × (n/b)² = n² reads

for k = 1 to n/b

- b² × (n/b)³ = n³/b reads

- b² × (n/b)³ = n³/b reads

C(i,j) = C(i,j) + A(i,k) * B(k,j) -

- b² × (n/b)² = n² writes

Communication cost: 2''n''3/''b'' + 2''n''2 reads/writes << 2''n''3 arithmetic cost

Making ''b'' as large possible:

: 3''b''2 ≤ ''M''

we achieve the following communication lower bound:

: 31/2''n''3/''M''1/2 + 2''n''2 or Ω (no. of FLOPs / ''M''1/2)

Exascale computing study: Technology challenges in exascale computing systems

"

Locality of reference in LU Decomposition with partial pivoting

” SIAM J. Matrix Anal. Appl., vol. 18, no. 4, 1997. F. Gustavson, “Recursion Leads to Automatic Variable Blocking for Dense Linear-Algebra Algorithms,” IBM Journal of Research and Development, vol. 41, no. 6, pp. 737–755, 1997. E. Elmroth, F. Gustavson, I. Jonsson, and B. Kagstrom, �

Recursive blocked algorithms and hybrid data structures for dense matrix library software

” SIAM Review, vol. 46, no. 1, pp. 3–45, 2004. Grigori, Laura.

Introduction to communication avoiding linear algebra algorithms in high performance computing

Parallel computing Algorithms Optimization algorithms and methods

memory hierarchy

In computer architecture, the memory hierarchy separates computer storage into a hierarchy based on response time. Since response time, complexity, and capacity are related, the levels may also be distinguished by their performance and controlli ...

for improving its running-time and energy consumption. These minimize the total of two costs (in terms of time and energy): arithmetic and communication. Communication, in this context refers to moving data, either between levels of memory or between multiple processors over a network. It is much more expensive than arithmetic.

Motivation

Consider the following running-time model: * Measure of computation = Time perFLOP

In computing, floating point operations per second (FLOPS, flops or flop/s) is a measure of computer performance, useful in fields of scientific computations that require floating-point calculations. For such cases, it is a more accurate meas ...

= γ

* Measure of communication = No. of words of data moved = β

⇒ Total running time = γ·(no. of FLOP

In computing, floating point operations per second (FLOPS, flops or flop/s) is a measure of computer performance, useful in fields of scientific computations that require floating-point calculations. For such cases, it is a more accurate meas ...

s) + β·(no. of words)

From the fact that ''β'' >> ''γ'' as measured in time and energy, communication cost dominates computation cost. Technological trends indicate that the relative cost of communication is increasing on a variety of platforms, from cloud computing

Cloud computing is the on-demand availability of computer system resources, especially data storage ( cloud storage) and computing power, without direct active management by the user. Large clouds often have functions distributed over m ...

to supercomputers

A supercomputer is a computer with a high level of performance as compared to a general-purpose computer. The performance of a supercomputer is commonly measured in floating-point operations per second (FLOPS) instead of million instructions ...

to mobile devices. The report also predicts that gap between DRAM

Dynamic random-access memory (dynamic RAM or DRAM) is a type of random-access semiconductor memory that stores each bit of data in a memory cell, usually consisting of a tiny capacitor and a transistor, both typically based on metal-oxi ...

access time and FLOPs will increase 100× over coming decade to balance power usage between processors and DRAM.

Energy consumption increases by orders of magnitude as we go higher in the memory hierarchy. United States president Barack Obama cited communication-avoiding algorithms in the FY 2012 Department of Energy budget request to Congress:

Energy consumption increases by orders of magnitude as we go higher in the memory hierarchy. United States president Barack Obama cited communication-avoiding algorithms in the FY 2012 Department of Energy budget request to Congress:

Objectives

Communication-avoiding algorithms are designed with the following objectives: * Reorganize algorithms to reduce communication across all memory hierarchies. * Attain the lower-bound on communication when possible. The following simple example demonstrates how these are achieved.Matrix multiplication example

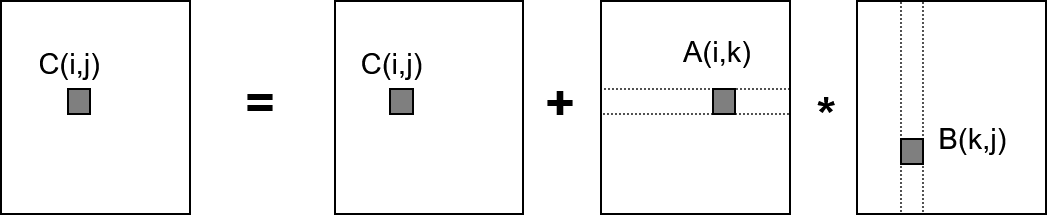

Let A, B and C be square matrices of order ''n'' × ''n''. The following naive algorithm implements C = C + A * B: for i = 1 to n

for j = 1 to n

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

Arithmetic cost (time-complexity): ''n''2(2''n'' − 1) for sufficiently large ''n'' or O(''n''3).

Rewriting this algorithm with communication cost labelled at each step

for i = 1 to n

- n² reads

for j = 1 to n

- n² reads

- n³ reads

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

- n² writes

Fast memory may be defined as the local processor memory (

for i = 1 to n

for j = 1 to n

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

Arithmetic cost (time-complexity): ''n''2(2''n'' − 1) for sufficiently large ''n'' or O(''n''3).

Rewriting this algorithm with communication cost labelled at each step

for i = 1 to n

- n² reads

for j = 1 to n

- n² reads

- n³ reads

for k = 1 to n

C(i,j) = C(i,j) + A(i,k) * B(k,j)

- n² writes

Fast memory may be defined as the local processor memory (CPU cache

A CPU cache is a hardware cache used by the central processing unit (CPU) of a computer to reduce the average cost (time or energy) to access data from the main memory. A cache is a smaller, faster memory, located closer to a processor core, wh ...

) of size M and slow memory may be defined as the DRAM.

Communication cost (reads/writes): ''n''3 + 3''n''2 or O(''n''3)

Since total running time = ''γ''·O(''n''3) + ''β''·O(''n''3) and ''β'' >> ''γ'' the communication cost is dominant. The blocked (tiled) matrix multiplication algorithm reduces this dominant term:

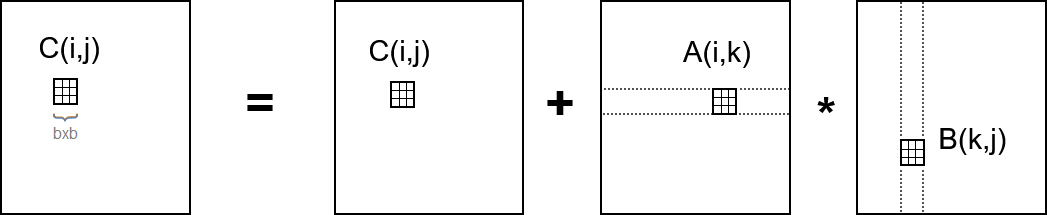

Blocked (tiled) matrix multiplication

Consider A, B and C to be ''n''/''b''-by-''n''/''b'' matrices of ''b''-by-''b'' sub-blocks where b is called the block size; assume three ''b''-by-''b'' blocks fit in fast memory. for i = 1 to n/b

for j = 1 to n/b

- b² × (n/b)² = n² reads

for k = 1 to n/b

- b² × (n/b)³ = n³/b reads

- b² × (n/b)³ = n³/b reads

C(i,j) = C(i,j) + A(i,k) * B(k,j) -

- b² × (n/b)² = n² writes

Communication cost: 2''n''3/''b'' + 2''n''2 reads/writes << 2''n''3 arithmetic cost

Making ''b'' as large possible:

: 3''b''2 ≤ ''M''

we achieve the following communication lower bound:

: 31/2''n''3/''M''1/2 + 2''n''2 or Ω (no. of FLOPs / ''M''1/2)

for i = 1 to n/b

for j = 1 to n/b

- b² × (n/b)² = n² reads

for k = 1 to n/b

- b² × (n/b)³ = n³/b reads

- b² × (n/b)³ = n³/b reads

C(i,j) = C(i,j) + A(i,k) * B(k,j) -

- b² × (n/b)² = n² writes

Communication cost: 2''n''3/''b'' + 2''n''2 reads/writes << 2''n''3 arithmetic cost

Making ''b'' as large possible:

: 3''b''2 ≤ ''M''

we achieve the following communication lower bound:

: 31/2''n''3/''M''1/2 + 2''n''2 or Ω (no. of FLOPs / ''M''1/2)

Previous approaches for reducing communication

Most of the approaches investigated in the past to address this problem rely on scheduling or tuning techniques that aim at overlapping communication with computation. However, this approach can lead to an improvement of at most a factor of two. Ghosting is a different technique for reducing communication, in which a processor stores and computes redundantly data from neighboring processors for future computations.Cache-oblivious algorithm

In computing, a cache-oblivious algorithm (or cache-transcendent algorithm) is an algorithm designed to take advantage of a processor cache without having the size of the cache (or the length of the cache lines, etc.) as an explicit parameter. An ...

s represent a different approach introduced in 1999 for fast Fourier transform

A fast Fourier transform (FFT) is an algorithm that computes the discrete Fourier transform (DFT) of a sequence, or its inverse (IDFT). Fourier analysis converts a signal from its original domain (often time or space) to a representation in t ...

s, and then extended to graph algorithms, dynamic programming, etc. They were also applied to several operations in linear algebra as dense LU and QR factorizations. The design of architecture specific algorithms is another approach that can be used for reducing the communication in parallel algorithms, and there are many examples in the literature of algorithms that are adapted to a given communication topology.

See also

*Data locality

In computer science, locality of reference, also known as the principle of locality, is the tendency of a processor to access the same set of memory locations repetitively over a short period of time. There are two basic types of reference localit ...

References

{{reflist, refs= Demmel, Jim. "Communication avoiding algorithms". 2012 SC Companion: High Performance Computing, Networking Storage and Analysis. IEEE, 2012. Demmel, James, and Kathy Yelick. "Communication Avoiding (CA) and Other Innovative Algorithms". The Berkeley Par Lab: Progress in the Parallel Computing Landscape: 243–250. Bergman, Keren, et al.Exascale computing study: Technology challenges in exascale computing systems

"

Defense Advanced Research Projects Agency

The Defense Advanced Research Projects Agency (DARPA) is a research and development agency of the United States Department of Defense responsible for the development of emerging technologies for use by the military.

Originally known as the Adv ...

Information Processing Techniques Office

The Information Processing Techniques Office (IPTO), originally "Command and Control Research",Lyon, Matthew; Hafner, Katie (1999-08-19). ''Where Wizards Stay Up Late: The Origins Of The Internet'' (p. 39). Simon & Schuster. Kindle Edition. was par ...

(DARPA IPTO), Tech. Rep 15 (2008).

Shalf, John, Sudip Dosanjh, and John Morrison. "Exascale computing technology challenges". High Performance Computing for Computational Science–VECPAR 2010. Springer Berlin Heidelberg, 2011. 1–25.

M. Frigo, C. E. Leiserson, H. Prokop, and S. Ramachandran, “Cacheoblivious algorithms”, In FOCS ’99: Proceedings of the 40th Annual Symposium on Foundations of Computer Science, 1999. IEEE Computer Society.

S. Toledo, �Locality of reference in LU Decomposition with partial pivoting

” SIAM J. Matrix Anal. Appl., vol. 18, no. 4, 1997. F. Gustavson, “Recursion Leads to Automatic Variable Blocking for Dense Linear-Algebra Algorithms,” IBM Journal of Research and Development, vol. 41, no. 6, pp. 737–755, 1997. E. Elmroth, F. Gustavson, I. Jonsson, and B. Kagstrom, �

Recursive blocked algorithms and hybrid data structures for dense matrix library software

” SIAM Review, vol. 46, no. 1, pp. 3–45, 2004. Grigori, Laura.

Introduction to communication avoiding linear algebra algorithms in high performance computing

Parallel computing Algorithms Optimization algorithms and methods