|

Maximum Entropy Spectral Estimation

Maximum entropy spectral estimation is a method of spectral density estimation. The goal is to improve the spectral quality based on the principle of maximum entropy. The method is based on choosing the spectrum which corresponds to the most random or the most unpredictable time series whose autocorrelation function agrees with the known values. This assumption, which corresponds to the concept of maximum entropy as used in both statistical mechanics and information theory, is maximally non-committal with regard to the unknown values of the autocorrelation function of the time series. It is simply the application of maximum entropy modeling to any type of spectrum and is used in all fields where data is presented in spectral form. The usefulness of the technique varies based on the source of the spectral data since it is dependent on the amount of assumed knowledge about the spectrum that can be applied to the model. In maximum entropy modeling, probability distributions are cre ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

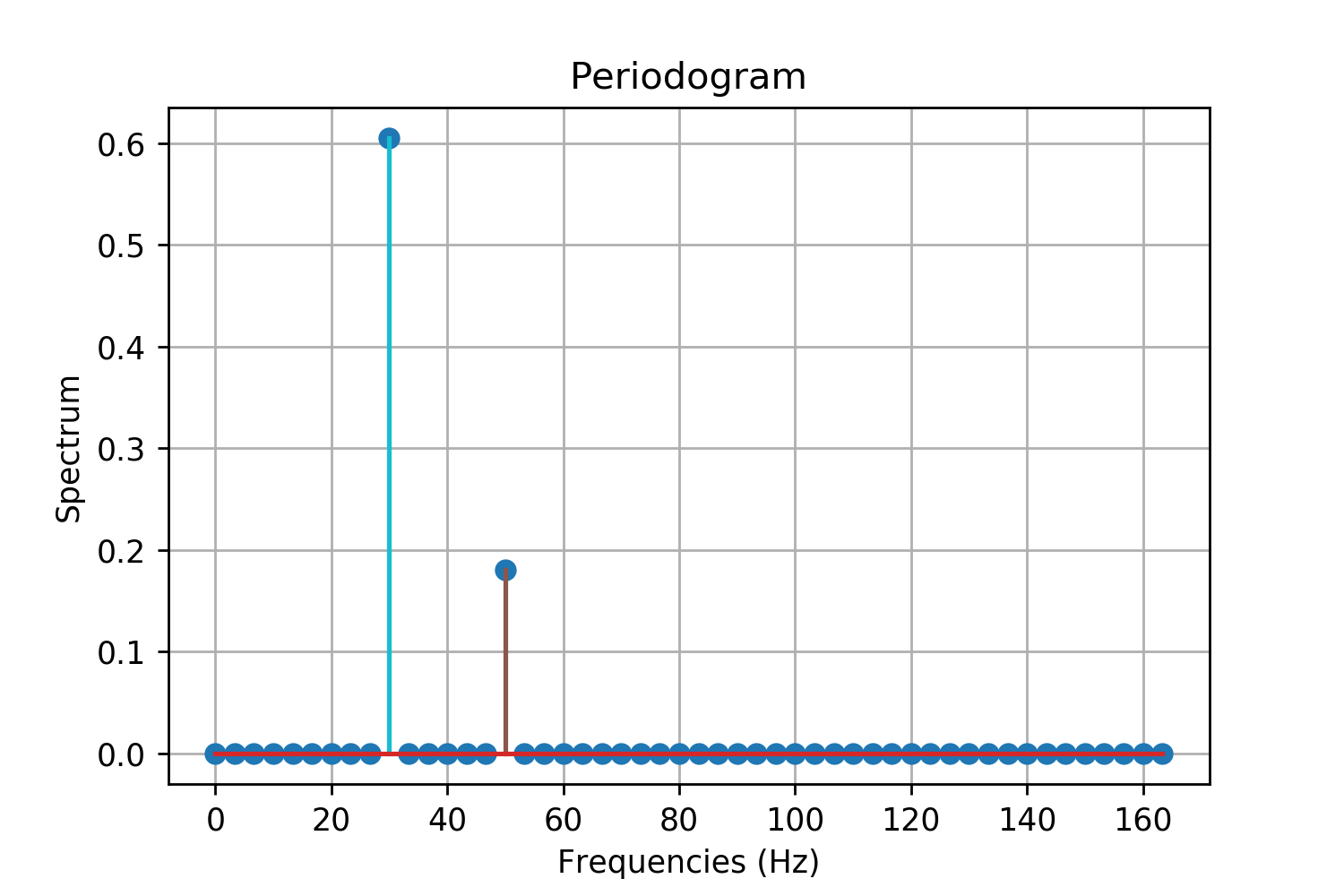

Spectral Density Estimation

In statistical signal processing, the goal of spectral density estimation (SDE) or simply spectral estimation is to estimate the spectral density (also known as the power spectral density) of a signal from a sequence of time samples of the signal. Intuitively speaking, the spectral density characterizes the frequency content of the signal. One purpose of estimating the spectral density is to detect any periodicities in the data, by observing peaks at the frequencies corresponding to these periodicities. Some SDE techniques assume that a signal is composed of a limited (usually small) number of generating frequencies plus noise and seek to find the location and intensity of the generated frequencies. Others make no assumption on the number of components and seek to estimate the whole generating spectrum. Overview Spectrum analysis, also referred to as frequency domain analysis or spectral density estimation, is the technical process of decomposing a complex signal in ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

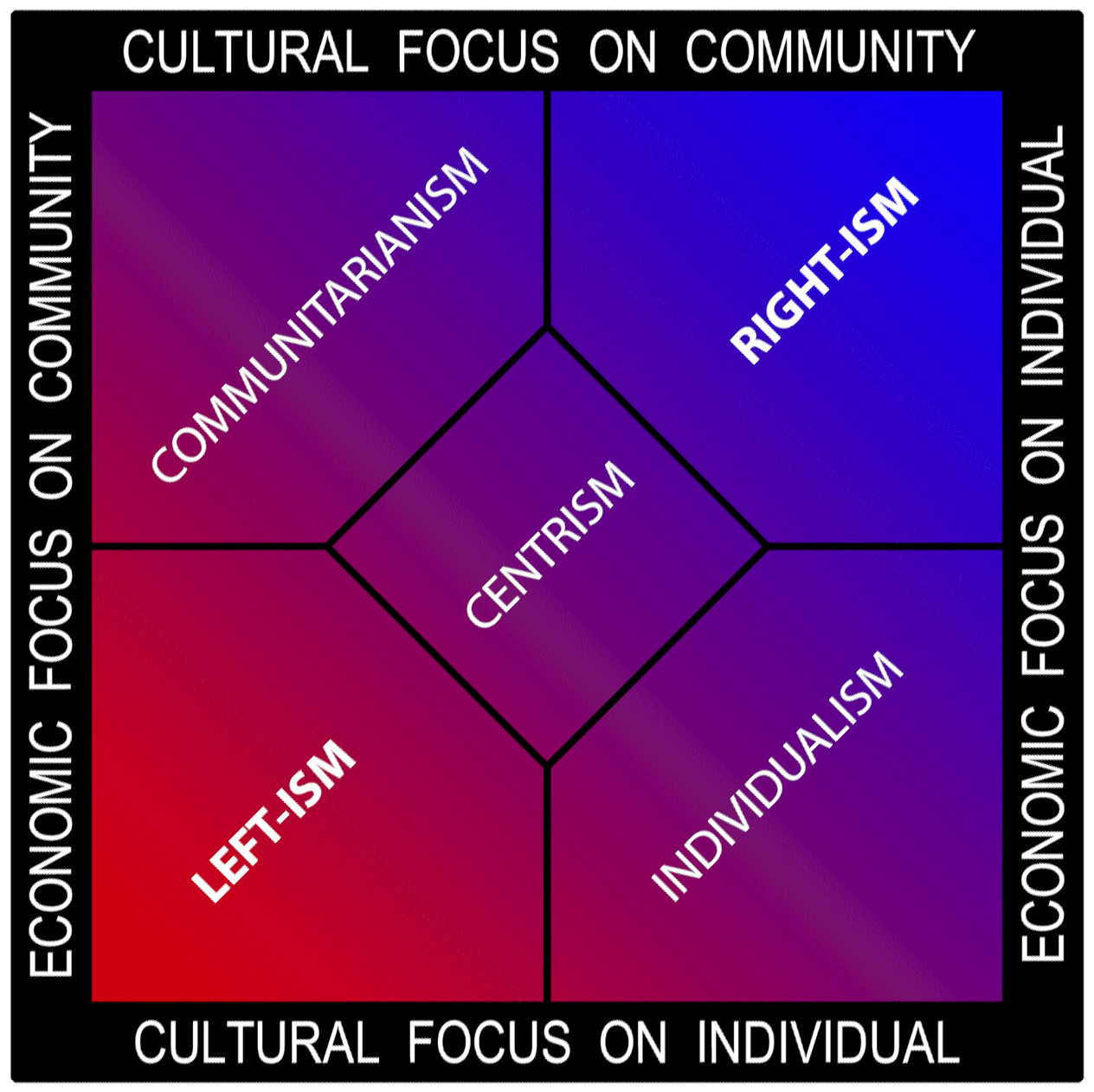

Spectrum

A spectrum (plural ''spectra'' or ''spectrums'') is a condition that is not limited to a specific set of values but can vary, without gaps, across a continuum. The word was first used scientifically in optics to describe the rainbow of colors in visible light after passing through a prism. As scientific understanding of light advanced, it came to apply to the entire electromagnetic spectrum. It thereby became a mapping of a range of magnitudes (wavelengths) to a range of qualities, which are the perceived "colors of the rainbow" and other properties which correspond to wavelengths that lie outside of the visible light spectrum. Spectrum has since been applied by analogy to topics outside optics. Thus, one might talk about the " spectrum of political opinion", or the "spectrum of activity" of a drug, or the " autism spectrum". In these uses, values within a spectrum may not be associated with precisely quantifiable numbers or definitions. Such uses imply a broad range of cond ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Principle Of Maximum Entropy

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge about a system is the one with largest entropy, in the context of precisely stated prior data (such as a proposition that expresses testable information). Another way of stating this: Take precisely stated prior data or testable information about a probability distribution function. Consider the set of all trial probability distributions that would encode the prior data. According to this principle, the distribution with maximal information entropy is the best choice. History The principle was first expounded by E. T. Jaynes in two papers in 1957 where he emphasized a natural correspondence between statistical mechanics and information theory. In particular, Jaynes offered a new and very general rationale why the Gibbsian method of statistical mechanics works. He argued that the entropy of statistical mechanics and the information entropy of infor ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Autocorrelation

Autocorrelation, sometimes known as serial correlation in the discrete time case, is the correlation of a signal with a delayed copy of itself as a function of delay. Informally, it is the similarity between observations of a random variable as a function of the time lag between them. The analysis of autocorrelation is a mathematical tool for finding repeating patterns, such as the presence of a periodic signal obscured by noise, or identifying the missing fundamental frequency in a signal implied by its harmonic frequencies. It is often used in signal processing for analyzing functions or series of values, such as time domain signals. Different fields of study define autocorrelation differently, and not all of these definitions are equivalent. In some fields, the term is used interchangeably with autocovariance. Unit root processes, trend-stationary processes, autoregressive processes, and moving average processes are specific forms of processes with autocorrelation ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistical Mechanics

In physics, statistical mechanics is a mathematical framework that applies statistical methods and probability theory to large assemblies of microscopic entities. It does not assume or postulate any natural laws, but explains the macroscopic behavior of nature from the behavior of such ensembles. Statistical mechanics arose out of the development of classical thermodynamics, a field for which it was successful in explaining macroscopic physical properties—such as temperature, pressure, and heat capacity—in terms of microscopic parameters that fluctuate about average values and are characterized by probability distributions. This established the fields of statistical thermodynamics and statistical physics. The founding of the field of statistical mechanics is generally credited to three physicists: * Ludwig Boltzmann, who developed the fundamental interpretation of entropy in terms of a collection of microstates *James Clerk Maxwell, who developed models of probability di ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Information Theory

Information theory is the scientific study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a die (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy. Important sub-fields of information theory include sourc ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistical Inference

Statistical inference is the process of using data analysis to infer properties of an underlying distribution of probability.Upton, G., Cook, I. (2008) ''Oxford Dictionary of Statistics'', OUP. . Inferential statistical analysis infers properties of a population, for example by testing hypotheses and deriving estimates. It is assumed that the observed data set is sampled from a larger population. Inferential statistics can be contrasted with descriptive statistics. Descriptive statistics is solely concerned with properties of the observed data, and it does not rest on the assumption that the data come from a larger population. In machine learning, the term ''inference'' is sometimes used instead to mean "make a prediction, by evaluating an already trained model"; in this context inferring properties of the model is referred to as ''training'' or ''learning'' (rather than ''inference''), and using a model for prediction is referred to as ''inference'' (instead of ''prediction' ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Periodogram

In signal processing, a periodogram is an estimate of the spectral density of a signal. The term was coined by Arthur Schuster in 1898. Today, the periodogram is a component of more sophisticated methods (see spectral estimation). It is the most common tool for examining the amplitude vs frequency characteristics of FIR filters and window functions. FFT spectrum analyzers are also implemented as a time-sequence of periodograms. Definition There are at least two different definitions in use today. One of them involves time-averaging, and one does not. Time-averaging is also the purview of other articles ( Bartlett's method and Welch's method). This article is not about time-averaging. The definition of interest here is that the power spectral density of a continuous function, x(t), is the Fourier transform of its auto-correlation function (see Cross-correlation theorem, Spectral density#Power spectral density, and Wiener–Khinchin theorem): :\mathcal\ = X(f)\cdot ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Autoregressive Model

In statistics, econometrics and signal processing, an autoregressive (AR) model is a representation of a type of random process; as such, it is used to describe certain time-varying processes in nature, economics, etc. The autoregressive model specifies that the output variable depends linearly on its own previous values and on a stochastic term (an imperfectly predictable term); thus the model is in the form of a stochastic difference equation (or recurrence relation which should not be confused with differential equation). Together with the moving-average (MA) model, it is a special case and key component of the more general autoregressive–moving-average (ARMA) and autoregressive integrated moving average (ARIMA) models of time series, which have a more complicated stochastic structure; it is also a special case of the vector autoregressive model (VAR), which consists of a system of more than one interlocking stochastic difference equation in more than one evolving random vari ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Prentice-Hall

Prentice Hall was an American major educational publisher owned by Savvas Learning Company. Prentice Hall publishes print and digital content for the 6–12 and higher-education market, and distributes its technical titles through the Safari Books Online e-reference service. History On October 13, 1913, law professor Charles Gerstenberg and his student Richard Ettinger founded Prentice Hall. Gerstenberg and Ettinger took their mothers' maiden names, Prentice and Hall, to name their new company. Prentice Hall became known as a publisher of trade books by authors such as Norman Vincent Peale; elementary, secondary, and college textbooks; loose-leaf information services; and professional books. Prentice Hall acquired the training provider Deltak in 1979. Prentice Hall was acquired by Gulf+Western in 1984, and became part of that company's publishing division Simon & Schuster. S&S sold several Prentice Hall subsidiaries: Deltak and Resource Systems were sold to National Educatio ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Entropy

Entropy is a scientific concept, as well as a measurable physical property, that is most commonly associated with a state of disorder, randomness, or uncertainty. The term and the concept are used in diverse fields, from classical thermodynamics, where it was first recognized, to the microscopic description of nature in statistical physics, and to the principles of information theory. It has found far-ranging applications in chemistry and physics, in biological systems and their relation to life, in cosmology, economics, sociology, weather science, climate change, and information systems including the transmission of information in telecommunication. The thermodynamic concept was referred to by Scottish scientist and engineer William Rankine in 1850 with the names ''thermodynamic function'' and ''heat-potential''. In 1865, German physicist Rudolf Clausius, one of the leading founders of the field of thermodynamics, defined it as the quotient of an infinitesimal amount of h ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Information Theory

Information theory is the scientific study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a die (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy. Important sub-fields of information theory include sourc ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |