|

Learning Rate

In machine learning and statistics, the learning rate is a tuning parameter in an optimization algorithm that determines the step size at each iteration while moving toward a minimum of a loss function. Since it influences to what extent newly acquired information overrides old information, it metaphorically represents the speed at which a machine learning model "learns". In the adaptive control literature, the learning rate is commonly referred to as gain. In setting a learning rate, there is a trade-off between the rate of convergence and overshooting. While the descent direction is usually determined from the gradient of the loss function, the learning rate determines how big a step is taken in that direction. A too high learning rate will make the learning jump over minima but a too low learning rate will either take too long to converge or get stuck in an undesirable local minimum. In order to achieve faster convergence, prevent oscillations and getting stuck in undesi ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Machine Learning

Machine learning (ML) is a field of study in artificial intelligence concerned with the development and study of Computational statistics, statistical algorithms that can learn from data and generalise to unseen data, and thus perform Task (computing), tasks without explicit Machine code, instructions. Within a subdiscipline in machine learning, advances in the field of deep learning have allowed Neural network (machine learning), neural networks, a class of statistical algorithms, to surpass many previous machine learning approaches in performance. ML finds application in many fields, including natural language processing, computer vision, speech recognition, email filtering, agriculture, and medicine. The application of ML to business problems is known as predictive analytics. Statistics and mathematical optimisation (mathematical programming) methods comprise the foundations of machine learning. Data mining is a related field of study, focusing on exploratory data analysi ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Stochastic Gradient Descent

Stochastic gradient descent (often abbreviated SGD) is an Iterative method, iterative method for optimizing an objective function with suitable smoothness properties (e.g. Differentiable function, differentiable or Subderivative, subdifferentiable). It can be regarded as a stochastic approximation of gradient descent optimization, since it replaces the actual gradient (calculated from the entire data set) by an estimate thereof (calculated from a randomly selected subset of the data). Especially in high-dimensional optimization problems this reduces the very high Computational complexity, computational burden, achieving faster iterations in exchange for a lower Rate of convergence, convergence rate. The basic idea behind stochastic approximation can be traced back to the Robbins–Monro algorithm of the 1950s. Today, stochastic gradient descent has become an important optimization method in machine learning. Background Both statistics, statistical M-estimation, estimation and ma ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

YouTube

YouTube is an American social media and online video sharing platform owned by Google. YouTube was founded on February 14, 2005, by Steve Chen, Chad Hurley, and Jawed Karim who were three former employees of PayPal. Headquartered in San Bruno, California, it is the second-most-visited website in the world, after Google Search. In January 2024, YouTube had more than 2.7billion monthly active users, who collectively watched more than one billion hours of videos every day. , videos were being uploaded to the platform at a rate of more than 500 hours of content per minute, and , there were approximately 14.8billion videos in total. On November 13, 2006, YouTube was purchased by Google for $1.65 billion (equivalent to $ billion in ). Google expanded YouTube's business model of generating revenue from advertisements alone, to offering paid content such as movies and exclusive content produced by and for YouTube. It also offers YouTube Premium, a paid subs ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Self-tuning

In control theory a self-tuning system is capable of optimizing its own internal running parameters in order to maximize or minimize the fulfilment of an objective function; typically the maximization of efficiency or error minimization. Self-tuning and auto-tuning often refer to the same concept. Many software research groups consider auto-tuning the proper nomenclature. Self-tuning systems typically exhibit non-linear adaptive control. Self-tuning systems have been a hallmark of the aerospace industry for decades, as this sort of feedback is necessary to generate optimal multi-variable control for non-linear processes. In the telecommunications industry, adaptive communications are often used to dynamically modify operational system parameters to maximize efficiency and robustness. Examples Examples of self-tuning systems in computing include: * TCP (Transmission Control Protocol) *Microsoft SQL Server (Newer implementations only) * FFTW (Fastest Fourier Transform in the ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Model Selection

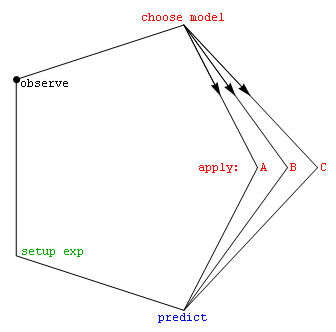

Model selection is the task of selecting a model from among various candidates on the basis of performance criterion to choose the best one. In the context of machine learning and more generally statistical analysis, this may be the selection of a statistical model from a set of candidate models, given data. In the simplest cases, a pre-existing set of data is considered. However, the task can also involve the design of experiments such that the data collected is well-suited to the problem of model selection. Given candidate models of similar predictive or explanatory power, the simplest model is most likely to be the best choice (Occam's razor). state, "The majority of the problems in statistical inference can be considered to be problems related to statistical modeling". Relatedly, has said, "How hetranslation from subject-matter problem to statistical model is done is often the most critical part of an analysis". Model selection may also refer to the problem of selecting ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

AutoML

Automated machine learning (AutoML) is the process of automating the tasks of applying machine learning to real-world problems. It is the combination of automation and ML. AutoML potentially includes every stage from beginning with a raw dataset to building a machine learning model ready for deployment. AutoML was proposed as an artificial intelligence-based solution to the growing challenge of applying machine learning. The high degree of automation in AutoML aims to allow non-experts to make use of machine learning models and techniques without requiring them to become experts in machine learning. Automating the process of applying machine learning end-to-end additionally offers the advantages of producing simpler solutions, faster creation of those solutions, and models that often outperform hand-designed models. Common techniques used in AutoML include hyperparameter optimization, meta-learning and neural architecture search. Comparison to the standard approach In a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Backpropagation

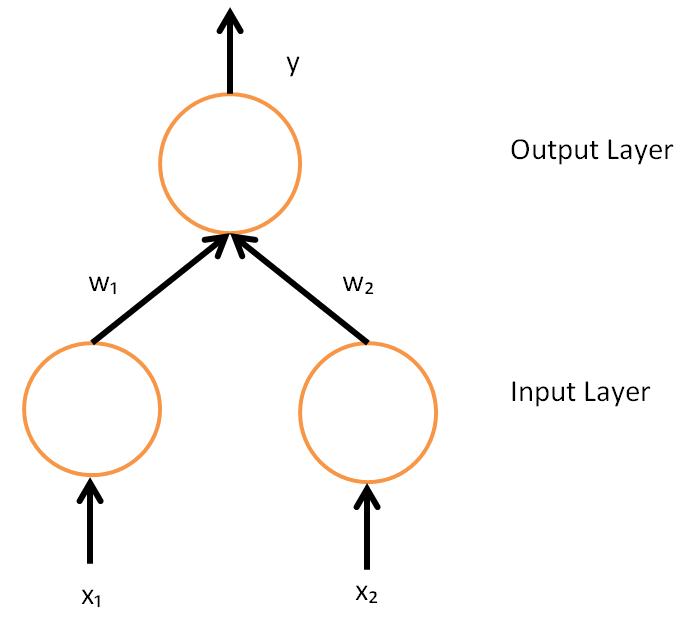

In machine learning, backpropagation is a gradient computation method commonly used for training a neural network to compute its parameter updates. It is an efficient application of the chain rule to neural networks. Backpropagation computes the gradient of a loss function with respect to the weights of the network for a single input–output example, and does so efficiently, computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this can be derived through dynamic programming. Strictly speaking, the term ''backpropagation'' refers only to an algorithm for efficiently computing the gradient, not how the gradient is used; but the term is often used loosely to refer to the entire learning algorithm – including how the gradient is used, such as by stochastic gradient descent, or as an intermediate step in a more complicated optimizer, such as Adaptive Moment Estimation. The ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Overfitting

In mathematical modeling, overfitting is "the production of an analysis that corresponds too closely or exactly to a particular set of data, and may therefore fail to fit to additional data or predict future observations reliably". An overfitted model is a mathematical model that contains more parameters than can be justified by the data. In the special case where the model consists of a polynomial function, these parameters represent the degree of a polynomial. The essence of overfitting is to have unknowingly extracted some of the residual variation (i.e., the Statistical noise, noise) as if that variation represented underlying model structure. Underfitting occurs when a mathematical model cannot adequately capture the underlying structure of the data. An under-fitted model is a model where some parameters or terms that would appear in a correctly specified model are missing. Underfitting would occur, for example, when fitting a linear model to nonlinear data. Such a model ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Variable Metric Methods

In numerical analysis, a quasi-Newton method is an iterative numerical method used either to find zeroes or to find local maxima and minima of functions via an iterative recurrence formula much like the one for Newton's method, except using approximations of the derivatives of the functions in place of exact derivatives. Newton's method requires the Jacobian matrix of all partial derivatives of a multivariate function when used to search for zeros or the Hessian matrix when used for finding extrema. Quasi-Newton methods, on the other hand, can be used when the Jacobian matrices or Hessian matrices are unavailable or are impractical to compute at every iteration. Some iterative methods that reduce to Newton's method, such as sequential quadratic programming, may also be considered quasi-Newton methods. Search for zeros: root finding Newton's method to find zeroes of a function g of multiple variables is given by x_ = x_n - _g(x_n) g(x_n), where _g(x_n) is the left inverse ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hyperparameter Optimization

In machine learning, hyperparameter optimization or tuning is the problem of choosing a set of optimal hyperparameters for a learning algorithm. A hyperparameter is a parameter whose value is used to control the learning process, which must be configured before the process starts. Hyperparameter optimization determines the set of hyperparameters that yields an optimal model which minimizes a predefined loss function on a given data set. The objective function takes a set of hyperparameters and returns the associated loss. Cross-validation is often used to estimate this generalization performance, and therefore choose the set of values for hyperparameters that maximize it. Approaches Grid search The traditional method for hyperparameter optimization has been ''grid search'', or a ''parameter sweep'', which is simply an exhaustive searching through a manually specified subset of the hyperparameter space of a learning algorithm. A grid search algorithm must be guided by so ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Adaptive Algorithm

An adaptive algorithm is an algorithm that changes its behavior at the time it is run, based on information available and on ''a priori'' defined reward mechanism (or criterion). Such information could be the story of recently received data, information on the available computational resources, or other run-time acquired (or ''a priori'' known) information related to the environment in which it operates. Among the most used adaptive algorithms is the Widrow-Hoff’s least mean squares (LMS), which represents a class of stochastic gradient-descent algorithms used in adaptive filtering and machine learning. In adaptive filtering the LMS is used to mimic a desired filter by finding the filter coefficients that relate to producing the least mean square of the error signal (difference between the desired and the actual signal). For example, stable partition, using no additional memory is ''O''(''n'' lg ''n'') but given ''O''(''n'') memory, it can be ''O''(''n'') in time. As implemen ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Floor And Ceiling Functions

In mathematics, the floor function is the function that takes as input a real number , and gives as output the greatest integer less than or equal to , denoted or . Similarly, the ceiling function maps to the least integer greater than or equal to , denoted or . For example, for floor: , , and for ceiling: , and . The floor of is also called the integral part, integer part, greatest integer, or entier of , and was historically denoted (among other notations). However, the same term, ''integer part'', is also used for truncation towards zero, which differs from the floor function for negative numbers. For an integer , . Although and produce graphs that appear exactly alike, they are not the same when the value of is an exact integer. For example, when , . However, if , then , while . Notation The ''integral part'' or ''integer part'' of a number ( in the original) was first defined in 1798 by Adrien-Marie Legendre in his proof of the Legendre's formula. Carl ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |