
Extensions Of Fisher's Method

In statistics, extensions of Fisher's method are a group of approaches that allow approximately valid statistical inferences to be made when the assumptions required for the direct application of Fisher's method do not hold. Fisher's method combines the p-values from different statistical tests into a single overall test; it requires that the individual test statistics (or, more immediately, their resulting p-values) be statistically independent.

Dependent statistics: A principal limitation of Fisher's method is that it is designed exclusively for independent p-values, which makes it unreliable for combining dependent p-values. To overcome this limitation, a number of methods were developed to extend its utility.

Known covariance (Brown's method): Fisher's method showed that the log-sum of $k$ independent p-values follows a $\chi^2$-distribution with $2k$ degrees of freedom:

$$X = -2\sum_{i=1}^{k} \log_e(p_i) \sim \chi^2(2k)$$

...

Statistics

Statistics (from German: Statistik, "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments. When census data (comprising every member of the target population) cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample ...

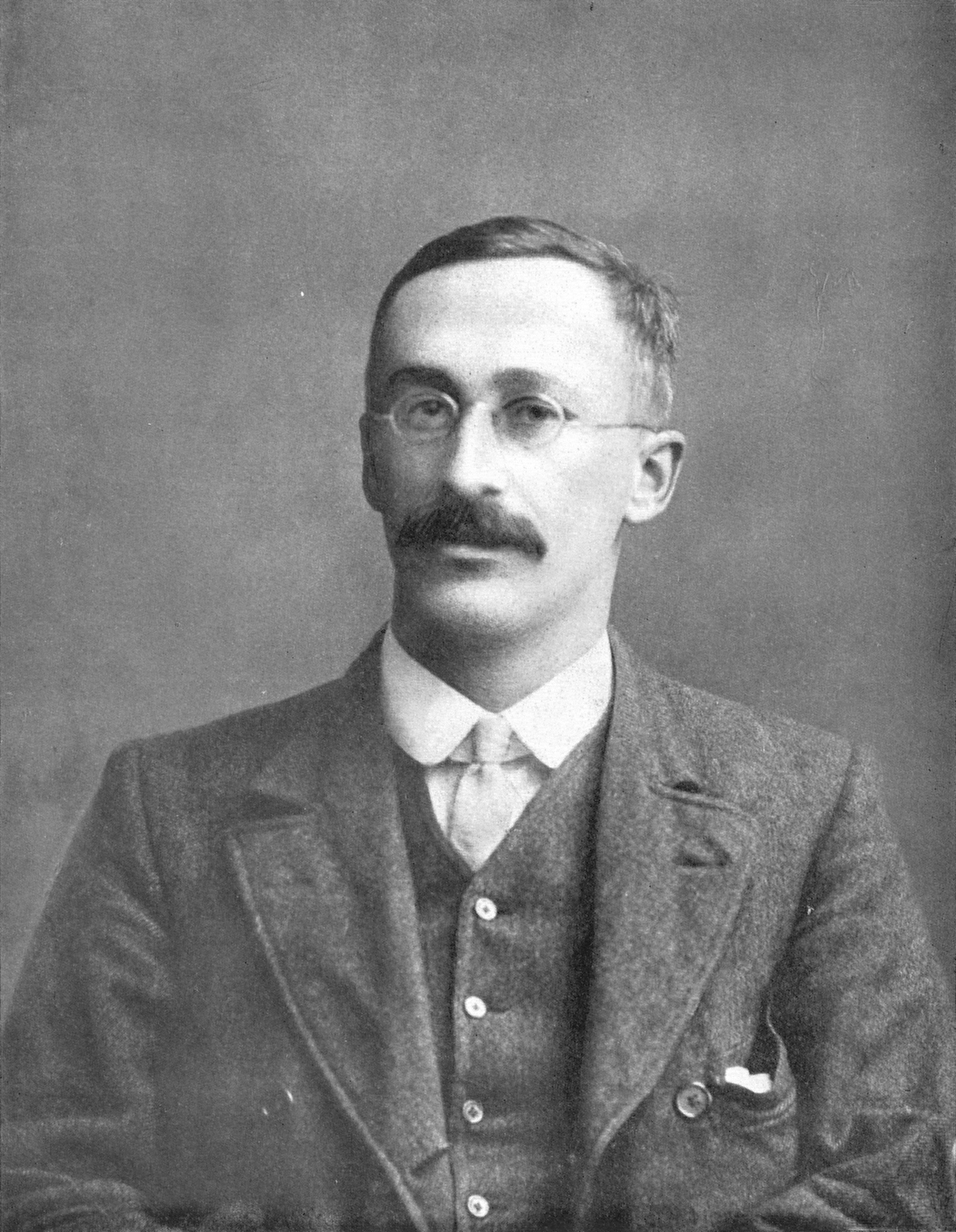

Fisher's Method

In statistics, Fisher's method, also known as Fisher's combined probability test, is a technique for data fusion or "meta-analysis" (analysis of analyses). It was developed by and named for Ronald Fisher. In its basic form, it is used to combine the results from several independent tests bearing upon the same overall hypothesis ($H_0$).

Application to independent test statistics: Fisher's method combines extreme value probabilities from each test, commonly known as "p-values", into one test statistic ($X^2$) using the formula

$$X^2_{2k} = -2\sum_{i=1}^{k} \ln p_i,$$

where $p_i$ is the p-value for the $i$th hypothesis test. When the p-values tend to be small, the test statistic $X^2$ will be large, which suggests that the null hypotheses are not true for every test. When all the null hypotheses are true, and the $p_i$ (or their corresponding test statistics) are independent, $X^2$ has a chi-squared distribution with $2k$ degrees of freedom, where $k$ is ...
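
The formula above can be sketched in a few lines of Python. This is a minimal illustration and not a reference implementation: the function name `fisher_combined` is hypothetical, and the tail probability uses the closed form for a chi-squared distribution with an even number of degrees of freedom, $P(X > x) = e^{-x/2}\sum_{j=0}^{k-1} (x/2)^j / j!$, which is a standard identity that is not stated in the excerpt. The result is only meaningful when the input p-values are independent.

```python
import math


def fisher_combined(p_values):
    """Return (X2, combined p-value) for a list of independent p-values."""
    if not p_values or any(not (0.0 < p <= 1.0) for p in p_values):
        raise ValueError("p-values must lie in (0, 1]")
    k = len(p_values)
    # Test statistic: X^2 = -2 * sum(ln p_i)
    x2 = -2.0 * sum(math.log(p) for p in p_values)
    # Upper tail of chi-squared with 2k degrees of freedom (closed form
    # for even degrees of freedom): exp(-x/2) * sum_{j<k} (x/2)^j / j!
    half = x2 / 2.0
    term = 1.0
    total = 1.0
    for j in range(1, k):
        term *= half / j
        total += term
    return x2, math.exp(-half) * total


x2, p_combined = fisher_combined([0.05, 0.05])
print(x2, p_combined)
```

With a single p-value the combined p-value equals the input, which is a quick sanity check on the tail formula.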

P-value

In null-hypothesis significance testing, the p-value is the probability of obtaining test results at least as extreme as the result actually observed, under the assumption that the null hypothesis is correct. A very small p-value means that such an extreme observed outcome would be very unlikely under the null hypothesis. Even though reporting p-values of statistical tests is common practice in academic publications of many quantitative fields, misinterpretation and misuse of p-values is widespread and has been a major topic in mathematics and metascience. In 2016, the American Statistical Association (ASA) made a formal statement that "p-values do not measure the probability that the studied hypothesis is true, or the probability that the data were produced by random chance alone" and that "a p-value, or statistical significance, does not measure the size of an effect or the importance of a result" or "evidence regarding a model or hypothesis". That ...
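
As a small worked example of "at least as extreme", the sketch below computes a one-sided p-value for a coin-tossing experiment. The scenario (9 heads in 10 tosses, null hypothesis of a fair coin) and the function name `binomial_p_value_upper` are assumptions made for illustration and do not come from the excerpt.

```python
from math import comb


def binomial_p_value_upper(successes, trials, p_null=0.5):
    """One-sided p-value: P(X >= successes) for X ~ Binomial(trials, p_null)."""
    return sum(
        comb(trials, x) * p_null**x * (1 - p_null) ** (trials - x)
        for x in range(successes, trials + 1)
    )


# Outcomes at least as extreme as 9 heads are 9 heads and 10 heads.
p = binomial_p_value_upper(9, 10)
print(p)  # 11/1024 = 0.0107421875
```

The value is the probability of the observed data or something more extreme if the coin is fair; it is not the probability that the coin is fair.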

Chi-squared Distribution

In probability theory and statistics, the $\chi^2$-distribution with $k$ degrees of freedom is the distribution of a sum of the squares of $k$ independent standard normal random variables. The chi-squared distribution $\chi^2_k$ is a special case of the gamma distribution and the univariate Wishart distribution. Specifically, if $X \sim \chi^2_k$ then $X \sim \text{Gamma}(\alpha=\frac{k}{2}, \theta=2)$ (where $\alpha$ is the shape parameter and $\theta$ the scale parameter of the gamma distribution) and $X \sim \text{W}_1(1,k)$. The scaled chi-squared distribution $s^2 \chi^2_k$ is a reparametrization of the gamma distribution and the univariate Wishart distribution. Specifically, if $X \sim s^2 \chi^2_k$ then $X \sim \text{Gamma}(\alpha=\frac{k}{2}, \theta=2 s^2)$ and $X \sim \text{W}_1(s^2,k)$. The chi-squared distribution is one of the most widely used probability distributions in inferential statistics, notably in hypothesis testing and in constru ...
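
The sum-of-squares definition can be checked by simulation. The sketch below draws chi-squared variates as sums of squared standard normals and compares the sample mean and variance with $k$ and $2k$; those two moments follow from the gamma representation with shape $k/2$ and scale 2, but are not stated in the excerpt. The seed, sample size, and the name `chi_squared_draw` are arbitrary choices for illustration.

```python
import random
import statistics


def chi_squared_draw(k, rng):
    """One chi-squared(k) variate as a sum of k squared standard normals."""
    return sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(k))


rng = random.Random(0)  # fixed seed so the run is repeatable
k = 3
draws = [chi_squared_draw(k, rng) for _ in range(20000)]

# Expect a sample mean near k and a sample variance near 2k.
print(statistics.fmean(draws), statistics.variance(draws))
```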

Harmonic Mean P-value

The harmonic mean p-value (HMP) is a statistical technique for addressing the multiple comparisons problem that controls the strong-sense family-wise error rate (this claim has been disputed). It improves on the power of Bonferroni correction by performing combined tests, i.e. by testing whether groups of p-values are statistically significant, like Fisher's method. However, unlike Fisher's method, it avoids the restrictive assumption that the p-values are independent. Consequently, it controls the false positive rate when tests are dependent, at the expense of less power (i.e. a higher false negative rate) when tests are independent. Besides providing an alternative to approaches such as Bonferroni correction that control the stringent family-wise error rate, it also provides an alternative to the widely used Benjamini-Hochberg procedure (BH) for controlling the less stringent false discovery rate. This is because the power of the HMP to detect significant g ...
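
For orientation, the quantity the method is named after can be computed as below. This sketch evaluates only the raw, equally weighted harmonic mean of a set of p-values, $k / \sum_i (1/p_i)$; the excerpt does not give the formula, the function name `harmonic_mean_p` is hypothetical, and any weighting or calibration needed before comparing the result with a significance threshold is not covered here.

```python
def harmonic_mean_p(p_values):
    """Equally weighted harmonic mean of p-values in (0, 1]."""
    if not p_values or any(not (0.0 < p <= 1.0) for p in p_values):
        raise ValueError("p-values must lie in (0, 1]")
    return len(p_values) / sum(1.0 / p for p in p_values)


# The harmonic mean is pulled strongly toward the smallest p-value.
print(harmonic_mean_p([0.01, 1.0]))
```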

Student's T-distribution

In probability theory and statistics, Student's t distribution (or simply the t distribution) $t_\nu$ is a continuous probability distribution that generalizes the standard normal distribution. Like the latter, it is symmetric around zero and bell-shaped. However, $t_\nu$ has heavier tails, and the amount of probability mass in the tails is controlled by the parameter $\nu$. For $\nu = 1$ the Student's t distribution $t_\nu$ becomes the standard Cauchy distribution, which has very "fat" tails; whereas for $\nu \to \infty$ it becomes the standard normal distribution $\mathcal{N}(0, 1)$, which has very "thin" tails. The name "Student" is a pseudonym used by William Sealy Gosset in his scientific publications during his work at the Guinness Brewery in Dublin, Ireland. The Student's t distribution plays a role in a number of widely used statistical analyses, including Student's t- ...
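
The two limiting cases can be checked numerically. The sketch below evaluates the Student's t density, $f(x) = \frac{\Gamma((\nu+1)/2)}{\sqrt{\nu\pi}\,\Gamma(\nu/2)} (1 + x^2/\nu)^{-(\nu+1)/2}$, and compares it with the standard Cauchy and standard normal densities. The density formula is the standard one and is not quoted in the excerpt; the function names are illustrative.

```python
import math


def student_t_pdf(x, nu):
    """Density of Student's t with nu degrees of freedom (log-space for stability)."""
    log_norm = (
        math.lgamma((nu + 1) / 2)
        - math.lgamma(nu / 2)
        - 0.5 * math.log(nu * math.pi)
    )
    return math.exp(log_norm - (nu + 1) / 2 * math.log1p(x * x / nu))


def cauchy_pdf(x):
    """Density of the standard Cauchy distribution."""
    return 1.0 / (math.pi * (1.0 + x * x))


def normal_pdf(x):
    """Density of the standard normal distribution."""
    return math.exp(-x * x / 2.0) / math.sqrt(2.0 * math.pi)


# nu = 1 matches the Cauchy density; a very large nu approaches the normal.
print(student_t_pdf(1.0, 1), cauchy_pdf(1.0))
print(student_t_pdf(1.0, 1e6), normal_pdf(1.0))
```

Evaluating the densities far from zero, for example at x = 4, shows the tail ordering described above: the value for $\nu = 1$ exceeds the value for $\nu = 5$, which in turn exceeds the normal density.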

Cauchy Distribution

The Cauchy distribution, named after Augustin-Louis Cauchy, is a continuous probability distribution. It is also known, especially among physicists, as the Lorentz distribution (after Hendrik Lorentz), Cauchy–Lorentz distribution, Lorentz(ian) function, or Breit–Wigner distribution. The Cauchy distribution $f(x; x_0,\gamma)$ is the distribution of the x-intercept of a ray issuing from $(x_0,\gamma)$ with a uniformly distributed angle. It is also the distribution of the ratio of two independent normally distributed random variables with mean zero. The Cauchy distribution is often used in statistics as the canonical example of a "pathological" distribution since both its expected value and its variance are undefined (but see below). The Cauchy distribution does not have finite moments of order greater than or equal to one; only fractional absolute moments exist. The Cauchy dist ...
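
The ratio characterization can be illustrated by simulation. The sketch below forms ratios of two independent standard normal draws (unit variance is an assumption made here so that the result is the standard Cauchy) and checks two facts about the standard Cauchy distribution that are not stated in the excerpt: its median is 0 and half of its probability mass lies in (-1, 1). The seed and sample size are arbitrary.

```python
import random
import statistics


def cauchy_draw(rng):
    """Standard Cauchy variate as the ratio of two independent N(0, 1) draws."""
    return rng.gauss(0.0, 1.0) / rng.gauss(0.0, 1.0)


rng = random.Random(1)  # fixed seed so the run is repeatable
draws = [cauchy_draw(rng) for _ in range(20000)]

median = statistics.median(draws)
inside = sum(abs(x) < 1.0 for x in draws) / len(draws)

# The median and the central mass are stable across runs, unlike the
# sample mean, which does not settle down because the mean is undefined.
print(median, inside)
```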