Probability theory is the branch of mathematics concerned with probability. Although there are several different probability interpretations, probability theory treats the concept in a rigorous mathematical manner by expressing it through a set of axioms. Typically these axioms formalise probability in terms of a probability space, which assigns a measure taking values between 0 and 1, termed the probability measure, to a set of outcomes called the sample space. Any specified subset of the sample space is called an event.

Central subjects in probability theory include discrete and continuous random variables, probability distributions, and stochastic processes (which provide mathematical abstractions of non-deterministic or uncertain processes or measured quantities that may either be single occurrences or evolve over time in a random fashion).

Although it is not possible to perfectly predict random events, much can be said about their behavior. Two major results in probability theory describing such behaviour are the law of large numbers and the central limit theorem.

As a mathematical foundation for statistics, probability theory is essential to many human activities that involve quantitative analysis of data. Methods of probability theory also apply to descriptions of complex systems given only partial knowledge of their state, as in statistical mechanics or sequential estimation. A great discovery of twentieth-century physics was the probabilistic nature of physical phenomena at atomic scales, described in quantum mechanics.

History of probability

The modern mathematical theory of probability has its roots in attempts to analyze games of chance by Gerolamo Cardano in the sixteenth century, and by Pierre de Fermat and Blaise Pascal in the seventeenth century (for example the "problem of points"). Christiaan Huygens published a book on the subject in 1657. In the 19th century, what is considered the classical definition of probability was completed by Pierre Laplace.

Initially, probability theory mainly considered events, and its methods were mainly combinatorial. Eventually, analytical considerations compelled the incorporation of variables into the theory.

This culminated in modern probability theory, on foundations laid by Andrey Nikolaevich Kolmogorov. Kolmogorov combined the notion of sample space, introduced by Richard von Mises, and measure theory and presented his axiom system for probability theory in 1933. This became the mostly undisputed axiomatic basis for modern probability theory, but alternatives exist, such as the adoption of finite rather than countable additivity by Bruno de Finetti.

Treatment

Most introductions to probability theory treat discrete probability distributions and continuous probability distributions separately. The measure theory-based treatment of probability covers the discrete, continuous, a mix of the two, and more.

Motivation

Consider an experiment that can produce a number of outcomes. The set of all outcomes is called the ''sample space'' of the experiment. The ''power set'' of the sample space (or equivalently, the event space) is formed by considering all different collections of possible results. For example, rolling an honest die produces one of six possible results. One collection of possible results corresponds to getting an odd number. Thus, the subset $\{1,3,5\}$ is an element of the power set of the sample space of die rolls. These collections are called ''events''. In this case, $\{1,3,5\}$ is the event that the die falls on some odd number. If the results that actually occur fall in a given event, that event is said to have occurred.

Probability is a way of assigning every "event" a value between zero and one, with the requirement that the event made up of all possible results (in our example, the event $\{1,2,3,4,5,6\}$) be assigned a value of one. To qualify as a probability distribution, the assignment of values must satisfy the requirement that if you look at a collection of mutually exclusive events (events that contain no common results, e.g., the events $\{1,6\}$, $\{3\}$, and $\{2,4\}$ are all mutually exclusive), the probability that any of these events occurs is given by the sum of the probabilities of the events.

The probability that any one of the events $\{1,6\}$, $\{3\}$, or $\{2,4\}$ will occur is 5/6. This is the same as saying that the probability of event $\{1,2,3,4,6\}$ is 5/6. This event encompasses the possibility of any number except five being rolled. The mutually exclusive event $\{5\}$ has a probability of 1/6, and the event $\{1,2,3,4,5,6\}$ has a probability of 1, that is, absolute certainty.
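The additivity requirement for the die example can be checked with a short Python sketch. It assumes a fair die and takes the three mutually exclusive events to be {1, 6}, {3} and {2, 4}; the names `SAMPLE_SPACE` and `prob` are illustrative choices, not notation from the text.

```python
from fractions import Fraction

# Sample space for one roll of a fair six-sided die (fairness is an assumption).
SAMPLE_SPACE = frozenset(range(1, 7))


def prob(event):
    """Probability of an event (a subset of SAMPLE_SPACE) when all outcomes are equally likely."""
    event = frozenset(event)
    if not event <= SAMPLE_SPACE:
        raise ValueError("event must be a subset of the sample space")
    return Fraction(len(event), len(SAMPLE_SPACE))


# Three mutually exclusive events: no outcome belongs to more than one of them.
a, b, c = {1, 6}, {3}, {2, 4}
union = a | b | c  # the event "any number except five"

print(prob(a) + prob(b) + prob(c))  # 5/6
print(prob(union))                  # 5/6
print(prob({5}))                    # 1/6
print(prob(SAMPLE_SPACE))           # 1
```

Exact fractions are used instead of floats so that the sum of the three probabilities can be compared with the probability of the union without rounding error.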

When doing calculations using the outcomes of an experiment, it is necessary that all those elementary events have a number assigned to them. This is done using a random variable. A random variable is a function that assigns to each elementary event in the sample space a real number. This function is usually denoted by a capital letter. In the case of a die, the assignment of a number to each elementary event can be done using the identity function. This does not always work. For example, when flipping a coin the two possible outcomes are "heads" and "tails". In this example, the random variable ''X'' could assign to the outcome "heads" the number "0" ($X(\text{heads}) = 0$) and to the outcome "tails" the number "1" ($X(\text{tails}) = 1$).
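A random variable is an ordinary function on outcomes, which a few lines of Python can make concrete. The sketch below assumes a fair coin; `outcome_prob` and `distribution` are hypothetical helper names introduced only for illustration.

```python
from fractions import Fraction

# Outcomes of a coin flip with their probabilities (a fair coin is assumed).
outcome_prob = {"heads": Fraction(1, 2), "tails": Fraction(1, 2)}


def X(outcome):
    """Random variable: maps each non-numeric outcome to a real number."""
    return {"heads": 0, "tails": 1}[outcome]


def distribution(rv, probs):
    """Probability of each value of rv, summed over the outcomes that map to it."""
    dist = {}
    for outcome, p in probs.items():
        value = rv(outcome)
        dist[value] = dist.get(value, Fraction(0)) + p
    return dist


print(X("heads"), X("tails"))
print(distribution(X, outcome_prob))
```

The function `distribution` shows how the probabilities on outcomes carry over to probabilities on the numbers that ''X'' takes.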

Discrete probability distributions

Discrete probability theory deals with events that occur in countable sample spaces.

Examples: Throwing dice, experiments with decks of cards, random walk, and tossing coins.

Classical definition:

Initially the probability of an event to occur was defined as the number of cases favorable for the event, over the number of total outcomes possible in an equiprobable sample space: see Classical definition of probability.

For example, if the event is "occurrence of an even number when a die is rolled", the probability is given by $\tfrac{3}{6} = \tfrac{1}{2}$, since 3 faces out of the 6 have even numbers and each face has the same probability of appearing.

Modern definition:

The modern definition starts with a finite or countable set called the sample space, which relates to the set of all ''possible outcomes'' in the classical sense, denoted by $\Omega$. It is then assumed that for each element $x \in \Omega$, an intrinsic "probability" value $f(x)$ is attached, which satisfies the following properties:

# $f(x) \in [0,1]$ for all $x \in \Omega$;

# $\sum_{x \in \Omega} f(x) = 1$.

That is, the probability function ''f''(''x'') lies between zero and one for every value of ''x'' in the sample space ''Ω'', and the sum of ''f''(''x'') over all values ''x'' in the sample space ''Ω'' is equal to 1. An ''event'' is defined as any subset $E$ of the sample space $\Omega$. The ''probability'' of the event $E$ is defined as

: $P(E) = \sum_{x \in E} f(x).$

So, the probability of the entire sample space is 1, and the probability of the null event is 0.

The function $f(x)$ mapping a point in the sample space to the "probability" value is called a ''probability mass function'', abbreviated as ''pmf''. The modern definition does not try to answer how probability mass functions are obtained; instead, it builds a theory that assumes their existence.
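The modern discrete definition can be sketched in Python by storing a probability mass function as a dictionary. The loaded-die values below are made up for illustration, and `is_valid_pmf` and `prob` are hypothetical helper names.

```python
from fractions import Fraction

# Hypothetical pmf for a loaded die: face 6 is as likely as all other faces combined.
f = {1: Fraction(1, 10), 2: Fraction(1, 10), 3: Fraction(1, 10),
     4: Fraction(1, 10), 5: Fraction(1, 10), 6: Fraction(1, 2)}


def is_valid_pmf(pmf):
    """Check both properties: every value lies in [0, 1] and the values sum to 1."""
    return all(0 <= p <= 1 for p in pmf.values()) and sum(pmf.values()) == 1


def prob(event, pmf):
    """P(E): the sum of f(x) over x in E (raises KeyError for x outside the sample space)."""
    return sum((pmf[x] for x in event), Fraction(0))


print(is_valid_pmf(f))        # True
print(prob({2, 4, 6}, f))     # 7/10
print(prob(set(f), f))        # 1  (entire sample space)
print(prob(set(), f))         # 0  (null event)
```

Unlike the classical definition, nothing here requires the outcomes to be equally likely; the pmf is simply taken as given.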

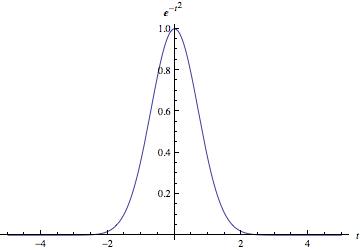

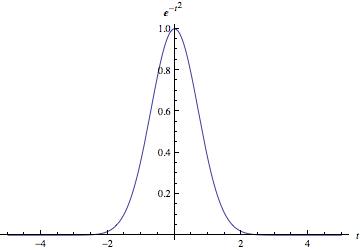

Continuous probability distributions

Continuous probability theory deals with events that occur in a continuous sample space.

Classical definition:

The classical definition breaks down when confronted with the continuous case. See Bertrand's paradox.

Modern definition:

If the sample space of a random variable ''X'' is the set of real numbers ($\mathbb{R}$) or a subset thereof, then a function called the ''cumulative distribution function'' (or ''cdf'') $F$ exists, defined by $F(x) = P(X \le x)$. That is, ''F''(''x'') returns the probability that ''X'' will be less than or equal to ''x''.

The cdf necessarily satisfies the following properties.

# $F$ is a monotonically non-decreasing, right-continuous function;

# $\lim_{x \to -\infty} F(x) = 0$;

# $\lim_{x \to \infty} F(x) = 1$.
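As an illustrative check, the three properties can be tested numerically for two example cdfs chosen here as assumptions: an exponential distribution (continuous) and a fair six-sided die (a step function). The limits are approximated by evaluating far to the left and right, so this is a sanity check on a grid, not a proof.

```python
import math


def exponential_cdf(x, rate=1.0):
    """F(x) = P(X <= x) for an exponential distribution with the given rate."""
    return 1.0 - math.exp(-rate * x) if x >= 0 else 0.0


def die_cdf(x):
    """F(x) = P(X <= x) for a fair six-sided die: a right-continuous step function."""
    return min(max(math.floor(x), 0), 6) / 6


def is_non_decreasing(cdf, points):
    """True if the cdf never decreases along the sorted sample points."""
    values = [cdf(x) for x in sorted(points)]
    return all(a <= b for a, b in zip(values, values[1:]))


grid = [i / 10 for i in range(-50, 501)]
for cdf in (exponential_cdf, die_cdf):
    print(cdf.__name__, is_non_decreasing(cdf, grid), cdf(-1e9), cdf(1e9))

# Right-continuity at a jump: the value at 3 equals the value just to the right of 3.
print(die_cdf(3.0), die_cdf(3.0 + 1e-9), die_cdf(3.0 - 1e-9))
```

The die example shows that a cdf may jump; at each jump it takes the upper value, which is what right-continuity means.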

If $F$ is absolutely continuous, i.e., its derivative exists and integrating the derivative gives us the cdf back again, then the random variable ''X'' is said to have a ''probability density function'' (''pdf''), or simply ''density'', $f(x) = \frac{dF(x)}{dx}$.

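For a set $E \subseteq \mathbb{R}$, the probability of the random variable ''X'' being in $E$ is

: $P(X \in E) = \int_{x \in E} dF(x).$

In case the probability density function exists, this can be written as

: $P(X \in E) = \int_{x \in E} f(x)\,dx.$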
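For an interval, the integral of the density should agree with the difference of cdf values at the endpoints. The sketch below checks this for a standard normal distribution, which is an example chosen here and not one the text prescribes; the midpoint rule and the helper names are likewise illustrative assumptions.

```python
import math


def normal_pdf(x):
    """Density of the standard normal distribution."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def normal_cdf(x):
    """Cdf of the standard normal distribution, expressed through the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def integrate_midpoint(f, a, b, steps=10_000):
    """Approximate the integral of f over [a, b] with the midpoint rule."""
    width = (b - a) / steps
    return width * sum(f(a + (i + 0.5) * width) for i in range(steps))


a, b = -1.0, 2.0
via_pdf = integrate_midpoint(normal_pdf, a, b)  # integral of f(x) dx over E = [a, b]
via_cdf = normal_cdf(b) - normal_cdf(a)         # F(b) - F(a)
print(via_pdf, via_cdf)
```

The two printed values should agree to several decimal places; any remaining difference comes from the numerical integration, not from the theory.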

Whereas the ''pdf'' exists only for continuous random variables, the ''cdf'' exists for all random variables (including discrete random variables) that take values in $\mathbb{R}$.

These concepts can be generalized for multidimensional cases on $\mathbb{R}^n$ and other continuous sample spaces.

Measure-theoretic probability theory

The ''raison d'être'' of the measure-theoretic treatment of probability is that it unifies the discrete and the continuous cases, and makes the difference a question of which measure is used. Furthermore, it covers distributions that are neither discrete nor continuous nor mixtures of the two.

An example of such distributions could be a mix of discrete and continuous distributions, for example, a random variable that is 0 with probability 1/2, and takes a random value from a normal distribution with probability 1/2. It can still be studied to some extent by considering it to have a pdf of $(\delta[x] + \varphi(x))/2$, where