Data scraping is a technique where a computer program extracts data from human-readable output coming from another program.

Description

Normally, data transfer between programs is accomplished using data structures suited for automated processing by computers, not people. Such interchange formats and protocols are typically rigidly structured, well-documented, easily parsed, and minimize ambiguity. Very often, these transmissions are not human-readable at all.

Thus, the key element that distinguishes data scraping from regular parsing is that the data being consumed is intended for display to an end-user, rather than as an input to another program. It is therefore usually neither documented nor structured for convenient parsing. Data scraping often involves ignoring binary data (usually images or multimedia data), display formatting, redundant labels, superfluous commentary, and other information which is either irrelevant or hinders automated processing.
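As a rough illustration of the distinction above, the following Python sketch pulls values out of text that was laid out for a human reader. The sample output, the field labels, and the function name `scrape_fields` are all invented for this example; real output would need its own patterns.

```python
import re

# Hypothetical program output formatted for a person: rules, labels, padding.
SAMPLE_OUTPUT = """\
========================================
        ACCOUNT SUMMARY (page 1)
========================================
Customer name ....... Jane Example
Balance (USD) ....... 1,234.50
*** Thank you for your business! ***
"""

# A label, a run of dot leaders, then the value.
FIELD_PATTERN = re.compile(
    r"^(?P<label>[A-Za-z ()]+?)\s*\.{2,}\s*(?P<value>.+?)\s*$"
)


def scrape_fields(text: str) -> dict[str, str]:
    """Return label -> value pairs, skipping lines that are pure decoration."""
    fields = {}
    for line in text.splitlines():
        match = FIELD_PATTERN.match(line)
        if match:
            fields[match.group("label").strip()] = match.group("value")
    return fields


fields = scrape_fields(SAMPLE_OUTPUT)
# Display formatting (the thousands separator) is removed before conversion.
balance = float(fields["Balance (USD)"].replace(",", ""))
```

The banner, the rule lines, and the closing message are exactly the kind of display formatting and superfluous commentary that the scraper has to discard.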

Data scraping is most often done either to interface to a legacy system, which has no other mechanism which is compatible with current hardware, or to interface to a third-party system which does not provide a more convenient API. In the second case, the operator of the third-party system will often see screen scraping as unwanted, due to reasons such as increased system load, the loss of advertisement revenue, or the loss of control of the information content.

Data scraping is generally considered an ''ad hoc'', inelegant technique, often used only as a "last resort" when no other mechanism for data interchange is available. Aside from the higher programming and processing overhead, output displays intended for human consumption often change structure frequently. Humans can cope with this easily, but a computer program will fail. Depending on the quality and the extent of error handling logic present in the computer, this failure can result in error messages, corrupted output or even program crashes.
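One way to keep a layout change from silently corrupting output is to validate every assumption about the display and fail loudly. The sketch below is only illustrative: the line format `Price: 12.50 USD`, the exception name `LayoutChangedError`, and the function `parse_price_line` are assumptions made for this example.

```python
class LayoutChangedError(Exception):
    """Raised when scraped output no longer matches the expected layout."""


def parse_price_line(line: str) -> float:
    """Parse a line that is expected to look like 'Price: 12.50 USD'."""
    label, sep, rest = line.partition(":")
    if sep != ":" or label.strip() != "Price":
        raise LayoutChangedError(f"unexpected label in line: {line!r}")
    parts = rest.split()
    if len(parts) != 2 or parts[1] != "USD":
        raise LayoutChangedError(f"unexpected value format in line: {line!r}")
    try:
        return float(parts[0])
    except ValueError as exc:
        raise LayoutChangedError(f"price is not numeric in line: {line!r}") from exc


price = parse_price_line("Price: 12.50 USD")
```

With checks like these, a redesigned display produces a clear error message instead of a wrong number or an unrelated crash further down the pipeline.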

However, setting up a data scraping pipeline nowadays is straightforward, requiring minimal programming effort to meet practical needs (especially in biomedical data integration).

Technical variants

Screen scraping

Although the use of physical "dumb terminal" IBM 3270s is slowly diminishing, as more and more mainframe applications acquire Web interfaces, some Web applications merely continue to use the technique of screen scraping to capture old screens and transfer the data to modern front-ends.

Screen scraping is normally associated with the programmatic collection of visual data from a source, instead of parsing data as in web scraping. Originally, ''screen scraping'' referred to the practice of reading text data from a computer display terminal's screen. This was generally done by reading the terminal's memory through its auxiliary port, or by connecting the terminal output port of one computer system to an input port on another. The term screen scraping is also commonly used to refer to the bidirectional exchange of data. This could be the simple cases where the controlling program navigates through the user interface, or more complex scenarios where the controlling program is entering data into an interface meant to be used by a human.

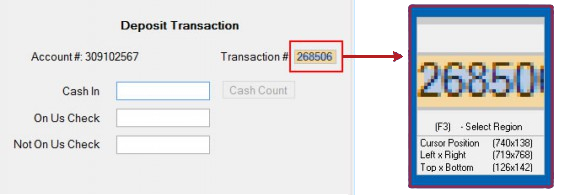

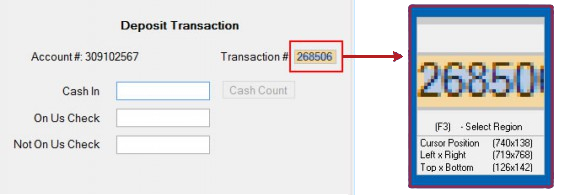

As a concrete example of a classic screen scraper, consider a hypothetical legacy system dating from the 1960s, the dawn of computerized data processing. Computer to user interfaces from that era were often simply text-based dumb terminals which were not much more than virtual teleprinters (such systems are still in use, for various reasons). The desire to interface such a system to more modern systems is common. A robust solution will often require things no longer available, such as source code, system documentation, APIs, or programmers with experience in a 50-year-old computer system. In such cases, the only feasible solution may be to write a screen scraper that "pretends" to be a user at a terminal. The screen scraper might connect to the legacy system via Telnet, emulate the keystrokes needed to navigate the old user interface, process the resulting display output, extract the desired data, and pass it on to the modern system. A sophisticated and resilient implementation of this kind, built on a platform providing the governance and control required by a major enterprise (e.g. change control, security, user management, data protection, operational audit, load balancing, and queue management), could be said to be an example of robotic process automation software, called RPA, or RPAAI for self-guided RPA 2.0 based on artificial intelligence.
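The "pretend to be a user" loop described above can be sketched as follows. To keep the example self-contained, the Telnet connection is replaced by an in-memory stand-in; the class `FakeLegacyTerminal`, its menus, its prompts, and the function `lookup_quantity` are all hypothetical and do not describe any real system.

```python
class FakeLegacyTerminal:
    """In-memory stand-in for a text terminal session (hypothetical system)."""

    def __init__(self):
        self._screen = "MAIN MENU\n1) Inventory lookup\n2) Exit\nSELECT> "

    def read_screen(self) -> str:
        return self._screen

    def send_keys(self, keys: str) -> None:
        # Tiny state machine: menu -> part-number prompt -> result screen.
        if self._screen.startswith("MAIN MENU") and keys == "1\r":
            self._screen = "INVENTORY LOOKUP\nPART NO> "
        elif self._screen.startswith("INVENTORY LOOKUP") and keys == "A-100\r":
            self._screen = (
                "PART A-100\nDESC: WIDGET, LARGE\n"
                "QTY ON HAND:   42\nPRESS ENTER> "
            )
        else:
            self._screen = "INVALID INPUT\nPRESS ENTER> "


def lookup_quantity(terminal, part_number: str) -> int:
    """Drive the menus like a human operator and scrape the quantity field."""
    if not terminal.read_screen().startswith("MAIN MENU"):
        raise RuntimeError("not at main menu")
    terminal.send_keys("1\r")  # choose "Inventory lookup"
    if "PART NO>" not in terminal.read_screen():
        raise RuntimeError("lookup prompt not shown")
    terminal.send_keys(part_number + "\r")
    for line in terminal.read_screen().splitlines():
        if line.startswith("QTY ON HAND:"):
            return int(line.split(":", 1)[1])
    raise RuntimeError("quantity field not found on screen")


quantity = lookup_quantity(FakeLegacyTerminal(), "A-100")
```

In a real deployment the stand-in would be replaced by a network session to the legacy host, and each step would typically wait for the expected prompt before sending the next keystrokes.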

In the 1980s, financial data providers such as Reuters, Telerate, and Quotron displayed data in 24×80 format intended for a human reader. Users of this data, particularly investment banks, wrote applications to capture and convert this character data as numeric data for inclusion into calculations for trading decisions without re-keying the data. The common term for this practice, especially in the United Kingdom, was ''page shredding'', since the results could be imagined to have passed through a paper shredder. Internally Reuters used the term 'logicized' for this conversion process, running a sophisticated computer system on VAX/VMS called the Logicizer.
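A minimal sketch of page shredding, assuming a 24×80 character page in which each value sits at a fixed row and column range. The field positions, the sample quotes, and the helper names (`blank_page`, `put`, `shred`) are invented for this example and do not reflect any provider's actual page layout.

```python
ROWS, COLS = 24, 80


def blank_page() -> list[str]:
    """Return an empty 24x80 page as a list of fixed-width lines."""
    return [" " * COLS for _ in range(ROWS)]


def put(page: list[str], row: int, col: int, text: str) -> None:
    """Write text into the page at a fixed row/column (demo helper)."""
    line = page[row]
    page[row] = (line[:col] + text + line[col + len(text):])[:COLS]


# Hypothetical field positions: (row, start column, end column).
FIELDS = {"bid": (2, 10, 18), "ask": (2, 30, 38)}


def shred(page: list[str]) -> dict[str, float]:
    """Cut fixed-position character fields out of the page and parse them."""
    if len(page) != ROWS or any(len(line) != COLS for line in page):
        raise ValueError("page is not 24x80")
    return {name: float(page[r][c0:c1]) for name, (r, c0, c1) in FIELDS.items()}


page = blank_page()
put(page, 0, 0, "GBP/USD SPOT")
put(page, 2, 5, "BID")
put(page, 2, 10, "  1.2501")
put(page, 2, 25, "ASK")
put(page, 2, 30, "  1.2504")
quotes = shred(page)
```

Because the fields are addressed purely by position, any change to the page layout breaks the mapping, which is the fragility discussed earlier.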

More modern screen scraping techniques include capturing the bitmap data from the screen and running it through an OCR engine, or for some specialised automated testing systems, matching the screen's bitmap data against expected results. In the case of GUI applications, this can be combined with querying the graphical controls by programmatically obtaining references to their underlying programming objects. A sequence of screens is automatically captured and converted into a database.
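The bitmap-matching variant can be sketched in a few lines. Here the screen and the expected image are modelled as nested lists of pixel values, and `find_template` (a name invented for this example) searches for an exact match. Exact comparison is an assumption made for brevity; it is not a description of how any particular testing tool works.

```python
def find_template(screen, template):
    """Return (row, col) of the first exact match of template in screen, or None."""
    screen_h, screen_w = len(screen), len(screen[0])
    tmpl_h, tmpl_w = len(template), len(template[0])
    for r in range(screen_h - tmpl_h + 1):
        for c in range(screen_w - tmpl_w + 1):
            # Compare the template row by row against the screen region.
            if all(
                screen[r + i][c:c + tmpl_w] == template[i]
                for i in range(tmpl_h)
            ):
                return (r, c)
    return None


# A toy 4x5 "screen" and a 2x2 expected image (1 = lit pixel).
screen = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
expected_icon = [
    [1, 1],
    [1, 0],
]
position = find_template(screen, expected_icon)
```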

Another modern adaptation to these techniques is to use, instead of a sequence of screens as input, a set of images or PDF files, so there are some overlaps with generic "document scraping" and report mining techniques.

There are many tools that can be used for screen scraping.

Web scraping

Web pages are built using text-based mark-up languages (HTML and XHTML), and frequently contain a wealth of useful data in text form. However, most web pages are designed for human end-users and not for ease of automated use. Because of this, tool kits that scrape web content were created. A web scraper is an API or tool to extract data from a website. Companies like Amazon AWS and Google provide web scraping tools, services, and public data available free of cost to end-users. Newer forms of web scraping involve listening to data feeds from web servers. For example, JSON is commonly used as a transport storage mechanism between the client and the webserver. A web scraper uses a website's URL to extract data, and stores this data for subsequent analysis. This method of web scraping enables the extraction of data in an efficient and accurate manner.
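As a small illustration of scraping mark-up, the sketch below uses Python's standard `html.parser` module to collect table cells from a page. The HTML sample and the class name `TableScraper` are made up for this example, and the page is held in a string rather than fetched from a URL so that the code runs offline.

```python
from html.parser import HTMLParser

# Hypothetical page content; a real scraper would download this from a URL.
SAMPLE_HTML = """
<html><body>
  <h1>Price list</h1>
  <table>
    <tr><th>Item</th><th>Price</th></tr>
    <tr><td>Widget</td><td>4.99</td></tr>
    <tr><td>Gadget</td><td>12.50</td></tr>
  </table>
</body></html>
"""


class TableScraper(HTMLParser):
    """Collect the text of every <td> cell, grouped by table row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._in_cell = True
            self._row.append("")

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_cell = False
        elif tag == "tr" and self._row is not None:
            if self._row:  # rows with only <th> cells stay empty and are skipped
                self.rows.append(self._row)
            self._row = None

    def handle_data(self, data):
        if self._in_cell:
            self._row[-1] += data.strip()


scraper = TableScraper()
scraper.feed(SAMPLE_HTML)
prices = {name: float(price) for name, price in scraper.rows}
```

The heading and the header row are presentation aimed at the human reader; only the data cells are kept for later analysis.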

Recently, companies have developed web scraping systems that rely on using techniques in DOM parsing, computer vision and natural language processing to simulate the human processing that occurs when viewing a webpage to automatically extract useful information.

Large websites usually use defensive algorithms to protect their data from web scrapers and to limit the number of requests an IP or IP network may send. This has caused an ongoing battle between website developers and scraping developers.
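One simple form such a defence might take is a per-IP sliding-window request limit. The sketch below is an assumption about how a limiter could be written, not a description of what any particular website does; the class name `SlidingWindowLimiter` and its parameters are invented for this example.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per IP within any window_seconds interval."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # ip -> timestamps of accepted requests

    def allow(self, ip: str, now: float) -> bool:
        hits = self._hits[ip]
        # Forget requests that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10.0)
# Four requests from one address within four seconds; the fourth is refused.
decisions = [limiter.allow("192.0.2.1", t) for t in (0.0, 1.0, 2.0, 3.0)]
```

Timestamps are passed in explicitly so the behaviour is easy to test; a server would normally supply the current time itself.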

Report mining

Report mining is the extraction of data from human-readable computer reports. Conventional data extraction requires a connection to a working source system, suitable connectivity standards or an API, and usually complex querying. By using the source system's standard reporting options, and directing the output to a spool file instead of to a printer, static reports can be generated suitable for offline analysis via report mining (Scott Steinacher, "Data Pump transforms host data", ''InfoWorld'', 30 August 1999, p. 55). This approach can avoid intensive CPU usage during business hours, can minimise end-user licence costs for ERP customers, and can offer very rapid prototyping and development of custom reports.

Whereas data scraping and web scraping involve interacting with dynamic output, report mining involves extracting data from files in a human-readable format, such as HTML, PDF, or text. These can be easily generated from almost any system by intercepting the data feed to a printer. This approach can provide a quick and simple route to obtaining data without the need to program an API to the source system.
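A minimal sketch of report mining over a text spool file, assuming a paginated report whose detail lines start with a six-digit order number. The report layout, the company names, and the function name `mine_report` are invented for this example.

```python
import re

# Hypothetical spool output: two pages separated by a form feed, each with
# a repeated page header and column headings.
SPOOL_TEXT = """\
EXAMPLE ERP         OPEN ORDERS REPORT            PAGE 1
ORDER    CUSTOMER          AMOUNT
------   ---------------   ---------
100231   ALPHA LTD            1,250.00
100232   BETA GMBH              310.75
\f
EXAMPLE ERP         OPEN ORDERS REPORT            PAGE 2
ORDER    CUSTOMER          AMOUNT
------   ---------------   ---------
100233   GAMMA INC            9,999.99
"""

# Detail line: order number, customer name, amount with two decimals.
ROW = re.compile(r"^(\d{6})\s{2,}(.+?)\s{2,}([\d,]+\.\d{2})\s*$")


def mine_report(text: str) -> list[tuple[str, str, float]]:
    """Return (order, customer, amount) for each detail line in the report."""
    rows = []
    for line in text.splitlines():  # splitlines also breaks on form feeds
        match = ROW.match(line)
        if match:
            order, customer, amount = match.groups()
            rows.append((order, customer, float(amount.replace(",", ""))))
    return rows


orders = mine_report(SPOOL_TEXT)
total = sum(amount for _, _, amount in orders)
```

Page headers, column headings, and rule lines never match the detail pattern, so they drop out without any special handling.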

Legal and Ethical Considerations

The legality and ethics of data scraping are often debated. Scraping publicly accessible data is generally legal; however, scraping in a manner that infringes a website's terms of service, breaches security measures, or invades user privacy can lead to legal action. Moreover, some websites explicitly prohibit data scraping in their robots.txt file.
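Many sites publish their crawler rules in a robots.txt file, and a scraper can check a URL against those rules before requesting it. The sketch below uses Python's standard `urllib.robotparser` module; the rules, the user-agent string `example-bot`, and the URLs are made up, and the rules are parsed from a string rather than downloaded so the example runs offline.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for an example site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

allowed_public = parser.can_fetch(
    "example-bot", "https://example.com/catalog/items"
)
allowed_private = parser.can_fetch(
    "example-bot", "https://example.com/private/report"
)
```

Honouring robots.txt is a courtesy check only; it does not by itself settle whether a given use of the data complies with terms of service or privacy law.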

See also

* Comparison of feed aggregators

* Data cleansing

* Data munging

* Importer (computing)

* Information extraction

* Mashup (web application hybrid)

* Metadata

* Open data

* Search engine scraping

* Web scraping

Further reading

* Hemenway, Kevin and Calishain, Tara. ''Spidering Hacks''. Cambridge, Massachusetts: O'Reilly, 2003.