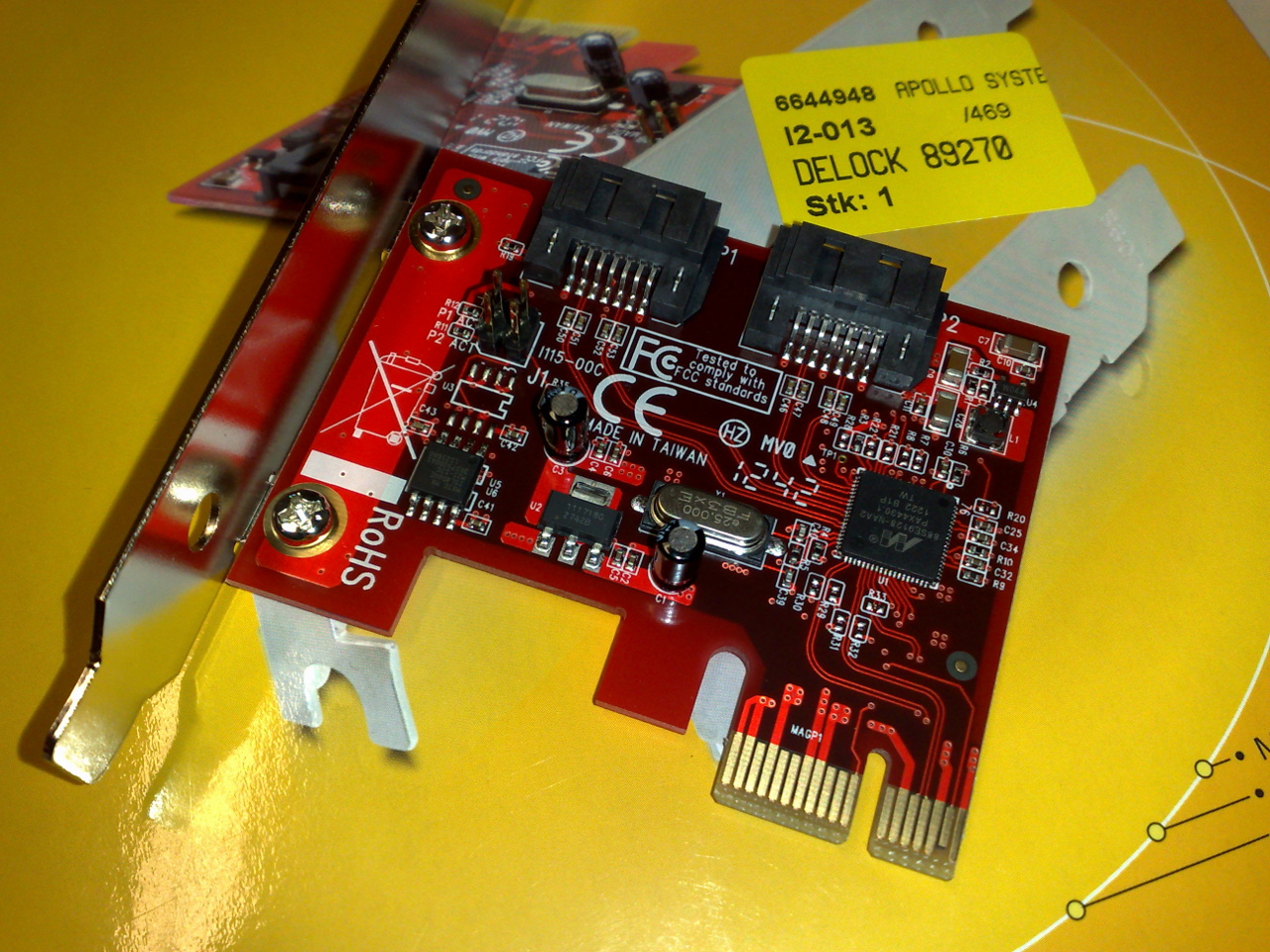

PCI Express (Peripheral Component Interconnect Express), officially abbreviated as PCIe or PCI-e,

is a high-speed

serial computer expansion bus

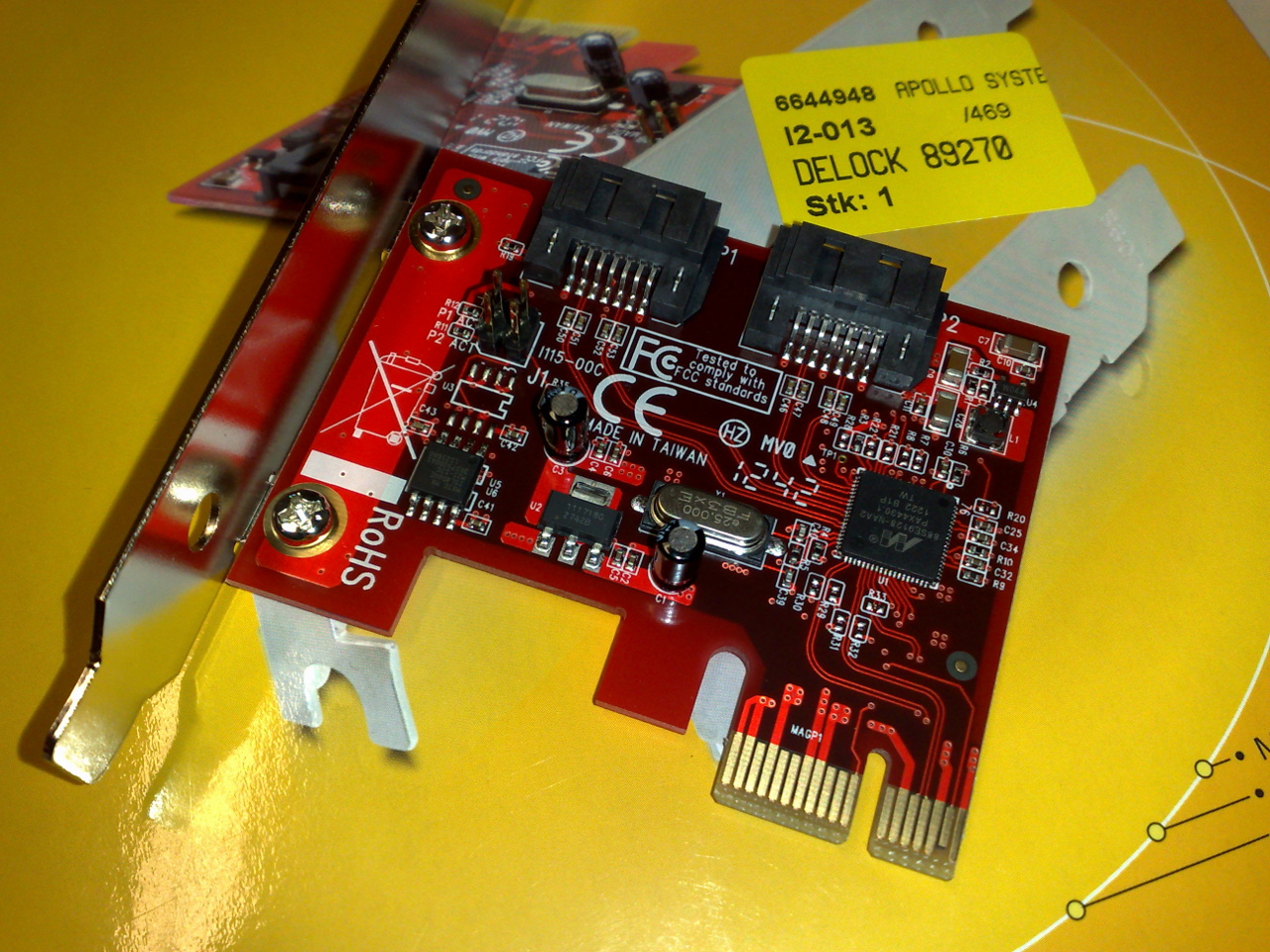

In computing, an expansion card (also called an expansion board, adapter card, peripheral card or accessory card) is a printed circuit board that can be inserted into an electrical connector, or expansion slot (also referred to as a bus slo ...

standard, designed to replace the older

PCI,

PCI-X

PCI-X, short for Peripheral Component Interconnect eXtended, is a computer bus and expansion card standard that enhances the 32-bit PCI local bus for higher bandwidth demanded mostly by servers and workstations. It uses a modified protocol t ...

and

AGP bus standards. It is the common

motherboard interface for personal computers'

graphics cards

A graphics card (also called a video card, display card, graphics adapter, VGA card/VGA, video adapter, display adapter, or mistakenly GPU) is an expansion card which generates a feed of output images to a display device, such as a computer moni ...

,

hard disk drive

A hard disk drive (HDD), hard disk, hard drive, or fixed disk is an electro-mechanical data storage device that stores and retrieves digital data using magnetic storage with one or more rigid rapidly rotating platters coated with magne ...

host adapter

In computer hardware, a host controller, host adapter, or host bus adapter (HBA), connects a computer system bus, which acts as the host system, to other network and storage devices. The terms are primarily used to refer to devices for conne ...

s,

SSDs

A solid-state drive (SSD) is a solid-state storage device that uses integrated circuit assemblies to store data persistently, typically using flash memory, and functioning as secondary storage in the hierarchy of computer storage. It is a ...

,

Wi-Fi

Wi-Fi () is a family of wireless network protocols, based on the IEEE 802.11 family of standards, which are commonly used for local area networking of devices and Internet access, allowing nearby digital devices to exchange data by radio wav ...

and

Ethernet

Ethernet () is a family of wired computer networking technologies commonly used in local area networks (LAN), metropolitan area networks (MAN) and wide area networks (WAN). It was commercially introduced in 1980 and first standardized in 198 ...

hardware connections.

PCIe has numerous improvements over the older standards, including higher maximum system bus throughput, lower I/O pin count and smaller physical footprint, better performance scaling for bus devices, a more detailed error detection and reporting mechanism (Advanced Error Reporting, AER), and native hot-swap functionality. More recent revisions of the PCIe standard provide hardware support for I/O virtualization.

The PCI Express electrical interface is measured by the number of simultaneous lanes. (A lane is a single send/receive line of data. The analogy is a highway with traffic in both directions.) The interface is also used in a variety of other standards, most notably the laptop expansion card interface called ExpressCard. It is also used in the storage interfaces of SATA Express, U.2 (SFF-8639) and M.2.

Format specifications are maintained and developed by the PCI-SIG (PCI Special Interest Group), a group of more than 900 companies that also maintains the conventional PCI specifications.

Architecture

Conceptually, the PCI Express bus is a high-speed serial replacement of the older PCI/PCI-X bus. One of the key differences between the PCI Express bus and the older PCI is the bus topology; PCI uses a shared parallel bus architecture, in which the PCI host and all devices share a common set of address, data, and control lines. In contrast, PCI Express is based on point-to-point topology, with separate serial links connecting every device to the root complex (host). Because of its shared bus topology, access to the older PCI bus is arbitrated (in the case of multiple masters), and limited to one master at a time, in a single direction. Furthermore, the older PCI clocking scheme limits the bus clock to the slowest peripheral on the bus (regardless of the devices involved in the bus transaction). In contrast, a PCI Express bus link supports full-duplex communication between any two endpoints, with no inherent limitation on concurrent access across multiple endpoints.

In terms of bus protocol, PCI Express communication is encapsulated in packets. The work of packetizing and de-packetizing data and status-message traffic is handled by the transaction layer of the PCI Express port (described later). Radical differences in electrical signaling and bus protocol require the use of a different mechanical form factor and expansion connectors (and thus, new motherboards and new adapter boards); PCI slots and PCI Express slots are not interchangeable. At the software level, PCI Express preserves backward compatibility with PCI; legacy PCI system software can detect and configure newer PCI Express devices without explicit support for the PCI Express standard, though new PCI Express features are inaccessible.

The PCI Express link between two devices can vary in size from one to 16 lanes. In a multi-lane link, the packet data is striped across lanes, and peak data throughput scales with the overall link width. The lane count is automatically negotiated during device initialization and can be restricted by either endpoint. For example, a single-lane PCI Express (x1) card can be inserted into a multi-lane slot (x4, x8, etc.), and the initialization cycle auto-negotiates the highest mutually supported lane count. The link can dynamically down-configure itself to use fewer lanes, providing failure tolerance in case bad or unreliable lanes are present. The PCI Express standard defines link widths of x1, x2, x4, x8, and x16. Up to and including PCIe 5.0, x12 and x32 links were defined as well but never used.

This allows the PCI Express bus to serve both cost-sensitive applications where high throughput is not needed, and performance-critical applications such as 3D graphics, networking (10 Gigabit Ethernet or multiport Gigabit Ethernet), and enterprise storage (SAS or Fibre Channel). Slots and connectors are only defined for a subset of these widths, with link widths in between using the next larger physical slot size.
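The width negotiation described above can be illustrated with a minimal sketch. It assumes each port simply advertises a set of lane counts it can operate at; the function name `negotiate_width` and the set-based model are hypothetical simplifications, and the real link-training procedure is considerably more involved.

```python
# Link widths the text lists as defined by the standard.
DEFINED_WIDTHS = (1, 2, 4, 8, 16)


def negotiate_width(port_a, port_b):
    """Return the widest lane count both ports support (illustrative only)."""
    common = set(port_a) & set(port_b) & set(DEFINED_WIDTHS)
    if not common:
        raise ValueError("no common link width")
    return max(common)


# An x1 card in an x8 slot: the slot can also operate at narrower widths.
card = {1}
slot = {1, 2, 4, 8}
print(negotiate_width(card, slot))  # 1

# A link that falls back after lanes fail can be modelled by removing
# the widths that are no longer usable from one side.
print(negotiate_width({1, 4, 8, 16}, {1, 4}))  # 4
```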

As a point of reference, a PCI-X (133 MHz 64-bit) device and a PCI Express 1.0 device using four lanes (x4) have roughly the same peak single-direction transfer rate of 1064 MB/s. The PCI Express bus has the potential to perform better than the PCI-X bus in cases where multiple devices are transferring data simultaneously, or if communication with the PCI Express peripheral is bidirectional.
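The comparison can be checked with a few lines of arithmetic. The sketch below assumes that the parallel bus moves one 64-bit word per clock and that a PCI Express 1.0 lane carries 250 MB/s in each direction, the per-lane figure quoted for External Cabling later in this article; the function names are hypothetical.

```python
def parallel_bus_peak_mb_s(clock_mhz, bus_width_bits):
    """Peak rate of a parallel bus moving one word per clock, in MB/s."""
    return clock_mhz * bus_width_bits // 8


def pcie_peak_mb_s(lanes, per_lane_mb_s=250):
    """Peak one-direction rate of a link; 250 MB/s per lane is assumed."""
    return lanes * per_lane_mb_s


print(parallel_bus_peak_mb_s(133, 64))  # 1064
print(pcie_peak_mb_s(4))                # 1000
```

Under these assumptions the two figures differ by about 6%, which is consistent with "roughly the same". Because the PCI Express link is full-duplex, the same 1000 MB/s is available in the opposite direction at the same time.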

Interconnect

PCI Express devices communicate via a logical connection called an *interconnect* or *link*. A link is a point-to-point communication channel between two PCI Express ports allowing both of them to send and receive ordinary PCI requests (configuration, I/O or memory read/write) and interrupts (INTx, MSI or MSI-X). At the physical level, a link is composed of one or more *lanes*.

Low-speed peripherals (such as an 802.11 Wi-Fi card) use a single-lane (x1) link, while a graphics adapter typically uses a much wider and therefore faster 16-lane (x16) link.

Lane

A lane is composed of two differential signaling pairs, with one pair for receiving data and the other for transmitting. Thus, each lane is composed of four wires or signal traces. Conceptually, each lane is used as a full-duplex byte stream, transporting data packets in eight-bit "byte" format simultaneously in both directions between endpoints of a link.

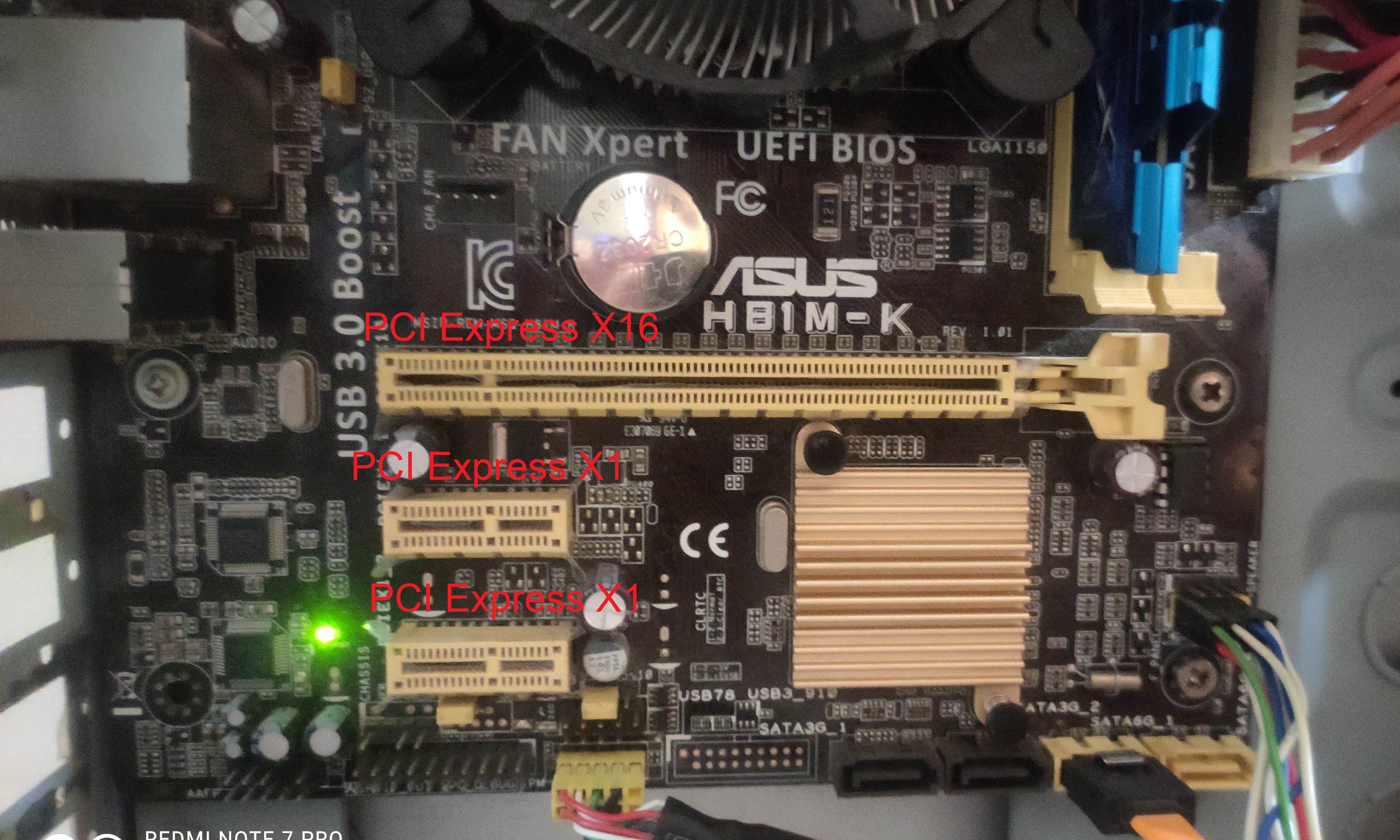

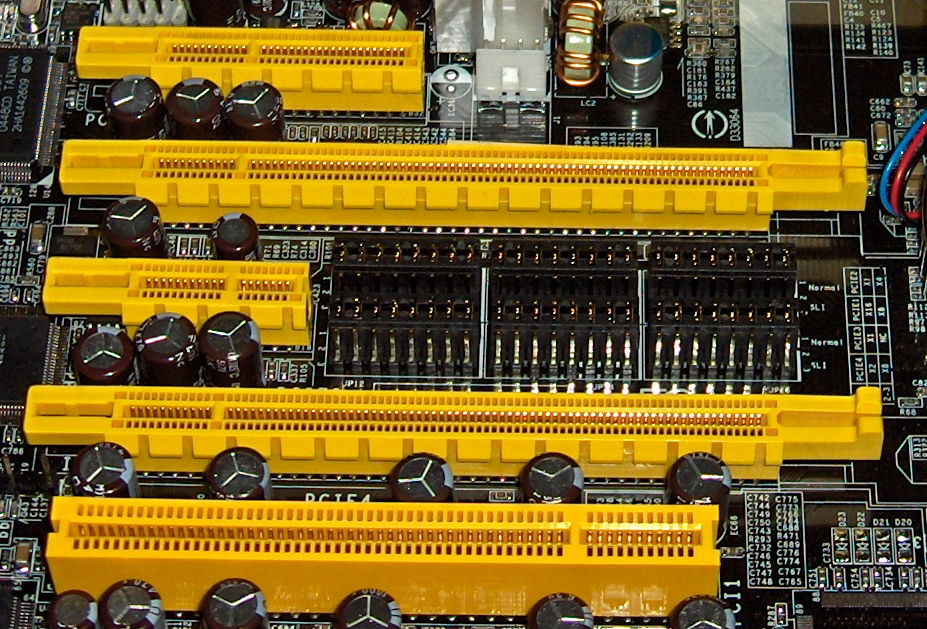

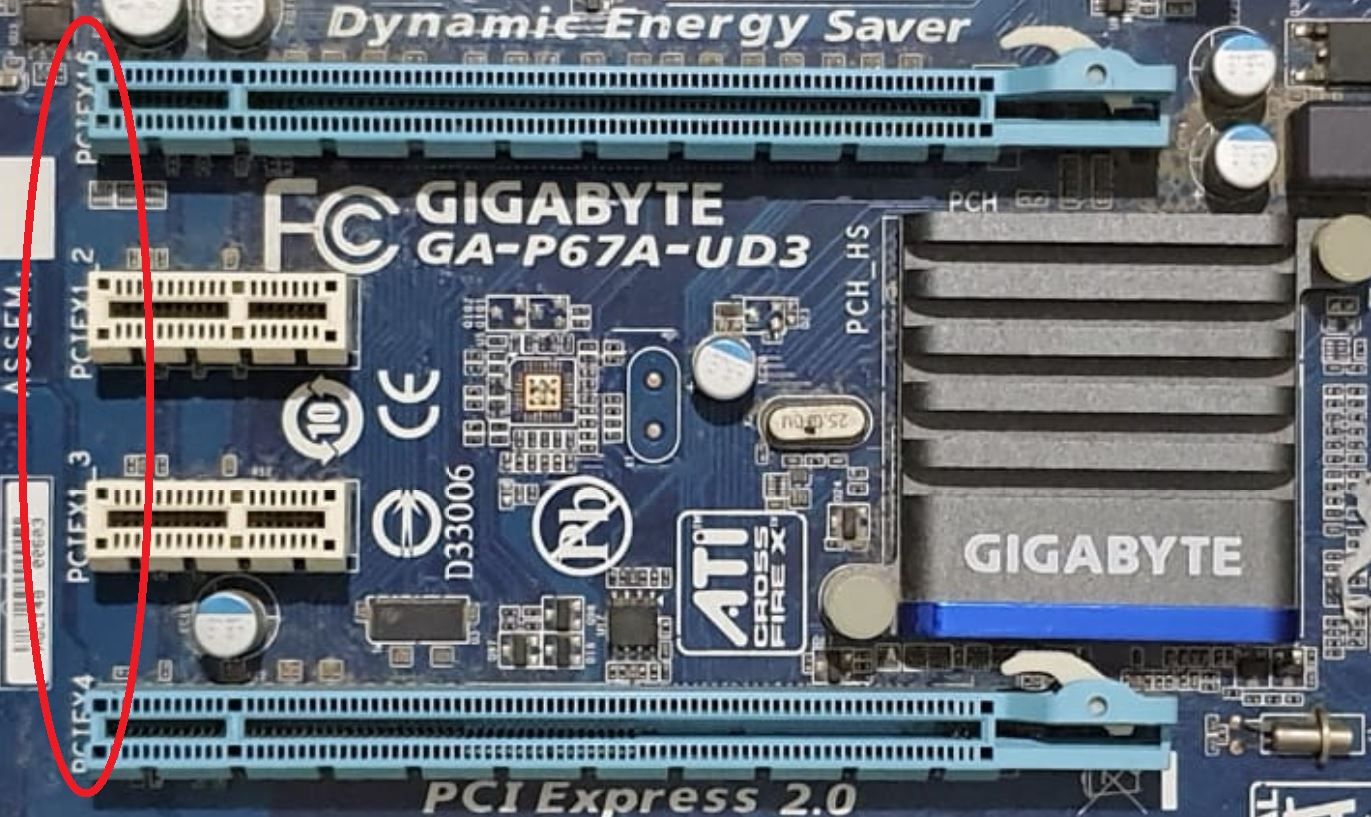

Physical PCI Express links may contain 1, 4, 8 or 16 lanes. Lane counts are written with an "x" prefix (for example, "x8" represents an eight-lane card or slot), with x16 being the largest size in common use. Lane sizes are also referred to via the terms "width" or "by"; for example, an eight-lane slot could be referred to as a "by 8" or as "8 lanes wide". For mechanical card sizes, see below.
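The notation and the wiring count per lane can be tied together in a short sketch. The helper names `parse_width` and `signal_wires` are hypothetical; the only facts used are the "x" prefix convention and the two differential pairs (four wires) per lane described above.

```python
import re


def parse_width(label):
    """Turn a label such as "x8" into its lane count."""
    match = re.fullmatch(r"[xX](\d+)", label.strip())
    if match is None:
        raise ValueError(f"not a lane-count label: {label!r}")
    return int(match.group(1))


def signal_wires(lanes):
    """Each lane has one transmit and one receive differential pair."""
    pairs_per_lane = 2
    wires_per_pair = 2
    return lanes * pairs_per_lane * wires_per_pair


for label in ("x1", "x8", "x16"):
    lanes = parse_width(label)
    print(label, lanes, signal_wires(lanes))
```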

Serial bus

The bonded serial bus architecture was chosen over the traditional parallel bus because of the inherent limitations of the latter, including half-duplex operation, excess signal count, and inherently lower bandwidth due to timing skew. Timing skew results from separate electrical signals within a parallel interface traveling through conductors of different lengths, on potentially different printed circuit board (PCB) layers, and at possibly different signal velocities. Despite being transmitted simultaneously as a single word, signals on a parallel interface have different travel durations and arrive at their destinations at different times. When the interface clock period is shorter than the largest time difference between signal arrivals, recovery of the transmitted word is no longer possible. Since timing skew over a parallel bus can amount to a few nanoseconds, the resulting bandwidth limitation is in the range of hundreds of megahertz.
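The relationship between skew and clock rate can be made concrete. The sketch below treats the limit as the point where the clock period equals the worst-case skew, which is a simplification of real timing budgets; the skew values of 2 ns and 5 ns are illustrative assumptions standing in for "a few nanoseconds".

```python
def max_clock_hz(worst_case_skew_s):
    """Highest clock whose period is still no shorter than the worst skew."""
    if worst_case_skew_s <= 0:
        raise ValueError("skew must be positive")
    return 1.0 / worst_case_skew_s


for skew_ns in (2, 5):
    limit_mhz = max_clock_hz(skew_ns * 1e-9) / 1e6
    print(f"{skew_ns} ns skew -> about {limit_mhz:.0f} MHz")
```

Both results fall in the hundreds of megahertz, matching the range given above.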

A serial interface does not exhibit timing skew because there is only one differential signal in each direction within each lane, and there is no external clock signal since clocking information is embedded within the serial signal itself. As such, typical bandwidth limitations on serial signals are in the multi-gigahertz range. PCI Express is one example of the general trend toward replacing parallel buses with serial interconnects; other examples include Serial ATA (SATA), USB, Serial Attached SCSI (SAS), FireWire (IEEE 1394), and RapidIO. In digital video, examples in common use are DVI, HDMI, and DisplayPort.

Multichannel serial design increases flexibility with its ability to allocate fewer lanes for slower devices.

Form factors

PCI Express (standard)

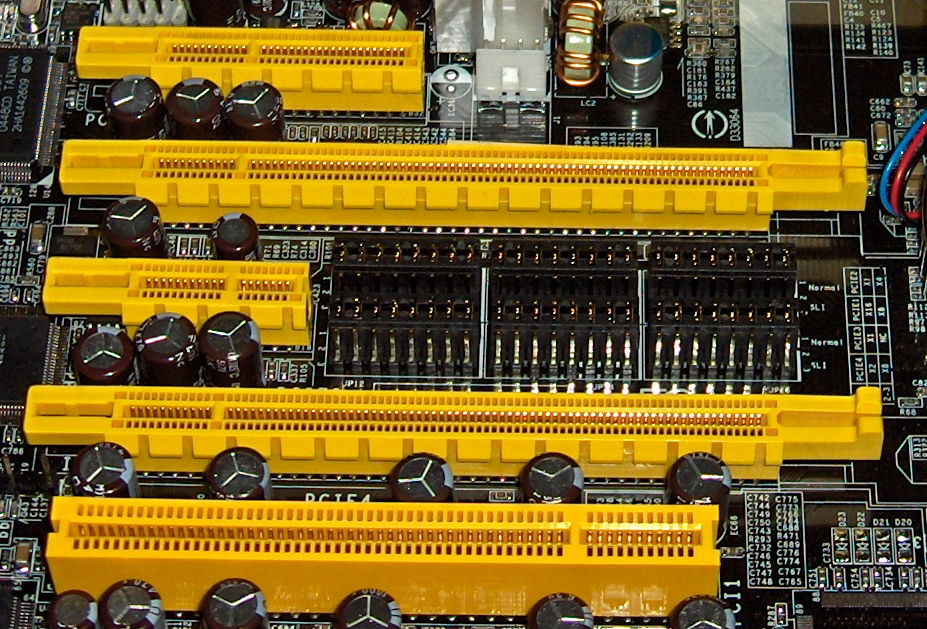

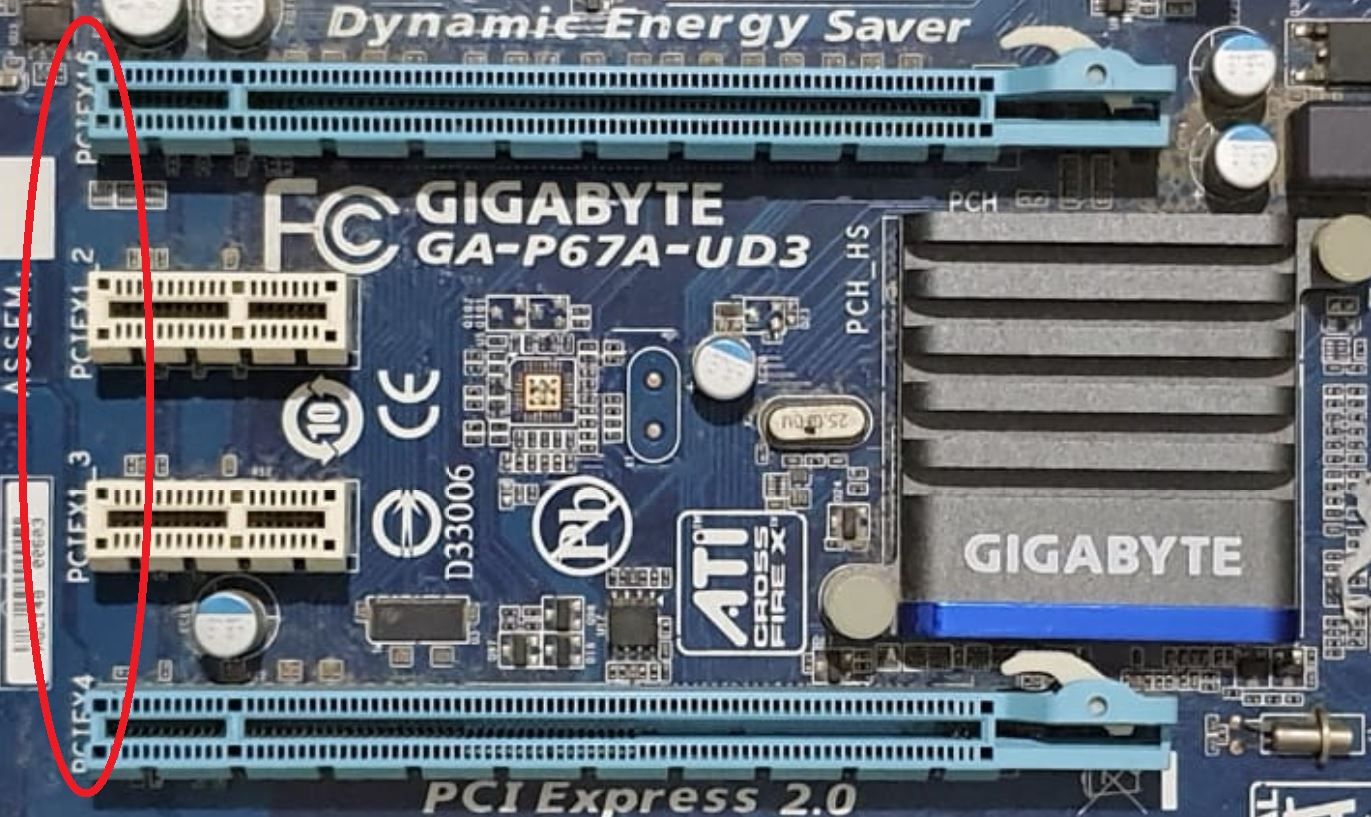

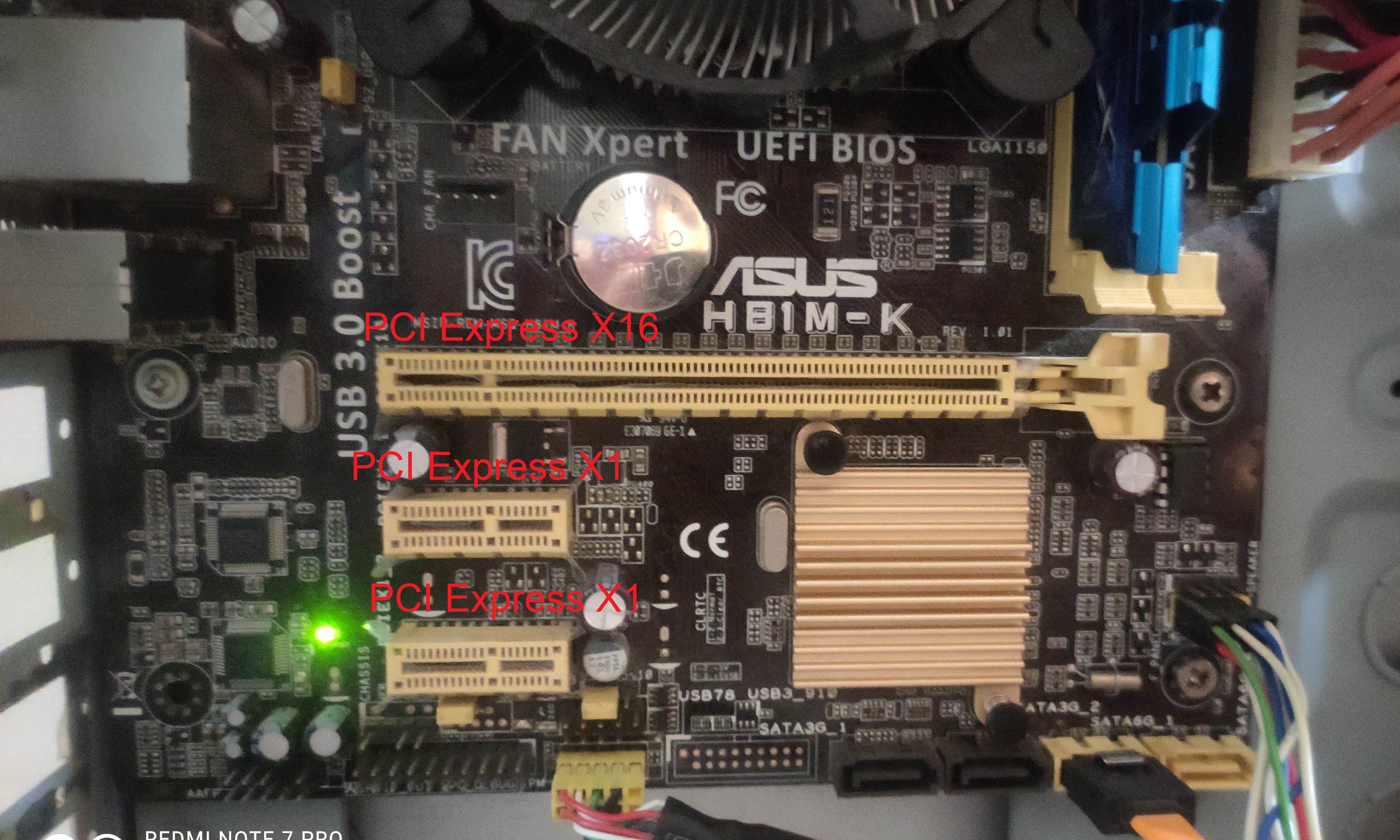

A PCI Express card fits into a slot of its physical size or larger (with x16 as the largest used), but may not fit into a smaller PCI Express slot; for example, a x16 card may not fit into a x4 or x8 slot. Some slots use open-ended sockets to permit physically longer cards and negotiate the best available electrical and logical connection.

The number of lanes actually connected to a slot may also be fewer than the number supported by the physical slot size. An example is a x16 slot that runs at x4, which accepts any x1, x2, x4, x8 or x16 card, but provides only four lanes. Its specification may read as "x16 (x4 mode)", while "mechanical @ electrical" notation (e.g. "x16 @ x4") is also common. The advantage is that such slots can accommodate a larger range of PCI Express cards without requiring motherboard hardware to support the full transfer rate. Standard mechanical sizes are x1, x4, x8, and x16. Cards with a differing number of lanes need to use the next larger mechanical size (i.e. a x2 card uses the x4 size, or a x12 card uses the x16 size).
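The distinction between mechanical and electrical width can be sketched as follows. The function names are hypothetical, and the model assumes that the usable width is simply the smaller of the card's lane count and the slot's electrical lane count, which glosses over the actual negotiation.

```python
MECHANICAL_SIZES = (1, 4, 8, 16)  # standard mechanical sizes from the text


def mechanical_size(lanes):
    """Smallest standard mechanical size that holds the given lane count."""
    for size in MECHANICAL_SIZES:
        if lanes <= size:
            return size
    raise ValueError("wider than the largest standard size")


def plug_in(card_lanes, slot_mechanical, slot_electrical, open_ended=False):
    """Return the usable lane count, or None if the card does not fit."""
    if mechanical_size(card_lanes) > slot_mechanical and not open_ended:
        return None
    return min(card_lanes, slot_electrical)


print(mechanical_size(2))                  # 4
print(mechanical_size(12))                 # 16
print(plug_in(16, 16, 4))                  # 4, an "x16 @ x4" slot
print(plug_in(16, 4, 4))                   # None, the card is too long
print(plug_in(16, 4, 4, open_ended=True))  # 4
```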

The cards themselves are designed and manufactured in various sizes. For example, solid-state drives (SSDs) that come in the form of PCI Express cards often use HHHL (half height, half length) and FHHL (full height, half length) to describe the physical dimensions of the card.

Non-standard video card form factors

Modern (since c. 2012) gaming video cards usually exceed the height as well as thickness specified in the PCI Express standard, due to the need for more capable and quieter cooling fans, as gaming video cards often emit hundreds of watts of heat.

Modern computer cases are often wider to accommodate these taller cards, but not always. Since full-length cards (312 mm) are uncommon, modern cases sometimes cannot fit those. The thickness of these cards also typically occupies the space of 2 PCIe slots. In fact, even the methodology of how to measure the cards varies between vendors, with some including the metal bracket size in dimensions and others not.

For instance, a 2020 Sapphire card measures 135 mm in height (excluding the metal bracket), which exceeds the PCIe standard height by 28 mm. Another card by XFX measures 55 mm thick (i.e. 2.7 PCI slots at 20.32 mm), taking up 3 PCIe slots.
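The slot count for the XFX card follows from rounding its thickness up to whole slot positions. A minimal sketch, assuming the 20.32 mm slot spacing quoted above (the function name is hypothetical):

```python
import math

SLOT_PITCH_MM = 20.32  # slot spacing quoted in the text


def slots_occupied(thickness_mm):
    """Number of whole slot positions a card of this thickness blocks."""
    return math.ceil(thickness_mm / SLOT_PITCH_MM)


print(round(55 / SLOT_PITCH_MM, 1))  # 2.7
print(slots_occupied(55))            # 3
```

Vendors do not all measure or advertise thickness the same way, so a marketed slot count may differ from this simple calculation.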

The Asus GeForce RTX 3080 10 GB STRIX GAMING OC video card is a two-slot card that has dimensions of 318.5 mm × 140.1 mm × 57.8 mm, exceeding PCI Express's maximum length, height, and thickness respectively.

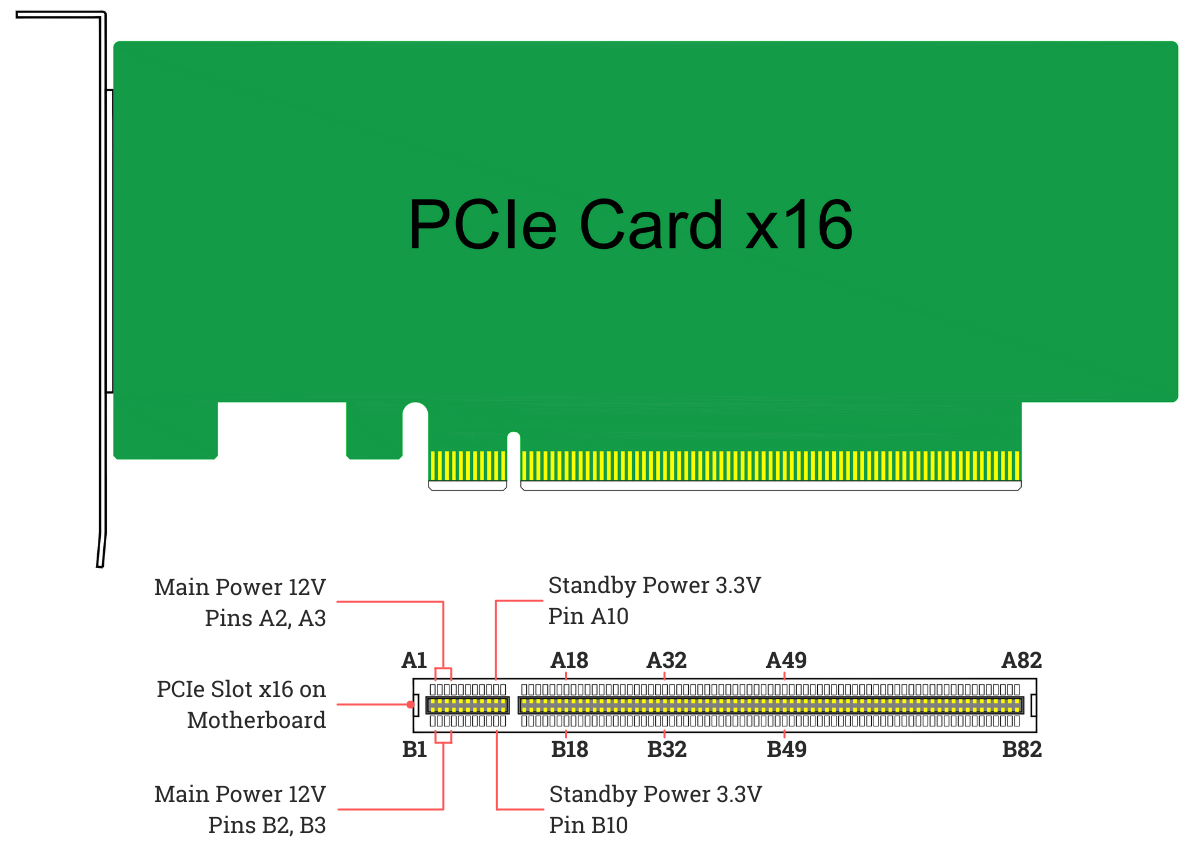

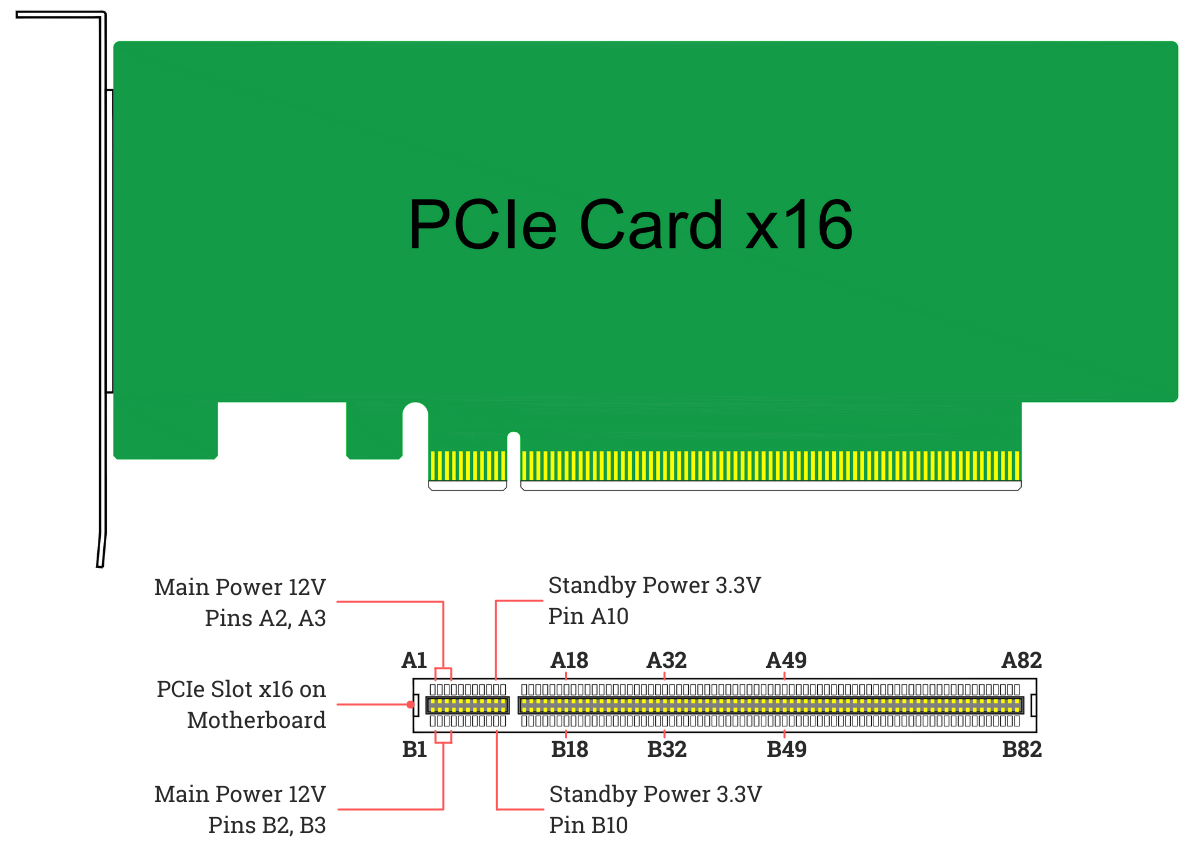

Pinout

The following table identifies the conductors on each side of the edge connector on a PCI Express card. The solder side of the printed circuit board (PCB) is the A-side, and the component side is the B-side. PRSNT1# and PRSNT2# pins must be slightly shorter than the rest, to ensure that a hot-plugged card is fully inserted. The WAKE# pin uses full voltage to wake the computer, but must be pulled high from the standby power to indicate that the card is wake capable.

Power

All PCI Express cards may consume up to 3 A at +3.3 V (9.9 W). The amount of +12 V and total power they may consume depends on the form factor and the role of the card:

* x1 cards are limited to 0.5 A at +12 V (6 W) and 10 W combined.

* x4 and wider cards are limited to 2.1 A at +12 V (25 W) and 25 W combined.

* A full-sized x1 card may draw up to the 25 W limits after initialization and software configuration as a high-power device.

* A full-sized x16 graphics card may draw up to 5.5 A at +12 V (66 W) and 75 W combined after initialization and software configuration as a high-power device.
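The limits in the list above can be expressed as a small lookup table. This is a sketch only: the dictionary keys and the function `within_slot_budget` are hypothetical names, and the wattage at +12 V is recomputed from the listed current, so the 2.1 A entry comes out as 25.2 W rather than the rounded 25 W.

```python
# Card type -> (maximum +12 V current in A, combined power limit in W),
# copied from the list above. The dictionary keys are hypothetical labels.
SLOT_LIMITS = {
    "x1": (0.5, 10),
    "x4 or wider": (2.1, 25),
    "full-sized x1 (high power)": (2.1, 25),
    "full-sized x16 graphics (high power)": (5.5, 75),
}

RAIL_VOLTS = 12


def within_slot_budget(kind, watts_12v, watts_total):
    """True if a card's draw stays inside both limits for its type."""
    max_amps, combined_w = SLOT_LIMITS[kind]
    return watts_12v <= max_amps * RAIL_VOLTS and watts_total <= combined_w


for kind, (max_amps, combined_w) in SLOT_LIMITS.items():
    print(f"{kind}: {max_amps * RAIL_VOLTS:.1f} W at +12 V, {combined_w} W combined")

print(within_slot_budget("x1", 5.0, 9.0))  # True
print(within_slot_budget("x1", 7.0, 9.0))  # False
```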

Optional connectors add 75 W (6-pin) or 150 W (8-pin) of +12 V power for up to 300 W total (2 × 75 W + 1 × 150 W).

* The Sense0 pin is connected to ground by the cable or power supply, or floats on the board if the cable is not connected.

* The Sense1 pin is connected to ground by the cable or power supply, or floats on the board if the cable is not connected.

Some cards use two 8-pin connectors, but this has not been standardized yet; therefore, such cards must not carry the official PCI Express logo. This configuration allows 375 W total (1 × 75 W + 2 × 150 W) and will likely be standardized by PCI-SIG with the PCI Express 4.0 standard. The 8-pin PCI Express connector could be confused with the EPS12V connector, which is mainly used for powering SMP and multi-core systems. The power connectors are variants of the Molex Mini-Fit Jr. series connectors.
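The 375 W figure can be reproduced by adding the slot budget to the connector budgets. The sketch assumes the commonly cited ratings of 75 W for a 6-pin connector and 150 W for an 8-pin connector, together with the 75 W slot limit for a full-sized x16 graphics card; the names used are hypothetical.

```python
SLOT_W = 75  # combined slot limit for a full-sized x16 graphics card
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}  # assumed connector ratings


def total_budget_w(connectors):
    """Slot power plus the rating of each auxiliary connector."""
    return SLOT_W + sum(CONNECTOR_W[name] for name in connectors)


print(total_budget_w(["8-pin", "8-pin"]))  # 375
print(total_budget_w(["6-pin", "8-pin"]))  # 300
print(total_budget_w([]))                  # 75
```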

PCI Express Mini Card

PCI Express Mini Card (also known as Mini PCI Express, Mini PCIe, Mini PCI-E, mPCIe, and PEM), based on PCI Express, is a replacement for the Mini PCI form factor. It is developed by the PCI-SIG. The host device supports both PCI Express and USB 2.0 connectivity, and each card may use either standard. Most laptop computers built after 2005 use PCI Express for expansion cards; however, many vendors are moving toward using the newer M.2 form factor for this purpose.

Due to different dimensions, PCI Express Mini Cards are not physically compatible with standard full-size PCI Express slots; however, passive adapters exist that let them be used in full-size slots.

Physical dimensions

Dimensions of PCI Express Mini Cards are 30 mm × 50.95 mm (width × length) for a Full Mini Card. There is a 52-pin edge connector, consisting of two staggered rows on a 0.8 mm pitch. Each row has eight contacts, a gap equivalent to four contacts, then a further 18 contacts. Boards have a thickness of 1.0 mm, excluding the components. A "Half Mini Card" (sometimes abbreviated as HMC) is also specified, with a length of 26.8 mm, approximately half that of the Full Mini Card.
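The pin count and the "approximately half" length can both be verified from the figures above. The function names and default arguments in this sketch are hypothetical conveniences that simply restate those figures.

```python
def contact_count(rows=2, before_gap=8, after_gap=18):
    """Contacts on the edge connector; the gap itself holds no contacts."""
    return rows * (before_gap + after_gap)


def length_ratio(half_mm=26.8, full_mm=50.95):
    """Length of a Half Mini Card relative to a Full Mini Card."""
    return half_mm / full_mm


print(contact_count())           # 52
print(round(length_ratio(), 2))  # 0.53
```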

Electrical interface

PCI Express Mini Card edge connectors provide multiple connections and buses:

* PCI Express x1 (with SMBus)

* USB 2.0

* Wires to diagnostics LEDs for wireless network (i.e., Wi-Fi) status on computer's chassis

* SIM card for GSM and WCDMA applications (UIM signals on spec.)

* Future extension for another PCIe lane

* 1.5 V and 3.3 V power

Mini-SATA (mSATA) variant

Despite sharing the Mini PCI Express form factor, an mSATA slot is not necessarily electrically compatible with Mini PCI Express. For this reason, only certain notebooks are compatible with mSATA drives. Most compatible systems are based on Intel's Sandy Bridge processor architecture, using the Huron River platform. Notebooks such as Lenovo's ThinkPad T, W and X series, released in March–April 2011, have support for an mSATA SSD card in their WWAN card slot. The ThinkPad Edge E220s/E420s, and the Lenovo IdeaPad Y460/Y560/Y570/Y580 also support mSATA. By contrast, the L-series among others can only support M.2 cards using the PCIe standard in the WWAN slot.

Some notebooks (notably the Asus Eee PC, the Apple MacBook Air, and the Dell mini9 and mini10) use a variant of the PCI Express Mini Card as an SSD. This variant uses the reserved and several non-reserved pins to implement SATA and IDE interface passthrough, keeping only USB, ground lines, and sometimes the core PCIe x1 bus intact.

This makes the "miniPCIe" flash and solid-state drives sold for netbooks largely incompatible with true PCI Express Mini implementations.

Also, the typical Asus miniPCIe SSD is 71 mm long, causing the Dell 51 mm model to often be (incorrectly) referred to as half length. A true 51 mm Mini PCIe SSD was announced in 2009, with two stacked PCB layers that allow for higher storage capacity. The announced design preserves the PCIe interface, making it compatible with the standard mini PCIe slot. No working product has yet been developed.

Intel has numerous desktop boards with the PCIe x1 Mini-Card slot that typically do not support mSATA SSD. A list of desktop boards that natively support mSATA in the PCIe x1 Mini-Card slot (typically multiplexed with a SATA port) is provided on the Intel Support site.

PCI Express M.2

M.2 replaces the mSATA standard and Mini PCIe.

Computer bus interfaces provided through the M.2 connector are PCI Express 3.0 (up to four lanes), Serial ATA 3.0, and USB 3.0 (a single logical port for each of the latter two). It is up to the manufacturer of the M.2 host or device to choose which interfaces to support, depending on the desired level of host support and device type.

PCI Express External Cabling

*PCI Express External Cabling* (also known as *External PCI Express*, *Cabled PCI Express*, or *ePCIe*) specifications were released by the PCI-SIG in February 2007.

Standard cables and connectors have been defined for x1, x4, x8, and x16 link widths, with a transfer rate of 250 MB/s per lane. The PCI-SIG also expects the norm to evolve to reach 500 MB/s, as in PCI Express 2.0. An example of the uses of Cabled PCI Express is a metal enclosure, containing a number of PCIe slots and PCIe-to-ePCIe adapter circuitry. This device would not be possible had it not been for the ePCIe specification.
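The per-lane rates translate into the following aggregate rates for each defined cable width. This sketch assumes the rate scales linearly with lane count, as it does for links in general; the function name is hypothetical.

```python
CABLE_WIDTHS = (1, 4, 8, 16)  # link widths with defined cables and connectors


def cable_rate_mb_s(lanes, per_lane_mb_s):
    """Aggregate one-direction rate of a cabled link in MB/s."""
    return lanes * per_lane_mb_s


for lanes in CABLE_WIDTHS:
    current = cable_rate_mb_s(lanes, 250)
    expected = cable_rate_mb_s(lanes, 500)
    print(f"x{lanes}: {current} MB/s at 250 MB/s per lane, "
          f"{expected} MB/s at 500 MB/s per lane")
```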

PCI Express OCuLink

''OCuLink'' (standing for "optical-copper link", since ''Cu'' is the chemical symbol for copper) is an extension for the "cable version of PCI Express", acting as a competitor to version 3 of the Thunderbolt interface. Version 1.0 of OCuLink, released in October 2015, supports up to PCIe 3.0 x4 lanes (8 GT/s (gigatransfers per second), 3.9 GB/s) over copper cabling; a fiber optic version may appear in the future.

In its latest version, OCuLink-2, it supports up to 16 GB/s (PCIe 4.0 x8), while the maximum bandwidth of a full speed Thunderbolt 4 cable is 5 GB/s. Some suppliers may design their connector product to be able to support next generation PCI Express 5.0 running at 32 GT/s per lane for future proofing and minimizing development costs over the next few years.

Initially, PCI-SIG expected to bring OCuLink into laptops for connection of powerful external GPU boxes. It turned out to be a rare use. Instead, OCuLink became popular for PCIe interconnections in servers.

Derivative forms

Numerous other form factors use, or are able to use, PCIe. These include:

* Low-height card

* ExpressCard: Successor to the PC Card form factor (with x1 PCIe and USB 2.0; hot-pluggable)

* PCI Express ExpressModule: A hot-pluggable modular form factor defined for servers and workstations

* XQD card: A PCI Express-based flash card standard by the CompactFlash Association with x2 PCIe

* CFexpress card: A PCI Express-based flash card by the CompactFlash Association in three form factors supporting 1 to 4 PCIe lanes

* SD card: The SD Express bus, introduced in version 7.0 of the SD specification, uses an x1 PCIe link

* XMC: Similar to the CMC/PMC form factor (VITA 42.3)

* AdvancedTCA: A complement to CompactPCI for larger applications; supports serial based backplane topologies

* AMC: A complement to the AdvancedTCA specification; supports processor and I/O modules on ATCA boards (x1, x2, x4 or x8 PCIe).

* FeaturePak: A tiny expansion card format (43 mm × 65 mm) for embedded and small-form-factor applications, which implements two x1 PCIe links on a high-density connector along with USB, I2C, and up to 100 points of I/O

* Universal IO: A variant from Super Micro Computer Inc designed for use in low-profile rack-mounted chassis. It has the connector bracket reversed so it cannot fit in a normal PCI Express socket, but it is pin-compatible and may be inserted if the bracket is removed.

* M.2 (formerly known as NGFF)

* M-PCIe brings PCIe 3.0 to mobile devices (such as tablets and smartphones), over the M-PHY physical layer.

* U.2 (formerly known as SFF-8639)

The PCIe slot connector can also carry protocols other than PCIe. Some 9xx series Intel chipsets support Serial Digital Video Out, a proprietary technology that uses a slot to transmit video signals from the host CPU's integrated graphics instead of PCIe, using a supported add-in.

The PCIe transaction-layer protocol can also be used over some other interconnects, which are not electrically PCIe:

* Thunderbolt: A royalty-free interconnect standard by Intel that combines DisplayPort and PCIe protocols in a form factor compatible with Mini DisplayPort. Thunderbolt 3.0 also combines USB 3.1 and uses the USB-C form factor as opposed to Mini DisplayPort.

* USB4

History and revisions

While in early development, PCIe was initially referred to as ''HSI'' (for ''High Speed Interconnect''), and underwent a name change to ''3GIO'' (for ''3rd Generation I/O'') before finally settling on its PCI-SIG name ''PCI Express''. A technical working group named the ''Arapaho Work Group'' (AWG) drew up the standard. For initial drafts, the AWG consisted only of Intel engineers; subsequently, the AWG expanded to include industry partners.

Since then, PCIe has undergone several major and minor revisions, improving performance and other features.


PCI Express 1.0a

In 2003, PCI-SIG introduced PCIe 1.0a, with a per-lane data rate of 250 MB/s and a transfer rate of 2.5 gigatransfers per second (GT/s).

Transfer rate is expressed in transfers per second instead of bits per second because the number of transfers includes the overhead bits, which do not provide additional throughput; PCIe 1.x uses an 8b/10b encoding scheme, resulting in a 20% (= 2/10) overhead on the raw channel bandwidth.

So in the PCIe terminology, transfer rate refers to the encoded bit rate: 2.5 GT/s is 2.5 Gbps on the encoded serial link. This corresponds to 2.0 Gbps of pre-coded data or 250 MB/s, which is referred to as throughput in PCIe.
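
The arithmetic above can be sketched in a few lines of Python. This is only an illustration of the conversion described in the text; the function name and its parameters are hypothetical and are not taken from any PCIe tool or specification.

```python
def lane_throughput_mb_s(transfer_rate_gt_s, payload_bits, coded_bits):
    """Convert a per-lane transfer rate (GT/s) into throughput (MB/s).

    payload_bits / coded_bits describes the line code, e.g. 8 and 10
    for 8b/10b. All names here are illustrative assumptions.
    """
    encoded_bits_per_s = transfer_rate_gt_s * 1e9            # bits on the wire
    data_bits_per_s = encoded_bits_per_s * payload_bits / coded_bits
    return data_bits_per_s / 8 / 1e6                          # bits -> bytes -> MB


# PCIe 1.x: 2.5 GT/s with 8b/10b encoding gives 2.0 Gbps of data
pcie1_lane = lane_throughput_mb_s(2.5, 8, 10)
print(f"PCIe 1.x per lane: {pcie1_lane:.0f} MB/s")
```

Multiplying the per-lane figure by the link width gives the aggregate throughput of a wider link.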

PCI Express 1.1

In 2005, PCI-SIG introduced PCIe 1.1. This updated specification includes clarifications and several improvements, but is fully compatible with PCI Express 1.0a. No changes were made to the data rate.

PCI Express 2.0

PCI-SIG announced the availability of the PCI Express Base 2.0 specification on 15 January 2007.

The PCIe 2.0 standard doubles the transfer rate compared with PCIe 1.0 to 5 GT/s and the per-lane throughput rises from 250 MB/s to 500 MB/s. Consequently, a 16-lane PCIe connector (x16) can support an aggregate throughput of up to 8 GB/s.

PCIe 2.0 motherboard slots are fully backward compatible with PCIe v1.x cards. PCIe 2.0 cards are also generally backward compatible with PCIe 1.x motherboards, using the available bandwidth of PCI Express 1.1. Overall, graphics cards or motherboards designed for v2.0 work with a counterpart designed for v1.1 or v1.0a.

The PCI-SIG also said that PCIe 2.0 features improvements to the point-to-point data transfer protocol and its software architecture.

Intel's first PCIe 2.0 capable chipset was the X38, and boards began to ship from various vendors (Abit, Asus, Gigabyte) as of 21 October 2007. AMD started supporting PCIe 2.0 with its AMD 700 chipset series and nVidia started with the MCP72. All of Intel's prior chipsets, including the Intel P35 chipset, supported PCIe 1.1 or 1.0a.

Like 1.x, PCIe 2.0 uses an 8b/10b encoding scheme, therefore delivering, per lane, an effective maximum transfer rate of 4 Gbit/s from its 5 GT/s raw data rate.

PCI Express 2.1

PCI Express 2.1 (with its specification dated 4 March 2009) supports a large proportion of the management, support, and troubleshooting systems planned for full implementation in PCI Express 3.0. However, the speed is the same as PCI Express 2.0. The increase in power from the slot breaks backward compatibility between PCI Express 2.1 cards and some older motherboards with 1.0/1.0a, but most motherboards with PCI Express 1.1 connectors are provided with a BIOS update by their manufacturers through utilities to support backward compatibility of cards with PCIe 2.1.

PCI Express 3.0

PCI Express 3.0 Base specification revision 3.0 was made available in November 2010, after multiple delays. In August 2007, PCI-SIG announced that PCI Express 3.0 would carry a bit rate of 8 gigatransfers per second (GT/s), and that it would be backward compatible with existing PCI Express implementations. At that time, it was also announced that the final specification for PCI Express 3.0 would be delayed until Q2 2010.

New features for the PCI Express 3.0 specification included a number of optimizations for enhanced signaling and data integrity, including transmitter and receiver equalization, PLL improvements, clock data recovery, and channel enhancements of currently supported topologies.

Following a six-month technical analysis of the feasibility of scaling the PCI Express interconnect bandwidth, PCI-SIG's analysis found that 8 gigatransfers per second could be manufactured in mainstream silicon process technology, and deployed with existing low-cost materials and infrastructure, while maintaining full compatibility (with negligible impact) with the PCI Express protocol stack.

PCI Express 3.0 upgraded the encoding scheme to 128b/130b from the previous 8b/10b encoding, reducing the bandwidth overhead from 20% of PCI Express 2.0 to approximately 1.54% (= 2/130). PCI Express 3.0's 8 GT/s bit rate effectively delivers 985 MB/s per lane, nearly doubling the lane bandwidth relative to PCI Express 2.0.
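
As a worked check of these figures, using only the numbers quoted in this section, the overhead and per-lane throughput of the 128b/130b scheme can be written out as follows:

$$
\text{overhead}_{128b/130b} = \frac{130 - 128}{130} = \frac{2}{130} \approx 1.54\%
$$

$$
8\ \text{GT/s} \times \frac{128}{130} \times \frac{1\ \text{byte}}{8\ \text{bits}} \approx 0.985\ \text{GB/s} = 985\ \text{MB/s per lane}
$$

For comparison, the same calculation for PCI Express 2.0 gives $5\ \text{GT/s} \times \frac{8}{10} \times \frac{1}{8} = 0.5\ \text{GB/s}$, so the per-lane gain is a factor of about 1.97.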

On 18 November 2010, the PCI Special Interest Group officially published the finalized PCI Express 3.0 specification to its members to build devices based on this new version of PCI Express.

PCI Express 3.1

In September 2013, PCI Express 3.1 specification was announced for release in late 2013 or early 2014, consolidating various improvements to the published PCI Express 3.0 specification in three areas: power management, performance and functionality.

It was released in November 2014.

PCI Express 4.0

On 29 November 2011, PCI-SIG preliminarily announced PCI Express 4.0, providing a 16 GT/s bit rate that doubles the bandwidth provided by PCI Express 3.0 to 31.5 GB/s in each direction for a 16-lane configuration, while maintaining backward and forward compatibility in both software support and used mechanical interface.

PCI Express 4.0 specs also bring OCuLink-2, an alternative to Thunderbolt. OCuLink version 2 has up to 16 GT/s (16 GB/s total for x8 lanes), while the maximum bandwidth of a Thunderbolt 3 link is 5 GB/s.

In June 2016 Cadence, PLDA and Synopsys demoed PCIe 4.0 physical-layer, controller, switch and other IP blocks at the PCI SIG’s annual developer’s conference.

Mellanox Technologies announced the first 100 Gbit/s network adapter with PCIe 4.0 on 15 June 2016, and the first 200 Gbit/s network adapter with PCIe 4.0 on 10 November 2016.

In August 2016, Synopsys presented a test setup with FPGA clocking a lane to PCIe 4.0 speeds at the Intel Developer Forum. Their IP has been licensed to several firms planning to present their chips and products at the end of 2016.

At the IEEE Hot Chips Symposium in August 2016, IBM announced the first CPU with PCIe 4.0 support, POWER9 (Brian Thompto, ''POWER9 Processor for the Cognitive Era'', 2016 IEEE Hot Chips 28 Symposium (HCS), 21–23 Aug. 2016).

PCI-SIG officially announced the release of the final PCI Express 4.0 specification on 8 June 2017.


NETINT Technologies introduced the first NVMe SSD based on PCIe 4.0 on 17 July 2018, ahead of Flash Memory Summit 2018.

AMD announced on 9 January 2019 that its upcoming Zen 2-based processors and X570 chipset would support PCIe 4.0.

Intel's Tiger Lake microarchitecture also supports PCIe 4.0.

PCI Express 5.0

In June 2017, PCI-SIG announced the PCI Express 5.0 preliminary specification.

Jiangsu Huacun presented the first PCIe 5.0 Controller HC9001 in a 12 nm manufacturing process.

IBM's Power10 processor supports PCIe 5.0, with up to 32 lanes per single-chip module (SCM) and up to 64 lanes per double-chip module (DCM).

On 9 September 2021, IBM announced the Power E1080 Enterprise server with planned availability date 17 September (IBM Europe Hardware Announcement ZG21-0059). It can have up to 16 Power10 SCMs with a maximum of 32 slots per system, which can act as PCIe 5.0 x8 or PCIe 4.0 x16 (''IBM Power E1080 Technical Overview and Introduction''). Alternatively, they can be used as PCIe 5.0 x16 slots for optional optical CXP converter adapters connecting to external PCIe expansion drawers.

On 27 October 2021, Intel announced the 12th Gen Intel Core CPU family, the world's first consumer x86-64 processors with PCIe 5.0 (up to 16 lanes) connectivity.

On 22 March 2022, Nvidia announced Nvidia Hopper GH100 GPU, the world's first PCIe 5.0 GPU.

On 23 May 2022, AMD announced its Zen 4 architecture with support for up to 24 lanes of PCIe 5.0 connectivity on consumer platforms and 128 lanes on server platforms.

PCI Express 6.0

On 18 June 2019, PCI-SIG announced the development of PCI Express 6.0 specification. Bandwidth is expected to increase to 64 GT/s, yielding 128 GB/s in each direction in a 16-lane configuration, with a target release date of 2021. The new standard uses pulse-amplitude modulation (PAM-4) with a low-latency forward error correction (FEC) in place of non-return-to-zero (NRZ) modulation.

PAM-4 coding results in a vastly higher bit error rate (BER) of 10⁻⁶ (vs. 10⁻¹² previously), so in place of 128b/130b encoding, a 3-way interlaced forward error correction (FEC) is used in addition to cyclic redundancy check (CRC). A fixed 256-byte Flow Control Unit (FLIT) block carries 242 bytes of data, which includes variable-sized transaction level packets (TLP) and data link layer payload (DLLP); the remaining 14 bytes are reserved for 8-byte CRC and 6-byte FEC.
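
The FLIT byte budget described above can be checked with a short calculation. The sketch below only restates the sizes given in the text; the constant and function names are illustrative assumptions, not identifiers from the specification.

```python
# Sizes quoted in the text for a PCIe 6.0 FLIT (names are illustrative)
FLIT_BYTES = 256  # fixed FLIT block size
CRC_BYTES = 8     # cyclic redundancy check
FEC_BYTES = 6     # forward error correction


def flit_payload_bytes():
    """Bytes left for TLP and DLLP data after CRC and FEC are reserved."""
    return FLIT_BYTES - CRC_BYTES - FEC_BYTES


def flit_efficiency():
    """Fraction of each FLIT that carries TLP/DLLP data."""
    return flit_payload_bytes() / FLIT_BYTES


print(flit_payload_bytes(), "payload bytes per FLIT")
print(f"{flit_efficiency():.2%} of each FLIT carries data")
```

Under these figures, the CRC and FEC fields together take 14 of every 256 bytes, which is the fixed cost that replaces the 2/130 overhead of 128b/130b encoding.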

PCI Express 7.0

On 21 June 2022, PCI-SIG announced the development of PCI Express 7.0 specification.

Extensions and future directions

Some vendors offer PCIe over fiber products (''IBM Power Systems E870 and E880 Technical Overview and Introduction'') as an alternative to a more mainstream standard (such as InfiniBand or Ethernet) that may require additional software to support it.

''Thunderbolt'' was co-developed by Intel and Apple as a general-purpose high speed interface combining a logical PCIe link with DisplayPort and was originally intended as an all-fiber interface, but due to early difficulties in creating a consumer-friendly fiber interconnect, nearly all implementations are copper systems. A notable exception, the Sony VAIO Z VPC-Z2, uses a nonstandard USB port with an optical component to connect to an outboard PCIe display adapter. Apple was the primary driver of Thunderbolt adoption through 2011, though several other vendors have announced products and systems featuring Thunderbolt. The Thunderbolt 3 protocol forms the basis of the USB4 standard.

''Mobile PCIe'' specification (abbreviated to ''M-PCIe'') allows PCI Express architecture to operate over the MIPI Alliance's M-PHY physical layer technology. Building on top of already existing widespread adoption of M-PHY and its low-power design, Mobile PCIe lets mobile devices use PCI Express.

Draft process

There are 5 primary releases/checkpoints in a PCI-SIG specification:

Hardware protocol summary

The PCIe link is built around dedicated unidirectional couples of serial (1-bit), point-to-point connections known as ''lanes''. This is in sharp contrast to the earlier PCI connection, which is a bus-based system where all the devices share the same bidirectional, 32-bit or 64-bit parallel bus.

PCI Express is a layered protocol, consisting of a ''transaction layer'', a ''data link layer'', and a ''physical layer''. The Data Link Layer is subdivided to include a media access control (MAC) sublayer. The Physical Layer is subdivided into logical and electrical sublayers. The Physical logical-sublayer contains a physical coding sublayer (PCS). The terms are borrowed from the IEEE 802 networking protocol model.

Physical layer

The PCIe Physical Layer (''PHY'', ''PCIEPHY'', ''PCI Express PHY'', or ''PCIe PHY'') specification is divided into two sub-layers, corresponding to electrical and logical specifications. The logical sublayer is sometimes further divided into a MAC sublayer and a PCS, although this division is not formally part of the PCIe specification. A specification published by Intel, the PHY Interface for PCI Express (PIPE), defines the MAC/PCS functional partitioning and the interface between these two sub-layers.

At the electrical level, each lane signals at 2.5 Gbit/s (PCIe 1.x) or faster, depending on the negotiated capabilities. Transmit and receive are separate differential pairs, for a total of four data wires per lane.

A connection between any two PCIe devices is known as a ''link'', and is built up from a collection of one or more ''lanes''. All devices must minimally support a single-lane (x1) link. Devices may optionally support wider links composed of up to 32 lanes (''PCI Express System Architecture''). This allows for very good compatibility in two ways:

* A PCIe card physically fits (and works correctly) in any slot that is at least as large as it is (e.g., an x1 sized card works in any sized slot);

* A slot of a large physical size (e.g., x16) can be wired electrically with fewer lanes (e.g., x1, x4, x8, or x12) as long as it provides the ground connections required by the larger physical slot size.

In both cases, PCIe negotiates the highest mutually supported number of lanes. Many graphics cards, motherboards and BIOS versions are verified to support x1, x4, x8 and x16 connectivity on the same connection.
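
The outcome of this negotiation can be modelled as picking the largest width that both ends support. The following is a minimal sketch of that rule only; actual link training is performed by the hardware, and the function and argument names are hypothetical.

```python
def negotiate_link_width(card_widths, slot_widths):
    """Return the widest lane count supported by both card and slot.

    Both arguments are iterables of supported widths, e.g. {1, 4, 8, 16}.
    Every PCIe device must support x1, so a common width normally exists.
    """
    common = set(card_widths) & set(slot_widths)
    if not common:
        raise ValueError("no common link width (x1 support is mandatory)")
    return max(common)


# An x16 graphics card in a physically x16 slot that is wired for x4
width = negotiate_link_width({1, 4, 8, 16}, {1, 4})
print(f"negotiated link width: x{width}")
```

Here the card runs at x4 even though both the card and the physical slot are x16 sized, matching the second compatibility case in the list above.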

The width of a PCIe connector is 8.8 mm, while the height is 11.25 mm, and the length is variable. The fixed section of the connector is 11.65 mm in length and contains two rows of 11 pins each (22 pins total), while the length of the other section is variable depending on the number of lanes. The pins are spaced at 1 mm intervals, and the thickness of the card going into the connector is 1.6 mm.

Data transmission

PCIe sends all control messages, including interrupts, over the same links used for data. The serial protocol can never be blocked, so latency is still comparable to conventional PCI, which has dedicated interrupt lines. When the problem of IRQ sharing of pin based interrupts is taken into account and the fact that message signaled interrupts (MSI) can bypass an I/O APIC and be delivered to the CPU directly, MSI performance ends up being substantially better.

Line encoding limits the run length of identical-digit strings in data streams and ensures the receiver stays synchronised to the transmitter via clock recovery.

A desirable balance (and therefore spectral density) of 0 and 1 bits in the data stream is achieved by XORing a known binary polynomial as a "scrambler" to the data stream in a feedback topology. Because the scrambling polynomial is known, the data can be recovered by applying the XOR a second time. Both the scrambling and descrambling steps are carried out in hardware.

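The self-inverse property of XOR scrambling can be demonstrated with a short Python sketch. It is a software illustration only and is not bit-exact with real hardware: the polynomial x^16 + x^5 + x^4 + x^3 + 1 and the seed 0xFFFF are assumed here as the values commonly cited for early PCIe generations, and the bit ordering is simplified.

```python
def keystream(nbytes, seed=0xFFFF):
    """Produce nbytes of pseudo-random bytes from a 16-bit Galois LFSR.

    Assumed polynomial: x^16 + x^5 + x^4 + x^3 + 1 (low taps = 0x0039).
    """
    state = seed
    out = bytearray()
    for _ in range(nbytes):
        byte = 0
        for bit in range(8):
            msb = (state >> 15) & 1
            byte |= msb << bit
            state = (state << 1) & 0xFFFF
            if msb:
                state ^= 0x0039  # feed the shifted-out bit back into the taps
        out.append(byte)
    return bytes(out)


def scramble(data, seed=0xFFFF):
    """XOR data with the LFSR keystream; applying it twice restores the data."""
    return bytes(d ^ k for d, k in zip(data, keystream(len(data), seed)))


payload = bytes(8)                      # eight zero bytes: a long run of 0 bits
on_wire = scramble(payload)             # the run is broken up by the keystream
assert scramble(on_wire) == payload     # the second XOR recovers the original
```

Because the transmitter and receiver start from the same seed, both generate the same keystream, which is why no extra information has to be sent to undo the scrambling.
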
Data link layer

The data link layer performs three vital services for the PCIe link:

# sequence the transaction layer packets (TLPs) that are generated by the transaction layer,

# ensure reliable delivery of TLPs between two endpoints via an acknowledgement protocol (ACK and NAK signaling) that explicitly requires replay of unacknowledged/bad TLPs,

# initialize and manage flow control credits.

On the transmit side, the data link layer generates an incrementing sequence number for each outgoing TLP. It serves as a unique identification tag for each transmitted TLP, and is inserted into the header of the outgoing TLP. A 32-bit cyclic redundancy check code (known in this context as Link CRC or LCRC) is also appended to the end of each outgoing TLP.

On the receive side, the received TLP's LCRC and sequence number are both validated in the link layer. If either the LCRC check fails (indicating a data error), or the sequence number is out of range (non-consecutive from the last valid received TLP), then the bad TLP, as well as any TLPs received after the bad TLP, are considered invalid and discarded. The receiver sends a negative acknowledgement message (NAK) with the sequence number of the invalid TLP, requesting re-transmission of all TLPs forward of that sequence number. If the received TLP passes the LCRC check and has the correct sequence number, it is treated as valid. The link receiver increments the sequence number (which tracks the last received good TLP), and forwards the valid TLP to the receiver's transaction layer. An ACK message is sent to the remote transmitter, indicating that the TLP was successfully received (and, by extension, all TLPs with past sequence numbers).
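
The receive-side checks described above can be sketched in Python. This is a simplified model rather than the behaviour mandated by the specification: `zlib.crc32` merely stands in for the real LCRC computation, the 12-bit sequence space is an assumption, and the class and method names are hypothetical.

```python
import zlib

SEQ_MOD = 4096  # assumed 12-bit sequence-number space


def lcrc(payload):
    """Stand-in for the 32-bit Link CRC (not the bit-exact PCIe LCRC)."""
    return zlib.crc32(payload)


class LinkReceiver:
    """Toy model of the receive side of the data link layer."""

    def __init__(self):
        self.next_seq = 0     # sequence number expected next
        self.delivered = []   # TLPs forwarded to the transaction layer

    def receive(self, seq, payload, crc):
        # A CRC mismatch or an unexpected sequence number makes the TLP
        # invalid: discard it and ask for a replay from the expected number.
        if lcrc(payload) != crc or seq != self.next_seq:
            return ("NAK", self.next_seq)
        # Valid TLP: forward it upward, advance the counter, acknowledge.
        self.delivered.append(payload)
        self.next_seq = (self.next_seq + 1) % SEQ_MOD
        return ("ACK", seq)
```

In this model a corrupted TLP causes every later TLP to be rejected as well, until the transmitter replays from the expected sequence number.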

If the transmitter receives a NAK message, or if no acknowledgement (NAK or ACK) is received before a timeout period expires, the transmitter must retransmit all TLPs that lack a positive acknowledgement (ACK). Barring a persistent malfunction of the device or transmission medium, the link layer presents a reliable connection to the transaction layer, since the transmission protocol ensures delivery of TLPs over an unreliable medium.
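
The transmit side can be modelled in the same spirit. The Python sketch below keeps a copy of every sent TLP until it is acknowledged and returns the outstanding TLPs for replay on a NAK or timeout. The names, the 12-bit sequence space and the use of an ordered dictionary as the replay buffer are illustrative assumptions, and timers are left out.

```python
from collections import OrderedDict

SEQ_MOD = 4096  # assumed 12-bit sequence-number space


class LinkTransmitter:
    """Toy model of the transmit side: number TLPs and hold them until ACKed."""

    def __init__(self):
        self.next_seq = 0
        self.replay_buffer = OrderedDict()  # seq -> payload, oldest first

    def send(self, payload):
        """Assign the next sequence number and keep a copy for replay."""
        seq = self.next_seq
        self.replay_buffer[seq] = payload
        self.next_seq = (seq + 1) % SEQ_MOD
        return seq

    def on_ack(self, seq):
        """An ACK for seq also acknowledges every older outstanding TLP."""
        if seq not in self.replay_buffer:
            return  # stale or duplicate ACK: nothing to release
        while self.replay_buffer:
            oldest, _ = self.replay_buffer.popitem(last=False)
            if oldest == seq:
                break

    def on_nak_or_timeout(self):
        """Return every TLP that still lacks an ACK, in original order."""
        return list(self.replay_buffer.items())
```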

In addition to sending and receiving TLPs generated by the transaction layer, the data-link layer also generates and consumes data link layer packets (DLLPs). ACK and NAK signals are communicated via DLLPs, as are some power management messages and flow control credit information (on behalf of the transaction layer).

In practice, the number of in-flight, unacknowledged TLPs on the link is limited by two factors: the size of the transmitter's replay buffer (which must store a copy of all transmitted TLPs until the remote receiver ACKs them), and the flow control credits issued by the receiver to a transmitter. PCI Express requires all receivers to issue a minimum number of credits, to guarantee a link allows sending PCIConfig TLPs and message TLPs.

Transaction layer

PCI Express implements split transactions (transactions with request and response separated by time), allowing the link to carry other traffic while the target device gathers data for the response.

PCI Express uses credit-based flow control. In this scheme, a device advertises an initial amount of credit for each receive buffer in its transaction layer. The device at the opposite end of the link, when sending transactions to this device, counts the number of credits each TLP consumes from its account. The sending device may only transmit a TLP when doing so does not make its consumed credit count exceed its credit limit. When the receiving device finishes processing the TLP from its buffer, it signals a return of credits to the sending device, which increases the credit limit by the restored amount. The credit counters are modular counters, and the comparison of consumed credits to credit limit requires modular arithmetic. The advantage of this scheme (compared to other methods such as wait states or handshake-based transfer protocols) is that the latency of credit return does not affect performance, provided that the credit limit is not encountered. This assumption is generally met if each device is designed with adequate buffer sizes.
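
A minimal Python sketch of the sender's credit check with wrapping counters follows. The 8-bit counter width, the class name and the method names are assumptions for illustration; the comparison treats the modular difference between limit and consumption as valid only when it falls in the lower half of the counter range, which requires the advertised credit to stay at or below half of that range.

```python
class CreditGate:
    """Sender-side view of credit-based flow control with modular counters."""

    def __init__(self, initial_credits, field_bits=8):
        self.mod = 1 << field_bits             # counters wrap at 2**field_bits
        self.consumed = 0                      # credits used so far (mod)
        self.limit = initial_credits % self.mod  # credits granted so far (mod)

    def can_send(self, cost):
        # Modular comparison: sending is allowed when the limit is "ahead"
        # of the would-be consumption by no more than half the range.
        return (self.limit - (self.consumed + cost)) % self.mod <= self.mod // 2

    def send(self, cost):
        """Consume credits for one TLP; return False if it must wait."""
        if not self.can_send(cost):
            return False
        self.consumed = (self.consumed + cost) % self.mod
        return True

    def credits_returned(self, amount):
        """The receiver freed buffer space: raise the limit."""
        self.limit = (self.limit + amount) % self.mod


gate = CreditGate(initial_credits=4)
sent = [gate.send(1) for _ in range(5)]   # the fifth TLP has to wait
gate.credits_returned(2)                  # receiver drained two entries
```

As long as credits are returned before the limit is reached, `send` never fails, which mirrors the point above that credit-return latency does not affect performance.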

PCIe 1.x is often quoted to support a data rate of 250 MB/s in each direction, per lane. This figure is a calculation from the physical signaling rate (2.5 gigabaud) divided by the encoding overhead (10 bits per byte). This means a sixteen-lane (x16) PCIe card would then be theoretically capable of 16 × 250 MB/s = 4 GB/s in each direction. While this is correct in terms of data bytes, more meaningful calculations are based on the usable data payload rate, which depends on the profile of the traffic, which is a function of the high-level (software) application and intermediate protocol levels.
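
The arithmetic behind these figures can be written out as a short Python sketch. The function names are illustrative; the inputs are the numbers quoted above (2.5 gigabaud per lane and 10 transmitted bits per data byte).

```python
def lane_rate_mb_s(gigabaud=2.5, wire_bits_per_byte=10):
    """Per-lane, per-direction data rate in MB/s."""
    # 2.5 gigabaud = 2500 megabaud; each data byte occupies 10 bits on the wire.
    return gigabaud * 1000 / wire_bits_per_byte


def link_rate_gb_s(lanes, gigabaud=2.5, wire_bits_per_byte=10):
    """Per-direction data rate of a multi-lane link in GB/s."""
    return lanes * lane_rate_mb_s(gigabaud, wire_bits_per_byte) / 1000


print(lane_rate_mb_s())      # per-lane figure for PCIe 1.x
print(link_rate_gb_s(16))    # x16 link, each direction
```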

Like other high data rate serial interconnect systems, PCIe has a protocol and processing overhead due to the additional transfer robustness (CRC and acknowledgements). Long continuous unidirectional transfers (such as those typical in high-performance storage controllers) can approach >95% of PCIe's raw (lane) data rate. These transfers also benefit the most from an increased number of lanes (x2, x4, etc.). But in more typical applications (such as a USB or Ethernet controller), the traffic profile is characterized as short data packets with frequent enforced acknowledgements.

Efficiency of the link

As for any "network like" communication links, some of the "raw" bandwidth is consumed by protocol overhead:

Applications

PCI Express operates in consumer, server, and industrial applications, as a motherboard-level interconnect (to link motherboard-mounted peripherals), a passive backplane interconnect and as an expansion card interface for add-in boards.

In virtually all modern PCs, from consumer laptops and desktops to enterprise data servers, the PCIe bus serves as the primary motherboard-level interconnect, connecting the host system-processor with both integrated peripherals (surface-mounted ICs) and add-on peripherals (expansion cards). In most of these systems, the PCIe bus co-exists with one or more legacy PCI buses, for backward compatibility with the large body of legacy PCI peripherals.

PCI Express has replaced AGP as the default interface for graphics cards on new systems. Almost all models of graphics cards released since 2010 by AMD (ATI) and Nvidia use PCI Express. Nvidia uses the high-bandwidth data transfer of PCIe for its Scalable Link Interface (SLI) technology, which allows multiple graphics cards of the same chipset and model number to run in tandem, allowing increased performance. AMD has also developed a multi-GPU system based on PCIe called CrossFire. AMD, Nvidia, and Intel have released motherboard chipsets that support as many as four PCIe x16 slots, allowing tri-GPU and quad-GPU card configurations.

External GPUs

Theoretically, external PCIe could give a notebook the graphics power of a desktop, by connecting a notebook with any PCIe desktop video card (enclosed in its own external housing, with a power supply and cooling); this is possible with an ExpressCard or Thunderbolt interface. An ExpressCard interface provides bit rates of 5 Gbit/s (0.5 GB/s throughput), whereas a Thunderbolt interface provides bit rates of up to 40 Gbit/s (5 GB/s throughput).

In 2006, Nvidia developed the Quadro Plex external PCIe family of GPUs that can be used for advanced graphic applications for the professional market. ATI XGP
