
Uncertainty quantification (UQ) is the science of quantitative characterization and reduction of uncertainties in both computational and real world applications. It tries to determine how likely certain outcomes are if some aspects of the system are not exactly known. An example would be to predict the acceleration of a human body in a head-on crash with another car: even if the speed was exactly known, small differences in the manufacturing of individual cars, how tightly every bolt has been tightened, etc., will lead to different results that can only be predicted in a statistical sense.

Many problems in the natural sciences and engineering are also rife with sources of uncertainty.

Computer experiments on computer simulations are the most common approach to study problems in uncertainty quantification.

Sources

Uncertainty can enter mathematical models and experimental measurements in various contexts. One way to categorize the sources of uncertainty is to consider:

; Parameter: This comes from the model parameters that are inputs to the computer model (mathematical model) but whose exact values are unknown to experimentalists and cannot be controlled in physical experiments, or whose values cannot be exactly inferred by statistical methods. Some examples of this are the local free-fall acceleration in a falling object experiment, various material properties in a finite element analysis for engineering, and multiplier uncertainty in the context of macroeconomic policy optimization.

; Parametric: This comes from the variability of input variables of the model. For example, the dimensions of a work piece in a process of manufacture may not be exactly as designed and instructed, which would cause variability in its performance.

; Structural uncertainty: Also known as model inadequacy, model bias, or model discrepancy, this comes from the lack of knowledge of the underlying physics in the problem. It depends on how accurately a mathematical model describes the true system for a real-life situation, considering the fact that models are almost always only approximations to reality. One example is when modeling the process of a falling object using the free-fall model; the model itself is inaccurate since there always exists air friction. In this case, even if there is no unknown parameter in the model, a discrepancy is still expected between the model and true physics.

; Algorithmic: Also known as numerical uncertainty, or discrete uncertainty. This type comes from numerical errors and numerical approximations per implementation of the computer model. Most models are too complicated to solve exactly. For example, the finite element method or finite difference method may be used to approximate the solution of a partial differential equation (which introduces numerical errors). Other examples are numerical integration and infinite sum truncation that are necessary approximations in numerical implementation.

; Experimental: Also known as observation error, this comes from the variability of experimental measurements. Experimental uncertainty is inevitable and can be observed by repeating a measurement many times using exactly the same settings for all inputs/variables.

; Interpolation: This comes from a lack of available data collected from computer model simulations and/or experimental measurements. For other input settings that don't have simulation data or experimental measurements, one must interpolate or extrapolate in order to predict the corresponding responses.
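Two of these sources can be seen side by side in the falling-object example. The sketch below is illustrative only: the linear drag rate `k`, the drop time, and all function names are assumptions rather than values from the text. It compares the idealized free-fall model with a model that includes air drag (structural uncertainty), then integrates the drag model with explicit Euler steps of two sizes to expose the numerical error (algorithmic uncertainty).

```python
import math

G = 9.8  # m/s^2, the commonly used gravitational acceleration


def free_fall_distance(t, g=G):
    """Idealized free-fall model: distance fallen after t seconds, no drag."""
    return 0.5 * g * t ** 2


def drag_distance_exact(t, g=G, k=0.1):
    """Closed-form distance for linear drag, dv/dt = g - k*v with v(0) = 0.
    k (in 1/s) is a hypothetical drag rate chosen for illustration."""
    return (g / k) * t - (g / k ** 2) * (1.0 - math.exp(-k * t))


def drag_distance_euler(t, n_steps, g=G, k=0.1):
    """Same drag model, integrated with n_steps explicit Euler steps."""
    dt = t / n_steps
    v = 0.0
    s = 0.0
    for _ in range(n_steps):
        s += v * dt
        v += (g - k * v) * dt
    return s


t = 3.0
# Structural uncertainty: the free-fall model ignores drag entirely
structural_gap = free_fall_distance(t) - drag_distance_exact(t)
# Algorithmic uncertainty: discretization error of the numerical solver
coarse_error = abs(drag_distance_euler(t, 10) - drag_distance_exact(t))
fine_error = abs(drag_distance_euler(t, 1000) - drag_distance_exact(t))

print(f"model discrepancy (free fall vs drag): {structural_gap:.3f} m")
print(f"Euler error with 10 steps:   {coarse_error:.4f} m")
print(f"Euler error with 1000 steps: {fine_error:.6f} m")
```

Refining the step size shrinks the algorithmic error, but it does nothing to the gap between the two models; that gap only closes when the missing physics is added.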

Aleatoric and epistemic

Uncertainty is sometimes classified into two categories, prominently seen in medical applications.

; Aleatoric: Aleatoric uncertainty is also known as stochastic uncertainty, and is representative of unknowns that differ each time we run the same experiment. For example, arrows shot with a mechanical bow that exactly duplicates each launch (the same acceleration, altitude, direction and final velocity) will not all impact the same point on the target, due to random and complicated vibrations of the arrow shaft, the knowledge of which cannot be determined sufficiently to eliminate the resulting scatter of impact points. The argument here hinges on the definition of "cannot": the fact that we cannot measure sufficiently with currently available measurement devices does not necessarily preclude the existence of such information, which would move this uncertainty into the category below. ''Aleatoric'' is derived from the Latin ''alea'', or dice, referring to a game of chance.

; Epistemic: Epistemic uncertainty is also known as systematic uncertainty, and is due to things one could in principle know but does not in practice. This may be because a measurement is not accurate, because the model neglects certain effects, or because particular data have been deliberately hidden. An example of a source of this uncertainty would be the drag in an experiment designed to measure the acceleration of gravity near the earth's surface. The commonly used gravitational acceleration of 9.8 m/s² ignores the effects of air resistance, but the air resistance for the object could be measured and incorporated into the experiment to reduce the resulting uncertainty in the calculation of the gravitational acceleration.

; Combined occurrence and interaction of aleatoric and epistemic uncertainty: Aleatoric and epistemic uncertainty can also occur simultaneously in a single term, e.g., when experimental parameters show aleatoric uncertainty and those experimental parameters are input to a computer simulation. If a surrogate model, e.g. a Gaussian process or a polynomial chaos expansion, is then learnt from computer experiments for the uncertainty quantification, this surrogate exhibits epistemic uncertainty that depends on or interacts with the aleatoric uncertainty of the experimental parameters. Such an uncertainty can no longer be classified solely as aleatoric or epistemic, but is a more general inferential uncertainty.

In real-life applications, both kinds of uncertainties are present. Uncertainty quantification intends to explicitly express both types of uncertainty separately. The quantification for the aleatoric uncertainties can be relatively straightforward, where traditional (frequentist) probability is the most basic form. Techniques such as the Monte Carlo method are frequently used. A probability distribution can be represented by its moments (in the Gaussian case, the mean and covariance suffice, although, in general, even knowledge of all moments to arbitrarily high order still does not specify the distribution function uniquely), or more recently, by techniques such as Karhunen–Loève and polynomial chaos expansions. To evaluate epistemic uncertainties, efforts are made to understand the (lack of) knowledge of the system, process or mechanism. Epistemic uncertainty is generally understood through the lens of Bayesian probability, where probabilities are interpreted as indicating how certain a rational person could be regarding a specific claim.

Mathematical perspective

In mathematics, uncertainty is often characterized in terms of a probability distribution. From that perspective, epistemic uncertainty means not being certain what the relevant probability distribution is, and aleatoric uncertainty means not being certain what a random sample drawn from a probability distribution will be.
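This distinction can be made concrete with a two-level sampling sketch. The setup is an assumption for illustration: each measurement is Gaussian with a known spread `SIGMA`, while the mean is only known to lie in a range, so a candidate mean is drawn first (epistemic) and samples are then drawn from the resulting distribution (aleatoric). By the law of total variance, the overall variance splits into the expected within-distribution variance and the variance of the candidate means.

```python
import random
import statistics

SIGMA = 1.0                  # aleatoric spread of one measurement (assumed known)
MU_LOW, MU_HIGH = 9.0, 11.0  # epistemic range for the unknown mean (assumed)


def decompose_variance(n_outer=2000, n_inner=50, seed=0):
    """Outer loop: pick which distribution is the relevant one (epistemic).
    Inner loop: draw random samples from that distribution (aleatoric)."""
    rng = random.Random(seed)
    candidate_means = []
    within_variances = []
    for _ in range(n_outer):
        mu = rng.uniform(MU_LOW, MU_HIGH)
        draws = [rng.gauss(mu, SIGMA) for _ in range(n_inner)]
        candidate_means.append(mu)
        within_variances.append(statistics.variance(draws))
    aleatoric = statistics.fmean(within_variances)    # expected within-distribution variance
    epistemic = statistics.variance(candidate_means)  # variance across candidate distributions
    return aleatoric, epistemic


aleatoric, epistemic = decompose_variance()
print(f"aleatoric part: {aleatoric:.3f} (theory: {SIGMA ** 2:.3f})")
print(f"epistemic part: {epistemic:.3f} (theory: {(MU_HIGH - MU_LOW) ** 2 / 12:.3f})")
```

Collecting more data about the mean would narrow the range and shrink the epistemic part; the aleatoric part stays at `SIGMA ** 2` no matter how much is learned.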

Types of problems

There are two major types of problems in uncertainty quantification: one is the forward propagation of uncertainty (where the various sources of uncertainty are propagated through the model to predict the overall uncertainty in the system response) and the other is the inverse assessment of model uncertainty and parameter uncertainty (where the model parameters are calibrated simultaneously using test data). There has been a proliferation of research on the former problem and a majority of uncertainty analysis techniques were developed for it. On the other hand, the latter problem is drawing increasing attention in the engineering design community, since uncertainty quantification of a model and the subsequent predictions of the true system response(s) are of great interest in designing robust systems.

Forward

Uncertainty propagation is the quantification of uncertainties in system output(s) propagated from uncertain inputs. It focuses on the influence on the outputs from the ''parametric variability'' listed in the sources of uncertainty. The targets of uncertainty propagation analysis can be:

* To evaluate low-order moments of the outputs, i.e. mean and variance.

* To evaluate the reliability of the outputs. This is especially useful in reliability engineering where outputs of a system are usually closely related to the performance of the system.

* To assess the complete probability distribution of the outputs. This is useful in the scenario of utility optimization where the complete distribution is used to calculate the utility.
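The three targets above can be sketched with plain Monte Carlo sampling. Everything specific here is assumed for illustration: the model (fall time from a height `h` under acceleration `g`), the input distributions, and the 1.45 s "failure" threshold are hypothetical and not taken from the text.

```python
import math
import random
import statistics


def fall_time(h, g):
    """Model response: time (s) to fall a height h (m) under acceleration g."""
    return math.sqrt(2.0 * h / g)


def propagate(n_samples=20000, seed=0):
    """Monte Carlo forward propagation of input variability to the output."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_samples):
        h = rng.gauss(10.0, 0.2)   # assumed variability of the drop height
        g = rng.gauss(9.8, 0.05)   # assumed variability of local gravity
        outputs.append(fall_time(h, g))
    return outputs


outputs = propagate()

# Target 1: low-order moments of the output
mean = statistics.fmean(outputs)
variance = statistics.variance(outputs)

# Target 2: reliability, here the probability of exceeding a hypothetical limit
p_fail = sum(t > 1.45 for t in outputs) / len(outputs)

# Target 3: the output distribution, summarized by its 5%, 10%, ..., 95% quantiles
quantiles = statistics.quantiles(outputs, n=20)

print(f"mean = {mean:.4f} s, variance = {variance:.2e} s^2")
print(f"P(fall time > 1.45 s) = {p_fail:.3f}")
print(f"5% and 95% quantiles: {quantiles[0]:.4f} s, {quantiles[-1]:.4f} s")
```

The same set of samples serves all three targets, which is one reason simulation-based propagation is a common default when the model is cheap to evaluate.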

Inverse

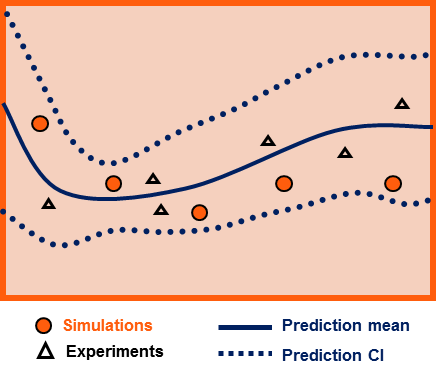

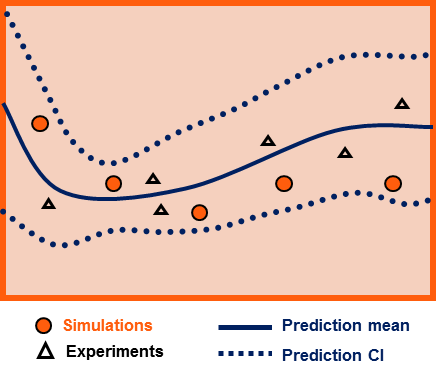

Given some experimental measurements of a system and some computer simulation results from its mathematical model, inverse uncertainty quantification estimates the discrepancy between the experiment and the mathematical model (which is called bias correction), and estimates the values of unknown parameters in the model if there are any (which is called parameter calibration or simply calibration). Generally this is a much more difficult problem than forward uncertainty propagation; however it is of great importance since it is typically implemented in a model updating process. There are several scenarios in inverse uncertainty quantification:

Bias correction only

Bias correction quantifies the ''model inadequacy'', i.e. the discrepancy between the experiment and the mathematical model. The general model updating formula for bias correction is:

: $y^e(\mathbf{x}) = y^m(\mathbf{x}) + \delta(\mathbf{x}) + \varepsilon$

where $y^e(\mathbf{x})$ denotes the experimental measurements as a function of several input variables $\mathbf{x}$, $y^m(\mathbf{x})$ denotes the computer model (mathematical model) response, $\delta(\mathbf{x})$ denotes the additive discrepancy function (aka bias function), and $\varepsilon$ denotes the experimental uncertainty. The objective is to estimate the discrepancy function $\delta(\mathbf{x})$, and as a by-product, the resulting updated model is $y^m(\mathbf{x}) + \delta(\mathbf{x})$. A prediction confidence interval is provided with the updated model as the quantification of the uncertainty.

Parameter calibration only

Parameter calibration estimates the values of one or more unknown parameters in a mathematical model. The general model updating formulation for calibration is:

: $y^e(\mathbf{x}) = y^m(\mathbf{x}, \boldsymbol{\theta}^*) + \varepsilon$

where $y^m(\mathbf{x}, \boldsymbol{\theta})$ denotes the computer model response that depends on several unknown model parameters $\boldsymbol{\theta}$, and $\boldsymbol{\theta}^*$ denotes the true values of the unknown parameters in the course of experiments. The objective is to either estimate $\boldsymbol{\theta}^*$, or to come up with a probability distribution of $\boldsymbol{\theta}^*$ that encompasses the best knowledge of the true parameter values.

Bias correction and parameter calibration

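It considers an inaccurate model with one or more unknown parameters, and its model updating formulation combines the two together:

: $y^e(\mathbf{x}) = y^m(\mathbf{x}, \boldsymbol{\theta}^*) + \delta(\mathbf{x}) + \varepsilon$

with $\boldsymbol{\theta}^*$ the true parameter values, $\delta(\mathbf{x})$ the discrepancy function, and $\varepsilon$ the experimental uncertainty. It is the most comprehensive model updating formulation that includes all possible sources of uncertainty, and it requires the most effort to solve.

Selective methodologies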

Much research has been done to solve uncertainty quantification problems, though a majority of them deal with uncertainty propagation. During the past one to two decades, a number of approaches for inverse uncertainty quantification problems have also been developed and have proved to be useful for most small- to medium-scale problems.

Forward propagation

Existing uncertainty propagation approaches include probabilistic approaches and non-probabilistic approaches. There are basically six categories of probabilistic approaches for uncertainty propagation:

* Simulation-based methods: Monte Carlo simulations, importance sampling, adaptive sampling, etc.

* General surrogate-based methods: In a non-intrusive approach, a surrogate model is learnt in order to replace the experiment or the simulation with a cheap and fast approximation. Surrogate-based methods can also be employed in a fully Bayesian fashion. This approach has proven particularly powerful when the cost of sampling, e.g. computationally expensive simulations, is prohibitively high.

* Local expansion-based methods: Taylor series, perturbation method, etc. These methods have advantages when dealing with relatively small input variability and outputs that don't express high nonlinearity. These linear or linearized methods are detailed in the article Uncertainty propagation.

* Functional expansion-based methods: Neumann expansion, orthogonal or Karhunen–Loève expansions (KLE), with polynomial chaos expansion (PCE) and wavelet expansions as special cases.

* Most probable point (MPP)-based methods: first-order reliability method (FORM) and second-order reliability method (SORM).

* Numerical integration-based methods: Full factorial numerical integration (FFNI) and dimension reduction (DR).

For non-probabilistic approaches, interval analysis, fuzzy theory, possibility theory and evidence theory are among the most widely used.

The probabilistic approach is considered as the most rigorous approach to uncertainty analysis in engineering design due to its consistency with the theory of decision analysis. Its cornerstone is the calculation of probability density functions for sampling statistics. This can be performed rigorously for random variables that are obtainable as transformations of Gaussian variables, leading to exact confidence intervals.
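As a rough illustration of two of the probabilistic categories listed above, the sketch below compares a local expansion-based estimate (first-order Taylor series) with a simulation-based one (Monte Carlo) for the output standard deviation. The model, the nominal values, and the input spreads are assumptions made for this example, and the inputs are taken to be independent.

```python
import math
import random
import statistics


def fall_time(h, g):
    """Hypothetical model: time (s) to fall a height h (m) under acceleration g."""
    return math.sqrt(2.0 * h / g)


def taylor_std(h0, g0, sd_h, sd_g, eps=1e-6):
    """First-order propagation for independent inputs:
    var(y) ~ (dy/dh)^2 var(h) + (dy/dg)^2 var(g),
    with the derivatives taken by central differences at the nominal point."""
    dy_dh = (fall_time(h0 + eps, g0) - fall_time(h0 - eps, g0)) / (2 * eps)
    dy_dg = (fall_time(h0, g0 + eps) - fall_time(h0, g0 - eps)) / (2 * eps)
    return math.sqrt((dy_dh * sd_h) ** 2 + (dy_dg * sd_g) ** 2)


def monte_carlo_std(h0, g0, sd_h, sd_g, n=20000, seed=1):
    """Sample the inputs, run the model, and take the sample standard deviation."""
    rng = random.Random(seed)
    ys = [fall_time(rng.gauss(h0, sd_h), rng.gauss(g0, sd_g)) for _ in range(n)]
    return statistics.stdev(ys)


lin = taylor_std(10.0, 9.8, 0.2, 0.05)
mc = monte_carlo_std(10.0, 9.8, 0.2, 0.05)
print(f"first-order Taylor estimate: {lin:.5f} s")
print(f"Monte Carlo estimate:        {mc:.5f} s")
```

The linearized estimate needs only four model runs here, against thousands for Monte Carlo. The two agree because the input variability is small and the model is close to linear over that range, which is the regime where local expansions are expected to work.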

Inverse uncertainty

Frequentist

In regression analysis and least squares problems, the standard error of parameter estimates is readily available, which can be expanded into a confidence interval.
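A minimal sketch of this frequentist route, under stated assumptions: the model is free fall, $s = \tfrac{1}{2} g t^2$, with a single unknown parameter $g$; the "measurements" are synthetic, generated with an assumed true value and noise level; and the interval uses the normal approximation (factor 1.96) rather than a Student-t quantile. The function name `calibrate_g` is hypothetical.

```python
import math
import random


def calibrate_g(times, distances):
    """Least-squares fit of s = 0.5*g*t^2 with one unknown parameter g.
    Returns the estimate, its standard error, and an approximate 95% interval."""
    xs = [0.5 * t ** 2 for t in times]
    sxx = sum(x * x for x in xs)
    g_hat = sum(x * s for x, s in zip(xs, distances)) / sxx
    residuals = [s - g_hat * x for x, s in zip(xs, distances)]
    dof = len(xs) - 1                      # one fitted parameter
    noise_var = sum(r * r for r in residuals) / dof
    std_err = math.sqrt(noise_var / sxx)
    z = 1.96                               # normal approximation to the 95% level
    return g_hat, std_err, (g_hat - z * std_err, g_hat + z * std_err)


# Synthetic "experiment": the true value 9.8 and noise level 0.05 m are assumptions
rng = random.Random(42)
times = [0.2 + 0.1 * i for i in range(20)]
distances = [0.5 * 9.8 * t ** 2 + rng.gauss(0.0, 0.05) for t in times]

g_hat, std_err, interval = calibrate_g(times, distances)
print(f"g estimate: {g_hat:.4f} m/s^2, standard error: {std_err:.4f}")
print(f"approximate 95% confidence interval: ({interval[0]:.4f}, {interval[1]:.4f})")
```

The interval reflects measurement noise only. If the free-fall model itself is inadequate (for example, because drag is ignored), the estimate is biased in a way the standard error does not show.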

Bayesian

Several methodologies for inverse uncertainty quantification exist under the Bayesian framework. The most complicated direction is to aim at solving problems with both bias correction and parameter calibration. The challenges of such problems include not only the influences from model inadequacy and parameter uncertainty, but also the lack of data from both computer simulations and experiments. A common situation is that the input settings are not the same over experiments and simulations. Another common situation is that parameters derived from experiments are input to simulations. For computationally expensive simulations, a surrogate model, e.g. a Gaussian process or a polynomial chaos expansion, is then often necessary, defining an inverse problem for finding the surrogate model that best approximates the simulations.
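To show what a Gaussian process surrogate looks like in practice, here is a small sketch written with the standard library only. Several things are assumptions for illustration: `simulator` stands in for an expensive simulation, the prior mean is zero, and the squared-exponential kernel uses fixed hyperparameters (length scale and variance of 1) instead of estimating them from data.

```python
import math


def kernel(a, b, length=1.0, variance=1.0):
    """Squared-exponential covariance between two scalar inputs."""
    return variance * math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(A):
    """Lower-triangular L with L L^T = A, for symmetric positive-definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def solve_lower(L, b):
    """Forward substitution for L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def solve_upper_from_lower(L, y):
    """Back substitution for L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_gp(xs, ys, jitter=1e-8):
    """Factor the training covariance and precompute alpha = K^{-1} y."""
    K = [[kernel(a, b) + (jitter if i == j else 0.0)
          for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    L = cholesky(K)
    alpha = solve_upper_from_lower(L, solve_lower(L, ys))
    return L, alpha


def predict(x_new, xs, L, alpha):
    """Posterior mean and variance of the surrogate at x_new."""
    k_star = [kernel(x_new, a) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = kernel(x_new, x_new) - sum(vi * vi for vi in v)
    return mean, max(var, 0.0)


def simulator(x):
    """Stand-in for an expensive simulation (hypothetical)."""
    return math.sin(x)


train_x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
train_y = [simulator(x) for x in train_x]
L, alpha = fit_gp(train_x, train_y)

mean_mid, var_mid = predict(2.5, train_x, L, alpha)   # between training runs
mean_far, var_far = predict(12.0, train_x, L, alpha)  # far from any training run
print(f"x = 2.5:  mean = {mean_mid:.3f}, variance = {var_mid:.4f}")
print(f"x = 12.0: mean = {mean_far:.3f}, variance = {var_far:.4f}")
```

The predictive variance is the surrogate's own epistemic uncertainty: it is small between simulation runs and returns to the prior variance far away from them, which signals where further runs would be most informative.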

Modular approach

An approach to inverse uncertainty quantification is the modular Bayesian approach. The modular Bayesian approach derives its name from its four-module procedure. Apart from the currently available data, a prior distribution of unknown parameters should be assigned.

;Module 1: Gaussian process modeling for the computer model

To address the issue from lack of simulation results, the computer model is replaced with a Gaussian process (GP) model

: $y^m(\mathbf{x}, \boldsymbol{\theta}) \sim \mathcal{GP}\big(\mathbf{h}^m(\cdot)^T \boldsymbol{\beta}^m,\ \sigma_m^2 R^m(\cdot,\cdot)\big)$

where

: $R^m\big((\mathbf{x},\boldsymbol{\theta}),(\mathbf{x}',\boldsymbol{\theta}')\big) = \exp\left\{-\sum_{k=1}^{d} \omega_k^m (x_k - x_k')^2\right\} \exp\left\{-\sum_{k=1}^{r} \omega_{d+k}^m (\theta_k - \theta_k')^2\right\}.$

$d$ is the dimension of input variables, and $r$ is the dimension of unknown parameters. While $\mathbf{h}^m(\cdot)$ is pre-defined, $\left\{\boldsymbol{\beta}^m, \sigma_m, \omega_k^m, k=1,\ldots,d+r\right\}$, known as ''hyperparameters'' of the GP model, need to be estimated via maximum likelihood estimation (MLE). This module can be considered as a generalized kriging method.

;Module 2: Gaussian process modeling for the discrepancy function

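Similarly to the first module, the discrepancy function $\delta(\mathbf{x})$ is replaced with a GP model

: $\delta(\mathbf{x}) \sim \mathcal{GP}\big(\mathbf{h}^\delta(\cdot)^T \boldsymbol{\beta}^\delta,\ \sigma_\delta^2 R^\delta(\cdot,\cdot)\big)$

where

: $R^\delta(\mathbf{x},\mathbf{x}') = \exp\left\{-\sum_{k=1}^{d} \omega_k^\delta (x_k - x_k')^2\right\}.$

Together with the prior distribution of unknown parameters, and data from both computer models and experiments, one can derive the maximum likelihood estimates for $\left\{\boldsymbol{\beta}^\delta, \sigma_\delta, \omega_k^\delta, k=1,\ldots,d\right\}$. At the same time, $\boldsymbol{\beta}^m$ from Module 1 gets updated as well.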

;Module 3: Posterior distribution of unknown parameters

Bayes' theorem is applied to calculate the posterior distribution of the unknown parameters $\boldsymbol{\theta}$:

: $p(\boldsymbol{\theta} \mid \text{data}, \boldsymbol{\varphi}) \propto p(\text{data} \mid \boldsymbol{\theta}, \boldsymbol{\varphi})\, p(\boldsymbol{\theta})$

where $\boldsymbol{\varphi}$ includes all the fixed hyperparameters in previous modules.

;Module 4: Prediction of the experimental response and discrepancy function

Full approach

The fully Bayesian approach requires that priors be assigned not only for the unknown parameters $\boldsymbol{\theta}$ but also for the other hyperparameters $\boldsymbol{\varphi}$. It follows these steps:

# Derive the posterior distribution $p(\boldsymbol{\theta}, \boldsymbol{\varphi} \mid \text{data})$;

# Integrate $\boldsymbol{\varphi}$ out and obtain $p(\boldsymbol{\theta} \mid \text{data})$. This single step accomplishes the calibration;

# Prediction of the experimental response and discrepancy function.

However, the approach has significant drawbacks:

* For most cases, $p(\boldsymbol{\theta}, \boldsymbol{\varphi} \mid \text{data})$ is a highly intractable function of $\boldsymbol{\varphi}$. Hence the integration becomes very troublesome. Moreover, if priors for the other hyperparameters $\boldsymbol{\varphi}$ are not carefully chosen, the complexity in numerical integration increases even more.

* In the prediction stage, the prediction (which should at least include the expected value of system responses) also requires numerical integration. Markov chain Monte Carlo (MCMC) is often used for integration; however, it is computationally expensive.

The fully Bayesian approach requires a huge amount of calculations and may not yet be practical for dealing with the most complicated modelling situations.
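To give a feel for the sampling involved, here is a minimal random-walk Metropolis sketch for a deliberately simple case. It is not the full approach described above: there is a single unknown parameter (the gravitational acceleration `g` in a free-fall model), no discrepancy function, a known noise level, and a uniform prior. The data are synthetic, and all names and numerical settings are assumptions.

```python
import math
import random


def log_posterior(g, times, distances, noise_sd=0.05, prior=(9.0, 11.0)):
    """Log of (uniform prior) x (Gaussian likelihood), up to an additive constant."""
    if not prior[0] <= g <= prior[1]:
        return -math.inf
    sq = sum((s - 0.5 * g * t ** 2) ** 2 for t, s in zip(times, distances))
    return -0.5 * sq / noise_sd ** 2


def metropolis(times, distances, n_steps=5000, step=0.02, start=10.0, seed=0):
    """Random-walk Metropolis sampler for the single parameter g."""
    rng = random.Random(seed)
    g = start
    lp = log_posterior(g, times, distances)
    chain = []
    for _ in range(n_steps):
        proposal = g + rng.gauss(0.0, step)
        lp_new = log_posterior(proposal, times, distances)
        # Accept with probability min(1, posterior ratio)
        if rng.random() < math.exp(min(0.0, lp_new - lp)):
            g, lp = proposal, lp_new
        chain.append(g)
    return chain


# Synthetic "experiment": the true value 9.8 and noise level 0.05 m are assumptions
data_rng = random.Random(42)
times = [0.2 + 0.1 * i for i in range(20)]
distances = [0.5 * 9.8 * t ** 2 + data_rng.gauss(0.0, 0.05) for t in times]

chain = metropolis(times, distances)
samples = chain[1000:]  # discard burn-in
post_mean = sum(samples) / len(samples)
post_sd = math.sqrt(sum((x - post_mean) ** 2 for x in samples) / (len(samples) - 1))
print(f"posterior mean of g: {post_mean:.4f}, posterior sd: {post_sd:.4f}")
```

Each step costs one evaluation of the model over all data points. With an expensive simulation and many parameters and hyperparameters, the number of evaluations needed grows quickly, which is the computational burden referred to above.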

Known issues

The theories and methodologies for uncertainty propagation are much better established, compared with inverse uncertainty quantification. For the latter, several difficulties remain unsolved:

# Dimensionality issue: The computational cost increases dramatically with the dimensionality of the problem, i.e. the number of input variables and/or the number of unknown parameters.

# Identifiability issue: Multiple combinations of unknown parameters and discrepancy function can yield the same experimental prediction. Hence different values of parameters cannot be distinguished/identified. This issue is circumvented in a Bayesian approach, where such combinations are averaged over.

# Incomplete model response: Refers to a model not having a solution for some combinations of the input variables.

See also

* Computer experiment

* Further research is needed

* Quantification of margins and uncertainties

* Probabilistic numerics

* Bayesian regression

* Bayesian probability
