
Constrained Optimization

In mathematical optimization, constrained optimization (in some contexts called constraint optimization) is the process of optimizing an objective function with respect to some variables in the presence of constraints on those variables. The objective function is either a cost function or energy function, which is to be minimized, or a reward function or utility function, which is to be maximized. Constraints can be either hard constraints, which set conditions for the variables that are required to be satisfied, or soft constraints, under which some variable values are penalized in the objective function if, and to the extent that, the conditions on the variables are not satisfied.

Relation to constraint-satisfaction problems: The constrained-optimization problem (COP) is a significant generalization of the classic constraint-satisfaction problem (CSP) model. COP is a CSP that includes an objective function to be optimized. Many algorithms are used to hand ...

Penalty Method

In mathematical optimization, penalty methods are a certain class of algorithms for solving constrained optimization problems. A penalty method replaces a constrained optimization problem by a series of unconstrained problems whose solutions ideally converge to the solution of the original constrained problem. The unconstrained problems are formed by adding a term, called a penalty function, to the objective function that consists of a penalty parameter multiplied by a measure of violation of the constraints. The measure of violation is nonzero when the constraints are violated and is zero in the region where constraints are not violated.

Description: Let us say we are solving the following constrained problem:

$$\min_x f(\mathbf x)$$

subject to

$$c_i(\mathbf x) \le 0 \quad \forall i \in I.$$

This problem can be solved as a series of unconstrained minimization problems

$$\min f_p(\mathbf x) := f(\mathbf x) + p \sum_{i \in I} g(c_i(\mathbf x))$$

where g(c_i(\mathbf x)) = \max(0, c_ ...
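The scheme described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the excerpt cuts off before the definition of g is complete, so the sketch assumes the common quadratic choice g(c) = max(0, c)^2; the helper names (`penalized`, `minimize_1d`, `penalty_method`), the one-dimensional test problem, and the schedule that multiplies p by 10 each round are all hypothetical and not taken from the source.

```python
def penalized(f, constraints, p):
    """Build f_p(x) = f(x) + p * sum_i g(c_i(x)).

    Assumption: g(c) = max(0, c)**2 (quadratic penalty); the source
    excerpt is truncated before it states g in full.
    """
    def f_p(x):
        return f(x) + p * sum(max(0.0, c(x)) ** 2 for c in constraints)
    return f_p


def minimize_1d(func, lo, hi, iters=200):
    """Ternary search; only valid for unimodal functions on [lo, hi]."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if func(m1) < func(m2):
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2


def penalty_method(f, constraints, lo, hi, p0=1.0, growth=10.0, rounds=6):
    """Solve a series of unconstrained problems with a growing penalty p."""
    p = p0
    trace = []
    for _ in range(rounds):
        x = minimize_1d(penalized(f, constraints, p), lo, hi)
        trace.append((p, x))
        p *= growth
    return trace


# Toy problem: minimize (x - 2)^2 subject to x - 1 <= 0.
# The constrained minimizer is x = 1; the unconstrained one is x = 2.
trace = penalty_method(lambda x: (x - 2) ** 2, [lambda x: x - 1], -5.0, 5.0)
for p, x in trace:
    print(f"p = {p:>8.0f}  x = {x:.6f}")
```

For this toy problem the minimizer of each penalized problem is (2 + p) / (1 + p), so the iterates approach the constrained solution x = 1 from the infeasible side as p grows, which is the typical behaviour of this kind of exterior penalty.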

Mathematical Optimization

Mathematical optimization (alternatively spelled optimisation) or mathematical programming is the selection of a best element, with regard to some criteria, from some set of available alternatives. It is generally divided into two subfields: discrete optimization and continuous optimization. Optimization problems arise in all quantitative disciplines from computer science and engineering to operations research and economics, and the development of solution methods has been of interest in mathematics for centuries. In the more general approach, an optimization problem consists of maximizing or minimizing a real function by systematically choosing input values from within an allowed set and computing the value of the function. The generalization of optimization theory and techniques to other formulations constitutes a large area of applied mathematics.

Optimization problems: Opti ...

Simplex Method

In mathematical optimization, Dantzig's simplex algorithm (or simplex method) is a popular algorithm for linear programming. The name of the algorithm is derived from the concept of a simplex and was suggested by T. S. Motzkin. Simplices are not actually used in the method, but one interpretation of it is that it operates on simplicial cones, and these become proper simplices with an additional constraint. The simplicial cones in question are the corners (i.e., the neighborhoods of the vertices) of a geometric object called a polytope. The shape of this polytope is defined by the constraints applied to the objective function.

History: George Dantzig worked on planning methods for the US Army Air Force during World War II using a desk calculator. During 1946, his colleague challenged him to mechanize the planning process to distract him from taking another job. Dantzig formulated the problem as linear inequalities inspired by the work of Wassily Leontief; however, at that ...

Constrained Least Squares

In constrained least squares one solves a linear least squares problem with an additional constraint on the solution. This means that the unconstrained equation $\mathbf{X} \boldsymbol{\beta} = \mathbf{y}$ must be fit as closely as possible (in the least squares sense) while ensuring that some other property of $\boldsymbol{\beta}$ is maintained. There are often special-purpose algorithms for solving such problems efficiently. Some examples of constraints are given below:

* Equality constrained least squares: the elements of $\boldsymbol{\beta}$ must exactly satisfy $\mathbf{L} \boldsymbol{\beta} = \mathbf{d}$ (see Ordinary least squares).
* Stochastic (linearly) constrained least squares: the elements of $\boldsymbol{\beta}$ must satisfy $\mathbf{L} \boldsymbol{\beta} = \mathbf{d} + \mathbf{v}$, where $\mathbf{v}$ is a vector of random variables such that $\operatorname{E}(\mathbf{v}) = \mathbf{0}$ and $\operatorname{E}(\mathbf{v} \mathbf{v}^{\mathsf T}) = \tau^2 \mathbf{I}$. This effectively imposes a prior distribution for $\boldsymbol{\beta}$ and is therefore equivalent to Bayesian li ...

Bucket Elimination

In constraint satisfaction, local consistency conditions are properties of constraint satisfaction problems related to the consistency of subsets of variables or constraints. They can be used to reduce the search space and make the problem easier to solve. Various kinds of local consistency conditions are leveraged, including node consistency, arc consistency, and path consistency. Every local consistency condition can be enforced by a transformation that changes the problem without changing its solutions; such a transformation is called constraint propagation. Constraint propagation works by reducing domains of variables, strengthening constraints, or creating new constraints. This leads to a reduction of the search space, making the problem easier to solve by some algorithms. Constraint propagation can also be used as an unsatisfiability checker, incomplete in general but complete in some particular cases. Local consistency conditions can be grouped into various classes. The orig ...
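As a concrete illustration of constraint propagation by domain reduction, here is a minimal sketch of enforcing arc consistency in the style of the well-known AC-3 procedure. The excerpt does not name a specific algorithm, so this choice, the function name `ac3`, and the data layout (a dict of domains and a dict of directed binary constraints) are assumptions made for the example.

```python
from collections import deque


def ac3(domains, constraints):
    """Enforce arc consistency by shrinking domains in place.

    domains:     dict mapping variable -> set of candidate values
    constraints: dict mapping a directed arc (x, y) -> predicate(vx, vy)
    Returns False if some domain becomes empty (the problem is
    unsatisfiable), True otherwise.
    """
    queue = deque(constraints)  # start with every arc
    while queue:
        x, y = queue.popleft()
        allowed = constraints[(x, y)]
        # Values of x with no supporting value in the domain of y.
        unsupported = {
            vx for vx in domains[x]
            if not any(allowed(vx, vy) for vy in domains[y])
        }
        if unsupported:
            domains[x] -= unsupported
            if not domains[x]:
                return False
            # The domain of x shrank, so arcs pointing at x need rechecking.
            queue.extend(
                arc for arc in constraints if arc[1] == x and arc[0] != y
            )
    return True


# Toy problem: X < Y with both variables ranging over {1, 2, 3}.
domains = {"X": {1, 2, 3}, "Y": {1, 2, 3}}
constraints = {
    ("X", "Y"): lambda vx, vy: vx < vy,
    ("Y", "X"): lambda vy, vx: vy > vx,
}
consistent = ac3(domains, constraints)
print(consistent, domains)
```

Propagation removes 3 from the domain of X and 1 from the domain of Y without discarding any solution, which is the search-space reduction the text describes.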

Interval Arithmetic

Interval arithmetic (also known as interval mathematics, interval analysis, or interval computation) is a mathematical technique used to mitigate rounding and measurement errors in mathematical computation by computing function bounds. Numerical methods involving interval arithmetic can guarantee relatively reliable and mathematically correct results. Instead of representing a value as a single number, interval arithmetic or interval mathematics represents each value as a range of possibilities. Mathematically, instead of working with an uncertain real-valued variable $x$, interval arithmetic works with an interval $[a, b]$ that defines the range of values that $x$ can have. In other words, any value of the variable $x$ lies in the closed interval between $a$ and $b$. A function $f$, when applied to $x$, produces an interval $[c, d]$ which includes all the possible values for $f(x)$ for all $x \in [a, b]$. Interval arithmetic is suitable for a variety of purposes; the most common use is ...
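The idea of replacing a number by a range and propagating that range through a function can be sketched as follows. The `Interval` class and the sample function `f` are hypothetical names chosen for this example, and the sketch deliberately ignores outward rounding of floating-point endpoints, which a rigorous implementation would need.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """Closed interval [lo, hi]; endpoints are not outward-rounded."""
    lo: float
    hi: float

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __sub__(self, other):
        return Interval(self.lo - other.hi, self.hi - other.lo)

    def __mul__(self, other):
        # The extremes of a product occur at endpoint combinations.
        products = [
            self.lo * other.lo, self.lo * other.hi,
            self.hi * other.lo, self.hi * other.hi,
        ]
        return Interval(min(products), max(products))

    def __contains__(self, value):
        return self.lo <= value <= self.hi


def f(x):
    """Works on plain numbers and on Interval objects alike."""
    return x * x + x


x = Interval(-1.0, 2.0)
enclosure = f(x)
print(enclosure)  # Interval(lo=-3.0, hi=6.0)
```

The result encloses every value of f on [-1, 2], but it is wider than the true range [-0.25, 6]: treating the two occurrences of x as independent is a known source of overestimation in naive interval evaluation.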

Branch-and-bound

Branch and bound (BB, B&B, or BnB) is a method for solving optimization problems by breaking them down into smaller sub-problems and using a bounding function to eliminate sub-problems that cannot contain the optimal solution. It is an algorithm design paradigm for discrete and combinatorial optimization problems, as well as mathematical optimization. A branch-and-bound algorithm consists of a systematic enumeration of candidate solutions by means of state space search: the set of candidate solutions is thought of as forming a rooted tree with the full set at the root. The algorithm explores branches of this tree, which represent subsets of the solution set. Before enumerating the candidate solutions of a branch, the branch is checked against upper and lower estimated bounds on the optimal solution, and is discarded if it cannot produce a better solution than the best one found so far by the algorithm. The algorithm depends on efficient estimation of the lower and u ...
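A small sketch may make the branch, bound, and discard steps concrete. It applies the paradigm to the 0/1 knapsack problem, which is an example chosen here rather than one from the text; the function name, the depth-first exploration order, and the fractional-relaxation bound are likewise assumptions of this illustration. Weights are assumed to be positive.

```python
def knapsack_branch_and_bound(values, weights, capacity):
    """Maximize total value subject to total weight <= capacity."""
    # Sort by value density so the optimistic bound is easy to compute.
    items = sorted(
        zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True
    )
    best = 0

    def upper_bound(index, value, room):
        # Optimistic estimate: fill the remaining room greedily and
        # allow a fraction of the first item that does not fit.
        for v, w in items[index:]:
            if w <= room:
                value += v
                room -= w
            else:
                return value + v * room / w
        return value

    def explore(index, value, room):
        nonlocal best
        best = max(best, value)
        if index == len(items):
            return
        if upper_bound(index, value, room) <= best:
            return  # this branch cannot beat the best solution so far
        v, w = items[index]
        if w <= room:
            explore(index + 1, value + v, room - w)  # branch: take item
        explore(index + 1, value, room)              # branch: skip item

    explore(0, 0, capacity)
    return best


print(knapsack_branch_and_bound([60, 100, 120], [10, 20, 30], 50))  # 220
```

Because this is a maximization problem, the pruning test uses an upper bound on what a branch can still achieve; for a minimization problem the same structure applies with a lower bound.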

NP Hard

NP may refer to:

Arts and entertainment
* NP (novel), by Japanese author Banana Yoshimoto

Organizations
* Nashua-Plainfield Community School District, Iowa, United States
* National Party, various political parties
* Ngee Ann Polytechnic, Singapore
* Nigeria Police Force
* Northern Pacific Railway (AAR reporting mark NP)
* November Project, free, open-to-the-public exercise group

Places
* NP postcode area, Newport, Wales, UK
* Nepal (ISO 3166-1 alpha-2 country code NP)
  * .np, the country code top level domain (ccTLD) for Nepal

Science, technology and mathematics

Biology and medicine
* Nucleoside phosphorylase, an enzyme
* Nurse practitioner
* Kallikrein 8, an enzyme
* Neptunium, symbol Np, a chemical element
* Nosocomial pneumonia
* Natriuretic peptide

Mathematics and computer science
* NP (complexity), Nondeterministic Polynomial, a computational complexity class
  * NP-complete, a class of decision problems
  * NP-hard, a class of problems in c ...

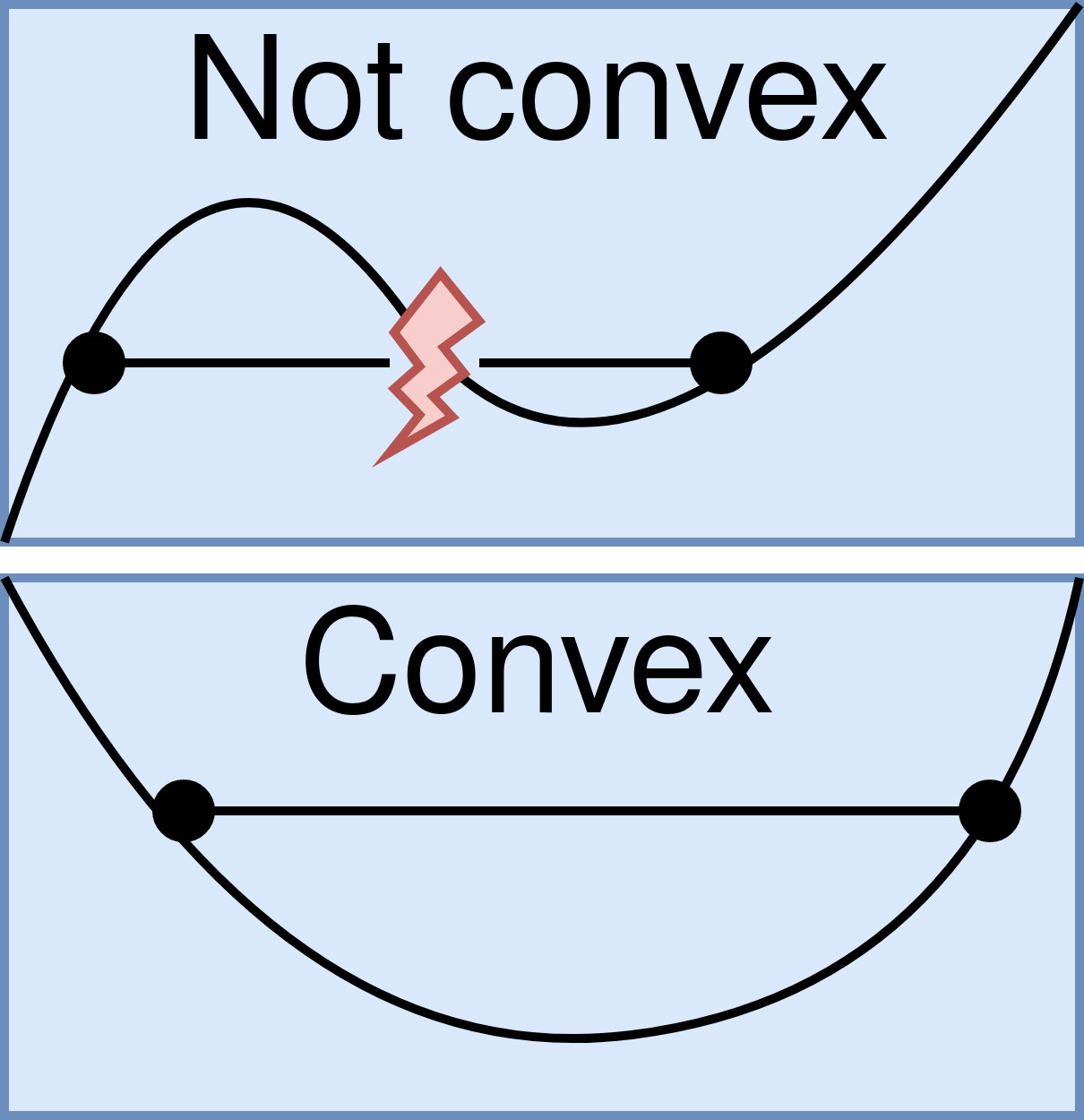

Convex Function

In mathematics, a real-valued function is called convex if the line segment between any two distinct points on the graph of the function lies above or on the graph between the two points. Equivalently, a function is convex if its epigraph (the set of points on or above the graph of the function) is a convex set. In simple terms, a convex function graph is shaped like a cup $\cup$ (or a straight line like a linear function), while a concave function's graph is shaped like a cap $\cap$. A twice-differentiable function of a single variable is convex if and only if its second derivative is nonnegative on its entire domain. Well-known examples of convex functions of a single variable include a linear function $f(x) = cx$ (where $c$ is a real number), a quadratic function $cx^2$ ($c$ as a nonnegative real number) and an exponential function $ce^x$ ($c$ as a nonnegative real number). Convex functions pl ...
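The line-segment definition can be checked numerically on sample points: for points a and b and a weight t in [0, 1], a convex function satisfies f(t a + (1 - t) b) <= t f(a) + (1 - t) f(b). The sketch below is a heuristic spot check under that definition, not a proof of convexity; the function name `looks_convex`, the sampling grid, and the tolerance are assumptions of the example.

```python
import math


def looks_convex(f, lo, hi, samples=50, tol=1e-12):
    """Return False if any sampled chord dips below the graph of f.

    A True result only means no violation was found on the sample grid.
    """
    xs = [lo + (hi - lo) * k / (samples - 1) for k in range(samples)]
    weights = [0.25, 0.5, 0.75]
    for a in xs:
        for b in xs:
            for t in weights:
                chord = t * f(a) + (1 - t) * f(b)
                if f(t * a + (1 - t) * b) > chord + tol:
                    return False
    return True


print(looks_convex(lambda x: 3 * x, -3.0, 3.0))   # linear: True
print(looks_convex(lambda x: x * x, -3.0, 3.0))   # quadratic: True
print(looks_convex(math.exp, -2.0, 2.0))          # exponential: True
print(looks_convex(math.sin, 0.0, math.pi))       # concave here: False
```

The three functions that pass correspond to the examples named in the text (with c = 3, c = 1, and c = 1), while the sine on [0, pi] is cap-shaped and fails the check.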

Ellipsoid Method

In mathematical optimization, the ellipsoid method is an iterative method for minimizing convex functions over convex sets. The ellipsoid method generates a sequence of ellipsoids whose volume uniformly decreases at every step, thus enclosing a minimizer of a convex function. When specialized to solving feasible linear optimization problems with rational data, the ellipsoid method is an algorithm which finds an optimal solution in a number of steps that is polynomial in the input size.

History: The ellipsoid method has a long history. As an iterative method, a preliminary version was introduced by Naum Z. Shor. In 1972, an approximation algorithm for real convex minimization was studied by Arkadi Nemirovski and David B. Yudin (Judin). As an algorithm for solving linear programming problems with rational data, the ellipsoid algorithm was studied by Leonid Khachiyan; Khachiyan's achievement was to prove the polynomial-time ...