|

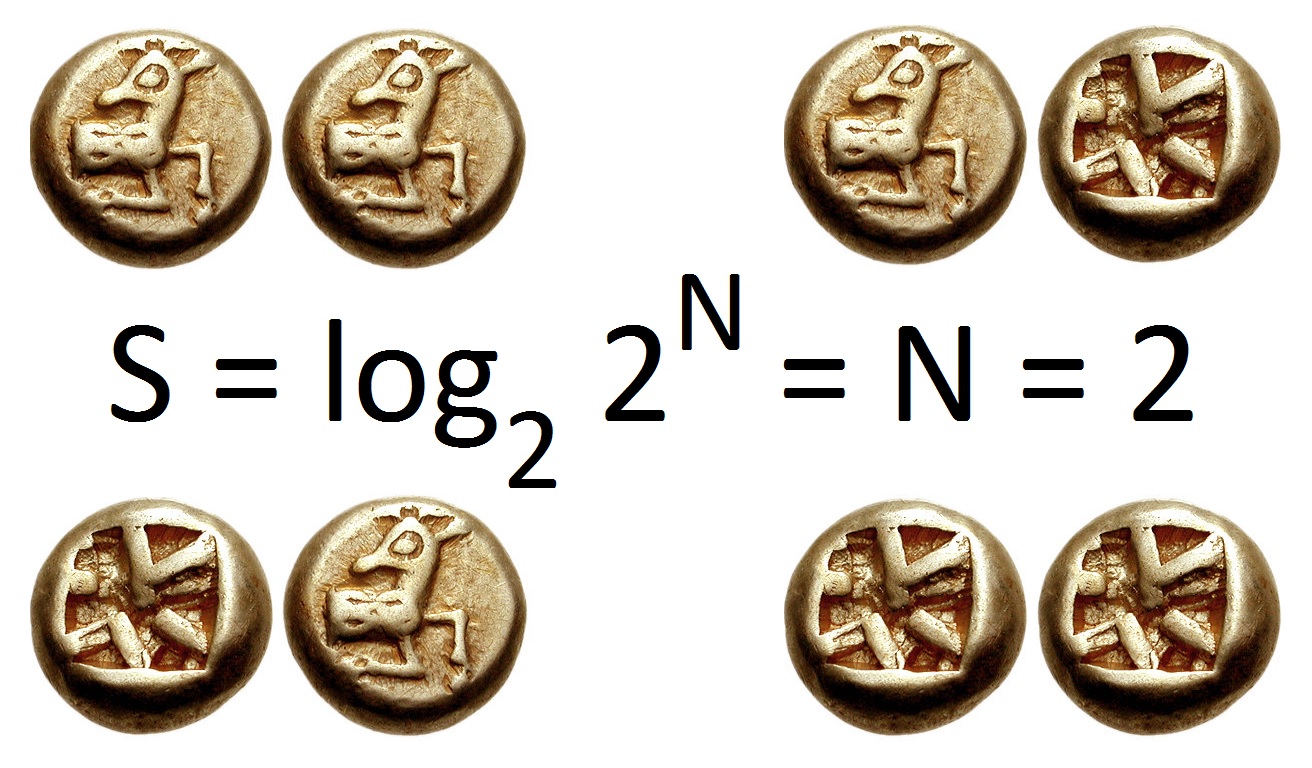

Bit

The bit is the most basic unit of information in computing and digital communication. The name is a portmanteau of binary digit. The bit represents a logical state with one of two possible values. These values are most commonly represented as either , but other representations such as ''true''/''false'', ''yes''/''no'', ''on''/''off'', or ''+''/''−'' are also widely used. The relation between these values and the physical states of the underlying storage or device is a matter of convention, and different assignments may be used even within the same device or program. It may be physically implemented with a two-state device. A contiguous group of binary digits is commonly called a '' bit string'', a bit vector, or a single-dimensional (or multi-dimensional) ''bit array''. A group of eight bits is called one ''byte'', but historically the size of the byte is not strictly defined. Frequently, half, full, double and quadruple words consist of a number of bytes which is ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Byte

The byte is a unit of digital information that most commonly consists of eight bits. Historically, the byte was the number of bits used to encode a single character of text in a computer and for this reason it is the smallest addressable unit of memory in many computer architectures. To disambiguate arbitrarily sized bytes from the common 8-bit definition, network protocol documents such as the Internet Protocol () refer to an 8-bit byte as an octet. Those bits in an octet are usually counted with numbering from 0 to 7 or 7 to 0 depending on the bit endianness. The size of the byte has historically been hardware-dependent and no definitive standards existed that mandated the size. Sizes from 1 to 48 bits have been used. The six-bit character code was an often-used implementation in early encoding systems, and computers using six-bit and nine-bit bytes were common in the 1960s. These systems often had memory words of 12, 18, 24, 30, 36, 48, or 60 bits, corresponding t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Bit String

A bit array (also known as bitmask, bit map, bit set, bit string, or bit vector) is an array data structure that compactly stores bits. It can be used to implement a simple set data structure. A bit array is effective at exploiting bit-level parallelism in hardware to perform operations quickly. A typical bit array stores ''kw'' bits, where ''w'' is the number of bits in the unit of storage, such as a byte or word, and ''k'' is some nonnegative integer. If ''w'' does not divide the number of bits to be stored, some space is wasted due to internal fragmentation. Definition A bit array is a mapping from some domain (almost always a range of integers) to values in the set . The values can be interpreted as dark/light, absent/present, locked/unlocked, valid/invalid, et cetera. The point is that there are only two possible values, so they can be stored in one bit. As with other arrays, the access to a single bit can be managed by applying an index to the array. Assuming its size ( ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

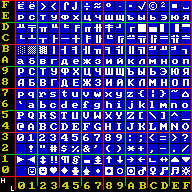

Bit Array

A bit array (also known as bitmask, bit map, bit set, bit string, or bit vector) is an array data structure that compactly stores bits. It can be used to implement a simple set data structure. A bit array is effective at exploiting bit-level parallelism in hardware to perform operations quickly. A typical bit array stores ''kw'' bits, where ''w'' is the number of bits in the unit of storage, such as a byte or Word (computer architecture), word, and ''k'' is some nonnegative integer. If ''w'' does not divide the number of bits to be stored, some space is wasted due to Fragmentation (computing), internal fragmentation. Definition A bit array is a mapping from some domain (almost always a range of integers) to values in the set . The values can be interpreted as dark/light, absent/present, locked/unlocked, valid/invalid, et cetera. The point is that there are only two possible values, so they can be stored in one bit. As with other arrays, the access to a single bit can be managed ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Units Of Information

A unit of information is any unit of measure of digital data size. In digital computing, a unit of information is used to describe the capacity of a digital data storage device. In telecommunications, a unit of information is used to describe the throughput of a communication channel. In information theory, a unit of information is used to measure information contained in messages and the entropy of random variables. Due to the need to work with data sizes that range from very small to very large, units of information cover a wide range of data sizes. Units are defined as multiples of a smaller unit except for the smallest unit which is based on convention and hardware design. Multiplier prefixes are used to describe relatively large sizes. For binary hardware, by far the most common hardware today, the smallest unit is the bit, a portmanteau of binary digit, which represents a value that is one of two possible values; typically shown as 0 and 1. The nibble, 4 bits, re ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Unit Of Information

A unit of information is any unit of measure of digital data size. In digital computing, a unit of information is used to describe the capacity of a digital data storage device. In telecommunications, a unit of information is used to describe the throughput of a communication channel. In information theory, a unit of information is used to measure information contained in messages and the entropy of random variables. Due to the need to work with data sizes that range from very small to very large, units of information cover a wide range of data sizes. Units are defined as multiples of a smaller unit except for the smallest unit which is based on convention and hardware design. Multiplier prefixes are used to describe relatively large sizes. For binary hardware, by far the most common hardware today, the smallest unit is the bit, a portmanteau of binary digit, which represents a value that is one of two possible values; typically shown as 0 and 1. The nibble, 4 bits, represents ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Binary Number

A binary number is a number expressed in the Radix, base-2 numeral system or binary numeral system, a method for representing numbers that uses only two symbols for the natural numbers: typically "0" (zero) and "1" (one). A ''binary number'' may also refer to a rational number that has a finite representation in the binary numeral system, that is, the quotient of an integer by a power of two. The base-2 numeral system is a positional notation with a radix of 2. Each digit is referred to as a bit, or binary digit. Because of its straightforward implementation in digital electronic circuitry using logic gates, the binary system is used by almost all modern computer, computers and computer-based devices, as a preferred system of use, over various other human techniques of communication, because of the simplicity of the language and the noise immunity in physical implementation. History The modern binary number system was studied in Europe in the 16th and 17th centuries by Thoma ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Information Entropy

In information theory, the entropy of a random variable quantifies the average level of uncertainty or information associated with the variable's potential states or possible outcomes. This measures the expected amount of information needed to describe the state of the variable, considering the distribution of probabilities across all potential states. Given a discrete random variable X, which may be any member x within the set \mathcal and is distributed according to p\colon \mathcal\to , 1/math>, the entropy is \Eta(X) := -\sum_ p(x) \log p(x), where \Sigma denotes the sum over the variable's possible values. The choice of base for \log, the logarithm, varies for different applications. Base 2 gives the unit of bits (or " shannons"), while base ''e'' gives "natural units" nat, and base 10 gives units of "dits", "bans", or " hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Error Detection And Correction

In information theory and coding theory with applications in computer science and telecommunications, error detection and correction (EDAC) or error control are techniques that enable reliable delivery of digital data over unreliable communication channels. Many communication channels are subject to channel noise, and thus errors may be introduced during transmission from the source to a receiver. Error detection techniques allow detecting such errors, while error correction enables reconstruction of the original data in many cases. Definitions ''Error detection'' is the detection of errors caused by noise or other impairments during transmission from the transmitter to the receiver. ''Error correction'' is the detection of errors and reconstruction of the original, error-free data. History In classical antiquity, copyists of the Hebrew Bible were paid for their work according to the number of stichs (lines of verse). As the prose books of the Bible were hardly ever w ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Nibble

In computing, a nibble, or spelled nybble to match byte, is a unit of information that is an aggregation of four- bits; half of a byte/ octet. The unit is alternatively called nyble, nybl, half-byte or tetrade. In networking or telecommunications, the unit is often called a semi-octet, quadbit, or quartet. As a nibble can represent sixteen () possible values, a nibble value is often shown as a hexadecimal digit (hex digit). A byte is two nibbles, and therefore, a value can be shown as two hex digits. Four-bit computers use nibble-sized data for storage and operations; as the word unit. Such computers were used in early microprocessors, pocket calculators and pocket computers. They continue to be used in some microcontrollers. In this context, 4-bit groups were sometimes also called characters rather than nibbles. History The term ''nibble'' originates from its representing half a byte, with ''byte'' a homophone of the English word ''bite''. In 2014, David B. Be ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Information Theory

Information theory is the mathematical study of the quantification (science), quantification, Data storage, storage, and telecommunications, communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, Neuroscience, neurobiology, physics, and electrical engineering. A key measure in information theory is information entropy, entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a Fair coin, fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a dice, die (which has six equally likely outcomes). Some other important measu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Computing Device

A computer is a machine that can be programmed to automatically carry out sequences of arithmetic or logical operations ('' computation''). Modern digital electronic computers can perform generic sets of operations known as ''programs'', which enable computers to perform a wide range of tasks. The term computer system may refer to a nominally complete computer that includes the hardware, operating system, software, and peripheral equipment needed and used for full operation; or to a group of computers that are linked and function together, such as a computer network or computer cluster. A broad range of industrial and consumer products use computers as control systems, including simple special-purpose devices like microwave ovens and remote controls, and factory devices like industrial robots. Computers are at the core of general-purpose devices such as personal computers and mobile devices such as smartphones. Computers power the Internet, which links billions o ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Data Compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding is done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, for error detection and correction or line coding, the means for mapping data onto a sig ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |