A supercomputer is a computer with a high level of performance as compared to a general-purpose computer. The performance of a supercomputer is commonly measured in floating-point operations per second (FLOPS) instead of million instructions per second (MIPS). Since 2017, there have existed supercomputers which can perform over 10^17 FLOPS (a hundred quadrillion FLOPS, 100 petaFLOPS or 100 PFLOPS). For comparison, a desktop computer has performance in the range of hundreds of gigaFLOPS (10^11) to tens of teraFLOPS (10^13). Since November 2017, all of the world's fastest 500 supercomputers run on Linux-based operating systems. Additional research is being conducted in the United States, the European Union, Taiwan, Japan, and China to build faster, more powerful and technologically superior exascale supercomputers.

Supercomputers play an important role in the field of computational science, and are used for a wide range of computationally intensive tasks in various fields, including quantum mechanics, weather forecasting, climate research, oil and gas exploration, molecular modeling (computing the structures and properties of chemical compounds, biological macromolecules, polymers, and crystals), and physical simulations (such as simulations of the early moments of the universe, airplane and spacecraft aerodynamics, the detonation of nuclear weapons, and nuclear fusion). They have been essential in the field of cryptanalysis.

Supercomputers were introduced in the 1960s, and for several decades the fastest were made by Seymour Cray at Control Data Corporation (CDC), Cray Research and subsequent companies bearing his name or monogram. The first such machines were highly tuned conventional designs that ran more quickly than their more general-purpose contemporaries. Through the decade, increasing amounts of parallelism were added, with one to four processors being typical. In the 1970s, vector processors operating on large arrays of data came to dominate. A notable example is the highly successful Cray-1 of 1976. Vector computers remained the dominant design into the 1990s. From then until today, massively parallel supercomputers with tens of thousands of off-the-shelf processors have been the norm.

The US has long been the leader in the supercomputer field, first through Cray's almost uninterrupted dominance, and later through a variety of technology companies. Japan made major strides in the field in the 1980s and 1990s, and China has become increasingly active. As of May 2022, the fastest supercomputer on the TOP500 supercomputer list is Frontier, in the US, with a LINPACK benchmark score of 1.102 ExaFlop/s, followed by Fugaku. The US has five of the top 10; China has two; Japan, Finland, and France have one each. In June 2018, all combined supercomputers on the TOP500 list broke the 1 exaFLOPS mark.

History

In 1960, UNIVAC built the Livermore Atomic Research Computer (LARC), today considered among the first supercomputers, for the US Navy Research and Development Center. It still used high-speed drum memory, rather than the newly emerging disk drive technology. Also among the first supercomputers was the IBM 7030 Stretch. The IBM 7030 was built by IBM for the Los Alamos National Laboratory, which in 1955 had requested a computer 100 times faster than any existing computer. The IBM 7030 used transistors, magnetic core memory, pipelined instructions, prefetched data through a memory controller and included pioneering random access disk drives. The IBM 7030 was completed in 1961 and, despite not meeting the challenge of a hundredfold increase in performance, it was purchased by the Los Alamos National Laboratory. Customers in England and France also bought the computer, and it became the basis for the IBM 7950 Harvest, a supercomputer built for cryptanalysis.

The third pioneering supercomputer project in the early 1960s was the Atlas at the University of Manchester, built by a team led by Tom Kilburn. He designed the Atlas to have memory space for up to a million words of 48 bits, but because magnetic storage with such a capacity was unaffordable, the actual core memory of the Atlas was only 16,000 words, with a drum providing memory for a further 96,000 words. The Atlas operating system swapped data in the form of pages between the magnetic core and the drum. The Atlas operating system also introduced time-sharing to supercomputing, so that more than one program could be executed on the supercomputer at any one time. Atlas was a joint venture between Ferranti and the University of Manchester and was designed to operate at processing speeds approaching one microsecond per instruction, about one million instructions per second.
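The page swapping between core and drum described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the Atlas algorithm: the 512-word page size, the `PagedStore` class and the least-recently-used eviction rule are all chosen here for illustration, and the real machine used its own replacement strategy.

```python
from collections import OrderedDict

PAGE_WORDS = 512                      # assumed page size, for illustration only
CORE_PAGES = 16_000 // PAGE_WORDS     # whole pages that fit in a 16,000-word core


class PagedStore:
    """Toy one-level store: a small fast 'core' backed by a larger 'drum'."""

    def __init__(self, core_pages=CORE_PAGES):
        self.core_pages = core_pages
        self.core = OrderedDict()     # page number -> words, oldest use first
        self.drum = {}                # page number -> words
        self.faults = 0               # how many times a page had to be fetched

    def _load(self, page):
        """Return the page's words, fetching it into core if necessary."""
        if page in self.core:
            self.core.move_to_end(page)          # mark as most recently used
            return self.core[page]
        self.faults += 1
        if len(self.core) >= self.core_pages:
            victim, words = self.core.popitem(last=False)
            self.drum[victim] = words            # write evicted page back to drum
        words = self.drum.pop(page, [0] * PAGE_WORDS)
        self.core[page] = words
        return words

    def read(self, address):
        page, offset = divmod(address, PAGE_WORDS)
        return self._load(page)[offset]

    def write(self, address, value):
        page, offset = divmod(address, PAGE_WORDS)
        self._load(page)[offset] = value
```

A program using `read` and `write` sees one flat address space; whether a word currently lives in core or on the drum only affects how long the access takes, which is the point of the paging scheme.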

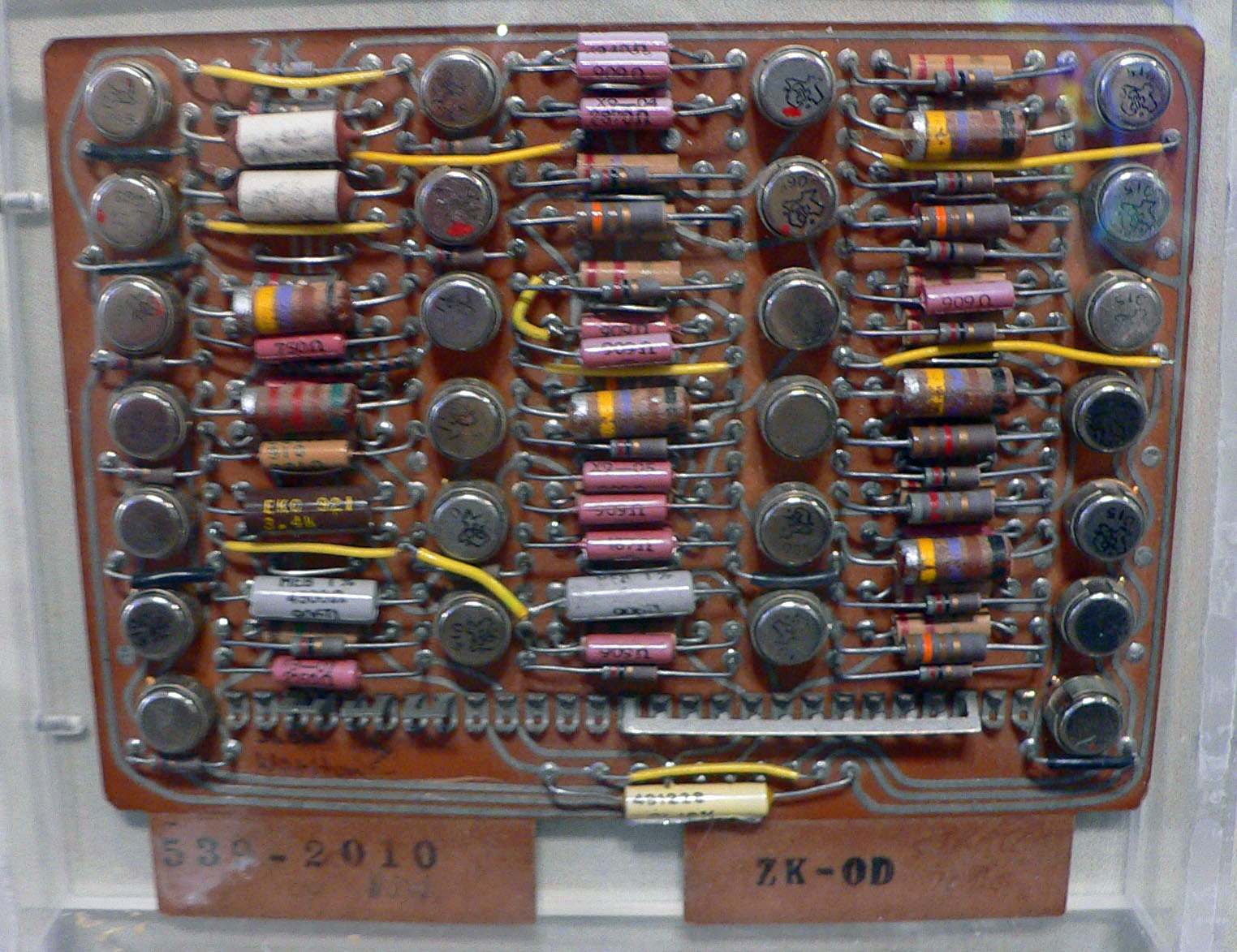

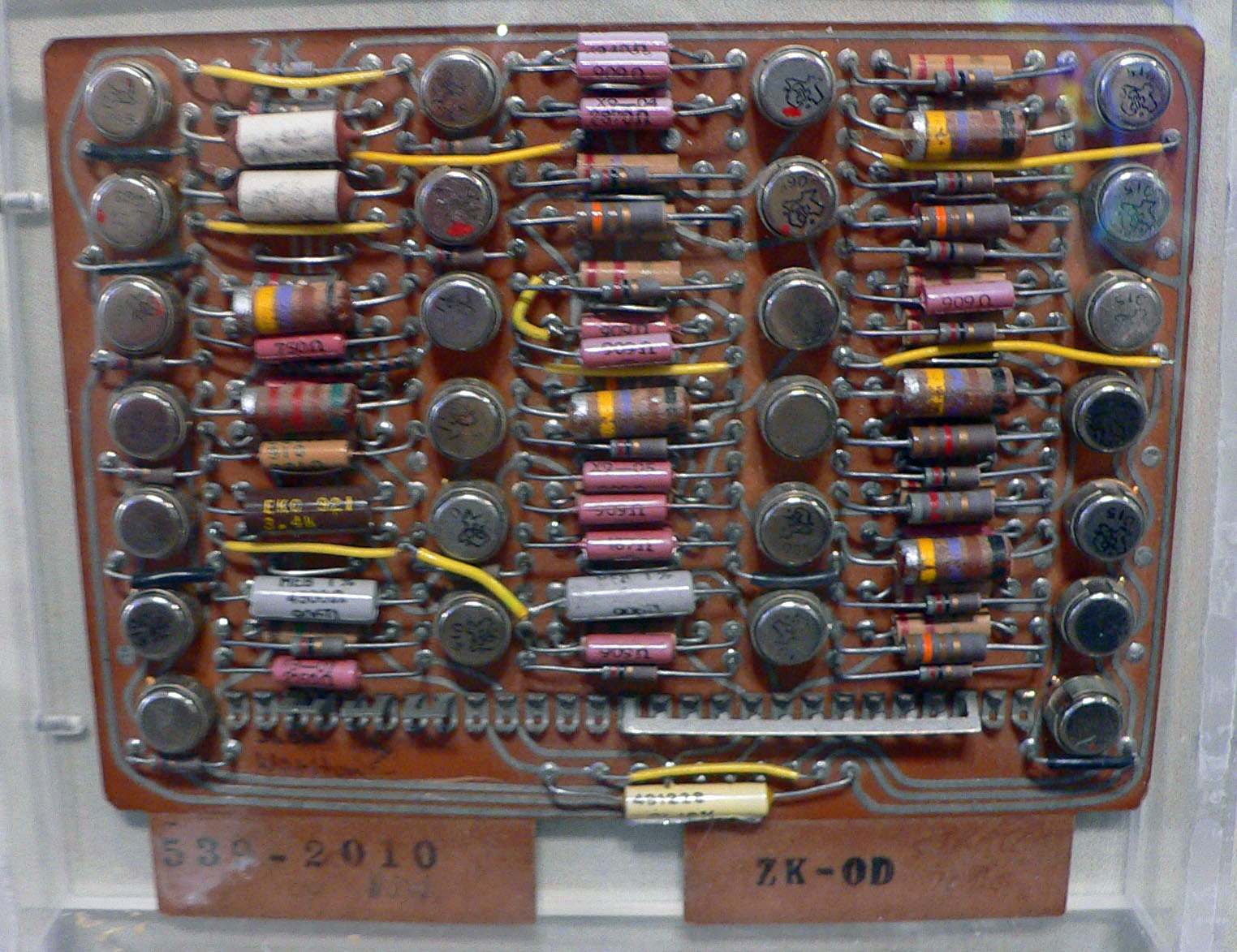

The CDC 6600, designed by Seymour Cray, was finished in 1964 and marked the transition from germanium to silicon transistors. Silicon transistors could run more quickly and the overheating problem was solved by introducing refrigeration to the supercomputer design. Thus, the CDC 6600 became the fastest computer in the world. Given that the 6600 outperformed all the other contemporary computers by about 10 times, it was dubbed a "supercomputer" and defined the supercomputing market, when one hundred computers were sold at $8 million each.

Cray left CDC in 1972 to form his own company, Cray Research. Four years later, in 1976, Cray delivered the 80 MHz Cray-1, which became one of the most successful supercomputers in history. (''Readings in computer architecture'' by Mark Donald Hill, Norman Paul Jouppi, Gurindar Sohi, 1999, pages 41–48; ''Milestones in computer science and information technology'' by Edwin D. Reilly, 2003, page 65) The Cray-2 was released in 1985. It had eight central processing units (CPUs) and liquid cooling, with the electronics coolant liquid Fluorinert pumped through the supercomputer architecture. It reached 1.9 gigaFLOPS, making it the first supercomputer to break the gigaflop barrier.

Massively parallel designs

The only computer to seriously challenge the Cray-1's performance in the 1970s was the ILLIAC IV. This machine was the first realized example of a true massively parallel computer, in which many processors worked together to solve different parts of a single larger problem. In contrast with the vector systems, which were designed to run a single stream of data as quickly as possible, in this concept the computer instead feeds separate parts of the data to entirely different processors and then recombines the results. The ILLIAC's design was finalized in 1966 with 256 processors and offered speeds of up to 1 GFLOPS, compared to the 1970s Cray-1's peak of 250 MFLOPS. However, development problems led to only 64 processors being built, and the system could never operate more quickly than about 200 MFLOPS while being much larger and more complex than the Cray. Another problem was that writing software for the system was difficult, and getting peak performance from it was a matter of serious effort.
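The idea of feeding separate parts of the data to different processors and then recombining the results can be sketched in a few lines. This is only a structural illustration under stated assumptions: the function names and the sum-of-squares workload are hypothetical, and Python threads stand in for independent processors without providing real speed-up for this kind of arithmetic.

```python
from concurrent.futures import ThreadPoolExecutor


def split(data, parts):
    """Divide data into `parts` nearly equal contiguous chunks."""
    size, extra = divmod(len(data), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """Work done independently by each 'processor' on its own chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(data, workers=4):
    """Scatter chunks to workers, compute partial results, then recombine."""
    chunks = split(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)
```

The difficulty noted above shows up even in this toy: the programmer has to decide how to partition the data and how to merge the partial results, and a poor partition leaves some workers idle.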

But the partial success of the ILLIAC IV was widely seen as pointing the way to the future of supercomputing. Cray argued against this, famously quipping that "If you were plowing a field, which would you rather use? Two strong oxen or 1024 chickens?" But by the early 1980s, several teams were working on parallel designs with thousands of processors, notably the Connection Machine (CM) that developed from research at MIT. The CM-1 used as many as 65,536 simplified custom microprocessors connected together in a network to share data. Several updated versions followed; the CM-5 supercomputer is a massively parallel processing computer capable of many billions of arithmetic operations per second.

In 1982, Osaka University's LINKS-1 Computer Graphics System used a massively parallel processing architecture, with 514 microprocessors, including 257 Zilog Z8001 control processors and 257 iAPX 86/20 floating-point processors. It was mainly used for rendering realistic 3D computer graphics. Fujitsu's VPP500 from 1992 is unusual since, to achieve higher speeds, its processors used GaAs, a material normally reserved for microwave applications due to its toxicity. Fujitsu's Numerical Wind Tunnel supercomputer used 166 vector processors to gain the top spot in 1994 with a peak speed of 1.7 gigaFLOPS (GFLOPS) per processor. The Hitachi SR2201 obtained a peak performance of 600 GFLOPS in 1996 by using 2048 processors connected via a fast three-dimensional crossbar network. The Intel Paragon could have 1000 to 4000 Intel i860 processors in various configurations and was ranked the fastest in the world in 1993. The Paragon was a MIMD machine which connected processors via a high-speed two-dimensional mesh, allowing processes to execute on separate nodes, communicating via the Message Passing Interface.
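The per-processor and whole-machine figures quoted above are related by simple arithmetic. The sketch below assumes that peak performance scales linearly with processor count, which is an idealized upper bound rather than a measured result; the function names are hypothetical.

```python
def aggregate_peak_gflops(processors, gflops_per_processor):
    """Ideal whole-machine peak, assuming perfect linear scaling."""
    return processors * gflops_per_processor


def per_processor_gflops(total_gflops, processors):
    """Average peak contribution of one processor."""
    return total_gflops / processors


# Numerical Wind Tunnel: 166 processors at 1.7 GFLOPS each.
nwt_peak = aggregate_peak_gflops(166, 1.7)

# Hitachi SR2201: 600 GFLOPS spread over 2048 processors.
sr2201_each = per_processor_gflops(600, 2048)

print(f"{nwt_peak:.1f} GFLOPS, {sr2201_each:.3f} GFLOPS per processor")
```

Under that assumption the Numerical Wind Tunnel's implied peak is roughly 282 GFLOPS from a few very fast vector processors, while the SR2201 reaches 600 GFLOPS with many processors of about 0.29 GFLOPS each.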

Software development remained a problem, but the CM series sparked off considerable research into this issue. Similar designs using custom hardware were made by many companies, including the Evans & Sutherland ES-1, MasPar, nCUBE, Intel iPSC and the Goodyear MPP. But by the mid-1990s, general-purpose CPU performance had improved so much that a supercomputer could be built using them as the individual processing units, instead of using custom chips. By the turn of the 21st century, designs featuring tens of thousands of commodity CPUs were the norm, with later machines adding graphics units to the mix.

Systems with a massive number of processors generally take one of two paths. In the grid computing approach, the processing power of many computers, organized as distributed, diverse administrative domains, is opportunistically used whenever a computer is available. In another approach, many processors are used in proximity to each other, e.g. in a computer cluster. In such a centralized massively parallel system the speed and flexibility of the interconnect becomes very important, and modern supercomputers have used various approaches ranging from enhanced Infiniband systems to three-dimensional torus interconnects. (Knight, Will: "IBM creates world's most powerful computer", ''NewScientist.com news service'', June 2007) The use of multi-core processors combined with centralization is an emerging direction, e.g. as in the Cyclops64 system. (''Analysis and performance results of computing betweenness centrality on IBM Cyclops64'' by Guangming Tan, Vugranam C. Sreedhar and Guang R. Gao, The Journal of Supercomputing, Volume 56, Number 1, 1–24, September 2011)
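To make the three-dimensional torus idea concrete, the following sketch lists the six direct neighbors of a node when each axis wraps around at its ends. The function name and the coordinate scheme are hypothetical and are not taken from any particular machine's interconnect.

```python
def torus_neighbors(node, dims):
    """Return the six wrap-around neighbors of `node` in a 3D torus.

    node: (x, y, z) coordinates of the node.
    dims: (X, Y, Z) number of nodes along each axis.
    """
    neighbors = []
    for axis in range(3):
        for step in (-1, 1):
            coord = list(node)
            # Modulo arithmetic links the last node on an axis back to the first.
            coord[axis] = (coord[axis] + step) % dims[axis]
            neighbors.append(tuple(coord))
    return neighbors
```

Because every node has the same six links and no node sits on an edge, no central switch is needed, and the longest path along any axis is half that axis's length.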

As the price, performance and energy efficiency of general-purpose graphics processing units (GPGPUs) have improved, a number of petaFLOPS supercomputers such as Tianhe-I and Nebulae have started to rely on them. However, other systems such as the K computer continue to use conventional processors such as SPARC-based designs, and the overall applicability of GPGPUs in general-purpose high-performance computing applications has been the subject of debate, in that while a GPGPU may be tuned to score well on specific benchmarks, its overall applicability to everyday algorithms may be limited unless significant effort is spent to tune the application to it. However, GPUs are gaining ground, and in 2012 the Jaguar supercomputer was transformed into Titan by retrofitting CPUs with GPUs.

High-performance computers have an expected life cycle of about three years before requiring an upgrade. The Gyoukou supercomputer is unique in that it uses both a massively parallel design and liquid immersion cooling.

Special purpose supercomputers

A number of special-purpose systems have been designed, dedicated to a single problem. This allows the use of specially programmed FPGA chips or even custom ASICs, allowing better price/performance ratios by sacrificing generality. Examples of special-purpose supercomputers include Belle, Deep Blue, and Hydra for playing chess, Gravity Pipe for astrophysics, MDGRAPE-3 for protein structure prediction and molecular dynamics, and Deep Crack for breaking the DES cipher.

Energy usage and heat management

Throughout the decades, the management of heat density has remained a key issue for most centralized supercomputers. (''The Supermen: Story of Seymour Cray and the Technical Wizards Behind the Supercomputer'' by Charles J. Murray, 1997, pages 133–135; ''Parallel Computational Fluid Dynamics: Recent Advances and Future Directions'' edited by Rupak Biswas, 2010, page 401) The large amount of heat generated by a system may also have other effects, e.g. reducing the lifetime of other system components. (''Supercomputing Research Advances'' by Yongge Huáng, 2008, pages 313–314) There have been diverse approaches to heat management, from pumping Fluorinert through the system, to a hybrid liquid-air cooling system or air cooling with normal air conditioning temperatures. (''Parallel computing for real-time signal processing and control'' by M. O. Tokhi, Mohammad Alamgir Hossain, 2003, pages 201–202)

A typical supercomputer consumes large amounts of electrical power, almost all of which is converted into heat, requiring cooling. For example, Tianhe-1A consumes 4.04 megawatts (MW) of electricity. The cost to power and cool the system can be significant, e.g. 4 MW at $0.10/kWh is $400 an hour or about $3.5 million per year.
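The cost figure above is simple arithmetic, and a short sketch makes the steps explicit. The function name `power_cost` and the assumption of a constant load running every hour of a 365-day year are illustrative choices, not part of the original text.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored (assumption)

def power_cost(power_mw: float, price_per_kwh: float) -> tuple[float, float]:
    """Return (dollars per hour, dollars per year) for a constant electrical load."""
    power_kw = power_mw * 1000.0          # 1 MW = 1,000 kW
    hourly = power_kw * price_per_kwh     # kW * $/kWh = $/h
    yearly = hourly * HOURS_PER_YEAR
    return hourly, yearly

if __name__ == "__main__":
    hourly, yearly = power_cost(4.0, 0.10)
    print(f"${hourly:,.0f} per hour, ${yearly / 1e6:.2f} million per year")
```

With 4 MW at $0.10/kWh this reproduces the $400 per hour and roughly $3.5 million per year quoted in the text. A real facility's bill would also depend on cooling overhead and tariff structure, which this sketch ignores.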

Heat management is a major issue in complex electronic devices and affects powerful computer systems in various ways. The thermal design power and CPU power dissipation issues in supercomputing surpass those of traditional computer cooling technologies. The supercomputing awards for green computing reflect this issue.

The packing of thousands of processors together inevitably generates significant heat density that needs to be dealt with. The Cray-2 was liquid cooled, and used a Fluorinert "cooling waterfall" which was forced through the modules under pressure. However, the submerged liquid cooling approach was not practical for the multi-cabinet systems based on off-the-shelf processors, and in System X a special cooling system that combined air conditioning with liquid cooling was developed in conjunction with the Liebert company. (''Computational science – ICCS 2005: 5th international conference'' edited by Vaidy S. Sunderam, 2005, pages 60–67)

In the Blue Gene system, IBM deliberately used low power processors to deal with heat density. The IBM Power 775, released in 2011, has closely packed elements that require water cooling. The IBM Aquasar system uses hot water cooling to achieve energy efficiency, the water being used to heat buildings as well.

The energy efficiency of computer systems is generally measured in terms of "FLOPS per watt". In 2008, Roadrunner by IBM operated at 3.76 MFLOPS/W. In November 2010, the Blue Gene/Q reached 1,684 MFLOPS/W, and in June 2011 the top two spots on the Green 500 list were occupied by Blue Gene machines in New York (one achieving 2,097 MFLOPS/W), with the DEGIMA cluster in Nagasaki placing third with 1,375 MFLOPS/W.

Because copper wires can transfer energy into a supercomputer with much higher power densities than forced air or circulating refrigerants can remove waste heat, the ability of the cooling systems to remove waste heat is a limiting factor. Many existing supercomputers have more infrastructure capacity than the actual peak demand of the machine: designers generally conservatively design the power and cooling infrastructure to handle more than the theoretical peak electrical power consumed by the supercomputer. Designs for future supercomputers are power-limited: the thermal design power of the supercomputer as a whole, the amount that the power and cooling infrastructure can handle, is somewhat more than the expected normal power consumption, but less than the theoretical peak power consumption of the electronic hardware.

Software and system management

Operating systems

Since the end of the 20th century, supercomputer operating systems have undergone major transformations, based on the changes in supercomputer architecture. (''Encyclopedia of Parallel Computing'' by David Padua, 2011, pages 426–429) While early operating systems were custom tailored to each supercomputer to gain speed, the trend has been to move away from in-house operating systems to the adaptation of generic software such as Linux. (''Knowing machines: essays on technical change'' by Donald MacKenzie, 1998, pages 149–151)

Since modern massively parallel supercomputers typically separate computations from other services by using multiple types of nodes, they usually run different operating systems on different nodes, e.g. using a small and efficient lightweight kernel such as CNK or CNL on compute nodes, but a larger system such as a Linux-derivative on server and I/O nodes. (''Euro-Par 2004 Parallel Processing: 10th International Euro-Par Conference'', 2004, by Marco Danelutto, Marco Vanneschi and Domenico Laforenza, page 835; ''Euro-Par 2006 Parallel Processing: 12th International Euro-Par Conference'', 2006, by Wolfgang E. Nagel, Wolfgang V. Walter and Wolfgang Lehner; ''An Evaluation of the Oak Ridge National Laboratory Cray XT3'' by Sadaf R. Alam et al., ''International Journal of High Performance Computing Applications'', February 2008, vol. 22, no. 1, pages 52–80)

While in a traditional multi-user computer system job scheduling is, in effect, a tasking problem for processing and peripheral resources, in a massively parallel system the job management system needs to manage the allocation of both computational and communication resources, as well as gracefully deal with inevitable hardware failures when tens of thousands of processors are present. (''Open Job Management Architecture for the Blue Gene/L Supercomputer'' by Yariv Aridor et al. in ''Job scheduling strategies for parallel processing'' by Dror G. Feitelson, 2005, pages 95–101)
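To make the job-management idea concrete, the following is a deliberately small sketch of a manager that hands out whole nodes in first-come, first-served order and requeues a job when one of its nodes fails. The class `ToyJobManager` and all of its methods are hypothetical. It models only computational resources, leaving out the communication resources a real system also allocates, and it does not represent how any production scheduler works.

```python
from collections import deque

class ToyJobManager:
    """Hypothetical sketch: FIFO allocation of whole nodes, requeue on node failure."""

    def __init__(self, node_ids):
        self.free = set(node_ids)   # healthy, idle nodes
        self.running = {}           # job name -> set of allocated nodes
        self.queue = deque()        # pending (job name, nodes needed), in order

    def submit(self, name, nodes_needed):
        self.queue.append((name, nodes_needed))
        self._schedule()

    def finish(self, name):
        # Return the job's nodes to the free pool and try to start waiting jobs.
        self.free |= self.running.pop(name)
        self._schedule()

    def node_failed(self, node_id):
        # Remove the node permanently; any job using it goes back to the
        # front of the queue so that it restarts before newer jobs.
        self.free.discard(node_id)
        for name, alloc in list(self.running.items()):
            if node_id in alloc:
                del self.running[name]
                self.free |= alloc - {node_id}
                self.queue.appendleft((name, len(alloc)))
        self._schedule()

    def _schedule(self):
        # Strict FIFO: start jobs from the head of the queue while they fit.
        while self.queue and self.queue[0][1] <= len(self.free):
            name, needed = self.queue.popleft()
            alloc = set(sorted(self.free)[:needed])
            self.free -= alloc
            self.running[name] = alloc
```

For example, with eight nodes and two four-node jobs running, a failure of one node sends the affected job back to the head of the queue, where it waits until enough healthy nodes are free again.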

Although most modern supercomputers use Linux-based operating systems, each manufacturer has its own specific Linux-derivative, and no industry standard exists, partly due to the fact that the differences in hardware architectures require changes to optimize the operating system to each hardware design.

Software tools and message passing

The parallel architectures of supercomputers often dictate the use of special programming techniques to exploit their speed. Software tools for distributed processing include standard APIs such as MPI and PVM, VTL, and open source software such as Beowulf.

In the most common scenario, environments such as PVM and MPI for loosely connected clusters and OpenMP for tightly coordinated shared memory machines are used. Significant effort is required to optimize an algorithm for the interconnect characteristics of the machine it will be run on; the aim is to prevent any of the CPUs from wasting time waiting on data from other nodes. GPGPUs have hundreds of processor cores and are programmed using programming models such as CUDA or OpenCL.
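The aim of keeping every processor busy can be illustrated with the block decomposition that message-passing programs commonly use to divide work among ranks. The sketch below is plain Python and makes no real MPI, PVM, or OpenMP calls; the names `block_range` and `simulated_sum_of_squares` are hypothetical, and the "ranks" are simulated in a loop rather than run in parallel.

```python
def block_range(n_items: int, n_ranks: int, rank: int) -> range:
    """Indices owned by `rank` when n_items are split as evenly as possible."""
    base, extra = divmod(n_items, n_ranks)
    # The first `extra` ranks take one additional item, so block sizes
    # differ by at most one and no rank idles longer than necessary.
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return range(start, stop)

def simulated_sum_of_squares(n_items: int, n_ranks: int) -> int:
    # Each simulated rank works only on its own block (no shared state) ...
    partials = [
        sum(i * i for i in block_range(n_items, n_ranks, rank))
        for rank in range(n_ranks)
    ]
    # ... and the partial results are then combined, as a reduce step would.
    return sum(partials)
```

On a real cluster each block would be computed by a separate process and the final sum would be a collective reduction. The balanced split matters because the slowest rank determines when the reduction can complete.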

Moreover, it is quite difficult to debug and test parallel programs. Special techniques need to be used for testing and debugging such applications.

Distributed supercomputing

Opportunistic approaches

Opportunistic supercomputing is a form of networked grid computing whereby a "super virtual computer" of many loosely coupled volunteer computing machines performs very large computing tasks. Grid computing has been applied to a number of large-scale embarrassingly parallel problems that require supercomputing performance scales. However, basic grid and cloud computing approaches that rely on volunteer computing cannot handle traditional supercomputing tasks such as fluid dynamic simulations.

The fastest grid computing system is the volunteer computing project Folding@home (F@h). F@h reported 2.5 exaFLOPS of x86 processing power. Of this, over 100 PFLOPS are contributed by clients running on various GPUs, and the rest from various CPU systems.

The Berkeley Open Infrastructure for Network Computing (BOINC) platform hosts a number of volunteer computing projects. BOINC recorded a processing power of over 166 petaFLOPS through over 762 thousand active computers (hosts) on the network.

The Great Internet Mersenne Prime Search's (GIMPS) distributed Mersenne prime search achieved about 0.313 PFLOPS through over 1.3 million computers. GIMPS's grid computing approach, one of the earliest volunteer computing projects, has been supported since 1997.

Quasi-opportunistic approaches

Quasi-opportunistic supercomputing is a form of distributed computing whereby the "super virtual computer" of many networked, geographically dispersed computers performs computing tasks that demand huge processing power. Quasi-opportunistic supercomputing aims to provide a higher quality of service than opportunistic grid computing by achieving more control over the assignment of tasks to distributed resources and the use of intelligence about the availability and reliability of individual systems within the supercomputing network. However, quasi-opportunistic distributed execution of demanding parallel computing software in grids should be achieved through implementation of grid-wise allocation agreements, co-allocation subsystems, communication topology-aware allocation mechanisms, fault tolerant message passing libraries and data pre-conditioning.

High-performance computing clouds

Cloud computing, with its recent rapid expansion and development, has attracted the attention of high-performance computing (HPC) users and developers. Cloud computing attempts to provide HPC-as-a-service in the same way as other services available in the cloud, such as software as a service, platform as a service, and infrastructure as a service. HPC users may benefit from the cloud in several ways, such as scalability and resources that are on-demand, fast, and inexpensive. On the other hand, moving HPC applications to the cloud has its own set of challenges. Good examples of such challenges are virtualization overhead in the cloud, multi-tenancy of resources, and network latency issues. Much research is currently being done to overcome these challenges and make HPC in the cloud a more realistic possibility.

In 2016, Penguin Computing, Parallel Works, R-HPC, Amazon Web Services, Univa, Silicon Graphics International, Rescale, Sabalcore, and Gomput started to offer HPC cloud computing. The Penguin On Demand (POD) cloud is a bare-metal compute model to execute code, but each user is given a virtualized login node. POD computing nodes are connected via non-virtualized 10 Gbit/s Ethernet or QDR InfiniBand networks. User connectivity to the POD data center ranges from 50 Mbit/s to 1 Gbit/s. Citing Amazon's EC2 Elastic Compute Cloud, Penguin Computing argues that virtualization of compute nodes is not suitable for HPC. Penguin Computing has also criticized HPC clouds for allocating computing nodes to customers that may be far apart, causing latency that impairs performance for some HPC applications.

Performance measurement

Capability versus capacity

Supercomputers generally aim for the maximum in capability computing rather than capacity computing. Capability computing is typically thought of as using the maximum computing power to solve a single large problem in the shortest amount of time. Often a capability system is able to solve a problem of a size or complexity that no other computer can, e.g. a very complex weather simulation application. Capacity computing, in contrast, is typically thought of as using efficient cost-effective computing power to solve a few somewhat large problems or many small problems. (''The Potential Impact of High-End Capability Computing on Four Illustrative Fields of Science and Engineering'' by Committee on the Potential Impact of High-End Computing on Illustrative Fields of Science and Engineering and National Research Council, 28 October 2008, page 9) Architectures that lend themselves to supporting many users for routine everyday tasks may have a lot of capacity but are not typically considered supercomputers, given that they do not solve a single very complex problem.

Performance metrics

In general, the speed of supercomputers is measured in FLOPS (floating-point operations per second), commonly used with an SI prefix such as tera-, combined into the shorthand TFLOPS (10^12 FLOPS, pronounced ''teraflops''), or peta-, combined into the shorthand PFLOPS (10^15 FLOPS, pronounced ''petaflops''). Petascale supercomputers can process one quadrillion (10^15, or 1000 trillion) FLOPS. Exascale is computing performance in the exaFLOPS (EFLOPS) range. An EFLOPS is one quintillion (10^18) FLOPS (one million TFLOPS).
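The relationships between these prefixes are easy to check mechanically. The helper below is an illustrative sketch; the name `format_flops` and the choice to stop at the mega- prefix are assumptions made for the example.

```python
# SI prefixes used for FLOPS figures, largest first.
PREFIXES = [
    ("exa", 1e18),
    ("peta", 1e15),
    ("tera", 1e12),
    ("giga", 1e9),
    ("mega", 1e6),
]

def format_flops(flops: float) -> str:
    """Express a raw FLOPS value using the largest prefix that fits."""
    for name, scale in PREFIXES:
        if flops >= scale:
            return f"{flops / scale:g} {name}FLOPS"
    return f"{flops:g} FLOPS"

if __name__ == "__main__":
    print(format_flops(1e17))         # a hundred quadrillion FLOPS
    print(format_flops(1e6 * 1e12))   # one million TFLOPS
```

The two calls print `100 petaFLOPS` and `1 exaFLOPS`, matching the statement that one EFLOPS equals one million TFLOPS.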

No single number can reflect the overall performance of a computer system, yet the goal of the LINPACK benchmark is to approximate how fast the computer solves numerical problems, and it is widely used in the industry. The FLOPS measurement is either quoted based on the theoretical floating point performance of a processor (derived from the manufacturer's processor specifications and shown as "Rpeak" in the TOP500 lists), which is generally unachievable when running real workloads, or the achievable throughput, derived from the LINPACK benchmarks and shown as "Rmax" in the TOP500 list. The LINPACK benchmark typically performs LU decomposition of a large matrix. The LINPACK performance gives some indication of performance for some real-world problems, but does not necessarily match the processing requirements of many other supercomputer workloads, which for example may require more memory bandwidth, better integer computing performance, or a high performance I/O system to achieve high levels of performance.
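As a rough illustration of how a measured rate of this kind is obtained, the sketch below solves a random dense system by Gaussian elimination with partial pivoting (the elimination that underlies an LU factorization), times it, and divides a nominal operation count by the elapsed time. It is not the actual benchmark code. The function names are hypothetical, the count 2/3·n³ + 2·n² is the one conventionally used for this kind of solve, and pure Python will report a rate far below what the hardware can deliver.

```python
import random
import time

def lu_solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    a = [row[:] for row in a]   # work on copies so the inputs are untouched
    b = b[:]
    for k in range(n):
        # Partial pivoting: move the largest remaining entry of column k
        # onto the diagonal to keep the elimination numerically stable.
        p = max(range(k, n), key=lambda i: abs(a[i][k]))
        if a[p][k] == 0.0:
            raise ValueError("matrix is singular")
        a[k], a[p] = a[p], a[k]
        b[k], b[p] = b[p], b[k]
        for i in range(k + 1, n):
            m = a[i][k] / a[k][k]
            for j in range(k + 1, n):
                a[i][j] -= m * a[k][j]
            b[i] -= m * b[k]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(a[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (b[i] - s) / a[i][i]
    return x

def linpack_style_flops(n, seed=0):
    """Return (measured FLOPS, max residual) for one random n-by-n solve."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    start = time.perf_counter()
    x = lu_solve(a, b)
    elapsed = time.perf_counter() - start
    ops = (2.0 / 3.0) * n**3 + 2.0 * n**2   # nominal operation count
    residual = max(
        abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
    )
    return ops / elapsed, residual
```

Dividing a measured rate of this kind by the theoretical peak gives the fraction of "Rpeak" actually achieved, which is the sense in which "Rmax" is compared with "Rpeak".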

The TOP500 list

Since 1993, the fastest supercomputers have been ranked on the TOP500 list according to their LINPACK benchmark results. The list does not claim to be unbiased or definitive, but it is a widely cited current definition of the "fastest" supercomputer available at any given time.

This is a recent list of the computers which appeared at the top of the TOP500 list, and the "Peak speed" is given as the "Rmax" rating. In 2018, Lenovo became the world's largest provider for the TOP500 supercomputers with 117 units produced.

Applications

The stages of supercomputer application may be summarized in the following table:

The IBM Blue Gene/P computer has been used to simulate a number of artificial neurons equivalent to approximately one percent of a human cerebral cortex, containing 1.6 billion neurons with approximately 9 trillion connections. The same research group also succeeded in using a supercomputer to simulate a number of artificial neurons equivalent to the entirety of a rat's brain.

Modern-day weather forecasting also relies on supercomputers. The National Oceanic and Atmospheric Administration uses supercomputers to crunch hundreds of millions of observations to help make weather forecasts more accurate.

In 2011, the challenges and difficulties in pushing the envelope in supercomputing were underscored by IBM's abandonment of the Blue Waters petascale project.

The Advanced Simulation and Computing Program currently uses supercomputers to maintain and simulate the United States nuclear stockpile.

In early 2020, COVID-19 was front and center in the world. Supercomputers ran different simulations to find compounds that could potentially stop the spread. These computers run for tens of hours, using multiple CPUs running in parallel to model different processes.

Development and trends

In the 2010s, China, the United States, the European Union, and others competed to be the first to create a 1 exaFLOPS (10^18, or one quintillion FLOPS) supercomputer. Erik P. DeBenedictis of Sandia National Laboratories has theorized that a zettaFLOPS (10^21, or one sextillion FLOPS) computer is required to accomplish full weather modeling, which could cover a two-week time span accurately. Such systems might be built around 2030.

Many Monte Carlo simulations use the same algorithm to process a randomly generated data set; in particular, integro-differential equations describing physical transport processes, the random paths, collisions, and energy and momentum depositions of neutrons, photons, ions, electrons, etc. The next step for microprocessors may be into the third dimension; and specializing to Monte Carlo, the many layers could be identical, simplifying the design and manufacture process.
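The pattern of applying one algorithm to many randomly generated samples can be seen in a toy transport problem. The sketch below estimates the fraction of particles that cross a slab without colliding. It is an illustrative example rather than a production transport code, and it assumes a purely absorbing slab, normal incidence, and a single cross-section `sigma`. The function name is hypothetical.

```python
import math
import random

def slab_transmission(thickness, sigma, n_particles, seed=0):
    """Estimate the fraction of particles crossing a purely absorbing slab."""
    rng = random.Random(seed)
    transmitted = 0
    for _ in range(n_particles):
        # The same step is applied to every particle: sample the distance to
        # its first collision, exponentially distributed with mean 1/sigma.
        free_path = rng.expovariate(sigma)
        if free_path > thickness:
            transmitted += 1
    return transmitted / n_particles

if __name__ == "__main__":
    estimate = slab_transmission(thickness=1.0, sigma=1.0, n_particles=100_000)
    exact = math.exp(-1.0)   # analytic result for this simplified case
    print(f"estimate {estimate:.4f}, analytic {exact:.4f}")
```

Because every particle history is independent and follows identical code, the loop can be divided among any number of processors. This is what makes such workloads a natural fit for the replicated hardware described above.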

The cost of operating high-performance supercomputers has risen, mainly due to increasing power consumption. In the mid-1990s a top 10 supercomputer required in the range of 100 kilowatts; in 2010 the top 10 supercomputers required between 1 and 2 megawatts. A 2010 study commissioned by DARPA identified power consumption as the most pervasive challenge in achieving exascale computing. At the time, running a one-megawatt load for a year cost about 1 million dollars. Supercomputing facilities were constructed to efficiently remove the increasing amount of heat produced by modern multi-core central processing units. Based on the energy consumption of the Green 500 list of supercomputers between 2007 and 2011, a supercomputer with 1 exaFLOPS in 2011 would have required nearly 500 megawatts. Operating systems were developed for existing hardware to conserve energy whenever possible. CPU cores not in use during the execution of a parallelized application were put into low-power states, producing energy savings for some supercomputing applications.
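The figures in this paragraph can be combined in a short back-of-the-envelope calculation. The Python sketch below is illustrative only: the function names are hypothetical, and applying the roughly 1 million dollars per megawatt-year rate to the hypothetical 500-megawatt exascale machine is an assumption made here, not a figure from the studies cited.

```python
EXAFLOPS = 1e18          # 1 exaFLOPS in FLOPS
WATTS_PER_MEGAWATT = 1e6


def annual_energy_cost(power_mw, cost_per_mw_year=1_000_000):
    """Yearly energy bill in dollars for a machine drawing power_mw megawatts.

    cost_per_mw_year defaults to the approximate rate quoted in the text.
    """
    return power_mw * cost_per_mw_year


def flops_per_watt(flops, power_mw):
    """Energy efficiency implied by a given performance and power draw."""
    return flops / (power_mw * WATTS_PER_MEGAWATT)


if __name__ == "__main__":
    # Top 10 system in 2010: between 1 and 2 megawatts.
    print(annual_energy_cost(1), annual_energy_cost(2))
    # Hypothetical 2011 exascale system at roughly 500 megawatts.
    print(annual_energy_cost(500))
    print(flops_per_watt(EXAFLOPS, 500))
```

Under these assumptions, the hypothetical exascale system would cost on the order of 500 million dollars per year to power and would deliver about 2 gigaFLOPS per watt, which illustrates why power was singled out as the main obstacle.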

The increasing cost of operating supercomputers has been a driving factor in a trend toward bundling of resources through a distributed supercomputer infrastructure. National supercomputing centers first emerged in the US, followed by Germany and Japan. The European Union launched the Partnership for Advanced Computing in Europe (PRACE) with the aim of creating a persistent pan-European supercomputer infrastructure with services to support scientists across the European Union in porting, scaling and optimizing supercomputing applications. Iceland built the world's first zero-emission supercomputer. Located at the Thor Data Center in Reykjavík, Iceland, this supercomputer relies entirely on renewable sources for its power rather than fossil fuels. The colder climate also reduces the need for active cooling, making it one of the greenest computing facilities in the world.

Funding supercomputer hardware also became increasingly difficult. In the mid-1990s, a top 10 supercomputer cost about 10 million euros, while in 2010 the top 10 supercomputers required an investment of between 40 and 50 million euros. In the 2000s, national governments put in place different strategies to fund supercomputers. In the UK, the national government funded supercomputers entirely, and high-performance computing was put under the control of a national funding agency. Germany developed a mixed funding model, pooling local state funding and federal funding.

In fiction

Many science fiction writers have depicted supercomputers in their works, both before and after the historical construction of such computers. Much of this fiction deals with the relations of humans with the computers they build and with the possibility of conflict eventually developing between them. Examples of supercomputers in fiction include HAL 9000, Multivac, The Machine Stops, GLaDOS, The Evitable Conflict, Vulcan's Hammer, Colossus, WOPR, and Deep Thought.

See also

* ACM/IEEE Supercomputing Conference

* ACM SIGHPC

* High-performance computing

* High-performance technical computing

* Jungle computing

* Nvidia Tesla Personal Supercomputer

* Parallel computing

* Supercomputing in China

* Supercomputing in Europe

* Supercomputing in India

* Supercomputing in Japan

* Testing high-performance computing applications

* Ultra Network Technologies

* Quantum computing

External links

* McDonnell, Marshall T. (2013). "Supercomputer Design: An Initial Effort to Capture the Environmental, Economic, and Societal Impacts". Chemical and Biomolecular Engineering Publications and Other Works.