In machine learning, backpropagation (backprop, BP) is a widely used algorithm for training feedforward artificial neural networks. Generalizations of backpropagation exist for other artificial neural networks (ANNs), and for functions generally. These classes of algorithms are all referred to generically as "backpropagation". In fitting a neural network, backpropagation computes the gradient of the loss function with respect to the weights of the network for a single input–output example, and does so efficiently, unlike a naive direct computation of the gradient with respect to each weight individually. This efficiency makes it feasible to use gradient methods for training multilayer networks, updating weights to minimize loss; gradient descent, or variants such as stochastic gradient descent, are commonly used. The backpropagation algorithm works by computing the gradient of the loss function with respect to each weight by the chain rule, computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this is an example of dynamic programming.

The term ''backpropagation'' strictly refers only to the algorithm for computing the gradient, not how the gradient is used; however, the term is often used loosely to refer to the entire learning algorithm, including how the gradient is used, such as by stochastic gradient descent. Backpropagation generalizes the gradient computation in the delta rule, which is the single-layer version of backpropagation, and is in turn generalized by automatic differentiation, where backpropagation is a special case of reverse accumulation (or "reverse mode"). In the words of one account: "The back-propagation algorithm described here is only one approach to automatic differentiation. It is a special case of a broader class of techniques called ''reverse mode accumulation''." The term ''backpropagation'' and its general use in neural networks were announced in Rumelhart ''et al.'' (1986a), then elaborated and popularized in Rumelhart ''et al.'' (1986b), but the technique was independently rediscovered many times, and had many predecessors dating to the 1960s; see the History section. A modern overview is given in the deep learning textbook by Goodfellow, Bengio and Courville (2016).

Overview

Backpropagation computes the gradient in weight space of a feedforward neural network, with respect to a loss function. Denote:

* $x$: input (vector of features)

* $y$: target output

*:For classification, output will be a vector of class probabilities (e.g., $(0.1, 0.7, 0.2)$), and target output is a specific class, encoded by the one-hot / dummy variable (e.g., $(0, 1, 0)$).

* $C$: loss function or "cost function"

*:For classification, this is usually cross entropy (XC, log loss), while for regression it is usually squared error loss (SEL).

* $L$: the number of layers

* $W^l = (w^l_{jk})$: the weights between layer $l-1$ and $l$, where $w^l_{jk}$ is the weight between the $k$-th node in layer $l-1$ and the $j$-th node in layer $l$

* $f^l$: activation functions at layer $l$

*:For classification the last layer is usually the logistic function for binary classification, and softmax (softargmax) for multi-class classification, while for the hidden layers this was traditionally a sigmoid function (logistic function or others) on each node (coordinate), but today is more varied, with rectifier (ramp, ReLU) being common.

In the derivation of backpropagation, other intermediate quantities are used; they are introduced as needed below. Bias terms are not treated specially, as they correspond to a weight with a fixed input of 1. For the purpose of backpropagation, the specific loss function and activation functions do not matter, as long as they and their derivatives can be evaluated efficiently. Traditional activation functions include but are not limited to sigmoid, tanh, and ReLU. More recently, swish, mish, and other activation functions have been proposed as well.

The overall network is a combination of function composition and matrix multiplication:

: $g(x) := f^L(W^L f^{L-1}(W^{L-1} \cdots f^1(W^1 x) \cdots))$

For a training set there will be a set of input–output pairs, $\{(x_i, y_i)\}$. For each input–output pair $(x_i, y_i)$ in the training set, the loss of the model on that pair is the cost of the difference between the predicted output $g(x_i)$ and the target output $y_i$:

: $C(y_i, g(x_i))$

Note the distinction: during model evaluation, the weights are fixed, while the inputs vary (and the target output may be unknown), and the network ends with the output layer (it does not include the loss function). During model training, the input–output pair is fixed, while the weights vary, and the network ends with the loss function.
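The composition of layers and the per-example loss described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the helper names (`matvec`, `forward`, `squared_error`), the choice of the logistic function for every layer, and the 2-2-1 weight values are all assumptions made for the example.

```python
import math

def matvec(W, v):
    """Multiply a matrix W (given as a list of rows) by a vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(weights, x, activation=logistic):
    """Evaluate the nested composition: apply W^1, then f^1, then W^2, and so on."""
    a = x
    for W in weights:
        a = [activation(z) for z in matvec(W, a)]
    return a

def squared_error(y, output):
    """Loss for one input-output pair (squared error, as used for regression)."""
    return sum((t - o) ** 2 for t, o in zip(y, output))

# Hypothetical 2-2-1 network: weights[0] maps the input to the hidden layer,
# weights[1] maps the hidden layer to the single output.
weights = [
    [[0.5, -0.5], [0.25, 0.75]],
    [[1.0, -1.0]],
]
x, y = [1.0, 1.0], [0.0]
output = forward(weights, x)      # model evaluation: weights fixed, input varies
loss = squared_error(y, output)   # training view: the network "ends" with the loss
```

During evaluation only `forward` is needed; during training the same pair `(x, y)` is held fixed and `loss` is treated as a function of `weights`.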

Backpropagation computes the gradient for a ''fixed'' input–output pair $(x_i, y_i)$, where the weights $w^l_{jk}$ can vary. Each individual component of the gradient, $\partial C / \partial w^l_{jk}$, can be computed by the chain rule; however, doing this separately for each weight is inefficient. Backpropagation efficiently computes the gradient by avoiding duplicate calculations and not computing unnecessary intermediate values, by computing the gradient of each layer – specifically, the gradient of the weighted ''input'' of each layer, denoted by $\delta^l$ – from back to front.

Informally, the key point is that since the only way a weight in $W^l$ affects the loss is through its effect on the ''next'' layer, and it does so ''linearly'', $\delta^l$ are the only data you need to compute the gradients of the weights at layer $l$, and then you can compute the previous layer $\delta^{l-1}$ and repeat recursively. This avoids inefficiency in two ways. Firstly, it avoids duplication because when computing the gradient at layer $l$, you do not need to recompute all the derivatives on later layers $l+1, l+2, \ldots$ each time. Secondly, it avoids unnecessary intermediate calculations because at each stage it directly computes the gradient of the weights with respect to the ultimate output (the loss), rather than unnecessarily computing the derivatives of the values of hidden layers with respect to changes in weights $\partial a^{l'}_{j'} / \partial w^l_{jk}$.

Backpropagation can be expressed for simple feedforward networks in terms of matrix multiplication, or more generally in terms of the adjoint graph.

Matrix multiplication

For the basic case of a feedforward network, where nodes in each layer are connected only to nodes in the immediate next layer (without skipping any layers), and there is a loss function that computes a scalar loss for the final output, backpropagation can be understood simply by matrix multiplication. Essentially, backpropagation evaluates the expression for the derivative of the cost function as a product of derivatives between each layer ''from right to left'' – "backwards" – with the gradient of the weights between each layer being a simple modification of the partial products (the "backwards propagated error").

Given an input–output pair $(x, y)$, the loss is:

: $C(y, f^L(W^L f^{L-1}(W^{L-1} \cdots f^2(W^2 f^1(W^1 x)) \cdots)))$

To compute this, one starts with the input $x$ and works forward; denote the weighted input of each hidden layer as $z^l$ and the output of hidden layer $l$ as the activation $a^l$. For backpropagation, the activation $a^l$ as well as the derivatives $(f^l)'$ (evaluated at $z^l$) must be cached for use during the backwards pass.

The derivative of the loss in terms of the inputs is given by the chain rule; note that each term is a total derivative, evaluated at the value of the network (at each node) on the input $x$:

: $\frac{dC}{da^L} \circ \frac{da^L}{dz^L} \cdot \frac{dz^L}{da^{L-1}} \circ \frac{da^{L-1}}{dz^{L-1}} \cdot \frac{dz^{L-1}}{da^{L-2}} \circ \ldots \circ \frac{da^1}{dz^1} \cdot \frac{\partial z^1}{\partial x}$

where $\circ$ is a Hadamard product, that is an element-wise product.

These terms are: the derivative of the loss function; the derivatives of the activation functions; and the matrices of weights:

: $\frac{dC}{da^L} \circ (f^L)' \cdot W^L \circ (f^{L-1})' \cdot W^{L-1} \circ \cdots \circ (f^1)' \cdot W^1$

The gradient $\nabla$ is the transpose of the derivative of the output in terms of the input, so the matrices are transposed and the order of multiplication is reversed, but the entries are the same:

: $\nabla_x C = (W^1)^T \cdot (f^1)' \circ \ldots \circ (W^{L-1})^T \cdot (f^{L-1})' \circ (W^L)^T \cdot (f^L)' \circ \nabla_{a^L} C$

Backpropagation then consists essentially of evaluating this expression from right to left (equivalently, multiplying the previous expression for the derivative from left to right), computing the gradient at each layer on the way; there is an added step, because the gradient of the weights is not just a subexpression: there is an extra multiplication.

Introducing the auxiliary quantity $\delta^l$ for the partial products (multiplying from right to left), interpreted as the "error at level $l$" and defined as the gradient of the input values at level $l$:

: $\delta^l := (f^l)' \circ (W^{l+1})^T \cdot (f^{l+1})' \circ \cdots \circ (W^{L-1})^T \cdot (f^{L-1})' \circ (W^L)^T \cdot (f^L)' \circ \nabla_{a^L} C$

Note that $\delta^l$ is a vector, of length equal to the number of nodes in level $l$; each component is interpreted as the "cost attributable to (the value of) that node".

The gradient of the weights in layer $l$ is then:

: $\nabla_{W^l} C = \delta^l (a^{l-1})^T$

The factor of $a^{l-1}$ is because the weights $W^l$ between level $l-1$ and $l$ affect level $l$ proportionally to the inputs (activations): the inputs are fixed, the weights vary.

The $\delta^l$ can easily be computed recursively, going from right to left, as:

: $\delta^{l-1} := (f^{l-1})' \circ (W^l)^T \cdot \delta^l$

The gradients of the weights can thus be computed using a few matrix multiplications for each level; this is backpropagation.

Compared with naively computing forwards (using the $\delta^l$ for illustration):

: $\delta^1 = (f^1)' \circ (W^2)^T \cdot (f^2)' \circ \cdots \circ (W^{L-1})^T \cdot (f^{L-1})' \circ (W^L)^T \cdot (f^L)' \circ \nabla_{a^L} C$
: $\delta^2 = (f^2)' \circ \cdots \circ (W^{L-1})^T \cdot (f^{L-1})' \circ (W^L)^T \cdot (f^L)' \circ \nabla_{a^L} C$
: $\vdots$
: $\delta^{L-1} = (f^{L-1})' \circ (W^L)^T \cdot (f^L)' \circ \nabla_{a^L} C$
: $\delta^L = (f^L)' \circ \nabla_{a^L} C$

there are two key differences with backpropagation:

# Computing $\delta^{l-1}$ in terms of $\delta^l$ avoids the obvious duplicate multiplication of layers $l$ and beyond.

# Multiplying starting from $\nabla_{a^L} C$ – propagating the error ''backwards'' – means that each step simply multiplies a vector ($\delta^l$) by the matrices of weights $(W^l)^T$ and derivatives of activations $(f^{l-1})'$. By contrast, multiplying forwards, starting from the changes at an earlier layer, means that each multiplication multiplies a ''matrix'' by a ''matrix''. This is much more expensive, and corresponds to tracking every possible path of a change in one layer $l$ forward to changes in the layer $l+2$ (for multiplying $W^{l+1}$ by $W^{l+2}$, with additional multiplications for the derivatives of the activations), which unnecessarily computes the intermediate quantities of how weight changes affect the values of hidden nodes.
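The backward recursion can be sketched in plain Python as follows. The sketch rests on several assumptions that are not part of the text: every layer uses the logistic function (so the cached derivative is $a(1-a)$), the loss is half the squared error (so the gradient with respect to the final activation is $a^L - y$), and all function names and weight values are illustrative. A central finite difference is included only as a sanity check on the result.

```python
import math

def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))

def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def transpose(W):
    return [list(col) for col in zip(*W)]

def forward(weights, x):
    """Return the cached activations [a^0, ..., a^L], with a^0 = x."""
    acts = [x]
    for W in weights:
        acts.append([logistic(z) for z in matvec(W, acts[-1])])
    return acts

def loss(weights, x, y):
    out = forward(weights, x)[-1]
    return 0.5 * sum((o - t) ** 2 for o, t in zip(out, y))

def backprop(weights, x, y):
    """Gradient of the loss with respect to every weight matrix."""
    acts = forward(weights, x)
    # Error at the last level: activation derivative times loss gradient.
    delta = [(a - t) * a * (1.0 - a) for a, t in zip(acts[-1], y)]
    grads = [None] * len(weights)
    for l in range(len(weights) - 1, -1, -1):
        a_prev = acts[l]
        # Weight gradient: outer product of delta with the incoming activations.
        grads[l] = [[d * a for a in a_prev] for d in delta]
        if l > 0:
            # Propagate one level back: vector times transposed weight matrix,
            # then element-wise product with the cached activation derivative.
            back = matvec(transpose(weights[l]), delta)
            delta = [b * a * (1.0 - a) for b, a in zip(back, a_prev)]
    return grads

def numeric_grad(weights, x, y, l, j, k, eps=1e-6):
    """Central finite difference for a single weight (slow; for checking only)."""
    original = weights[l][j][k]
    weights[l][j][k] = original + eps
    up = loss(weights, x, y)
    weights[l][j][k] = original - eps
    down = loss(weights, x, y)
    weights[l][j][k] = original
    return (up - down) / (2.0 * eps)

weights = [[[0.5, -0.5], [0.25, 0.75]], [[1.0, -1.0]]]  # hypothetical 2-2-1 network
x, y = [1.0, 1.0], [0.0]
grads = backprop(weights, x, y)
```

Each backward step multiplies a vector by a matrix, whereas the finite-difference check needs two full forward passes per weight, which is the kind of per-weight cost the text describes as inefficient.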

Adjoint graph

For more general graphs, and other advanced variations, backpropagation can be understood in terms of automatic differentiation, where backpropagation is a special case of reverse accumulation (or "reverse mode").
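As a rough illustration of reverse accumulation on a general computation graph, the following toy sketch records, for each scalar node, its parents and the local derivative along each edge, then sweeps the graph once from the output back to the inputs. The `Var` class and `backward` function are hypothetical names invented for this example and support only addition and multiplication; real automatic differentiation systems are far more general.

```python
class Var:
    """A scalar node in a computation graph."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # tuple of (parent node, local derivative) pairs
        self.grad = 0.0

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

def backward(output):
    """Reverse accumulation: process every node after all nodes that consume it."""
    order, seen = [], set()

    def visit(node):
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)  # post-order: inputs before consumers

    visit(output)
    output.grad = 1.0
    for node in reversed(order):
        for parent, local in node.parents:
            parent.grad += local * node.grad  # chain rule, summed over paths

x1, x2 = Var(3.0), Var(4.0)
f = x1 * x2 + x1 * x1   # f = x1*x2 + x1^2
backward(f)
# x1.grad now holds df/dx1 = x2 + 2*x1, and x2.grad holds df/dx2 = x1
```

A single backward sweep yields the derivative of the one output with respect to every input, which is why the reverse mode suits a scalar loss with many weights.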

Intuition

Motivation

The goal of any supervised learning algorithm is to find a function that best maps a set of inputs to their correct output. The motivation for backpropagation is to train a multi-layered neural network such that it can learn the appropriate internal representations to allow it to learn any arbitrary mapping of input to output.

Learning as an optimization problem

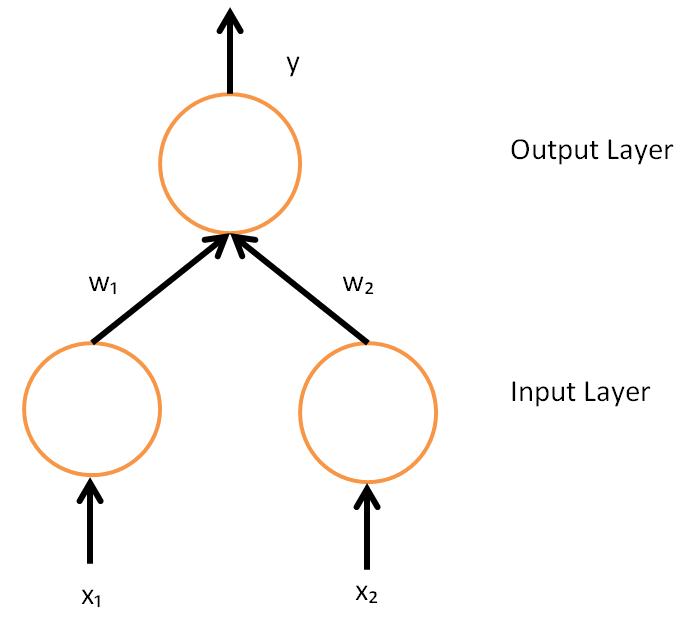

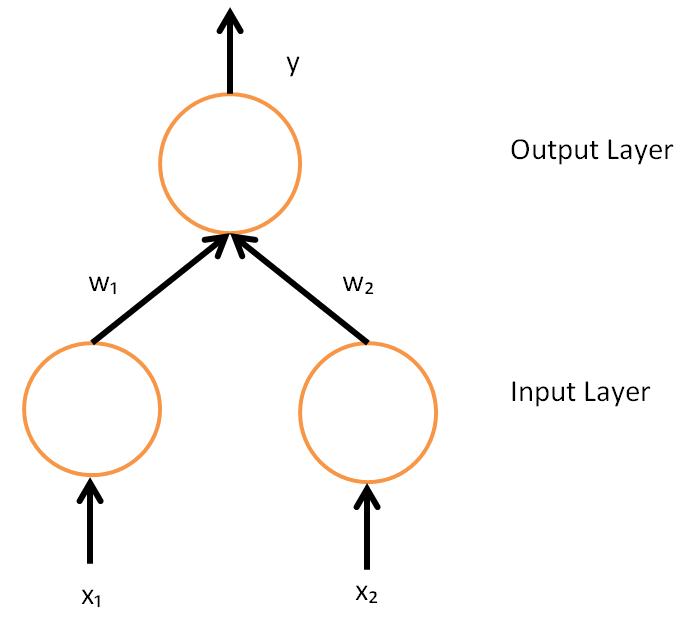

To understand the mathematical derivation of the backpropagation algorithm, it helps to first develop some intuition about the relationship between the actual output of a neuron and the correct output for a particular training example. Consider a simple neural network with two input units, one output unit and no hidden units, and in which each neuron uses a linear output (unlike most work on neural networks, in which mapping from inputs to outputs is non-linear) that is the weighted sum of its input.

Initially, before training, the weights will be set randomly. Then the neuron learns from training examples, which in this case consist of a set of tuples $(x_1, x_2, t)$ where $x_1$ and $x_2$ are the inputs to the network and $t$ is the correct output (the output the network should produce given those inputs, when it has been trained). The initial network, given $x_1$ and $x_2$, will compute an output $y$ that likely differs from $t$ (given random weights). A loss function $L(t, y)$ is used for measuring the discrepancy between the target output $t$ and the computed output $y$. For regression analysis problems the squared error can be used as a loss function; for classification the categorical crossentropy can be used.

As an example consider a regression problem using the square error as a loss:

: $L(t, y) = (t - y)^2 = E$

where $E$ is the discrepancy or error.

Consider the network on a single training case: $(1, 1, 0)$. Thus, the inputs $x_1$ and $x_2$ are 1 and 1 respectively and the correct output, $t$, is 0. Now if the relation is plotted between the network's output $y$ on the horizontal axis and the error $E$ on the vertical axis, the result is a parabola. The minimum of the parabola corresponds to the output $y$ which minimizes the error $E$. For a single training case, the minimum also touches the horizontal axis, which means the error will be zero and the network can produce an output $y$ that exactly matches the target output $t$. Therefore, the problem of mapping inputs to outputs can be reduced to an optimization problem of finding a function that will produce the minimal error.

However, the output of a neuron depends on the weighted sum of all its inputs:

: $y = x_1 w_1 + x_2 w_2$

where $w_1$ and $w_2$ are the weights on the connection from the input units to the output unit. Therefore, the error also depends on the incoming weights to the neuron, which is ultimately what needs to be changed in the network to enable learning.

In this example, upon injecting the training data $(1, 1, 0)$, the loss function becomes

: $E = (t - y)^2 = y^2 = (x_1 w_1 + x_2 w_2)^2 = (w_1 + w_2)^2$

Then, the loss function $E$ takes the form of a parabolic cylinder with its base directed along $w_1 = -w_2$. Since all sets of weights that satisfy $w_1 = -w_2$ minimize the loss function, in this case additional constraints are required to converge to a unique solution. Additional constraints could either be generated by setting specific conditions to the weights, or by injecting additional training data.

One commonly used algorithm to find the set of weights that minimizes the error is gradient descent. Backpropagation is used to calculate the steepest descent direction of the loss function with respect to the present synaptic weights. The weights can then be modified along the steepest descent direction, and the error is minimized in an efficient way.
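The two-weight example can be tried numerically. The sketch below assumes the single training case $(1, 1, 0)$ with squared error, so that $E = (w_1 + w_2)^2$; the learning rate, step count, starting points, and function names are arbitrary choices for illustration.

```python
def loss(w1, w2):
    # E = (t - y)^2 with (x1, x2, t) = (1, 1, 0), so y = w1 + w2
    return (w1 + w2) ** 2

def gradient(w1, w2):
    # dE/dw1 = dE/dw2 = 2 * (w1 + w2)
    g = 2.0 * (w1 + w2)
    return g, g

def descend(w1, w2, learning_rate=0.1, steps=100):
    """Plain gradient descent: step against the gradient at each iteration."""
    for _ in range(steps):
        g1, g2 = gradient(w1, w2)
        w1 -= learning_rate * g1
        w2 -= learning_rate * g2
    return w1, w2

a = descend(0.8, 0.4)    # ends near (0.2, -0.2)
b = descend(-0.3, 1.5)   # ends near (-0.9, 0.9)
```

Both runs reach zero error, yet they end at different points on the line $w_1 = -w_2$, because both weights receive the same gradient and their difference never changes. This is the non-uniqueness that the additional constraints or training data are meant to remove.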

Derivation

The gradient descent method involves calculating the derivative of the loss function with respect to the weights of the network. This is normally done using backpropagation. Assuming one output neuron, the squared error function is

: $E = L(t, y)$

where

: $L$ is the loss for the output $y$ and target value $t$,
: $t$ is the target output for a training sample, and
: $y$ is the actual output of the output neuron.

For each neuron $j$, its output $o_j$ is defined as

: $o_j = \varphi(\text{net}_j) = \varphi\left(\sum_{k=1}^{n} w_{kj} o_k\right)$

where the activation function $\varphi$ is non-linear and differentiable over the activation region (the ReLU is not differentiable at one point). A historically used activation function is the logistic function:

: $\varphi(z) = \frac{1}{1 + e^{-z}}$

which has a convenient derivative of:

: $\frac{d\varphi}{dz} = \varphi(z)(1 - \varphi(z))$

The input $\text{net}_j$ to a neuron is the weighted sum of outputs $o_k$ of previous neurons. If the neuron is in the first layer after the input layer, the $o_k$ of the input layer are simply the inputs $x_k$ to the network. The number of input units to the neuron is $n$. The variable $w_{kj}$ denotes the weight between neuron $k$ of the previous layer and neuron $j$ of the current layer.
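The "convenient derivative" of the logistic function can be checked numerically. This small sketch compares the closed form $\varphi(z)(1 - \varphi(z))$ with a central finite difference; the sample points and the step size are arbitrary assumptions.

```python
import math

def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))

def logistic_prime(z):
    """Closed-form derivative: phi(z) * (1 - phi(z))."""
    s = logistic(z)
    return s * (1.0 - s)

def central_difference(f, z, eps=1e-6):
    """Numerical derivative, used here only as a cross-check."""
    return (f(z + eps) - f(z - eps)) / (2.0 * eps)

checks = [
    (z, logistic_prime(z), central_difference(logistic, z))
    for z in (-2.0, 0.0, 0.5, 3.0)
]
```

The practical benefit is that the derivative reuses the neuron's output, which the forward pass has already computed, so no extra exponential is needed during the backward pass.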

Finding the derivative of the error

Calculating the partial derivative of the error with respect to a weight $w_{ij}$ is done using the chain rule twice:

: $\frac{\partial E}{\partial w_{ij}} = \frac{\partial E}{\partial o_j} \frac{\partial o_j}{\partial w_{ij}} = \frac{\partial E}{\partial o_j} \frac{\partial o_j}{\partial \text{net}_j} \frac{\partial \text{net}_j}{\partial w_{ij}}$

In the last factor of the right-hand side of the above, only one term in the sum $\text{net}_j$ depends on $w_{ij}$, so that

: $\frac{\partial \text{net}_j}{\partial w_{ij}} = \frac{\partial}{\partial w_{ij}} \left(\sum_{k=1}^{n} w_{kj} o_k\right) = \frac{\partial}{\partial w_{ij}} w_{ij} o_i = o_i$

If the neuron is in the first layer after the input layer, $o_i$ is just $x_i$.

The derivative of the output of neuron $j$ with respect to its input is simply the partial derivative of the activation function:

: $\frac{\partial o_j}{\partial \text{net}_j} = \frac{\partial \varphi(\text{net}_j)}{\partial \text{net}_j}$

which for the logistic activation function is

: $\frac{\partial o_j}{\partial \text{net}_j} = \frac{\partial}{\partial \text{net}_j} \varphi(\text{net}_j) = \varphi(\text{net}_j)(1 - \varphi(\text{net}_j)) = o_j(1 - o_j)$

This is the reason why backpropagation requires the activation function to be differentiable. (Nevertheless, the ReLU activation function, which is non-differentiable at 0, has become quite popular, e.g. in AlexNet.)

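The first factor is straightforward to evaluate if the neuron is in the output layer, because then $o_j = y$ and

: $\frac{\partial E}{\partial o_j} = \frac{\partial E}{\partial y}$

If half of the square error is used as loss function we can rewrite it as

: $\frac{\partial E}{\partial o_j} = \frac{\partial E}{\partial y} = \frac{\partial}{\partial y} \frac{1}{2}(t - y)^2 = y - t$

However, if $j$ is in an arbitrary inner layer of the network, finding the derivative of $E$ with respect to $o_j$ is less obvious.

Considering $E$ as a function with the inputs being all neurons $L = \{u, v, \dots, w\}$ receiving input from neuron $j$,

: $\frac{\partial E(o_j)}{\partial o_j} = \frac{\partial E(\text{net}_u, \text{net}_v, \dots, \text{net}_w)}{\partial o_j}$

and taking the total derivative with respect to $o_j$, a recursive expression for the derivative is obtained:

: $\frac{\partial E}{\partial o_j} = \sum_{\ell \in L} \left(\frac{\partial E}{\partial \text{net}_\ell} \frac{\partial \text{net}_\ell}{\partial o_j}\right) = \sum_{\ell \in L} \left(\frac{\partial E}{\partial o_\ell} \frac{\partial o_\ell}{\partial \text{net}_\ell} \frac{\partial \text{net}_\ell}{\partial o_j}\right) = \sum_{\ell \in L} \left(\frac{\partial E}{\partial o_\ell} \frac{\partial o_\ell}{\partial \text{net}_\ell} w_{j\ell}\right)$

Therefore, the derivative with respect to $o_j$ can be calculated if all the derivatives with respect to the outputs $o_\ell$ of the next layer – the ones closer to the output neuron – are known. [Note, if any of the neurons in set $L$ were not connected to neuron $j$, they would be independent of $w_{ij}$ and the corresponding partial derivative under the summation would vanish to 0.]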

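Substituting these expressions for the three factors into the chain-rule expansion of $\partial E / \partial w_{ij}$, we obtain:

: $\frac{\partial E}{\partial w_{ij}} = \frac{\partial E}{\partial o_j} \frac{\partial o_j}{\partial \text{net}_j} \frac{\partial \text{net}_j}{\partial w_{ij}} = \frac{\partial E}{\partial o_j} \frac{\partial o_j}{\partial \text{net}_j} o_i$

: $\frac{\partial E}{\partial w_{ij}} = o_i \delta_j$

with

: $\delta_j = \frac{\partial E}{\partial o_j} \frac{\partial o_j}{\partial \text{net}_j} = \begin{cases} \frac{\partial L(t, o_j)}{\partial o_j} \frac{d\varphi(\text{net}_j)}{d\text{net}_j} & \text{if } j \text{ is an output neuron,} \\ \left(\sum_{\ell \in L} w_{j\ell} \delta_\ell\right) \frac{d\varphi(\text{net}_j)}{d\text{net}_j} & \text{if } j \text{ is an inner neuron.} \end{cases}$

if $\varphi$ is the logistic function, and the error is the square error:

: $\delta_j = \frac{\partial E}{\partial o_j} \frac{\partial o_j}{\partial \text{net}_j} = \begin{cases} (o_j - t_j) o_j (1 - o_j) & \text{if } j \text{ is an output neuron,} \\ \left(\sum_{\ell \in L} w_{j\ell} \delta_\ell\right) o_j (1 - o_j) & \text{if } j \text{ is an inner neuron.} \end{cases}$

To update the weight $w_{ij}$ using gradient descent, one must choose a learning rate, $\eta > 0$. The change in weight needs to reflect the impact on $E$ of an increase or decrease in $w_{ij}$. If $\frac{\partial E}{\partial w_{ij}} > 0$, an increase in $w_{ij}$ increases $E$; conversely, if $\frac{\partial E}{\partial w_{ij}} < 0$, an increase in $w_{ij}$ decreases $E$. The new $\Delta w_{ij}$ is added to the old weight, and the product of the learning rate and the gradient, multiplied by $-1$, ensures that $w_{ij}$ moves in the direction that decreases $E$ (for a sufficiently small learning rate). In other words, in the equation immediately below, $-\eta \frac{\partial E}{\partial w_{ij}}$ always changes $w_{ij}$ in the direction in which $E$ decreases:

: $\Delta w_{ij} = -\eta \frac{\partial E}{\partial w_{ij}} = -\eta o_i \delta_j$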
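The per-neuron formulas can be put together into one training step. The sketch below is illustrative only: it assumes a network with one hidden layer and a single output neuron, logistic activations throughout, and half the squared error as the loss; `train_step`, `w_hidden`, `w_out`, the initial weights, and the learning rate are all hypothetical choices.

```python
import math

def phi(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_step(w_hidden, w_out, x, t, eta):
    """One gradient-descent update in place; returns the error before the update.

    w_hidden[i][j] is the weight from input i to hidden neuron j,
    w_out[j] is the weight from hidden neuron j to the output neuron.
    """
    n_in, n_hid = len(x), len(w_out)
    o_hid = [phi(sum(w_hidden[i][j] * x[i] for i in range(n_in)))
             for j in range(n_hid)]
    y = phi(sum(w_out[j] * o_hid[j] for j in range(n_hid)))
    error = 0.5 * (t - y) ** 2

    # Output neuron: delta = (o - t) * o * (1 - o)
    delta_out = (y - t) * y * (1.0 - y)
    # Inner neuron j: delta_j = (sum over successors of w_jl * delta_l) * o_j * (1 - o_j);
    # here the only successor is the output neuron.
    delta_hid = [w_out[j] * delta_out * o_hid[j] * (1.0 - o_hid[j])
                 for j in range(n_hid)]

    # Weight change: minus learning rate times (source output) times (target delta)
    for j in range(n_hid):
        w_out[j] -= eta * o_hid[j] * delta_out
        for i in range(n_in):
            w_hidden[i][j] -= eta * x[i] * delta_hid[j]
    return error

w_hidden = [[0.5, 0.25], [-0.5, 0.75]]
w_out = [1.0, -1.0]
errors = [train_step(w_hidden, w_out, [1.0, 1.0], 0.0, eta=0.5) for _ in range(200)]
```

Note that the hidden deltas are computed from the old output weights before any weight is changed, so every update in a step uses the gradient evaluated at the same point.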

Second-order gradient descent

Using a Hessian matrix of second-order derivatives of the error function, the Levenberg–Marquardt algorithm often converges faster than first-order gradient descent, especially when the topology of the error function is complicated. It may also find solutions in smaller node counts for which other methods might not converge. The Hessian can be approximated by the Fisher information matrix.

Loss function

The loss function is a function that maps values of one or more variables onto a real number intuitively representing some "cost" associated with those values. For backpropagation, the loss function calculates the difference between the network output and its expected output, after a training example has propagated through the network.

Assumptions

The mathematical expression of the loss function must fulfill two conditions in order for it to be possibly used in backpropagation. The first is that it can be written as an average over error functions , for individual training examples, . The reason for this assumption is that the backpropagation algorithm calculates the gradient of the error function for a single training example, which needs to be generalized to the overall error function. The second assumption is that it can be written as a function of the outputs from the neural network.Example loss function

Let $y, y'$ be vectors in $\mathbb{R}^n$. Select an error function $E(y, y')$ measuring the difference between two outputs. The standard choice is the square of the Euclidean distance between the vectors $y$ and $y'$:

$$E(y, y') = \tfrac{1}{2} \lVert y - y' \rVert^2$$

The error function over $n$ training examples can then be written as an average of losses over individual examples:

$$E = \frac{1}{2n} \sum_x \lVert y(x) - y'(x) \rVert^2$$
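The squared-distance loss and its average over training examples can be sketched in a few lines. This is only an illustration: the function names `squared_error` and `mean_loss` and the sample vectors are hypothetical, and the factor 1/2 is a common convention assumed here because it simplifies the derivative.

```python
def squared_error(y, y_target):
    """Half the squared Euclidean distance between two equal-length vectors."""
    if len(y) != len(y_target):
        raise ValueError("vectors must have the same length")
    return 0.5 * sum((a - b) ** 2 for a, b in zip(y, y_target))


def mean_loss(outputs, targets):
    """Average of the per-example errors over all training examples."""
    if len(outputs) != len(targets) or not outputs:
        raise ValueError("need the same, non-zero number of outputs and targets")
    total = sum(squared_error(y, t) for y, t in zip(outputs, targets))
    return total / len(outputs)


# Two made-up network outputs compared against all-zero expected outputs.
outputs = [[1.0, 0.0], [0.0, 2.0]]
targets = [[0.0, 0.0], [0.0, 0.0]]
print(mean_loss(outputs, targets))  # per-example errors 0.5 and 2.0, mean 1.25
```

Both assumptions from the previous section are visible here: the total is an average of per-example errors, and each error depends only on the network outputs and their expected values.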

Limitations

* Gradient descent with backpropagation is not guaranteed to find the global minimum of the error function, but only a local minimum; also, it has trouble crossing plateaus in the error function landscape. This issue, caused by the non-convexity of error functions in neural networks, was long thought to be a major drawback, but Yann LeCun ''et al.'' argue that in many practical problems, it is not.

* Backpropagation learning does not require normalization of input vectors; however, normalization could improve performance.

* Backpropagation requires the derivatives of activation functions to be known at network design time.
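To make the last point concrete, here is a minimal sketch of an activation function paired with its derivative, which is the form backpropagation needs. The logistic sigmoid is an assumed example (the text does not name an activation), and the finite-difference helper `numerical_derivative` is hypothetical, included only as a sanity check on the analytic formula.

```python
import math


def sigmoid(z):
    """Logistic activation function."""
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_prime(z):
    """Analytic derivative of the sigmoid: s(z) * (1 - s(z))."""
    s = sigmoid(z)
    return s * (1.0 - s)


def numerical_derivative(f, z, h=1e-6):
    """Central finite-difference estimate of f'(z), used only for checking."""
    return (f(z + h) - f(z - h)) / (2.0 * h)


z = 0.5
analytic = sigmoid_prime(z)
numeric = numerical_derivative(sigmoid, z)
print(abs(analytic - numeric) < 1e-8)
```

Supplying the derivative in closed form, as `sigmoid_prime` does, is what lets the backward pass apply the chain rule layer by layer without numerical differentiation.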

History

The term ''backpropagation'' and its general use in neural networks were announced in Rumelhart ''et al.'' (1986a), then elaborated and popularized in Rumelhart ''et al.'' (1986b), but the technique was independently rediscovered many times, and had many predecessors dating to the 1960s. As one account puts it: "Efficient applications of the chain rule based on dynamic programming began to appear in the 1960s and 1970s, mostly for control applications (Kelley, 1960; Bryson and Denham, 1961; Dreyfus, 1962; Bryson and Ho, 1969; Dreyfus, 1973) but also for sensitivity analysis (Linnainmaa, 1976). ... The idea was finally developed in practice after being independently rediscovered in different ways (LeCun, 1985; Parker, 1985; Rumelhart ''et al.'', 1986a). The book ''Parallel Distributed Processing'' presented the results of some of the first successful experiments with back-propagation in a chapter (Rumelhart ''et al.'', 1986b) that contributed greatly to the popularization of back-propagation and initiated a very active period of research in multilayer neural networks."

The basics of continuous backpropagation were derived in the context of control theory by Henry J. Kelley in 1960, and by Arthur E. Bryson in 1961. They used principles of dynamic programming. In 1962, Stuart Dreyfus published a simpler derivation based only on the chain rule. Bryson and Ho described it as a multi-stage dynamic system optimization method in 1969.

Backpropagation was derived by multiple researchers in the early 1960s and implemented to run on computers as early as 1970 by Seppo Linnainmaa. Paul Werbos was first in the US to propose that it could be used for neural nets after analyzing it in depth in his 1974 dissertation. (The thesis, and some supplementary information, can be found in his book.) While not applied to neural networks, in 1970 Linnainmaa published the general method for automatic differentiation (AD). (Seppo Linnainmaa (1970). The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. Master's Thesis (in Finnish), Univ. Helsinki, 6–7.) Although very controversial, some scientists believe this was actually the first step toward developing a back-propagation algorithm. In 1973 Dreyfus adapted parameters of controllers in proportion to error gradients. In 1974 Werbos mentioned the possibility of applying this principle to artificial neural networks, and in 1982 he applied Linnainmaa's AD method to non-linear functions.

Later the Werbos method was rediscovered and described in 1985 by Parker, and in 1986 by Rumelhart, Hinton and Williams. Rumelhart, Hinton and Williams showed experimentally that this method can generate useful internal representations of incoming data in hidden layers of neural networks. Yann LeCun proposed the modern form of the back-propagation learning algorithm for neural networks in his PhD thesis in 1987. In 1993, Eric Wan won an international pattern recognition contest through backpropagation.

During the 2000s it fell out of favour, but returned in the 2010s, benefitting from cheap, powerful GPU-based computing systems. This has been especially so in speech recognition, machine vision, natural language processing, and language structure learning research (in which it has been used to explain a variety of phenomena related to first and second language learning).

Error backpropagation has been suggested to explain human brain ERP components like the N400 and P600.

See also

* Artificial neural network

* Neural circuit

* Catastrophic interference

* Ensemble learning

* AdaBoost

* Overfitting

* Neural backpropagation

* Backpropagation through time

External links

* Backpropagation neural network tutorial at the Wikiversity