Motivation and applications

SAR is capable of high-resolution remote sensing, independent of flight altitude and of weather, because SAR can select frequencies that avoid weather-caused signal attenuation. SAR has day and night imaging capability because the illumination is provided by the SAR itself. (Gianfranco Fornaro, "Tomographic SAR", National Research Council (CNR), Institute for Electromagnetic Sensing of the Environment (IREA), Via Diocleziano 328, I-80124 Napoli, Italy; Shivakumar Ramakrishnan, Vincent Demarcus, Jerome Le Ny, Neal Patwari, Joel Gussy, "Synthetic Aperture Radar Imaging Using Spectral Estimation Techniques", University of Michigan.)

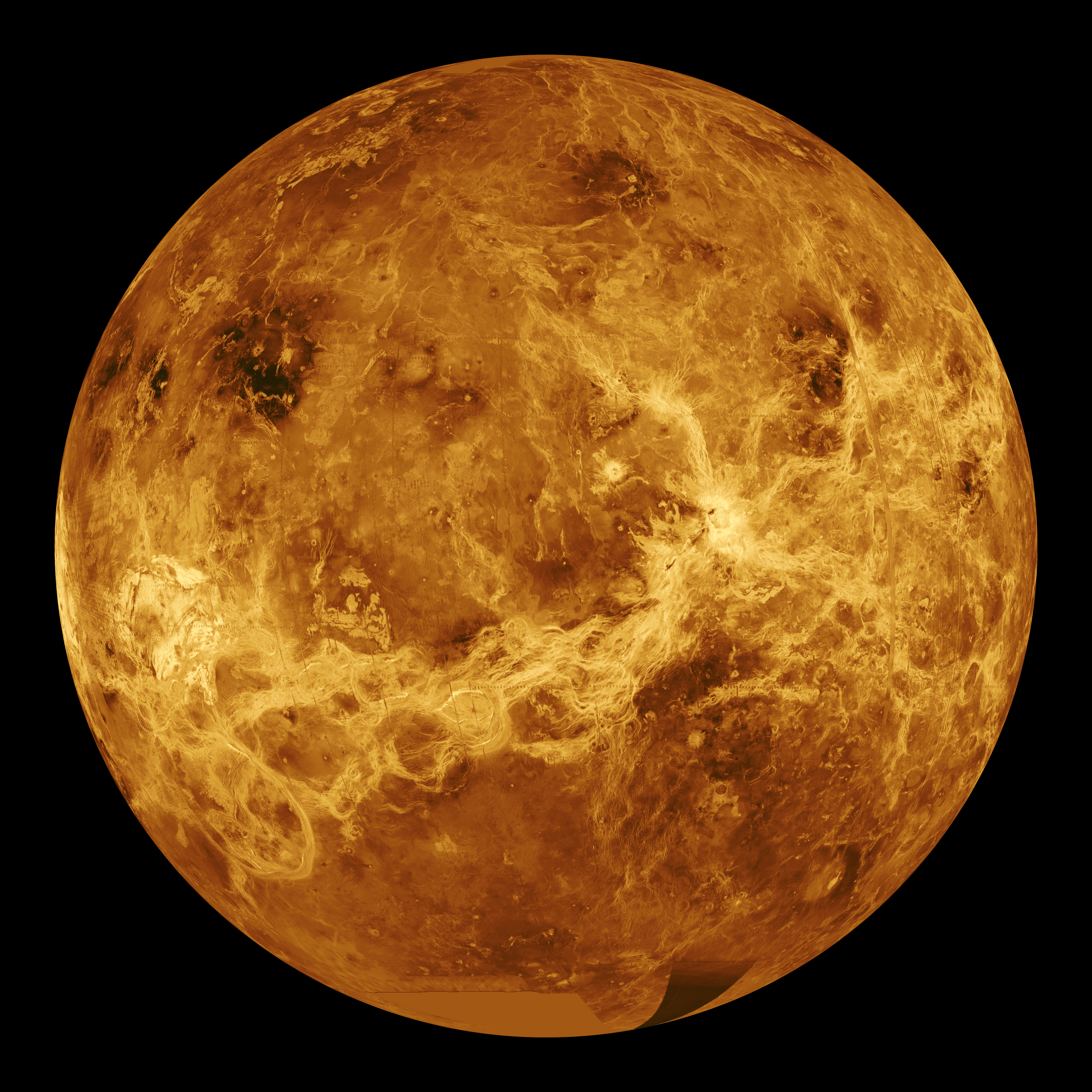

SAR images have wide applications in remote sensing and mapping of the surfaces of the Earth and other planets. Examples include topography, oceanography, glaciology, and geology (for example, terrain discrimination and subsurface imaging). SAR can also be used in forestry to determine forest height, biomass, and deforestation. Volcano and earthquake monitoring use differential interferometry.

Basic principle

Algorithm

The SAR algorithm, as given here, generally applies to phased arrays.

A three-dimensional array (a volume) of scene elements is defined, which represents the volume of space within which targets exist. Each element of the array is a cubical voxel representing the probability (a "density") of a reflective surface being at that location in space. (Two-dimensional SARs are also possible, showing only a top-down view of the target area.)

Initially, the SAR algorithm gives each voxel a density of zero. Then, for each captured waveform, the entire volume is iterated over. For a given waveform and voxel, the distance from the position represented by that voxel to the antenna(s) used to capture that waveform is calculated. That distance represents a time delay into the waveform. The sample value at that position in the waveform is then added to the voxel's density value. This represents a possible echo from a target at that position. There are several optional approaches here, depending on the precision of the waveform timing, among other things. For example, if phase cannot be accurately determined, only the envelope magnitude (obtained with the help of a Hilbert transform) of the waveform sample might be added to the voxel. If waveform polarization and phase are known and are accurate enough, then these values might be added to a more complex voxel that holds such measurements separately.

After all waveforms have been iterated over all voxels, the basic SAR processing is complete. What remains, in the simplest approach, is to decide what voxel density value represents a solid object. Voxels whose density is below that threshold are ignored. The threshold chosen must be higher than the peak energy of any single wave; otherwise that wave peak would appear as a sphere (or ellipsoid, in the case of multistatic operation) of false "density" across the entire volume.
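The accumulation loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not a definitive implementation: it assumes monostatic captures (the same antenna transmits and receives), real-valued waveforms whose sample 0 coincides with the transmit instant, and nearest-sample lookup. The function names `accumulate_voxels` and `solid_voxels` are hypothetical, chosen only for this example.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def accumulate_voxels(waveforms, antenna_positions, voxel_positions, sample_rate_hz):
    """Sum, for every voxel, the waveform sample found at that voxel's round-trip delay.

    waveforms         -- array (n_captures, n_samples); sample 0 is the transmit instant
    antenna_positions -- array (n_captures, 3) in metres (monostatic assumption)
    voxel_positions   -- array (n_voxels, 3) in metres
    """
    waveforms = np.asarray(waveforms, dtype=float)
    antenna_positions = np.asarray(antenna_positions, dtype=float)
    voxel_positions = np.asarray(voxel_positions, dtype=float)

    density = np.zeros(len(voxel_positions))  # every voxel starts at zero
    n_samples = waveforms.shape[1]

    for wave, antenna in zip(waveforms, antenna_positions):
        distance = np.linalg.norm(voxel_positions - antenna, axis=1)
        delay_s = 2.0 * distance / C                      # out and back
        index = np.rint(delay_s * sample_rate_hz).astype(int)
        inside = index < n_samples                        # skip delays beyond the record
        density[inside] += wave[index[inside]]
    return density


def solid_voxels(density, waveforms):
    """Keep only voxels whose density exceeds the largest peak of any single waveform."""
    threshold = np.max(np.abs(waveforms))
    return density > threshold
```

With this thresholding rule, a voxel that coincides with an echo in only one waveform never passes, because its density cannot exceed that waveform's own peak.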
Because the threshold must exceed the peak of any single wave, detecting a point on a target requires at least two different antenna echoes from that point. Consequently, a large number of antenna positions is needed to properly characterize a target.

The voxels that pass the threshold criteria are visualized in 2D or 3D. Optionally, visual quality can sometimes be improved by use of a surface detection algorithm such as marching cubes.

Existing spectral estimation approaches

Synthetic-aperture radar determines the 3D reflectivity from measured SAR data. This is basically a spectrum estimation problem, because for a specific cell of an image, the complex-valued SAR measurements of the SAR image stack are a sampled version of the Fourier transform of the reflectivity in the elevation direction, but the sampling of that Fourier transform is irregular. Spectral estimation techniques are therefore used to improve the resolution and reduce speckle compared with conventional Fourier-transform SAR imaging techniques.

Non-parametric methods

FFT

FFT (Fast Fourier Transform i.e.,

Advantages

* Additive group-theoretic properties of multidimensional input/output indexing sets are used for the mathematical formulations, so the mapping between computing structures and mathematical expressions is easier to identify than with conventional methods.
* The language of CKA algebra helps the application developer understand which FFT variants are more computationally efficient, reducing the computational effort and the implementation time.

Disadvantages

* FFT cannot separate sinusoids close in frequency. If the periodicity of the data does not match the FFT, edge effects are seen.

Capon method

The Capon spectral method, also called the minimum-variance method, is a multidimensional array-processing technique. It is a nonparametric covariance-based method that uses an adaptive matched-filterbank approach and follows two main steps:
# Passing the data through a 2D bandpass filter with varying center frequencies ($\omega_1, \omega_2$).
# Estimating the power at ($\omega_1, \omega_2$) for all ($\omega_1, \omega_2$) of interest from the filtered data.

The adaptive Capon bandpass filter is designed to minimize the power of the filter output while passing the frequencies ($\omega_1, \omega_2$) without any attenuation, i.e., to satisfy, for each ($\omega_1, \omega_2$),
: $\min_{h} \; h_{\omega_1,\omega_2}^{*} R \, h_{\omega_1,\omega_2}$ subject to $h_{\omega_1,\omega_2}^{*} a_{\omega_1,\omega_2} = 1$,
where ''R'' is the covariance matrix, $h_{\omega_1,\omega_2}^{*}$ is the complex conjugate transpose of the impulse response of the FIR filter, $a_{\omega_1,\omega_2}$ is the 2D Fourier vector, defined as $a_{\omega_1,\omega_2} \triangleq a_{\omega_1} \otimes a_{\omega_2}$, and $\otimes$ denotes the Kronecker product.

Therefore, it passes a 2D sinusoid at a given frequency without distortion while minimizing the variance of the noise of the resulting image. The purpose is to compute the spectral estimate efficiently. The ''spectral estimate'' is given as
: $S_{\omega_1,\omega_2} = \dfrac{1}{a_{\omega_1,\omega_2}^{*} R^{-1} a_{\omega_1,\omega_2}}$,
where ''R'' is the covariance matrix and $a_{\omega_1,\omega_2}^{*}$ is the complex-conjugate transpose of the 2D Fourier vector. Computing this equation over all frequencies is time-consuming.

The forward–backward Capon estimator yields better estimates than the forward-only classical Capon approach. The main reason is that the forward–backward Capon uses both the forward and backward data vectors to estimate the covariance matrix, whereas the forward-only Capon uses only the forward data vectors.

Advantages
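As a rough illustration of the Capon estimate, the sketch below evaluates the standard minimum-variance form $1/(a^{*} R^{-1} a)$ in one dimension rather than two, with a forward-only sample covariance. The function name `capon_spectrum`, the snapshot length `m`, and the small diagonal loading term are assumptions introduced for this example; they are not taken from the article.

```python
import numpy as np


def capon_spectrum(x, m, freqs, loading=1e-3):
    """1-D forward-only Capon power estimate at normalized frequencies (cycles/sample)."""
    x = np.asarray(x, dtype=complex)
    # Overlapping length-m snapshots of the data record.
    snapshots = np.array([x[i:i + m] for i in range(len(x) - m + 1)])
    # Sample covariance R = average of y y^H over the snapshots.
    R = snapshots.T @ snapshots.conj() / len(snapshots)
    # Diagonal loading (an added assumption) keeps R invertible for short or noiseless data.
    R = R + loading * np.trace(R).real / m * np.eye(m)
    R_inv = np.linalg.inv(R)
    # Each row of A is a Fourier vector a(f) = [1, e^{j2*pi*f}, ..., e^{j2*pi*f(m-1)}].
    A = np.exp(2j * np.pi * np.outer(freqs, np.arange(m)))
    # a^H R^{-1} a evaluated for every frequency at once.
    denom = np.einsum("fi,ij,fj->f", A.conj(), R_inv, A).real
    return 1.0 / denom


# Two closely spaced tones in light noise, with a zero-padded periodogram for comparison.
rng = np.random.default_rng(0)
n = np.arange(32)
noise = 0.05 * (rng.standard_normal(32) + 1j * rng.standard_normal(32))
x = np.exp(2j * np.pi * 0.20 * n) + np.exp(2j * np.pi * 0.23 * n) + noise
freqs = np.linspace(0.0, 0.5, 501)
p_capon = capon_spectrum(x, m=12, freqs=freqs)
p_fft = np.abs(np.fft.fft(x, 1024)[:512]) ** 2 / len(x) ** 2
```

Plotting `p_capon` and `p_fft` on a logarithmic scale is one way to compare peak widths and sidelobe levels. The matrix inversion and the per-frequency quadratic form are the two costly steps.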

* Capon can yield more accurate spectral estimates with much lower sidelobes and narrower spectral peaks than the fast Fourier transform (FFT) method.
* The Capon method can provide much better resolution.

Disadvantages

* Implementation requires two computationally intensive tasks: inversion of the covariance matrix ''R'' and multiplication by the $a_{\omega_1,\omega_2}$ matrix, which has to be done for each point ($\omega_1, \omega_2$).

APES method

The APES (amplitude and phase estimation) method is also a matched-filter-bank method, which assumes that the phase history data is a sum of 2D sinusoids in noise. The APES spectral estimator has a two-step filtering interpretation:
# Passing the data through a bank of FIR bandpass filters with varying center frequency $\omega$.
# Obtaining the spectrum estimate for $\omega \in [0, 2\pi)$ from the filtered data.

Empirically, the APES method results in wider spectral peaks than the Capon method, but more accurate spectral estimates for amplitude in SAR. In the Capon method, although the spectral peaks are narrower than in APES, the sidelobes are higher. As a result, the amplitude estimate is expected to be less accurate for the Capon method than for the APES method. The APES method requires about 1.5 times more computation than the Capon method.

Advantages

* Filtering reduces the number of available samples, but when the filter is designed carefully, the increase in signal-to-noise ratio (SNR) in the filtered data compensates for this reduction, and the amplitude of a sinusoidal component with frequency

Disadvantages

* The autocovariance matrix is much larger in 2D than in 1D, so the method is limited by the available memory.

SAMV method

The SAMV method is a parameter-free algorithm based on sparse signal reconstruction. It achieves super-resolution and is robust to highly correlated signals. The name emphasizes its basis on the asymptotically minimum variance (AMV) criterion. It is a powerful tool for the recovery of both the amplitude and frequency characteristics of multiple highly correlated sources in challenging environments (e.g., limited number of snapshots, low

Advantages

The SAMV method is capable of achieving resolution higher than some established parametric methods, e.g.,

Disadvantages

Parametric subspace decomposition methods

Eigenvector method

This subspace decomposition method separates the eigenvectors of the autocovariance matrix into those corresponding to signals and to clutter. The amplitude of the image at a point (

Advantages

* Shows features of the image more accurately.

Disadvantages

* High computational complexity.

MUSIC method

Advantages

Disadvantages

* Resolution loss due to the averaging operation.

Backprojection algorithm

The backprojection algorithm has two variants: ''time-domain backprojection'' and ''frequency-domain backprojection''. Time-domain backprojection has more advantages than the frequency-domain variant and is therefore preferred. It forms images or spectra by matching the data acquired from the radar against what it expects to receive, and can be considered an ideal matched filter for synthetic-aperture radar. Because it handles non-ideal motion and sampling, no separate motion-compensation step is needed. It can also be used for various imaging geometries.

Advantages

* ''It is invariant to the imaging mode'': it uses the same algorithm irrespective of the imaging mode, whereas frequency-domain methods require changes depending on the mode and geometry.
* Ambiguous azimuth aliasing usually occurs when frequencies exceed the Nyquist spatial sampling requirements. Unambiguous aliasing occurs in squinted geometries where the signal bandwidth does not exceed the sampling limits but has undergone "spectral wrapping". The backprojection algorithm is not affected by either kind of aliasing.
* ''It matches the space/time filter'': it uses information about the imaging geometry to produce a pixel-by-pixel varying matched filter that approximates the expected return signal. This usually yields antenna gain compensation.
* Following from the previous advantage, the backprojection algorithm compensates for motion. This is an advantage in areas with low altitudes.

Disadvantages

* The computational expense of the backprojection algorithm is higher than that of frequency-domain methods.
* It requires very precise knowledge of the imaging geometry.

Application: geosynchronous orbit synthetic-aperture radar (GEO-SAR)

In GEO-SAR, the backprojection algorithm works very well for focusing on the relative moving track. It uses the concept of azimuth processing in the time domain. For the satellite-ground geometry, GEO-SAR plays a significant role. The procedure is as follows.
# The acquired raw data is segmented into sub-apertures to simplify and speed up the procedure.
# The range of the data is then compressed, using matched filtering, for every segment/sub-aperture created. It is given by

Comparison between the algorithms

Capon and APES can yield more accurate spectral estimates with much lower sidelobes and narrower spectral peaks than the fast Fourier transform (FFT) method, which is also a special case of the FIR filtering approaches. Although the APES algorithm gives slightly wider spectral peaks than the Capon method, it yields more accurate overall spectral estimates than both the Capon and FFT methods.

The FFT method is fast and simple but has larger sidelobes. Capon has high resolution but high computational complexity. EV also has high resolution and high computational complexity. APES has higher resolution and is faster than Capon and EV, but still has high computational complexity.

The MUSIC method is not generally suitable for SAR imaging, as whitening the clutter eigenvalues destroys the spatial inhomogeneities associated with terrain clutter or other diffuse scattering in SAR imagery. However, it offers higher frequency resolution in the resulting power spectral density (PSD) than FFT-based methods.

The backprojection algorithm is computationally expensive. It is specifically attractive for sensors that are wideband, wide-angle, and/or have long coherent apertures with substantial off-track motion.

Multistatic operation

SAR requires that echo captures be taken at multiple antenna positions. The more captures taken (at different antenna locations), the more reliable the target characterization.

Multiple captures can be obtained by moving a single antenna to different locations, by placing multiple stationary antennas at different locations, or by combinations thereof.

The advantage of a single moving antenna is that it can be easily placed in any number of positions to provide any number of monostatic waveforms. For example, an antenna mounted on an airplane takes many captures per second as the plane travels.

The principal advantages of multiple static antennas are that a moving target can be characterized (assuming the capture electronics are fast enough), that no vehicle or motion machinery is necessary, and that antenna positions need not be derived from other, sometimes unreliable, information. (One problem with SAR aboard an airplane is knowing precise antenna positions as the plane travels.)

For multiple static antennas, all combinations of monostatic and multistatic radar waveform captures are possible. Note, however, that it is not advantageous to capture a waveform for each of both transmission directions for a given pair of antennas, because those waveforms will be identical. When multiple static antennas are used, the total number of unique echo waveforms that can be captured is
: $\dfrac{N^2 + N}{2}$
where ''N'' is the number of unique antenna positions (''N'' monostatic captures plus one capture for each unordered pair of antennas).

Scanning modes

Stripmap mode airborne SAR

The antenna stays in a fixed position, and may be orthogonal to the flight path or squinted slightly forward or backward.

As the antenna aperture travels along the flight path, pulses are transmitted at a rate equal to the pulse repetition frequency (PRF). The lower bound of the PRF is determined by the Doppler bandwidth of the radar. The backscatter from each of these pulses is cumulatively added on a pixel-by-pixel basis to attain the fine azimuth resolution desired in radar imagery.
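The lower PRF bound can be estimated with a common textbook approximation, which is an assumption of this sketch rather than something stated above: for a broadside stripmap geometry with an azimuth beamwidth of roughly λ/L (antenna length L) and a Doppler shift of roughly (2v/λ)·θ for small angles θ from broadside, the Doppler bandwidth is about 2v/L. The function names are hypothetical.

```python
def doppler_bandwidth_hz(platform_speed_m_s, antenna_length_m):
    """Approximate azimuth Doppler bandwidth of a broadside stripmap SAR.

    Assumes beamwidth ~ wavelength / antenna_length and small angles,
    so the wavelength cancels and the bandwidth is about 2 v / L.
    """
    return 2.0 * platform_speed_m_s / antenna_length_m


def prf_is_sufficient(prf_hz, platform_speed_m_s, antenna_length_m):
    """True if the PRF samples the Doppler bandwidth without azimuth aliasing."""
    return prf_hz >= doppler_bandwidth_hz(platform_speed_m_s, antenna_length_m)


# Illustrative numbers only: a 10 m antenna moving at 7500 m/s.
minimum_prf = doppler_bandwidth_hz(7500.0, 10.0)
```

Under these assumptions a longer antenna lowers the required PRF, at the cost of a narrower Doppler bandwidth and therefore coarser azimuth resolution.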


Spotlight mode SAR

The spotlight synthetic aperture is given by

:

Scan mode SAR

When operating as a scan mode SAR, the antenna beam sweeps periodically and thus covers a much larger area than the spotlight and stripmap modes. However, the azimuth resolution becomes much lower than in stripmap mode because of the decreased azimuth bandwidth. There is therefore a trade-off between the azimuth resolution and the scan area of SAR. Here, the synthetic aperture is shared between the sub-swaths, and it is not contiguous within one sub-swath. A mosaic operation is required in the azimuth and range directions to join the azimuth bursts and the range sub-swaths.

* ScanSAR makes the swath very wide.

* The azimuth signal consists of many bursts.

* The azimuth resolution is limited by the burst duration.

* Each target's frequency content depends on its azimuth position.


Special techniques

Polarimetry

Radar waves have a polarization. Different materials reflect radar waves with different intensities, but

SAR polarimetry

SAR polarimetry is a technique used for deriving qualitative and quantitative physical information for land, snow and ice, ocean and urban applications based on the measurement and exploration of the polarimetric properties of man-made and natural scatterers. ''Terrain'' and ''land use'' classification is one of the most important applications of polarimetric synthetic-aperture radar (PolSAR).

SAR polarimetry uses a scattering matrix (S) to identify the scattering behavior of objects after an interaction with an electromagnetic wave. The matrix is represented by a combination of the horizontal and vertical polarization states of the transmitted and received signals.
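One common way to write this matrix is shown below. The notation is an assumption of this sketch: superscripts ''i'' and ''s'' denote the incident and scattered fields, H and V the horizontal and vertical polarization components, and the range-dependent propagation factor is omitted. The subscript ordering varies between authors.

```latex
\begin{bmatrix} E_H^{s} \\ E_V^{s} \end{bmatrix}
=
\underbrace{\begin{bmatrix} S_{HH} & S_{HV} \\ S_{VH} & S_{VV} \end{bmatrix}}_{S}
\begin{bmatrix} E_H^{i} \\ E_V^{i} \end{bmatrix}
```

Each element is a complex number, so it carries both the amplitude and the phase of one transmit/receive polarization combination. For monostatic backscatter from reciprocal media the two cross-polarized terms are usually taken to be equal.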


Three-component scattering power model

The three-component scattering power model by Freeman and Durden is successfully used for the decomposition of a PolSAR image, applying the reflection symmetry condition using the covariance matrix. The method is based on simple physical scattering mechanisms (surface scattering, double-bounce scattering, and volume scattering). The advantage of this scattering model is that it is simple and easy to implement for image processing. There are 2 major approaches for a 3

Four-component scattering power model

For PolSAR image analysis, there can be cases where the reflection symmetry condition does not hold. In those cases a ''four-component scattering model'' can be used to decompose polarimetric synthetic-aperture radar (SAR) images. This approach deals with the non-reflection-symmetric scattering case. It extends the three-component decomposition method introduced by Freeman and Durden by adding the helix scattering power as a fourth component. This helix power term generally appears in complex urban areas but disappears for a natural distributed scatterer.

There is also an improved method using the four-component decomposition algorithm, which was introduced for general PolSAR data image analysis. The SAR data is first filtered (speckle reduction), then each pixel is decomposed by the four-component model to determine the surface scattering power (

Interferometry

Rather than discarding the phase data, information can be extracted from it. If two observations of the same terrain from very similar positions are available, aperture synthesis can be performed to provide the resolution performance which would be given by a radar system with dimensions equal to the separation of the two measurements. This technique is called interferometric SAR (InSAR).

Differential interferometry

Differential interferometry (D-InSAR) requires at least two images together with a DEM. The DEM can be produced from GPS measurements or generated by interferometry, as long as the time between acquisition of the image pairs is short, which guarantees minimal distortion of the image of the target surface. In principle, three images of the ground area with similar acquisition geometry are often adequate for D-InSAR.

The principle for detecting ground movement is quite simple. One interferogram is created from the first two images; this is also called the reference interferogram or topographical interferogram. A second interferogram is created that captures topography plus distortion. Subtracting the latter from the reference interferogram can reveal differential fringes, indicating movement. This three-image D-InSAR technique is called the 3-pass or double-difference method.

Differential fringes that remain in the differential interferogram are a result of SAR range changes of any displaced point on the ground from one interferogram to the next. In the differential interferogram, each fringe (a single phase cycle) corresponds to one SAR wavelength of path change, which is about 5.6 cm for ERS and RADARSAT. Surface displacement away from the satellite look direction causes an increase in path (translating to phase) difference. Since the signal travels from the SAR antenna to the target and back again, the path change is twice the displacement. This means that in differential interferometry one fringe cycle, −π to +π or one wavelength, corresponds to a displacement relative to the SAR antenna of only half a wavelength (2.8 cm). There are various publications on measuring subsidence, slope stability, landslides, glacier movement, etc. using D-InSAR.
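The half-wavelength-per-fringe relationship can be checked numerically. The sketch below assumes an already unwrapped differential phase and takes positive phase to mean motion away from the sensor; that sign convention and the function name `los_displacement_m` are assumptions of this example.

```python
import math


def los_displacement_m(differential_phase_rad, wavelength_m):
    """Line-of-sight displacement implied by an unwrapped differential phase.

    The two-way path means a full 2*pi cycle corresponds to wavelength / 2.
    """
    return differential_phase_rad * wavelength_m / (4.0 * math.pi)


# One full fringe at a 5.6 cm wavelength (the ERS / RADARSAT value quoted above).
one_fringe = los_displacement_m(2.0 * math.pi, 0.056)
```

Here `one_fringe` evaluates to approximately 0.028 m, matching the 2.8 cm figure above.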
A further advancement of this technique uses differential interferometry from satellite SAR ascending and descending passes to estimate 3-D ground movement. Research in this area has shown that 3-D ground movement can be measured with accuracies comparable to GPS-based measurements.

Tomo-SAR

SAR tomography is a subfield of multi-baseline interferometry. It was developed to give imaging a 3D view, and it uses the beamforming concept. It can be used when the application requires attention to both the magnitude and the phase components of the SAR data during information retrieval. One of the major advantages of Tomo-SAR is that it can separate the scattering contributions, irrespective of how different their motions are. Combining Tomo-SAR with differential interferometry yields a technique named "differential tomography" (Diff-Tomo).

Tomo-SAR has radar-imaging applications in the depiction of ice volume and forest temporal coherence (temporal coherence describes the correlation between waves observed at different moments in time).

Ultra-wideband SAR

Conventional radar systems emit bursts of radio energy with a fairly narrow range of frequencies. A narrow-band channel, by definition, does not allow rapid changes in modulation. Since it is the change in a received signal that reveals the time of arrival of the signal (an unchanging signal would reveal nothing about "when" it reflected from the target), a signal with only a slow change in modulation cannot reveal the distance to the target as well as a signal with a quick change in modulation.

Ultra-wideband (UWB) refers to any radio transmission that uses a very large bandwidth, which is the same as saying it uses very rapid changes in modulation. Although there is no set bandwidth value that qualifies a signal as "UWB", systems using bandwidths greater than a sizable portion of the center frequency (typically about ten percent or so) are most often called "UWB" systems. A typical UWB system might use a bandwidth of one-third to one-half of its center frequency. For example, some systems use a bandwidth of about 1 GHz centered around 3 GHz.

The two most common methods to increase signal bandwidth used in UWB radar, including SAR, are very short pulses and high-bandwidth chirping. A general description of chirping appears elsewhere in this article. The bandwidth of a chirped system can be as narrow or as wide as the designers desire. Pulse-based UWB systems, being the more common method associated with the term "UWB radar", are described here.

A pulse-based radar system transmits very short pulses of electromagnetic energy, typically only a few cycles or fewer. A very short pulse is a very rapidly changing signal, and thus occupies a very wide bandwidth. This allows far more accurate measurement of distance, and thus finer resolution.

The main disadvantage of pulse-based UWB SAR is that the transmitting and receiving front-end electronics are difficult to design for high-power applications. Specifically, the transmit duty cycle is so exceptionally low and the pulse time so exceptionally short that the electronics must be capable of extremely high instantaneous power to rival the average power of conventional radars. (Although it is true that UWB provides a notable gain in channel capacity over a narrow band signal because of the relationship of bandwidth in the

Doppler-beam sharpening

Doppler beam sharpening commonly refers to the method of processing unfocused real-beam phase history to achieve better resolution than could be achieved by processing the real beam without it. Because the real aperture of the radar antenna is so small (compared to the wavelength in use), the radar energy spreads over a wide area (usually many degrees wide in a direction orthogonal to the direction of travel of the platform (aircraft)). Doppler-beam sharpening takes advantage of the motion of the platform in that targets ahead of the platform return a Doppler upshifted signal (slightly higher in frequency) and targets behind the platform return a Doppler downshifted signal (slightly lower in frequency).

The amount of shift varies with the angle forward or backward from the orthogonal direction. By knowing the speed of the platform, the target signal return is placed in a specific angle "bin" that changes over time. Signals are integrated over time, and thus the radar "beam" is synthetically reduced to a much smaller aperture, or more accurately (and based on the ability to distinguish smaller Doppler shifts) the system can have hundreds of very "tight" beams concurrently. This technique dramatically improves angular resolution; however, it is far more difficult to take advantage of this technique for range resolution. (See

Chirped (pulse-compressed) radars

A common technique for many radar systems (usually also found in SAR systems) is to "chirp" the signal.

Typical operation

Data collection

In a typical SAR application, a single radar antenna is attached to an aircraft or spacecraft such that a substantial component of the antenna's radiated beam has a wave-propagation direction perpendicular to the flight-path direction. The beam is allowed to be broad in the vertical direction so it will illuminate the terrain from nearly beneath the aircraft out toward the horizon.

Image resolution and bandwidth

Resolution in the range dimension of the image is accomplished by creating pulses which define very short time intervals, either by emitting short pulses consisting of a carrier frequency and the necessary sidebands, all within a certain bandwidth, or by using longer "chirp pulses" in which frequency varies (often linearly) with time within that bandwidth. The differing times at which echoes return allow points at different distances to be distinguished.

Image resolution of SAR in its range coordinate (expressed in image pixels per distance unit) is mainly proportional to the radio bandwidth of whatever type of pulse is used. In the cross-range coordinate, the similar resolution is mainly proportional to the bandwidth of the Doppler shift of the signal returns within the beamwidth. Since Doppler frequency depends on the angle of the scattering point's direction from the broadside direction, the Doppler bandwidth available within the beamwidth is the same at all ranges. Hence the theoretical spatial resolution limits in both image dimensions remain constant with variation of range. However, in practice, both the errors that accumulate with data-collection time and the particular techniques used in post-processing further limit cross-range resolution at long ranges.

Image resolution and beamwidth

The total signal is that from a beamwidth-sized patch of the ground. To produce a beam that is narrow in the cross-range direction,

Pulse transmission and reception

The conversion of return delay time to geometric range can be very accurate because of the natural constancy of the speed and direction of propagation of electromagnetic waves. However, for an aircraft flying through the never-uniform and never-quiescent atmosphere, the relating of pulse transmission and reception times to successive geometric positions of the antenna must be accompanied by constant adjusting of the return phases to account for sensed irregularities in the flight path. SARs in spacecraft avoid that atmosphere problem, but still must make corrections for known antenna movements due to rotations of the spacecraft, even those that are reactions to movements of onboard machinery. Locating a SAR in a crewed space vehicle may require that the humans carefully remain motionless relative to the vehicle during data collection periods.

Returns from scatterers within the range extent of any image are spread over a matching time interval. The inter-pulse period must be long enough to allow farthest-range returns from any pulse to finish arriving before the nearest-range ones from the next pulse begin to appear, so that those do not overlap each other in time. On the other hand, the interpulse rate must be fast enough to provide sufficient samples for the desired across-range (or across-beam) resolution. When the radar is to be carried by a high-speed vehicle and is to image a large area at fine resolution, those conditions may clash, leading to what has been called SAR's ambiguity problem. The same considerations apply to "conventional" radars also, but this problem occurs significantly only when resolution is so fine as to be available only through SAR processes. Since the basis of the problem is the information-carrying capacity of the single signal-input channel provided by one antenna, the only solution is to use additional channels fed by additional antennas.
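The two competing timing conditions can be made concrete with a small calculation. In this sketch the upper PRF limit comes from requiring the inter-pulse period to cover the echo spread across the imaged range extent plus the pulse duration, and the lower limit is simply passed in as a number. The function names and the example ranges are assumptions for illustration.

```python
C = 299_792_458.0  # speed of light in m/s


def max_prf_for_swath(near_range_m, far_range_m, pulse_duration_s=0.0):
    """Highest PRF for which echoes of one pulse end before the next pulse's echoes begin."""
    echo_spread_s = 2.0 * (far_range_m - near_range_m) / C + pulse_duration_s
    return 1.0 / echo_spread_s


def usable_prf_window(min_prf_hz, near_range_m, far_range_m, pulse_duration_s=0.0):
    """Return (min, max) PRF if both conditions can be met, or None if they clash."""
    upper = max_prf_for_swath(near_range_m, far_range_m, pulse_duration_s)
    return (min_prf_hz, upper) if min_prf_hz <= upper else None


# A 50 km slant-range extent leaves room above a 1500 Hz lower bound;
# a 200 km extent does not, which is the ambiguity problem described above.
narrow = usable_prf_window(1500.0, 800e3, 850e3)
wide = usable_prf_window(1500.0, 800e3, 1000e3)
```

This ignores practical details such as transmit blanking and nadir returns, so it gives an optimistic upper limit.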
With such additional channels, the system becomes a hybrid of a SAR and a phased array, sometimes called a Vernier array.

Data processing

Combining the series of observations requires significant computational resources, usually using

Amplitude data

The amplitude information, when shown in a map-like display, gives information about ground cover in much the same way that a black-and-white photo does. Variations in processing may also be done in either vehicle-borne stations or ground stations for various purposes, so as to accentuate certain image features for detailed target-area analysis.

Phase data

Although the phase information in an image is generally not made available to a human observer of an image display device, it can be preserved numerically, and sometimes allows certain additional features of targets to be recognized.

Coherence speckle

Unfortunately, the phase differences between adjacent image picture elements ("pixels") also produce random interference effects called "coherence speckle", which is a sort of graininess with dimensions on the order of the resolution, causing the concept of resolution to take on a subtly different meaning. This effect is the same as is apparent both visually and photographically in laser-illuminated optical scenes. The scale of that random speckle structure is governed by the size of the synthetic aperture in wavelengths, and cannot be finer than the system's resolution. Speckle structure can be subdued at the expense of resolution.

Optical holography

Before rapid digital computers were available, the data processing was done using an optical holography technique. The analog radar data were recorded as a holographic interference pattern on photographic film at a scale permitting the film to preserve the signal bandwidths (for example, 1:1,000,000 for a radar using a 0.6-meter wavelength). Then light using, for example, 0.6-micrometer waves (as from a helium–neon laser) passing through the hologram could project a terrain image at a scale recordable on another film at reasonable processor focal distances of around a meter. This worked because both SAR and phased arrays are fundamentally similar to optical holography, but using microwaves instead of light waves. The "optical data-processors" developed for this radar purpose (Emmett N. Leith, "A short history of the Optics Group of the Willow Run Laboratories", in Trends in Optics: Research, Development, and Applications, Anna Consortini, Academic Press, San Diego, 1996; W. M. Brown, J. L. Walker, and W. R. Boario, "Sighted Automation and Fine Resolution Imaging", IEEE Transactions on Aerospace and Electronic Systems, Vol. 40, No. 4, October 2004, pp. 1426–1445) were the first effective analog optical computer systems, and were, in fact, devised before the holographic technique was fully adapted to optical imaging. Because of the different sources of range and across-range signal structures in the radar signals, optical data-processors for SAR included not only both spherical and cylindrical lenses, but sometimes conical ones.

Image appearance

The following considerations apply also to real-aperture terrain-imaging radars, but are more consequential when resolution in range is matched to a cross-beam resolution that is available only from a SAR.

Range, cross-range, and angles

The two dimensions of a radar image are range and cross-range. Radar images of limited patches of terrain can resemble oblique photographs, but not ones taken from the location of the radar. This is because the range coordinate in a radar image is perpendicular to the vertical-angle coordinate of an oblique photo. The apparent entrance-pupil position (or

Visibility

When viewed as specified above, fine-resolution radar images of small areas can appear most nearly like familiar optical ones, for two reasons. The first reason is easily understood by imagining a flagpole in the scene. The slant-range to its upper end is less than that to its base. Therefore, the pole can appear correctly top-end up only when viewed in the above orientation. Secondly, the radar illumination then being downward, shadows are seen in their most-familiar "overhead-lighting" direction. The image of the pole's top will overlay that of some terrain point which is on the same slant range arc but at a shorter horizontal range ("ground-range"). Images of scene surfaces which faced both the illumination and the apparent eyepoint will have geometries that resemble those of an optical scene viewed from that eyepoint. However, slopes facing the radar will be foreshortened and ones facing away from it will be lengthened from their horizontal (map) dimensions. The former will therefore be brightened and the latter dimmed. Returns from slopes steeper than perpendicular to slant range will be overlaid on those of lower-elevation terrain at a nearer ground-range, both being visible but intermingled. This is especially the case for vertical surfaces like the walls of buildings. Another viewing inconvenience that arises when a surface is steeper than perpendicular to the slant range is that it is then illuminated on one face but "viewed" from the reverse face. Then one "sees", for example, the radar-facing wall of a building as if from the inside, while the building's interior and the rear wall (that nearest to, hence expected to be optically visible to, the viewer) have vanished, since they lack illumination, being in the shadow of the front wall and the roof. Some return from the roof may overlay that from the front wall, and both of those may overlay return from terrain in front of the building. 
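The flagpole overlay described above can be checked numerically. The following is a minimal sketch assuming flat terrain, a radar at a fixed altitude directly above the ground-range origin, and straight-line propagation; the function names and the example numbers are hypothetical.

```python
import math


def slant_range(ground_range_m, height_m, radar_altitude_m):
    """Straight-line distance from the radar to a point at the given
    ground range and height above flat terrain."""
    return math.hypot(ground_range_m, radar_altitude_m - height_m)


def overlay_ground_range(ground_range_m, height_m, radar_altitude_m):
    """Ground range of the flat-terrain point that shares its slant range
    with an elevated point, or None if no such terrain point exists."""
    r_top = slant_range(ground_range_m, height_m, radar_altitude_m)
    under_root = r_top ** 2 - radar_altitude_m ** 2
    if under_root < 0:
        # The elevated point is closer to the radar than any ground point.
        return None
    return math.sqrt(under_root)


# Made-up example: 30 m pole, 10 km ground range, radar at 5 km altitude.
r_base = slant_range(10_000.0, 0.0, 5_000.0)
r_top = slant_range(10_000.0, 30.0, 5_000.0)
g_overlay = overlay_ground_range(10_000.0, 30.0, 5_000.0)
```

In this example the slant range to the pole's top is shorter than that to its base, and the top is imaged on terrain roughly 15 m nearer in ground range than the base, consistent with the overlay toward shorter ground range described above.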
The visible building shadow will include those of all illuminated items. Long shadows may exhibit blurred edges due to the illuminating antenna's movement during the "time exposure" needed to create the image.

Mirroring artefacts and shadows

Surfaces that we usually consider rough will, if that roughness consists of relief less than the radar wavelength, behave as smooth mirrors, showing, beyond such a surface, additional images of items in front of it. Those mirror images will appear within the shadow of the mirroring surface, sometimes filling the entire shadow, thus preventing recognition of the shadow. The direction of overlay of any scene point is not directly toward the radar, but toward that point of the SAR's current path direction that is nearest to the target point. If the SAR is "squinting" forward or aft away from the exactly broadside direction, then the illumination direction, and hence the shadow direction, will not be opposite to the overlay direction, but slanted to right or left from it. An image will appear with the correct projection geometry when viewed so that the overlay direction is vertical, the SAR's flight-path is above the image, and range increases somewhat downward.

Objects in motion

Objects in motion within a SAR scene alter the Doppler frequencies of the returns. Such objects therefore appear in the image at locations offset in the across-range direction by amounts proportional to the range-direction component of their velocity. Road vehicles may be depicted off the roadway and therefore not recognized as road traffic items. Trains appearing away from their tracks are more easily properly recognized by their length parallel to known trackage as well as by the absence of an equal length of railbed signature and of some adjacent terrain, both having been shadowed by the train. While images of moving vessels can be offset from the line of the earlier parts of their wakes, the more recent parts of the wake, which still partake of some of the vessel's motion, appear as curves connecting the vessel image to the relatively quiescent far-aft wake. In such identifiable cases, speed and direction of the moving items can be determined from the amounts of their offsets. The along-track component of a target's motion causes some defocus. Random motions such as that of wind-driven tree foliage, vehicles driven over rough terrain, or humans or other animals walking or running generally render those items not focusable, resulting in blurring or even effective invisibility.

These considerations, along with the speckle structure due to coherence, take some getting used to in order to correctly interpret SAR images. To assist in that, large collections of significant target signatures have been accumulated by performing many test flights over known terrains and cultural objects.

History

Relationship to phased arrays

A technique closely related to SAR uses an array (referred to as a "

See also

References

Bibliography

External links

InSAR measurements from the Space Shuttle

Images from the Space Shuttle SAR instrument

The Alaska Satellite Facility has numerous technical documents, including an introductory text on SAR theory and scientific applications

SAR Journal

SAR Journal tracks the Synthetic Aperture Radar (SAR) industry
