Probability

Probability is a branch of mathematics and statistics concerning events and numerical descriptions of how likely they are to occur. The probability of an event is a number between 0 and 1; the larger the probability, the more likely an event is to occur (Alan Stuart and Keith Ord, ''Kendall's Advanced Theory of Statistics, Volume 1: Distribution Theory'', 6th ed., 2009; William Feller, ''An Introduction to Probability Theory and Its Applications'', vol. 1, 3rd ed., Wiley, 1968). This number is often expressed as a percentage (%), ranging from 0% to 100%. A simple example is the tossing of a fair (unbiased) coin. Since the coin is fair, the two outcomes ("heads" and "tails") are equally probable; the probability of "heads" equals the probability of "tails"; and since no other outcomes are possible, the probability of each is 1/2 (which could also be written as 0.5 or 50%).

These concepts have been given an axiomatic mathematical formalization in ''probability theory'', which is used widely in areas of study such as statistics, mathematics, science, finance, gambling, artificial intelligence, machine learning, computer science, game theory, and philosophy to, for example, draw inferences about the expected frequency of events. Probability theory is also used to describe the underlying mechanics and regularities of complex systems.

Etymology

The word ''probability'' derives from the Latin ''probabilitas'', which can also mean "probity", a measure of the authority of a witness in a legal case in Europe, and often correlated with the witness's nobility. In a sense, this differs greatly from the modern meaning of ''probability'', which in contrast is a measure of the weight of empirical evidence, and is arrived at from inductive reasoning and statistical inference (Hacking, I. (2006) ''The Emergence of Probability: A Philosophical Study of Early Ideas about Probability, Induction and Statistical Inference'', Cambridge University Press).

Interpretations

When dealing with random experiments (i.e., experiments that are random and well-defined) in a purely theoretical setting (like tossing a coin), probabilities can be numerically described by the number of desired outcomes divided by the total number of all outcomes, provided all outcomes are equally likely. This is referred to as theoretical probability (in contrast to empirical probability, dealing with probabilities in the context of real experiments). The probability is a number between 0 and 1; the larger the probability, the more likely the desired outcome is to occur. For example, tossing a coin twice will yield "head-head", "head-tail", "tail-head", and "tail-tail" outcomes. The probability of getting an outcome of "head-head" is 1 out of 4 outcomes, or, in numerical terms, 1/4, 0.25 or 25%. The probability of getting at least one head is 3 out of 4, or 0.75, so this event is more likely to occur. However, when it comes to practical application, there are two major competing categories of probability interpretations, whose adherents hold different views about the fundamental nature of probability:

* Objectivists assign numbers to describe some objective or physical state of affairs. The most popular version of objective probability is frequentist probability, which claims that the probability of a random event denotes the ''relative frequency of occurrence'' of an experiment's outcome when the experiment is repeated indefinitely. This interpretation considers probability to be the relative frequency "in the long run" of outcomes. A modification of this is propensity probability, which interprets probability as the tendency of some experiment to yield a certain outcome, even if it is performed only once.

* Subjectivists assign numbers per subjective probability, that is, as a degree of belief. The degree of belief has been interpreted as "the price at which you would buy or sell a bet that pays 1 unit of utility if E, 0 if not E", although that interpretation is not universally agreed upon. The most popular version of subjective probability is Bayesian probability, which includes expert knowledge as well as experimental data to produce probabilities. The expert knowledge is represented by some (subjective) prior probability distribution. These data are incorporated in a likelihood function. The product of the prior and the likelihood, when normalized, results in a posterior probability distribution that incorporates all the information known to date. By Aumann's agreement theorem, Bayesian agents whose prior beliefs are similar will end up with similar posterior beliefs. However, sufficiently different priors can lead to different conclusions, regardless of how much information the agents share.
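As a minimal sketch, assuming two fair coins, the Python below contrasts the counting (theoretical) definition with a frequentist estimate obtained by repeating a simulated experiment many times. The function names are illustrative only, and a seeded pseudo-random generator merely stands in for physical coin tosses.

```python
import itertools
import random
from fractions import Fraction


def theoretical_probability(event, n_tosses=2):
    """Favourable outcomes divided by all equally likely outcomes."""
    outcomes = list(itertools.product("HT", repeat=n_tosses))
    favourable = sum(1 for outcome in outcomes if event(outcome))
    return Fraction(favourable, len(outcomes))


def relative_frequency(event, n_trials, n_tosses=2, seed=0):
    """Frequentist estimate: share of simulated repetitions in which the event occurs."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_trials):
        outcome = tuple(rng.choice("HT") for _ in range(n_tosses))
        if event(outcome):
            hits += 1
    return hits / n_trials


def both_heads(outcome):
    return outcome == ("H", "H")


def at_least_one_head(outcome):
    return "H" in outcome


print(theoretical_probability(both_heads))         # 1/4
print(theoretical_probability(at_least_one_head))  # 3/4
print(relative_frequency(both_heads, 100_000))     # close to 0.25, not exactly equal
```

The simulated frequency approaches the counted value as the number of trials grows, which is the frequentist reading; for any finite run it is only an estimate.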

History

The scientific study of probability is a modern development of mathematics. Gambling shows that there has been an interest in quantifying the ideas of probability throughout history, but exact mathematical descriptions arose much later. There are reasons for the slow development of the mathematics of probability. Whereas games of chance provided the impetus for the mathematical study of probability, fundamental issues are still obscured by superstitions.

According to Richard Jeffrey, "Before the middle of the seventeenth century, the term 'probable' (Latin ''probabilis'') meant ''approvable'', and was applied in that sense, univocally, to opinion and to action. A probable action or opinion was one such as sensible people would undertake or hold, in the circumstances." (Jeffrey, R.C. (1992) ''Probability and the Art of Judgment'', Cambridge University Press, pp. 54–55.) However, in legal contexts especially, 'probable' could also apply to propositions for which there was good evidence (Franklin, J. (2001) ''The Science of Conjecture: Evidence and Probability Before Pascal'', Johns Hopkins University Press, pp. 22, 113, 127).

The sixteenth-century Italian polymath Gerolamo Cardano demonstrated the efficacy of defining odds as the ratio of favourable to unfavourable outcomes (which implies that the probability of an event is given by the ratio of favourable outcomes to the total number of possible outcomes).
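A short Python sketch of the conversion between odds and probability implied by this definition; the helper names are hypothetical, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction


def odds_to_probability(favourable, unfavourable):
    """Odds favourable:unfavourable -> favourable / (favourable + unfavourable)."""
    return Fraction(favourable, favourable + unfavourable)


def probability_to_odds(p):
    """Return the odds p : (1 - p) as a reduced pair of integers (requires p < 1)."""
    p = Fraction(p)
    ratio = p / (1 - p)
    return ratio.numerator, ratio.denominator


# Rolling a six: 1 favourable outcome against 5 unfavourable ones.
print(odds_to_probability(1, 5))            # 1/6
# An event that is 40% probable has odds of 2 to 3.
print(probability_to_odds(Fraction(2, 5)))  # (2, 3)
```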

Aside from the elementary work by Cardano, the doctrine of probabilities dates to the correspondence of Pierre de Fermat and Blaise Pascal (1654). Christiaan Huygens (1657) gave the earliest known scientific treatment of the subject. Jakob Bernoulli's ''Ars Conjectandi'' (posthumous, 1713) and Abraham de Moivre's ''Doctrine of Chances'' (1718) treated the subject as a branch of mathematics. See Ian Hacking's ''The Emergence of Probability'' and James Franklin's ''The Science of Conjecture'' for histories of the early development of the very concept of mathematical probability.

The theory of errors may be traced back to Roger Cotes's ''Opera Miscellanea'' (posthumous, 1722), but a memoir prepared by Thomas Simpson in 1755 (printed 1756) first applied the theory to the discussion of errors of observation. The reprint (1757) of this memoir lays down the axioms that positive and negative errors are equally probable, and that certain assignable limits define the range of all errors. Simpson also discusses continuous errors and describes a probability curve.

The first two laws of error that were proposed both originated with Pierre-Simon Laplace. The first law was published in 1774, and stated that the frequency of an error could be expressed as an exponential function of the numerical magnitude of the error, disregarding sign. The second law of error was proposed in 1778 by Laplace, and stated that the frequency of the error is an exponential function of the square of the error (Wilson, E.B. (1923) "First and second laws of error", ''Journal of the American Statistical Association'', 18, 143). The second law of error is called the normal distribution or the Gauss law. "It is difficult historically to attribute that law to Gauss, who in spite of his well-known precocity had probably not made this discovery before he was two years old."
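To make the contrast concrete, here is a Python sketch of the two laws as normalized densities. The scale parameters `b` and `sigma` are a modern parameterization assumed for illustration, not the notation of the original memoirs; the crude midpoint-rule integration only checks that each curve encloses unit area.

```python
import math


def first_law(x, b=1.0):
    """Laplace's first law: density decays exponentially in |x| (the error, sign ignored)."""
    return math.exp(-abs(x) / b) / (2 * b)


def second_law(x, sigma=1.0):
    """Laplace's second law (the normal curve): density decays exponentially in x squared."""
    return math.exp(-x * x / (2 * sigma * sigma)) / (sigma * math.sqrt(2 * math.pi))


def total_area(density, lo=-50.0, hi=50.0, steps=200_000):
    """Midpoint-rule approximation of the integral of a density over [lo, hi]."""
    width = (hi - lo) / steps
    return sum(density(lo + (i + 0.5) * width) for i in range(steps)) * width


for name, f in [("first law", first_law), ("second law", second_law)]:
    print(name, round(total_area(f), 6))  # both areas come out very close to 1
```

With `b = 1` and `sigma = 1`, the first law decays more slowly far from zero (for example at `x = 3`), reflecting exponential decay in the magnitude of the error rather than in its square.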

Daniel Bernoulli (1778) introduced the principle of the maximum product of the probabilities of a system of concurrent errors.

Adrien-Marie Legendre (1805) developed the method of least squares, and introduced it in his ''Nouvelles méthodes pour la détermination des orbites des comètes'' (''New Methods for Determining the Orbits of Comets''). In ignorance of Legendre's contribution, an Irish-American writer, Robert Adrain, editor of "The Analyst" (1808), first deduced the law of facility of error,

$$\phi(x) = c\,e^{-h^2 x^2},$$

where $h$ is a constant depending on precision of observation, and $c$ is a scale factor ensuring that the area under the curve equals 1. He gave two proofs, the second being essentially the same as John Herschel's (1850). Gauss gave the first proof that seems to have been known in Europe (the third after Adrain's) in 1809. Further proofs were given by Laplace (1810, 1812), Gauss (1823), James Ivory (1825, 1826), Hagen (1837), Friedrich Bessel (1838), W.F. Donkin (1844, 1856), and Morgan Crofton (1870). Other contributors were Ellis (1844), De Morgan (1864), Glaisher (1872), and Giovanni Schiaparelli (1875). Peters's (1856) formula for ''r'', the probable error of a single observation, is well known.
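The scale factor in Adrain's law can be made explicit with the Gaussian integral (assuming $h > 0$). The comparison with the modern normal density with standard deviation $\sigma$ is added here only for orientation and is not part of the historical account.

```latex
\int_{-\infty}^{\infty} c\,e^{-h^2 x^2}\,dx = c\,\frac{\sqrt{\pi}}{h} = 1
\quad\Longrightarrow\quad c = \frac{h}{\sqrt{\pi}},
\qquad
\frac{1}{\sigma\sqrt{2\pi}}\,e^{-x^2/(2\sigma^2)} = c\,e^{-h^2 x^2}
\quad\text{when}\quad \sigma = \frac{1}{h\sqrt{2}}.
```

A larger $h$ (more precise observations) therefore corresponds to a smaller spread $\sigma$ and a taller, narrower curve.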

In the nineteenth century, authors on the general theory included Laplace, Sylvestre Lacroix (1816), Littrow (1833), Adolphe Quetelet (1853), Richard Dedekind (1860), Helmert (1872), Hermann Laurent (1873), Liagre, Didion and Karl Pearson. Augustus De Morgan and George Boole improved the exposition of the theory.

In 1906, Andrey Markov introduced the notion of Markov chains, which played an important role in stochastic processes theory and its applications. The modern theory of probability based on measure theory was developed by Andrey Kolmogorov in 1931.

On the geometric side, contributors to ''The Educational Times'' included Miller, Crofton, McColl, Wolstenholme, Watson, and Artemas Martin. See integral geometry for more information.

Theory

Like other theories, the theory of probability is a representation of its concepts in formal terms, that is, in terms that can be considered separately from their meaning. These formal terms are manipulated by the rules of mathematics and logic, and any results are interpreted or translated back into the problem domain. There have been at least two successful attempts to formalize probability, namely the Kolmogorov formulation and the Cox formulation. In Kolmogorov's formulation (see also probability space), sets are interpreted as events and probability as a measure on a class of sets. In Cox's theorem, probability is taken as a primitive (i.e., not further analyzed), and the emphasis is on constructing a consistent assignment of probability values to propositions. In both cases, the laws of probability are the same, except for technical details.

There are other methods for quantifying uncertainty, such as the Dempster–Shafer theory or possibility theory, but those are essentially different and not compatible with the usually-understood laws of probability.

Applications

Probability theory is applied in everyday life in risk assessment and modeling. The insurance industry and markets use actuarial science to determine pricing and make trading decisions. Governments apply probabilistic methods in environmental regulation, entitlement analysis, and financial regulation.

An example of the use of probability theory in equity trading is the effect of the perceived probability of any widespread Middle East conflict on oil prices, which have ripple effects in the economy as a whole. An assessment by a commodity trader that a war is more likely can send that commodity's prices up or down, and signals that opinion to other traders. Accordingly, the probabilities are neither assessed independently nor necessarily rationally. The theory of behavioral finance emerged to describe the effect of such groupthink on pricing, on policy, and on peace and conflict.

In addition to financial assessment, probability can be used to analyze trends in biology (e.g., disease spread) as well as ecology (e.g., biological Punnett squares). As with finance, risk assessment can be used as a statistical tool to calculate the likelihood of undesirable events occurring, and can assist with implementing protocols to avoid encountering such circumstances. Probability is used to design games of chance so that casinos can make a guaranteed profit, yet provide payouts to players that are frequent enough to encourage continued play.

Another significant application of probability theory in everyday life is reliability. Many consumer products, such as automobiles and consumer electronics, use reliability theory in product design to reduce the probability of failure. Failure probability may influence a manufacturer's decisions on a product's warranty.

The cache language model and other statistical language models that are used in natural language processing are also examples of applications of probability theory.

Mathematical treatment

Consider an experiment that can produce a number of results. The collection of all possible results is called the sample space of the experiment, sometimes denoted as $\Omega$. The power set of the sample space is formed by considering all different collections of possible results. For example, rolling a die can produce six possible results. One collection of possible results gives an odd number on the die. Thus, the subset $\{1,3,5\}$ is an element of the power set of the sample space of dice rolls. These collections are called "events". In this case, $\{1,3,5\}$ is the event that the die falls on some odd number. If the results that actually occur fall in a given event, the event is said to have occurred.

A probability is a way of assigning every event a value between zero and one, with the requirement that the event made up of all possible results (in our example, the event $\{1,2,3,4,5,6\}$) is assigned a value of one. To qualify as a probability, the assignment of values must satisfy the requirement that for any collection of mutually exclusive events (events with no common results, such as the events $\{1,6\}$, $\{3\}$, and $\{2,4\}$), the probability that at least one of the events will occur is given by the sum of the probabilities of all the individual events.

The probability of an event ''A'' is written as $P(A)$, $p(A)$, or $\Pr(A)$. This mathematical definition of probability can extend to infinite sample spaces, and even uncountable sample spaces, using the concept of a measure.

The ''opposite'' or ''complement'' of an event ''A'' is the event not ''A'' (that is, the event of ''A'' not occurring), often denoted as $A'$, $A^c$, or $\overline{A}$; its probability is given by $P(\text{not } A) = 1 - P(A)$. As an example, the chance of not rolling a six on a six-sided die is $1 - \tfrac{1}{6} = \tfrac{5}{6}$. For a more comprehensive treatment, see Complementary event.
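A minimal Python sketch of the finite case for a fair six-sided die, assuming every result is equally likely; `SAMPLE_SPACE`, `power_set`, and `prob` are illustrative names. It enumerates all events, checks additivity for the mutually exclusive events $\{1,6\}$, $\{3\}$, $\{2,4\}$, and applies the complement rule.

```python
from fractions import Fraction
from itertools import chain, combinations

SAMPLE_SPACE = frozenset(range(1, 7))  # outcomes of one roll of a fair die


def power_set(space):
    """All subsets (events) of the sample space, from the empty set to the whole space."""
    items = sorted(space)
    return [frozenset(c) for c in chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))]


def prob(event):
    """Uniform probability: number of results in the event over all results."""
    return Fraction(len(set(event) & SAMPLE_SPACE), len(SAMPLE_SPACE))


events = power_set(SAMPLE_SPACE)
print(len(events))         # 64, i.e. 2**6 events
print(prob({1, 3, 5}))     # 1/2, the event "odd number"
print(prob(SAMPLE_SPACE))  # 1

# Additivity for mutually exclusive events: P(union) equals the sum of the parts.
disjoint = [{1, 6}, {3}, {2, 4}]
print(prob(set().union(*disjoint)) == sum(prob(e) for e in disjoint))  # True

# Complement rule: P(not six) = 1 - P(six).
print(1 - prob({6}))       # 5/6
```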

If two events ''A'' and ''B'' occur on a single performance of an experiment, this is called the intersection or joint probability of ''A'' and ''B'', denoted as $P(A \cap B)$.

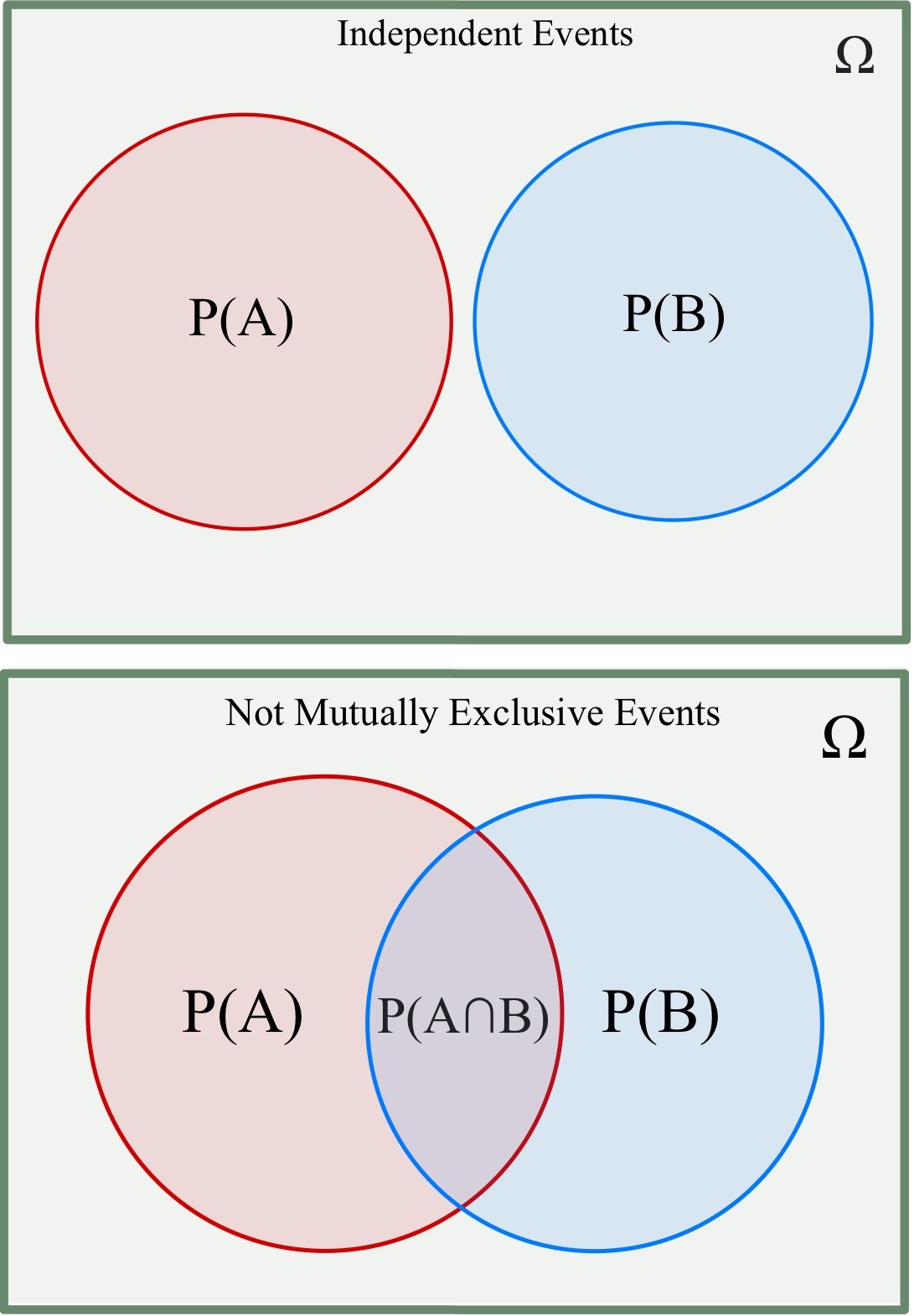

Independent events

If two events, ''A'' and ''B'', are independent, then the joint probability is $P(A \text{ and } B) = P(A \cap B) = P(A)P(B)$. For example, if two coins are flipped, then the chance of both being heads is $\tfrac{1}{2} \times \tfrac{1}{2} = \tfrac{1}{4}$.
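Independence can be checked directly against the product rule by enumerating the four equally likely outcomes of two fair coin flips; this Python sketch assumes fair coins and uses illustrative helper names.

```python
from fractions import Fraction
from itertools import product

# Each of the four outcomes of two fair coin flips is assumed equally likely.
OUTCOMES = list(product("HT", repeat=2))


def prob(predicate):
    """Share of equally likely outcomes for which the predicate holds."""
    return Fraction(sum(1 for o in OUTCOMES if predicate(o)), len(OUTCOMES))


def first_heads(o):
    return o[0] == "H"


def second_heads(o):
    return o[1] == "H"


def both_heads(o):
    return first_heads(o) and second_heads(o)


joint = prob(both_heads)
product_of_marginals = prob(first_heads) * prob(second_heads)
print(joint, product_of_marginals, joint == product_of_marginals)  # 1/4 1/4 True
```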

Mutually exclusive events

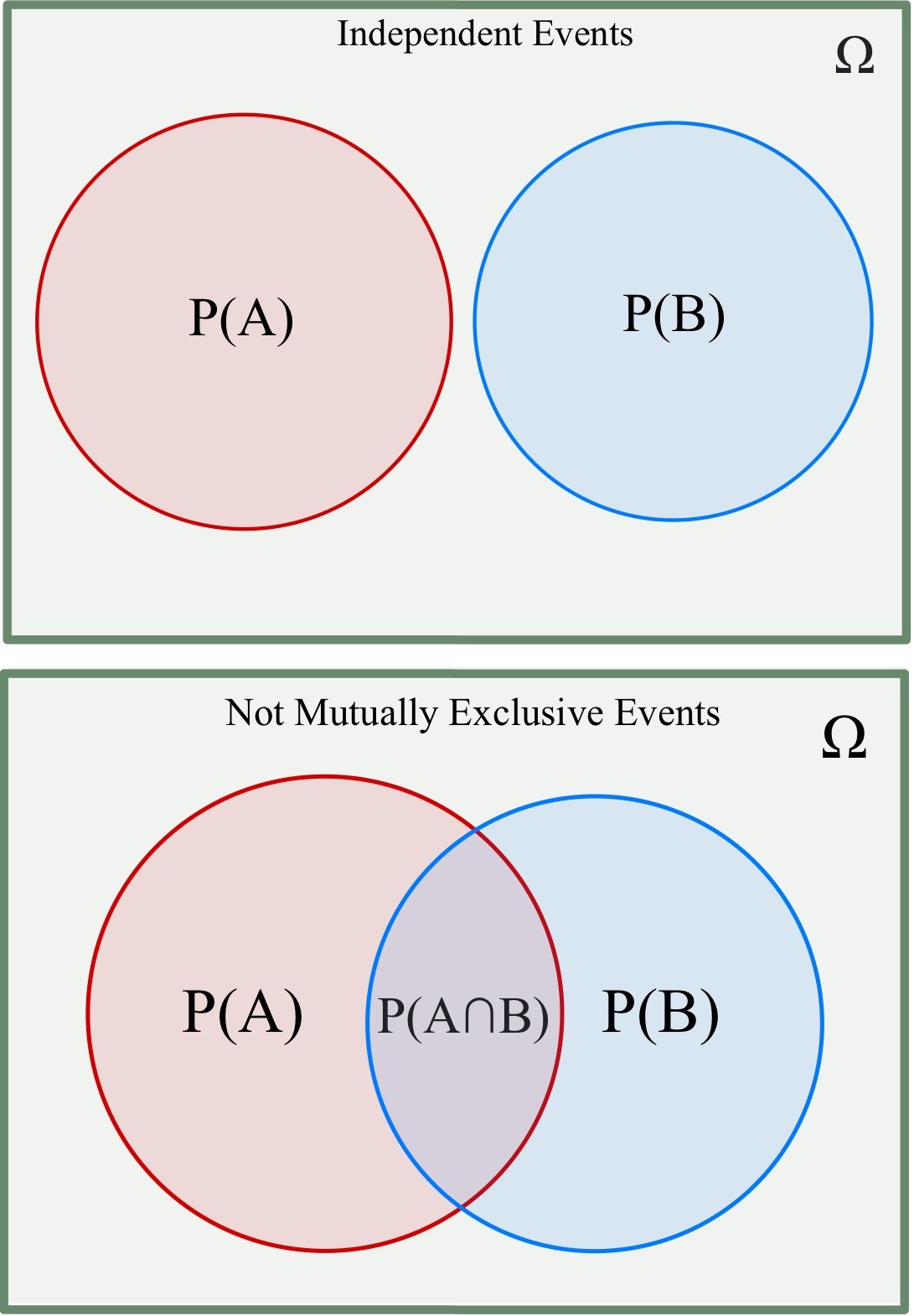

If either event ''A'' or event ''B'' can occur but never both simultaneously, then they are called mutually exclusive events. If two events are mutually exclusive, then the probability of ''both'' occurring is denoted as $P(A \cap B)$ and $P(A \cap B) = 0$. If two events are mutually exclusive, then the probability of ''either'' occurring is denoted as $P(A \cup B)$ and $P(A \cup B) = P(A) + P(B)$. For example, the chance of rolling a 1 or 2 on a six-sided die is $P(1 \text{ or } 2) = P(1) + P(2) = \tfrac{1}{6} + \tfrac{1}{6} = \tfrac{1}{3}$.

Not (necessarily) mutually exclusive events

If the events are not (necessarily) mutually exclusive then $P(A \text{ or } B) = P(A) + P(B) - P(A \text{ and } B)$. Rewritten, $P(A \cup B) = P(A) + P(B) - P(A \cap B)$. For example, when drawing a card from a deck of cards, the chance of getting a heart or a face card (J, Q, K) (or both) is $\tfrac{13}{52} + \tfrac{12}{52} - \tfrac{3}{52} = \tfrac{11}{26}$, since among the 52 cards of a deck, 13 are hearts, 12 are face cards, and 3 are both: here the possibilities included in the "3 that are both" are included in each of the "13 hearts" and the "12 face cards", but should only be counted once. This can be expanded further for multiple not (necessarily) mutually exclusive events. For three events, this proceeds as follows:

$$P(A \cup B \cup C) = P(A) + P(B) + P(C) - P(A \cap B) - P(A \cap C) - P(B \cap C) + P(A \cap B \cap C).$$

It can be seen, then, that this pattern can be repeated for any number of events.

Conditional probability

''Conditional probability'' is the probability of some event ''A'', given the occurrence of some other event ''B''. Conditional probability is written $P(A \mid B)$, and is read "the probability of ''A'', given ''B''". It is defined by

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)}.$$

If $P(B) = 0$ then $P(A \mid B)$ is formally undefined by this expression. In this case $A$ and $B$ are independent, since $P(A \cap B) = P(A)P(B) = 0$. However, it is possible to define a conditional probability for some zero-probability events, for example by using a σ-algebra of such events (such as those arising from a continuous random variable).

For example, in a bag of 2 red balls and 2 blue balls (4 balls in total), the probability of taking a red ball is $1/2$; however, when taking a second ball, the probability of it being either a red ball or a blue ball depends on the ball previously taken. For example, if a red ball was taken, then the probability of picking a red ball again would be $1/3$, since only 1 red and 2 blue balls would have been remaining. And if a blue ball was taken previously, the probability of taking a red ball will be $2/3$.
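The bag-of-balls example can be reproduced by listing every ordered draw of two balls without replacement and applying the definition of conditional probability. This is a Python sketch with hypothetical helper names, assuming each remaining ball is equally likely to be drawn.

```python
from fractions import Fraction
from itertools import permutations

BALLS = ["red", "red", "blue", "blue"]
# Every ordered pair of distinct balls (drawn without replacement) is equally likely.
DRAWS = list(permutations(range(len(BALLS)), 2))


def prob(predicate):
    """Share of equally likely ordered draws for which the predicate holds."""
    return Fraction(sum(1 for d in DRAWS if predicate(d)), len(DRAWS))


def conditional(a, b):
    """P(A | B) = P(A and B) / P(B), defined only when P(B) > 0."""
    p_b = prob(b)
    if p_b == 0:
        raise ValueError("P(B) = 0: conditional probability undefined by this formula")
    return prob(lambda d: a(d) and b(d)) / p_b


def first_red(d):
    return BALLS[d[0]] == "red"


def first_blue(d):
    return BALLS[d[0]] == "blue"


def second_red(d):
    return BALLS[d[1]] == "red"


print(prob(first_red))                      # 1/2
print(conditional(second_red, first_red))   # 1/3
print(conditional(second_red, first_blue))  # 2/3
```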

Inverse probability

In probability theory and applications, ''Bayes' rule'' relates the odds of event $A_1$ to event $A_2$, before (prior to) and after (posterior to) conditioning on another event $B$. The odds on $A_1$ to event $A_2$ is simply the ratio of the probabilities of the two events. When arbitrarily many events $A$ are of interest, not just two, the rule can be rephrased as ''posterior is proportional to prior times likelihood'', $P(A \mid B) \propto P(A)\,P(B \mid A)$, where the proportionality symbol means that the left hand side is proportional to (i.e., equals a constant times) the right hand side as $A$ varies, for fixed or given $B$ (Lee, 2012; Bertsch McGrayne, 2012). In this form it goes back to Laplace (1774) and to Cournot (1843); see Fienberg (2005).
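A minimal Python sketch of both forms of the rule. The coin hypotheses and the bias of 3/4 are invented for illustration only: a coin is assumed to be either fair or biased towards heads, each with prior probability 1/2, and three heads are then observed. The helper names are hypothetical.

```python
from fractions import Fraction


def posterior(prior, likelihood):
    """Posterior is proportional to prior times likelihood, then normalized.

    prior: dict mapping hypothesis A -> P(A)
    likelihood: dict mapping hypothesis A -> P(B | A) for the observed event B
    """
    unnormalized = {a: prior[a] * likelihood[a] for a in prior}
    evidence = sum(unnormalized.values())  # P(B), the normalizing constant
    return {a: w / evidence for a, w in unnormalized.items()}


def posterior_odds(prior_odds, likelihood_1, likelihood_2):
    """Odds form: posterior odds of A1 to A2 = prior odds times likelihood ratio."""
    return prior_odds * likelihood_1 / likelihood_2


# Hypothetical example: fair coin vs. coin with P(heads) = 3/4, equal priors,
# after observing three heads in a row.
prior = {"fair": Fraction(1, 2), "biased": Fraction(1, 2)}
likelihood = {"fair": Fraction(1, 2) ** 3, "biased": Fraction(3, 4) ** 3}

post = posterior(prior, likelihood)
print(post["biased"], post["fair"])  # 27/35 8/35
print(posterior_odds(Fraction(1), likelihood["biased"], likelihood["fair"]))  # 27/8
```

The ratio of the two posteriors, (27/35)/(8/35) = 27/8, agrees with the odds form, as it must.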

Summary of probabilities

Relation to randomness and probability in quantum mechanics

In a deterministic universe, based on Newtonian concepts, there would be no probability if all conditions were known (Laplace's demon), although there are situations in which sensitivity to initial conditions exceeds our ability to measure them, i.e. to know them. In the case of a roulette wheel, if the force of the hand and the period of that force are known, the number on which the ball will stop would be a certainty (though as a practical matter, this would likely be true only of a roulette wheel that had not been exactly levelled, as Thomas A. Bass' ''Newtonian Casino'' revealed). This also assumes knowledge of the inertia and friction of the wheel, the weight, smoothness, and roundness of the ball, variations in hand speed during the turning, and so forth. A probabilistic description can thus be more useful than Newtonian mechanics for analyzing the pattern of outcomes of repeated spins of a roulette wheel. Physicists face the same situation in the kinetic theory of gases, where the system, while deterministic ''in principle'', is so complex (with the number of molecules typically of the order of magnitude of the Avogadro constant, about $6.02 \times 10^{23}$) that only a statistical description of its properties is feasible.
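The roulette argument can be made concrete with a deliberately crude toy model. The physics here is a hypothetical simplification and does not describe a real wheel. Under constant friction, the ball's travel distance grows with the square of its initial speed $v_0$, so the model assumes it passes `k * v0**2` pocket widths before stopping. The constant `k` and the speed range are arbitrary assumptions. The outcome is completely determined by $v_0$, yet a tiny change in $v_0$ moves the result to a different pocket. Likewise, when $v_0$ is known only to within a small range, the outcomes spread almost evenly over all 37 pockets.

```python
import random

POCKETS = 37  # single-zero wheel: pockets 0..36

def pocket(v0, k=5000.0):
    """Hypothetical toy spin: the ball passes k * v0**2 pocket widths
    before stopping. The result depends deterministically on v0 alone."""
    return int(k * v0 * v0) % POCKETS

# A 0.01% change in speed moves the ball about 100 pockets further.
print(pocket(10.0), pocket(10.001))  # 19 8

# If the speed is only known to lie somewhere in [10.0, 10.5], the
# deterministic outcomes are spread almost evenly over the pockets.
rng = random.Random(0)
counts = [0] * POCKETS
for _ in range(37_000):
    counts[pocket(rng.uniform(10.0, 10.5))] += 1
print(min(counts), max(counts))  # both close to 37_000 / 37 = 1000
```

In this model, predicting a single spin requires knowing $v_0$ far more precisely than any real measurement could. The long-run frequencies, by contrast, are well described by treating each pocket as equally likely.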

Probability theory is required to describe quantum phenomena. A revolutionary discovery of early 20th century physics was the random character of all physical processes that occur at sub-atomic scales and are governed by the laws of quantum mechanics. The objective wave function evolves deterministically but, according to the Copenhagen interpretation, it yields only the probabilities of the possible outcomes of an observation, and the actual outcome is explained by a wave function collapse when an observation is made. However, the loss of determinism for the sake of instrumentalism did not meet with universal approval. Albert Einstein famously remarked in a letter to Max Born: "I am convinced that God does not play dice". Like Einstein, Erwin Schrödinger, who discovered the wave function, believed quantum mechanics is a statistical approximation of an underlying deterministic reality. In some modern interpretations of the statistical mechanics of measurement, quantum decoherence is invoked to account for the appearance of subjectively probabilistic experimental outcomes.

See also

* Contingency
* Equiprobability
* Fuzzy logic
* Heuristic (psychology)

External links

* Virtual Laboratories in Probability and Statistics (Univ. of Ala.-Huntsville)
* Probability and Statistics EBook
* Edwin Thompson Jaynes, ''Probability Theory: The Logic of Science''. Preprint: Washington University (1996). HTML index with links to PostScript files and PDF (first three chapters)

* Probability and Statistics on the Earliest Uses Pages (Univ. of Southampton): http://www.economics.soton.ac.uk/staff/aldrich/Probability%20Earliest%20Uses.htm
* Earliest Uses of Symbols in Probability and Statistics
* A tutorial on probability and Bayes' theorem devised for first-year Oxford University students
* …, by Charles Grinstead, Laurie Snell. Source (GNU Free Documentation License)
* Bruno de Finetti, ''Probabilità e induzione'', Bologna, CLUEB, 1993 (digital version)
