Objective Function

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event. An optimization problem seeks to minimize a loss function. An objective function is either a loss function or its opposite (in specific domains, variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy.

In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as Laplace, was reintroduced in statistics by Abraham Wald in the middle of the 20th century. In the context of economics, for example, this is usually economic cost or regret. In classification, it is the penalty for an incorrect classification of an example. In actuarial science, it is used in an insurance context to model benefits paid over premiums, particularly since the works of Harald Cramér in the 1920s. In optimal control, the loss is the penalty for failing to achieve a desired value. In financial risk management, the function is mapped to a monetary loss.

Examples

Regret

Leonard J. Savage argued that when using non-Bayesian methods such as minimax, the loss function should be based on the idea of ''regret'', i.e., the loss associated with a decision should be the difference between the consequences of the best decision that could have been made had the underlying circumstances been known and the decision that was in fact taken before they were known.

Quadratic loss function

The use of a quadratic loss function is common, for example when using least squares techniques. It is often more mathematically tractable than other loss functions because of the properties of variances, as well as being symmetric: an error above the target causes the same loss as the same magnitude of error below the target. If the target is ''t'', then a quadratic loss function is

: $\lambda(x) = C (t - x)^2$

for some constant ''C''; the value of the constant makes no difference to a decision, and can be ignored by setting it equal to 1. This is also known as the squared error loss (SEL).
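
The claim that the constant makes no difference to a decision can be checked with a minimal sketch. The candidate values and the target below are hypothetical, chosen only for illustration; the function names are not from the source.

```python
def quadratic_loss(x, t, C=1.0):
    """Quadratic (squared error) loss C * (t - x)**2 for outcome x and target t."""
    return C * (t - x) ** 2


def best_choice(candidates, t, C=1.0):
    """Return the candidate with the smallest quadratic loss."""
    return min(candidates, key=lambda x: quadratic_loss(x, t, C))


candidates = [0.0, 1.5, 2.75, 4.0]  # hypothetical available decisions
target = 2.0                        # hypothetical target t

# Rescaling the loss by C changes its value but not which decision is best.
print(best_choice(candidates, target, C=1.0))
print(best_choice(candidates, target, C=50.0))

# Symmetry: the same error above and below the target costs the same.
print(quadratic_loss(2.5, target), quadratic_loss(1.5, target))
```

Both calls to `best_choice` select the same candidate, which is why ''C'' can be set to 1 without loss of generality.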

Many common statistics, including t-tests, regression models, design of experiments, and much else, use least squares methods applied using linear regression theory, which is based on the quadratic loss function.

The quadratic loss function is also used in linear-quadratic optimal control problems. In these problems, even in the absence of uncertainty, it may not be possible to achieve the desired values of all target variables. Often loss is expressed as a quadratic form

In mathematics, a quadratic form is a polynomial with terms all of degree two (" form" is another name for a homogeneous polynomial). For example,

4x^2 + 2xy - 3y^2

is a quadratic form in the variables and . The coefficients usually belong t ...

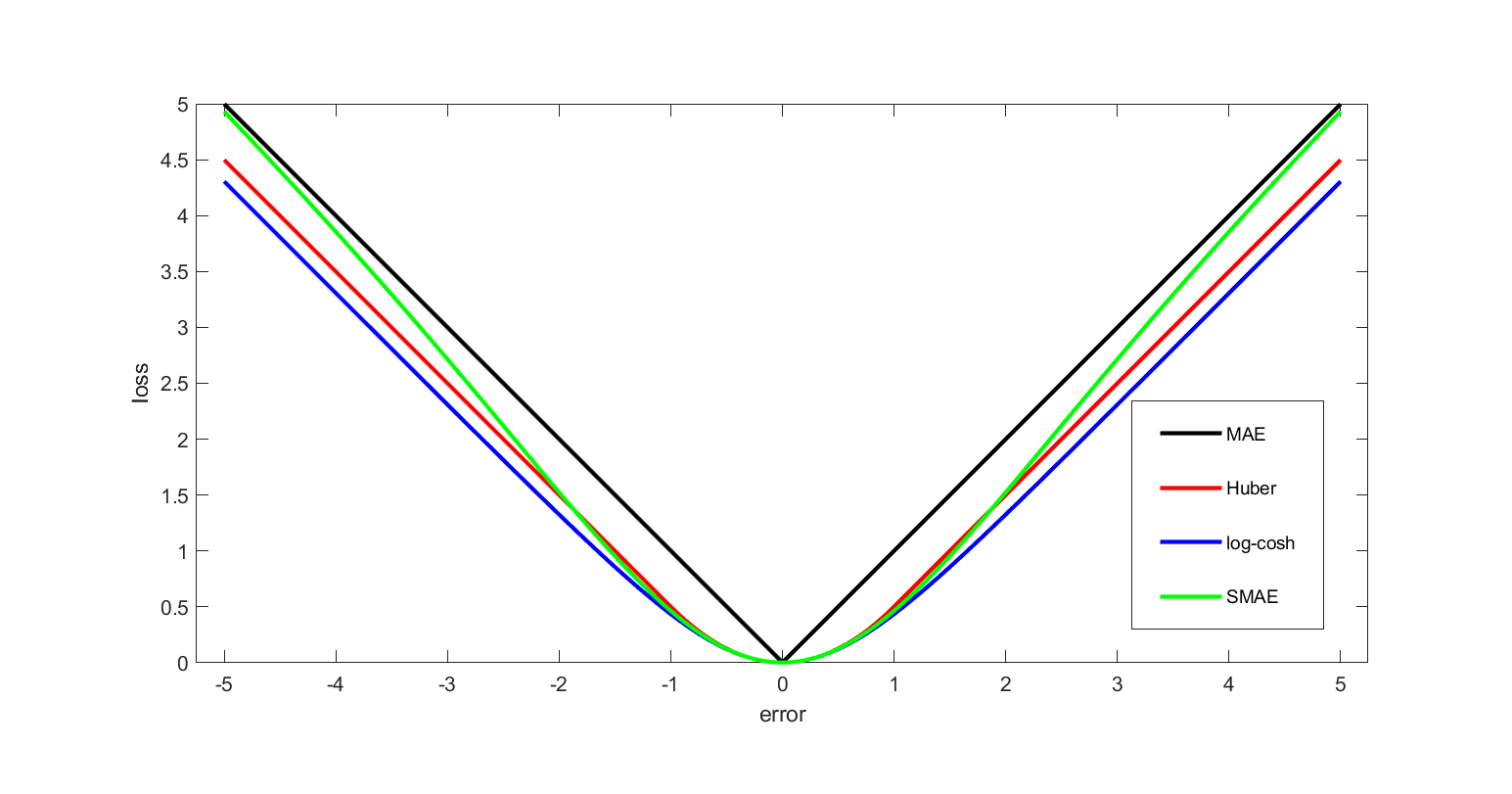

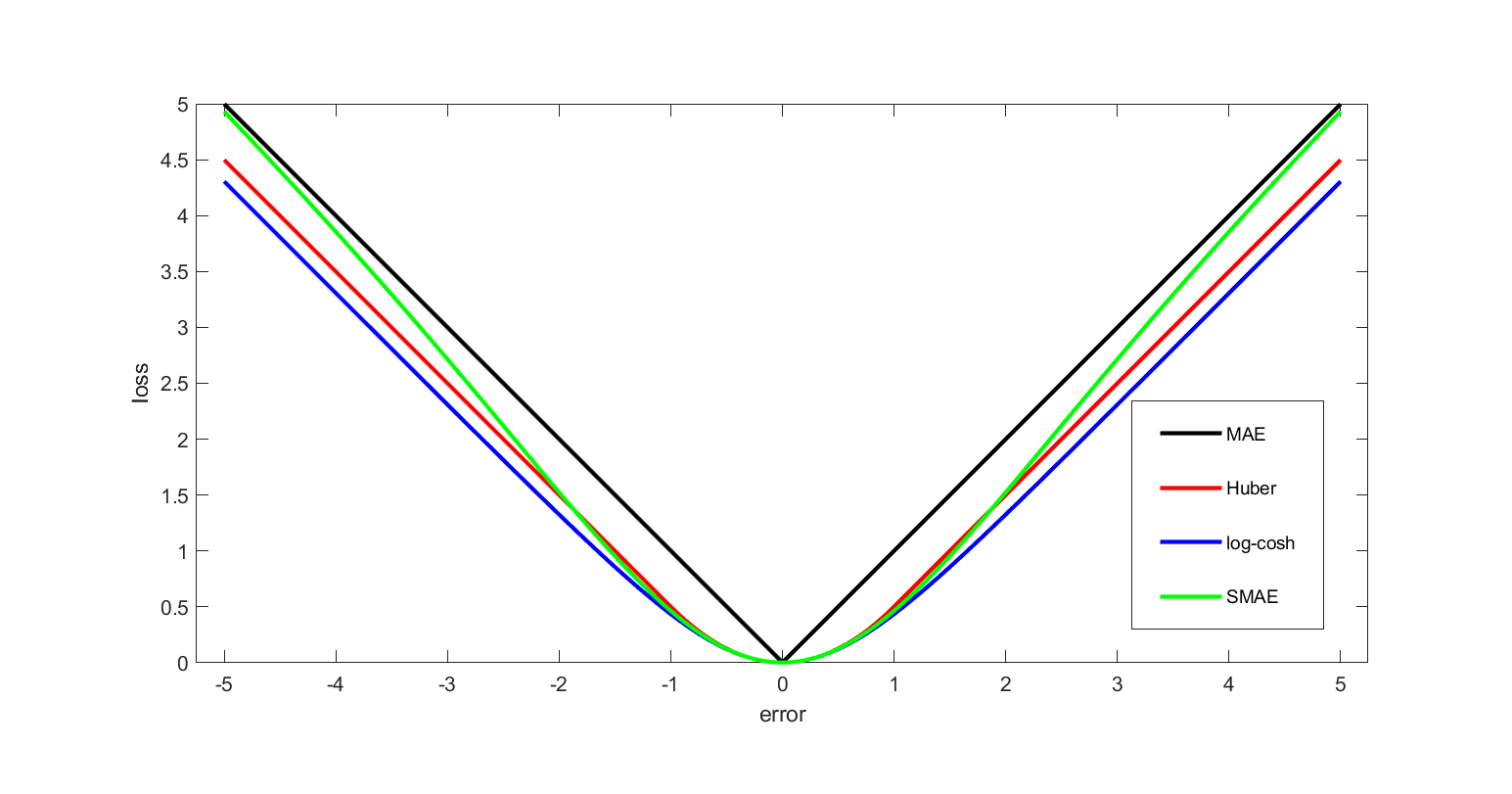

in the deviations of the variables of interest from their desired values; this approach is tractable because it results in linear first-order conditions. In the context of stochastic control, the expected value of the quadratic form is used. The quadratic loss assigns more importance to outliers than to the true data due to its square nature, so alternatives like the Huber, Log-Cosh and SMAE losses are used when the data has many large outliers.
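
The effect of outliers can be illustrated with a small sketch that fits a single location value to data by minimizing total loss, once with the quadratic loss and once with the Huber loss. The data set, the threshold `delta`, and the grid search are assumptions made for illustration; the Huber definition used is the standard one (quadratic within `delta`, linear beyond it).

```python
def squared_loss(r):
    """Quadratic loss of a residual r."""
    return r * r


def huber_loss(r, delta=1.0):
    """Huber loss: quadratic for |r| <= delta, linear beyond it."""
    a = abs(r)
    if a <= delta:
        return 0.5 * a * a
    return delta * (a - 0.5 * delta)


def fit_location(data, loss, grid):
    """Return the grid value that minimizes the summed loss of the residuals."""
    return min(grid, key=lambda m: sum(loss(x - m) for x in data))


data = [1.0, 1.1, 0.9, 1.0, 10.0]        # hypothetical sample; 10.0 is an outlier
grid = [i / 100 for i in range(1001)]    # candidate locations 0.00 .. 10.00

m_squared = fit_location(data, squared_loss, grid)
m_huber = fit_location(data, huber_loss, grid)
print(m_squared, m_huber)
```

Under these assumptions the quadratic fit is pulled toward the outlier (it lands on the sample mean), while the Huber fit stays close to the bulk of the data.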

0-1 loss function

In statistics and decision theory, a frequently used loss function is the ''0-1 loss function''

: $L(\hat{y}, y) = \left[ \hat{y} \ne y \right]$

using Iverson bracket notation, i.e. it evaluates to 1 when $\hat{y} \ne y$, and 0 otherwise.
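
A minimal sketch of the 0-1 loss follows. Averaging it over a set of predictions gives the misclassification rate; the labels below are made up for illustration, and the function names are not from the source.

```python
def zero_one_loss(y_pred, y_true):
    """Iverson bracket [y_pred != y_true]: 1 on a mismatch, 0 otherwise."""
    return int(y_pred != y_true)


def misclassification_rate(predictions, labels):
    """Average 0-1 loss over paired predictions and true labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    total = sum(zero_one_loss(p, t) for p, t in zip(predictions, labels))
    return total / len(labels)


predictions = ["cat", "dog", "dog", "cat"]  # hypothetical classifier output
labels = ["cat", "dog", "cat", "cat"]       # hypothetical true classes

print(misclassification_rate(predictions, labels))  # 0.25: one error out of four
```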

Constructing loss and objective functions

In many applications, objective functions, including loss functions as a particular case, are determined by the problem formulation. In other situations, the decision maker's preference must be elicited and represented by a scalar-valued function (also called a utility function) in a form suitable for optimization, a problem that Ragnar Frisch highlighted in his Nobel Prize lecture.

The existing methods for constructing objective functions are collected in the proceedings of two dedicated conferences.

In particular, Andranik Tangian showed that the most usable objective functions — quadratic and additive — are determined by a few indifference points. He used this property in the models for constructing these objective functions from either ordinal or cardinal data that were elicited through computer-assisted interviews with decision makers.

Among other things, he constructed objective functions to optimally distribute budgets for 16 Westphalian universities and the European subsidies for equalizing unemployment rates among 271 German regions.

Expected loss

In some contexts, the value of the loss function itself is a random quantity because it depends on the outcome of a random variable ''X''.

Statistics

Both frequentist and Bayesian statistical theory involve making a decision based on the expected value of the loss function; however, this quantity is defined differently under the two paradigms.

Frequentist expected loss

We first define the expected loss in the frequentist context. It is obtained by taking the expected value with respect to the probability distribution, $P_\theta$, of the observed data, ''X''. This is also referred to as the risk function of the decision rule ''δ'' and the parameter ''θ''. Here the decision rule depends on the outcome of ''X''. The risk function is given by:

: $R(\theta, \delta) = \operatorname{E}_\theta L\big(\theta, \delta(X)\big) = \int_{\mathcal{X}} L\big(\theta, \delta(x)\big) \, dP_\theta(x)$

Here, ''θ'' is a fixed but possibly unknown state of nature, ''X'' is a vector of observations stochastically drawn from a population, $\operatorname{E}_\theta$ is the expectation over all population values of ''X'', $dP_\theta$ is a probability measure over the event space of ''X'' (parametrized by ''θ'') and the integral is evaluated over the entire support $\mathcal{X}$ of ''X''.
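
For discrete data the integral in the risk function becomes a sum, so the risk can be computed exactly. The sketch below assumes a binomial model (''n'' trials with success probability ''θ'') and squared error loss, and compares two hypothetical decision rules: the sample proportion and a shrunken estimate. None of these choices come from the source.

```python
from math import comb


def risk(theta, decision_rule, n, loss):
    """Frequentist risk: sum over x of L(theta, delta(x)) * P_theta(X = x)
    for X ~ Binomial(n, theta)."""
    total = 0.0
    for x in range(n + 1):
        p_x = comb(n, x) * theta**x * (1 - theta) ** (n - x)
        total += loss(theta, decision_rule(x)) * p_x
    return total


def squared_error(theta, estimate):
    return (theta - estimate) ** 2


n = 10  # hypothetical number of trials


def sample_proportion(x):
    return x / n


def shrunk_estimate(x):
    # Hypothetical alternative rule that pulls the estimate toward 1/2.
    return (x + 1) / (n + 2)


for theta in (0.1, 0.5, 0.9):
    print(theta,
          risk(theta, sample_proportion, n, squared_error),
          risk(theta, shrunk_estimate, n, squared_error))
```

In this setup neither rule has lower risk for every ''θ'': the shrunken estimate does better near ''θ'' = 0.5 and worse near the extremes, which is why the frequentist approach needs a further criterion to choose between decision rules.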

Bayes Risk

In a Bayesian approach, the expectation is calculated using the prior distribution $\pi^*$ of the parameter ''θ'':

: $\rho(\pi^*, a) = \int_\Theta \int_{\mathcal{X}} L\big(\theta, a(x)\big) \, dP_\theta(x) \, d\pi^*(\theta) = \int_{\mathcal{X}} \left[ \int_\Theta L\big(\theta, a(x)\big) \, d\pi^*(\theta \mid x) \right] m(x) \, dx$

where ''m''(''x'') is known as the ''predictive likelihood'' wherein ''θ'' has been "integrated out," $\pi^*(\theta \mid x)$ is the posterior distribution, and the order of integration has been changed. One then should choose the action ''a*'' which minimises this expected loss, which is referred to as ''Bayes Risk''. In the latter equation, the integrand inside ''dx'' is known as the ''Posterior Risk'', and minimising it with respect to decision ''a'' also minimizes the overall Bayes Risk. This optimal decision, ''a*'', is known as the ''Bayes (decision) Rule'': it minimises the average loss over all possible states of nature ''θ'', over all possible (probability-weighted) data outcomes.

One advantage of the Bayesian approach is that one need only choose the optimal action under the actual observed data to obtain a uniformly optimal one, whereas choosing the actual frequentist optimal decision rule as a function of all possible observations is a much more difficult problem. Of equal importance though, the Bayes Rule reflects consideration of loss outcomes under different states of nature, ''θ''.

Examples in statistics
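
To make the posterior risk concrete, the following sketch works through a small discrete case. It assumes three hypothetical states of nature with a made-up prior, binomial data, squared error loss, and a grid of candidate actions; the Bayes action is found by minimizing the posterior risk for the observed data only.

```python
from math import comb

thetas = [0.2, 0.5, 0.8]   # hypothetical states of nature
prior = [0.3, 0.4, 0.3]    # hypothetical prior weights pi*(theta)


def likelihood(x, n, theta):
    """Binomial probability of x successes in n trials."""
    return comb(n, x) * theta**x * (1 - theta) ** (n - x)


def posterior(x, n):
    """Posterior weights pi*(theta | x); the normalizer is m(x)."""
    joint = [p * likelihood(x, n, th) for p, th in zip(prior, thetas)]
    m_x = sum(joint)  # predictive likelihood: theta has been summed out
    return [j / m_x for j in joint]


def posterior_risk(action, post, loss):
    """Expected loss of an action under the posterior."""
    return sum(loss(th, action) * w for th, w in zip(thetas, post))


def bayes_action(x, n, loss, actions):
    """Action minimizing the posterior risk for the observed x."""
    post = posterior(x, n)
    return min(actions, key=lambda a: posterior_risk(a, post, loss))


def squared_error(theta, a):
    return (theta - a) ** 2


actions = [i / 1000 for i in range(1001)]  # candidate estimates in [0, 1]
post = posterior(7, 10)                    # observed: 7 successes in 10 trials
a_star = bayes_action(7, 10, squared_error, actions)
post_mean = sum(th * w for th, w in zip(thetas, post))
print(a_star, post_mean)
```

Under squared error loss the minimizing action agrees, up to the grid resolution, with the posterior mean.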

* For a scalar parameter ''θ'', a decision function whose output $\hat\theta$ is an estimate of ''θ'', and a quadratic loss function (squared error loss) $L(\theta, \hat\theta) = (\theta - \hat\theta)^2$, the risk function becomes the mean squared error of the estimate, $R(\theta, \hat\theta) = \operatorname{E}_\theta\big[(\theta - \hat\theta)^2\big]$. An estimator found by minimizing the mean squared error estimates the posterior distribution's mean.
* In density estimation, the unknown parameter is the probability density itself. The loss function is typically chosen to be a norm in an appropriate function space. For example, for the $L^2$ norm, $L(f, \hat f) = \|f - \hat f\|_2^2$, the risk function becomes the mean integrated squared error, $R(f, \hat f) = \operatorname{E}\big(\|f - \hat f\|^2\big)$.

Economic choice under uncertainty

In economics, decision-making under uncertainty is often modelled using the von Neumann–Morgenstern utility function of the uncertain variable of interest, such as end-of-period wealth. Since the value of this variable is uncertain, so is the value of the utility function; it is the expected value of utility that is maximized.

Decision rules

A decision rule makes a choice using an optimality criterion. Some commonly used criteria are:

* Minimax: Choose the decision rule with the lowest worst loss, that is, minimize the worst-case (maximum possible) loss: $\underset{\delta}{\operatorname{arg\,min}} \; \max_{\theta \in \Theta} R(\theta, \delta)$.
* Invariance: Choose the decision rule which satisfies an invariance requirement.
* Choose the decision rule with the lowest average loss (i.e. minimize the expected value of the loss function): $\underset{\delta}{\operatorname{arg\,min}} \; \operatorname{E}_{\theta \in \Theta}[R(\theta, \delta)] = \underset{\delta}{\operatorname{arg\,min}} \int_{\theta \in \Theta} R(\theta, \delta) \, p(\theta) \, d\theta$.
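
The minimax and average-loss criteria can select different rules. The sketch below uses a hypothetical risk table (three rules, three states of nature) and a hypothetical prior over the states; the numbers are invented purely to show the two criteria disagreeing.

```python
# Hypothetical risk R(theta, delta) of each rule under three states of nature.
risk_table = {
    "rule_a": [1.0, 4.0, 2.0],
    "rule_b": [3.0, 3.0, 3.0],
    "rule_c": [0.5, 6.0, 1.0],
}
prior = [0.5, 0.2, 0.3]  # hypothetical weights p(theta) over the states


def minimax_rule(table):
    """Rule whose worst-case (maximum) risk is smallest."""
    return min(table, key=lambda rule: max(table[rule]))


def average_loss_rule(table, weights):
    """Rule whose prior-weighted average risk is smallest."""
    def average(rule):
        return sum(r * w for r, w in zip(table[rule], weights))
    return min(table, key=average)


print(minimax_rule(risk_table))              # rule_b: worst case 3.0
print(average_loss_rule(risk_table, prior))  # rule_c: average about 1.75
```

Here the minimax criterion prefers the rule with the flattest risk, while the average-loss criterion accepts a bad worst case in exchange for low risk in the more probable states.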

Selecting a loss function

Sound statistical practice requires selecting an estimator consistent with the actual acceptable variation experienced in the context of a particular applied problem. Thus, in the applied use of loss functions, selecting which statistical method to use to model an applied problem depends on knowing the losses that will be experienced from being wrong under the problem's particular circumstances. A common example involves estimating "location". Under typical statistical assumptions, the mean or average is the statistic for estimating location that minimizes the expected loss experienced under the squared-error loss function, while the median is the estimator that minimizes expected loss experienced under the absolute-difference loss function. Still different estimators would be optimal under other, less common circumstances.
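
The mean and median claim can be checked numerically on a sample. The sketch below uses a hypothetical data set and a simple grid search (an illustrative choice, not an efficient method) to find the location estimate that minimizes the summed loss under each loss function.

```python
from statistics import mean, median

data = [1.0, 2.0, 2.0, 3.0, 12.0]  # hypothetical sample with one large value


def total_loss(estimate, sample, loss):
    """Sum of the loss over the errors between each observation and the estimate."""
    return sum(loss(x - estimate) for x in sample)


grid = [i / 100 for i in range(1501)]  # candidate estimates 0.00 .. 15.00

best_squared = min(grid, key=lambda m: total_loss(m, data, lambda e: e * e))
best_absolute = min(grid, key=lambda m: total_loss(m, data, abs))

print(best_squared, mean(data))     # squared-error minimizer vs. sample mean
print(best_absolute, median(data))  # absolute-error minimizer vs. sample median
```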

In economics, when an agent is risk neutral, the objective function is simply expressed as the expected value of a monetary quantity, such as profit, income, or end-of-period wealth. For risk-averse or risk-loving agents, loss is measured as the negative of a utility function, and the objective function to be optimized is the expected value of utility.
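
A short sketch of the difference between the two objectives follows. The lottery, the sure payment, and the use of logarithmic utility as a stand-in for a risk-averse agent are all assumptions made for illustration.

```python
from math import log

# Each option is a list of (probability, end-of-period wealth) pairs.
lottery = [(0.5, 50.0), (0.5, 150.0)]  # hypothetical risky option
sure_thing = [(1.0, 95.0)]             # hypothetical safe option


def expected_utility(option, utility=lambda w: w):
    """Expected value of utility; the identity utility gives expected wealth."""
    return sum(p * utility(w) for p, w in option)


options = [lottery, sure_thing]

# Risk-neutral agent: maximize expected wealth.
risk_neutral_choice = max(options, key=expected_utility)

# Risk-averse agent (log utility assumed): maximize expected utility,
# which is the same as minimizing the expected loss -log(wealth).
risk_averse_choice = min(options, key=lambda o: -expected_utility(o, log))

print(risk_neutral_choice is lottery, risk_averse_choice is sure_thing)
```

The lottery has the higher expected wealth (100 against 95), so the risk-neutral agent takes it, while the concave utility makes the sure payment preferable for the risk-averse agent.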

Other measures of cost are possible, for example mortality or morbidity in the field of public health or safety engineering.

For most optimization algorithms, it is desirable to have a loss function that is globally continuous and differentiable.

Two very commonly used loss functions are the squared loss, $L(a) = a^2$, and the absolute loss, $L(a) = |a|$. However the absolute loss has the disadvantage that it is not differentiable at $a = 0$. The squared loss has the disadvantage that it has the tendency to be dominated by outliers: when summing over a set of $a$'s (as in $\sum_{i=1}^n L(a_i)$), the final sum tends to be the result of a few particularly large ''a''-values, rather than an expression of the average ''a''-value.
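
How strongly a single large value dominates the sum can be measured directly. The sketch below uses a hypothetical list of errors with one outlier and reports the share of the total loss contributed by the largest term under each loss function.

```python
errors = [0.5, -1.0, 0.8, -0.7, 1.2, 10.0]  # hypothetical errors; 10.0 is an outlier


def largest_share(values, loss):
    """Fraction of the summed loss that comes from the single largest term."""
    losses = [loss(a) for a in values]
    return max(losses) / sum(losses)


share_squared = largest_share(errors, lambda a: a * a)
share_absolute = largest_share(errors, abs)

print(round(share_squared, 3), round(share_absolute, 3))
```

With these numbers the outlier accounts for over 95% of the summed squared loss but only about 70% of the summed absolute loss.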

The choice of a loss function is not arbitrary. It is very restrictive and sometimes the loss function may be characterized by its desirable properties. Among the choice principles are, for example, the requirement of completeness of the class of symmetric statistics in the case of i.i.d. observations, the principle of complete information, and some others.

W. Edwards Deming and Nassim Nicholas Taleb argue that empirical reality, not nice mathematical properties, should be the sole basis for selecting loss functions, and real losses often are not mathematically nice and are not differentiable, continuous, symmetric, etc. For example, a person who arrives before a plane gate closure can still make the plane, but a person who arrives after cannot, a discontinuity and asymmetry which makes arriving slightly late much more costly than arriving slightly early. In drug dosing, the cost of too little drug may be lack of efficacy, while the cost of too much may be tolerable toxicity, another example of asymmetry. Traffic, pipes, beams, ecologies, climates, etc. may tolerate increased load or stress with little noticeable change up to a point, then become backed up or break catastrophically. These situations, Deming and Taleb argue, are common in real-life problems, perhaps more common than classical smooth, continuous, symmetric, differentiable cases.
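
The gate-closure example can be written as a loss function that is both asymmetric and discontinuous. The cost figures, and the convention that arriving exactly at closure still counts as making the flight, are assumptions for illustration only.

```python
def gate_loss(arrival_minutes, wait_cost_per_minute=1.0, missed_flight_cost=500.0):
    """Loss as a function of arrival time relative to gate closure.

    Negative values mean arriving early, positive values mean arriving late.
    Both cost parameters are hypothetical.
    """
    if arrival_minutes <= 0:
        # Early arrival: the only cost is time spent waiting.
        return wait_cost_per_minute * (-arrival_minutes)
    # Late arrival: the flight is missed regardless of how late.
    return missed_flight_cost


print(gate_loss(-1.0))  # one minute early
print(gate_loss(1.0))   # one minute late
```

The jump at zero means a gradient-based optimizer gets no warning of the cliff from the early side, which is the kind of structure a smooth symmetric loss would hide.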

See also

* Bayesian regret
* Loss functions for classification
* Discounted maximum loss
* Hinge loss
* Scoring rule
* Statistical risk
