Decision Tree Pruning on:

[Wikipedia]

[Google]

[Amazon]

Pruning is a

Pruning is a

MDL based decision tree pruning

Decision tree pruning using backpropagation neural networks

Fast, Bottom-Up Decision Tree Pruning Algorithm

Introduction to Decision tree pruning

Decision trees Machine learning

Pruning is a

Pruning is a data compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compressi ...

technique in machine learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence.

Machine ...

and search algorithm

In computer science, a search algorithm is an algorithm designed to solve a search problem. Search algorithms work to retrieve information stored within particular data structure, or calculated in the Feasible region, search space of a problem do ...

s that reduces the size of decision tree

A decision tree is a decision support tool that uses a tree-like model of decisions and their possible consequences, including chance event outcomes, resource costs, and utility. It is one way to display an algorithm that only contains co ...

s by removing sections of the tree that are non-critical and redundant to classify instances. Pruning reduces the complexity of the final classifier, and hence improves predictive accuracy by the reduction of overfitting

mathematical modeling, overfitting is "the production of an analysis that corresponds too closely or exactly to a particular set of data, and may therefore fail to fit to additional data or predict future observations reliably". An overfitt ...

.

One of the questions that arises in a decision tree algorithm is the optimal size of the final tree. A tree that is too large risks overfitting

mathematical modeling, overfitting is "the production of an analysis that corresponds too closely or exactly to a particular set of data, and may therefore fail to fit to additional data or predict future observations reliably". An overfitt ...

the training data and poorly generalizing to new samples. A small tree might not capture important structural information about the sample space. However, it is hard to tell when a tree algorithm should stop because it is impossible to tell if the addition of a single extra node will dramatically decrease error. This problem is known as the horizon effect

The horizon effect, also known as the horizon problem, is a problem in artificial intelligence whereby, in many games, the number of possible states or positions is immense and computers can only feasibly search a small portion of them, typically ...

. A common strategy is to grow the tree until each node contains a small number of instances then use pruning to remove nodes that do not provide additional information.

Pruning should reduce the size of a learning tree without reducing predictive accuracy as measured by a cross-validation set. There are many techniques for tree pruning that differ in the measurement that is used to optimize performance.

Techniques

Pruning processes can be divided into two types (pre- and post-pruning). Pre-pruning procedures prevent a complete induction of the training set by replacing a stop () criterion in the induction algorithm (e.g. max. Tree depth or information gain (Attr)> minGain). Pre-pruning methods are considered to be more efficient because they do not induce an entire set, but rather trees remain small from the start. Prepruning methods share a common problem, the horizon effect. This is to be understood as the undesired premature termination of the induction by the stop () criterion. Post-pruning (or just pruning) is the most common way of simplifying trees. Here, nodes and subtrees are replaced with leaves to reduce complexity. Pruning can not only significantly reduce the size but also improve the classification accuracy of unseen objects. It may be the case that the accuracy of the assignment on the train set deteriorates, but the accuracy of the classification properties of the tree increases overall. The procedures are differentiated on the basis of their approach in the tree (top-down or bottom-up).Bottom-up pruning

These procedures start at the last node in the tree (the lowest point). Following recursively upwards, they determine the relevance of each individual node. If the relevance for the classification is not given, the node is dropped or replaced by a leaf. The advantage is that no relevant sub-trees can be lost with this method. These methods include Reduced Error Pruning (REP), Minimum Cost Complexity Pruning (MCCP), or Minimum Error Pruning (MEP).Top-down pruning

In contrast to the bottom-up method, this method starts at the root of the tree. Following the structure below, a relevance check is carried out which decides whether a node is relevant for the classification of all n items or not. By pruning the tree at an inner node, it can happen that an entire sub-tree (regardless of its relevance) is dropped. One of these representatives is pessimistic error pruning (PEP), which brings quite good results with unseen items.Pruning algorithms

Reduced error pruning

One of the simplest forms of pruning is reduced error pruning. Starting at the leaves, each node is replaced with its most popular class. If the prediction accuracy is not affected then the change is kept. While somewhat naive, reduced error pruning has the advantage of simplicity and speed.Cost complexity pruning

Cost complexity pruning generates a series of trees where is the initial tree and is the root alone. At step , the tree is created by removing a subtree from tree and replacing it with a leaf node with value chosen as in the tree building algorithm. The subtree that is removed is chosen as follows: # Define the error rate of tree over data set as . # The subtree that minimizes is chosen for removal. The function defines the tree obtained by pruning the subtrees from the tree . Once the series of trees has been created, the best tree is chosen by generalized accuracy as measured by a training set or cross-validation.See also

*Alpha–beta pruning

Alpha–beta pruning is a search algorithm that seeks to decrease the number of nodes that are evaluated by the minimax algorithm in its search tree. It is an adversarial search algorithm used commonly for machine playing of two-player games ...

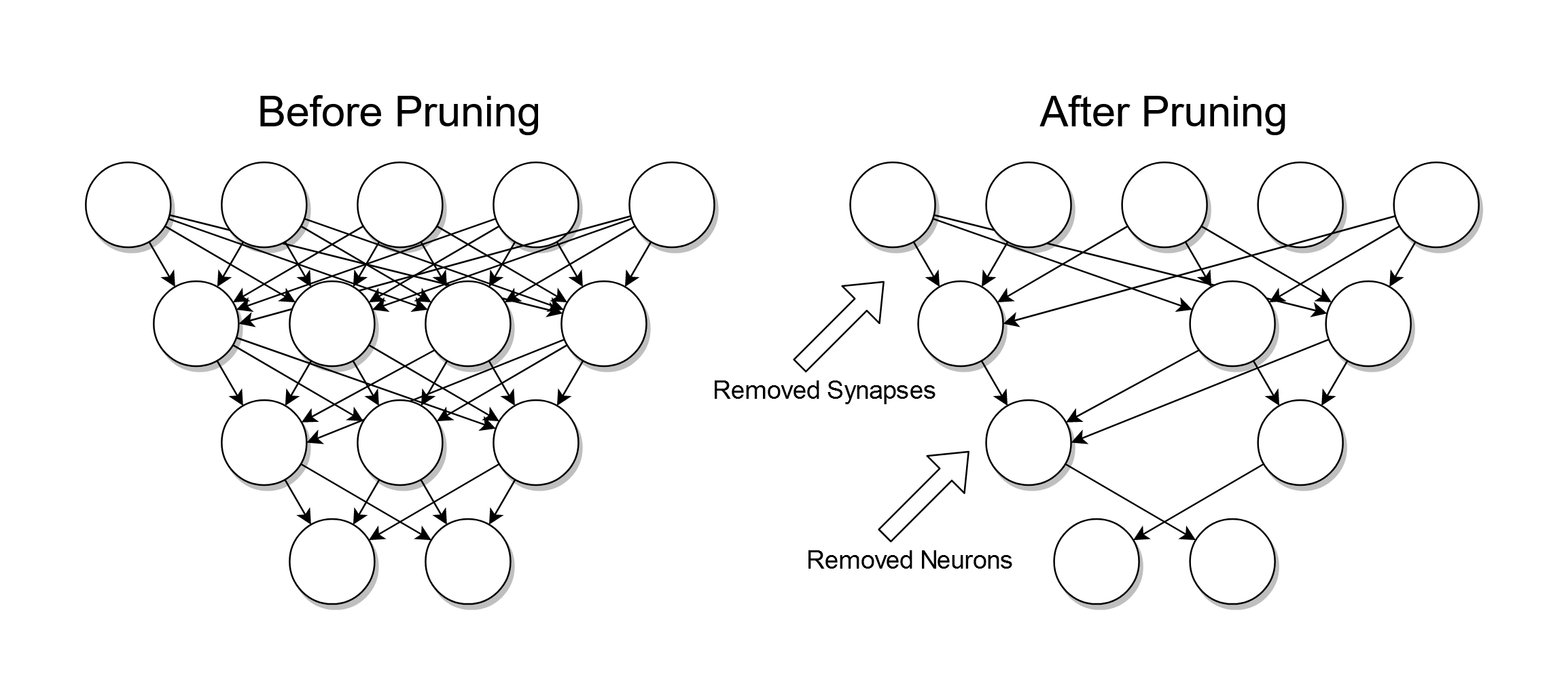

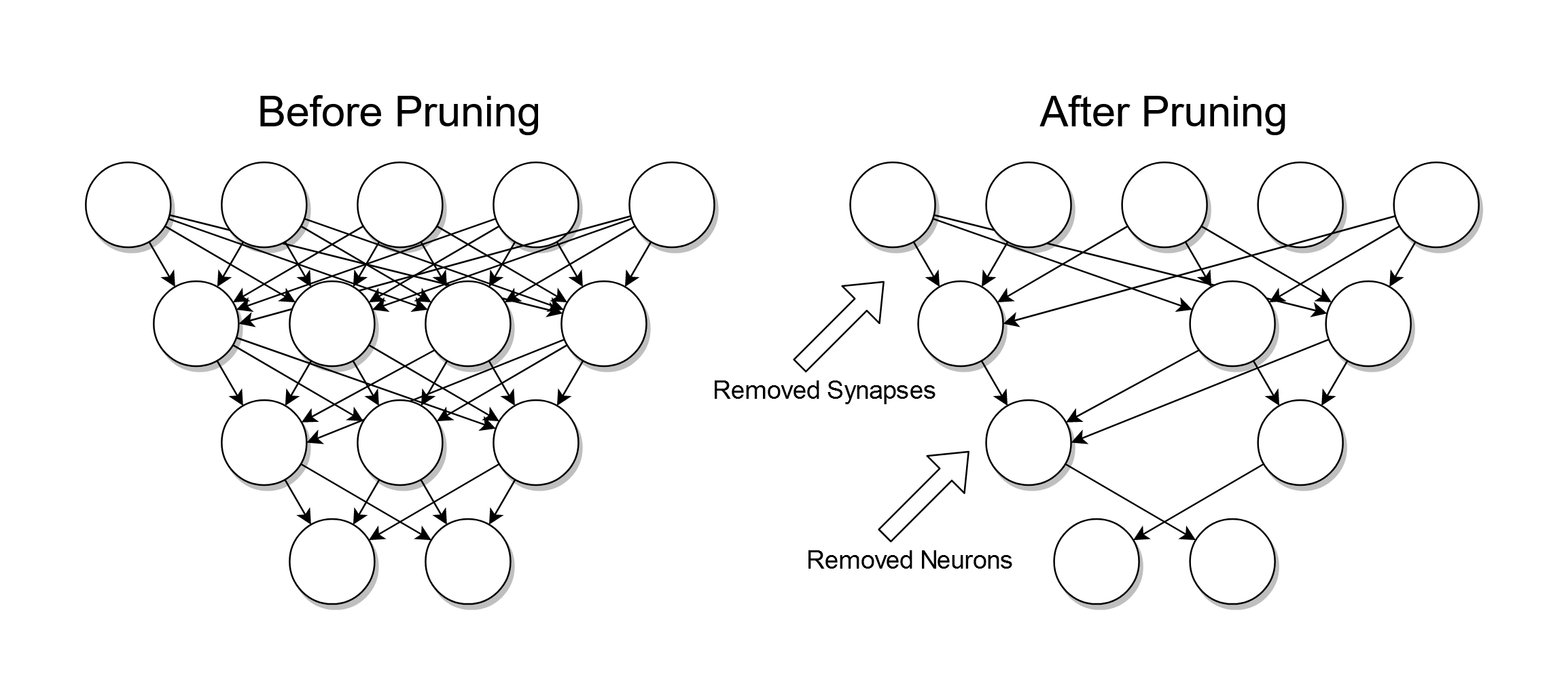

* Artificial neural network

Artificial neural networks (ANNs), usually simply called neural networks (NNs) or neural nets, are computing systems inspired by the biological neural networks that constitute animal brains.

An ANN is based on a collection of connected units ...

* Null-move heuristic

References

* * * * {{reflistFurther reading

MDL based decision tree pruning

Decision tree pruning using backpropagation neural networks

External links

Fast, Bottom-Up Decision Tree Pruning Algorithm

Introduction to Decision tree pruning

Decision trees Machine learning