Sample Entropy

Sample entropy (SampEn) is a modification of approximate entropy (ApEn) used for assessing the complexity of physiological time-series signals and diagnosing disease states. SampEn has two advantages over ApEn: data length independence and a relatively trouble-free implementation. There is also a small computational difference: in ApEn, the comparison between the template vector and the rest of the vectors also includes a comparison with itself. This guarantees that the probabilities $C_i'^{m}(r)$ are never zero, so it is always possible to take their logarithm. Because these self-comparisons lower ApEn values, signals are interpreted as more regular than they actually are. SampEn excludes these self-matches. However, since SampEn makes direct use of the correlation integrals, it is not a real measure of information but an approximation. The foundations and differences with ApEn, as well as a step-by-step tutorial for its applicati ...
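The sketch below is a minimal SampEn implementation written under stated assumptions. It uses the usual definition SampEn(m, r) = −ln(A/B). B counts pairs of length-m template vectors whose Chebyshev distance is within the tolerance r, and A counts the same for length m + 1. Self-matches are never counted, which is the difference from ApEn described above.

Several details are assumptions of this sketch rather than anything stated on this page:
- The function names are hypothetical.
- A match means distance ≤ r; some texts use < r.
- r is an absolute tolerance. For real-valued signals it is commonly chosen as a fraction of the standard deviation, such as 0.2 × SD.
- The function returns infinity when no matches exist.

```python
import math
import random
from typing import Sequence

def chebyshev(a: Sequence[float], b: Sequence[float]) -> float:
    """Largest absolute coordinate difference (the L-infinity distance)."""
    return max(abs(x - y) for x, y in zip(a, b))

def sample_entropy(x: Sequence[float], m: int = 2, r: float = 0.2) -> float:
    """Hypothetical helper: SampEn(m, r) = -ln(A / B) = ln(B / A), self-matches excluded."""
    n = len(x)
    if n <= m + 1:
        raise ValueError("series is too short for the chosen m")

    def count_pairs(length: int) -> int:
        # Use the same n - m starting points for both lengths so that
        # B (length m) and A (length m + 1) are counted over comparable sets.
        templates = [x[i:i + length] for i in range(n - m)]
        pairs = 0
        for i in range(len(templates)):
            for j in range(i + 1, len(templates)):  # j > i: a template is never compared with itself
                if chebyshev(templates[i], templates[j]) <= r:  # assumed "<= r" convention
                    pairs += 1
        return pairs

    b = count_pairs(m)
    a = count_pairs(m + 1)
    if a == 0 or b == 0:
        return math.inf  # undefined in the strict sense; reported as infinity in this sketch
    return math.log(b / a)

series_a = [0, 1] * 20                              # perfectly regular, like Series A
rng = random.Random(1)
series_b = [rng.randint(0, 1) for _ in range(300)]  # random 0/1 values, like Series B
print(sample_entropy(series_a, m=2, r=0.5))  # 0.0: every length-m match also matches at m + 1
print(sample_entropy(series_b, m=2, r=0.5))
```

Because the inner loop starts at j = i + 1, no template is compared with itself. In a perfectly regular series every length-m match extends to length m + 1, so A = B and SampEn is 0.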

Approximate Entropy

In statistics, approximate entropy (ApEn) is a technique used to quantify the amount of regularity and the unpredictability of fluctuations over time-series data. For example, consider two series of data:

Series A: (0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, ...), which alternates 0 and 1.
Series B: (0, 1, 0, 0, 1, 0, 1, 0, 0, 1, 1, 1, 1, 0, 0, 1, ...), which has either a value of 0 or 1, chosen randomly, each with probability 1/2.

Moment statistics, such as mean and variance, will not distinguish between these two series. Nor will rank order statistics distinguish between these series. Yet series A is perfectly regular: knowing a term has the value of 1 enables one to predict with certainty that the next term will have the value of 0. In contrast, series B is randomly valued: knowing a term has the value of 1 gives no insight into what value the next term will have. Regularity was originally measured by exact regularity statistics, which have mainly centered on various ...
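The snippet above is cut off before the formula. The sketch below assumes the standard definition ApEn(m, r) = Φ^m(r) − Φ^{m+1}(r). Here Φ^m(r) is the average of ln C_i^m(r), and C_i^m(r) is the fraction of length-m templates within Chebyshev distance r of template i, counting template i itself. Including that self-match keeps every logarithm defined.

The function names are hypothetical, and r = 0.5 is chosen only because the example series are binary. The sketch applies the definition to Series A and to a random series like Series B.

```python
import math
import random
from typing import Sequence

def _phi(u: Sequence[float], m: int, r: float) -> float:
    """Average log of C_i^m(r) over all length-m templates."""
    templates = [u[i:i + m] for i in range(len(u) - m + 1)]
    count = len(templates)
    total = 0.0
    for t in templates:
        # Self-match included: t is compared with itself, so matches >= 1.
        matches = sum(
            1 for s in templates if max(abs(a - b) for a, b in zip(t, s)) <= r
        )
        total += math.log(matches / count)
    return total / count

def approximate_entropy(u: Sequence[float], m: int = 2, r: float = 0.5) -> float:
    """Hypothetical helper: ApEn(m, r) = Phi^m(r) - Phi^(m+1)(r)."""
    return _phi(u, m, r) - _phi(u, m + 1, r)

series_a = [0, 1] * 20                              # alternates 0 and 1
rng = random.Random(42)
series_b = [rng.randint(0, 1) for _ in range(300)]  # random 0/1, probability 1/2 each
print(approximate_entropy(series_a))  # close to 0: highly regular
print(approximate_entropy(series_b))  # markedly larger: irregular
```

Mean and variance cannot separate the two series, but ApEn can. Note that Series A scores slightly above 0 here because of the self-match counting that SampEn removes.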

Vector (mathematics And Physics)

In mathematics and physics, the term vector refers colloquially to some quantities that cannot be expressed by a single number (a scalar), or to elements of some vector spaces. Historically, vectors were introduced in geometry and physics (typically in mechanics) for quantities that have both a magnitude and a direction, such as displacements, forces and velocity. Such quantities are represented by geometric vectors in the same way as distances, masses and time are represented by real numbers. The term vector is also used, in some contexts, for tuples, which are finite sequences of numbers of a fixed length. Both geometric vectors and tuples can be added and scaled, and these vector operations led to the concept of a vector space, which is a set equipped with a vector addition and a scalar multiplication that satisfy some axioms generalizing the main properties of operations on the above sorts of vectors. A vector space formed by geometric vectors is called a Euclidean ...
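The following is a small illustration of vectors as fixed-length tuples, using the two operations named above: addition and scaling. The helper names `add` and `scale` are hypothetical. The last line checks one vector-space axiom, distributivity of scaling over addition, on a single example; this is an illustration, not a proof.

```python
from typing import Tuple

Vector = Tuple[float, ...]

def add(u: Vector, v: Vector) -> Vector:
    """Componentwise addition; both tuples must have the same fixed length."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return tuple(a + b for a, b in zip(u, v))

def scale(c: float, v: Vector) -> Vector:
    """Scalar multiplication: multiply every component by c."""
    return tuple(c * a for a in v)

u, v = (1, 2, 3), (4, 5, 6)
print(add(u, v))    # (5, 7, 9)
print(scale(2, u))  # (2, 4, 6)
# Distributivity, c * (u + v) == c * u + c * v, checked on this example only:
print(scale(2, add(u, v)) == add(scale(2, u), scale(2, v)))  # True
```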

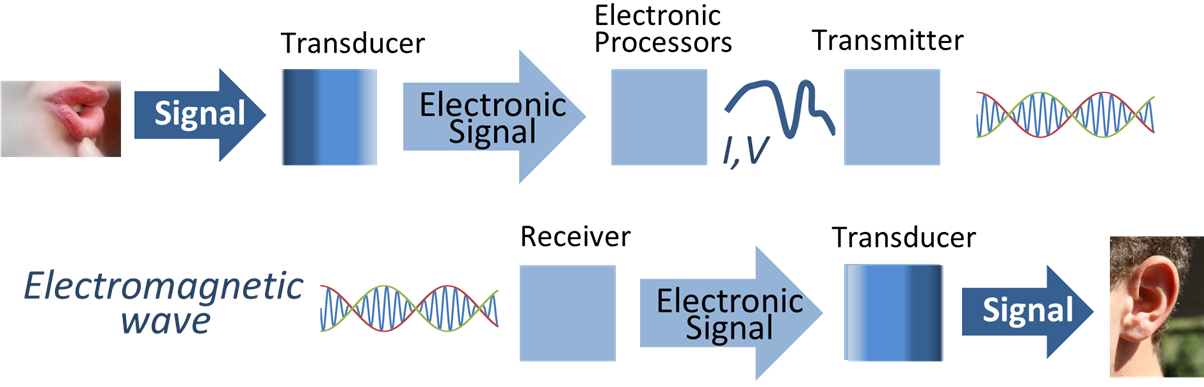

Statistical Signal Processing

Signal processing is an electrical engineering subfield that focuses on analyzing, modifying and synthesizing signals, such as sound, images, and scientific measurements. Signal processing techniques are used to optimize transmissions, improve digital storage efficiency, correct distorted signals, improve subjective video quality, and detect or pinpoint components of interest in a measured signal.

According to Alan V. Oppenheim and Ronald W. Schafer, the principles of signal processing can be found in the classical numerical analysis techniques of the 17th century. They further state that the digital refinement of these techniques can be found in the digital control systems of the 1940s and 1950s. In 1948, Claude Shannon wrote the influential paper "A Mathematical Theory of Communication", which was published in the Bell System Technical Journal. The paper laid the groundwork for later development of information communication systems and the processing of signals fo ...

Kolmogorov Complexity

In algorithmic information theory (a subfield of computer science and mathematics), the Kolmogorov complexity of an object, such as a piece of text, is the length of a shortest computer program (in a predetermined programming language) that produces the object as output. It is a measure of the computational resources needed to specify the object, and is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. It is named after Andrey Kolmogorov, who first published on the subject in 1963, and it is a generalization of classical information theory. The notion of Kolmogorov complexity can be used to state and prove impossibility results akin to Cantor's diagonal argument, Gödel's incompleteness theorem, and Turing's halting problem. In particular, no program P computing a lower bound for each text's Kolmogorov complexity can return a value essentially larger than P's own leng ...
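Kolmogorov complexity itself is not computable, so the sketch below does not compute it. It uses a common practical proxy instead: the size of a losslessly compressed copy. Together with a fixed decompressor, the compressed copy is one program that reproduces the object, so its size gives an upper bound on the complexity up to an additive constant.

The choice of zlib and the helper name are assumptions for illustration only. The example contrasts a regular byte string like Series A with a random one like Series B.

```python
import random
import zlib

def compression_upper_bound(data: bytes) -> int:
    """Hypothetical helper: zlib-compressed size in bytes.

    A computable stand-in that bounds Kolmogorov complexity from above
    (up to the constant cost of the decompressor); it is not K itself.
    """
    return len(zlib.compress(data, 9))

regular = b"01" * 5000                                          # alternating, highly regular
rng = random.Random(0)
irregular = bytes(rng.choice(b"01") for _ in range(10_000))     # random '0'/'1' bytes

print(compression_upper_bound(regular), compression_upper_bound(irregular))
```

The regular string shrinks to a few dozen bytes, while the random one cannot be compressed much below its roughly 10,000 bits of randomness.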

Standard Deviation

In statistics, the standard deviation is a measure of the amount of variation or dispersion of a set of values. A low standard deviation indicates that the values tend to be close to the mean (also called the expected value) of the set, while a high standard deviation indicates that the values are spread out over a wider range. Standard deviation may be abbreviated SD, and is most commonly represented in mathematical texts and equations by the lowercase Greek letter σ (sigma) for the population standard deviation, or the Latin letter s for the sample standard deviation. The standard deviation of a random variable, sample, statistical population, data set, or probability distribution is the square root of its variance. It is algebraically simpler, though in practice less robust, than the average absolute deviation. A useful property of the standard deviation is that, unlike the variance, it is expressed in the same unit as the data. The standard deviation of a popu ...
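The sketch below contrasts σ (population) with s (sample). The population form divides the sum of squared deviations by n before taking the square root. For s, the sketch assumes the common Bessel-corrected convention of dividing by n − 1, which the paragraph above does not spell out. The helper names are hypothetical; the standard-library `statistics.pstdev` and `statistics.stdev` follow the same two conventions and are used in the check.

```python
import math
import statistics

def population_sd(values):
    """sigma: square root of the mean squared deviation (divide by n)."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))

def sample_sd(values):
    """s: Bessel-corrected version (divide by n - 1); an assumed convention."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / (len(values) - 1))

data = [2, 4, 4, 4, 5, 5, 7, 9]   # mean 5, squared deviations sum to 32
print(population_sd(data))        # 2.0
print(sample_sd(data))            # sqrt(32 / 7), about 2.138
print(statistics.pstdev(data), statistics.stdev(data))
```

Both results are in the same unit as the data, unlike the variances 4 and 32/7.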

Self-similarity

In mathematics, a self-similar object is exactly or approximately similar to a part of itself (i.e., the whole has the same shape as one or more of the parts). Many objects in the real world, such as coastlines, are statistically self-similar: parts of them show the same statistical properties at many scales. Self-similarity is a typical property of fractals. Scale invariance is an exact form of self-similarity where at any magnification there is a smaller piece of the object that is similar to the whole. For instance, a side of the Koch snowflake is both symmetrical and scale-invariant; it can be continually magnified threefold without changing shape. The non-trivial similarity evident in fractals is distinguished by their fine structure, or detail on arbitrarily small scales. As a counterexample, whereas any portion of a straight line may resemble the whole, further detail is not revealed. A time-developing phenomenon is said to exhibit self-similarity if the numerical v ...
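The following sketch builds one side of the Koch snowflake (the Koch curve) to show the exact self-similarity described above. Each refinement replaces a segment with four copies at one third of its length, so magnifying any piece threefold reproduces the same shape.

The function names are hypothetical. The printed similarity dimension, log 4 / log 3, is the standard value for this construction and is not taken from the text above.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]

def koch_curve(p: Point, q: Point, depth: int) -> List[Point]:
    """Hypothetical helper: points of a Koch curve from p to q after `depth` refinements."""
    if depth == 0:
        return [p, q]
    (x0, y0), (x1, y1) = p, q
    dx, dy = (x1 - x0) / 3, (y1 - y0) / 3
    a = (x0 + dx, y0 + dy)          # one third of the way along
    b = (x0 + 2 * dx, y0 + 2 * dy)  # two thirds of the way along
    # Apex: the middle third (dx, dy) rotated by +60 degrees, attached at a.
    c, s = 0.5, math.sqrt(3) / 2
    apex = (a[0] + c * dx - s * dy, a[1] + s * dx + c * dy)
    points = [p]
    # Each of the four one-third-scale pieces is refined in exactly the same way.
    for start, end in ((p, a), (a, apex), (apex, b), (b, q)):
        points.extend(koch_curve(start, end, depth - 1)[1:])
    return points

def polyline_length(points: List[Point]) -> float:
    return sum(math.dist(u, v) for u, v in zip(points, points[1:]))

side = koch_curve((0.0, 0.0), (1.0, 0.0), depth=3)
print(len(side) - 1)              # 64 segments = 4**3
print(polyline_length(side))      # about (4/3)**3
print(math.log(4) / math.log(3))  # similarity dimension, about 1.2619
```

The segment count grows as 4^depth while each segment shrinks to (1/3)^depth of the original length.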

Euclidean Distance

In mathematics, the Euclidean distance between two points in Euclidean space is the length of a line segment between the two points. It can be calculated from the Cartesian coordinates of the points using the Pythagorean theorem, and is therefore occasionally called the Pythagorean distance. These names come from the ancient Greek mathematicians Euclid and Pythagoras, although Euclid did not represent distances as numbers, and the connection from the Pythagorean theorem to distance calculation was not made until the 18th century. The distance between two objects that are not points is usually defined to be the smallest distance among pairs of points from the two objects. Formulas are known for computing distances between different types of objects, such as the distance from a point to a line. In advanced mathematics, the concept of distance has been generalized to abstract metric spaces, and other distances than Euclidean have been studied. In some applications in statistic ...
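This is a minimal sketch of the Pythagorean calculation from Cartesian coordinates: the square root of the sum of squared coordinate differences. The helper name is hypothetical. Python's standard `math.dist` (Python 3.8+) computes the same quantity and is shown for comparison.

```python
import math

def euclidean_distance(p, q):
    """Hypothetical helper: sqrt of the sum of squared coordinate differences."""
    if len(p) != len(q):
        raise ValueError("points must have the same dimension")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

print(euclidean_distance((0, 0), (3, 4)))  # 5.0, the 3-4-5 right triangle
print(math.dist((1, 2, 3), (4, 6, 3)))     # library equivalent, same idea in 3-D
```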

Chebyshev Distance

In mathematics, Chebyshev distance (or Tchebychev distance), maximum metric, or L∞ metric is a metric defined on a vector space where the distance between two vectors is the greatest of their differences along any coordinate dimension. It is named after Pafnuty Chebyshev. It is also known as chessboard distance, since in the game of chess the minimum number of moves needed by a king to go from one square on a chessboard to another equals the Chebyshev distance between the centers of the squares, if the squares have side length one, as represented in 2-D spatial coordinates with axes aligned to the edges of the board. For example, the Chebyshev distance between f6 and e2 equals 4.

Definition: the Chebyshev distance between two vectors or points x and y, with standard coordinates $x_i$ and $y_i$, respectively, is

$$D_{\mathrm{Chebyshev}}(x, y) := \max_i \left( |x_i - y_i| \right).$$

This equals the limit of the $L^p$ metrics:

$$\lim_{p \to \infty} \left( \sum_{i=1}^{n} |x_i - y_i|^p \right)^{1/p},$$

hence it is also known as th ...
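The sketch below reproduces the chessboard example: f6 and e2 are 4 king moves apart. It also shows the $L^p$ distance approaching that value as p grows. The square-to-coordinate mapping (file a = 1, rank = row number) and all helper names are assumptions for illustration.

```python
def chebyshev(x, y):
    """max_i |x_i - y_i|"""
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    return max(abs(a - b) for a, b in zip(x, y))

def minkowski(x, y, p):
    """Hypothetical helper: the L^p distance (sum_i |x_i - y_i|^p)^(1/p)."""
    return sum(abs(a - b) ** p for a, b in zip(x, y)) ** (1 / p)

def square_to_coords(square: str):
    """Assumed mapping from algebraic notation, e.g. 'f6' -> (6, 6)."""
    return (ord(square[0]) - ord("a") + 1, int(square[1:]))

f6, e2 = square_to_coords("f6"), square_to_coords("e2")
print(chebyshev(f6, e2))  # 4: one file apart, four ranks apart
for p in (1, 2, 10, 50):
    # L^p distances shrink toward the Chebyshev distance as p grows.
    print(p, minkowski(f6, e2, p))
```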

Simultaneous

Simultaneity may refer to:
* Relativity of simultaneity, a concept in special relativity
* Simultaneity (music), more than one complete musical texture occurring at the same time, rather than in succession
* Simultaneity, a concept in endogeneity

See also:
* Non-simultaneity
* Diversity factor, or simultaneity factor
* Time Structured Mapping: Time Structured Mapping (TSM) is a score-based system created and used by the composer Pete M Wyer. It uses the bar-lines found in conventional musical scores to indicate durational periods during which performers, who may include actors, sing ...

Time-series

In mathematics, a time series is a series of data points indexed (or listed or graphed) in time order. Most commonly, a time series is a sequence taken at successive equally spaced points in time. Thus it is a sequence of discrete-time data. Examples of time series are heights of ocean tides, counts of sunspots, and the daily closing value of the Dow Jones Industrial Average. A time series is very frequently plotted via a run chart (which is a temporal line chart). Time series are used in statistics, signal processing, pattern recognition, econometrics, mathematical finance, weather forecasting, earthquake prediction, electroencephalography, control engineering, astronomy, communications engineering, and in nearly any domain of applied science and engineering that involves temporal measurements. Time series analysis comprises methods for analyzing time series data in order to extract meaningful statistics and other characteristics of the data. Time series forecasting ...

Probability

Probability is the branch of mathematics concerning numerical descriptions of how likely an event is to occur, or how likely it is that a proposition is true. The probability of an event is a number between 0 and 1, where, roughly speaking, 0 indicates impossibility of the event and 1 indicates certainty (Alan Stuart and Keith Ord, Kendall's Advanced Theory of Statistics, Volume 1: Distribution Theory, 6th ed., 2009; William Feller, An Introduction to Probability Theory and Its Applications, Vol. 1, 3rd ed., Wiley, 1968). The higher the probability of an event, the more likely it is that the event will occur. A simple example is the tossing of a fair (unbiased) coin. Since the coin is fair, the two outcomes ("heads" and "tails") are both equally probable; the probability of "heads" equals the probability of "tails"; and since no other outcomes are possible, the probability of either "heads" or "tails" is 1/2 (which could also be written ...
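The fair-coin reasoning can be sketched as classical probability over equally likely outcomes: the probability of an event is the number of favourable outcomes divided by the total number of outcomes. The helper name is hypothetical, and exact fractions are used so the results read as 1/2 rather than as floating-point values. The last line extends the same idea to two independent tosses.

```python
from fractions import Fraction
from itertools import product

def probability(event, outcomes):
    """Hypothetical helper: favourable / total, assuming equally likely outcomes."""
    favourable = sum(1 for o in outcomes if event(o))
    return Fraction(favourable, len(outcomes))

coin = ["heads", "tails"]
p_heads = probability(lambda o: o == "heads", coin)
p_tails = probability(lambda o: o == "tails", coin)
print(p_heads, p_tails)   # 1/2 1/2
print(p_heads + p_tails)  # 1: no other outcome is possible

two_tosses = list(product(coin, repeat=2))
print(probability(lambda o: o == ("heads", "heads"), two_tosses))  # 1/4
```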