
Ridge Function

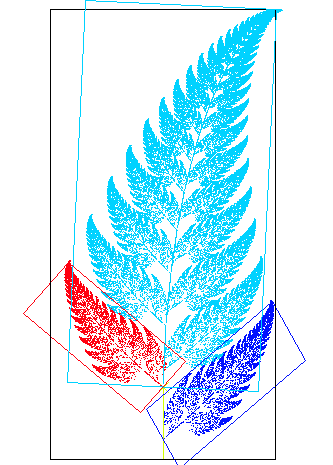

In mathematics, a ridge function is any function $f:\mathbb{R}^d\rightarrow\mathbb{R}$ that can be written as the composition of a univariate function with an affine transformation, that is, $f(\boldsymbol{x}) = g(\boldsymbol{x}\cdot \boldsymbol{a})$ for some $g:\mathbb{R}\rightarrow\mathbb{R}$ and $\boldsymbol{a}\in\mathbb{R}^d$. Coinage of the term "ridge function" is often attributed to B.F. Logan and L.A. Shepp.

Relevance. A ridge function is not susceptible to the curse of dimensionality, making it an instrumental tool in various estimation problems. This is a direct result of the fact that ridge functions are constant in $d-1$ directions. Let $\boldsymbol{a}_1,\dots,\boldsymbol{a}_{d-1}$ be $d-1$ independent vectors that are orthogonal to $\boldsymbol{a}$, so that these vectors span $d-1$ dimensions. Then

$$f\left(\boldsymbol{x} + \sum_{k=1}^{d-1} c_k\boldsymbol{a}_k\right) = g\left(\boldsymbol{x}\cdot\boldsymbol{a} + \sum_{k=1}^{d-1} c_k\,\boldsymbol{a}_k\cdot\boldsymbol{a}\right) = g\left(\boldsymbol{x}\cdot\boldsymbol{a} + \sum_{k=1}^{d-1} c_k \cdot 0\right) = g(\boldsymbol{x}\cdot\boldsymbol{a}) = f(\boldsymbol{x})$$

for all $c_i\in\mathbb{R}$, $1\le i < d$.
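The invariance derived above can be checked numerically. The following is a minimal sketch in plain Python; the choice of `math.tanh` for $g$, the vector `a`, and the helper names `dot` and `make_ridge` are illustrative assumptions, not part of the source.

```python
import math

def dot(u, v):
    """Euclidean inner product of two equal-length sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))

def make_ridge(g, a):
    """Return the ridge function f(x) = g(x . a)."""
    return lambda x: g(dot(x, a))

# Hypothetical example in d = 3: direction a and profile g = tanh.
a = [1.0, 2.0, 2.0]
f = make_ridge(math.tanh, a)

# Two independent vectors orthogonal to a (d - 1 = 2 directions).
a1 = [2.0, -1.0, 0.0]   # 2*1 - 1*2 + 0*2 = 0
a2 = [2.0, 0.0, -1.0]   # 2*1 + 0*2 - 1*2 = 0

x = [0.3, -0.1, 0.2]
c1, c2 = 5.0, -7.5

# Shift x by an arbitrary combination of the orthogonal directions.
shifted = [xi + c1 * u + c2 * v for xi, u, v in zip(x, a1, a2)]

# Shift x along a itself, the one direction in which f can vary.
along_a = [xi + 0.1 * ai for xi, ai in zip(x, a)]

print(f(x), f(shifted), f(along_a))
```

The first two printed values agree up to floating-point rounding, while the third differs, since only movement along `a` changes the argument of `g`.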

Univariate

In mathematics, a univariate object is an expression, equation, function or polynomial involving only one variable. Objects involving more than one variable are multivariate. In some cases the distinction between the univariate and multivariate cases is fundamental; for example, the fundamental theorem of algebra and Euclid's algorithm for polynomials are fundamental properties of univariate polynomials that cannot be generalized to multivariate polynomials. In statistics, a univariate distribution characterizes one variable, although it can be applied in other ways as well. For example, univariate data are composed of a single scalar component. In time series analysis, the whole time series is the "variable": a univariate time series is the series of values over time of a single quantity. Correspondingly, a "multivariate time series" characterizes the changing values over time of several quantities. In some cases, the terminology is ambiguous, since the values within a univariate t ...

Affine Transformation

In Euclidean geometry, an affine transformation or affinity (from the Latin, *affinis*, "connected with") is a geometric transformation that preserves lines and parallelism, but not necessarily Euclidean distances and angles. More generally, an affine transformation is an automorphism of an affine space (Euclidean spaces are specific affine spaces), that is, a function which maps an affine space onto itself while preserving both the dimension of any affine subspaces (meaning that it sends points to points, lines to lines, planes to planes, and so on) and the ratios of the lengths of parallel line segments. Consequently, sets of parallel affine subspaces remain parallel after an affine transformation. An affine transformation does not necessarily preserve angles between lines or distances between points, though it does preserve ratios of distances between points lying on a straight line. If $X$ is the point set of an affine space, then every affine transformation on $X$ can be repre ...
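The preserved and non-preserved quantities described above can be illustrated with a small numeric check. This is a sketch under assumptions: the map is taken to be $p \mapsto Ap + b$ in the plane, and the matrix `A`, offset `b`, and sample points are hypothetical values chosen for illustration.

```python
import math

def affine(A, b, p):
    """Apply the 2D affine map p -> A p + b."""
    return (A[0][0] * p[0] + A[0][1] * p[1] + b[0],
            A[1][0] * p[0] + A[1][1] * p[1] + b[1])

def sub(p, q):
    return (p[0] - q[0], p[1] - q[1])

def cross(u, v):
    """2D cross product; zero exactly when u and v are parallel."""
    return u[0] * v[1] - u[1] * v[0]

def norm(u):
    return math.hypot(u[0], u[1])

A = [[2.0, 1.0], [0.0, 3.0]]   # invertible (determinant 6), not a rotation
b = (1.0, -2.0)

# Collinear points: Q lies one third of the way from P to R.
P, Q, R = (0.0, 0.0), (1.0, 1.0), (3.0, 3.0)
# Segment ST is parallel to PR.
S, T = (0.0, 2.0), (2.0, 4.0)

P2, Q2, R2, S2, T2 = (affine(A, b, p) for p in (P, Q, R, S, T))

ratio_before = norm(sub(Q, P)) / norm(sub(R, P))
ratio_after = norm(sub(Q2, P2)) / norm(sub(R2, P2))
parallel_after = cross(sub(R2, P2), sub(T2, S2))

print(ratio_before, ratio_after)               # ratio along the line is kept
print(parallel_after)                          # images remain parallel
print(norm(sub(Q, P)), norm(sub(Q2, P2)))      # distance itself changes
```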

Curse Of Dimensionality

The curse of dimensionality refers to various phenomena that arise when analyzing and organizing data in high-dimensional spaces that do not occur in low-dimensional settings such as the three-dimensional physical space of everyday experience. The expression was coined by Richard E. Bellman when considering problems in dynamic programming. Dimensionally cursed phenomena occur in domains such as numerical analysis, sampling, combinatorics, machine learning, data mining and databases. The common theme of these problems is that when the dimensionality increases, the volume of the space increases so fast that the available data become sparse. In order to obtain a reliable result, the amount of data needed often grows exponentially with the dimensionality. Also, organizing and searching data often relies on detecting areas where objects form groups with similar properties; in high dimensional data, however, all objects appear to be sparse and dissimilar in many ways, which prevents co ...
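Two common back-of-the-envelope illustrations of this growth can be computed directly. The sketch below is not taken from the source: the grid resolution of 10 points per axis and the inscribed-ball comparison are assumptions chosen to make the exponential scaling visible.

```python
import math

def grid_points(d, per_axis=10):
    """Points needed to sample [0, 1]^d on a regular grid with per_axis points per axis."""
    return per_axis ** d

def inscribed_ball_fraction(d):
    """Fraction of the cube [-1, 1]^d occupied by the unit ball inscribed in it."""
    ball_volume = math.pi ** (d / 2) / math.gamma(d / 2 + 1)
    return ball_volume / 2 ** d

for d in (1, 2, 3, 5, 10):
    print(d, grid_points(d), inscribed_ball_fraction(d))
```

The grid count grows as $10^d$, and the share of the cube lying inside the ball shrinks rapidly, so most of the volume ends up in the corners, far from any fixed number of sample points.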

Projection Pursuit

Projection pursuit (PP) is a type of statistical technique which involves finding the most "interesting" possible projections in multidimensional data. Often, projections which deviate more from a normal distribution are considered to be more interesting. As each projection is found, the data are reduced by removing the component along that projection, and the process is repeated to find new projections; this is the "pursuit" aspect that motivated the technique known as matching pursuit. The idea of projection pursuit is to locate the projection or projections from high-dimensional space to low-dimensional space that reveal the most details about the structure of the data set. Once an interesting set of projections has been found, existing structures (clusters, surfaces, etc.) can be extracted and analyzed separately. Projection pursuit has been widely used for blind source separation, so it is very important in independent component analysis. Projection pursuit seeks one projecti ...
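The search for a single "interesting" projection can be sketched as follows. This is an illustration under several assumptions that are not in the source: the data are synthetic 2D points with two clusters along the first axis, "interesting" is measured by the absolute excess kurtosis (one of several possible non-normality indices), and the search is a coarse scan over angles rather than an optimizer. The removal step that gives the method its "pursuit" character is not implemented here.

```python
import math
import random

def excess_kurtosis(values):
    """Sample excess kurtosis; close to 0 for normally distributed data."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m4 / (m2 * m2) - 3.0

def project(points, angle_deg):
    """Project 2D points onto the unit vector at the given angle."""
    t = math.radians(angle_deg)
    return [x * math.cos(t) + y * math.sin(t) for x, y in points]

# Synthetic data: two clusters along the x-axis, Gaussian noise along y.
rng = random.Random(0)
data = []
for i in range(400):
    centre = 3.0 if i % 2 == 0 else -3.0
    data.append((centre + rng.gauss(0.0, 0.5), rng.gauss(0.0, 1.0)))

# Score each candidate direction by how far its projection is from normal.
scores = {angle: abs(excess_kurtosis(project(data, angle)))
          for angle in range(0, 180, 5)}
best_angle = max(scores, key=scores.get)

print(best_angle, scores[best_angle], scores[90])
```

The highest-scoring direction lies close to the x-axis, where the two clusters separate, while the projection onto the y-axis (90 degrees) looks nearly normal and scores low.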

Generalized Linear Model

In statistics, a generalized linear model (GLM) is a flexible generalization of ordinary linear regression. The GLM generalizes linear regression by allowing the linear model to be related to the response variable via a *link function* and by allowing the magnitude of the variance of each measurement to be a function of its predicted value. Generalized linear models were formulated by John Nelder and Robert Wedderburn as a way of unifying various other statistical models, including linear regression, logistic regression and Poisson regression. They proposed an iteratively reweighted least squares method for maximum likelihood estimation (MLE) of the model parameters. MLE remains popular and is the default method on many statistical computing packages. Other approaches, including Bayesian regression and least squares fitting to variance stabilized responses, have been developed.

Intuition. Ordinary linear regression predicts the expected value of a given unknown quantity ...
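As an illustration of a link function and of iteratively reweighted least squares, the sketch below fits a logistic regression with an intercept and one slope. It is a textbook-style Newton/IRLS update written for this example, not necessarily the exact formulation of Nelder and Wedderburn; the function name `fit_logistic_irls` and the toy data are hypothetical.

```python
import math

def sigmoid(z):
    """Inverse of the logit link: maps the linear predictor to a mean in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic_irls(xs, ys, iterations=25):
    """Fit P(y = 1 | x) = sigmoid(b0 + b1 * x) by repeated weighted least-squares steps."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        mus = [sigmoid(b0 + b1 * x) for x in xs]
        ws = [mu * (1.0 - mu) for mu in mus]   # variance depends on the predicted mean
        # Entries of the 2x2 matrix X^T W X.
        s00 = sum(ws)
        s01 = sum(w * x for w, x in zip(ws, xs))
        s11 = sum(w * x * x for w, x in zip(ws, xs))
        # Score vector X^T (y - mu).
        g0 = sum(y - mu for y, mu in zip(ys, mus))
        g1 = sum(x * (y - mu) for x, y, mu in zip(xs, ys, mus))
        # Solve the 2x2 system (X^T W X) delta = X^T (y - mu) by Cramer's rule.
        det = s00 * s11 - s01 * s01
        b0 += (s11 * g0 - s01 * g1) / det
        b1 += (s00 * g1 - s01 * g0) / det
    return b0, b1

# Toy data with overlapping classes, so a finite maximum likelihood estimate exists.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 1, 0, 1, 1]
b0, b1 = fit_logistic_irls(xs, ys)
print(b0, b1)
```

The weights `ws` show the second GLM ingredient named above: each observation's variance is a function of its predicted value, here $\mu(1-\mu)$ for a Bernoulli response.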

Activation Function

In artificial neural networks, the activation function of a node defines the output of that node given an input or set of inputs. A standard integrated circuit can be seen as a digital network of activation functions that can be "ON" (1) or "OFF" (0), depending on input. This is similar to the linear perceptron in neural networks. However, only *nonlinear* activation functions allow such networks to compute nontrivial problems using only a small number of nodes, and such activation functions are called nonlinearities.

Classification of activation functions. The most common activation functions can be divided into three categories: ridge functions, radial functions and fold functions. An activation function $f$ is saturating if $\lim_{|v|\to\infty} |\nabla f(v)| = 0$. It is nonsaturating if it is not saturating. Non-saturating activation functions, such as ReLU, may be better than saturating activation functions, as they do not suffer from the vanishing gradient problem.

Ridge activation functions ...
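The saturating versus non-saturating distinction can be seen by evaluating derivatives at increasingly large inputs. The sketch below uses the logistic sigmoid as an assumed example of a saturating function (it is not named in the text above) alongside ReLU; for a scalar input the gradient reduces to the ordinary derivative.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def sigmoid_grad(v):
    """Derivative of the logistic sigmoid: s(v) * (1 - s(v))."""
    s = sigmoid(v)
    return s * (1.0 - s)

def relu_grad(v):
    """Derivative of ReLU, max(0, v); taken as 0 at v = 0 by convention."""
    return 1.0 if v > 0 else 0.0

for v in (0.0, 2.0, 10.0, 50.0):
    print(v, sigmoid_grad(v), relu_grad(v))
```

The sigmoid's derivative shrinks toward zero in both directions, whereas ReLU's derivative stays at 1 for every positive input, so its gradient does not vanish as $v \to +\infty$.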

Neural Network

A neural network is a network or circuit of biological neurons, or, in a modern sense, an artificial neural network, composed of artificial neurons or nodes. Thus, a neural network is either a biological neural network, made up of biological neurons, or an artificial neural network, used for solving artificial intelligence (AI) problems. The connections of the biological neuron are modeled in artificial neural networks as weights between nodes. A positive weight reflects an excitatory connection, while negative values mean inhibitory connections. All inputs are modified by a weight and summed. This activity is referred to as a linear combination. Finally, an activation function controls the amplitude of the output. For example, an acceptable range of output is usually between 0 and 1, or it could be −1 and 1. These artificial networks may be used for predictive modeling, adaptive control and applications where they can be trained via a dataset. Self-learning resulting from e ...
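The weighted sum followed by an activation can be written out for a single artificial neuron. This is a minimal sketch: the function name `neuron`, the example weights and inputs, and the choice of the logistic sigmoid and `tanh` as activations are assumptions made for illustration.

```python
import math

def sigmoid(z):
    """Squashes any real input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, activation):
    """Weight each input, sum the results (a linear combination), then apply the activation."""
    z = sum(w * x for w, x in zip(weights, inputs))
    return activation(z)

inputs = [0.5, -1.0, 2.0]
weights = [0.8, 0.3, -0.6]    # positive: excitatory, negative: inhibitory

out_unit = neuron(inputs, weights, sigmoid)      # output between 0 and 1
out_signed = neuron(inputs, weights, math.tanh)  # output between -1 and 1
print(out_unit, out_signed)
```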