|

Program Counter

The program counter (PC), commonly called the instruction pointer (IP) in Intel x86 and Itanium microprocessors, and sometimes called the instruction address register (IAR), the instruction counter, or just part of the instruction sequencer, is a processor register that indicates where a computer is in its program sequence. Usually, the PC is incremented after fetching an instruction, and holds the memory address of (" points to") the next instruction that would be executed. Processors usually fetch instructions sequentially from memory, but ''control transfer'' instructions change the sequence by placing a new value in the PC. These include branches (sometimes called jumps), subroutine calls, and returns. A transfer that is conditional on the truth of some assertion lets the computer follow a different sequence under different conditions. A branch provides that the next instruction is fetched from elsewhere in memory. A subroutine call not only branches but saves the ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

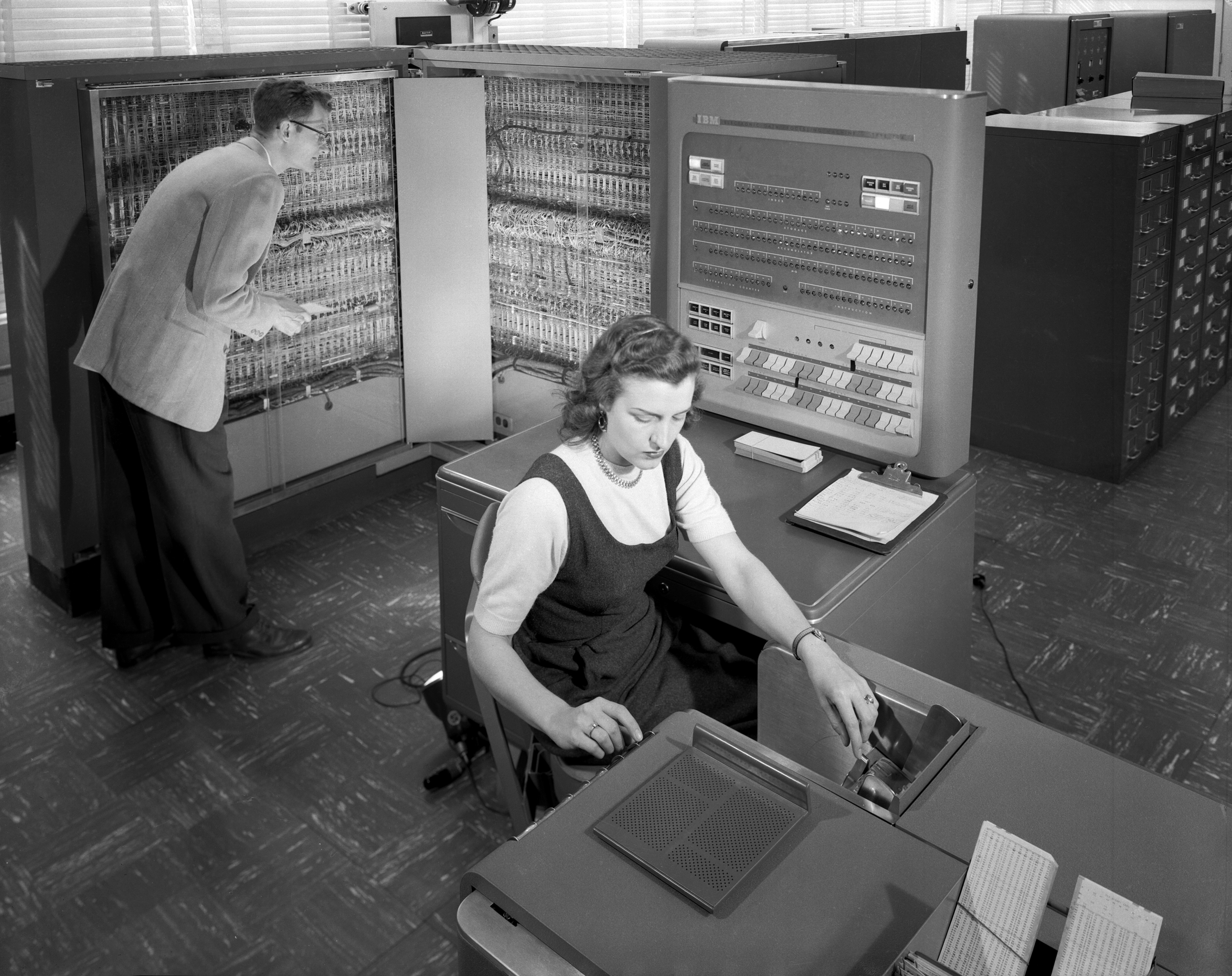

IBM 701console

International Business Machines Corporation (using the trademark IBM), nicknamed Big Blue, is an American multinational technology company headquartered in Armonk, New York, and present in over 175 countries. It is a publicly traded company and one of the 30 companies in the Dow Jones Industrial Average. IBM is the largest industrial research organization in the world, with 19 research facilities across a dozen countries; for 29 consecutive years, from 1993 to 2021, it held the record for most annual U.S. patents generated by a business. IBM was founded in 1911 as the Computing-Tabulating-Recording Company (CTR), a holding company of manufacturers of record-keeping and measuring systems. It was renamed "International Business Machines" in 1924 and soon became the leading manufacturer of punch-card tabulating systems. During the 1960s and 1970s, the IBM mainframe, exemplified by the System/360 and its successors, was the world's dominant computing platform, with the com ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Counter (digital)

In digital electronics, a counter is a sequential logic circuit that counts and stores the number of positive or negative transitions of a clock signal. A counter typically consists of flip-flop (electronics), flip-flops, which store a value representing the current count, and in many cases, additional logic to effect particular counting sequences, qualify clocks and perform other functions. Each relevant clock transition causes the value stored in the counter to increment or decrement (increase or decrease by one). A digital counter is a finite state machine, with a ''clock'' input signal and multiple output signals that collectively represent the state. The state indicates the current count, encoded directly as a binary number, binary or binary-coded decimal (BCD) number or using encodings such as one-hot or Gray code. Most counters have a ''reset'' input which is used to initialize the count. Depending on the design, a counter may have additional inputs to control functions suc ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Dataflow

In computing, dataflow is a broad concept, which has various meanings depending on the application and context. In the context of software architecture, data flow relates to stream processing or reactive programming. Software architecture Dataflow computing is a software paradigm based on the idea of representing computations as a directed graph, where nodes are computations and data flow along the edges. Dataflow can also be called stream processing or reactive programming. There have been multiple data-flow/stream processing languages of various forms (see Stream processing). Data-flow hardware (see Dataflow architecture) is an alternative to the classic von Neumann architecture. The most obvious example of data-flow programming is the subset known as reactive programming with spreadsheets. As a user enters new values, they are instantly transmitted to the next logical "actor" or formula for calculation. Distributed data flows have also been proposed as a programming ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Parallel Computing

Parallel computing is a type of computing, computation in which many calculations or Process (computing), processes are carried out simultaneously. Large problems can often be divided into smaller ones, which can then be solved at the same time. There are several different forms of parallel computing: Bit-level parallelism, bit-level, Instruction-level parallelism, instruction-level, Data parallelism, data, and task parallelism. Parallelism has long been employed in high-performance computing, but has gained broader interest due to the physical constraints preventing frequency scaling.S.V. Adve ''et al.'' (November 2008)"Parallel Computing Research at Illinois: The UPCRC Agenda" (PDF). Parallel@Illinois, University of Illinois at Urbana-Champaign. "The main techniques for these performance benefits—increased clock frequency and smarter but increasingly complex architectures—are now hitting the so-called power wall. The computer industry has accepted that future performance inc ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Von Neumann Bottleneck

The von Neumann architecture—also known as the von Neumann model or Princeton architecture—is a computer architecture based on the '' First Draft of a Report on the EDVAC'', written by John von Neumann in 1945, describing designs discussed with John Mauchly and J. Presper Eckert at the University of Pennsylvania's Moore School of Electrical Engineering. The document describes a design architecture for an electronic digital computer made of "organs" that were later understood to have these components: * A processing unit with both an arithmetic logic unit and processor registers * A control unit that includes an instruction register and a program counter * Memory that stores data and instructions * External mass storage * Input and output mechanisms.. The attribution of the invention of the architecture to von Neumann is controversial, not least because Eckert and Mauchly had done a lot of the required design work and claim to have had the idea for stored programs ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Control Flow

In computer science, control flow (or flow of control) is the order in which individual statements, instructions or function calls of an imperative program are executed or evaluated. The emphasis on explicit control flow distinguishes an ''imperative programming'' language from a ''declarative programming'' language. Within an imperative programming language, a ''control flow statement'' is a statement that results in a choice being made as to which of two or more paths to follow. For non-strict functional languages, functions and language constructs exist to achieve the same result, but they are usually not termed control flow statements. A set of statements is in turn generally structured as a block, which in addition to grouping, also defines a lexical scope. Interrupts and signals are low-level mechanisms that can alter the flow of control in a way similar to a subroutine, but usually occur as a response to some external stimulus or event (that can occur asynchr ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Von Neumann Architecture

The von Neumann architecture—also known as the von Neumann model or Princeton architecture—is a computer architecture based on the '' First Draft of a Report on the EDVAC'', written by John von Neumann in 1945, describing designs discussed with John Mauchly and J. Presper Eckert at the University of Pennsylvania's Moore School of Electrical Engineering. The document describes a design architecture for an electronic digital computer made of "organs" that were later understood to have these components: * A processing unit with both an arithmetic logic unit and processor registers * A control unit that includes an instruction register and a program counter * Memory that stores data and instructions * External mass storage * Input and output mechanisms.. The attribution of the invention of the architecture to von Neumann is controversial, not least because Eckert and Mauchly had done a lot of the required design work and claim to have had the idea for stored programs ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Page (computer Memory)

A page, memory page, or virtual page is a fixed-length contiguous block of virtual memory, described by a single entry in a page table. It is the smallest unit of data for memory management in an operating system that uses virtual memory. Similarly, a page frame is the smallest fixed-length contiguous block of physical memory into which memory pages are mapped by the operating system. A transfer of pages between main memory and an auxiliary store, such as a hard disk drive, is referred to as paging or swapping. Explanation Computer memory is divided into pages so that information can be found more quickly. The concept is named by analogy to the pages of a printed book. If a reader wanted to find, for example, the 5,000th word in the book, they could count from the first word. This would be time-consuming. It would be much faster if the reader had a listing of how many words are on each page. From this listing they could determine which page the 5,000th word appears on, and h ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Segmentation (memory)

Memory segmentation is an operating system memory management technique of dividing a computer's primary memory into segments or sections. In a computer system using segmentation, a reference to a memory location includes a value that identifies a segment and an offset (memory location) within that segment. Segments or sections are also used in object files of compiled programs when they are linked together into a program image and when the image is loaded into memory. Segments usually correspond to natural divisions of a program such as individual routines or data tables so segmentation is generally more visible to the programmer than paging alone. Segments may be created for program modules, or for classes of memory usage such as code segments and data segments. Certain segments may be shared between programs. Segmentation was originally invented as a method by which system software could isolate software processes ( tasks) and data they are using. It was intended to ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

AVR Microcontrollers

AVR is a family of microcontrollers developed since 1996 by Atmel, acquired by Microchip Technology in 2016. They are 8-bit RISC single-chip microcontrollers based on a modified Harvard architecture. AVR was one of the first microcontroller families to use on-chip flash memory for program storage, as opposed to one-time programmable ROM, EPROM, or EEPROM used by other microcontrollers at the time. AVR microcontrollers are used numerously as embedded systems. They are especially common in hobbyist and educational embedded applications, popularized by their inclusion in many of the Arduino line of open hardware development boards. The AVR 8-bit microcontroller architecture was introduced in 1997. By 2003, Atmel had shipped 500 million AVR flash microcontrollers. History The AVR architecture was conceived by two students at the Norwegian Institute of Technology (NTH), Alf-Egil Bogen and Vegard Wollan.Archived aGhostarchiveand thWayback Machine Atmel says that the nam ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Stored-program Computer

A stored-program computer is a computer that stores program instructions in electronically, electromagnetically, or optically accessible memory. This contrasts with systems that stored the program instructions with plugboards or similar mechanisms. The definition is often extended with the requirement that the treatment of programs and data in memory be interchangeable or uniform. Description In principle, stored-program computers have been designed with various architectural characteristics. A computer with a von Neumann architecture stores program data and instruction data in the same memory, while a computer with a Harvard architecture has separate memories for storing program and data. However, the term ''stored-program computer'' is sometimes used as a synonym for the von Neumann architecture. Jack Copeland considers that it is "historically inappropriate, to refer to electronic stored-program digital computers as 'von Neumann machines. Hennessy and Patterson wrote th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Bus (computing)

In computer architecture, a bus (historically also called a data highway or databus) is a communication system that transfers Data (computing), data between components inside a computer or between computers. It encompasses both Computer hardware, hardware (e.g., wires, optical fiber) and software, including communication protocols. At its core, a bus is a shared physical pathway, typically composed of wires, traces on a circuit board, or busbars, that allows multiple devices to communicate. To prevent conflicts and ensure orderly data exchange, buses rely on a communication protocol to manage which device can transmit data at a given time. Buses are categorized based on their role, such as system buses (also known as internal buses, internal data buses, or memory buses) connecting the Central processing unit, CPU and Computer memory, memory. Expansion buses, also called peripheral buses, extend the system to connect additional devices, including peripherals. Examples of widely ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |