|

Null Distribution

In statistical hypothesis testing, the null distribution is the probability distribution of the test statistic when the null hypothesis is true. For example, in an F-test, the null distribution is an F-distribution. Null distribution is a tool scientists often use when conducting experiments. The null distribution is the distribution of two sets of data under a null hypothesis. If the results of the two sets of data are not outside the parameters of the expected results, then the null hypothesis is said to be true. Examples of application The null hypothesis is often a part of an experiment. The null hypothesis tries to show that among two sets of data, there is no statistical difference between the results of doing one thing as opposed to doing a different thing. For an example of this, a scientist might be trying to prove that people who walk two miles a day have healthier hearts than people who walk less than two miles a day. The scientist would use the null hypothesis to test ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistical Hypothesis Testing

A statistical hypothesis test is a method of statistical inference used to decide whether the data at hand sufficiently support a particular hypothesis. Hypothesis testing allows us to make probabilistic statements about population parameters. History Early use While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth; see . Modern origins and early controversy Modern significance testing is largely the product of Karl Pearson ( ''p''-value, Pearson's chi-squared test), William Sealy Gosset ( Student's t-distribution), and Ronald Fisher ("null hypothesis", analysis of variance, "significance test"), while hypothesis testing was developed by Jerzy Neyman and Egon Pearson (son of Karl). Ronald Fisher began his life in statistics as a Bayesian (Zabell 1992), but Fisher soon grew disenchanted with t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Mark Van Der Laan

Mark Johannes van der Laan is the Jiann-Ping Hsu/Karl E. Peace Professor of Biostatistics and Statistics at the University of California, Berkeley. He has made contributions to survival analysis, semiparametric statistics, multiple testing, and causal inference. He also developed the targeted maximum likelihood estimation methodology. He is a founding editor of the Journal of Causal Inference. Education He received his Ph.D. from Utrecht University in 1993 with a dissertation titled "Efficient and Inefficient Estimation in Semiparametric Models". Career and research He received the COPSS Presidents' Award The COPSS Presidents' Award is given annually by the Committee of Presidents of Statistical Societies to a young statistician in recognition of outstanding contributions to the profession of statistics. The COPSS Presidents' Award is generally ... in 2005, the Mortimer Spiegelman Award in 2004, and the van Dantzig Award in 2005. Publications * * * References ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Bayesian Probability

Bayesian probability is an Probability interpretations, interpretation of the concept of probability, in which, instead of frequentist probability, frequency or propensity probability, propensity of some phenomenon, probability is interpreted as reasonable expectation representing a state of knowledge or as quantification of a personal belief. The Bayesian interpretation of probability can be seen as an extension of propositional logic that enables reasoning with Hypothesis, hypotheses; that is, with propositions whose truth value, truth or falsity is unknown. In the Bayesian view, a probability is assigned to a hypothesis, whereas under frequentist inference, a hypothesis is typically tested without being assigned a probability. Bayesian probability belongs to the category of evidential probabilities; to evaluate the probability of a hypothesis, the Bayesian probabilist specifies a prior probability. This, in turn, is then updated to a posterior probability in the light of new, re ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Trevor Hastie

Trevor John Hastie (born 27 June 1953) is an American statistician and computer scientist. He is currently serving as the John A. Overdeck Professor of Mathematical Sciences and Professor of Statistics at Stanford University. Hastie is known for his contributions to applied statistics, especially in the field of machine learning, data mining, and bioinformatics. He has authored several popular books in statistical learning, including ''The Elements of Statistical Learning: Data Mining, Inference, and Prediction''. Hastie has been listed as an ISI Highly Cited Author in Mathematics by the ISI Web of Knowledge. Education and career Hastie was born on 27 June 1953 in South Africa. He received his B.S. in statistics from the Rhodes University in 1976 and master's degree from University of Cape Town in 1979. Hastie joined the doctoral program at Stanford University in 1980 and received his Ph.D. in 1984 under the supervision of Werner Stuetzle. His dissertation was "Principal Curves ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Bradley Efron

Bradley Efron (; born May 24, 1938) is an American statistician. Efron has been president of the American Statistical Association (2004) and of the Institute of Mathematical Statistics (1987–1988).Cochran, J. (1 September 2015), "ASA Leaders Reminisce: Brad Efron", ''Amstat News''. He is a past editor (for theory and methods) of the ''Journal of the American Statistical Association'', and he is the founding editor of the '' Annals of Applied Statistics''. Efron is also the recipient of many awards (see below). Efron is especially known for proposing the bootstrap resampling technique, which has had a major impact in the field of statistics and virtually every area of statistical application. The bootstrap was one of the first computer-intensive statistical techniques, replacing traditional algebraic derivations with data-based computer simulations. Life and career Efron was born in St. Paul, Minnesota in May 1938, the son of Russian Jewish immigrants Esther and Miles E ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Maximum Likelihood Estimation

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data. This is achieved by maximizing a likelihood function so that, under the assumed statistical model, the observed data is most probable. The point in the parameter space that maximizes the likelihood function is called the maximum likelihood estimate. The logic of maximum likelihood is both intuitive and flexible, and as such the method has become a dominant means of statistical inference. If the likelihood function is differentiable, the derivative test for finding maxima can be applied. In some cases, the first-order conditions of the likelihood function can be solved analytically; for instance, the ordinary least squares estimator for a linear regression model maximizes the likelihood when all observed outcomes are assumed to have Normal distributions with the same variance. From the perspective of Bayesian inference, M ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistical Power

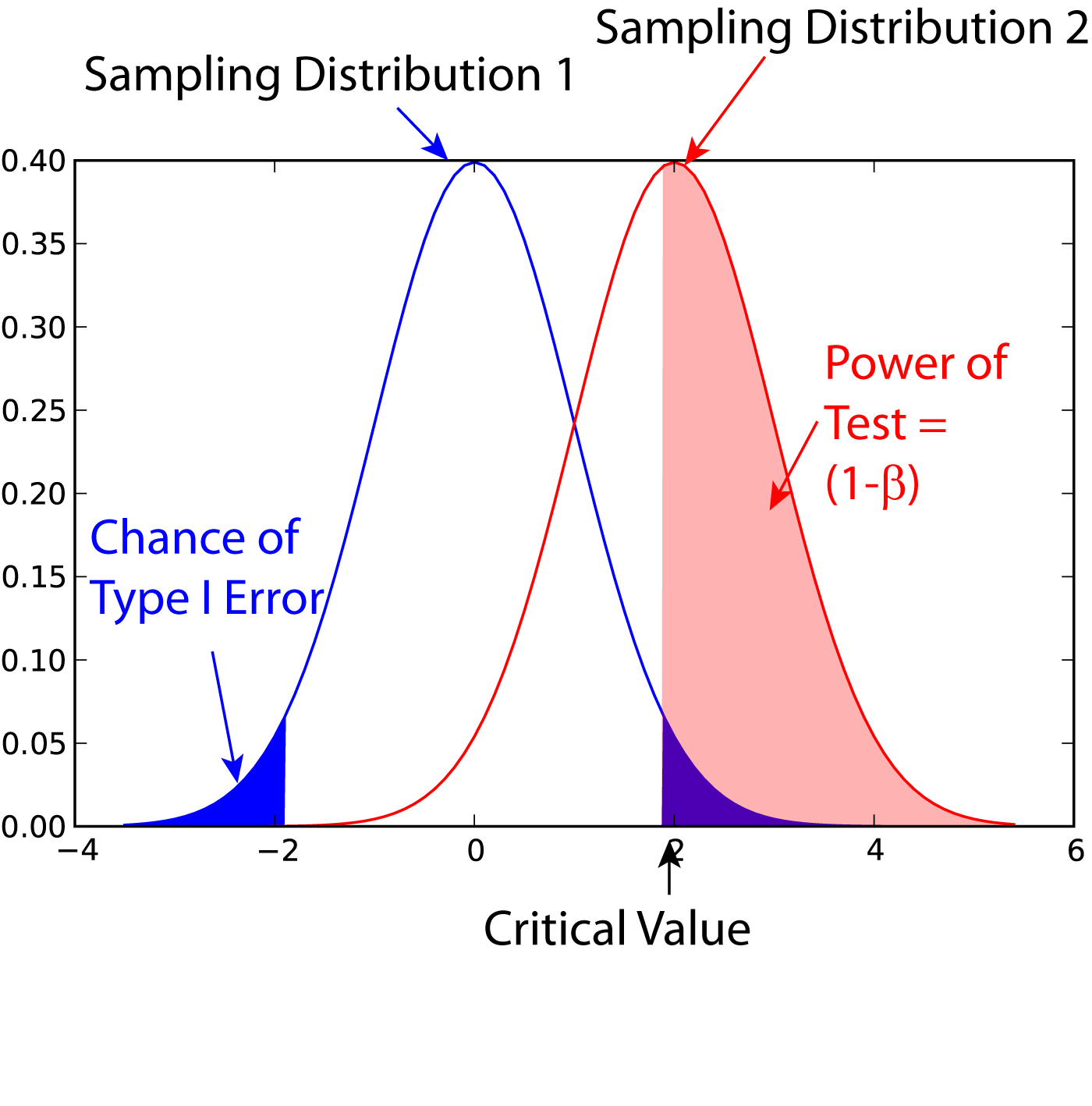

In statistics, the power of a binary hypothesis test is the probability that the test correctly rejects the null hypothesis (H_0) when a specific alternative hypothesis (H_1) is true. It is commonly denoted by 1-\beta, and represents the chances of a true positive detection conditional on the actual existence of an effect to detect. Statistical power ranges from 0 to 1, and as the power of a test increases, the probability \beta of making a type II error by wrongly failing to reject the null hypothesis decreases. Notation This article uses the following notation: * ''β'' = probability of a Type II error, known as a "false negative" * 1 − ''β'' = probability of a "true positive", i.e., correctly rejecting the null hypothesis. "1 − ''β''" is also known as the power of the test. * ''α'' = probability of a Type I error, known as a "false positive" * 1 − ''α'' = probability of a "true negative", i.e., correctly not rejecting the null hypothesis Description For a ty ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Type I Error

In statistical hypothesis testing, a type I error is the mistaken rejection of an actually true null hypothesis (also known as a "false positive" finding or conclusion; example: "an innocent person is convicted"), while a type II error is the failure to reject a null hypothesis that is actually false (also known as a "false negative" finding or conclusion; example: "a guilty person is not convicted"). Much of statistical theory revolves around the minimization of one or both of these errors, though the complete elimination of either is a statistical impossibility if the outcome is not determined by a known, observable causal process. By selecting a low threshold (cut-off) value and modifying the alpha (α) level, the quality of the hypothesis test can be increased. The knowledge of type I errors and type II errors is widely used in medical science, biometrics and computer science. Intuitively, type I errors can be thought of as errors of ''commission'', i.e. the researcher unluck ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Bootstrapping (statistics)

Bootstrapping is any test or metric that uses random sampling with replacement (e.g. mimicking the sampling process), and falls under the broader class of resampling methods. Bootstrapping assigns measures of accuracy (bias, variance, confidence intervals, prediction error, etc.) to sample estimates.software This technique allows estimation of the sampling distribution of almost any statistic using random sampling methods. Bootstrapping estimates the properties of an (such as its ) by measuring those properties when sampling from an approximating distribution. One standard choice for an a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Sandrine Dudoit

Sandrine Dudoit is a professor of statistics and public health at the University of California, Berkeley. Her research applies statistics to microarray and genetic data; she is known as one of the founders of the open-source Bioconductor project for the development of bioinformatics software. Education and career Dudoit studied for the French baccalauréat in mathematics and physical sciences at the in Paris. She moved to Canada for her undergraduate studies, completing a bachelor's degree in mathematics in 1992 at Carleton University. As a beginning graduate student in the department of statistics at the University of California, Berkeley, Dudoit was the recipient of the Gertrude Cox Scholarship of the American Statistical Association's Committee on Women in Statistics. She earned her doctorate at Berkeley in 1999. Her dissertation, ''Linkage Analysis of Complex Human Traits Using Identity by Descent Data'', was supervised by Terry Speed. Career and research After postdoctoral re ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Probability Distribution

In probability theory and statistics, a probability distribution is the mathematical function that gives the probabilities of occurrence of different possible outcomes for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space). For instance, if is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of would take the value 0.5 (1 in 2 or 1/2) for , and 0.5 for (assuming that the coin is fair). Examples of random phenomena include the weather conditions at some future date, the height of a randomly selected person, the fraction of male students in a school, the results of a survey to be conducted, etc. Introduction A probability distribution is a mathematical description of the probabilities of events, subsets of the sample space. The sample space, often denoted by \Omega, is the set of all possible outcomes of a random phe ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Type I Errors

In statistical hypothesis testing, a type I error is the mistaken rejection of an actually true null hypothesis (also known as a "false positive" finding or conclusion; example: "an innocent person is convicted"), while a type II error is the failure to reject a null hypothesis that is actually false (also known as a "false negative" finding or conclusion; example: "a guilty person is not convicted"). Much of statistical theory revolves around the minimization of one or both of these errors, though the complete elimination of either is a statistical impossibility if the outcome is not determined by a known, observable causal process. By selecting a low threshold (cut-off) value and modifying the alpha (α) level, the quality of the hypothesis test can be increased. The knowledge of type I errors and type II errors is widely used in medical science, biometrics and computer science. Intuitively, type I errors can be thought of as errors of ''commission'', i.e. the researcher unluck ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |