
Likelihood Principle

In statistics, the likelihood principle is the proposition that, given a statistical model, all the evidence in a sample relevant to model parameters is contained in the likelihood function. A likelihood function arises from a probability density function considered as a function of its distributional parameterization argument. For example, consider a model which gives the probability density function $f_X(x \mid \theta)$ of an observable random variable $X$ as a function of a parameter $\theta$. Then for a specific value $x$ of $X$, the function $\mathcal{L}(\theta \mid x) = f_X(x \mid \theta)$ is a likelihood function of $\theta$: it gives a measure of how "likely" any particular value of $\theta$ is, if we know that $X$ has the value $x$. The density function may be a density with respect to counting measure, i.e. a probability mass function. Two likelihood functions are ''equivalent'' if one is a scalar multiple of the other. The like ...
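The notion of equivalent likelihood functions can be illustrated with a small sketch. The scenario below is an assumption chosen for illustration and is not taken from the text above: 3 successes are observed in 12 Bernoulli trials, either with the number of trials fixed in advance (binomial sampling) or with sampling stopped at the third success (negative binomial sampling). The function names are hypothetical.

```python
from math import comb

def binomial_likelihood(theta, n=12, k=3):
    # Likelihood of theta when n trials were fixed in advance and k successes seen.
    return comb(n, k) * theta**k * (1 - theta)**(n - k)

def negative_binomial_likelihood(theta, n=12, k=3):
    # Likelihood of theta when sampling stopped at the k-th success,
    # which happened to occur on trial n.
    return comb(n - 1, k - 1) * theta**k * (1 - theta)**(n - k)

# The ratio of the two likelihoods does not depend on theta, so each
# function is a scalar multiple of the other.
thetas = [0.1, 0.25, 0.5, 0.9]
ratios = [binomial_likelihood(t) / negative_binomial_likelihood(t) for t in thetas]
print(ratios)
```

Because the ratio is the same constant for every value of $\theta$, the two likelihood functions are equivalent in the sense defined above, even though the sampling schemes differ.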

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling as ...

Bayes Factor

The Bayes factor is a ratio of two competing statistical models represented by their marginal likelihoods, and is used to quantify the support for one model over the other. The models in question can have a common set of parameters, such as a null hypothesis and an alternative, but this is not necessary; for instance, the comparison could also be between a non-linear model and its linear approximation. The Bayes factor can be thought of as a Bayesian analog to the likelihood-ratio test, but since it uses the (integrated) marginal likelihood instead of the maximized likelihood, the two tests coincide only under simple hypotheses (e.g., two specific parameter values). Also, in contrast with null hypothesis significance testing, Bayes factors support evaluation of evidence ''in favor'' of a null hypothesis, rather than only allowing the null to be rejected or not rejected. Although conceptually simple, the computation of the Bayes factor can be challenging depending on the complexity of the model ...
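The ratio of marginal likelihoods can be sketched for a toy problem. Everything specific here is an assumption made for illustration: the data (5 heads in 10 coin flips), the point null model $\theta = 0.5$, the alternative with a uniform prior on $\theta$, and the midpoint-rule integration, which stands in for whatever integration method a real analysis would use.

```python
from math import comb

def binomial_pmf(k, n, theta):
    # Probability of k successes in n trials with success probability theta.
    return comb(n, k) * theta**k * (1 - theta)**(n - k)

def marginal_likelihood_uniform_prior(k, n, steps=10_000):
    # Integrate the likelihood over theta in (0, 1) against a uniform prior,
    # using the midpoint rule (an illustrative choice of integrator).
    width = 1.0 / steps
    return sum(binomial_pmf(k, n, (i + 0.5) * width) for i in range(steps)) * width

n, k = 10, 5                                   # hypothetical data
m0 = binomial_pmf(k, n, 0.5)                   # marginal likelihood, point null
m1 = marginal_likelihood_uniform_prior(k, n)   # marginal likelihood, alternative
bf01 = m0 / m1                                 # Bayes factor in favor of the null
print(m0, m1, bf01)
```

For this uniform prior the integral has the closed form $1/(n+1)$, which gives a quick check on the numerical result. A value of `bf01` above 1 indicates that these data support the null model over the alternative, something a significance test alone cannot express.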

Conditionality Principle

The conditionality principle is a Fisherian principle of statistical inference that Allan Birnbaum formally defined and studied in his 1962 JASA article. Informally, the conditionality principle can be taken as the claim that experiments which were not actually performed are statistically irrelevant. Together with the sufficiency principle, Birnbaum's version of the principle implies the famous likelihood principle. Although the relevance of the proof to data analysis remains controversial among statisticians, many Bayesians and likelihoodists consider the likelihood principle foundational for statistical inference.

Formulation

The conditionality principle makes an assertion about an experiment ''E'' that can be described as a mixture of several component experiments ''E''<sub>''h''</sub>, where ''h'' is an ancillary statistic. An ancillary statistic is a measure of a sample whose distribution (or whose pmf or pdf) does not depend on the parameters of the model. An ancillary statistic is a ...

Allan Birnbaum

Allan Birnbaum (May 27, 1923 – July 1, 1976) was an American statistician who contributed to statistical inference, foundations of statistics, statistical genetics, statistical psychology, and history of statistics.

Life and career

Birnbaum was born in San Francisco. His parents were Russian-born Orthodox Jews. He studied mathematics at the University of California, Berkeley, doing a premedical programme at the same time. After taking a bachelor's degree in mathematics in 1945, he spent two years doing graduate courses in science, mathematics and philosophy, planning perhaps a career in the philosophy of science. One of his philosophy teachers, Hans Reichenbach, suggested he combine philosophy with science. He went to Columbia University to do a PhD with Abraham Wald but, when Wald died in a plane crash, Birnbaum asked Erich Leo Lehmann, who was visiting Columbia, to take him on. Birnbaum's thesis and his early work were very much in the spirit of Lehmann's classic text ''Testi ...

Philosophy Of Science

Philosophy of science is a branch of philosophy concerned with the foundations, methods, and implications of science. The central questions of this study concern what qualifies as science, the reliability of scientific theories, and the ultimate purpose of science. This discipline overlaps with metaphysics, ontology, and epistemology, for example, when it explores the relationship between science and truth. Philosophy of science focuses on metaphysical, epistemic and semantic aspects of science. Ethical issues such as bioethics and scientific misconduct are often considered ethics or science studies rather than the philosophy of science. There is no consensus among philosophers about many of the central problems concerned with the philosophy of science, including whether science can reveal the truth about unobservable things and whether scientific reasoning can be justified at all. In addition to these general questions about science as a whole, philosophers of science consi ...

Ian Hacking

Ian MacDougall Hacking (born February 18, 1936) is a Canadian philosopher specializing in the philosophy of science. Throughout his career, he has won numerous awards, such as the Killam Prize for the Humanities and the Balzan Prize, and been a member of many prestigious groups, including the Order of Canada, the Royal Society of Canada and the British Academy.

Life

Born in Vancouver, British Columbia, Canada, he earned undergraduate degrees from the University of British Columbia (1956) and the University of Cambridge (1958), where he was a student at Trinity College. Hacking also earned his PhD at Cambridge (1962), under the direction of Casimir Lewy, a former student of Ludwig Wittgenstein. He started his teaching career as an instructor at Princeton University in 1960 but, after just one year, moved to the University of Virginia as an assistant professor. After working as a research fellow at Cambridge from 1962 to 1964, he taught at his alma mater, UBC, first as an ...

Ronald A

Ronald is a masculine given name derived from the Old Norse ''Rögnvaldr'' (Hanks; Hardcastle; Hodges (2006) p. 234; Hanks; Hodges (2003) § Ronald), or possibly from Old English ''Regenweald''. In some cases ''Ronald'' is an Anglicised form of the Gaelic ''Raghnall'', a name likewise derived from ''Rögnvaldr''. The latter name is composed of the Old Norse elements ''regin'' ("advice", "decision") and ''valdr'' ("ruler"). ''Ronald'' was originally used in England and Scotland, where Scandinavian influences were once substantial, although now the name is common throughout the English-speaking world. A short form of ''Ronald'' is ''Ron''. Pet forms of ''Ronald'' include ''Roni'' and ''Ronnie''. ''Ronalda'' and ''Rhonda'' are feminine forms of ''Ronald''. ''Rhona'', a modern name apparently only dating back to the late nineteenth century, may have originated as a feminine form of ''Ronald'' (Hanks; Hardcastle; Hodges (2006) pp ...

Maximum Likelihood

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data. This is achieved by maximizing a likelihood function so that, under the assumed statistical model, the observed data is most probable. The point in the parameter space that maximizes the likelihood function is called the maximum likelihood estimate. The logic of maximum likelihood is both intuitive and flexible, and as such the method has become a dominant means of statistical inference. If the likelihood function is differentiable, the derivative test for finding maxima can be applied. In some cases, the first-order conditions of the likelihood function can be solved analytically; for instance, the ordinary least squares estimator for a linear regression model maximizes the likelihood when ...
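A minimal sketch of maximizing a likelihood function, assuming a Bernoulli model and a made-up data set of ten 0/1 outcomes (both are illustrative choices, not from the text above). The log-likelihood is maximized by brute-force grid search and compared with the analytic solution of the first-order condition, which for this model is the sample mean.

```python
from math import log

# Hypothetical observations: 7 successes in 10 Bernoulli trials.
data = [1, 0, 1, 1, 0, 1, 1, 1, 0, 1]

def log_likelihood(theta, observations):
    # Sum of log-probabilities of each observation under Bernoulli(theta).
    return sum(log(theta) if x == 1 else log(1 - theta) for x in observations)

# Numerical maximization over a grid of candidate parameter values in (0, 1).
grid = [i / 1000 for i in range(1, 1000)]
theta_hat = max(grid, key=lambda t: log_likelihood(t, data))

# Analytic solution: setting the derivative of the log-likelihood to zero
# gives the sample mean.
theta_analytic = sum(data) / len(data)
print(theta_hat, theta_analytic)
```

Grid search is used here only because it is easy to read; in practice a numerical optimizer or the closed-form solution would be the usual choice.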

Significance Level

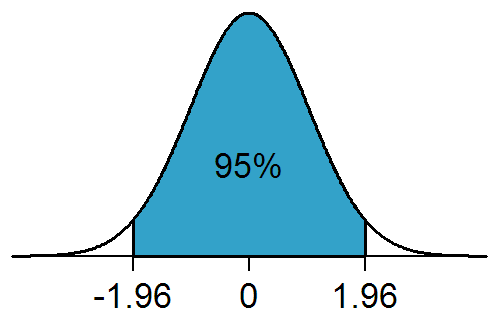

In statistical hypothesis testing, a result has statistical significance when it is very unlikely to have occurred given the null hypothesis (simply by chance alone). More precisely, a study's defined significance level, denoted by $\alpha$, is the probability of the study rejecting the null hypothesis, given that the null hypothesis is true; and the ''p''-value of a result, ''p'', is the probability of obtaining a result at least as extreme, given that the null hypothesis is true. The result is statistically significant, by the standards of the study, when $p \le \alpha$. The significance level for a study is chosen before data collection, and is typically set to 5% or much lower, depending on the field of study. In any experiment or observation that involves drawing a sample from a population, there is always the possibility that an observed effect would have occurred due to sampling error alone. But if the ''p''-value of an observed effect is less than (or equal to) the significa ...
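The decision rule $p \le \alpha$ can be sketched with an exact one-sided binomial test. The data (16 heads in 20 flips), the null value $\theta_0 = 0.5$, and the choice of a one-sided alternative are all assumptions made for illustration.

```python
from math import comb

def one_sided_p_value(k, n, theta0=0.5):
    # Probability, under the null hypothesis, of a result at least as
    # extreme as k successes in n trials (upper tail).
    return sum(
        comb(n, j) * theta0**j * (1 - theta0)**(n - j)
        for j in range(k, n + 1)
    )

alpha = 0.05                     # significance level, fixed before seeing data
p = one_sided_p_value(16, 20)    # hypothetical result: 16 heads in 20 flips
significant = p <= alpha
print(p, significant)
```

Here the upper tail contains 6196 of the $2^{20}$ equally likely outcomes, so the ''p''-value is about 0.0059 and the result is significant at the 5% level.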

Simple Hypothesis

A statistical hypothesis test is a method of statistical inference used to decide whether the data at hand sufficiently support a particular hypothesis. Hypothesis testing allows us to make probabilistic statements about population parameters.

History

Early use

While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth.

Modern origins and early controversy

Modern significance testing is largely the product of Karl Pearson (''p''-value, Pearson's chi-squared test), William Sealy Gosset (Student's t-distribution), and Ronald Fisher ("null hypothesis", analysis of variance, "significance test"), while hypothesis testing was developed by Jerzy Neyman and Egon Pearson (son of Karl). Ronald Fisher began his life in statistics as a Bayesian (Zabell 1992), but Fisher soon grew disenchanted with ...

Statistical Power

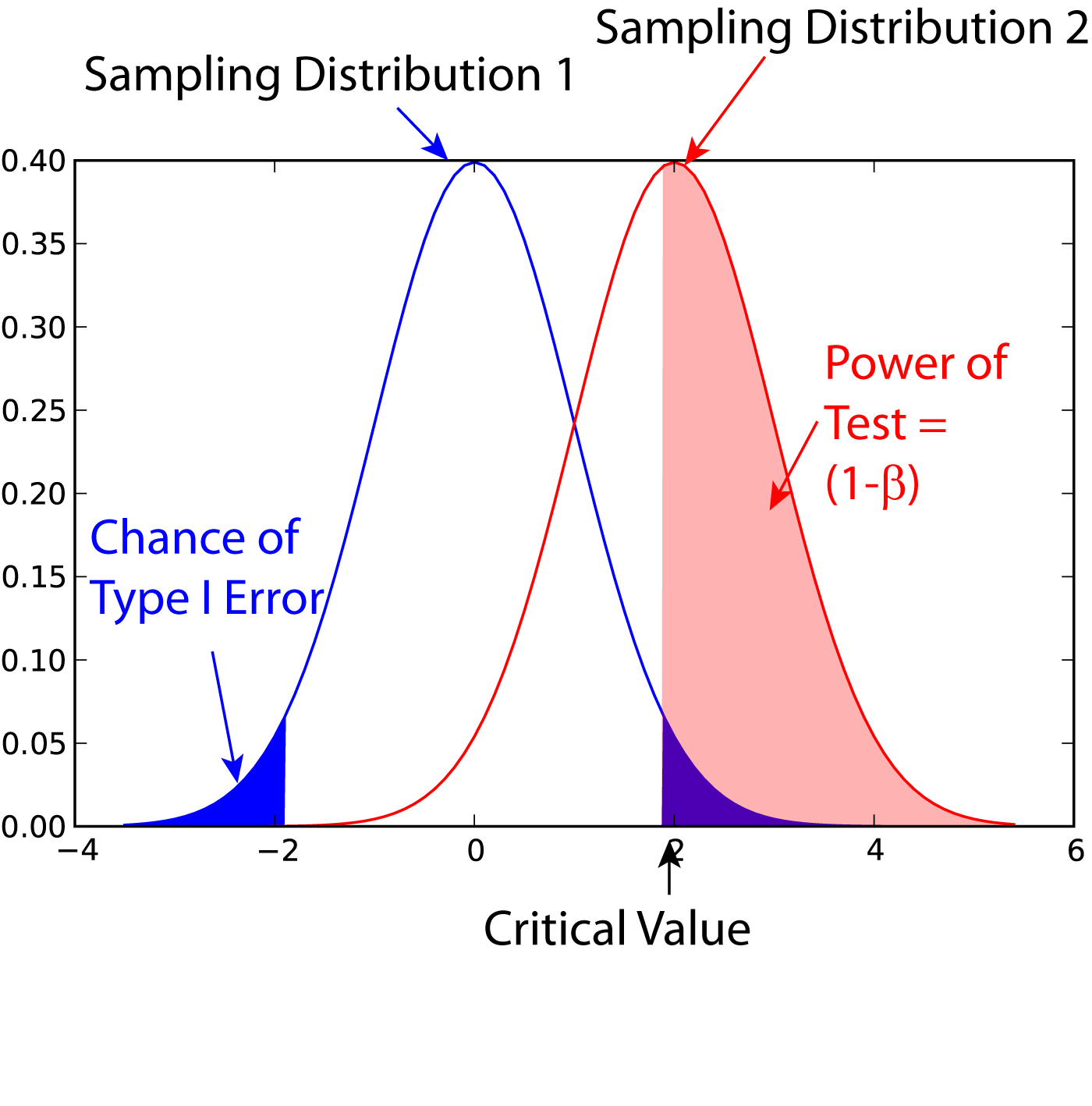

In statistics, the power of a binary hypothesis test is the probability that the test correctly rejects the null hypothesis ($H_0$) when a specific alternative hypothesis ($H_1$) is true. It is commonly denoted by $1-\beta$, and represents the chances of a true positive detection conditional on the actual existence of an effect to detect. Statistical power ranges from 0 to 1, and as the power of a test increases, the probability $\beta$ of making a type II error by wrongly failing to reject the null hypothesis decreases.

Notation

This article uses the following notation:

* ''β'' = probability of a Type II error, known as a "false negative"
* 1 − ''β'' = probability of a "true positive", i.e., correctly rejecting the null hypothesis. "1 − ''β''" is also known as the power of the test.
* ''α'' = probability of a Type I error, known as a "false positive"
* 1 − ''α'' = probability of a "true negative", i.e., correctly not rejecting the null hypothesis

Description

For a ty ...
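The relationship between ''α'', ''β'' and power can be sketched for a one-sided binomial test. The sample size (20), the null value 0.5, the specific alternative 0.8, and the helper names are assumptions made for illustration only.

```python
from math import comb

def upper_tail(c, n, theta):
    # P(X >= c) for X ~ Binomial(n, theta).
    return sum(
        comb(n, k) * theta**k * (1 - theta)**(n - k)
        for k in range(c, n + 1)
    )

def critical_value(n, theta0, alpha):
    # Smallest c such that rejecting when X >= c has Type I error <= alpha.
    for c in range(n + 2):
        if upper_tail(c, n, theta0) <= alpha:
            return c

n, theta0, theta1, alpha = 20, 0.5, 0.8, 0.05   # hypothetical test setup
c = critical_value(n, theta0, alpha)
size = upper_tail(c, n, theta0)    # actual Type I error rate of the test
power = upper_tail(c, n, theta1)   # 1 - beta: P(reject H0 | H1 true)
beta = 1 - power                   # Type II error rate
print(c, size, power, beta)
```

Because the binomial distribution is discrete, the attained Type I error rate `size` is generally below the nominal `alpha`. Raising `theta1` or `n` in this sketch increases `power` and lowers `beta`, matching the description above.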

Neyman–Pearson Lemma

In statistics, the Neyman–Pearson lemma was introduced by Jerzy Neyman and Egon Pearson in a paper in 1933. The Neyman–Pearson lemma is part of the Neyman–Pearson theory of statistical testing, which introduced concepts like errors of the second kind, power function, and inductive behavior ("The Fisher, Neyman-Pearson Theories of Testing Hypotheses: One Theory or Two?", ''Journal of the American Statistical Association'', Vol 88, No 424; Wald, Chapter II: "The Neyman-Pearson Theory of Testing a Statistical Hypothesis"; ''The Empire of Chance''). The previous Fisherian theory of significance testing postulated only one hypothesis. By introducing a competing hypothesis, the Neyman–Pearsonian flavor of statistical testing allows investigating the two ...