
Lossless

Lossless compression is a class of data compression that allows the original data to be perfectly reconstructed from the compressed data with no loss of information. Lossless compression is possible because most real-world data exhibits statistical redundancy. By contrast, lossy compression permits reconstruction only of an approximation of the original data, though usually with greatly improved compression rates (and therefore reduced media sizes). By the pigeonhole principle, no lossless compression algorithm can shrink the size of all possible data: some data will get longer by at least one symbol or bit. Compression algorithms are usually effective for human- and machine-readable documents and cannot shrink the size of random data that contain no redundancy. Different algorithms exist that are designed either with a specific type of input data in mind or with specific assumptions about what kinds of redundancy the uncompressed data are likely to contain. Lo ...
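The pigeonhole argument, and the contrast between redundant and random input, can be checked with a short Python sketch. It assumes Python 3.9+ (for `random.Random.randbytes`) and uses the standard-library `zlib` module (DEFLATE) only as a stand-in for a generic lossless compressor; the helper name and sample inputs are illustrative.

```python
import random
import zlib

def count_shorter_strings(n: int) -> int:
    """Number of bit strings of length 0..n-1: 2^0 + ... + 2^(n-1) = 2^n - 1."""
    return sum(2 ** k for k in range(n))

# Pigeonhole: 2^n inputs of length n, but only 2^n - 1 strictly shorter outputs,
# so no lossless (one-to-one) compressor can shrink every n-bit input.
n = 16
assert count_shorter_strings(n) == 2 ** n - 1 < 2 ** n

redundant = b"abc" * 1000                   # highly repetitive input
noise = random.Random(0).randbytes(3000)    # pseudo-random input, little redundancy

for name, data in [("redundant", redundant), ("random", noise)]:
    packed = zlib.compress(data, 9)
    assert zlib.decompress(packed) == data  # lossless: exact reconstruction
    print(name, len(data), "->", len(packed))
```

The repetitive input shrinks dramatically, while the pseudo-random input comes out slightly larger because of format overhead.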

TIFF

Tag Image File Format or Tagged Image File Format, commonly known by the abbreviations TIFF or TIF, is an image file format for storing raster graphics images, popular among graphic artists, the publishing industry, and photographers. TIFF is widely supported by scanning, faxing, word processing, optical character recognition, image manipulation, desktop publishing, and page-layout applications. The format was created by the Aldus Corporation for use in desktop publishing. Aldus published the latest version, 6.0, in 1992; it was subsequently updated with an Adobe Systems copyright after Adobe acquired Aldus in 1994. Several Aldus or Adobe technical notes have been published with minor extensions to the format, and several specifications have been based on TIFF 6.0, including TIFF/EP (ISO 12234-2), TIFF/IT (ISO 12639), TIFF-F (RFC 2306) and TIFF-FX (RFC 3949).

History

TIFF was created as an attempt to get desktop scanner vendors of the mid-1980s to agree on a common scanned image f ...

Data Compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy; no information is lost. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding is done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, used for error detection and correction, or line coding, the means for mapping data onto a sig ...
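To make the encoder/decoder pairing concrete, here is a minimal sketch using run-length encoding, chosen only because it is one of the simplest lossless schemes (it is not named in the text above). The function names `rle_encode` and `rle_decode` are hypothetical.

```python
from itertools import groupby

def rle_encode(data: str) -> list[tuple[str, int]]:
    """Encoder: replace each run of identical symbols with (symbol, run_length)."""
    return [(symbol, len(list(run))) for symbol, run in groupby(data)]

def rle_decode(pairs: list[tuple[str, int]]) -> str:
    """Decoder: expand each (symbol, run_length) pair back into the original run."""
    return "".join(symbol * count for symbol, count in pairs)

encoded = rle_encode("WWWWWWBBBWWWW")
print(encoded)  # [('W', 6), ('B', 3), ('W', 4)]
assert rle_decode(encoded) == "WWWWWWBBBWWWW"
```

Because the decoder exactly inverts the encoder, this pair is lossless; it only saves space when the input actually contains long runs.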

Audio Compression (data)

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy; no information is lost. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding is done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, used for error detection and correction, or line coding, the means ...

Lossy Compression

In information technology, lossy compression or irreversible compression is the class of data compression methods that use inexact approximations and partial data discarding to represent the content. These techniques are used to reduce data size for storing, handling, and transmitting content. Higher degrees of approximation create coarser images as more detail is removed. This is opposed to lossless data compression (reversible data compression), which does not degrade the data. The amount of data reduction possible using lossy compression is much higher than using lossless techniques. Well-designed lossy compression technology often reduces file sizes significantly before the end user notices any degradation. Even when the degradation is noticeable, further data reduction may be desirable (e.g., for real-time communication or to reduce transmission times or storage needs). The most widely used lossy compression algorithm is the discrete cosine transform (DCT), first published by N ...
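The trade-off between approximation and data reduction can be illustrated with uniform scalar quantization, used here as a simple stand-in and not as the DCT mentioned above. In this sketch (function names and sample values are hypothetical), a larger step size discards more detail: fewer distinct values need to be stored, but the reconstruction error grows and the discarded detail cannot be recovered.

```python
def quantize(samples: list[float], step: float) -> list[int]:
    """Lossy encoder: map each sample to the index of the nearest multiple of step."""
    return [round(x / step) for x in samples]

def dequantize(indices: list[int], step: float) -> list[float]:
    """Decoder: rebuild an approximation; the rounding error is gone for good."""
    return [i * step for i in indices]

def max_error(original: list[float], approx: list[float]) -> float:
    """Largest absolute difference between original and reconstructed samples."""
    return max(abs(x - y) for x, y in zip(original, approx))

signal = [0.12, 0.47, 0.93, 0.31, -0.58, -0.06]
for step in (0.1, 0.5):
    indices = quantize(signal, step)
    approx = dequantize(indices, step)
    # Coarser steps -> fewer distinct indices to store, but a larger error.
    print(step, len(set(indices)), max_error(signal, approx))
```

For uniform quantization with rounding, the per-sample error is at most half the step size.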

Portable Network Graphics

Portable Network Graphics (PNG, officially pronounced /pɪŋ/, colloquially /ˌpiːɛnˈdʒiː/) is a raster-graphics file format that supports lossless data compression. PNG was developed as an improved, non-patented replacement for Graphics Interchange Format (GIF). PNG supports palette-based images (with palettes of 24-bit RGB or 32-bit RGBA colors), grayscale images (with or without an alpha channel for transparency), and full-color non-palette-based RGB or RGBA images. The PNG working group designed the format for transferring images on the Internet, not for professional-quality print graphics; therefore, non-RGB color spaces such as CMYK are not supported. A PNG file contains a single image in an extensible structure of chunks, encoding the basic pixels and other information such as textual comments and integrity checks documented in Request for Comments ...
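The chunk structure and its integrity check can be sketched in Python with only the standard library. Per the PNG specification, each chunk is a 4-byte big-endian data length, a 4-byte type, the data, and a CRC-32 computed over the type and data; `zlib.crc32` uses the same CRC-32 polynomial. The helper names are hypothetical, and the sketch builds a lone `tEXt` (textual comment) chunk rather than a complete PNG file.

```python
import struct
import zlib

def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Serialize one chunk: length, type, data, then CRC-32 over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)

def read_chunk(buf: bytes, pos: int) -> tuple[bytes, bytes, int]:
    """Parse the chunk at pos; return (type, data, next_pos), raising on CRC mismatch."""
    (length,) = struct.unpack_from(">I", buf, pos)
    chunk_type = buf[pos + 4 : pos + 8]
    data = buf[pos + 8 : pos + 8 + length]
    (crc,) = struct.unpack_from(">I", buf, pos + 8 + length)
    if zlib.crc32(chunk_type + data) & 0xFFFFFFFF != crc:
        raise ValueError(f"CRC mismatch in {chunk_type!r} chunk")
    return chunk_type, data, pos + 12 + length

# A tEXt chunk holds a Latin-1 keyword, a NUL separator, and the comment text.
text_chunk = make_chunk(b"tEXt", b"Comment\x00made by a sketch")
ctype, cdata, end = read_chunk(text_chunk, 0)
print(ctype, cdata.split(b"\x00"))
```

Flipping any single bit in the type or data makes `read_chunk` reject the chunk, which is the integrity check at work.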

Bit Rate

In telecommunications and computing, bit rate (also bitrate, or as a variable R) is the number of bits that are conveyed or processed per unit of time. The bit rate is expressed in the unit bit per second (symbol: bit/s), often in conjunction with an SI prefix such as kilo (1 kbit/s = 1,000 bit/s), mega (1 Mbit/s = 1,000 kbit/s), giga (1 Gbit/s = 1,000 Mbit/s) or tera (1 Tbit/s = 1,000 Gbit/s). The non-standard abbreviation bps is often used in place of the standard symbol bit/s, so that, for example, 1 Mbps means one million bits per second. In most computing and digital communication environments, one byte per second (symbol: B/s) corresponds roughly to 8 bit/s. However, if start bits, stop bits, and parity bits need to be factored in, more bits per second are required to achieve a throughput of the same number of bytes.

Prefixes

When quantifying large or small bit rates, SI ...
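The framing overhead can be worked through with a little arithmetic. This sketch assumes asynchronous serial framing with one start bit, eight data bits, no parity, and one stop bit (often written 8N1), so each byte costs 10 bits on the wire; the 9,600 bit/s rate and the helper names are illustrative.

```python
# Decimal SI prefixes, as used for bit rates (1 kbit/s = 1,000 bit/s).
SI_PREFIX = {"k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12}

def to_bits_per_second(value: float, prefix: str = "") -> float:
    """Convert e.g. (1, 'M') to 1,000,000 bit/s; an empty prefix means plain bit/s."""
    return value * SI_PREFIX.get(prefix, 1)

def byte_throughput(bit_rate: float, data_bits: int = 8, start_bits: int = 0,
                    stop_bits: int = 0, parity_bits: int = 0) -> float:
    """Bytes per second when each byte is framed with extra start/stop/parity bits."""
    bits_per_byte = data_bits + start_bits + stop_bits + parity_bits
    return bit_rate / bits_per_byte

print(byte_throughput(9600))                             # 1200.0 (no framing)
print(byte_throughput(9600, start_bits=1, stop_bits=1))  # 960.0 (8N1-style framing)
```

With framing, the same line rate delivers 20% fewer bytes per second in this example.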

JPEG2000

JPEG 2000 (JP2) is an image compression standard and coding system. It was developed from 1997 to 2000 by a Joint Photographic Experts Group committee chaired by Touradj Ebrahimi (later the JPEG president), with the intention of superseding the group's original JPEG standard (created in 1992), which is based on a discrete cosine transform (DCT), with a newly designed, wavelet-based method. The standardized filename extension is .jp2 for ISO/IEC 15444-1 conforming files and .jpx for the extended part-2 specifications, published as ISO/IEC 15444-2. The MIME types for JPEG 2000 are defined in RFC 3745; the MIME type for JPEG 2000 (ISO/IEC 15444-1) is image/jp2. The JPEG 2000 project was motivated by Ricoh's submission in 1995 of the CREW (Compression with Reversible Embedded Wavelets) algorithm to the standardization effort of JPEG LS. Ultimately the LOCO-I algorithm was selected as the basis for JPEG LS, but many of the features of CREW ended up in the JPEG 2000 standard. JPEG 2000 c ...
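Reversibility is what lets a wavelet transform serve lossless coding: integer inputs map to integer coefficients that invert exactly. JPEG 2000's reversible path uses the LeGall 5/3 wavelet; the sketch below substitutes the simpler integer Haar (S) transform to show the same property in a few lines. It assumes an even-length row of integer samples, and the sample values are arbitrary.

```python
def s_transform(signal: list[int]) -> tuple[list[int], list[int]]:
    """One level of the integer Haar (S) transform on an even-length input."""
    low, high = [], []
    for a, b in zip(signal[0::2], signal[1::2]):
        d = a - b             # highpass (detail) coefficient
        s = b + (d >> 1)      # equals floor((a + b) / 2): lowpass (approximation)
        low.append(s)
        high.append(d)
    return low, high

def inverse_s_transform(low: list[int], high: list[int]) -> list[int]:
    """Undo the transform exactly, using only integer arithmetic."""
    out = []
    for s, d in zip(low, high):
        b = s - (d >> 1)
        a = d + b
        out.extend([a, b])
    return out

row = [52, 55, 61, 66, 70, 61, 64, 73]
low, high = s_transform(row)
print(low, high)  # [53, 63, 65, 68] [-3, -5, 9, -9]
assert inverse_s_transform(low, high) == row
```

Python's `>>` floors for negative integers, which keeps the forward and inverse steps consistent.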

LZ77 And LZ78

LZ77 and LZ78 are the two lossless data compression algorithms published in papers by Abraham Lempel and Jacob Ziv in 1977 and 1978. They are also known as Lempel-Ziv 1 (LZ1) and Lempel-Ziv 2 (LZ2) respectively. These two algorithms form the basis for many variations including LZW, LZSS, LZMA and others. Besides their academic influence, these algorithms formed the basis of several ubiquitous compression schemes, including GIF and the DEFLATE algorithm used in PNG and ZIP. Both are, in theory, dictionary coders. LZ77 maintains a sliding window during compression. This was later shown to be equivalent to the explicit dictionary constructed by LZ78; however, they are only equivalent when the entire data is intended to be decompressed. Since LZ77 encodes and decodes from a sliding window over previously seen characters, decompression must always start at the beginning of the input. Conceptually, LZ78 decompression could allow random access to the input if the en ...
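A toy LZ77 coder shows the sliding window and why decoding must start at the beginning: every (offset, length) pair copies symbols that were already decoded earlier in the output. The window size, maximum match length, and (offset, length, next_char) triple format below are hypothetical simplifications; practical formats such as DEFLATE use different limits and entropy-code the result.

```python
def lz77_encode(data: str, window: int = 255, max_len: int = 15) -> list[tuple[int, int, str]]:
    """Emit (offset, length, next_char) triples; offset counts back from the current position."""
    i, out = 0, []
    while i < len(data):
        best_off, best_len = 0, 0
        for j in range(max(0, i - window), i):  # candidate match starts inside the window
            length = 0
            # Leave one symbol for next_char; matches may overlap position i.
            while (length < max_len and i + length < len(data) - 1
                   and data[j + length] == data[i + length]):
                length += 1
            if length > best_len:
                best_off, best_len = i - j, length
        out.append((best_off, best_len, data[i + best_len]))
        i += best_len + 1
    return out

def lz77_decode(triples: list[tuple[int, int, str]]) -> str:
    """Rebuild the text; each copy reads from output that was already decoded."""
    buf: list[str] = []
    for off, length, ch in triples:
        start = len(buf) - off
        for k in range(length):
            buf.append(buf[start + k])  # symbol-by-symbol copy handles overlapping matches
        buf.append(ch)
    return "".join(buf)

triples = lz77_encode("abababab")
print(triples)  # [(0, 0, 'a'), (0, 0, 'b'), (2, 5, 'b')]
assert lz77_decode(triples) == "abababab"
```

The third triple copies five symbols from only two positions back, an overlapping match that the decoder resolves one symbol at a time.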

Joint Encoding

In audio engineering, joint encoding is the joining of several channels of similar information during encoding in order to obtain higher quality, a smaller file size, or both.

Joint stereo

The term joint stereo has become prominent as the Internet has allowed for the transfer of relatively low bit rate, acceptable-quality audio with modest Internet access speeds. Joint stereo refers to any number of encoding techniques used for this purpose. Two forms are described here, both of which are implemented in various ways with different codecs, such as MP3, AAC and Ogg Vorbis.

Intensity stereo coding

This form of joint stereo uses a technique known as joint frequency encoding, which functions on the principle of sound localization. Human hearing is generally less acute at perceiving the direction of certain audio frequencies. By exploiting this characteristic, intensity stereo coding can reduce the data rate of an audio stream with little or no perceived change in apparent qualit ...
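A much-simplified intensity stereo coder can be sketched as follows: both channels are replaced by one shared signal plus a per-channel gain that preserves each channel's energy. Real codecs apply this per frequency band on transformed coefficients, typically in the higher bands; here a single block of time samples stands in for one band, and the function names are hypothetical.

```python
import math

def intensity_encode(left: list[float], right: list[float]) -> tuple[list[float], float, float]:
    """Keep one mono signal plus a gain per channel that preserves that channel's energy."""
    mono = [(l + r) / 2 for l, r in zip(left, right)]
    mono_energy = sum(m * m for m in mono)
    if mono_energy == 0:  # e.g. perfectly out-of-phase channels cancel: nothing to scale
        return mono, 0.0, 0.0
    gain_l = math.sqrt(sum(l * l for l in left) / mono_energy)
    gain_r = math.sqrt(sum(r * r for r in right) / mono_energy)
    return mono, gain_l, gain_r

def intensity_decode(mono: list[float], gain_l: float, gain_r: float) -> tuple[list[float], list[float]]:
    """Rebuild both channels as scaled copies of the shared signal."""
    return [gain_l * m for m in mono], [gain_r * m for m in mono]

left = [0.5, -0.25, 1.0, 0.0]
right = [0.5 * x for x in left]  # same waveform, quieter: the ideal case
print(intensity_decode(*intensity_encode(left, right)))
```

When one channel is a scaled copy of the other, reconstruction is exact (up to floating-point rounding); when the waveforms differ, only each channel's energy survives, which is why the technique is lossy.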

Delta Encoding

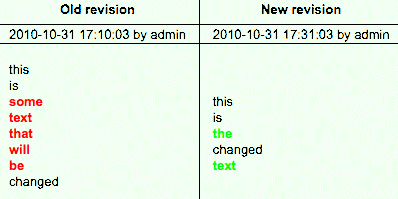

Delta encoding is a way of storing or transmitting data in the form of differences (deltas) between sequential data rather than complete files; more generally this is known as data differencing. Delta encoding is sometimes called delta compression, particularly where archival histories of changes are required (e.g., in revision control software). The differences are recorded in discrete files called "deltas" or "diffs". In situations where differences are small (for example, the change of a few words in a large document or the change of a few records in a large table), delta encoding greatly reduces data redundancy. Collections of unique deltas are substantially more space-efficient than their non-encoded equivalents. From a logical point of view, the difference between two data values is the information required to obtain one value from the other; see relative entropy. The difference between identical values (under some equivalence) is often called 0 or the ...
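For numeric sequences, delta encoding and its inverse take only a few lines. This sketch keeps the first value as-is and then stores successive differences; decoding is a running sum. The function names and sample readings are hypothetical.

```python
from itertools import accumulate

def delta_encode(values: list[int]) -> list[int]:
    """Store the first value, then each value's difference from its predecessor."""
    return values[:1] + [b - a for a, b in zip(values, values[1:])]

def delta_decode(deltas: list[int]) -> list[int]:
    """A running sum of the deltas restores the original sequence."""
    return list(accumulate(deltas))

readings = [1000, 1002, 1003, 1003, 1001, 1004]
deltas = delta_encode(readings)
print(deltas)  # [1000, 2, 1, 0, -2, 3]
assert delta_decode(deltas) == readings
```

The small deltas carry the same information as the large readings but are cheaper to store once a later stage encodes small numbers compactly.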

Graphics Interchange Format

The Graphics Interchange Format (GIF; /ɡɪf/ or /dʒɪf/) is a bitmap image format that was developed by a team at the online services provider CompuServe led by American computer scientist Steve Wilhite and released on June 15, 1987. The format can contain up to 8 bits per pixel, allowing a single image to reference its own palette of up to 256 different colors chosen from the 24-bit RGB color space. It can also represent multiple images in a file, which can be used for animations, and allows a separate palette of up to 256 colors for each frame. These palette limitations make GIF less suitable for reproducing color photographs and other images with color gradients but well-suited for simpler images such as graphics or logos with solid areas of color. GIF images are compressed using the Lempel–Ziv–Welch (LZW) lossless data compression technique to reduce the file size without degrading the visual quality. While once in widespread usage on the World Wide Web because of i ...
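The core of the LZW technique can be sketched in Python. This version starts from a 256-entry dictionary of single bytes and emits plain integer codes; GIF additionally uses clear and end-of-information codes, variable-width codes capped at 12 bits, and packs the output into data sub-blocks, none of which are modeled here. The function names are hypothetical.

```python
def lzw_encode(data: bytes) -> list[int]:
    """LZW: the dictionary starts with all single bytes and grows as input is read."""
    table = {bytes([i]): i for i in range(256)}
    current = b""
    codes = []
    for byte in data:
        candidate = current + bytes([byte])
        if candidate in table:
            current = candidate            # keep extending the longest known string
        else:
            codes.append(table[current])   # emit the code for the longest match
            table[candidate] = len(table)  # remember the new, one-byte-longer string
            current = bytes([byte])
    if current:
        codes.append(table[current])
    return codes

def lzw_decode(codes: list[int]) -> bytes:
    """Rebuild the same dictionary on the fly, one step behind the encoder."""
    if not codes:
        return b""
    table = {i: bytes([i]) for i in range(256)}
    prev = table[codes[0]]
    out = [prev]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):           # code defined by this very step (e.g. "aaa...")
            entry = prev + prev[:1]
        else:
            raise ValueError(f"invalid LZW code {code}")
        out.append(entry)
        table[len(table)] = prev + entry[:1]
        prev = entry
    return b"".join(out)

sample = b"TOBEORNOTTOBEORTOBEORNOT"
codes = lzw_encode(sample)
print(len(sample), "bytes ->", len(codes), "codes")
assert lzw_decode(codes) == sample
```

The decoder never receives the dictionary; it reconstructs it from the code stream, which is what makes LZW convenient for a streaming format like GIF.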