
Cumulant

In probability theory and statistics, the cumulants of a probability distribution are a set of quantities that provide an alternative to the ''moments'' of the distribution. Any two probability distributions whose moments are identical will have identical cumulants as well, and vice versa. The first cumulant is the mean, the second cumulant is the variance, and the third cumulant is the same as the third central moment. But fourth and higher-order cumulants are not equal to central moments. In some cases theoretical treatments of problems in terms of cumulants are simpler than those using moments. In particular, when two or more random variables are statistically independent, the ''n''-th-order cumulant of their sum is equal to the sum of their ''n''-th-order cumulants. As well, the third and higher-order cumulants of a normal distribution are zero, and it is the only distribution with this property. Just as for moments, where ''joint moments'' are used for collections of random variab ...
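
The additivity property can be checked numerically on small discrete distributions. The sketch below is illustrative only: the helper names (`raw_moment`, `first_four_cumulants`, `convolve`) are hypothetical, and the conversion from raw moments to the first four cumulants uses the standard textbook formulas, which are not quoted in the summary above.

```python
from fractions import Fraction

def raw_moment(pmf, n):
    """n-th raw moment E[X^n] of a discrete distribution given as {value: probability}."""
    return sum(p * x**n for x, p in pmf.items())

def first_four_cumulants(pmf):
    """First four cumulants computed from raw moments (standard formulas)."""
    m1, m2, m3, m4 = (raw_moment(pmf, n) for n in range(1, 5))
    k1 = m1                                   # mean
    k2 = m2 - m1**2                           # variance
    k3 = m3 - 3*m2*m1 + 2*m1**3               # third central moment
    k4 = m4 - 4*m3*m1 - 3*m2**2 + 12*m2*m1**2 - 6*m1**4
    return (k1, k2, k3, k4)

def convolve(pmf_a, pmf_b):
    """Distribution of A + B for independent A and B."""
    out = {}
    for xa, pa in pmf_a.items():
        for xb, pb in pmf_b.items():
            out[xa + xb] = out.get(xa + xb, 0) + pa * pb
    return out

# Example inputs (assumed for illustration): a fair die and a fair coin.
die = {k: Fraction(1, 6) for k in range(1, 7)}
coin = {0: Fraction(1, 2), 1: Fraction(1, 2)}

cumulants_of_sum = first_four_cumulants(convolve(die, coin))
sum_of_cumulants = tuple(
    a + b for a, b in zip(first_four_cumulants(die), first_four_cumulants(coin))
)
print(cumulants_of_sum == sum_of_cumulants)
```

Exact rational arithmetic (`Fraction`) is used so the comparison does not depend on floating-point rounding.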

Variance-to-mean Ratio

In probability theory and statistics, the index of dispersion, dispersion index, coefficient of dispersion, relative variance, or variance-to-mean ratio (VMR), like the coefficient of variation, is a normalized measure of the dispersion of a probability distribution: it is a measure used to quantify whether a set of observed occurrences are clustered or dispersed compared to a standard statistical model. It is defined as the ratio of the variance \sigma^2 to the mean \mu: D = \sigma^2 / \mu. It is also known as the Fano factor, though this term is sometimes reserved for ''windowed'' data (the mean and variance are computed over a subpopulation), where the index of dispersion is used in the special case where the window is infinite. Windowing data is frequently done: the VMR is frequently computed over various intervals in time or small regions in space, which may be called "windows", and the resulting statistic called the Fano factor. It is only defined when the mean \mu is non-zero, and is ...
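
As a minimal sketch of the definition D = \sigma^2 / \mu, the function below computes the ratio for a list of observed counts. The function name `index_of_dispersion` and the sample data are assumptions made for illustration, and the population variance is used rather than the sample variance.

```python
from statistics import fmean, pvariance

def index_of_dispersion(counts):
    """Variance-to-mean ratio D = sigma^2 / mu of the observed counts."""
    mu = fmean(counts)
    if mu == 0:
        # The ratio is only defined for a non-zero mean.
        raise ValueError("index of dispersion is undefined when the mean is zero")
    return pvariance(counts, mu) / mu

# Hypothetical counts per window: evenly spread versus clustered occurrences.
evenly_spread = [2, 2, 2, 2]
clustered = [0, 4, 0, 4]

print(index_of_dispersion(evenly_spread))
print(index_of_dispersion(clustered))
```

Both samples have the same mean, so the difference in D comes entirely from how the occurrences are distributed across windows.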

Negative Binomial Distribution

In probability theory and statistics, the negative binomial distribution is a discrete probability distribution that models the number of failures in a sequence of independent and identically distributed Bernoulli trials before a specified (non-random) number of successes (denoted r) occurs. For example, we can define rolling a 6 on a die as a success, and rolling any other number as a failure, and ask how many failure rolls will occur before we see the third success (r=3). In such a case, the probability distribution of the number of failures that appear will be a negative binomial distribution. An alternative formulation is to model the number of total trials (instead of the number of failures). In fact, for a specified (non-random) number of successes (r), the number of failures (n - r) is random because the total number of trials (n) is random. For example, we could use the negative binomial distribution to model the number of days n (random) a certain machine works (specified by r) ...
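
The die example can be made concrete with a short sketch. It assumes the failures-before-the-r-th-success formulation and the standard probability mass function P(K = k) = C(k + r - 1, k) p^r (1 - p)^k; the function name `neg_binomial_pmf` is hypothetical.

```python
from fractions import Fraction
from math import comb

def neg_binomial_pmf(k, r, p):
    """Probability of exactly k failures before the r-th success."""
    return comb(k + r - 1, k) * p**r * (1 - p)**k

# Die example from the text: success = rolling a 6, stop at the third success.
r = 3
p = Fraction(1, 6)

# Probability that the first three rolls are all sixes (zero failures).
print(neg_binomial_pmf(0, r, p))

# Approximate mean number of failures, truncating the infinite sum.
mean_failures = sum(k * neg_binomial_pmf(k, r, 1 / 6) for k in range(1000))
print(mean_failures)
```

The truncated sum should land very close to r(1 - p)/p, the known mean of this formulation.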

Moment (mathematics)

In mathematics, the moments of a function are certain quantitative measures related to the shape of the function's graph. If the function represents mass density, then the zeroth moment is the total mass, the first moment (normalized by total mass) is the center of mass, and the second moment is the moment of inertia. If the function is a probability distribution, then the first moment is the expected value, the second central moment is the variance, the third standardized moment is the skewness, and the fourth standardized moment is the kurtosis. The mathematical concept is closely related to the concept of moment in physics. For a distribution of mass or probability on a bounded interval, the collection of all the moments (of all orders, from 0 to \infty) uniquely determines the distribution (Hausdorff moment problem). The same is not true on unbounded intervals (Hamburger moment problem). In the mid-nineteenth century, Pafnuty Chebyshev became the first person to think systematic ...
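
The named moments of a probability distribution can be computed directly for a small discrete example. This is a sketch under assumptions: the distribution is given as a `{value: probability}` dictionary, and the helper names `central_moment` and `standardized_moment` are hypothetical.

```python
def mean(pmf):
    """First moment (expected value) of a discrete distribution."""
    return sum(x * p for x, p in pmf.items())

def central_moment(pmf, n):
    """n-th moment about the mean."""
    mu = mean(pmf)
    return sum(p * (x - mu) ** n for x, p in pmf.items())

def standardized_moment(pmf, n):
    """n-th central moment divided by the n-th power of the standard deviation."""
    sigma = central_moment(pmf, 2) ** 0.5
    return central_moment(pmf, n) / sigma**n

# Example input (assumed): a fair six-sided die.
die = {k: 1 / 6 for k in range(1, 7)}

expected_value = mean(die)
variance = central_moment(die, 2)
skewness = standardized_moment(die, 3)
kurtosis = standardized_moment(die, 4)
print(expected_value, variance, skewness, kurtosis)
```

Because the die is symmetric about its mean, the skewness comes out as zero up to floating-point rounding.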

Eccentricity (mathematics)

In mathematics, the eccentricity of a conic section is a non-negative real number that uniquely characterizes its shape. More formally, two conic sections are similar if and only if they have the same eccentricity. One can think of the eccentricity as a measure of how much a conic section deviates from being circular. In particular:
* The eccentricity of a circle is zero.
* The eccentricity of an ellipse which is not a circle is greater than zero but less than 1.
* The eccentricity of a parabola is 1.
* The eccentricity of a hyperbola is greater than 1.
* The eccentricity of a pair of lines is \infty.

Definitions. Any conic section can be defined as the locus of points whose distances to a point (the focus) and a line (the directrix) are in a constant ratio. That ratio is called the eccentricity, commonly denoted as ''e''. The eccentricity can also be defined in terms of the intersection of a plane and a double-napped cone associated with the conic section. If the cone is oriented ...
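
The classification by eccentricity listed in this entry can be sketched as a small function. The names `classify_conic` and `ellipse_eccentricity` are hypothetical, and the semi-axis formula e = sqrt(1 - b^2/a^2) for an ellipse is a standard result that is not stated in the summary itself.

```python
import math

def classify_conic(e):
    """Name the conic type for a given eccentricity, following the list in the entry."""
    if e < 0:
        raise ValueError("eccentricity is non-negative")
    if e == 0:
        return "circle"
    if e < 1:
        return "ellipse"
    if e == 1:
        return "parabola"
    if math.isinf(e):
        return "pair of lines"
    return "hyperbola"

def ellipse_eccentricity(a, b):
    """Eccentricity of an ellipse with semi-axes a and b (standard formula, assumed)."""
    major, minor = max(a, b), min(a, b)
    return math.sqrt(1 - (minor / major) ** 2)

e = ellipse_eccentricity(5, 3)
print(e, classify_conic(e))
```

Exact comparisons such as `e == 1` are only reliable for values supplied exactly; for computed eccentricities a tolerance would be a safer design choice.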

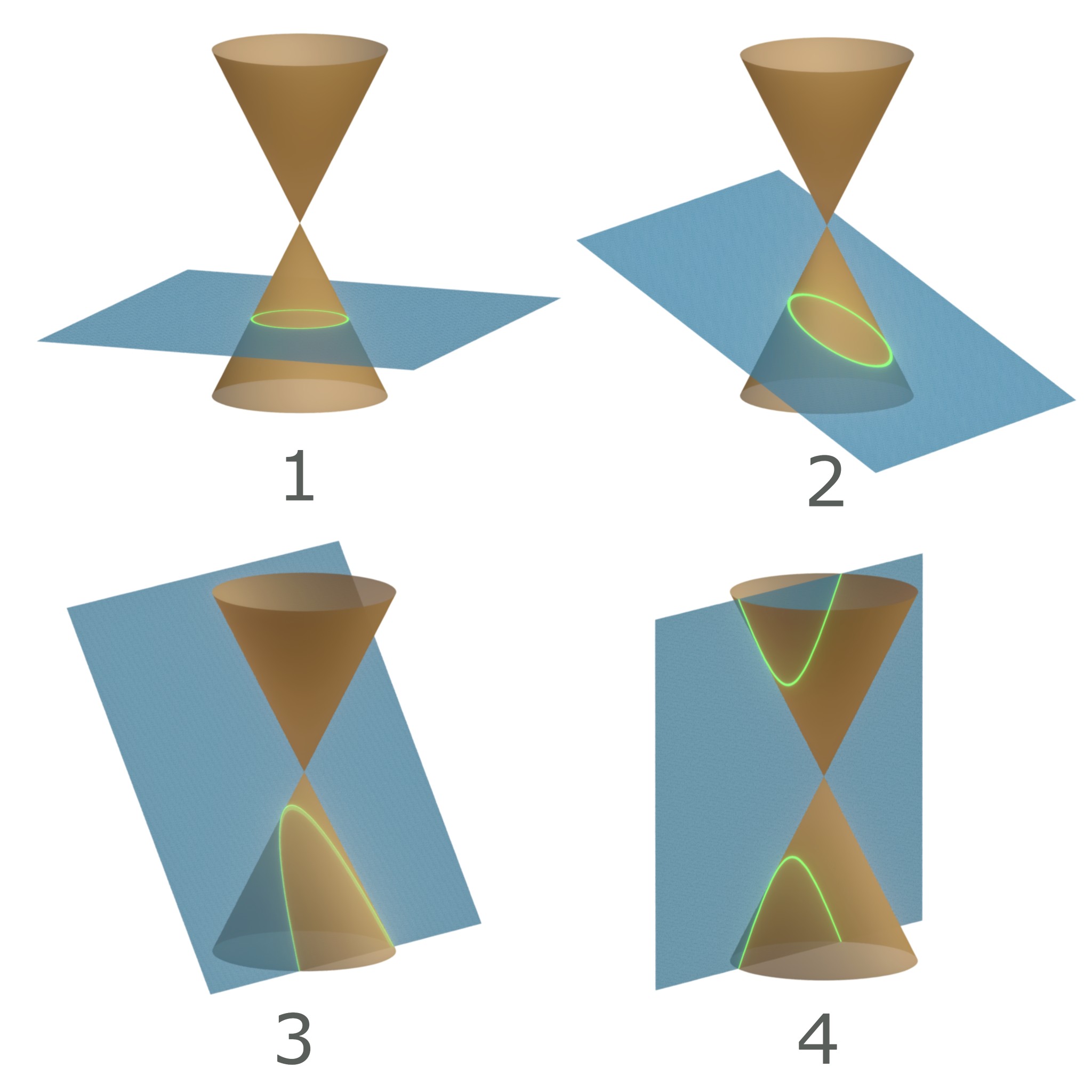

Conic Sections

In mathematics, a conic section, quadratic curve or conic is a curve obtained as the intersection of the surface of a cone with a plane. The three types of conic section are the hyperbola, the parabola, and the ellipse; the circle is a special case of the ellipse, though historically it was sometimes called a fourth type. The ancient Greek mathematicians studied conic sections, culminating around 200 BC with Apollonius of Perga's systematic work on their properties. The conic sections in the Euclidean plane have various distinguishing properties, many of which can be used as alternative definitions. One such property defines a non-circular conic to be the set of those points whose distances to some particular point, called a ''focus'', and some particular line, called a ''directrix'', are in a fixed ratio, called the ''eccentricity''. The type of conic is determined by the value of the eccentricity. In analytic geometry, a conic may be defined as a plane algebraic curve of deg ...

Poisson Distribution

In probability theory and statistics, the Poisson distribution is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event. It is named after French mathematician Siméon Denis Poisson. The Poisson distribution can also be used for the number of events in other specified interval types such as distance, area, or volume. For instance, a call center receives an average of 180 calls per hour, 24 hours a day. The calls are independent; receiving one does not change the probability of when the next one will arrive. The number of calls received during any minute has a Poisson probability distribution with mean 3: the most likely numbers are 2 and 3 but 1 and 4 are also likely and there is a small probability of it being as low as zero and a very smal ...
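
The call-center figures can be reproduced with the standard Poisson probability mass function P(K = k) = \lambda^k e^{-\lambda} / k!. This is a minimal sketch; the function name `poisson_pmf` is hypothetical, and the formula is the standard one rather than something quoted in the summary.

```python
from math import exp, factorial

def poisson_pmf(k, lam):
    """Probability of exactly k events when the mean number of events is lam."""
    return lam**k * exp(-lam) / factorial(k)

calls_per_hour = 180
mean_per_minute = calls_per_hour / 60  # mean of 3 calls per minute

# Probabilities of 0 through 6 calls in a given minute.
probabilities = {k: poisson_pmf(k, mean_per_minute) for k in range(7)}
for k, prob in probabilities.items():
    print(k, round(prob, 4))
```

With mean 3, the values at k = 2 and k = 3 are equal and larger than all the others, which matches the statement that 2 and 3 are the most likely counts.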

Uniform Distribution (continuous)

In probability theory and statistics, the continuous uniform distribution or rectangular distribution is a family of symmetric probability distributions. The distribution describes an experiment where there is an arbitrary outcome that lies between certain bounds. The bounds are defined by the parameters, ''a'' and ''b'', which are the minimum and maximum values. The interval can either be closed (e.g. [a, b]) or open (e.g. (a, b)). Therefore, the distribution is often abbreviated ''U''(''a'', ''b''), where ''U'' stands for uniform distribution. The difference between the bounds defines the interval length; all intervals of the same length on the distribution's support are equally probable. It is the maximum entropy probability distribution for a random variable ''X'' under no constraint other than that it is contained in the distribution's support. Definitions. Probability density function. The probability density function of the continuous uniform distribution is: f(x)=\begin ...
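
The statement that all intervals of the same length on the support are equally probable can be illustrated with a short sketch. It assumes that the probability of a sub-interval is its length divided by the length of the support; the function name `uniform_interval_probability` is hypothetical.

```python
def uniform_interval_probability(a, b, c, d):
    """P(c <= X <= d) for X uniform on [a, b]; the interval is clipped to the support."""
    if not a < b:
        raise ValueError("require a < b")
    lo, hi = max(a, c), min(b, d)
    return max(0.0, hi - lo) / (b - a)

# Two intervals of length 2 inside the support [0, 10] (illustrative values).
p_first = uniform_interval_probability(0, 10, 2, 4)
p_second = uniform_interval_probability(0, 10, 6, 8)
print(p_first, p_second)
```

Clipping to the support means an interval that lies partly outside [a, b] only contributes the part that overlaps it.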

Characteristic Function (probability Theory)

In probability theory and statistics, the characteristic function of any real-valued random variable completely defines its probability distribution. If a random variable admits a probability density function, then the characteristic function is the Fourier transform of the probability density function. Thus it provides an alternative route to analytical results compared with working directly with probability density functions or cumulative distribution functions. There are particularly simple results for the characteristic functions of distributions defined by the weighted sums of random variables. In addition to univariate distributions, characteristic functions can be defined for vector- or matrix-valued random variables, and can also be extended to more generic cases. The characteristic function always exists when treated as a function of a real-valued argument, unlike the moment-generating function. There are relations between the behavior of the characteristic function of a ...

Moment-generating Function

In probability theory and statistics, the moment-generating function of a real-valued random variable is an alternative specification of its probability distribution. Thus, it provides the basis of an alternative route to analytical results compared with working directly with probability density functions or cumulative distribution functions. There are particularly simple results for the moment-generating functions of distributions defined by the weighted sums of random variables. However, not all random variables have moment-generating functions. As its name implies, the moment-generating function can be used to compute a distribution’s moments: the ''n''th moment about 0 is the ''n''th derivative of the moment-generating function, evaluated at 0. In addition to real-valued distributions (univariate distributions), moment-generating functions can be defined for vector- or matrix-valued random variables, and can even be extended to more general cases. The moment-generating func ...
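
A short worked example may help with the derivative rule. It assumes the usual definition M_X(t) = E[e^{tX}] and uses a Bernoulli variable with success probability p, chosen here only because its derivatives are easy to write down.

```latex
% X takes the value 1 with probability p and 0 with probability 1 - p.
M_X(t) = \mathbb{E}\left[e^{tX}\right] = (1 - p) + p\,e^{t}

% Every derivative of order n >= 1 has the same form:
M_X^{(n)}(t) = p\,e^{t}
\quad\Longrightarrow\quad
M_X^{(n)}(0) = p

% This agrees with the direct computation, since X^n = X when X is 0 or 1:
\mathbb{E}\left[X^{n}\right] = 0^{n}(1 - p) + 1^{n}\,p = p
```

The same procedure applies to other distributions, although the derivatives are rarely this uniform.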

Binomial Distribution

In probability theory and statistics, the binomial distribution with parameters ''n'' and ''p'' is the discrete probability distribution of the number of successes in a sequence of ''n'' independent experiments, each asking a yes–no question, and each with its own Boolean-valued outcome: ''success'' (with probability ''p'') or ''failure'' (with probability q=1-p). A single success/failure experiment is also called a Bernoulli trial or Bernoulli experiment, and a sequence of outcomes is called a Bernoulli process; for a single trial, i.e., ''n'' = 1, the binomial distribution is a Bernoulli distribution. The binomial distribution is the basis for the popular binomial test of statistical significance. The binomial distribution is frequently used to model the number of successes in a sample of size ''n'' drawn with replacement from a population of size ''N''. If the sampling is carried out without replacement, the draws are not independent and so the resulting ...
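
The description above corresponds to the standard probability mass function P(K = k) = C(n, k) p^k q^{n-k}. The sketch below evaluates it and checks the ''n'' = 1 special case; the function name `binomial_pmf` and the parameter values are assumptions for illustration.

```python
from math import comb

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials with success probability p."""
    if not 0 <= k <= n:
        return 0.0
    q = 1 - p
    return comb(n, k) * p**k * q ** (n - k)

# Illustrative parameters: 10 trials with success probability 0.3.
n, p = 10, 0.3
distribution = [binomial_pmf(k, n, p) for k in range(n + 1)]
print(sum(distribution))

# With a single trial the distribution reduces to a Bernoulli distribution.
print(binomial_pmf(1, 1, p), binomial_pmf(0, 1, p))
```

The probabilities over k = 0, ..., n should sum to 1 up to floating-point rounding.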

Statistical Independence

Independence is a fundamental notion in probability theory, as in statistics and the theory of stochastic processes. Two events are independent, statistically independent, or stochastically independent if, informally speaking, the occurrence of one does not affect the probability of occurrence of the other or, equivalently, does not affect the odds. Similarly, two random variables are independent if the realization of one does not affect the probability distribution of the other. When dealing with collections of more than two events, two notions of independence need to be distinguished. The events are called pairwise independent if any two events in the collection are independent of each other, while mutual independence (or collective independence) of events means, informally speaking, that each event is independent of any combination of other events in the collection. A similar notion exists for collections of random variables. Mutual independence implies pairwise independence ...
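
The distinction between pairwise and mutual independence can be seen in a classic small example, sketched below. The setup (two fair coin flips and the event that they differ) and all names are assumptions chosen for illustration; independence is tested with the product rule P(A and B) = P(A) P(B).

```python
from fractions import Fraction
from itertools import combinations, product

# Sample space: two fair coin flips, all four outcomes equally likely.
OUTCOMES = list(product((0, 1), repeat=2))

def prob(event):
    """Probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for w in OUTCOMES if event(w)), len(OUTCOMES))

def satisfies_product_rule(*events):
    """True if the probability of the intersection equals the product of the probabilities."""
    joint = prob(lambda w: all(e(w) for e in events))
    expected = Fraction(1)
    for e in events:
        expected *= prob(e)
    return joint == expected

first_is_heads = lambda w: w[0] == 1
second_is_heads = lambda w: w[1] == 1
flips_differ = lambda w: w[0] != w[1]
events = (first_is_heads, second_is_heads, flips_differ)

pairwise = all(satisfies_product_rule(e, f) for e, f in combinations(events, 2))
mutual = pairwise and satisfies_product_rule(*events)
print(pairwise, mutual)
```

Any two of the three events satisfy the product rule, but all three cannot occur together, so the collection is pairwise independent without being mutually independent.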

Probability Theory

Probability theory is the branch of mathematics concerned with probability. Although there are several different probability interpretations, probability theory treats the concept in a rigorous mathematical manner by expressing it through a set of axioms. Typically these axioms formalise probability in terms of a probability space, which assigns a measure taking values between 0 and 1, termed the probability measure, to a set of outcomes called the sample space. Any specified subset of the sample space is called an event. Central subjects in probability theory include discrete and continuous random variables, probability distributions, and stochastic processes (which provide mathematical abstractions of non-deterministic or uncertain processes or measured quantities that may either be single occurrences or evolve over time in a random fashion). Although it is not possible to perfectly predict random events, much can be said about their behavior. Two major results in probability ...